Jingjing Liu

Adversarial VQA: A New Benchmark for Evaluating the Robustness of VQA Models

Jun 01, 2021

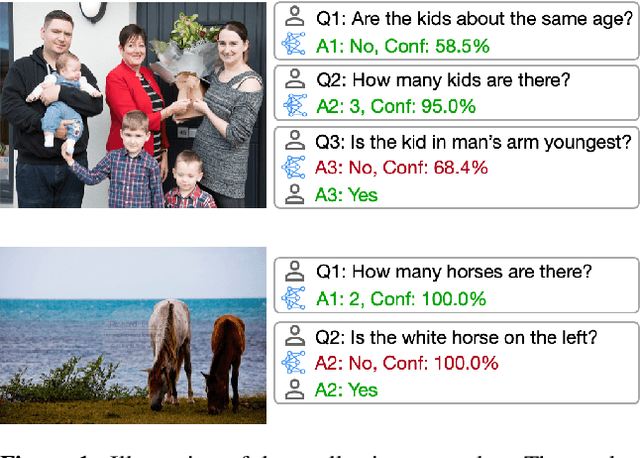

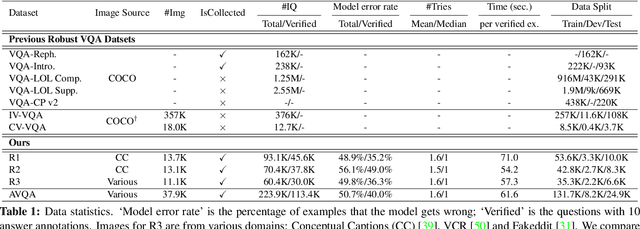

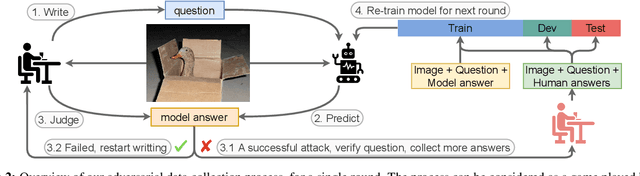

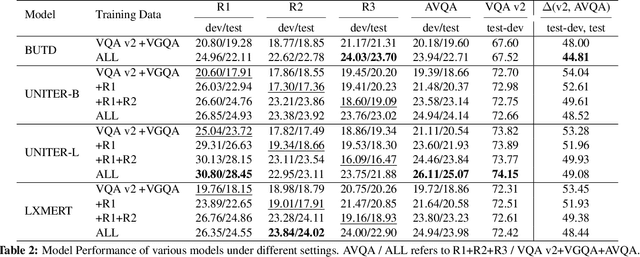

Abstract: With large-scale pre-training, the past two years have witnessed a significant performance boost on the Visual Question Answering (VQA) task. Though rapid progress has been made, it remains unclear whether these state-of-the-art (SOTA) VQA models are robust when encountering test examples in the wild. To study this, we introduce Adversarial VQA, a new large-scale VQA benchmark, collected iteratively via an adversarial human-and-model-in-the-loop procedure. Through this new benchmark, we present several interesting findings. (i) Surprisingly, during dataset collection, we find that non-expert annotators can successfully attack SOTA VQA models with relative ease. (ii) We test a variety of SOTA VQA models on our new dataset to highlight their fragility, and find that both large-scale pre-trained models and adversarial training methods achieve far lower performance than they do on the standard VQA v2 dataset. (iii) When considered as data augmentation, our dataset can be used to improve the performance on other robust VQA benchmarks. (iv) We present a detailed analysis of the dataset, providing valuable insights on the challenges it brings to the community. We hope Adversarial VQA can serve as a valuable benchmark that future work will use to test the robustness of newly developed VQA models. Our dataset is publicly available at https://adversarialvqa.github.io/.

Playing Lottery Tickets with Vision and Language

Apr 23, 2021

Abstract: Large-scale transformer-based pre-training has recently revolutionized vision-and-language (V+L) research. Models such as LXMERT, ViLBERT and UNITER have significantly lifted the state of the art over a wide range of V+L tasks. However, the large number of parameters in such models hinders their application in practice. In parallel, work on the lottery ticket hypothesis has shown that deep neural networks contain small matching subnetworks that can achieve on-par or even better performance than the dense networks when trained in isolation. In this work, we perform the first empirical study to assess whether such trainable subnetworks also exist in pre-trained V+L models. We use UNITER, one of the best-performing V+L models, as the testbed, and consolidate 7 representative V+L tasks for experiments, including visual question answering, visual commonsense reasoning, visual entailment, referring expression comprehension, image-text retrieval, GQA, and NLVR$^2$. Through comprehensive analysis, we summarize our main findings as follows. (i) It is difficult to find subnetworks (i.e., the tickets) that strictly match the performance of the full UNITER model. However, it is encouraging to confirm that we can find "relaxed" winning tickets at 50%-70% sparsity that maintain 99% of the full accuracy. (ii) Subnetworks found by task-specific pruning transfer reasonably well to the other tasks, while those found on the pre-training tasks at 60%/70% sparsity transfer universally, matching 98%/96% of the full accuracy on average over all the tasks. (iii) Adversarial training can be further used to enhance the performance of the found lottery tickets.

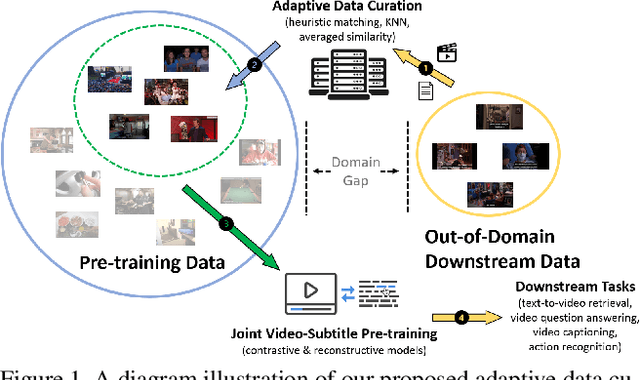

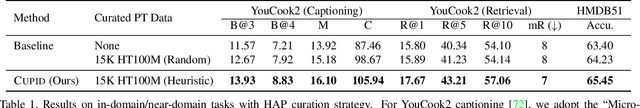

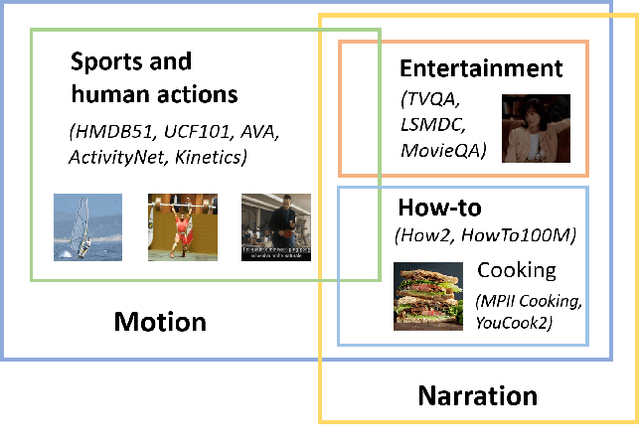

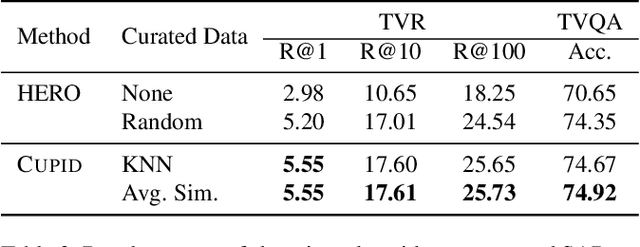

CUPID: Adaptive Curation of Pre-training Data for Video-and-Language Representation Learning

Apr 13, 2021

Abstract: This work concerns video-language pre-training and representation learning. In this now ubiquitous training scheme, a model first performs pre-training on paired videos and text (e.g., video clips and accompanying subtitles) from a large uncurated source corpus, before transferring to specific downstream tasks. This two-stage training process inevitably raises questions about the generalization ability of the pre-trained model, a concern that is particularly pronounced when a salient domain gap exists between source and target data (e.g., instructional cooking videos vs. movies). In this paper, we first bring to light the sensitivity of pre-training objectives (contrastive vs. reconstructive) to domain discrepancy. Then, we propose a simple yet effective framework, CUPID, to bridge this domain gap by filtering and adapting source data to the target data, followed by domain-focused pre-training. Comprehensive experiments demonstrate that pre-training on a considerably small subset of domain-focused data can effectively close the source-target domain gap and achieve a significant performance gain, compared to random sampling or even exploiting the full pre-training dataset. CUPID yields new state-of-the-art performance across multiple video-language and video tasks, including text-to-video retrieval [72, 37], video question answering [36], and video captioning [72], with a consistent performance lift over different pre-training methods.

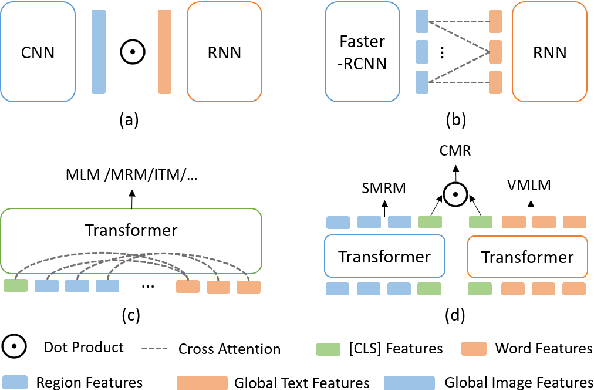

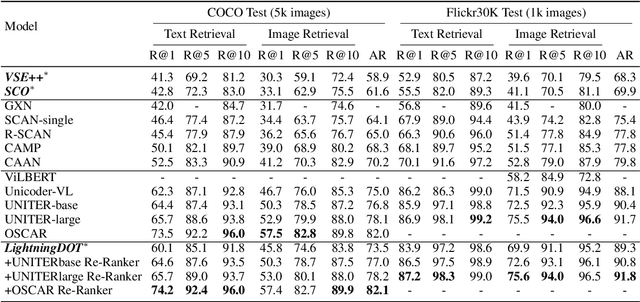

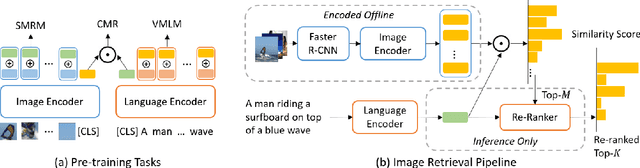

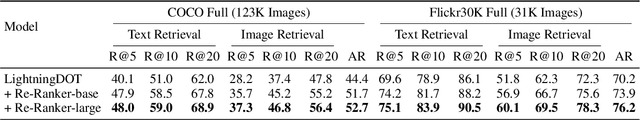

LightningDOT: Pre-training Visual-Semantic Embeddings for Real-Time Image-Text Retrieval

Apr 11, 2021

Abstract: Multimodal pre-training has propelled great advancement in vision-and-language research. These large-scale pre-trained models, although successful, fatefully suffer from slow inference speed due to the enormous computation cost, mainly from cross-modal attention in the Transformer architecture. When applied to real-life applications, such latency and computation demand severely deter the practical use of pre-trained models. In this paper, we study image-text retrieval (ITR), the most mature scenario of V+L application, which has been widely studied even prior to the emergence of recent pre-trained models. We propose a simple yet highly effective approach, LightningDOT, that accelerates the inference time of ITR by thousands of times, without sacrificing accuracy. LightningDOT removes the time-consuming cross-modal attention by pre-training on three novel learning objectives, extracting feature indexes offline, and employing instant dot-product matching with further re-ranking, which significantly speeds up the retrieval process. In fact, LightningDOT achieves new state of the art across multiple ITR benchmarks such as Flickr30k, COCO and Multi30K, outperforming existing pre-trained models that consume on the order of 1000x more computational hours. Code and pre-training checkpoints are available at https://github.com/intersun/LightningDOT.
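
The retrieval flow described in the abstract (offline feature index, dot-product matching, then re-ranking) can be illustrated with a minimal sketch. This is not the LightningDOT implementation: the function names `dot` and `retrieve`, the toy 2-dimensional embeddings, and the `rerank_scores` table standing in for a slower cross-modal scorer are all assumptions made for illustration.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def retrieve(query_vec, image_index, top_k, rerank_fn=None):
    """Rank images by dot product with the query, then optionally re-rank the top_k.

    image_index: dict mapping image id -> embedding computed offline (hypothetical).
    rerank_fn:   optional function image id -> score from a slower, stronger model.
    """
    ranked = sorted(
        image_index.items(),
        key=lambda item: dot(query_vec, item[1]),
        reverse=True,
    )
    candidates = [image_id for image_id, _ in ranked[:top_k]]
    if rerank_fn is not None:
        # Only the short candidate list is passed to the expensive scorer.
        candidates = sorted(candidates, key=rerank_fn, reverse=True)
    return candidates


# Toy index and query (illustrative values only).
image_index = {"img_a": [1.0, 0.0], "img_b": [0.6, 0.8], "img_c": [0.0, 1.0]}
query_vec = [0.8, 0.6]

fast_result = retrieve(query_vec, image_index, top_k=2)

# Hypothetical scores from a cross-modal re-ranker.
rerank_scores = {"img_a": 0.9, "img_b": 0.7}
reranked_result = retrieve(query_vec, image_index, top_k=2, rerank_fn=rerank_scores.get)
```

The point of the design is that the dot-product pass touches every indexed image cheaply, while the costly scorer sees only `top_k` candidates.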

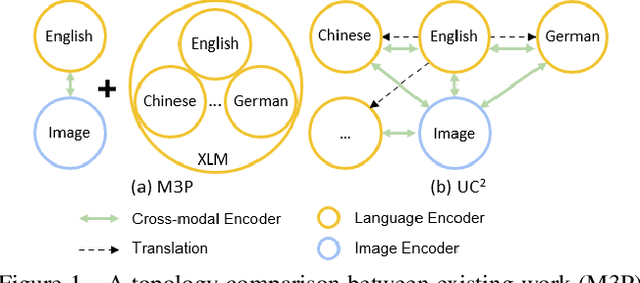

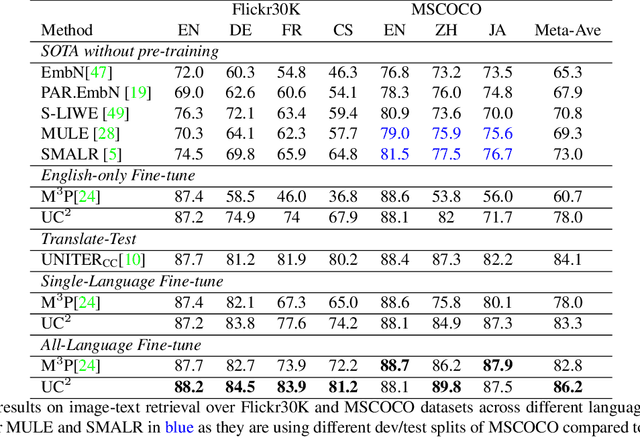

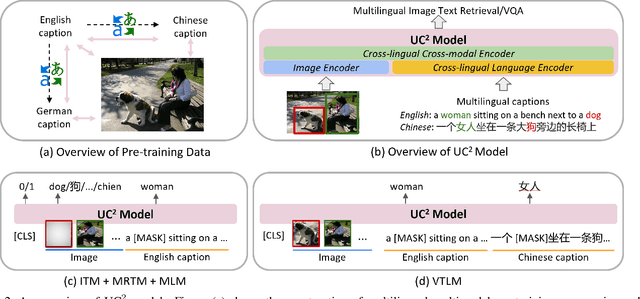

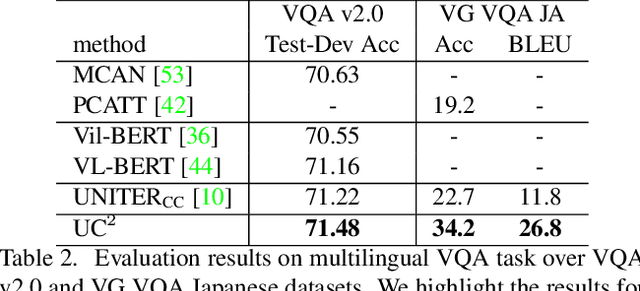

UC2: Universal Cross-lingual Cross-modal Vision-and-Language Pre-training

Apr 01, 2021

Abstract: Vision-and-language pre-training has achieved impressive success in learning multimodal representations between vision and language. To generalize this success to non-English languages, we introduce UC2, the first machine translation-augmented framework for cross-lingual cross-modal representation learning. To tackle the scarcity of multilingual captions for image datasets, we first augment existing English-only datasets with other languages via machine translation (MT). Then we extend the standard Masked Language Modeling and Image-Text Matching training objectives to a multilingual setting, where alignment between different languages is captured through shared visual context (i.e., using the image as a pivot). To facilitate the learning of a joint embedding space of images and all languages of interest, we further propose two novel pre-training tasks, namely Masked Region-to-Token Modeling (MRTM) and Visual Translation Language Modeling (VTLM), leveraging MT-enhanced translated data. Evaluation on multilingual image-text retrieval and multilingual visual question answering benchmarks demonstrates that our proposed framework achieves new state-of-the-art results on diverse non-English benchmarks while maintaining comparable performance to monolingual pre-trained models on English tasks.

The Elastic Lottery Ticket Hypothesis

Mar 30, 2021

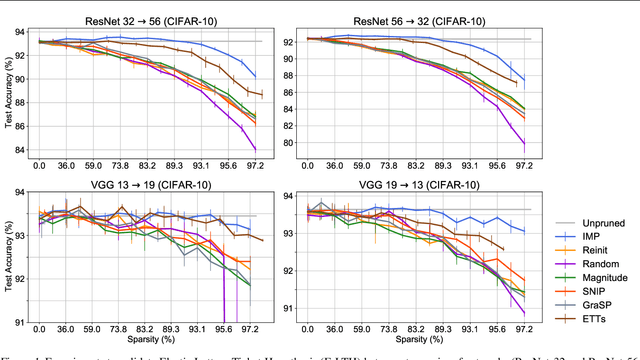

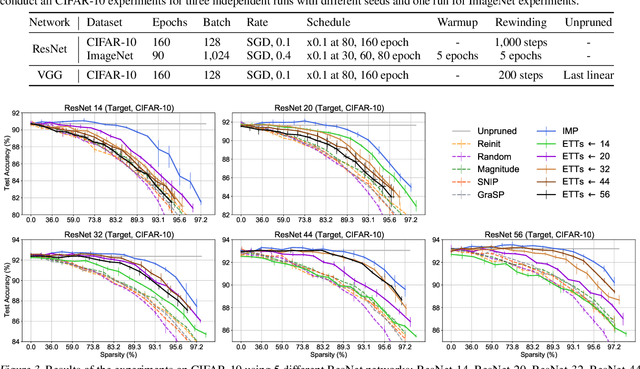

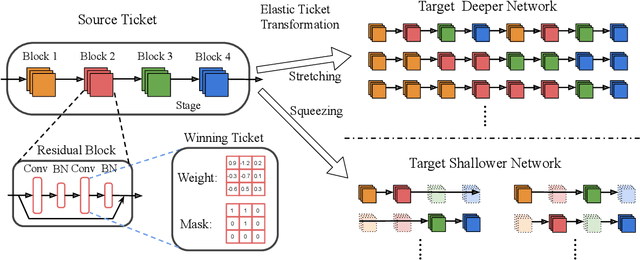

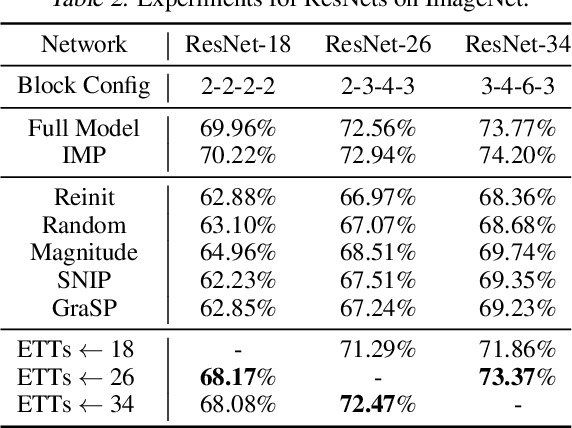

Abstract: The Lottery Ticket Hypothesis has drawn keen attention to identifying sparse trainable subnetworks, or winning tickets, at the initialization (or early stage) of training, which can be trained in isolation to achieve similar or even better performance compared to the full models. Despite many efforts, the most effective method to identify such winning tickets is still Iterative Magnitude-based Pruning (IMP), which is computationally expensive and has to be run in full for every different network. A natural question arises: can we "transform" the winning ticket found in one network to another with a different architecture, yielding a winning ticket for the latter at the beginning, without re-doing the expensive IMP? Answering this question is not only practically relevant for efficient "once-for-all" winning ticket finding, but also theoretically appealing for uncovering inherently scalable sparse patterns in networks. We conduct extensive experiments on CIFAR-10 and ImageNet, and propose a variety of strategies to tweak the winning tickets found from different networks of the same model family (e.g., ResNets). Based on these results, we articulate the Elastic Lottery Ticket Hypothesis (E-LTH): by mindfully replicating (or dropping) and re-ordering layers for one network, its corresponding winning ticket could be stretched (or squeezed) into a subnetwork for another deeper (or shallower) network from the same family, whose performance is nearly as competitive as the latter's winning ticket directly found by IMP. We have also thoroughly compared E-LTH with pruning-at-initialization and dynamic sparse training methods, and discuss the generalizability of E-LTH to different model families, layer types, and even across datasets. Our code is publicly available at https://github.com/VITA-Group/ElasticLTH.
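
To make the cost of IMP concrete, the following is a minimal sketch of the pruning loop on a flat list of weights. It is an illustration under stated assumptions, not the authors' code: the names `magnitude_prune_step` and `iterative_magnitude_pruning`, the optional `train_fn` hook, and the toy weights are hypothetical, and the retraining (and any weight rewinding) that makes each round expensive in practice is reduced to that optional hook.

```python
def magnitude_prune_step(weights, mask, prune_fraction):
    """Zero out the given fraction of still-active weights with the smallest |w|."""
    active = [i for i, keep in enumerate(mask) if keep]
    n_prune = int(len(active) * prune_fraction)
    # Active indices ordered by weight magnitude, smallest first.
    to_prune = sorted(active, key=lambda i: abs(weights[i]))[:n_prune]
    new_mask = list(mask)
    for i in to_prune:
        new_mask[i] = 0
    return new_mask


def iterative_magnitude_pruning(weights, rounds, prune_fraction, train_fn=None):
    """Alternate (optional) training and magnitude pruning for a number of rounds.

    train_fn is a hypothetical hook: (weights, mask) -> updated weights.
    In a real setup this is full network training, which dominates the cost.
    """
    mask = [1] * len(weights)
    for _ in range(rounds):
        if train_fn is not None:
            weights = train_fn(weights, mask)
        mask = magnitude_prune_step(weights, mask, prune_fraction)
    return mask


# Toy example: two rounds, each removing half of the remaining weights.
weights = [0.9, -0.05, 0.4, -0.7, 0.01, 0.2, -0.3, 0.6]
mask = iterative_magnitude_pruning(weights, rounds=2, prune_fraction=0.5)
sparsity = 1 - sum(mask) / len(mask)
```

Because every round includes a training pass, reaching high sparsity takes many full training runs per network, which is the expense the abstract's question about transferring tickets aims to avoid.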

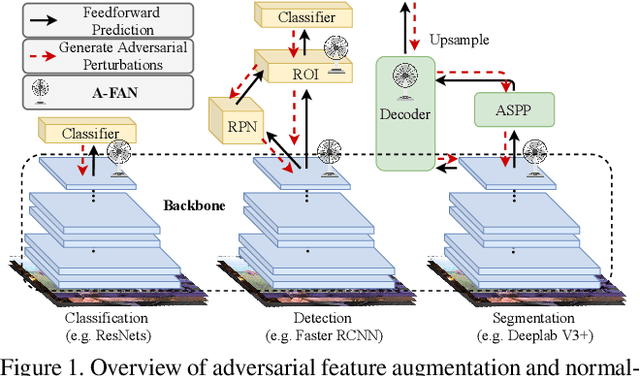

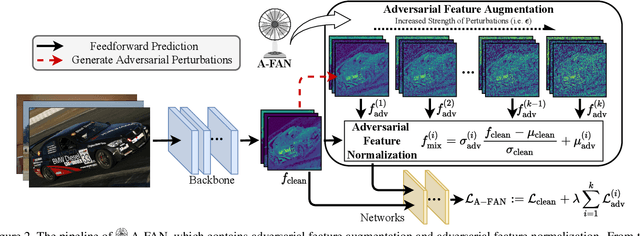

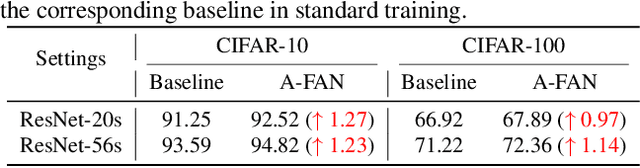

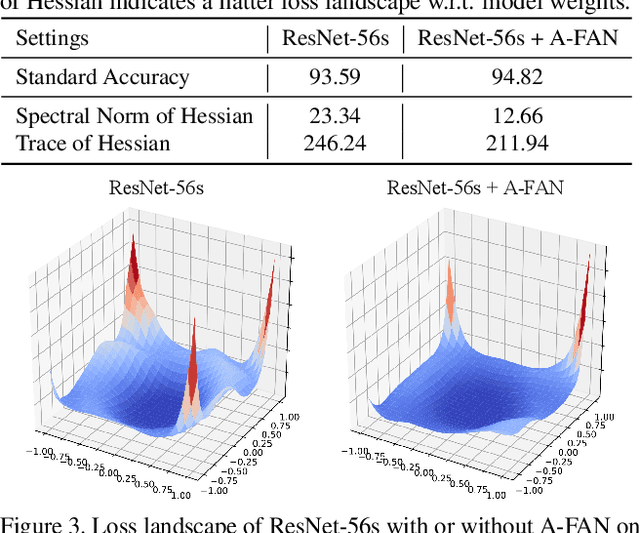

Adversarial Feature Augmentation and Normalization for Visual Recognition

Mar 22, 2021

Abstract: Recent advances in computer vision take advantage of adversarial data augmentation to improve the generalization ability of classification models. Here, we present an effective and efficient alternative that advocates adversarial augmentation on intermediate feature embeddings, instead of relying on computationally expensive pixel-level perturbations. We propose Adversarial Feature Augmentation and Normalization (A-FAN), which (i) first augments visual recognition models with adversarial features that integrate flexible scales of perturbation strengths, (ii) then extracts adversarial feature statistics from batch normalization, and re-injects them into clean features through feature normalization. We validate the proposed approach across diverse visual recognition tasks with representative backbone networks, including ResNets and EfficientNets for classification, Faster-RCNN for detection, and Deeplab V3+ for segmentation. Extensive experiments show that A-FAN yields consistent generalization improvement over strong baselines across various datasets for classification, detection and segmentation tasks, such as CIFAR-10, CIFAR-100, ImageNet, Pascal VOC2007, Pascal VOC2012, COCO2017, and Cityscapes. Comprehensive ablation studies and detailed analyses also demonstrate that adding perturbations to specific modules and layers of classification/detection/segmentation backbones yields optimal performance. Code and pre-trained models will be made available at: https://github.com/VITA-Group/CV_A-FAN.

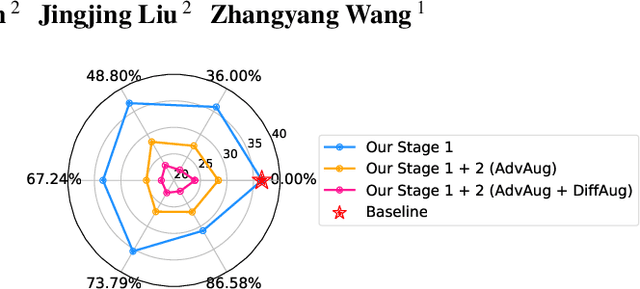

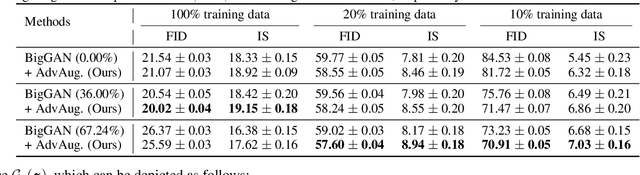

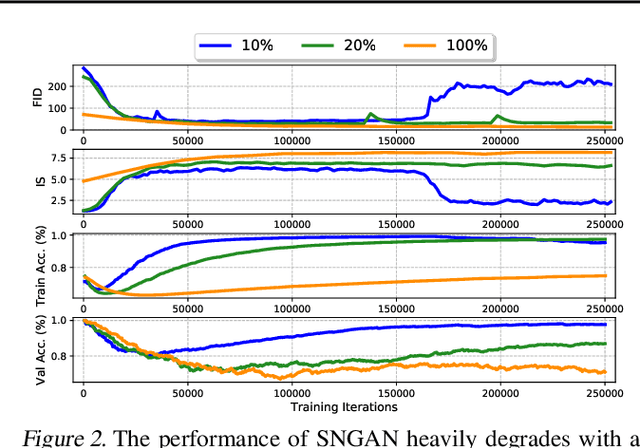

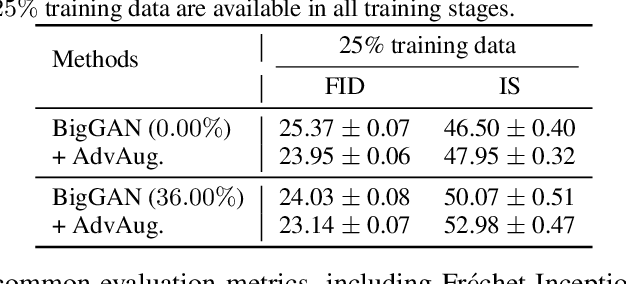

Ultra-Data-Efficient GAN Training: Drawing A Lottery Ticket First, Then Training It Toughly

Feb 28, 2021

Abstract: Training generative adversarial networks (GANs) with limited data generally results in deteriorated performance and collapsed models. To conquer this challenge, we are inspired by the latest observation of Kalibhat et al. (2020) and Chen et al. (2021d) that one can discover independently trainable and highly sparse subnetworks (a.k.a. lottery tickets) from GANs. Treating this as an inductive prior, we decompose the data-hungry GAN training into two sequential sub-problems: (i) identifying the lottery ticket from the original GAN; then (ii) training the found sparse subnetwork with aggressive data and feature augmentations. Both sub-problems re-use the same small training set of real images. Such a coordinated framework enables us to focus on lower-complexity and more data-efficient sub-problems, effectively stabilizing training and improving convergence. Comprehensive experiments endorse the effectiveness of our proposed ultra-data-efficient training framework, across various GAN architectures (SNGAN, BigGAN, and StyleGAN2) and diverse datasets (CIFAR-10, CIFAR-100, Tiny-ImageNet, and ImageNet). In addition, our training framework displays powerful few-shot generalization ability, i.e., generating high-fidelity images by training from scratch with just 100 real images, without any pre-training. Code is available at: https://github.com/VITA-Group/Ultra-Data-Efficient-GAN-Training.

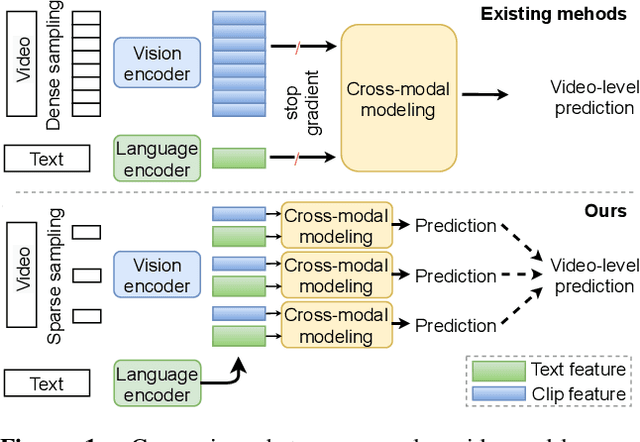

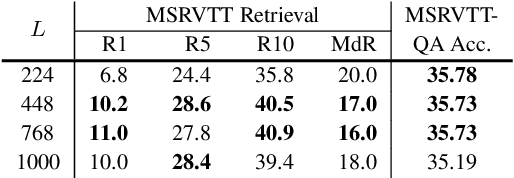

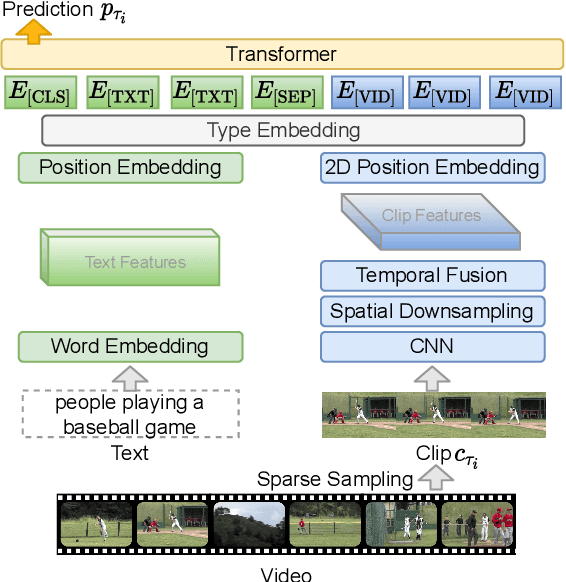

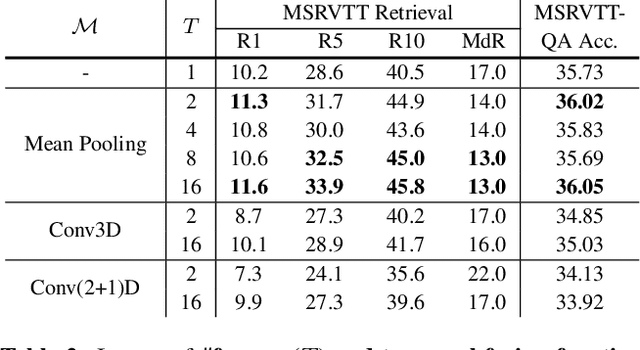

Less is More: ClipBERT for Video-and-Language Learning via Sparse Sampling

Feb 11, 2021

Abstract: The canonical approach to video-and-language learning (e.g., video question answering) has a neural model learn from offline-extracted dense video features from vision models and text features from language models. These feature extractors are trained independently and usually on tasks different from the target domains, rendering these fixed features sub-optimal for downstream tasks. Moreover, due to the high computational overhead of dense video features, it is often difficult (or infeasible) to plug feature extractors directly into existing approaches for easy finetuning. To remedy this dilemma, we propose a generic framework, ClipBERT, that enables affordable end-to-end learning for video-and-language tasks by employing sparse sampling, where only a single or a few sparsely sampled short clips from a video are used at each training step. Experiments on text-to-video retrieval and video question answering on six datasets demonstrate that ClipBERT outperforms (or is on par with) existing methods that exploit full-length videos, suggesting that end-to-end learning with just a few sparsely sampled clips is often more accurate than using densely extracted offline features from full-length videos, proving the proverbial less-is-more principle. Videos in the datasets are from considerably different domains and lengths, ranging from 3-second generic domain GIF videos to 180-second YouTube human activity videos, showing the generalization ability of our approach. Comprehensive ablation studies and thorough analyses are provided to dissect what factors lead to this success. Our code is publicly available at https://github.com/jayleicn/ClipBERT.
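
The sparse-sampling idea (use only one or a few short clips per video at each training step) can be sketched as follows. This is a hedged illustration, not ClipBERT's sampling code: the function name `sample_sparse_clips`, the choice of non-overlapping fixed-length segments, and uniform random selection are assumptions.

```python
import random


def sample_sparse_clips(num_frames, clip_len, num_clips, rng):
    """Pick a few short clips from a video as (start, end) frame ranges.

    The video is split into non-overlapping segments of clip_len frames and
    num_clips of them are drawn uniformly at random (an assumed strategy).
    """
    if clip_len <= 0 or clip_len > num_frames:
        raise ValueError("clip_len must be between 1 and num_frames")
    n_segments = num_frames // clip_len
    chosen = rng.sample(range(n_segments), min(num_clips, n_segments))
    # End index is exclusive, so each range covers exactly clip_len frames.
    return sorted((s * clip_len, (s + 1) * clip_len) for s in chosen)


# A 300-frame video, two 16-frame clips for this training step.
rng = random.Random(0)
clips = sample_sparse_clips(num_frames=300, clip_len=16, num_clips=2, rng=rng)
frames_used = sum(end - start for start, end in clips)
```

Calling the function again at the next training step draws different clips, so the model sees many parts of the video over the course of training while processing only `frames_used` frames per step.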

EarlyBERT: Efficient BERT Training via Early-bird Lottery Tickets

Dec 31, 2020

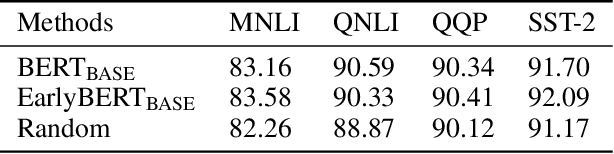

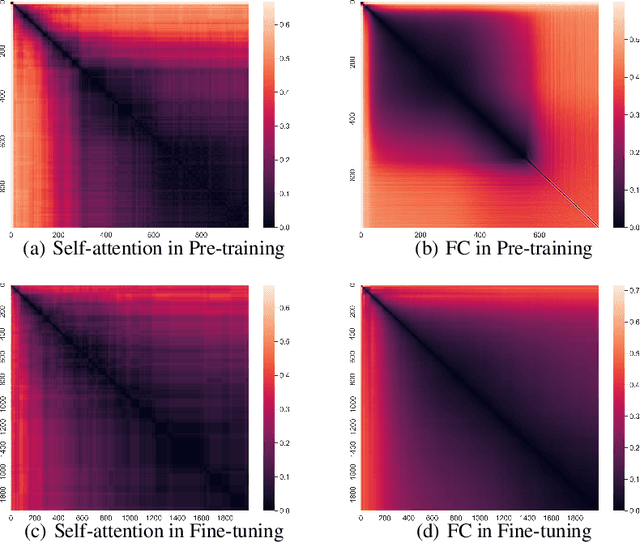

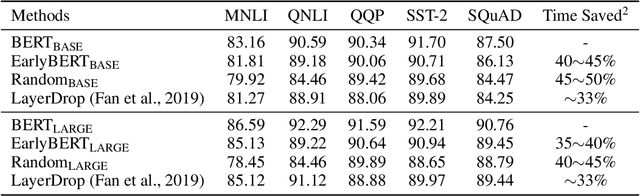

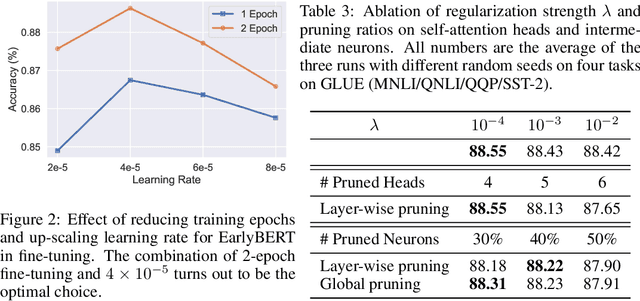

Abstract: Deep, heavily overparameterized language models such as BERT, XLNet and T5 have achieved impressive success in many NLP tasks. However, their high model complexity requires enormous computation resources and extremely long training time for both pre-training and fine-tuning. Many works have studied model compression on large NLP models, but focus only on reducing inference cost/time, while still requiring an expensive training process. Other works use extremely large batch sizes to shorten the pre-training time at the expense of high demand for computation resources. In this paper, inspired by the Early-Bird Lottery Tickets studied for computer vision tasks, we propose EarlyBERT, a general computationally efficient training algorithm applicable to both pre-training and fine-tuning of large-scale language models. We are the first to identify structured winning tickets in the early stage of BERT training, and use them for efficient training. Comprehensive pre-training and fine-tuning experiments on GLUE and SQuAD downstream tasks show that EarlyBERT easily achieves comparable performance to standard BERT with 35-45% less training time.
