Jianchun Liu

Beyond Physical Labels: Redefining Domains for Robust WiFi-based Gesture Recognition

Jan 08, 2026Abstract:In this paper, we propose GesFi, a novel WiFi-based gesture recognition system that introduces WiFi latent domain mining to redefine domains directly from the data itself. GesFi first processes raw sensing data collected from WiFi receivers using CSI-ratio denoising, Short-Time Fast Fourier Transform, and visualization techniques to generate standardized input representations. It then employs class-wise adversarial learning to suppress gesture semantic and leverages unsupervised clustering to automatically uncover latent domain factors responsible for distributional shifts. These latent domains are then aligned through adversarial learning to support robust cross-domain generalization. Finally, the system is applied to the target environment for robust gesture inference. We deployed GesFi under both single-pair and multi-pair settings using commodity WiFi transceivers, and evaluated it across multiple public datasets and real-world environments. Compared to state-of-the-art baselines, GesFi achieves up to 78% and 50% performance improvements over existing adversarial methods, and consistently outperforms prior generalization approaches across most cross-domain tasks.

SABlock: Semantic-Aware KV Cache Eviction with Adaptive Compression Block Size

Oct 26, 2025

Abstract:The growing memory footprint of the Key-Value (KV) cache poses a severe scalability bottleneck for long-context Large Language Model (LLM) inference. While KV cache eviction has emerged as an effective solution by discarding less critical tokens, existing token-, block-, and sentence-level compression methods struggle to balance semantic coherence and memory efficiency. To this end, we introduce SABlock, a \underline{s}emantic-aware KV cache eviction framework with \underline{a}daptive \underline{block} sizes. Specifically, SABlock first performs semantic segmentation to align compression boundaries with linguistic structures, then applies segment-guided token scoring to refine token importance estimation. Finally, for each segment, a budget-driven search strategy adaptively determines the optimal block size that preserves semantic integrity while improving compression efficiency under a given cache budget. Extensive experiments on long-context benchmarks demonstrate that SABlock consistently outperforms state-of-the-art baselines under the same memory budgets. For instance, on Needle-in-a-Haystack (NIAH), SABlock achieves 99.9% retrieval accuracy with only 96 KV entries, nearly matching the performance of the full-cache baseline that retains up to 8K entries. Under a fixed cache budget of 1,024, SABlock further reduces peak memory usage by 46.28% and achieves up to 9.5x faster decoding on a 128K context length.

Accelerating Mixture-of-Expert Inference with Adaptive Expert Split Mechanism

Sep 10, 2025

Abstract:Mixture-of-Experts (MoE) has emerged as a promising architecture for modern large language models (LLMs). However, massive parameters impose heavy GPU memory (i.e., VRAM) demands, hindering the widespread adoption of MoE LLMs. Offloading the expert parameters to CPU RAM offers an effective way to alleviate the VRAM requirements for MoE inference. Existing approaches typically cache a small subset of experts in VRAM and dynamically prefetch experts from RAM during inference, leading to significant degradation in inference speed due to the poor cache hit rate and substantial expert loading latency. In this work, we propose MoEpic, an efficient MoE inference system with a novel expert split mechanism. Specifically, each expert is vertically divided into two segments: top and bottom. MoEpic caches the top segment of hot experts, so that more experts will be stored under the limited VRAM budget, thereby improving the cache hit rate. During each layer's inference, MoEpic predicts and prefetches the activated experts for the next layer. Since the top segments of cached experts are exempt from fetching, the loading time is reduced, which allows efficient transfer-computation overlap. Nevertheless, the performance of MoEpic critically depends on the cache configuration (i.e., each layer's VRAM budget and expert split ratio). To this end, we propose a divide-and-conquer algorithm based on fixed-point iteration for adaptive cache configuration. Extensive experiments on popular MoE LLMs demonstrate that MoEpic can save about half of the GPU cost, while lowering the inference latency by about 37.51%-65.73% compared to the baselines.

Mitigating Catastrophic Forgetting with Adaptive Transformer Block Expansion in Federated Fine-Tuning

Jun 06, 2025

Abstract:Federated fine-tuning (FedFT) of large language models (LLMs) has emerged as a promising solution for adapting models to distributed data environments while ensuring data privacy. Existing FedFT methods predominantly utilize parameter-efficient fine-tuning (PEFT) techniques to reduce communication and computation overhead. However, they often fail to adequately address the catastrophic forgetting, a critical challenge arising from continual adaptation in distributed environments. The traditional centralized fine-tuning methods, which are not designed for the heterogeneous and privacy-constrained nature of federated environments, struggle to mitigate this issue effectively. Moreover, the challenge is further exacerbated by significant variation in data distributions and device capabilities across clients, which leads to intensified forgetting and degraded model generalization. To tackle these issues, we propose FedBE, a novel FedFT framework that integrates an adaptive transformer block expansion mechanism with a dynamic trainable-block allocation strategy. Specifically, FedBE expands trainable blocks within the model architecture, structurally separating newly learned task-specific knowledge from the original pre-trained representations. Additionally, FedBE dynamically assigns these trainable blocks to clients based on their data distributions and computational capabilities. This enables the framework to better accommodate heterogeneous federated environments and enhances the generalization ability of the model.Extensive experiments show that compared with existing federated fine-tuning methods, FedBE achieves 12-74% higher accuracy retention on general tasks after fine-tuning and a model convergence acceleration ratio of 1.9-3.1x without degrading the accuracy of downstream tasks.

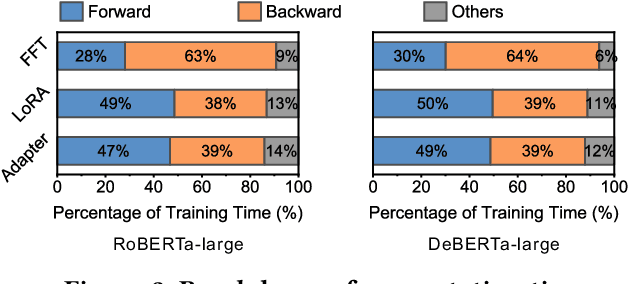

Efficient Federated Fine-Tuning of Large Language Models with Layer Dropout

Mar 13, 2025

Abstract:Fine-tuning plays a crucial role in enabling pre-trained LLMs to evolve from general language comprehension to task-specific expertise. To preserve user data privacy, federated fine-tuning is often employed and has emerged as the de facto paradigm. However, federated fine-tuning is prohibitively inefficient due to the tension between LLM complexity and the resource constraint of end devices, incurring unaffordable fine-tuning overhead. Existing literature primarily utilizes parameter-efficient fine-tuning techniques to mitigate communication costs, yet computational and memory burdens continue to pose significant challenges for developers. This work proposes DropPEFT, an innovative federated PEFT framework that employs a novel stochastic transformer layer dropout method, enabling devices to deactivate a considerable fraction of LLMs layers during training, thereby eliminating the associated computational load and memory footprint. In DropPEFT, a key challenge is the proper configuration of dropout ratios for layers, as overhead and training performance are highly sensitive to this setting. To address this challenge, we adaptively assign optimal dropout-ratio configurations to devices through an exploration-exploitation strategy, achieving efficient and effective fine-tuning. Extensive experiments show that DropPEFT can achieve a 1.3-6.3\times speedup in model convergence and a 40%-67% reduction in memory footprint compared to state-of-the-art methods.

Collaborative Speculative Inference for Efficient LLM Inference Serving

Mar 13, 2025

Abstract:Speculative inference is a promising paradigm employing small speculative models (SSMs) as drafters to generate draft tokens, which are subsequently verified in parallel by the target large language model (LLM). This approach enhances the efficiency of inference serving by reducing LLM inference latency and costs while preserving generation quality. However, existing speculative methods face critical challenges, including inefficient resource utilization and limited draft acceptance, which constrain their scalability and overall effectiveness. To overcome these obstacles, we present CoSine, a novel speculative inference system that decouples sequential speculative decoding from parallel verification, enabling efficient collaboration among multiple nodes. Specifically, CoSine routes inference requests to specialized drafters based on their expertise and incorporates a confidence-based token fusion mechanism to synthesize outputs from cooperating drafters, ensuring high-quality draft generation. Additionally, CoSine dynamically orchestrates the execution of speculative decoding and verification in a pipelined manner, employing batch scheduling to selectively group requests and adaptive speculation control to minimize idle periods. By optimizing parallel workflows through heterogeneous node collaboration, CoSine balances draft generation and verification throughput in real-time, thereby maximizing resource utilization. Experimental results demonstrate that CoSine achieves superior performance compared to state-of-the-art speculative approaches. Notably, with equivalent resource costs, CoSine achieves up to a 23.2% decrease in latency and a 32.5% increase in throughput compared to baseline methods.

A Robust Federated Learning Framework for Undependable Devices at Scale

Dec 28, 2024

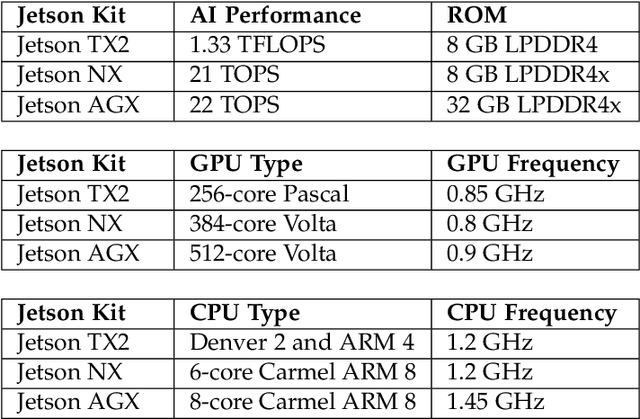

Abstract:In a federated learning (FL) system, many devices, such as smartphones, are often undependable (e.g., frequently disconnected from WiFi) during training. Existing FL frameworks always assume a dependable environment and exclude undependable devices from training, leading to poor model performance and resource wastage. In this paper, we propose FLUDE to effectively deal with undependable environments. First, FLUDE assesses the dependability of devices based on the probability distribution of their historical behaviors (e.g., the likelihood of successfully completing training). Based on this assessment, FLUDE adaptively selects devices with high dependability for training. To mitigate resource wastage during the training phase, FLUDE maintains a model cache on each device, aiming to preserve the latest training state for later use in case local training on an undependable device is interrupted. Moreover, FLUDE proposes a staleness-aware strategy to judiciously distribute the global model to a subset of devices, thus significantly reducing resource wastage while maintaining model performance. We have implemented FLUDE on two physical platforms with 120 smartphones and NVIDIA Jetson devices. Extensive experimental results demonstrate that FLUDE can effectively improve model performance and resource efficiency of FL training in undependable environments.

Adaptive Parameter-Efficient Federated Fine-Tuning on Heterogeneous Devices

Dec 28, 2024Abstract:Federated fine-tuning (FedFT) has been proposed to fine-tune the pre-trained language models in a distributed manner. However, there are two critical challenges for efficient FedFT in practical applications, i.e., resource constraints and system heterogeneity. Existing works rely on parameter-efficient fine-tuning methods, e.g., low-rank adaptation (LoRA), but with major limitations. Herein, based on the inherent characteristics of FedFT, we observe that LoRA layers with higher ranks added close to the output help to save resource consumption while achieving comparable fine-tuning performance. Then we propose a novel LoRA-based FedFT framework, termed LEGEND, which faces the difficulty of determining the number of LoRA layers (called, LoRA depth) and the rank of each LoRA layer (called, rank distribution). We analyze the coupled relationship between LoRA depth and rank distribution, and design an efficient LoRA configuration algorithm for heterogeneous devices, thereby promoting fine-tuning efficiency. Extensive experiments are conducted on a physical platform with 80 commercial devices. The results show that LEGEND can achieve a speedup of 1.5-2.8$\times$ and save communication costs by about 42.3% when achieving the target accuracy, compared to the advanced solutions.

Caesar: A Low-deviation Compression Approach for Efficient Federated Learning

Dec 28, 2024Abstract:Compression is an efficient way to relieve the tremendous communication overhead of federated learning (FL) systems. However, for the existing works, the information loss under compression will lead to unexpected model/gradient deviation for the FL training, significantly degrading the training performance, especially under the challenges of data heterogeneity and model obsolescence. To strike a delicate trade-off between model accuracy and traffic cost, we propose Caesar, a novel FL framework with a low-deviation compression approach. For the global model download, we design a greedy method to optimize the compression ratio for each device based on the staleness of the local model, ensuring a precise initial model for local training. Regarding the local gradient upload, we utilize the device's local data properties (\ie, sample volume and label distribution) to quantify its local gradient's importance, which then guides the determination of the gradient compression ratio. Besides, with the fine-grained batch size optimization, Caesar can significantly diminish the devices' idle waiting time under the synchronized barrier. We have implemented Caesar on two physical platforms with 40 smartphones and 80 NVIDIA Jetson devices. Extensive results show that Caesar can reduce the traffic costs by about 25.54%$\thicksim$37.88% compared to the compression-based baselines with the same target accuracy, while incurring only a 0.68% degradation in final test accuracy relative to the full-precision communication.

Enhancing Federated Graph Learning via Adaptive Fusion of Structural and Node Characteristics

Dec 25, 2024

Abstract:Federated Graph Learning (FGL) has demonstrated the advantage of training a global Graph Neural Network (GNN) model across distributed clients using their local graph data. Unlike Euclidean data (\eg, images), graph data is composed of nodes and edges, where the overall node-edge connections determine the topological structure, and individual nodes along with their neighbors capture local node features. However, existing studies tend to prioritize one aspect over the other, leading to an incomplete understanding of the data and the potential misidentification of key characteristics across varying graph scenarios. Additionally, the non-independent and identically distributed (non-IID) nature of graph data makes the extraction of these two data characteristics even more challenging. To address the above issues, we propose a novel FGL framework, named FedGCF, which aims to simultaneously extract and fuse structural properties and node features to effectively handle diverse graph scenarios. FedGCF first clusters clients by structural similarity, performing model aggregation within each cluster to form the shared structural model. Next, FedGCF selects the clients with common node features and aggregates their models to generate a common node model. This model is then propagated to all clients, allowing common node features to be shared. By combining these two models with a proper ratio, FedGCF can achieve a comprehensive understanding of the graph data and deliver better performance, even under non-IID distributions. Experimental results show that FedGCF improves accuracy by 4.94%-7.24% under different data distributions and reduces communication cost by 64.18%-81.25% to reach the same accuracy compared to baselines.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge