Giulio Romualdi

Physics-Informed Learning for the Friction Modeling of High-Ratio Harmonic Drives

Oct 16, 2024

Abstract: This paper presents a scalable method for friction identification in robots equipped with electric motors and high-ratio harmonic drives, utilizing Physics-Informed Neural Networks (PINN). This approach eliminates the need for dedicated setups and joint torque sensors by leveraging the robot's intrinsic model and state data. We present a comprehensive pipeline that includes data acquisition, preprocessing, ground truth generation, and model identification. The effectiveness of the PINN-based friction identification is validated through extensive testing on two different joints of the humanoid robot ergoCub, comparing its performance against traditional static friction models like the Coulomb-viscous and Stribeck-Coulomb-viscous models. Integrating the identified PINN-based friction models into a two-layer torque control architecture enhances real-time friction compensation. The results demonstrate significant improvements in control performance and reductions in energy losses, highlighting the scalability and robustness of the proposed method, including for application across a large number of joints, as in the case of humanoid robots.

Online DNN-driven Nonlinear MPC for Stylistic Humanoid Robot Walking with Step Adjustment

Oct 10, 2024

Abstract: This paper presents a three-layered architecture that enables stylistic locomotion with online contact location adjustment. Our method combines an autoregressive Deep Neural Network (DNN) acting as a trajectory generation layer with model-based trajectory adjustment and trajectory control layers. The DNN produces centroidal and postural references serving as an initial guess and regularizer for the other layers. Because the DNN is trained on human motion capture data, the resulting robot motion exhibits locomotion patterns resembling a human walking style. The trajectory adjustment layer utilizes non-linear optimization to ensure dynamically feasible center of mass (CoM) motion while addressing step adjustments. We compare two implementations of the trajectory adjustment layer: one as a receding horizon planner (RHP) and the other as a model predictive controller (MPC). To enhance MPC performance, we introduce a Kalman filter to reduce measurement noise. The filter parameters are automatically tuned with a Genetic Algorithm. Experimental results on the ergoCub humanoid robot demonstrate the system's ability to prevent falls, replicate human walking styles, and withstand disturbances up to 68 N. Website: https://sites.google.com/view/dnn-mpc-walking YouTube video: https://www.youtube.com/watch?v=x3tzEfxO-xQ

Automatic Gain Tuning for Humanoid Robots Walking Architectures Using Gradient-Free Optimization Techniques

Sep 27, 2024

Abstract: Developing sophisticated control architectures has endowed robots, particularly humanoid robots, with numerous capabilities. However, tuning these architectures remains a challenging and time-consuming task that requires expert intervention. In this work, we propose a methodology to automatically tune the gains of all layers of a hierarchical control architecture for walking humanoids. We tested our methodology by employing different gradient-free optimization methods: Genetic Algorithm (GA), Covariance Matrix Adaptation Evolution Strategy (CMA-ES), Evolution Strategy (ES), and Differential Evolution (DE). We validated the parameters found both in simulation and on the real ergoCub humanoid robot. Our results show that GA achieves the fastest convergence (10 x 10^3 function evaluations vs 25 x 10^3 needed by the other algorithms) and a 100% success rate in completing the task both in simulation and when transferred to the real robotic platform. These findings highlight the potential of our proposed method to automate the tuning process, reducing the need for manual intervention.

Online Non-linear Centroidal MPC with Stability Guarantees for Robust Locomotion of Legged Robots

Sep 02, 2024

Abstract: Nonlinear model predictive locomotion controllers based on the reduced centroidal dynamics are nowadays ubiquitous in legged robots. These schemes, even if they assume an inherent simplification of the robot's dynamics, were shown to endow robots with a step-adjustment capability in reaction to small pushes. Moreover, in the case of uncertain parameters, such as unknown payloads, they were shown to provide some practical, albeit limited, robustness. In this work, we provide rigorous certificates of their closed-loop stability via a reformulation of the centroidal MPC controller. This is achieved thanks to a systematic procedure inspired by the machinery of adaptive control, together with ideas coming from Control Lyapunov functions. Our reformulation, in addition, provides robustness for a class of unmeasured constant disturbances. To demonstrate the generality of our approach, we validated our formulation on a new generation of humanoid robots, the 56.7 kg ergoCub, as well as on a commercially available 21 kg quadruped robot, Aliengo.

Remote telepresence over large distances via robot avatars: case studies

Sep 02, 2024

Abstract: This paper discusses the necessary considerations and adjustments that allow a recently proposed avatar system architecture to be used with different robotic avatar morphologies (both wheeled and legged robots with various types of hands and kinematic structures) for the purpose of enabling remote (intercontinental) telepresence under communication bandwidth restrictions. The case studies reported involve robots using both position and torque control modes, independently of their software middleware.

XBG: End-to-end Imitation Learning for Autonomous Behaviour in Human-Robot Interaction and Collaboration

Jun 22, 2024

Abstract: This paper presents XBG (eXteroceptive Behaviour Generation), a multimodal end-to-end Imitation Learning (IL) system for a whole-body autonomous humanoid robot used in real-world Human-Robot Interaction (HRI) scenarios. The main contribution of this paper is an architecture for learning HRI behaviours using a data-driven approach. Through teleoperation, a diverse dataset is collected, comprising demonstrations across multiple HRI scenarios, including handshaking, handwaving, payload reception, walking, and walking with a payload. After synchronizing, filtering, and transforming the data, different Deep Neural Network (DNN) models are trained. The final system integrates different modalities comprising exteroceptive and proprioceptive sources of information to provide the robot with an understanding of its environment and its own actions. The robot takes a sequence of images (RGB and depth) and joint state information during the interactions and then reacts accordingly, demonstrating learned behaviours. By fusing multimodal signals in time, we encode new autonomous capabilities into the robotic platform, allowing the understanding of context changes over time. The models are deployed on ergoCub, a real-world humanoid robot, and their performance is measured by calculating the success rate of the robot's behaviour under the mentioned scenarios.

UKF-Based Sensor Fusion for Joint-Torque Sensorless Humanoid Robots

Feb 28, 2024

Abstract: This paper proposes a novel sensor fusion based on Unscented Kalman Filtering for the online estimation of joint torques of humanoid robots without joint-torque sensors. At the feature level, the proposed approach considers multimodal measurements (e.g. currents, accelerations, etc.) and non-directly measurable effects, such as external contacts, thus leading to joint torques readily usable in control architectures for human-robot interaction. The proposed sensor fusion can also integrate distributed, non-collocated force/torque sensors, thus being a flexible framework with respect to the underlying robot sensor suite. To validate the approach, we show how the proposed sensor fusion can be integrated into a two-level torque control architecture aiming at task-space torque control. The performance of the proposed approach is shown through extensive tests on the new humanoid robot ergoCub, currently being developed at Istituto Italiano di Tecnologia. We also compare our strategy with the existing state-of-the-art approach based on the recursive Newton-Euler algorithm. Results demonstrate that our method achieves low root mean square errors in torque tracking, ranging from 0.05 Nm to 2.5 Nm, even in the presence of external contacts.

Online Non-linear Centroidal MPC for Humanoid Robots Payload Carrying with Contact-Stable Force Parametrization

May 18, 2023

Abstract: In this paper we consider the problem of allowing a humanoid robot that is subject to a persistent disturbance, in the form of a payload-carrying task, to follow given planned footsteps. To solve this problem, we combine an online nonlinear centroidal Model Predictive Controller (MPC) with a contact-stable force parametrization. The cost function of the MPC is augmented with terms handling the disturbance and regularizing the parameter. The performance of the resulting controller is validated both in simulations and on the humanoid robot iCub. Finally, the effect of using the parametrization on the computational time of the controller is briefly studied.

Whole-Body Trajectory Optimization for Robot Multimodal Locomotion

Nov 23, 2022

Abstract: The general problem of planning feasible trajectories for multimodal robots is still an open challenge. This paper presents a whole-body trajectory optimisation approach that addresses this challenge by combining methods and tools developed for aerial and legged robots. First, robot models that enable the presented whole-body trajectory optimisation framework are presented. The key model is the so-called robot centroidal momentum, the dynamics of which is directly related to the models of the robot actuation for aerial and terrestrial locomotion. Then, the paper presents how these models can be employed in an optimal control problem to generate either terrestrial or aerial locomotion trajectories with a unified approach. The optimisation problem considers robot kinematics, momentum, thrust forces and their bounds. The overall approach is validated using the multimodal robot iRonCub, a flying humanoid robot that expresses a degree of terrestrial and aerial locomotion. To solve the associated optimal trajectory generation problem, we employ ADAM, a custom-made open-source library that implements a collection of algorithms for calculating rigid-body dynamics using CasADi.

Dynamic Complementarity Conditions and Whole-Body Trajectory Optimization for Humanoid Robot Locomotion

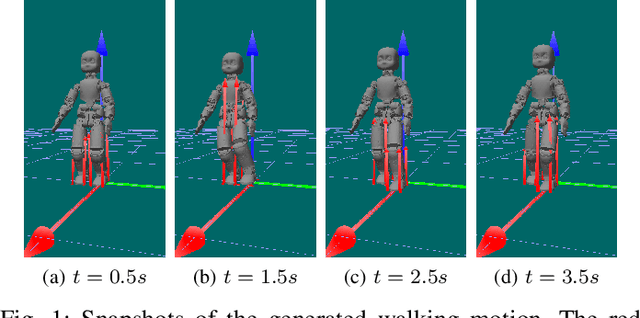

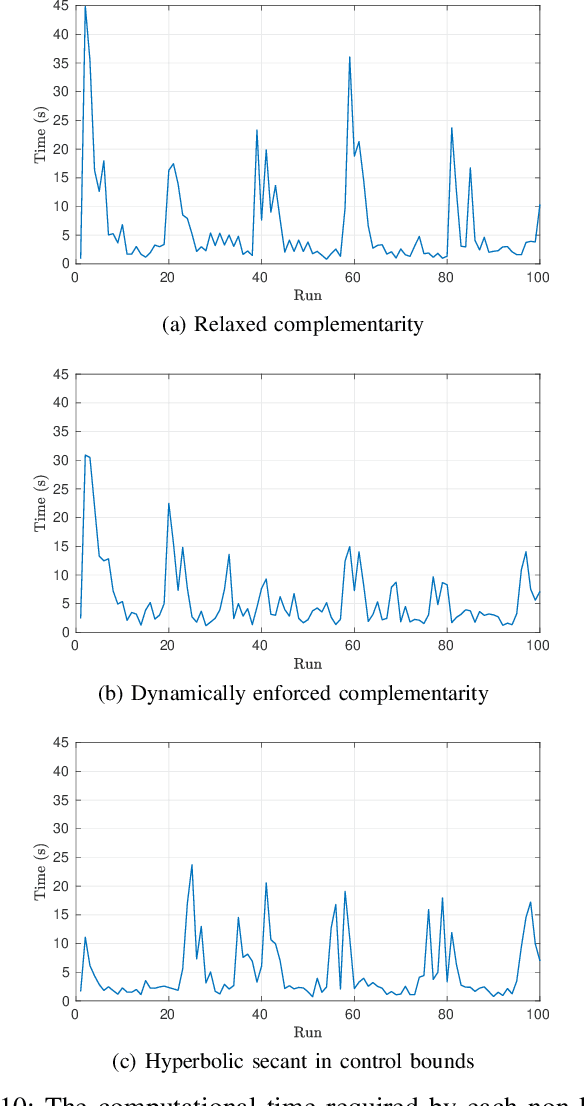

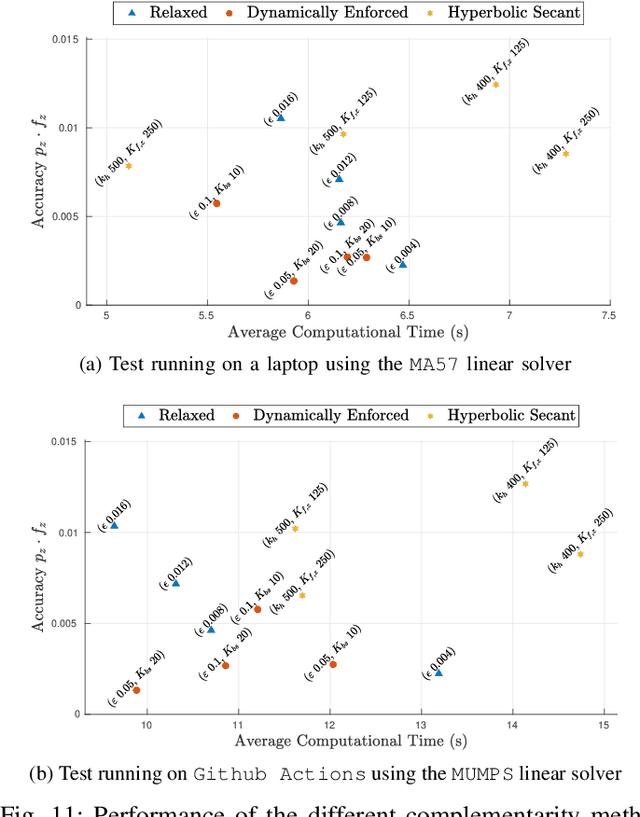

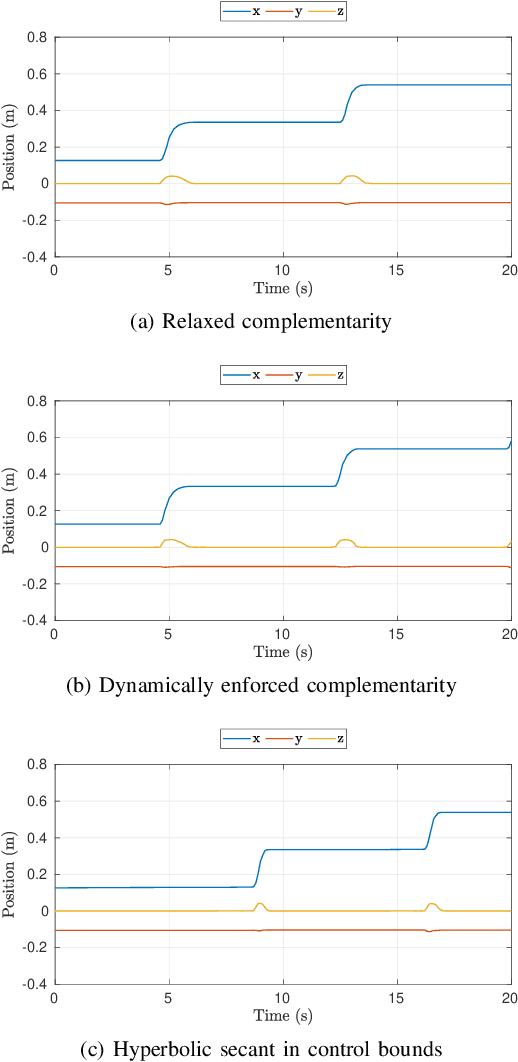

Jul 07, 2022

Abstract: The paper presents a planner to generate walking trajectories by using the centroidal dynamics and the full kinematics of a humanoid robot. The interaction between the robot and the walking surface is modeled explicitly via new conditions, the Dynamical Complementarity Constraints. The approach does not require a predefined contact sequence and generates the footsteps automatically. We characterize the robot control objective via a set of tasks, and we address it by solving an optimal control problem. We show that it is possible to achieve walking motions automatically by specifying a minimal set of references, such as a constant desired center of mass velocity and a reference point on the ground. Furthermore, we analyze how the contact modelling choices affect the computational time. We validate the approach by generating and testing walking trajectories for the humanoid robot iCub.
