Edmund Y. Lam

EPOFusion: Exposure aware Progressive Optimization Method for Infrared and Visible Image Fusion

Mar 17, 2026

Abstract: Overexposure frequently occurs in practical scenarios, causing the loss of critical visual information. However, existing infrared and visible fusion methods still exhibit unsatisfactory performance in highly bright regions. To address this, we propose EPOFusion, an exposure-aware fusion model. Specifically, a guidance module is introduced to help the encoder extract fine-grained infrared features from overexposed regions. Meanwhile, an iterative decoder incorporating a multiscale context fusion module is designed to progressively enhance the fused image, ensuring consistent details and superior visual quality. Finally, an adaptive loss function dynamically constrains the fusion process, enabling an effective balance between the modalities under varying exposure conditions. To achieve better exposure awareness, we construct the first infrared and visible overexposure dataset (IVOE) with high-quality infrared-guided annotations for overexposed regions. Extensive experiments show that EPOFusion outperforms existing methods. It maintains infrared cues in overexposed regions while achieving visually faithful fusion in non-overexposed areas, thereby enhancing both visual fidelity and downstream task performance. Code, fusion results, and the IVOE dataset will be made available at https://github.com/warren-wzw/EPOFusion.git.

Personalized Federated Learning with Residual Fisher Information for Medical Image Segmentation

Mar 16, 2026

Abstract: Federated learning enables multiple clients (institutions) to collaboratively train machine learning models without sharing their private data. To address the challenge of data heterogeneity across clients, personalized federated learning (pFL) aims to learn customized models for each client. In this work, we propose pFL-ResFIM, a novel pFL framework that achieves client-adaptive personalization at the parameter level. Specifically, we introduce a new metric, Residual Fisher Information Matrix (ResFIM), to quantify the sensitivity of model parameters to domain discrepancies. To estimate ResFIM for each client model under privacy constraints, we employ a spectral transfer strategy that generates simulated data reflecting the domain styles of different clients. Based on the estimated ResFIM, we partition model parameters into domain-sensitive and domain-invariant components. A personalized model for each client is then constructed by aggregating only the domain-invariant parameters on the server. Extensive experiments on public datasets demonstrate that pFL-ResFIM consistently outperforms state-of-the-art methods, validating its effectiveness.
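The aggregation step described in this abstract (average only the domain-invariant parameters on the server, keep the domain-sensitive ones local) can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `personalized_aggregate`, the scalar parameters, the unweighted mean, and the single partition shared by all clients are assumptions made for illustration, and the ResFIM-based partitioning itself is taken as given.

```python
from typing import Dict, List, Set

Params = Dict[str, float]  # assumption: one scalar per named parameter


def personalized_aggregate(
    client_params: List[Params], invariant_keys: Set[str]
) -> List[Params]:
    """Average only the keys marked domain-invariant; keep the rest local.

    `invariant_keys` stands in for the output of a sensitivity-based
    partition (hypothetical here; how it is computed is not shown).
    """
    n = len(client_params)
    # Server side: plain unweighted mean over clients, invariant keys only.
    averaged = {
        key: sum(p[key] for p in client_params) / n for key in invariant_keys
    }
    personalized = []
    for p in client_params:
        merged = dict(p)         # start from the client's own parameters
        merged.update(averaged)  # overwrite only the shared entries
        personalized.append(merged)
    return personalized


clients = [
    {"w_shared": 1.0, "w_local": 10.0},
    {"w_shared": 3.0, "w_local": 20.0},
]
models = personalized_aggregate(clients, {"w_shared"})
```

In this toy run both clients end up with the same averaged `w_shared` while each keeps its own `w_local`, which is the behavior the abstract describes at the parameter level.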

Joint Segmentation and Grading with Iterative Optimization for Multimodal Glaucoma Diagnosis

Mar 15, 2026

Abstract: Accurate diagnosis of glaucoma is challenging, as early-stage changes are subtle and often lack clear structural or appearance cues. Most existing approaches rely on a single modality, such as fundus or optical coherence tomography (OCT), capturing only partial pathological information and often missing early disease progression. In this paper, we propose an iterative multimodal optimization model (IMO) for joint segmentation and grading. IMO integrates fundus and OCT features through a mid-level fusion strategy, enhanced by a cross-modal feature alignment (CMFA) module to reduce modality discrepancies. An iterative refinement decoder progressively optimizes the multimodal features through a denoising diffusion mechanism, enabling fine-grained segmentation of the optic disc and cup while supporting accurate glaucoma grading. Extensive experiments show that our method effectively integrates multimodal features, providing a comprehensive and clinically significant approach to glaucoma assessment. Source code is available at https://github.com/warren-wzw/IMO.git.

FL-MedSegBench: A Comprehensive Benchmark for Federated Learning on Medical Image Segmentation

Mar 12, 2026

Abstract: Federated learning (FL) offers a privacy-preserving paradigm for collaborative medical image analysis without sharing raw data. However, the absence of standardized benchmarks for medical image segmentation hinders fair and comprehensive evaluation of FL methods. To address this gap, we introduce FL-MedSegBench, the first comprehensive benchmark for federated learning on medical image segmentation. Our benchmark encompasses nine segmentation tasks across ten imaging modalities, covering both 2D and 3D formats with realistic clinical heterogeneity. We systematically evaluate eight generic FL (gFL) and five personalized FL (pFL) methods across multiple dimensions: segmentation accuracy, fairness, communication efficiency, convergence behavior, and generalization to unseen domains. Extensive experiments reveal several key insights: (i) pFL methods, particularly those with client-specific batch normalization (e.g., FedBN), consistently outperform generic approaches; (ii) no single method universally dominates, with performance being dataset-dependent; (iii) communication frequency analysis shows that normalization-based personalization methods are remarkably robust to reduced communication frequency; (iv) fairness evaluation identifies methods like Ditto and FedRDN that protect underperforming clients; (v) a method's generalization to unseen domains is strongly tied to its ability to perform well across participating clients. We will release an open-source toolkit to foster reproducible research and accelerate clinically applicable FL solutions, providing empirically grounded guidelines for real-world clinical deployment. The source code is available at https://github.com/meiluzhu/FL-MedSegBench.

Gradually Excavating External Knowledge for Implicit Complex Question Answering

Mar 09, 2026

Abstract: Recently, large language models (LLMs) have gained much attention for the emergence of human-comparable capabilities and huge potential. However, for open-domain implicit question-answering problems, LLMs may not be the ultimate solution for two reasons: 1) uncovered or out-of-date domain knowledge, and 2) one-shot generation and hence restricted comprehensiveness. To this end, this work proposes a gradual knowledge excavation framework for open-domain complex question answering, where LLMs iteratively and actively acquire external information, and then reason based on acquired historical knowledge. Specifically, during each step of the solving process, the model selects an action to execute, such as querying external knowledge or performing a single logical reasoning step, to gradually progress toward a final answer. Our method can effectively leverage plug-and-play external knowledge and dynamically adjust the strategy for solving complex questions. Evaluated on the StrategyQA dataset, our method achieves 78.17% accuracy with less than 6% of the parameters of its competitors, setting a new SOTA for ~10B-scale LLMs.

EventTracer: Fast Path Tracing-based Event Stream Rendering

Aug 25, 2025

Abstract: Simulating event streams from 3D scenes has become a common practice in event-based vision research, as it meets the demand for large-scale, high temporal frequency data without setting up expensive hardware devices or undertaking extensive data collections. Yet existing methods in this direction typically work with noiseless RGB frames that are costly to render, and therefore they can only achieve a temporal resolution equivalent to 100-300 FPS, far lower than that of real-world event data. In this work, we propose EventTracer, a path tracing-based rendering pipeline that simulates high-fidelity event sequences from complex 3D scenes in an efficient and physics-aware manner. Specifically, we speed up the rendering process via low sample-per-pixel (SPP) path tracing, and train a lightweight event spiking network to denoise the resulting RGB videos into realistic event sequences. To capture the physical properties of event streams, the network is equipped with a bipolar leaky integrate-and-fire (BiLIF) spiking unit and trained with a bidirectional earth mover's distance (EMD) loss. Our EventTracer pipeline runs at a speed of about 4 minutes per second of 720p video, and it inherits the merit of accurate spatiotemporal modeling from its path tracing backbone. We show in two downstream tasks that EventTracer captures better scene details and demonstrates a greater similarity to real-world event data than other event simulators, which establishes it as a promising tool for creating large-scale event-RGB datasets at a low cost, narrowing the sim-to-real gap in event-based vision, and boosting various application scenarios such as robotics, autonomous driving, and VR/AR.
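To make the idea of a bipolar spiking unit concrete, the following is a textbook-style sketch of a leaky integrate-and-fire neuron with symmetric positive and negative thresholds. It is not the BiLIF unit from the paper, whose details the abstract does not give; the function name `bipolar_lif`, the multiplicative leak, the symmetric threshold, and the subtractive (soft) reset are all assumptions chosen for illustration.

```python
from typing import List, Sequence


def bipolar_lif(
    inputs: Sequence[float], threshold: float = 1.0, leak: float = 0.9
) -> List[int]:
    """Emit +1, -1, or 0 per time step for a 1D input sequence.

    Assumed dynamics: the membrane potential decays by `leak` each step,
    accumulates the input, and fires an ON (+1) or OFF (-1) event when it
    crosses +threshold or -threshold, followed by a soft reset.
    """
    potential = 0.0
    events = []
    for x in inputs:
        potential = leak * potential + x  # leaky integration
        if potential >= threshold:
            events.append(1)              # positive-polarity event
            potential -= threshold        # soft reset keeps the residual
        elif potential <= -threshold:
            events.append(-1)             # negative-polarity event
            potential += threshold
        else:
            events.append(0)              # no event at this step
    return events


# With no leak, two small positive inputs accumulate into one ON event,
# and a large negative input then produces one OFF event.
print(bipolar_lif([0.6, 0.6, -2.0, 0.0], threshold=1.0, leak=1.0))
# prints [0, 1, -1, 0]
```

The two polarities mirror how an event camera reports brightness increases and decreases separately, which is why a signed spiking unit is a natural fit for this kind of output.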

Learned Off-aperture Encoding for Wide Field-of-view RGBD Imaging

Jul 30, 2025

Abstract: End-to-end (E2E) designed imaging systems integrate coded optical designs with decoding algorithms to enhance imaging fidelity for diverse visual tasks. However, existing E2E designs encounter significant challenges in maintaining high image fidelity at wide fields of view, due to high computational complexity, as well as difficulties in modeling off-axis wave propagation while accounting for off-axis aberrations. In particular, the common approach of placing the encoding element into the aperture or pupil plane results in only a global control of the wavefront. To overcome these limitations, this work explores an additional design choice by positioning a DOE off-aperture, enabling a spatial unmixing of the degrees of freedom and providing local control over the wavefront over the image plane. Our approach further leverages hybrid refractive-diffractive optical systems by linking differentiable ray and wave optics modeling, thereby optimizing depth imaging quality and demonstrating system versatility. Experimental results reveal that the off-aperture DOE enhances the imaging quality by over 5 dB in PSNR at a FoV of approximately $45^\circ$ when paired with a simple thin lens, outperforming traditional on-aperture systems. Furthermore, we successfully recover color and depth information at nearly $28^\circ$ FoV using off-aperture DOE configurations with compound optics. Physical prototypes for both applications validate the effectiveness and versatility of the proposed method.
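For readers who want a feel for what a 5 dB PSNR improvement means, the short sketch below uses the standard definition PSNR = 10 log10(MAX^2 / MSE). The helper names `psnr` and `mse_ratio_for_gain` and the example error value are illustrative assumptions, not numbers taken from the paper.

```python
import math


def psnr(mse: float, max_value: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB for a given mean squared error."""
    return 10.0 * math.log10(max_value ** 2 / mse)


def mse_ratio_for_gain(gain_db: float) -> float:
    """Factor by which the MSE must shrink to gain `gain_db` dB of PSNR."""
    return 10.0 ** (gain_db / 10.0)


baseline_mse = 0.01                       # illustrative value only
ratio = mse_ratio_for_gain(5.0)           # about 3.16
improved_mse = baseline_mse / ratio
print(round(psnr(baseline_mse), 2))       # prints 20.0
print(round(psnr(improved_mse), 2))       # prints 25.0
```

Under this definition, a gain of 5 dB corresponds to reducing the mean squared error by a factor of roughly 3.16, independent of the baseline error level.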

Dark-EvGS: Event Camera as an Eye for Radiance Field in the Dark

Jul 16, 2025

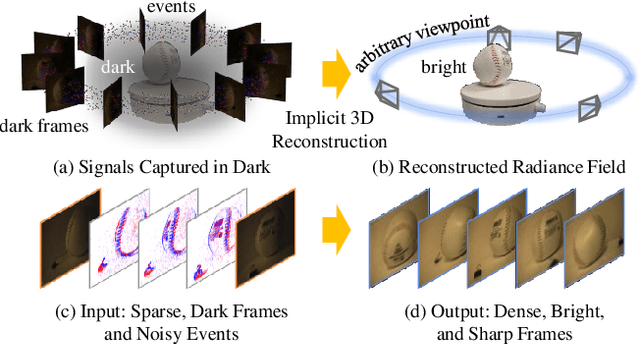

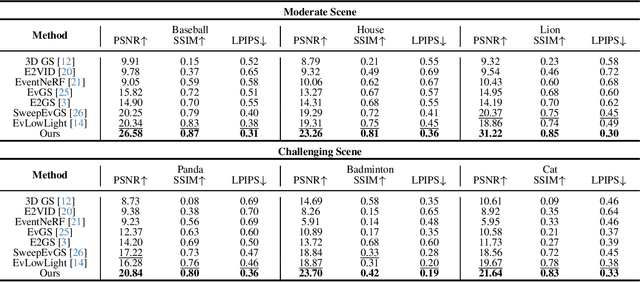

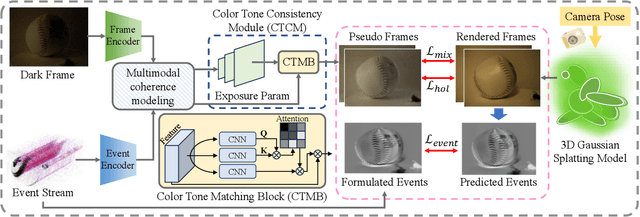

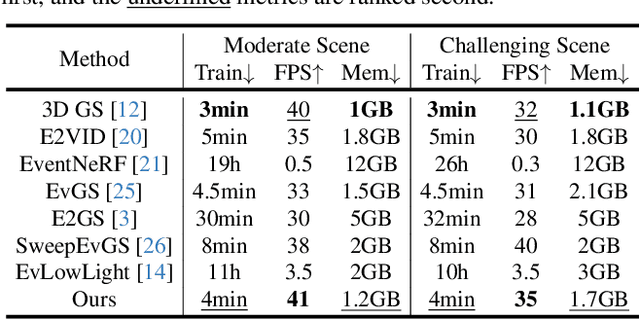

Abstract: In low-light environments, conventional cameras often struggle to capture clear multi-view images of objects due to dynamic range limitations and motion blur caused by long exposure. Event cameras, with their high dynamic range and high-speed properties, have the potential to mitigate these issues. Additionally, 3D Gaussian Splatting (GS) enables radiance field reconstruction, facilitating bright frame synthesis from multiple viewpoints in low-light conditions. However, naively using an event-assisted 3D GS approach still faces challenges because, in low light, events are noisy, frames lack quality, and the color tone may be inconsistent. To address these issues, we propose Dark-EvGS, the first event-assisted 3D GS framework that enables the reconstruction of bright frames from arbitrary viewpoints along the camera trajectory. Triplet-level supervision is proposed to gain holistic knowledge, granular details, and sharp scene rendering. A color tone matching block is proposed to guarantee the color consistency of the rendered frames. Furthermore, we introduce the first real-captured dataset for the event-guided bright frame synthesis task via 3D GS-based radiance field reconstruction. Experiments demonstrate that our method achieves better results than existing methods, conquering radiance field reconstruction under challenging low-light conditions. The code and sample data are included in the supplementary material.

DreamComposer++: Empowering Diffusion Models with Multi-View Conditions for 3D Content Generation

Jul 03, 2025

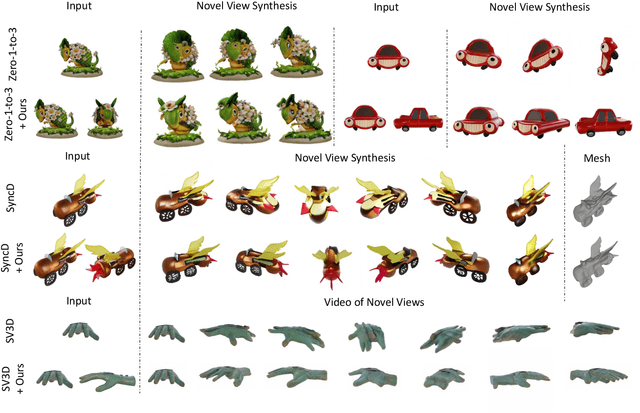

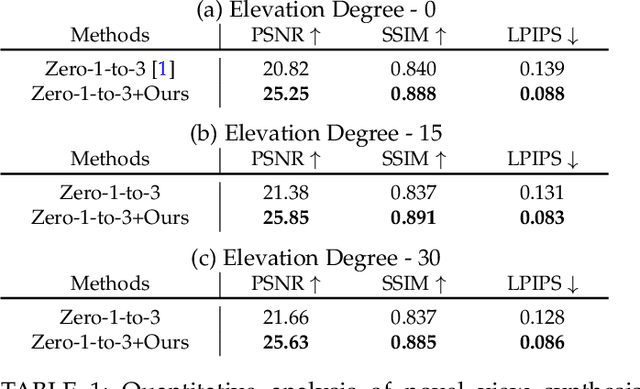

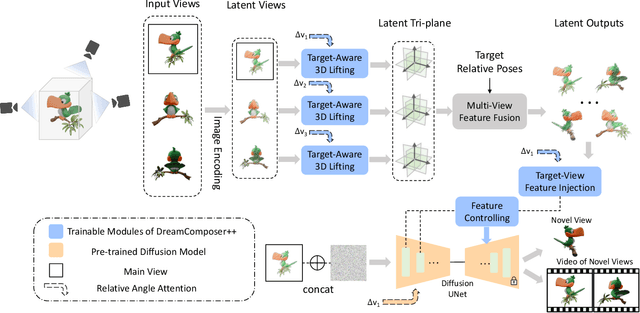

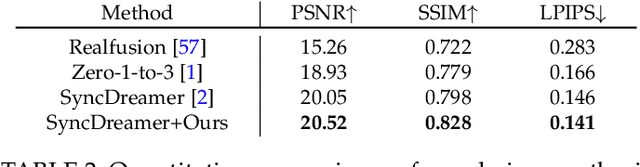

Abstract: Recent advancements in leveraging pre-trained 2D diffusion models achieve the generation of high-quality novel views from a single in-the-wild image. However, existing works face challenges in producing controllable novel views due to the lack of information from multiple views. In this paper, we present DreamComposer++, a flexible and scalable framework designed to improve current view-aware diffusion models by incorporating multi-view conditions. Specifically, DreamComposer++ utilizes a view-aware 3D lifting module to extract 3D representations of an object from various views. These representations are then aggregated and rendered into the latent features of the target view through the multi-view feature fusion module. Finally, the obtained features of the target view are integrated into pre-trained image or video diffusion models for novel view synthesis. Experimental results demonstrate that DreamComposer++ seamlessly integrates with cutting-edge view-aware diffusion models and enhances their abilities to generate controllable novel views from multi-view conditions. This advancement facilitates controllable 3D object reconstruction and enables a wide range of applications.

Near-infrared Image Deblurring and Event Denoising with Synergistic Neuromorphic Imaging

Mar 05, 2025

Abstract: Imaging in dynamic nighttime scenes and other extremely dark conditions has seen impressive and transformative advancements in recent years, partly driven by the rise of novel sensing approaches, e.g., near-infrared (NIR) cameras with high sensitivity and event cameras with minimal blur. However, inappropriate exposure ratios of near-infrared cameras make them susceptible to distortion and blur. Event cameras are also highly sensitive to weak signals at night yet prone to interference, often generating substantial noise and significantly degrading observations and analysis. Herein, we develop a new framework for low-light imaging that combines NIR imaging and event-based techniques, named synergistic neuromorphic imaging, which can jointly achieve NIR image deblurring and event denoising. Harnessing cross-modal features of NIR images and visible events via spectral consistency and higher-order interaction, the NIR images and events are simultaneously fused, enhanced, and bootstrapped. Experiments on real and realistically simulated sequences demonstrate the effectiveness of our method and indicate better accuracy and robustness than other methods in practical scenarios. This study gives impetus to enhancing both NIR images and events, paving the way for high-fidelity low-light imaging and neuromorphic reasoning.
