David Navarro-Alarcon

Bimanual Deformable Bag Manipulation Using a Structure-of-Interest Based Latent Dynamics Model

Jan 21, 2024

Abstract: The manipulation of deformable objects by robotic systems presents a significant challenge due to their complex and infinite-dimensional configuration spaces. This paper introduces a novel approach to Deformable Object Manipulation (DOM) by emphasizing the identification and manipulation of Structures of Interest (SOIs) in deformable fabric bags. We propose a bimanual manipulation framework that leverages a Graph Neural Network (GNN)-based latent dynamics model to succinctly represent and predict the behavior of these SOIs. Our approach involves constructing a graph representation from partial point cloud data of the object and learning the latent dynamics model that effectively captures the essential deformations of the fabric bag within a reduced computational space. By integrating this latent dynamics model with Model Predictive Control (MPC), we empower robotic manipulators to perform precise and stable manipulation tasks focused on the SOIs. We have validated our framework through various empirical experiments demonstrating its efficacy in bimanual manipulation of fabric bags. Our contributions not only address the complexities inherent in DOM but also provide new perspectives and methodologies for enhancing robotic interactions with deformable objects by concentrating on their critical structural elements. Experimental videos are available at https://sites.google.com/view/bagbot.

Implicit Subgoal Planning with Variational Autoencoders for Long-Horizon Sparse Reward Robotic Tasks

Dec 25, 2023

Abstract: The challenges inherent to long-horizon tasks in robotics persist due to the typical inefficient exploration and sparse rewards in traditional reinforcement learning approaches. To alleviate these challenges, we introduce a novel algorithm, Variational Autoencoder-based Subgoal Inference (VAESI), to accomplish long-horizon tasks in a divide-and-conquer manner. VAESI consists of three components: a Variational Autoencoder (VAE)-based Subgoal Generator, a Hindsight Sampler, and a Value Selector. The VAE-based Subgoal Generator draws inspiration from the human capacity to infer subgoals and reason about the final goal in the context of these subgoals. It is composed of an explicit encoder model, engineered to generate subgoals, and an implicit decoder model, designed to enhance the quality of the generated subgoals by predicting the final goal. Additionally, the Hindsight Sampler selects valid subgoals from an offline dataset to enhance the feasibility of the generated subgoals. The Value Selector utilizes the value function in reinforcement learning to filter the optimal subgoals from subgoal candidates. To validate our method, we conduct several long-horizon tasks in both simulation and the real world, including one locomotion task and three manipulation tasks. The obtained quantitative and qualitative data indicate that our approach achieves promising performance compared to other baseline methods. These experimental results can be seen on the website https://sites.google.com/view/vaesi/home.
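
The Value Selector idea in the abstract above (filtering subgoal candidates with a value function) can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration and is not taken from the paper: the names `select_subgoal` and `toy_value`, the scoring rule (value of reaching the subgoal plus value of reaching the final goal from it), and the negative Euclidean distance used as a stand-in for a learned goal-conditioned value function.

```python
import math
from typing import Callable, Sequence, Tuple

Point = Tuple[float, ...]
ValueFn = Callable[[Point, Point], float]


def toy_value(start: Point, target: Point) -> float:
    """Hypothetical stand-in for a learned goal-conditioned value function.

    Higher is better; here it is simply the negative Euclidean distance.
    """
    return -math.dist(start, target)


def select_subgoal(
    state: Point,
    goal: Point,
    candidates: Sequence[Point],
    value_fn: ValueFn,
) -> Point:
    """Return the candidate subgoal with the highest score.

    Assumed scoring rule (not from the paper): the value of reaching the
    subgoal from the current state plus the value of reaching the final
    goal from that subgoal.
    """
    if not candidates:
        raise ValueError("no subgoal candidates were provided")

    def score(subgoal: Point) -> float:
        return value_fn(state, subgoal) + value_fn(subgoal, goal)

    return max(candidates, key=score)


if __name__ == "__main__":
    best = select_subgoal(
        state=(0.0, 0.0),
        goal=(4.0, 0.0),
        candidates=[(2.0, 3.0), (2.0, 0.0), (5.0, 5.0)],
        value_fn=toy_value,
    )
    print(best)
```

With the toy value function, the candidate lying on the straight line between the state and the goal scores highest, which is the behavior one would expect from a selector that prefers subgoals that are both reachable and useful.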

Learning Rhythmic Trajectories with Geometric Constraints for Laser-Based Skincare Procedures

Dec 21, 2023

Abstract: The increasing deployment of robots has significantly enhanced the automation levels across a wide and diverse range of industries. This paper investigates the automation challenges of laser-based dermatology procedures in the beauty industry. This group of related manipulation tasks involves delivering energy from a cosmetic laser onto the skin with repetitive patterns. To automate this procedure, we propose to use a robotic manipulator and endow it with the dexterity of a skilled dermatology practitioner through a learning-from-demonstration framework. To ensure that the cosmetic laser can properly deliver the energy onto the skin surface of an individual, we develop a novel structured prediction-based imitation learning algorithm with the merit of handling geometric constraints. Notably, our proposed algorithm effectively tackles the imitation challenges associated with quasi-periodic motions, a common feature of many laser-based cosmetic tasks. The conducted real-world experiments illustrate the performance of our robotic beautician in mimicking realistic dermatological procedures. Our new method is shown to not only replicate the rhythmic movements from the provided demonstrations but also to adapt the acquired skills to previously unseen scenarios and subjects.

Collision-Free Navigation of Wheeled Mobile Robots: An Integrated Path Planning and Tube-Following Control Approach

Dec 19, 2023

Abstract: In this paper, an integrated path planning and tube-following control scheme is proposed for collision-free navigation of a wheeled mobile robot (WMR) in a compact convex workspace cluttered with sufficiently separated spherical obstacles. An analytical path planning algorithm is developed based on Bouligand's tangent cones and Nagumo's invariance theorem, which enables the WMR to navigate towards a designated goal location from almost all initial positions in the free space, without entering augmented obstacle regions with safety margins. We further construct a virtual "safe tube" around the reference trajectory, ensuring that its radius does not exceed the size of the safety margin. Subsequently, a saturated adaptive controller is designed to achieve safe trajectory tracking in the presence of disturbances. It is shown that this tube-following controller guarantees that the WMR tracks the reference trajectory within the predefined tube, while achieving uniform ultimate boundedness of both the position tracking and parameter estimation errors. This indicates that the WMR will not collide with any obstacles along the way. Finally, we report simulation and experimental results to validate the effectiveness of the proposed method.
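
The geometric argument behind the "safe tube" in the abstract above can be checked numerically with a small sketch. It assumes a planar workspace, a single circular obstacle, and a robot treated as a point; the function names and numbers are hypothetical and are not the paper's planner or controller. The point it illustrates: if the reference stays outside the obstacle augmented by the safety margin, and the robot stays within a tube whose radius does not exceed that margin, the triangle inequality keeps the robot outside the obstacle itself.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def inside_tube(position: Point, reference: Point, tube_radius: float) -> bool:
    """True if the robot is within tube_radius of the reference point."""
    return math.dist(position, reference) <= tube_radius


def clearance(position: Point, obstacle_center: Point, obstacle_radius: float) -> float:
    """Signed distance from the robot to the obstacle boundary (positive = free)."""
    return math.dist(position, obstacle_center) - obstacle_radius


def guaranteed_clearance(
    reference: Point,
    obstacle_center: Point,
    obstacle_radius: float,
    tube_radius: float,
) -> float:
    """Lower bound on the clearance of any position inside the tube.

    By the triangle inequality:
    |p - c| >= |r - c| - |p - r| >= |r - c| - tube_radius.
    """
    return math.dist(reference, obstacle_center) - tube_radius - obstacle_radius


if __name__ == "__main__":
    center, r_obs, margin = (0.0, 0.0), 1.0, 0.5
    tube = 0.4                       # assumed tube radius, chosen <= margin
    ref = (r_obs + margin, 0.0)      # reference on the augmented obstacle boundary
    robot = (1.2, 0.0)               # worst-case direction: toward the obstacle

    print(inside_tube(robot, ref, tube))
    print(clearance(robot, center, r_obs) >= guaranteed_clearance(ref, center, r_obs, tube))
```

Choosing the tube radius strictly smaller than the margin leaves a positive lower bound on the clearance, whereas a tube equal to the margin would only guarantee that the robot does not penetrate the obstacle.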

Leader-Follower Formation Control of Perturbed Nonholonomic Agents along Parametric Curves with Directed Communication

Oct 11, 2023

Abstract: In this paper, we propose a novel formation controller for nonholonomic agents to form general parametric curves. First, we derive a unified parametric representation for both open and closed curves. Then, a leader-follower formation controller is designed to form the parametric curves. We consider directed communications and constant input disturbance rejection in the controller design. Rigorous Lyapunov-based stability analysis proves the asymptotic stability of the proposed controller. Detailed numerical simulations and experimental studies are conducted to verify the performance of the proposed method.

Robust Integral Consensus Control of Multi-Agent Networks Perturbed by Matched and Unmatched Disturbances: The Case of Directed Graphs

Sep 30, 2023

Abstract: This work presents a new method to design consensus controllers for perturbed double integrator systems whose interconnection is described by a directed graph containing a rooted spanning tree. We propose new robust controllers to solve the consensus and synchronization problems when the systems are under the effects of matched and unmatched disturbances. In both problems, we present simple continuous controllers, whose integral actions allow us to handle the disturbances. A rigorous stability analysis based on Lyapunov's direct method for unperturbed networked systems is presented. To assess the performance of our result, a representative simulation study is presented.

PSO-Based Optimal Coverage Path Planning for Surface Defect Inspection of 3C Components with a Robotic Line Scanner

Jul 10, 2023

Abstract: The automatic inspection of surface defects is an important task for quality control in the computers, communications, and consumer electronics (3C) industry. Conventional devices for defect inspection (viz. line-scan sensors) have a limited field of view; thus, a robot-aided defect inspection system needs to scan the object from multiple viewpoints. Optimally selecting the robot's viewpoints and planning a path is regarded as coverage path planning (CPP), a problem that enables inspecting the object's complete surface while reducing the scanning time and avoiding misdetection of defects. However, the development of CPP strategies for robotic line scanners has not been sufficiently studied by researchers. To fill this gap in the literature, in this paper, we present a new approach for robotic line scanners to detect surface defects of 3C free-form objects automatically. Our proposed solution consists of generating a local path by a new hybrid region segmentation method and an adaptive planning algorithm to ensure the coverage of the complete object surface. An optimization method for the global path sequence is developed to maximize the scanning efficiency. To verify our proposed methodology, we conduct detailed simulation-based and experimental studies on various free-form workpieces, and compare its performance with a state-of-the-art solution. The reported results demonstrate the feasibility and effectiveness of our approach.

Efficient Robot Skill Learning with Imitation from a Single Video for Contact-Rich Fabric Manipulation

Apr 24, 2023

Abstract: Classical policy search algorithms for robotics typically require performing extensive explorations, which are time-consuming and expensive to implement with real physical platforms. To facilitate the efficient learning of robot manipulation skills, in this work, we propose a new approach comprising three modules: (1) learning of general prior knowledge with random explorations in simulation, including state representations, dynamic models, and the constrained action space of the task; (2) extraction of a state alignment-based reward function from a single demonstration video; (3) real-time optimization of the imitation policy under systematic safety constraints with sampling-based model predictive control. This solution results in an efficient one-shot imitation-from-video strategy that simplifies the learning and execution of robot skills in real applications. Specifically, we learn priors in a scene of a task family and then deploy the policy in a novel scene immediately following a single demonstration, preventing time-consuming and risky explorations in the environment. As we do not make a strong assumption of dynamic consistency between the scenes, learning priors can be conducted in simulation to avoid collecting data in real-world circumstances. We evaluate the effectiveness of our approach in the context of contact-rich fabric manipulation, which is a common scenario in industrial and domestic tasks. Detailed numerical simulations and real-world hardware experiments reveal that our method can achieve rapid skill acquisition for challenging manipulation tasks.

Self-Reconfigurable Soft-Rigid Mobile Agent with Variable Stiffness and Adaptive Morphology

Oct 16, 2022

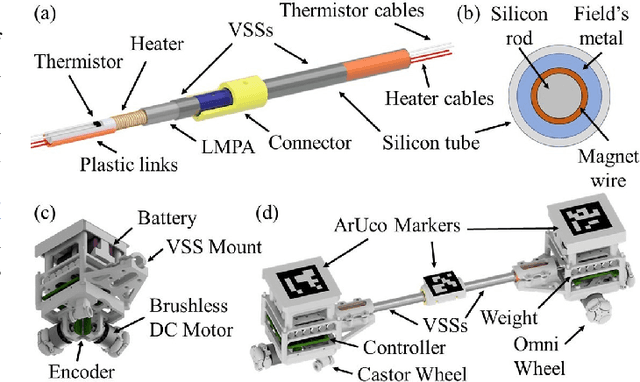

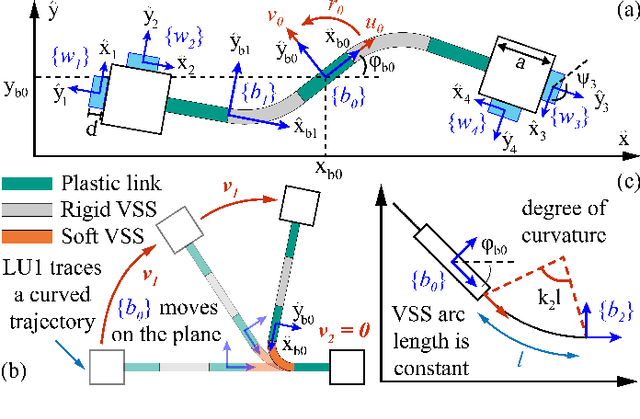

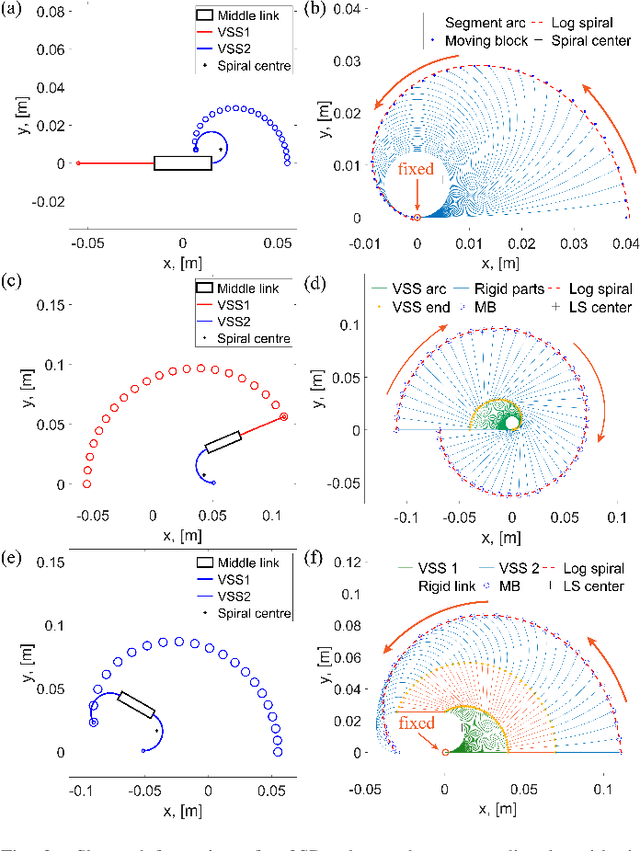

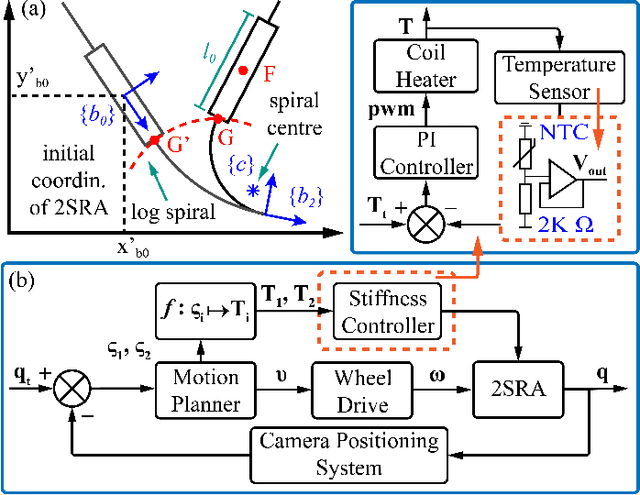

Abstract: In this paper, we propose a novel design of a hybrid mobile robot with controllable stiffness and deformable shape. Compared to conventional mobile agents, our system can switch between rigid and compliant phases by solidifying or melting Field's metal in its structure and, thus, alter its shape through the motion of its active components. In the soft state, the robot's main body can bend into circular arcs, which enables it to conform to surrounding curved objects. This variable geometry of the robot creates new motion modes which cannot be described by standard (i.e., fixed geometry) models. To this end, we develop a unified mathematical model that captures the differential kinematics of both rigid and soft states. An optimised control strategy is further proposed to select the most appropriate phase states and motion modes needed to reach a target pose-shape configuration. The performance of our new method is validated with numerical simulations and experiments conducted on a prototype system. The simulation source code is available in the GitHub repository at https://github.com/Louashka/2sr-agent-simulation.git.

A Dual-Arm Collaborative Framework for Dexterous Manipulation in Unstructured Environments with Contrastive Planning

Sep 13, 2022

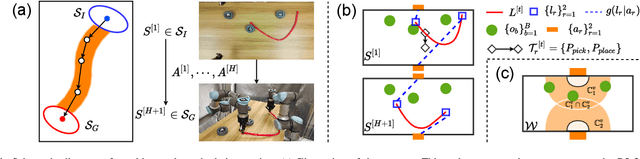

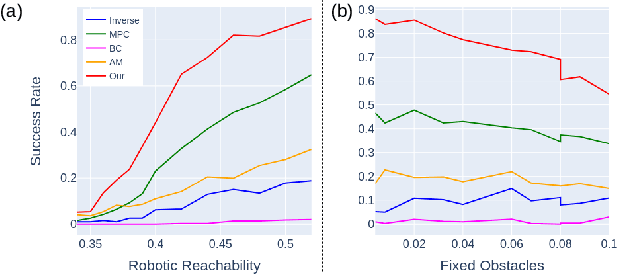

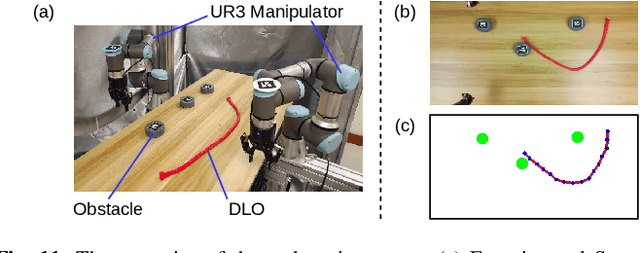

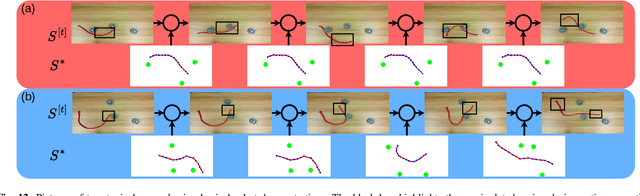

Abstract: Most object manipulation strategies for robots are based on the assumption that the object is rigid (i.e., with fixed geometry) and the goal's details have been fully specified (e.g., the exact target pose). However, there are many tasks that involve spatial relations in human environments where these conditions may be hard to satisfy, e.g., bending and placing a cable inside an unknown container. To develop advanced robotic manipulation capabilities in unstructured environments that avoid these assumptions, we propose a novel long-horizon framework that exploits contrastive planning in finding promising collaborative actions. Using simulation data collected by random actions, we learn an embedding model in a contrastive manner that encodes the spatio-temporal information from successful experiences, which facilitates the subgoal planning through clustering in the latent space. Based on the keypoint correspondence-based action parameterization, we design a leader-follower control scheme for the collaboration between dual arms. All models of our policy are automatically trained in simulation and can be directly transferred to real-world environments. To validate the proposed framework, we conduct a detailed experimental study on a complex scenario subject to environmental and reachability constraints in both simulation and real environments.
