Chenxing Jiang

WaterSplat-SLAM: Photorealistic Monocular SLAM in Underwater Environment

Apr 06, 2026

Abstract:Underwater monocular SLAM is a challenging problem with applications from autonomous underwater vehicles to marine archaeology. However, existing underwater SLAM methods struggle to produce maps with high-fidelity rendering. In this paper, we propose WaterSplat-SLAM, a novel monocular underwater SLAM system that achieves robust pose estimation and photorealistic dense mapping. Specifically, we couple semantic medium filtering into two-view 3D reconstruction prior to enable underwater-adapted camera tracking and depth estimation. Furthermore, we present a semantic-guided rendering and adaptive map management strategy with an online medium-aware Gaussian map, modeling underwater environment in a photorealistic and compact manner. Experiments on multiple underwater datasets demonstrate that WaterSplat-SLAM achieves robust camera tracking and high-fidelity rendering in underwater environments.

MG-Grasp: Metric-Scale Geometric 6-DoF Grasping Framework with Sparse RGB Observations

Mar 17, 2026

Abstract:Single-view RGB-D grasp detection remains a common choice in 6-DoF robotic grasping systems, which typically requires a depth sensor. While RGB-only 6-DoF grasp methods have been studied recently, their inaccurate geometric representation is not directly suitable for physically reliable robotic manipulation, thereby hindering reliable grasp generation. To address these limitations, we propose MG-Grasp, a novel depth-free 6-DoF grasping framework that achieves high-quality object grasping. Leveraging a two-view 3D foundation model with camera intrinsics and extrinsics, our method reconstructs metric-scale and multi-view consistent dense point clouds from sparse RGB images and generates stable 6-DoF grasps. Experiments on the GraspNet-1Billion dataset and in the real world demonstrate that MG-Grasp achieves state-of-the-art (SOTA) grasp performance among RGB-based 6-DoF grasping methods.

Geometry-Grounded Gaussian Splatting

Jan 25, 2026

Abstract:Gaussian Splatting (GS) has demonstrated impressive quality and efficiency in novel view synthesis. However, shape extraction from Gaussian primitives remains an open problem. Due to inadequate geometry parameterization and approximation, existing shape reconstruction methods suffer from poor multi-view consistency and are sensitive to floaters. In this paper, we present a rigorous theoretical derivation that establishes Gaussian primitives as a specific type of stochastic solids. This theoretical framework provides a principled foundation for Geometry-Grounded Gaussian Splatting by enabling the direct treatment of Gaussian primitives as explicit geometric representations. Using the volumetric nature of stochastic solids, our method efficiently renders high-quality depth maps for fine-grained geometry extraction. Experiments show that our method achieves the best shape reconstruction results among all Gaussian Splatting-based methods on public datasets.

WING: Wheel-Inertial Neural Odometry with Ground Manifold Constraints

Jul 14, 2024

Abstract:In this paper, we propose an interoceptive-only odometry system for ground robots with neural network processing and soft constraints based on the assumption of a globally continuous ground manifold. Exteroceptive sensors such as cameras, GPS and LiDAR may encounter difficulties in scenarios with poor illumination, indoor environments, dusty areas and straight tunnels. Therefore, improving the pose estimation accuracy using only interoceptive sensors is important to enhance the reliability of the navigation system even in the degraded scenarios mentioned above. However, interoceptive sensors like IMU and wheel encoders suffer from large drift due to noisy measurements. To overcome these challenges, the proposed system trains deep neural networks to correct the measurements from IMU and wheel encoders, while considering their uncertainty. Moreover, because ground robots can only travel on the ground, we model the ground surface as a globally continuous manifold using a dual cubic B-spline manifold and use this soft constraint to further improve the estimation accuracy. A novel space-based sliding-window filtering framework is proposed to fully exploit the $C^2$ continuity of ground manifold soft constraints and fuse all the information from raw measurements and neural networks in a yaw-independent attitude convention. Extensive experiments demonstrate that our proposed approach can outperform state-of-the-art learning-based interoceptive-only odometry methods.

H3-Mapping: Quasi-Heterogeneous Feature Grids for Real-time Dense Mapping Using Hierarchical Hybrid Representation

Mar 16, 2024

Abstract:In recent years, implicit online dense mapping methods have achieved high-quality reconstruction results, showcasing great potential in robotics, AR/VR, and digital twins applications. However, existing methods struggle with slow texture modeling, which limits their real-time performance. To address these limitations, we propose a NeRF-based dense mapping method that enables faster and higher-quality reconstruction. To improve texture modeling, we introduce quasi-heterogeneous feature grids, which inherit the fast querying ability of uniform feature grids while adapting to varying levels of texture complexity. In addition, we present a gradient-aided coverage-maximizing strategy for keyframe selection that enables the selected keyframes to exhibit a closer focus on rich-textured regions and a broader scope for weak-textured areas. Experimental results demonstrate that our method surpasses existing NeRF-based approaches in texture fidelity, geometry accuracy, and time consumption. The code for our method will be available at: https://github.com/SYSU-STAR/H3-Mapping.

H2-Mapping: Real-time Dense Mapping Using Hierarchical Hybrid Representation

Jun 05, 2023

Abstract:Constructing a high-quality dense map in real-time is essential for robotics, AR/VR, and digital twins applications. As Neural Radiance Field (NeRF) greatly improves the mapping performance, in this paper, we propose a NeRF-based mapping method that enables higher-quality reconstruction and real-time capability even on edge computers. Specifically, we propose a novel hierarchical hybrid representation that leverages implicit multiresolution hash encoding aided by explicit octree SDF priors, describing the scene at different levels of detail. This representation allows for fast scene geometry initialization and makes scene geometry easier to learn. Besides, we present a coverage-maximizing keyframe selection strategy to address the forgetting issue and enhance mapping quality, particularly in marginal areas. To the best of our knowledge, our method is the first to achieve high-quality NeRF-based mapping on edge computers of handheld devices and quadrotors in real-time. Experiments demonstrate that our method outperforms existing NeRF-based mapping methods in geometry accuracy, texture realism, and time consumption. The code will be released at: https://github.com/SYSU-STAR/H2-Mapping

DIDO: Deep Inertial Quadrotor Dynamical Odometry

Mar 07, 2022

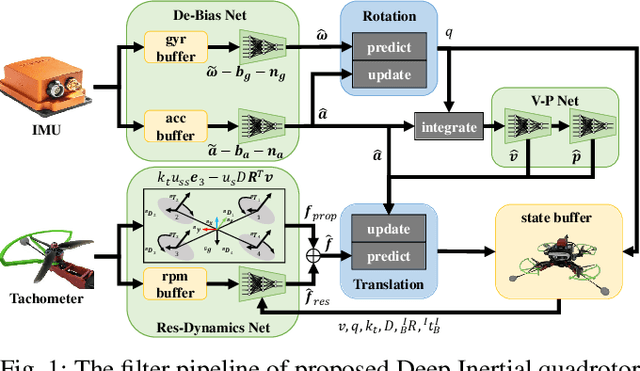

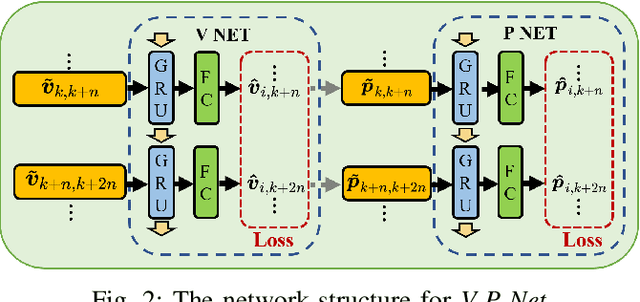

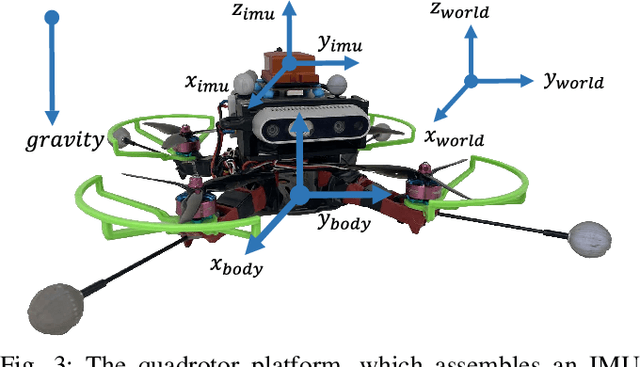

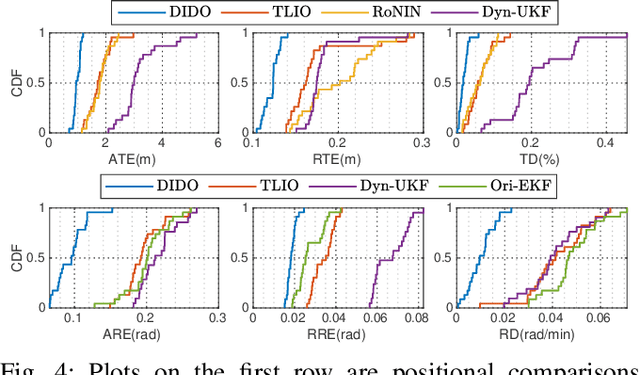

Abstract:In this work, we propose an interoceptive-only state estimation system for a quadrotor with deep neural network processing, where the quadrotor dynamics is considered as a perceptive supplement of the inertial kinematics. To improve the precision of multi-sensor fusion, we train cascaded networks on real-world quadrotor flight data to learn IMU kinematic properties, quadrotor dynamic characteristics, and motion states of the quadrotor along with their uncertainty information, respectively. This encoded information empowers us to address the issues of IMU bias stability, dynamic constraints, and multi-sensor calibration during sensor fusion. The above multi-source information is fused into a two-stage Extended Kalman Filter (EKF) framework for better estimation. Experiments have demonstrated the advantages of our proposed work over several conventional and learning-based methods.
