"Time": models, code, and papers

A Novel Framework for Neural Architecture Search in the Hill Climbing Domain

Feb 22, 2021

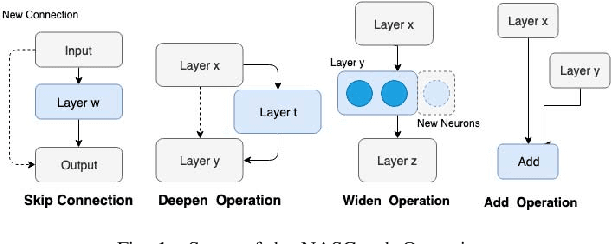

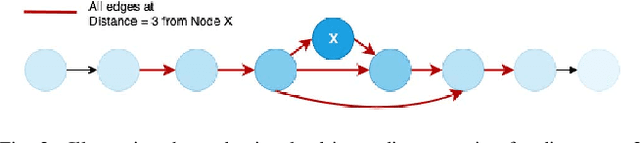

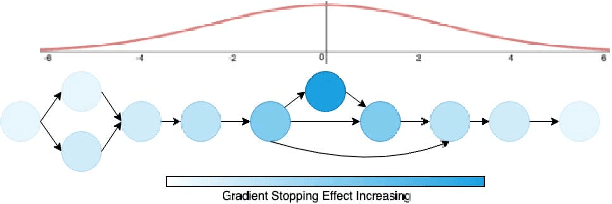

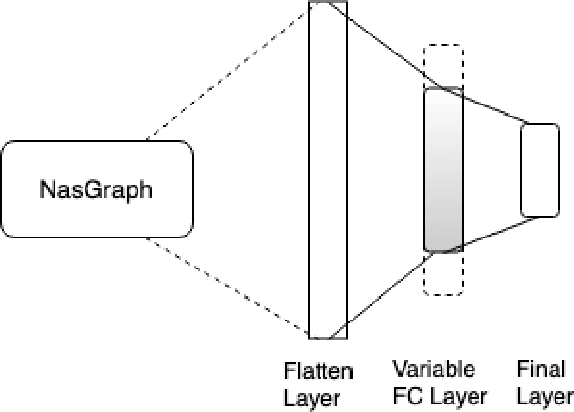

Neural networks have long been used to solve complex problems in the image domain, yet designing them still requires manual expertise. Furthermore, techniques for automatically generating a suitable deep learning architecture for a given dataset have frequently relied on reinforcement learning and evolutionary methods, which demand extensive computational resources and time. We propose a new framework for neural architecture search based on a hill-climbing procedure using morphism operators that makes use of a novel gradient update scheme. The update is based on the aging of neural network layers and reduces the overall training time. This technique can search a broader search space, which subsequently yields competitive results. We achieve a 4.96% error rate on the CIFAR-10 dataset in 19.4 hours of training on a single GPU.
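The hill-climbing loop described in this abstract can be sketched on a toy problem. This is a minimal illustration, not the authors' implementation: the architecture encoding (a tuple of layer widths), the `widen` and `deepen` operators, and the `toy_score` function are all hypothetical stand-ins, and the aging-based gradient update is not modelled at all.

```python
from typing import Callable, List, Tuple

Arch = Tuple[int, ...]  # toy encoding: widths of successive layers (assumption)


def widen(arch: Arch, i: int) -> Arch:
    """Morphism-like operator (hypothetical): double the width of layer i."""
    return arch[:i] + (arch[i] * 2,) + arch[i + 1:]


def deepen(arch: Arch, i: int) -> Arch:
    """Morphism-like operator (hypothetical): insert a copy of layer i after it."""
    return arch[:i + 1] + (arch[i],) + arch[i + 1:]


def neighbours(arch: Arch) -> List[Arch]:
    """All architectures reachable by applying one operator to one layer."""
    out: List[Arch] = []
    for i in range(len(arch)):
        out.append(widen(arch, i))
        out.append(deepen(arch, i))
    return out


def toy_score(arch: Arch) -> float:
    """Hypothetical proxy for validation accuracy; a real search would train here."""
    capacity = sum(arch)
    depth = len(arch)
    return -abs(capacity - 256) / 256 - 0.05 * abs(depth - 4)


def hill_climb(start: Arch, score: Callable[[Arch], float],
               max_steps: int = 20) -> Tuple[Arch, float]:
    """Move to the best-scoring neighbour until no neighbour improves the score."""
    current, best = start, score(start)
    for _ in range(max_steps):
        candidate = max(neighbours(current), key=score)
        candidate_score = score(candidate)
        if candidate_score <= best:
            break  # local optimum: no single operator application helps
        current, best = candidate, candidate_score
    return current, best


if __name__ == "__main__":
    arch, value = hill_climb((16, 16), toy_score)
    print(arch, value)
```

Hill climbing only guarantees a local optimum with respect to the chosen operators, which is why the breadth of the operator set matters for the quality of the final architecture.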

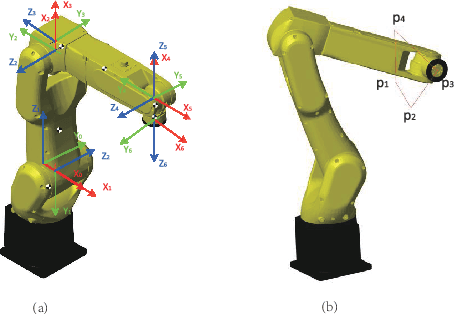

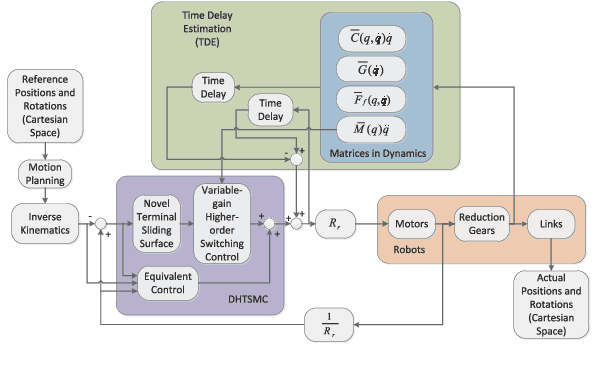

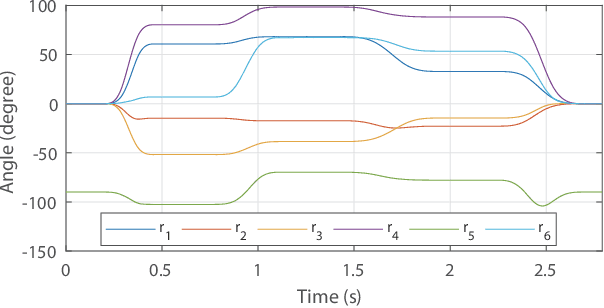

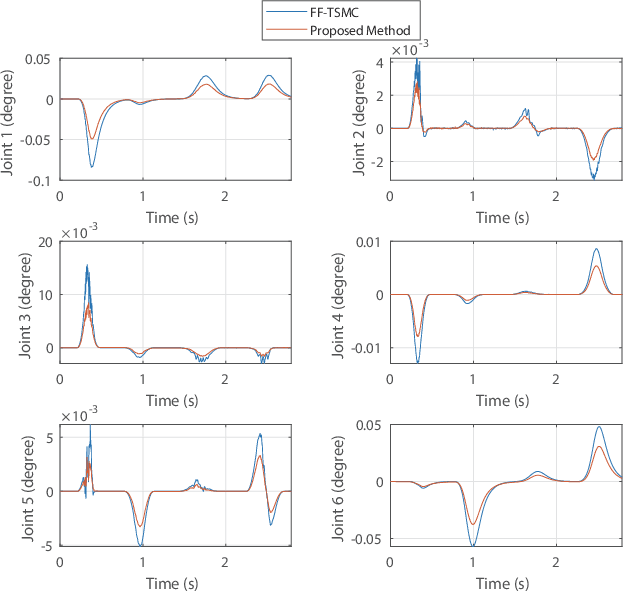

Feedback-based Digital Higher-order Terminal Sliding Mode for 6-DOF Industrial Manipulators

Feb 06, 2021

The precise motion control of a multi-degree-of-freedom (DOF) robot manipulator is always challenging due to its nonlinear dynamics, disturbances, and uncertainties. Because most manipulators are controlled by digital signals, a novel higher-order sliding mode controller in discrete-time form with time delay estimation is proposed in this paper. The dynamic model of the manipulator used in the design allows proper handling of the nonlinearities, uncertainties, and disturbances involved in the problem. Specifically, parametric uncertainties and disturbances are handled by the time delay estimation, and the nonlinearity of the manipulator is addressed by the feedback structure of the controller. The combination of a terminal sliding mode surface and a higher-order control scheme in the controller guarantees a fast response with a small chattering amplitude. Moreover, the controller is designed with a modified sliding mode surface and a variable-gain structure, so that its performance is further enhanced. We also analyze the condition that guarantees the stability of the closed-loop system. Finally, the simulation and experimental results show that the proposed control scheme achieves precise performance on a robot manipulator system.

Energy-Efficient Resource Allocation in Massive MIMO-NOMA Networks with Wireless Power Transfer: A Distributed ADMM Approach

Mar 24, 2021

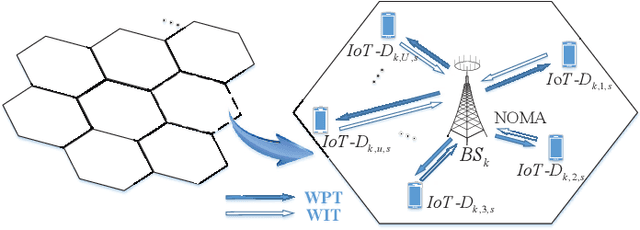

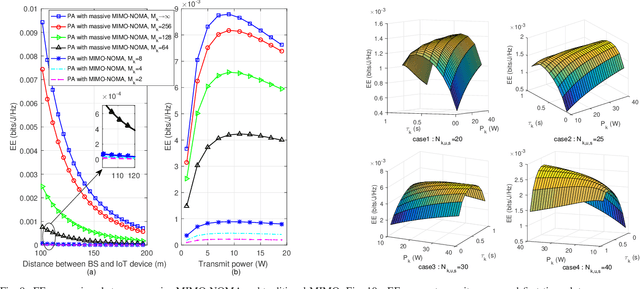

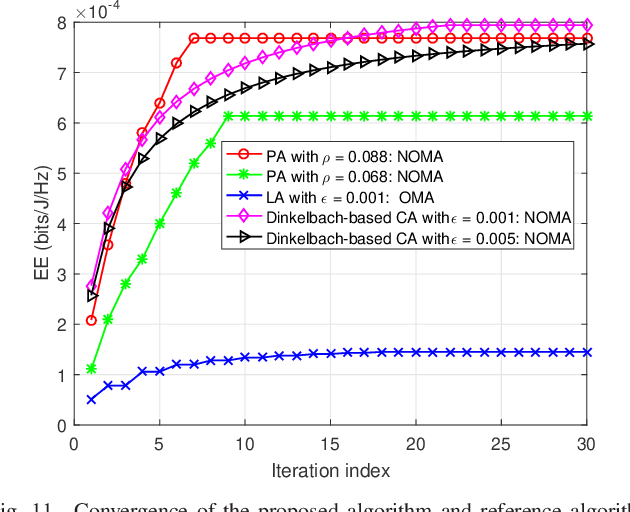

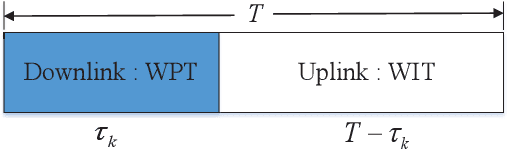

In multicell massive multiple-input multiple-output (MIMO) non-orthogonal multiple access (NOMA) networks, base stations (BSs) with multiple antennas deliver their radio frequency energy in the downlink, and Internet-of-Things (IoT) devices use their harvested energy to support uplink data transmission. This paper investigates the energy efficiency (EE) problem for multicell massive MIMO NOMA networks with wireless power transfer (WPT). To maximize the EE of the network, we propose a novel joint power, time, antenna selection, and subcarrier resource allocation scheme, which can properly allocate the time for energy harvesting and data transmission. Both perfect and imperfect channel state information (CSI) are considered, and their corresponding EE performance is analyzed. Under quality-of-service (QoS) requirements, an EE maximization problem is formulated, which is non-trivial due to its non-convexity. We first adopt nonlinear fractional programming methods to convert the problem into a convex one, and then develop a distributed alternating direction method of multipliers (ADMM)-based approach to solve it. Simulation results demonstrate that, compared to alternative methods, the proposed algorithm converges in fewer iterations and achieves better EE performance.
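The fractional-programming step mentioned in this abstract can be illustrated with Dinkelbach's method on a single-link toy problem. This is only a sketch under strong assumptions: one transmit power `p`, a hypothetical channel gain `h`, and a hypothetical circuit power `p_circuit`; it does not reproduce the paper's joint allocation or its ADMM stage.

```python
import math


def energy_efficiency(p: float, h: float, p_circuit: float) -> float:
    """Toy EE: achievable rate log2(1 + h*p) divided by total consumed power."""
    return math.log2(1.0 + h * p) / (p + p_circuit)


def dinkelbach_power(h: float, p_circuit: float, p_max: float,
                     tol: float = 1e-9, max_iter: int = 50):
    """Maximise the toy EE over 0 <= p <= p_max with Dinkelbach's iteration.

    Each iteration solves the concave subproblem
        max_p  log2(1 + h*p) - lam * (p + p_circuit)
    in closed form, then sets lam to the EE achieved by that p.
    """
    lam = 0.0
    p = p_max
    for _ in range(max_iter):
        if lam > 0.0:
            # Stationary point of the subproblem, clipped to the feasible range.
            p = 1.0 / (lam * math.log(2.0)) - 1.0 / h
            p = min(max(p, 0.0), p_max)
        else:
            p = p_max  # with lam = 0 the subproblem just maximises the rate
        rate = math.log2(1.0 + h * p)
        if abs(rate - lam * (p + p_circuit)) < tol:
            break  # subproblem optimum is ~0, so lam is the optimal ratio
        lam = rate / (p + p_circuit)
    return p, energy_efficiency(p, h, p_circuit)


if __name__ == "__main__":
    p_opt, ee_opt = dinkelbach_power(h=10.0, p_circuit=1.0, p_max=5.0)
    print(p_opt, ee_opt)
```

The point of the transformation is that each subproblem is concave in `p` even though the original ratio is not, so standard convex tools apply at every iteration.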

MR elasticity reconstruction using statistical physical modeling and explicit data-driven denoising regularizer

May 27, 2021

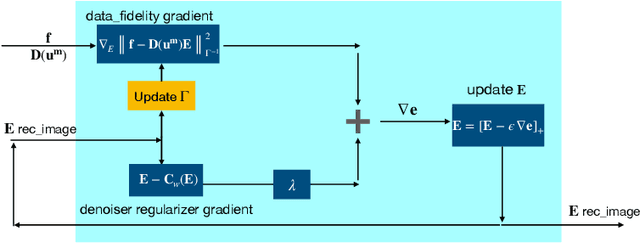

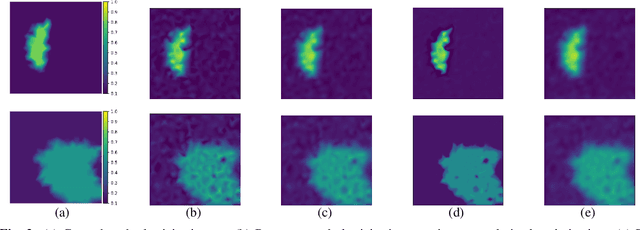

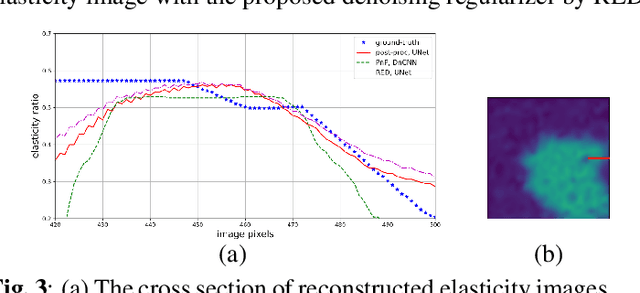

An elasticity image, which visualizes a quantitative map of tissue stiffness, can be reconstructed by solving an inverse problem. Classical methods for magnetic resonance elastography (MRE) solve a regularized optimization problem comprising a deterministic physical model as the data-fidelity term and a prior constraint as the regularization term. Improving elasticity reconstructions requires an appropriate prior on the underlying elasticity distribution, and this prior is not unique. This article proposes an infused approach for MRE reconstruction that integrates a statistical representation of the physical laws of harmonic motion with a learning-based prior. For the data-fidelity term, we use a statistical linear-algebraic model of the equilibrium equations, and for the regularizer, data-driven regularization by denoising (RED) is utilized. In the proposed optimization paradigm, the regularizer gradient is simply replaced by the residual of a learned denoiser, leading to time-efficient computation and a convex explicit objective function. Simulation results of elasticity reconstruction verify the effectiveness of the proposed approach.
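The idea of replacing the regularizer gradient with a denoiser residual can be sketched in one dimension. Everything specific here is an assumption made for illustration: the data-fidelity term is a plain least-squares fit to the observation `y` rather than the paper's statistical physical model, and a 3-tap moving average stands in for the learned denoiser.

```python
from typing import List


def box_denoiser(x: List[float]) -> List[float]:
    """Stand-in denoiser (assumption): 3-tap moving average with edge replication."""
    n = len(x)
    return [(x[max(i - 1, 0)] + x[i] + x[min(i + 1, n - 1)]) / 3.0
            for i in range(n)]


def red_gradient_descent(y: List[float], lam: float = 1.0,
                         step: float = 0.3, iters: int = 200) -> List[float]:
    """Gradient descent on 0.5*||x - y||^2 + lam * rho(x).

    Following RED, the gradient of rho is taken to be the denoiser
    residual x - D(x), so no derivative of the denoiser is needed.
    """
    x = list(y)
    for _ in range(iters):
        dx = box_denoiser(x)
        x = [xi - step * ((xi - yi) + lam * (xi - di))
             for xi, yi, di in zip(x, y, dx)]
    return x


def roughness(x: List[float]) -> float:
    """Sum of squared differences between neighbouring samples."""
    return sum((b - a) ** 2 for a, b in zip(x, x[1:]))


if __name__ == "__main__":
    noisy = [0.0, 0.2, -0.1, 0.1, 1.0, 1.2, 0.9, 1.1]
    restored = red_gradient_descent(noisy)
    print(roughness(noisy), roughness(restored))
```

Each iteration costs one denoiser evaluation, which is the source of the time efficiency the abstract refers to.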

Optimal Estimator Design and Properties Analysis for Interconnected Systems with Asymmetric Information Structure

May 27, 2021

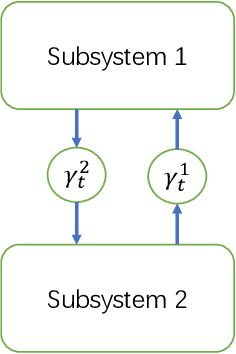

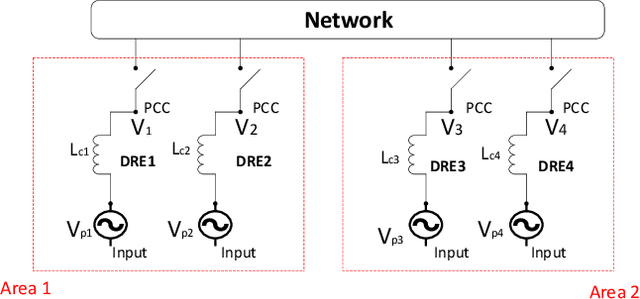

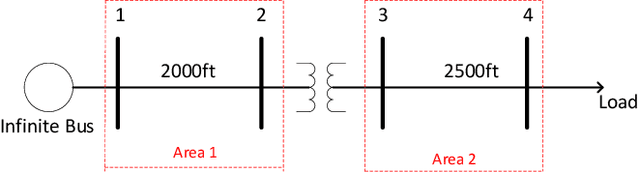

This paper studies the optimal state estimation problem for interconnected systems. Each subsystem can obtain its own measurement in real time, while the measurements transmitted between the subsystems suffer from random delay. The optimal estimator is analytically designed to minimize the conditional error covariance. Due to the random delay, the error covariance of the estimation is random. The boundedness of the expected error covariance (EEC) is analyzed. In particular, a new, easily verifiable condition is established for the boundedness of the EEC. Further, the properties of the EEC with respect to the delay probability are studied. We find that there exists a critical probability such that the EEC is bounded if the delay probability is below the critical probability. Also, lower and upper bounds of the critical probability are effectively computed. Finally, the proposed results are applied to a power system, and the effectiveness of the designed methods is illustrated by simulations.

You are AllSet: A Multiset Function Framework for Hypergraph Neural Networks

Jun 24, 2021

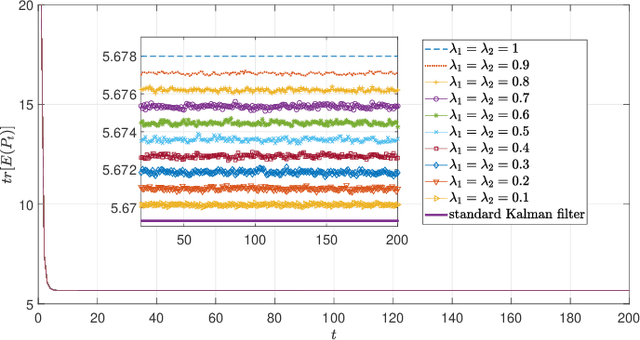

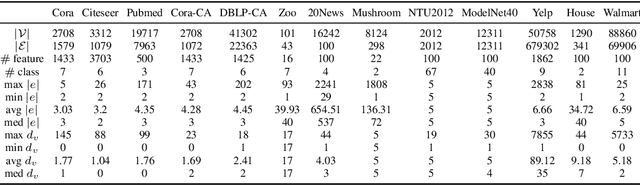

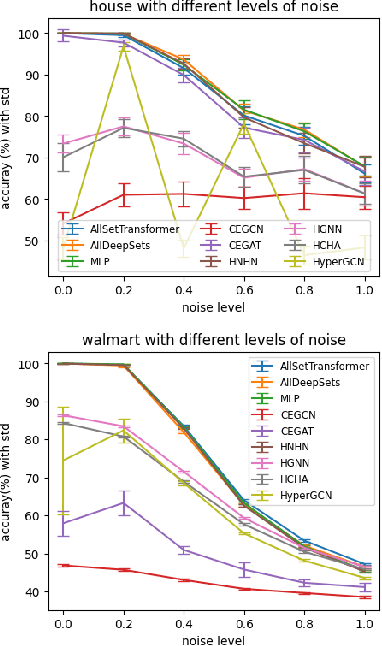

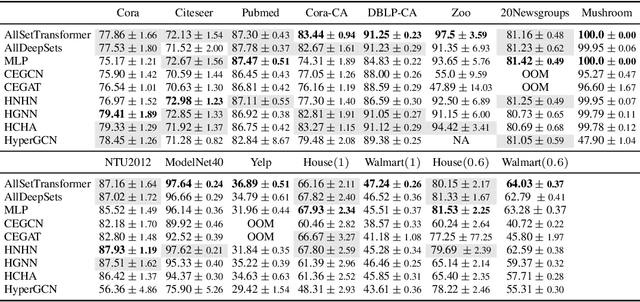

Hypergraphs are used to model higher-order interactions amongst agents and there exist many practically relevant instances of hypergraph datasets. To enable efficient processing of hypergraph-structured data, several hypergraph neural network platforms have been proposed for learning hypergraph properties and structure, with a special focus on node classification. However, almost all existing methods use heuristic propagation rules and offer suboptimal performance on many datasets. We propose AllSet, a new hypergraph neural network paradigm that represents a highly general framework for (hyper)graph neural networks and for the first time implements hypergraph neural network layers as compositions of two multiset functions that can be efficiently learned for each task and each dataset. Furthermore, AllSet draws on new connections between hypergraph neural networks and recent advances in deep learning of multiset functions. In particular, the proposed architecture utilizes Deep Sets and Set Transformer architectures that allow for significant modeling flexibility and offer high expressive power. To evaluate the performance of AllSet, we conduct the most extensive experiments to date involving ten known benchmarking datasets and three newly curated datasets that represent significant challenges for hypergraph node classification. The results demonstrate that AllSet has the unique ability to consistently either match or outperform all other hypergraph neural networks across the tested datasets. Our implementation and dataset will be released upon acceptance.
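The "composition of two multiset functions" described in this abstract can be sketched with scalar features and a Deep Sets style aggregator. This is a simplified illustration rather than the AllSet architecture itself: the fixed `phi` and `rho` callables stand in for learned networks, features are single floats, and the function names are hypothetical.

```python
import math
from typing import Callable, Dict, Iterable, List

Fn = Callable[[float], float]


def deep_set(items: Iterable[float], phi: Fn, rho: Fn) -> float:
    """Permutation-invariant multiset function rho(sum(phi(x) for x in items))."""
    return rho(sum(phi(x) for x in items))


def allset_like_layer(node_feats: Dict[str, float],
                      hyperedges: Dict[str, List[str]],
                      phi_v2e: Fn, rho_v2e: Fn,
                      phi_e2v: Fn, rho_e2v: Fn) -> Dict[str, float]:
    """One layer: nodes -> hyperedges, then hyperedges -> nodes."""
    # Step 1: each hyperedge aggregates the multiset of its member nodes' features.
    edge_feats = {
        e: deep_set((node_feats[v] for v in members), phi_v2e, rho_v2e)
        for e, members in hyperedges.items()
    }
    # Step 2: each node aggregates the multiset of its incident hyperedges' features.
    new_feats: Dict[str, float] = {}
    for v in node_feats:
        incident = [edge_feats[e] for e, members in hyperedges.items()
                    if v in members]
        new_feats[v] = deep_set(incident, phi_e2v, rho_e2v)
    return new_feats


if __name__ == "__main__":
    feats = {"a": 1.0, "b": 2.0, "c": 3.0}
    edges = {"e1": ["a", "b", "c"], "e2": ["b", "c"]}
    square = lambda x: x * x
    print(allset_like_layer(feats, edges, square, math.tanh, square, math.tanh))
```

Because both steps depend only on the multiset of inputs, reordering the members of a hyperedge leaves the output unchanged, which is the property any hypergraph layer needs.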

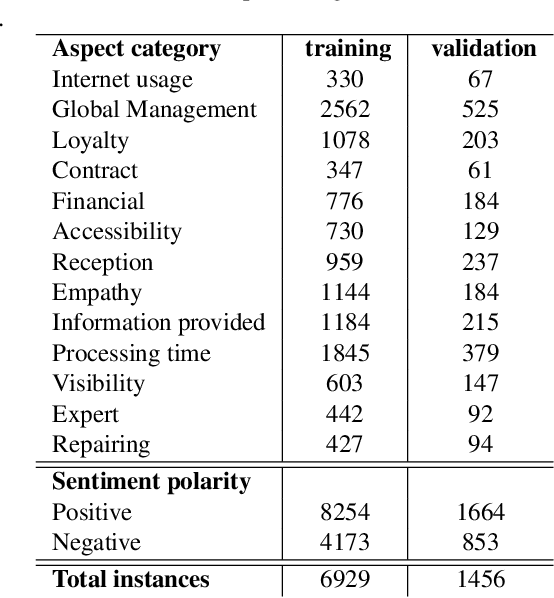

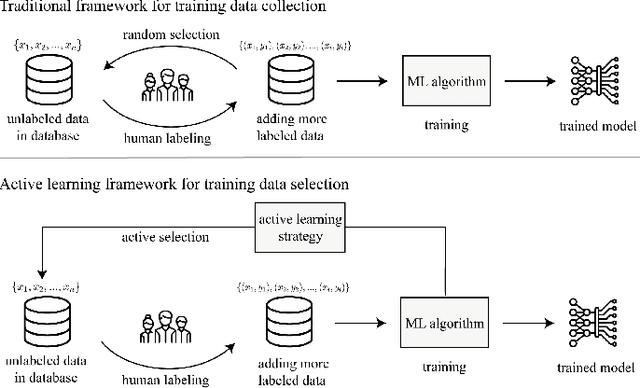

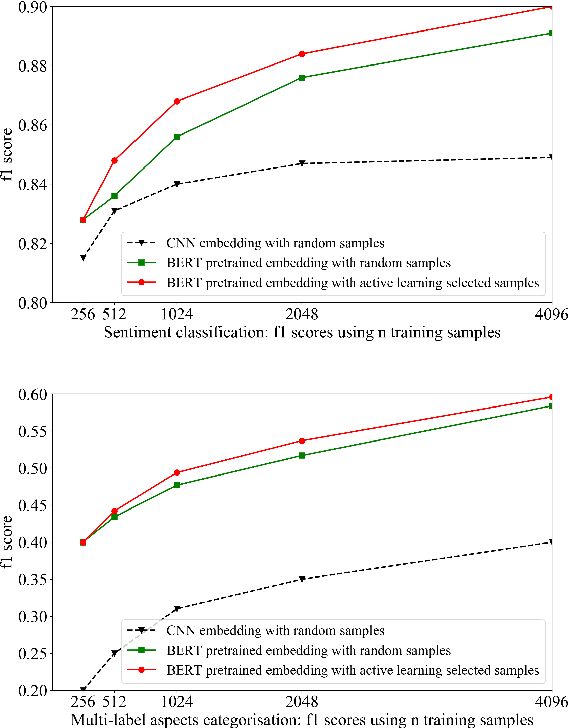

Understand customer reviews with less data and in short time: pretrained language representation and active learning

Oct 29, 2019

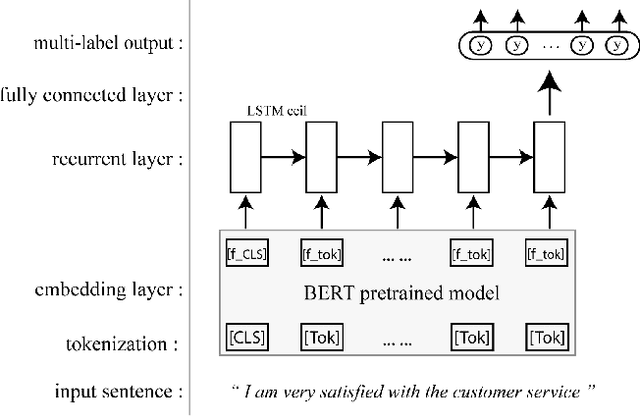

In this paper, we address customer review understanding problems using supervised machine learning approaches, in order to achieve fully automatic review aspect categorisation and sentiment analysis. In general, such supervised learning algorithms require domain-specific expert knowledge to generate high-quality labeled training data, and the cost of labeling can be very high. To achieve an in-production, machine-learning-enabled customer review analysis tool with only a limited amount of data and within a reasonable training data collection time, we propose to use a pre-trained language representation to boost model performance and an active learning framework to accelerate the iterative training process. The results show that, with the integration of both components, fully automatic review analysis can be achieved at a much faster pace.
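The selection step of an active learning loop can be sketched as follows. The abstract does not say which query strategy is used, so entropy-based uncertainty sampling is an assumption here, and the review identifiers and probabilities are made up for illustration.

```python
import math
from typing import Dict, List


def entropy(probs: List[float]) -> float:
    """Shannon entropy of a predicted class distribution (natural log)."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def select_for_labeling(pool_probs: Dict[str, List[float]], k: int) -> List[str]:
    """Return the k unlabeled items whose predictions are most uncertain.

    pool_probs maps an item id to the current model's class probabilities.
    """
    ranked = sorted(pool_probs, key=lambda item: entropy(pool_probs[item]),
                    reverse=True)
    return ranked[:k]


if __name__ == "__main__":
    pool = {
        "review-1": [0.50, 0.50],  # model is undecided
        "review-2": [0.90, 0.10],
        "review-3": [0.99, 0.01],  # model is confident
    }
    print(select_for_labeling(pool, k=2))
```

In a full loop, the selected reviews would be sent to annotators, added to the training set, and the model retrained before the next selection round.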

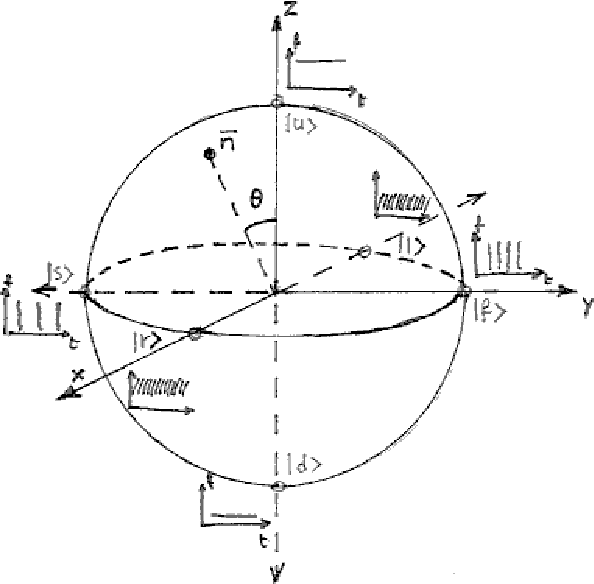

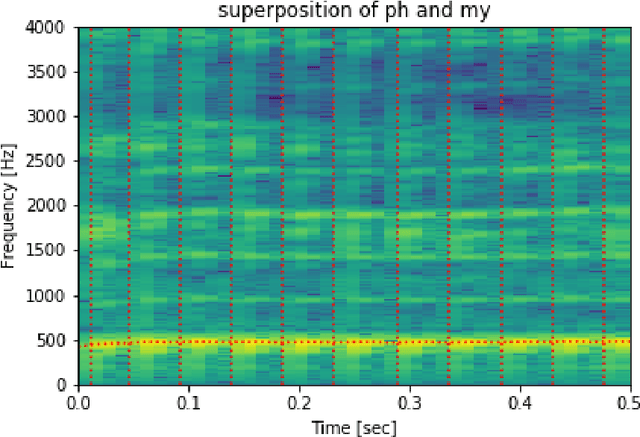

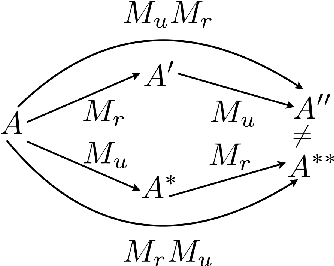

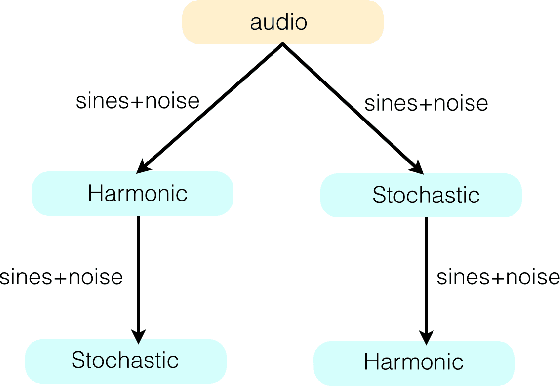

Quanta in sound, the sound of quanta: a voice-informed quantum theoretical perspective on sound

May 22, 2021

Humans have a privileged, embodied way to explore the world of sounds, through vocal imitation. The Quantum Vocal Theory of Sounds (QVTS) starts from the assumption that any sound can be expressed and described as the evolution of a superposition of vocal states, i.e., phonation, turbulence, and supraglottal myoelastic vibrations. The postulates of quantum mechanics, with the notions of observable, measurement, and time evolution of state, provide a model that can be used for sound processing, in both directions of analysis and synthesis. QVTS can give a quantum-theoretic explanation to some auditory streaming phenomena, eventually leading to practical solutions of relevant sound-processing problems, or it can be creatively exploited to manipulate superpositions of sonic elements. Perhaps more importantly, QVTS may be a fertile ground to host a dialogue between physicists, computer scientists, musicians, and sound designers, possibly giving us unheard manifestations of human creativity.

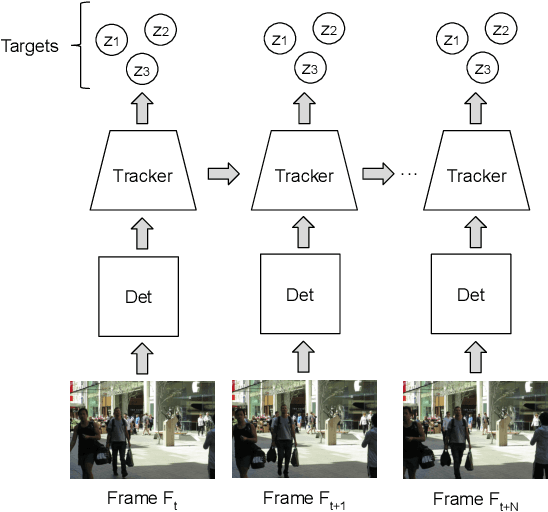

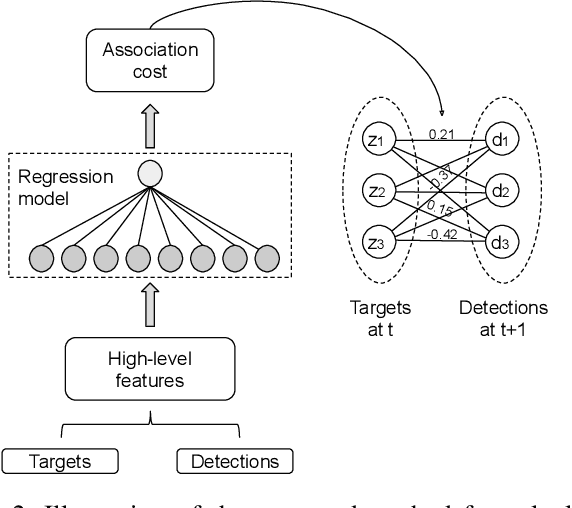

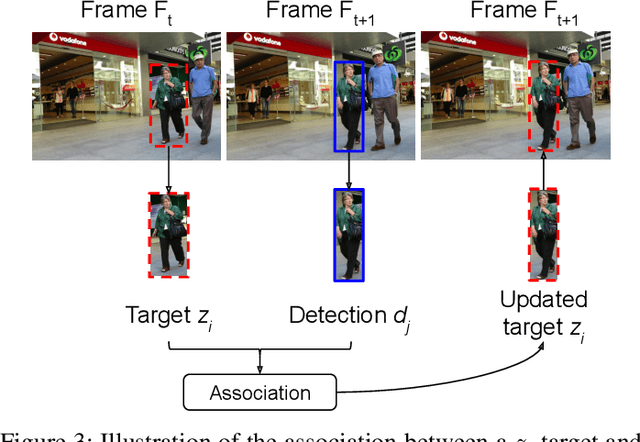

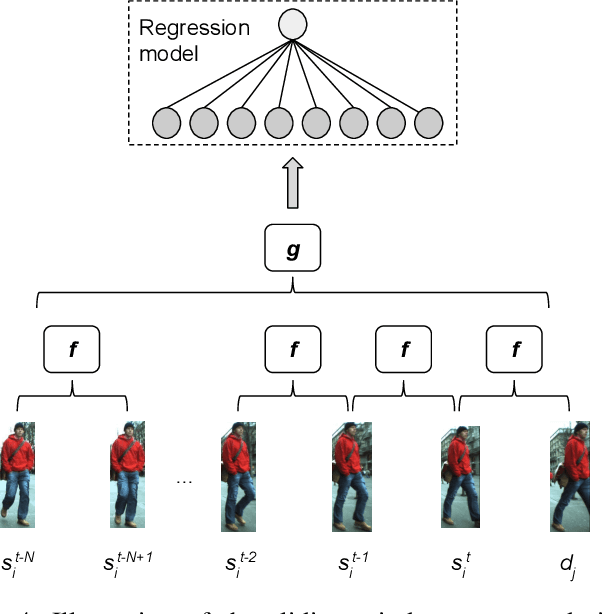

Learning to associate detections for real-time multiple object tracking

Jul 12, 2020

With the recent advances in the object detection research field, tracking-by-detection has become the leading paradigm adopted by multi-object tracking algorithms. By extracting different features from detected objects, those algorithms can estimate the objects' similarities and association patterns along successive frames. However, since the similarity functions applied by tracking algorithms are handcrafted, it is difficult to employ them in new contexts. In this study, we investigate the use of artificial neural networks to learn a similarity function that can be used among detections. During training, the networks were presented with correct and incorrect association patterns, sampled from a pedestrian tracking data set. To this end, different combinations of motion and appearance features have been explored. Finally, a trained network has been inserted into a multiple-object tracking framework, which has been assessed on the MOT Challenge benchmark. Throughout the experiments, the proposed tracker matched the results obtained by state-of-the-art methods and ran 58% faster than a recent, similar method used as a baseline.
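The association step that consumes a similarity function can be sketched as below. This is an illustration under stated assumptions, not the tracker from the paper: a handcrafted intersection-over-union (`iou`) stands in for the learned similarity network, and greedy matching with a hypothetical threshold stands in for whatever assignment procedure the framework actually uses.

```python
from typing import Callable, Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0.0 else 0.0


def associate(tracks: List[Box], detections: List[Box],
              similarity: Callable[[Box, Box], float],
              threshold: float = 0.3) -> Dict[int, int]:
    """Greedily match track indices to detection indices by descending similarity."""
    scored = sorted(
        ((similarity(t, d), i, j)
         for i, t in enumerate(tracks)
         for j, d in enumerate(detections)),
        reverse=True,
    )
    matches: Dict[int, int] = {}
    used_detections = set()
    for score, i, j in scored:
        if score < threshold:
            break  # remaining pairs are too dissimilar to associate
        if i in matches or j in used_detections:
            continue  # each track and detection is matched at most once
        matches[i] = j
        used_detections.add(j)
    return matches


if __name__ == "__main__":
    tracks = [(0, 0, 10, 10), (20, 20, 30, 30)]
    detections = [(21, 21, 31, 31), (1, 0, 11, 10), (100, 100, 110, 110)]
    print(associate(tracks, detections, iou))
```

Because `similarity` is passed in as a callable, a trained network's scoring function could be substituted for `iou` without changing the matching code.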

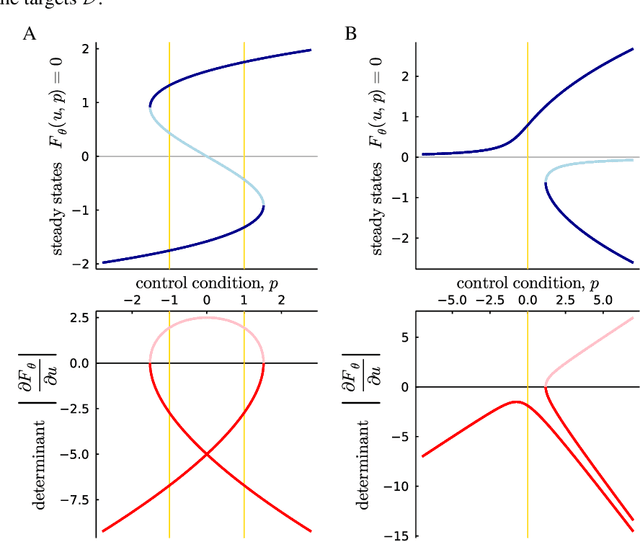

Parameter Inference with Bifurcation Diagrams

Jun 11, 2021

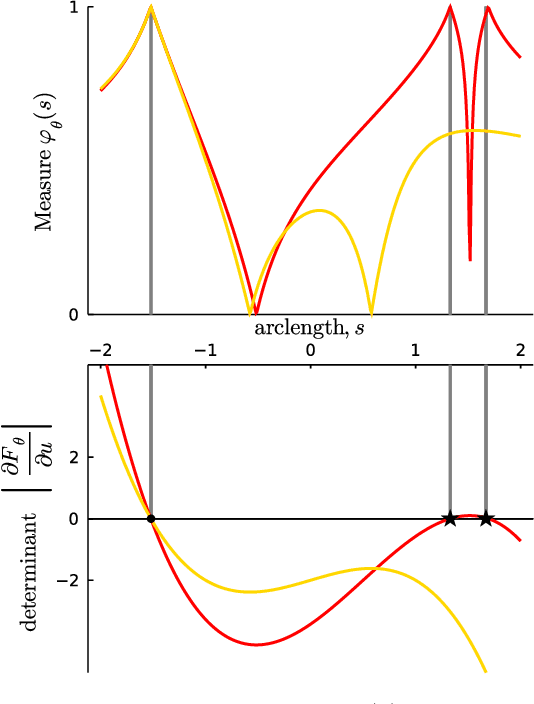

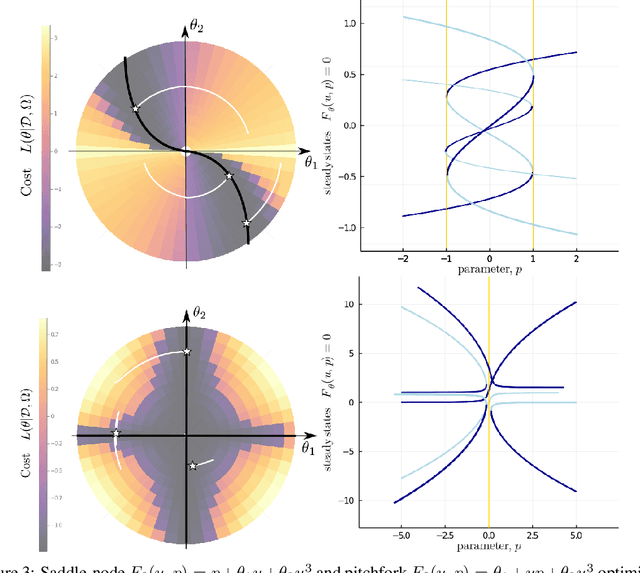

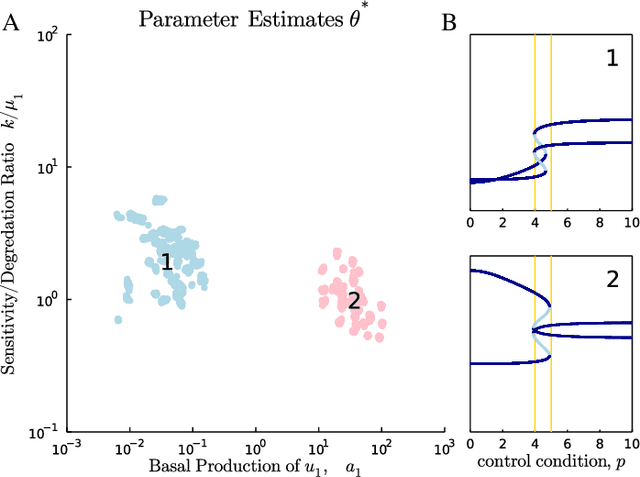

Estimation of parameters in differential equation models can be achieved by applying learning algorithms to quantitative time-series data. However, sometimes it is only possible to measure qualitative changes of a system in response to a controlled condition. In dynamical systems theory, such change points are known as bifurcations and lie on a function of the controlled condition called the bifurcation diagram. In this work, we propose a gradient-based semi-supervised approach for inferring the parameters of differential equations that produce a user-specified bifurcation diagram. The cost function contains a supervised error term that is minimal when the model bifurcations match the specified targets and an unsupervised bifurcation measure which has gradients that push optimisers towards bifurcating parameter regimes. The gradients can be computed without the need to differentiate through the operations of the solver that was used to compute the diagram. We demonstrate parameter inference with minimal models which explore the space of saddle-node and pitchfork diagrams and the genetic toggle switch from synthetic biology. Furthermore, the cost landscape allows us to organise models in terms of topological and geometric equivalence.
