"Time": models, code, and papers

A Zero-Shot Adaptive Quadcopter Controller

Sep 19, 2022

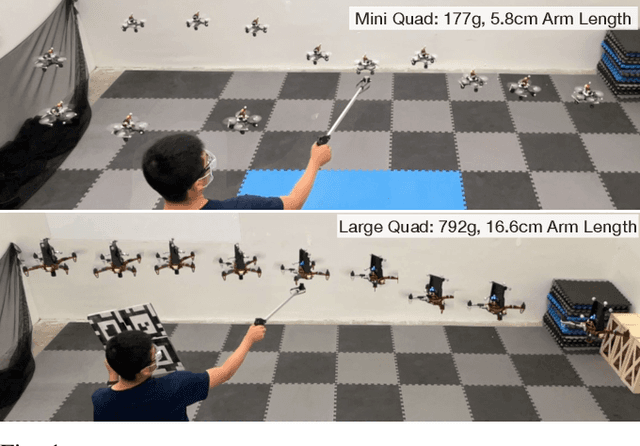

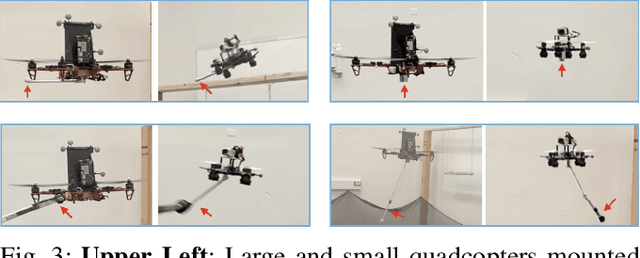

This paper proposes a universal adaptive controller for quadcopters that can be deployed zero-shot to quadcopters with very different masses, arm lengths, and motor constants, and that also adapts rapidly to unknown disturbances at runtime. The core algorithmic idea is to learn a single policy that adapts online at test time, within one framework, not only to the disturbances applied to the drone but also to the robot's dynamics and hardware. We achieve this by training a neural network to estimate a latent representation of the robot and environment parameters, which conditions the behaviour of the controller, itself also a neural network. We train both networks exclusively in simulation, with the objective of flying the quadcopters to goal positions while avoiding crashes into the ground. We deploy the same simulation-trained controller, without any modification, on two quadcopters whose mass, inertia, and maximum motor speed differ by up to a factor of 4. In addition, we show rapid adaptation to sudden and large disturbances (up to 35.7%) in the mass and inertia of the quadcopters. We perform an extensive evaluation in both simulation and the physical world, where we outperform a state-of-the-art learning-based adaptive controller and a traditional PID controller tuned individually for each platform. Video results can be found at https://dz298.github.io/universal-drone-controller/.
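The conditioning described above can be sketched as two small networks: an estimator that compresses a short history of states and actions into a latent vector z, and a policy that receives the current state, the goal error, and z. The layer sizes, the 12-dimensional state, the history length, the concatenation-based conditioning, and the class name `LatentConditionedController` are all hypothetical. The weights are random placeholders rather than parameters trained in simulation, so the outputs only demonstrate the data flow.

```python
import math
import random


def dense(weights, bias, x):
    """Fully connected layer: returns W x + b as a plain list."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def init_layer(n_in, n_out, rng):
    """Random weights standing in for trained parameters (illustration only)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


class LatentConditionedController:
    """Hypothetical sketch: an estimator maps recent (state, action) history to a
    latent vector z; the policy acts on (state, goal error, z)."""

    def __init__(self, state_dim=12, action_dim=4, history_len=5, latent_dim=8, seed=0):
        rng = random.Random(seed)
        self.history_len = history_len
        history_dim = history_len * (state_dim + action_dim)
        self.estimator = [init_layer(history_dim, 32, rng), init_layer(32, latent_dim, rng)]
        self.policy = [init_layer(state_dim + 3 + latent_dim, 64, rng), init_layer(64, action_dim, rng)]

    def estimate_latent(self, history):
        # Flatten the last history_len (state, action) pairs; expects exactly history_len entries.
        flat = [v for state, action in history[-self.history_len:] for v in state + action]
        hidden = [math.tanh(v) for v in dense(*self.estimator[0], flat)]
        return dense(*self.estimator[1], hidden)

    def act(self, state, goal, history):
        # Assumes the first three state entries are the position.
        goal_error = [g - p for g, p in zip(goal, state[:3])]
        z = self.estimate_latent(history)
        hidden = [math.tanh(v) for v in dense(*self.policy[0], state + goal_error + z)]
        # Sigmoid maps each output to a normalized motor command in (0, 1).
        return [1.0 / (1.0 + math.exp(-v)) for v in dense(*self.policy[1], hidden)]
```

Because the policy sees the platform only through z, the same weights could in principle drive different airframes, as long as the estimator infers a suitable z from recent history. That is the intuition behind zero-shot deployment, not something this untrained toy demonstrates.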

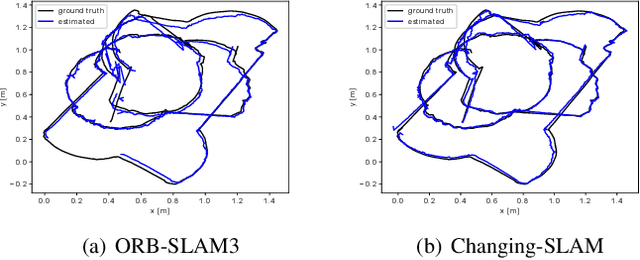

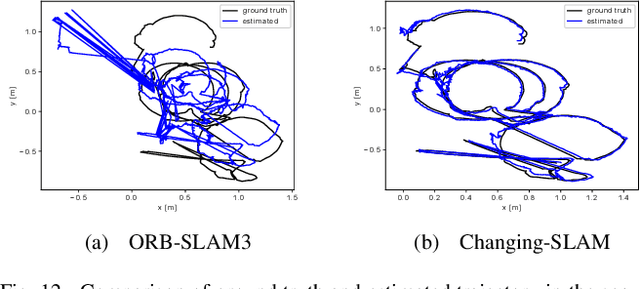

Visual Localization and Mapping in Dynamic and Changing Environments

Sep 21, 2022

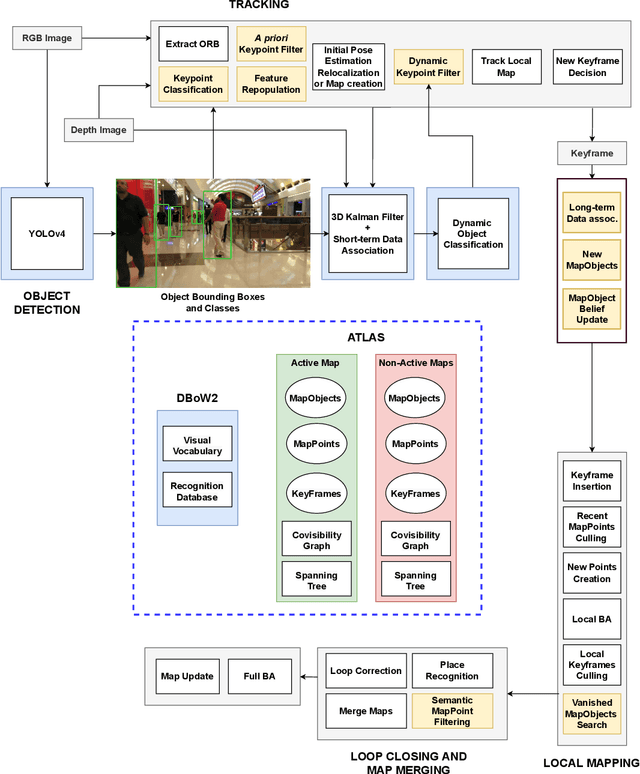

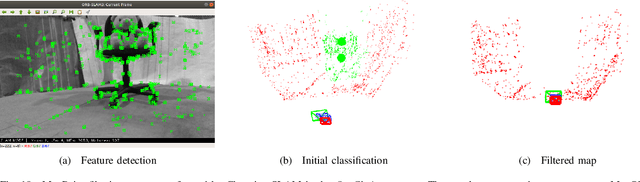

The real-world deployment of fully autonomous mobile robots depends on a robust SLAM (Simultaneous Localization and Mapping) system capable of handling both dynamic environments, where objects move in front of the robot, and changing environments, where objects are moved or replaced after the robot has already mapped the scene. This paper presents Changing-SLAM, a method for robust Visual SLAM in both dynamic and changing environments. This is achieved by combining a Bayesian filter with a long-term data association algorithm. It also employs an efficient algorithm for filtering dynamic keypoints based on object detection, which correctly identifies the features inside a bounding box that are not dynamic, preventing the feature depletion that could cause tracking loss. Furthermore, a new RGB-D dataset, called the PUC-USP dataset, was developed specifically for evaluating changing environments at the object level. Six sequences were recorded using a mobile robot, an RGB-D camera, and a motion capture system. The sequences were designed to capture different scenarios that could lead to tracking failure or map corruption. To the best of our knowledge, Changing-SLAM is the first Visual SLAM system that is robust to both dynamic and changing environments, does not assume a given camera pose or a known map, and operates in real time. The proposed method was evaluated on benchmark datasets and compared with other state-of-the-art methods, showing high accuracy.
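A naive object-detection filter discards every keypoint inside the bounding box of a potentially dynamic class, which can strip most features from a frame when the box is large. The sketch below instead keeps in-box keypoints that appear static. The static test used here (a reprojection residual below a threshold under the current pose estimate), the class list, the threshold, and all names are hypothetical stand-ins; the abstract does not specify Changing-SLAM's actual criterion.

```python
from dataclasses import dataclass


@dataclass
class Keypoint:
    x: float
    y: float
    residual: float  # reprojection error in pixels under the current pose estimate (assumed input)


@dataclass
class Detection:
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, kp):
        return self.x_min <= kp.x <= self.x_max and self.y_min <= kp.y <= self.y_max


DYNAMIC_LABELS = {"person", "dog", "car"}  # hypothetical set of potentially moving classes


def filter_keypoints(keypoints, detections, max_residual=2.0):
    """Keep keypoints outside dynamic boxes, plus in-box keypoints whose
    residual suggests they are static (hypothetical criterion)."""
    dynamic_boxes = [d for d in detections if d.label in DYNAMIC_LABELS]
    kept = []
    for kp in keypoints:
        in_dynamic_box = any(box.contains(kp) for box in dynamic_boxes)
        if not in_dynamic_box or kp.residual <= max_residual:
            kept.append(kp)
    return kept
```

For example, a background point visible between a person's arms falls inside the "person" box but has a low residual, so it survives and keeps contributing to tracking.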

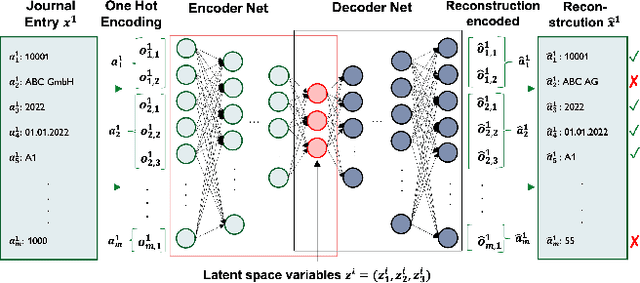

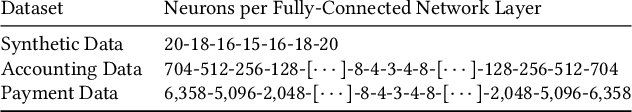

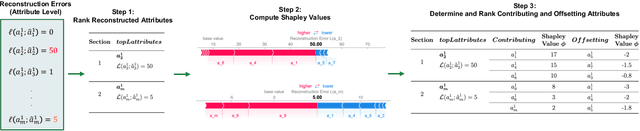

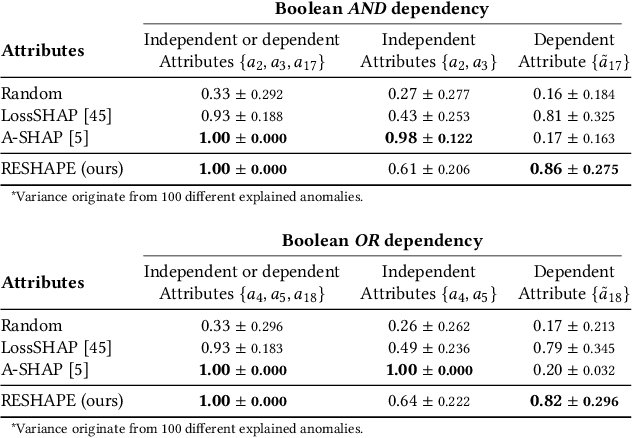

RESHAPE: Explaining Accounting Anomalies in Financial Statement Audits by enhancing SHapley Additive exPlanations

Sep 19, 2022

Detecting accounting anomalies is a recurrent challenge in financial statement audits. Recently, novel methods derived from Deep Learning (DL) have been proposed to audit the large volumes of accounting records underlying a statement. However, due to their vast number of parameters, such models have the drawback of being inherently opaque, and concealing a model's inner workings often hinders its real-world application. This holds particularly true in financial audits, since auditors must reasonably explain and justify their audit decisions. Various Explainable AI (XAI) techniques, e.g., SHapley Additive exPlanations (SHAP), have been proposed to address this challenge. However, in unsupervised DL, as often applied in financial audits, these methods explain the model output at the level of encoded variables. As a result, explanations of Autoencoder Neural Networks (AENNs) are often hard for human auditors to comprehend. To mitigate this drawback, we propose RESHAPE, which explains the model output at an aggregated attribute level. In addition, we introduce an evaluation framework to compare the versatility of XAI methods in auditing. Our experimental results provide empirical evidence that RESHAPE yields more versatile explanations than state-of-the-art baselines. We envision such attribute-level explanations as a necessary next step in the adoption of unsupervised DL techniques in financial auditing.
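Shapley values are additive, so one simple way to move from encoded-variable to attribute-level explanations is to sum the attributions of all encoded columns that belong to the same attribute (for example, the one-hot columns of a categorical field). The sketch below computes exact Shapley values by enumerating coalitions, replacing features outside a coalition with baseline values, and then sums them per attribute. The summation rule, the baseline-replacement value function, the toy linear model (standing in for, e.g., an autoencoder's reconstruction error), and the function names are assumptions for illustration; the abstract does not state how RESHAPE aggregates. Exact enumeration is exponential and only feasible for a handful of features.

```python
import math
from itertools import combinations


def shapley_values(model, x, baseline):
    """Exact Shapley values; features outside a coalition take their baseline values."""
    n = len(x)

    def value(coalition):
        z = [x[j] if j in coalition else baseline[j] for j in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Standard Shapley weight |S|! (n - |S| - 1)! / n!
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def aggregate_by_attribute(phi, column_to_attribute):
    """Sum encoded-column attributions per original attribute (hypothetical scheme)."""
    totals = {}
    for contribution, attribute in zip(phi, column_to_attribute):
        totals[attribute] = totals.get(attribute, 0.0) + contribution
    return totals
```

With columns `["amount", "currency", "currency", "account"]`, the two one-hot currency columns collapse into a single "currency" attribution, and the attribute totals still sum to `model(x) - model(baseline)`.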

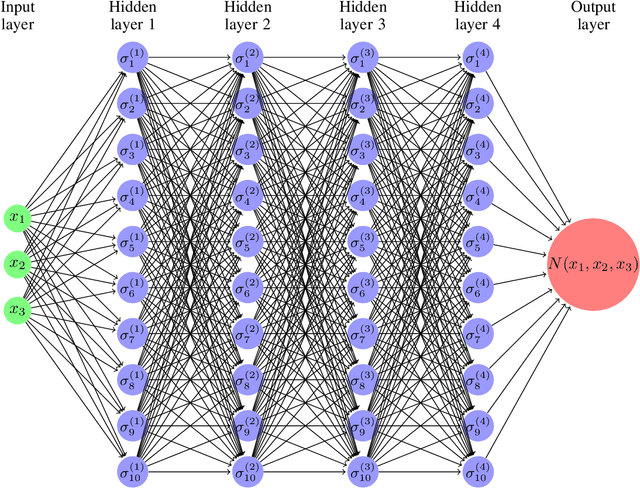

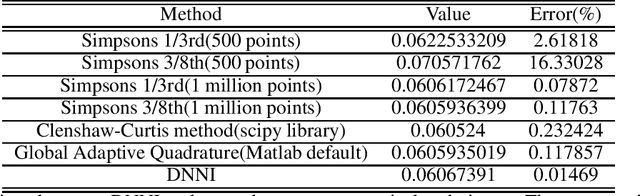

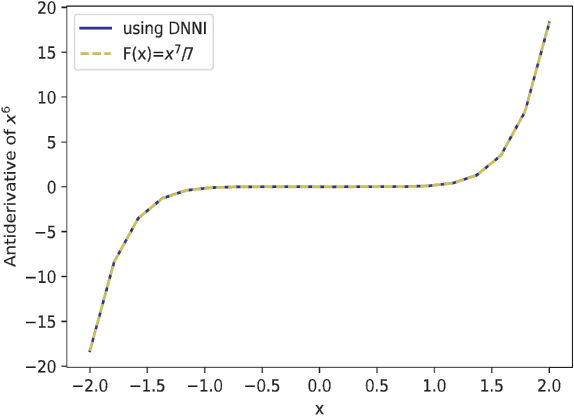

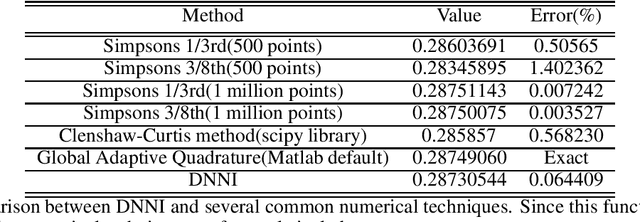

Computing Anti-Derivatives using Deep Neural Networks

Sep 19, 2022

This paper presents a novel algorithm that uses a deep neural network architecture to obtain the closed-form anti-derivative of a function. Mathematicians have developed several numerical techniques to approximate the values of definite integrals, but primitives, or indefinite integrals, are often non-elementary. Anti-derivatives are needed when an integrand has several parameters and the integral is required as a function of those parameters. No theoretical method can do this for an arbitrary function. Existing workarounds are primarily based on either curve fitting or an infinite series approximation of the integrand, which is then integrated analytically. Curve-fitting approximations are inaccurate for highly nonlinear functions and require a different approach for every problem. The infinite series approach, on the other hand, does not give a closed-form solution, and its truncated forms are often inaccurate. We claim that our algorithm, a single method for all integrals, can approximate anti-derivatives to any required accuracy. We have used this algorithm to obtain the anti-derivatives of several functions, including non-elementary and oscillatory integrals. This paper also applies the method to obtain closed-form expressions for elliptic integrals, Fermi-Dirac integrals, and cumulative distribution functions, and to decrease the computation time of the Galerkin method for differential equations.
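The abstract does not give the training objective. One plausible way to frame the idea, stated here as an assumption, is to let a network $F_\theta$ represent the candidate anti-derivative, fit its derivative to the integrand $f$ at collocation points $x_1, \dots, x_N$ in $[a, b]$, and pin the integration constant with $F_\theta(a) = 0$; the penalty weight $\lambda$ is hypothetical. When the integrand depends on parameters $p$, they could be fed to the network as extra inputs so that $F_\theta(x; p)$ is itself a function of those parameters.

```latex
\mathcal{L}(\theta) = \frac{1}{N}\sum_{i=1}^{N}\left(\frac{\partial F_\theta}{\partial x}(x_i) - f(x_i)\right)^2 + \lambda\, F_\theta(a)^2,
\qquad
\theta^\ast = \arg\min_\theta \mathcal{L}(\theta),
\qquad
\int_a^b f(x)\,dx \approx F_{\theta^\ast}(b) - F_{\theta^\ast}(a).
```

Because a trained network is a finite composition of elementary activation functions, $F_{\theta^\ast}$ is a closed-form expression in that sense, even when the exact anti-derivative is non-elementary.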

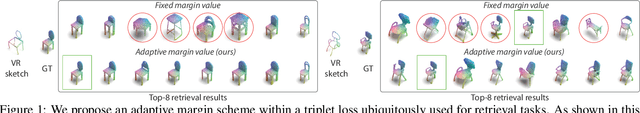

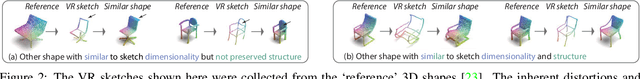

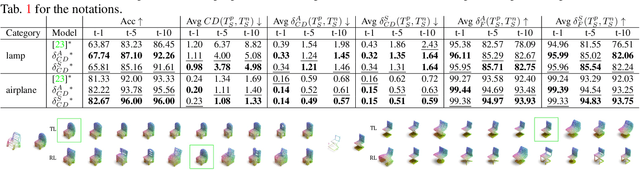

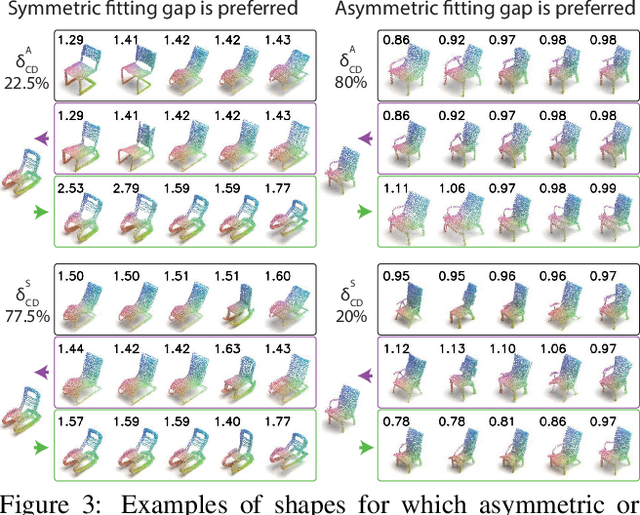

Structure-Aware 3D VR Sketch to 3D Shape Retrieval

Sep 19, 2022

We study the practical task of fine-grained 3D-VR-sketch-based 3D shape retrieval. This task is of particular interest because 2D sketches have been shown to be effective queries for 2D images, yet, due to the domain gap, it remains hard to achieve strong performance in 3D shape retrieval from 2D sketches. Recent work demonstrated the advantage of 3D VR sketching for this task. In our work, we focus on the challenge posed by the inherent inaccuracies of 3D VR sketches. We observe that retrieval results obtained with a fixed-margin triplet loss, commonly used for retrieval tasks, contain many irrelevant shapes and often only one or a few with a structure similar to the query. To mitigate this problem, we draw, for the first time, a connection between adaptive margin values and shape similarities. In particular, we propose a triplet loss with an adaptive margin driven by a "fitting gap", which measures the similarity of two shapes under structure-preserving deformations. We also conduct a user study confirming that this fitting gap is indeed a suitable criterion for evaluating the structural similarity of shapes. Furthermore, we introduce a dataset of 202 VR sketches of 202 3D shapes, drawn from memory rather than from observation. The code and data are available at https://github.com/Rowl1ng/Structure-Aware-VR-Sketch-Shape-Retrieval.
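A triplet loss with an adaptive margin can be written as $\max(0, d(a, p) - d(a, n) + m)$, where the margin $m$ depends on how structurally different the negative shape is from the positive one. In the sketch below the fitting gap is assumed to be a precomputed non-negative number, and the linear-then-clipped mapping from gap to margin (`scale`, `max_margin`) is a hypothetical choice, not the paper's formula.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_loss(anchor, positive, negative, fitting_gap, scale=1.0, max_margin=1.0):
    """Triplet loss whose margin grows with the fitting gap between the positive
    and negative shapes (hypothetical mapping: linear, clipped at max_margin)."""
    margin = min(max_margin, scale * fitting_gap)
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)
```

A structurally similar negative (small gap) is pushed away only weakly, while a structurally different one must be separated by a larger margin, so the embedding is not forced to separate shapes that are genuinely close.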

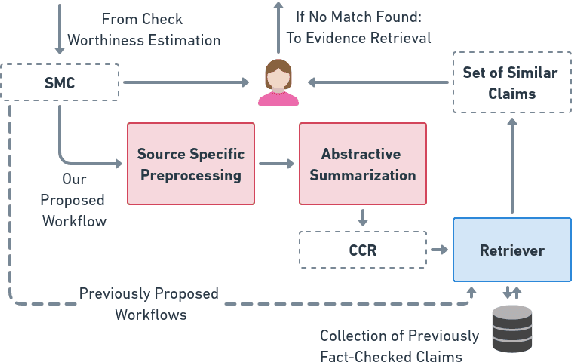

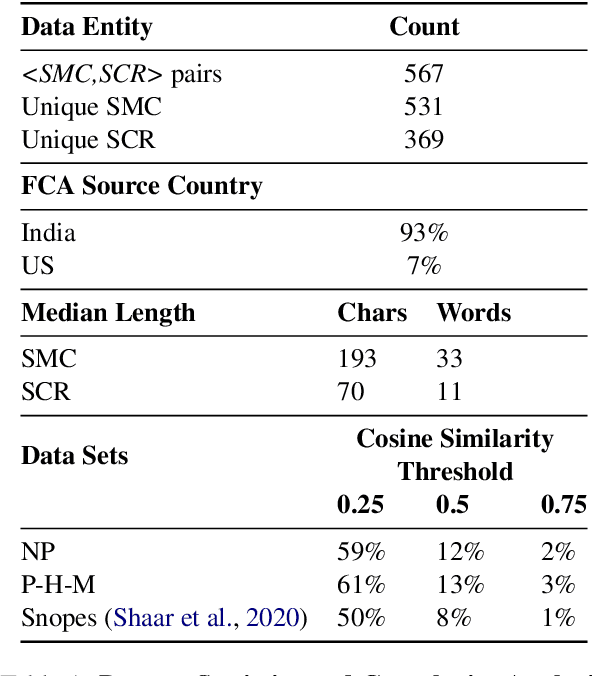

Harnessing Abstractive Summarization for Fact-Checked Claim Detection

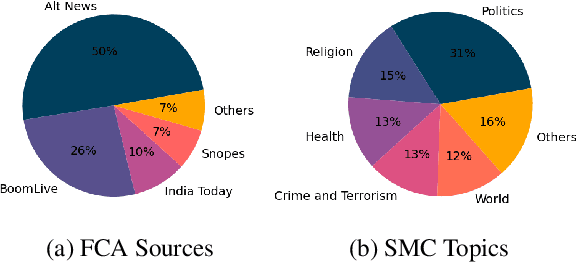

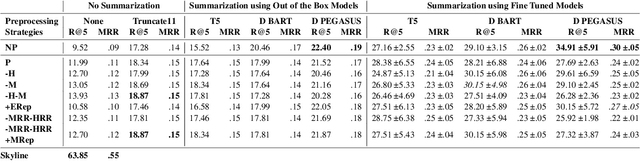

Sep 14, 2022

Social media platforms have become new battlegrounds for anti-social elements, with misinformation as the weapon of choice. Fact-checking organizations try to debunk as many claims as possible while staying true to their journalistic processes, but cannot keep pace with the rapid dissemination of misinformation. We believe that the solution lies in partially automating the fact-checking life cycle, saving human time for tasks that require high cognition. We propose a new workflow for efficiently detecting previously fact-checked claims that uses abstractive summarization to generate crisp queries. These queries can then be executed on a general-purpose retrieval system associated with a collection of previously fact-checked claims. We curate an abstractive text summarization dataset comprising noisy claims from Twitter and their gold summaries. Compared with verbatim querying, retrieval performance improves 2x with popular out-of-the-box summarization models and 3x when these models are fine-tuned on the accompanying dataset. Our approach achieves a Recall@5 of 35% and an MRR of 0.3, compared with baseline values of 10% and 0.1, respectively. Our dataset, code, and models are publicly available: https://github.com/varadhbhatnagar/FC-Claim-Det/
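Recall@5 and MRR, the two metrics reported above, can be computed as follows, assuming each query has exactly one relevant previously fact-checked claim and that a query whose gold claim is not retrieved contributes a reciprocal rank of 0. Some evaluations compute MRR only within a top-k cutoff, which would change the second number; the claim IDs in any example are made up.

```python
def recall_at_k(ranked_lists, gold, k=5):
    """Fraction of queries whose gold claim appears among the top-k results."""
    hits = sum(1 for ranked, g in zip(ranked_lists, gold) if g in ranked[:k])
    return hits / len(gold)


def mean_reciprocal_rank(ranked_lists, gold):
    """Mean of 1/rank of the gold claim; 0 when it is not retrieved at all."""
    total = 0.0
    for ranked, g in zip(ranked_lists, gold):
        if g in ranked:
            total += 1.0 / (ranked.index(g) + 1)  # ranks are 1-based
    return total / len(gold)
```

For instance, a gold claim at rank 2 contributes 0.5 to the MRR sum and counts as a hit for Recall@5, while one at rank 6 contributes 1/6 but is not a hit.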

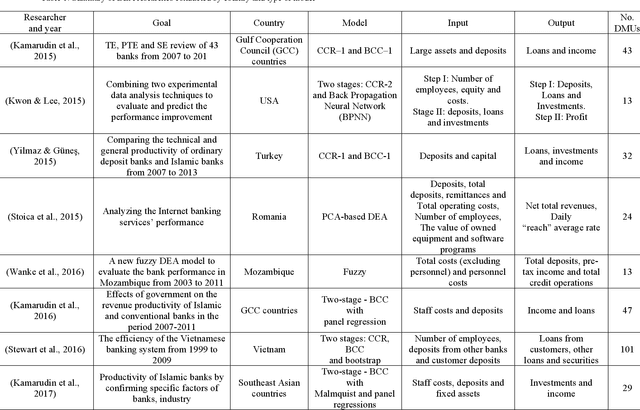

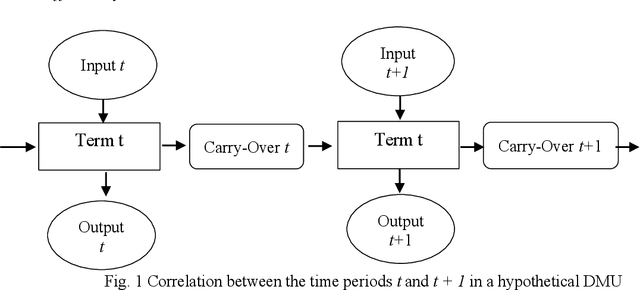

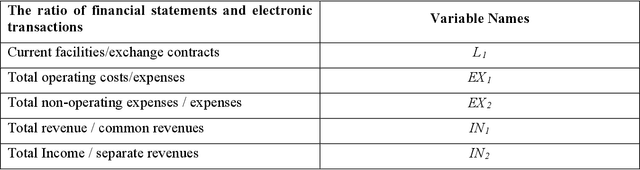

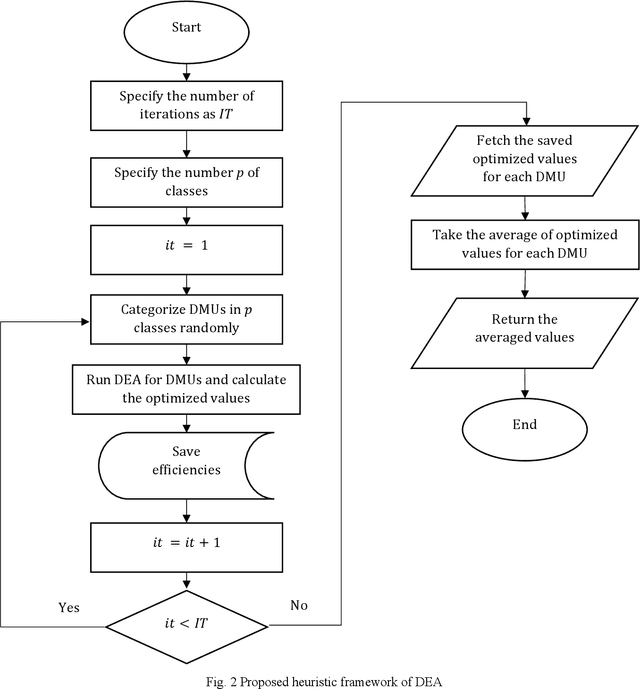

Efficiency Evaluation of Banks with Many Branches using a Heuristic Framework and Dynamic Data Envelopment Optimization Approach: A Real Case Study

Sep 11, 2022

Evaluating the efficiency of organizations, and of branches within an organization, is a challenging issue for managers. Evaluation criteria allow organizations to rank their internal units, identify their position relative to their competitors, and implement strategies for improvement and development. Among the methods applied to evaluating bank branches, non-parametric methods have attracted researchers' attention in recent years. One of the most widely used non-parametric methods is data envelopment analysis (DEA), which has produced promising results. However, static DEA approaches do not take time into account. Therefore, this paper uses a dynamic DEA (DDEA) method to evaluate the branches of a private Iranian bank over three years (2017-2019). The results are then compared with those of static DEA. After ranking, the branches are clustered using the K-means method. Finally, a comprehensive sensitivity analysis approach is introduced to help managers decide which variables to change in order to shift a branch from one cluster to a more efficient one.
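The dynamic DEA model itself requires solving linear programs with carry-over variables that link periods, which is beyond a short self-contained sketch. Two simpler pieces can be illustrated. With a single input and a single output, the constant-returns-to-scale (CCR) DEA efficiency of a branch reduces to its output/input ratio divided by the best ratio among all branches, and the resulting scores can then be grouped with K-means. The branch data, k = 2, and the initial centers are hypothetical; this is a static, single-ratio simplification, not the paper's DDEA model.

```python
def ccr_efficiency(inputs, outputs):
    """Single-input, single-output CCR efficiency: each branch's ratio over the best ratio."""
    ratios = [y / x for x, y in zip(inputs, outputs)]
    best = max(ratios)
    return [r / best for r in ratios]


def kmeans_1d(values, centers, iterations=100):
    """Plain Lloyd's K-means on scalar scores, starting from the given centers."""
    centers = list(centers)

    def nearest(v):
        return min(range(len(centers)), key=lambda c: abs(v - centers[c]))

    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for v in values:
            clusters[nearest(v)].append(v)
        # Keep an empty cluster's center unchanged.
        new_centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
        if new_centers == centers:
            break
        centers = new_centers
    return centers, [nearest(v) for v in values]
```

For example, five hypothetical branches with inputs `[10, 20, 15, 25, 12]` and outputs `[50, 60, 75, 50, 30]` get efficiencies `[1.0, 0.6, 1.0, 0.4, 0.5]`, and starting from centers `[0.4, 1.0]` the two fully efficient branches form their own cluster.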

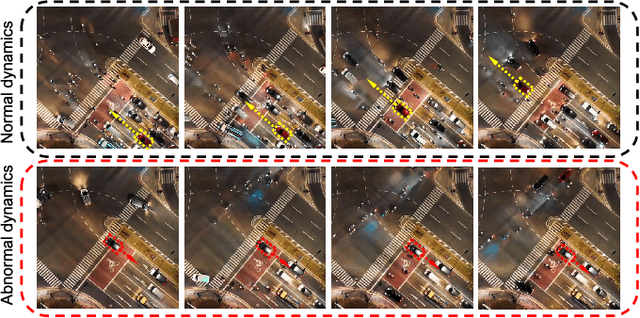

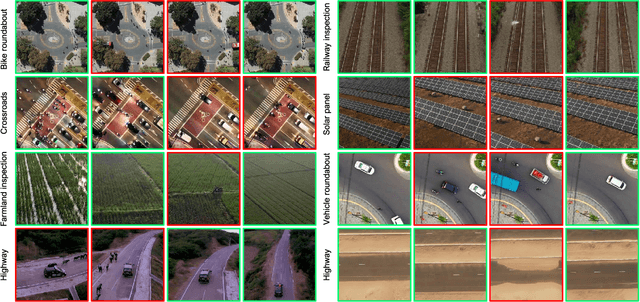

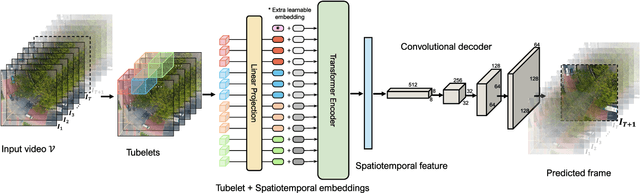

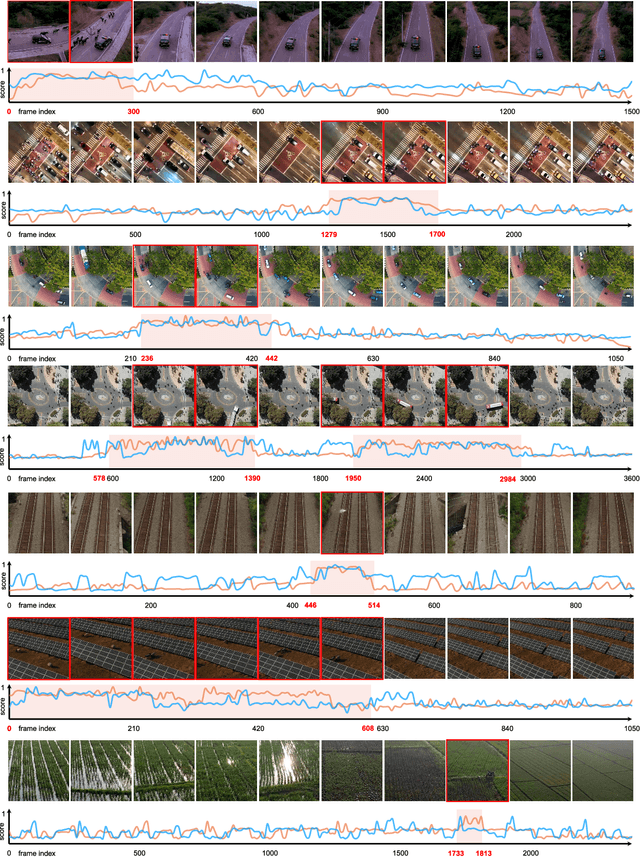

Anomaly Detection in Aerial Videos with Transformers

Sep 25, 2022

Unmanned aerial vehicles (UAVs) are widely applied in inspection, search, and rescue operations by virtue of their low-cost, large-coverage, real-time, and high-resolution data acquisition capabilities. These operations produce massive volumes of aerial video in which normal events account for an overwhelming proportion, so manually localizing and extracting the abnormal events that may contain valuable information from long video streams is extremely difficult. We therefore develop anomaly detection methods to address this issue. In this paper, we create a new dataset, named Drone-Anomaly, for anomaly detection in aerial videos. This dataset provides 37 training video sequences and 22 testing video sequences from 7 different realistic scenes with various anomalous events. It contains 87,488 color video frames (51,635 for training and 35,853 for testing) of size $640 \times 640$ at 30 frames per second. Based on this dataset, we evaluate existing methods and offer a benchmark for this task. Furthermore, we present a new baseline model, ANomaly Detection with Transformers (ANDT), which treats consecutive video frames as a sequence of tubelets, uses a Transformer encoder to learn feature representations from the sequence, and leverages a decoder to predict the next frame. Our network models normality during training and, at test time, identifies an event with unpredictable temporal dynamics as an anomaly. Moreover, to comprehensively evaluate the performance of the proposed method, we use not only our Drone-Anomaly dataset but also another dataset. We will make our dataset and code publicly available. A demo video is available at https://youtu.be/ancczYryOBY.
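ANDT flags events whose temporal dynamics the next-frame predictor cannot anticipate. A common way to turn prediction errors into frame-level anomaly scores in future-frame-prediction methods is to compute the PSNR between predicted and observed frames and min-max normalize it within each video; whether ANDT uses exactly this rule is an assumption here. Frames are flattened lists of intensities in [0, 1], the function names are hypothetical, and the tubelet Transformer itself is not shown.

```python
import math


def psnr(predicted, actual, max_value=1.0):
    """Peak signal-to-noise ratio between two flattened frames."""
    mse = sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual)
    mse = max(mse, 1e-10)  # avoid infinite PSNR for a perfect prediction
    return 10.0 * math.log10(max_value ** 2 / mse)


def anomaly_scores(predicted_frames, actual_frames):
    """Min-max normalize per-frame PSNR within one video; a poorly predicted
    frame (low PSNR) gets a score near 1."""
    values = [psnr(p, a) for p, a in zip(predicted_frames, actual_frames)]
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0] * len(values)
    return [(hi - v) / (hi - lo) for v in values]
```

Normalizing per video makes scores comparable across scenes with different baseline prediction quality, at the cost of always assigning some frame a score of 1 even in a fully normal video.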

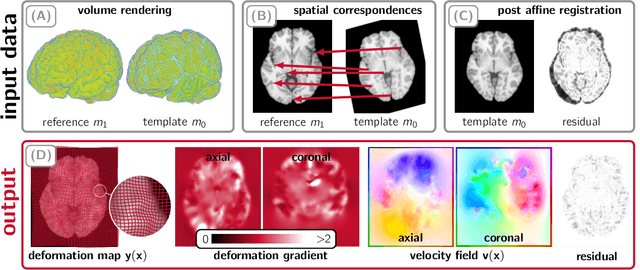

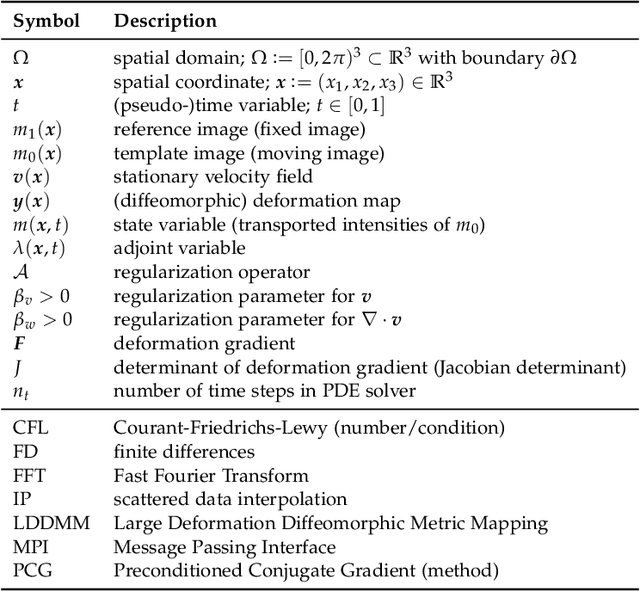

CLAIRE -- Parallelized Diffeomorphic Image Registration for Large-Scale Biomedical Imaging Applications

Sep 16, 2022

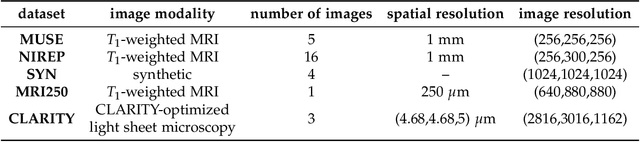

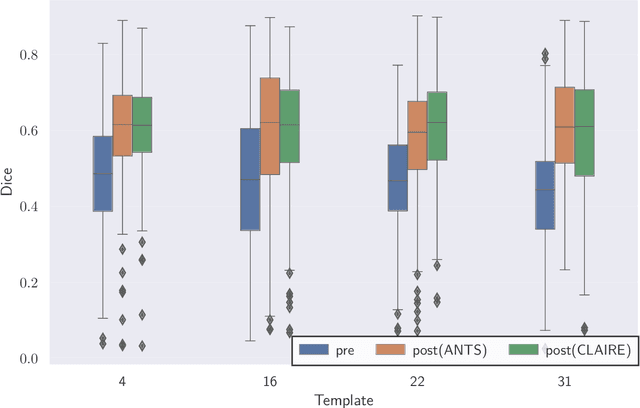

We study the performance of CLAIRE -- a diffeomorphic, multi-node, multi-GPU image registration algorithm and software -- in large-scale biomedical imaging applications with billions of voxels. At such resolutions, most existing software packages for diffeomorphic image registration are prohibitively expensive. As a result, practitioners first significantly downsample the original images and then register them using existing tools. Our main contribution is an extensive analysis of the impact of downsampling on registration performance. We study this impact by comparing full-resolution registrations obtained with CLAIRE to lower-resolution registrations for synthetic and real-world imaging datasets. Our results suggest that registration at full resolution can yield superior registration quality, but not always. For example, downsampling a synthetic image from $1024^3$ to $256^3$ decreases the Dice coefficient from 92% to 79%. However, the differences are less pronounced for noisy or low-contrast high-resolution images. CLAIRE allows us not only to register images of clinically relevant size in a few seconds but also to register images at unprecedented resolution in reasonable time. The highest resolution considered is that of CLARITY images of size $2816\times3016\times1162$. To the best of our knowledge, this is the first study of image registration quality at such resolutions.
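The Dice coefficient quoted above measures the overlap between two segmentations, $2|A \cap B| / (|A| + |B|)$, so a drop from 92% to 79% is a substantial loss of overlap. Going from $1024^3$ to $256^3$ also reduces each dimension by a factor of 4 and the voxel count by $4^3 = 64$. The sketch treats masks as flat 0/1 sequences and, by a convention assumed here, returns 1.0 when both masks are empty.

```python
def dice(mask_a, mask_b):
    """Dice coefficient 2|A ∩ B| / (|A| + |B|) for binary masks given as flat 0/1 sequences."""
    a = [bool(v) for v in mask_a]
    b = [bool(v) for v in mask_b]
    intersection = sum(1 for x, y in zip(a, b) if x and y)
    total = sum(a) + sum(b)
    # Two empty masks are treated as a perfect match (assumed convention).
    return 1.0 if total == 0 else 2.0 * intersection / total
```

In a registration study this would typically be evaluated between the warped moving-image labels and the fixed-image labels, once per anatomical label.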

Private and polynomial time algorithms for learning Gaussians and beyond

Nov 22, 2021

We present a fairly general framework for reducing $(\varepsilon, \delta)$ differentially private (DP) statistical estimation to its non-private counterpart. As the main application of this framework, we give a polynomial time and $(\varepsilon,\delta)$-DP algorithm for learning (unrestricted) Gaussian distributions in $\mathbb{R}^d$. The sample complexity of our approach for learning the Gaussian up to total variation distance $\alpha$ is $\widetilde{O}\left(\frac{d^2}{\alpha^2}+\frac{d^2 \sqrt{\ln{1/\delta}}}{\alpha\varepsilon} \right)$, matching (up to logarithmic factors) the best known information-theoretic (non-efficient) sample complexity upper bound of Aden-Ali, Ashtiani, Kamath (ALT'21). In an independent work, Kamath, Mouzakis, Singhal, Steinke, and Ullman (arXiv:2111.04609) proved a similar result using a different approach and with $O(d^{5/2})$ sample complexity dependence on $d$. As another application of our framework, we provide the first polynomial time $(\varepsilon, \delta)$-DP algorithm for robust learning of (unrestricted) Gaussians.
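To get a feel for the bound, one can evaluate its two terms for concrete parameters. The sketch drops the constants and logarithmic factors hidden by $\widetilde{O}$, so only relative comparisons are meaningful: both terms scale as $d^2$, and the privacy term dominates once $\varepsilon$ is small enough. The function name and parameter values are illustrative.

```python
import math


def dominant_terms(d, alpha, epsilon, delta):
    """The two terms inside the O-tilde sample complexity bound,
    with constants and logarithmic factors dropped."""
    statistical = d ** 2 / alpha ** 2
    privacy = d ** 2 * math.sqrt(math.log(1 / delta)) / (alpha * epsilon)
    return statistical, privacy
```

For $d = 10$, $\alpha = 0.1$, $\delta = 10^{-6}$, the statistical term is about 10,000 and the privacy term about 3,700 at $\varepsilon = 1$, but about 37,000 at $\varepsilon = 0.1$. For comparison, a $d^{5/2}$ dependence grows by an extra factor of $\sqrt{d}$, which is 10 at $d = 100$.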
