Gabriel Fischer Abati

BinWalker: Development and Field Evaluation of a Quadruped Manipulator Platform for Sustainable Litter Collection

Mar 11, 2026

Abstract:Litter pollution represents a growing environmental problem affecting natural and urban ecosystems worldwide. Waste discarded in public spaces often accumulates in areas that are difficult to access, such as uneven terrains, coastal environments, parks, and roadside vegetation. Over time, these materials degrade and release harmful substances, including toxic chemicals and microplastics, which can contaminate soil and water and pose serious threats to wildlife and human health. Despite increasing awareness of the problem, litter collection is still largely performed manually by human operators, making large-scale cleanup operations labor-intensive, time-consuming, and costly. Robotic solutions have the potential to support and partially automate environmental cleanup tasks. In this work, we present a quadruped robotic system designed for autonomous litter collection in challenging outdoor scenarios. The robot combines the mobility advantages of legged locomotion with a manipulation system consisting of a robotic arm and an onboard litter container. This configuration enables the robot to detect, grasp, and store litter items while navigating through uneven terrains. The proposed system aims to demonstrate the feasibility of integrating perception, locomotion, and manipulation on a legged robotic platform for environmental cleanup tasks. Experimental evaluations conducted in outdoor scenarios highlight the effectiveness of the approach and its potential for assisting large-scale litter removal operations in environments that are difficult to reach with traditional robotic platforms. The code associated with this work can be found at: https://github.com/iit-DLSLab/trash-collection-isaaclab.

Panoptic-SLAM: Visual SLAM in Dynamic Environments using Panoptic Segmentation

May 03, 2024

Abstract:The majority of visual SLAM systems are not robust in dynamic scenarios. The ones that deal with dynamic objects in the scenes usually rely on deep-learning-based methods to detect and filter these objects. However, these methods cannot deal with unknown moving objects. This work presents Panoptic-SLAM, an open-source visual SLAM system robust to dynamic environments, even in the presence of unknown objects. It uses panoptic segmentation to filter dynamic objects from the scene during the state estimation process. Panoptic-SLAM is based on ORB-SLAM3, a state-of-the-art SLAM system for static environments. The implementation was tested using real-world datasets and compared with several state-of-the-art systems from the literature, including DynaSLAM, DS-SLAM, SaD-SLAM, PVO and FusingPanoptic. For example, Panoptic-SLAM is on average four times more accurate than PVO, the most recent panoptic-based approach for visual SLAM. Also, experiments were performed using a quadruped robot with an RGB-D camera to test the applicability of our method in real-world scenarios. The tests were validated by a ground-truth created with a motion capture system.
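The filtering idea described in this abstract can be illustrated with a minimal sketch. It is not the Panoptic-SLAM implementation: the function name `filter_static_keypoints`, the plain nested-list label map, and the set of dynamic labels are all assumptions made for illustration, and the abstract does not describe how unknown moving objects are handled. The sketch only shows the basic step of discarding keypoints that fall on pixels a segmentation stage has labeled as dynamic.

```python
from typing import List, Sequence, Set, Tuple

Keypoint = Tuple[int, int]  # (x, y) pixel coordinates


def filter_static_keypoints(
    keypoints: Sequence[Keypoint],
    label_map: Sequence[Sequence[int]],
    dynamic_labels: Set[int],
) -> List[Keypoint]:
    """Keep only keypoints whose pixel label is not marked as dynamic.

    label_map[y][x] is a hypothetical per-pixel segment label produced by
    some segmentation stage; dynamic_labels lists the labels to reject.
    """
    height = len(label_map)
    width = len(label_map[0]) if height else 0
    kept: List[Keypoint] = []
    for x, y in keypoints:
        # Drop keypoints that fall outside the label map.
        if not (0 <= x < width and 0 <= y < height):
            continue
        # Keep the keypoint only if its pixel is not on a dynamic segment.
        if label_map[y][x] not in dynamic_labels:
            kept.append((x, y))
    return kept
```

In a feature-based pipeline, a step like this would typically run after feature extraction and before pose estimation, so that only keypoints on presumably static structure contribute to the state estimate.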

Visual Localization and Mapping in Dynamic and Changing Environments

Sep 21, 2022

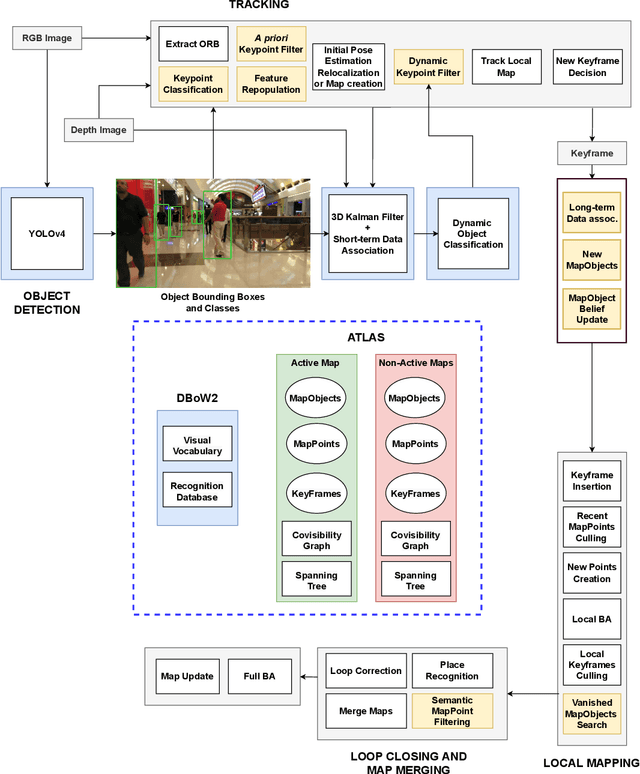

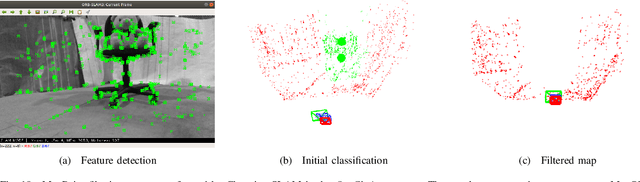

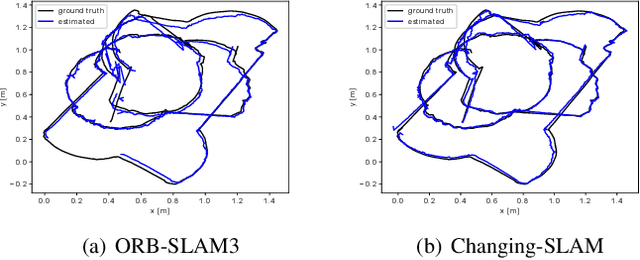

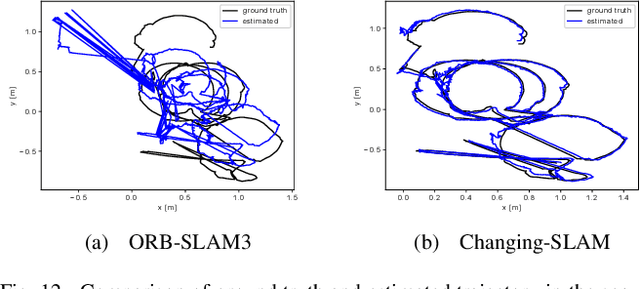

Abstract:The real-world deployment of fully autonomous mobile robots depends on a robust SLAM (Simultaneous Localization and Mapping) system, capable of handling dynamic environments, where objects are moving in front of the robot, and changing environments, where objects are moved or replaced after the robot has already mapped the scene. This paper presents Changing-SLAM, a method for robust Visual SLAM in both dynamic and changing environments. This is achieved by using a Bayesian filter combined with a long-term data association algorithm. Also, it employs an efficient algorithm for dynamic keypoint filtering based on object detection that correctly identifies features inside the bounding box that are not dynamic, preventing a depletion of features that could cause lost tracks. Furthermore, a new dataset was developed with RGB-D data especially designed for the evaluation of changing environments on an object level, called PUC-USP dataset. Six sequences were created using a mobile robot, an RGB-D camera and a motion capture system. The sequences were designed to capture different scenarios that could lead to a tracking failure or a map corruption. To the best of our knowledge, Changing-SLAM is the first Visual SLAM system that is robust to both dynamic and changing environments, not assuming a given camera pose or a known map, being also able to operate in real time. The proposed method was evaluated using benchmark datasets and compared with other state-of-the-art methods, proving to be highly accurate.
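The abstract mentions a Bayesian filter but does not state what quantity it estimates or which observation model it uses. As a hedged illustration only, the sketch below shows a generic binary Bayes update for the probability that a tracked feature is dynamic. The function name `bayes_update` and the likelihood values `p_hit` and `p_false` are assumptions, not parameters taken from Changing-SLAM.

```python
def bayes_update(
    prior: float,
    observed_dynamic: bool,
    p_hit: float = 0.8,    # assumed P(observe "dynamic" | feature is dynamic)
    p_false: float = 0.2,  # assumed P(observe "dynamic" | feature is static)
) -> float:
    """Return the posterior probability that a feature is dynamic."""
    if observed_dynamic:
        like_dynamic, like_static = p_hit, p_false
    else:
        like_dynamic, like_static = 1.0 - p_hit, 1.0 - p_false
    # Bayes' rule with a two-hypothesis normalizer.
    numerator = like_dynamic * prior
    return numerator / (numerator + like_static * (1.0 - prior))


def run_filter(prior: float, observations: list) -> float:
    """Apply the update once per observation, carrying the belief forward."""
    belief = prior
    for obs in observations:
        belief = bayes_update(belief, obs)
    return belief
```

With these assumed likelihoods, repeated consistent observations push the belief toward 0 or 1, while a single contradictory observation only partially reverses it. This is the usual motivation for filtering detections over time instead of trusting each frame in isolation.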
