"Information": models, code, and papers

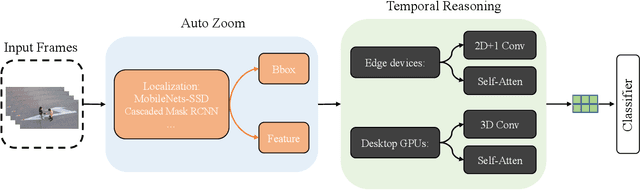

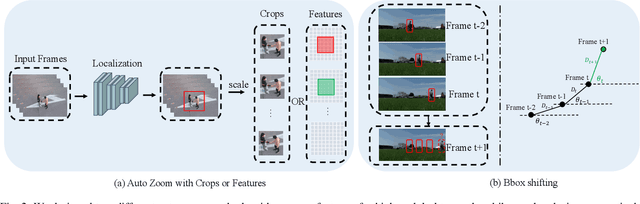

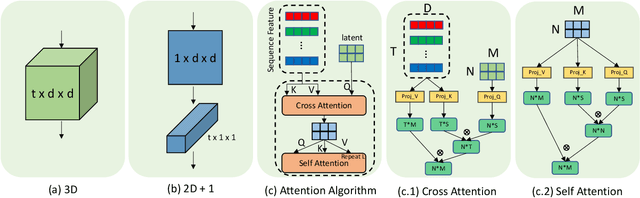

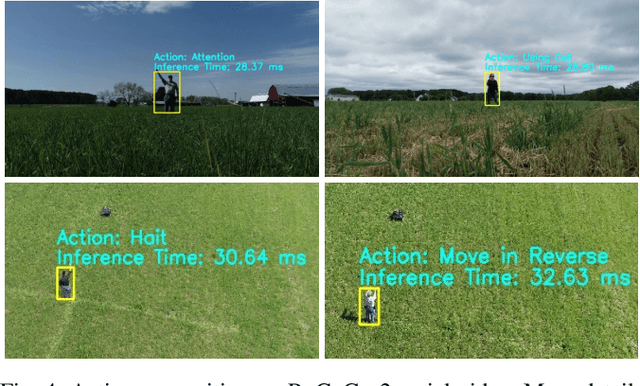

AZTR: Aerial Video Action Recognition with Auto Zoom and Temporal Reasoning

Mar 02, 2023

We propose a novel approach for aerial video action recognition. Our method is designed for videos captured using UAVs and can run on edge or mobile devices. We present a learning-based approach that uses customized auto zoom to automatically identify the human target and scale it appropriately. This makes it easier to extract the key features and reduces the computational overhead. We also present an efficient temporal reasoning algorithm to capture the action information along the spatial and temporal domains within a controllable computational cost. Our approach has been implemented and evaluated both on the desktop with high-end GPUs and on the low power Robotics RB5 Platform for robots and drones. In practice, we achieve 6.1-7.4% improvement over SOTA in Top-1 accuracy on the RoCoG-v2 dataset, 8.3-10.4% improvement on the UAV-Human dataset and 3.2% improvement on the Drone Action dataset.

Contrastive Hierarchical Clustering

Mar 03, 2023

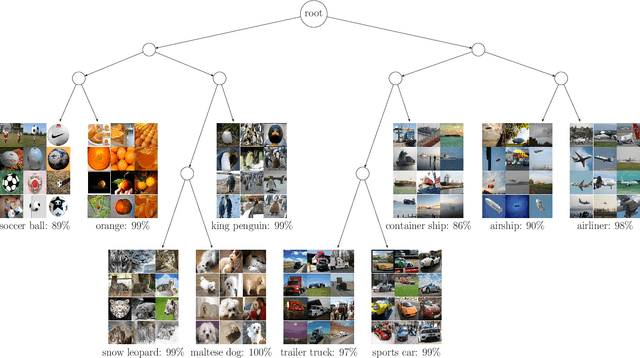

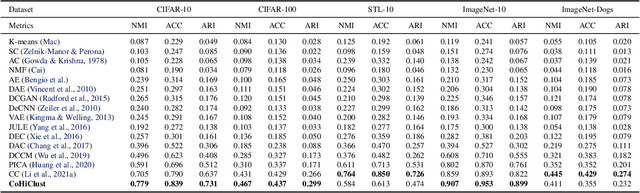

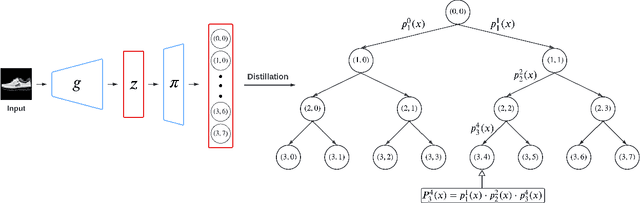

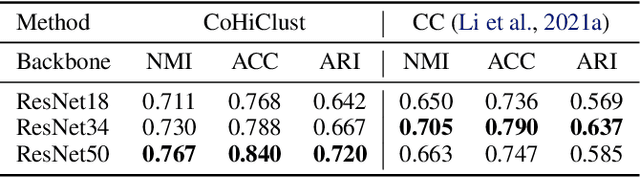

Deep clustering has been dominated by flat models, which split a dataset into a predefined number of groups. Although recent methods achieve an extremely high similarity with the ground truth on popular benchmarks, the information contained in the flat partition is limited. In this paper, we introduce CoHiClust, a Contrastive Hierarchical Clustering model based on deep neural networks, which can be applied to typical image data. By employing a self-supervised learning approach, CoHiClust distills the base network into a binary tree without access to any labeled data. The hierarchical clustering structure can be used to analyze the relationship between clusters, as well as to measure the similarity between data points. Experiments demonstrate that CoHiClust generates a reasonable structure of clusters, which is consistent with our intuition and image semantics. Moreover, it obtains superior clustering accuracy on most of the image datasets compared to the state-of-the-art flat clustering models.

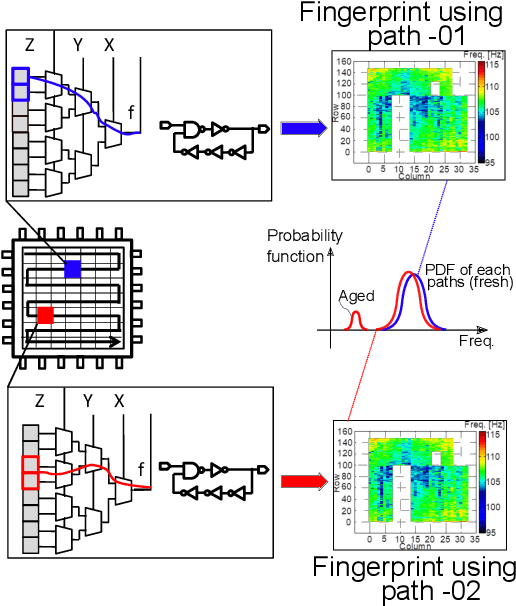

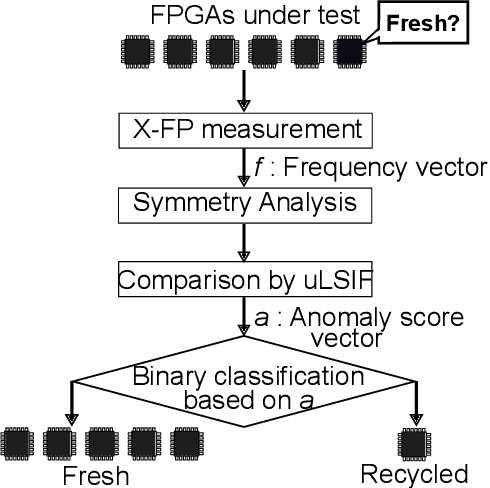

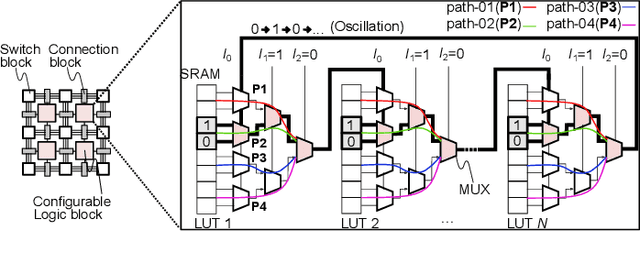

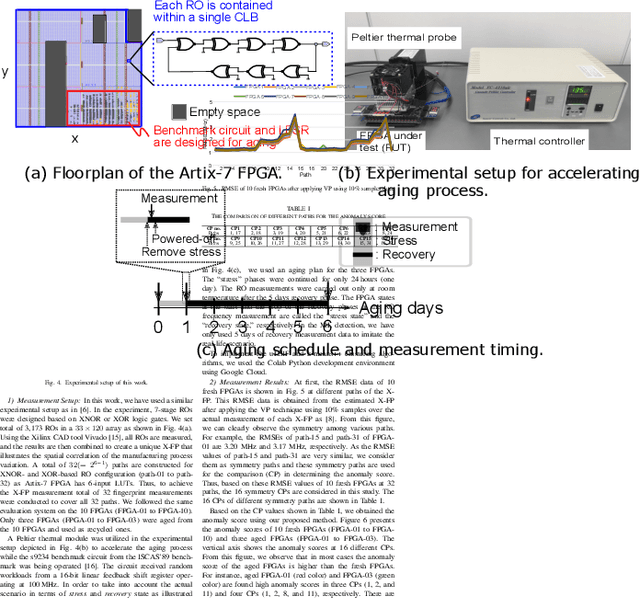

Unsupervised Recycled FPGA Detection Using Symmetry Analysis

Mar 03, 2023

Recycled field-programmable gate arrays (FPGAs) have recently become a significant hardware security problem due to the proliferation of the semiconductor supply chain. Ring oscillator (RO) based frequency analysis is one of the popular detection techniques, but most studies rely on known fresh FPGAs (KFFs) for machine learning-based detection, which is not a realistic approach. In this paper, we present a novel recycled FPGA detection method that examines the symmetry information of the RO frequencies using an unsupervised anomaly detection method. Due to the symmetrical array structure of the FPGA, some adjacent logic blocks on an FPGA have comparable RO frequencies; hence, our method simply analyzes the RO frequencies of those blocks to determine how similar they are. The proposed approach efficiently categorizes recycled FPGAs by utilizing direct density ratio estimation through outlier detection. Experiments using Xilinx Artix-7 FPGAs demonstrate that the proposed method accurately classifies recycled FPGAs from 10 fresh FPGAs by x fewer computations compared with the conventional method.

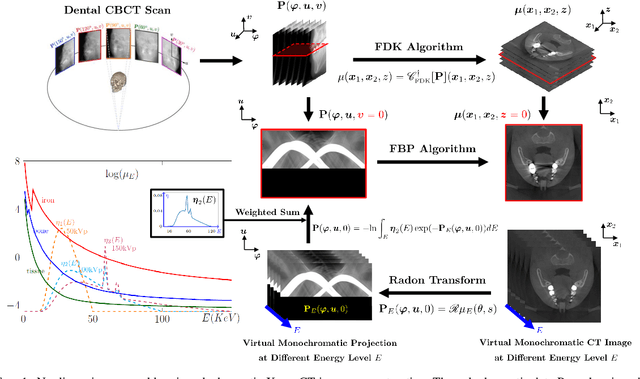

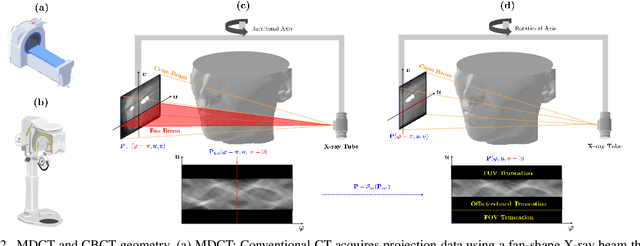

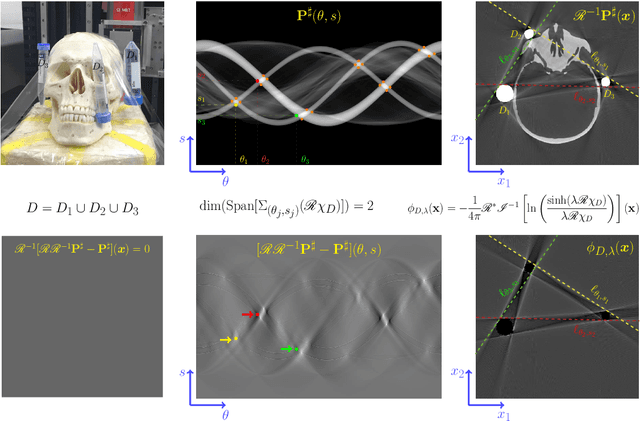

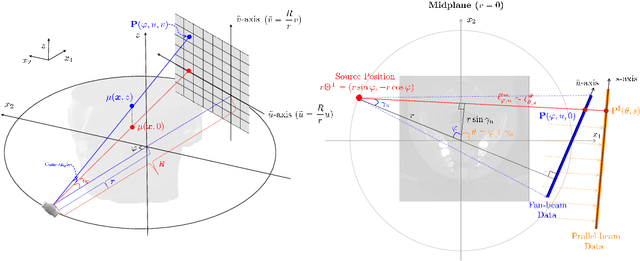

Nonlinear ill-posed problem in low-dose dental cone-beam computed tomography

Mar 03, 2023

This paper describes the mathematical structure of the ill-posed nonlinear inverse problem of low-dose dental cone-beam computed tomography (CBCT) and explains the advantages of a deep learning-based approach to the reconstruction of computed tomography images over conventional regularization methods. This paper explains the underlying reasons why dental CBCT is more ill-posed than standard computed tomography. Despite this severe ill-posedness, the demand for dental CBCT systems is rapidly growing because of their cost competitiveness and low radiation dose. We then describe the limitations of existing methods in the accurate restoration of the morphological structures of teeth using dental CBCT data severely damaged by metal implants. We further discuss the usefulness of panoramic images generated from CBCT data for accurate tooth segmentation. We also discuss the possibility of utilizing radiation-free intra-oral scan data as prior information in CBCT image reconstruction to compensate for the damage to data caused by metal implants.

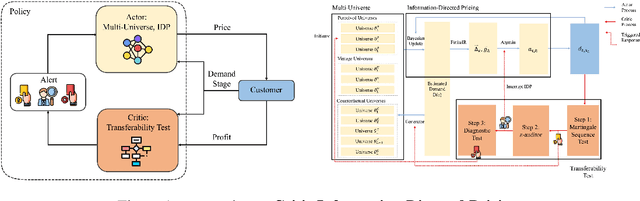

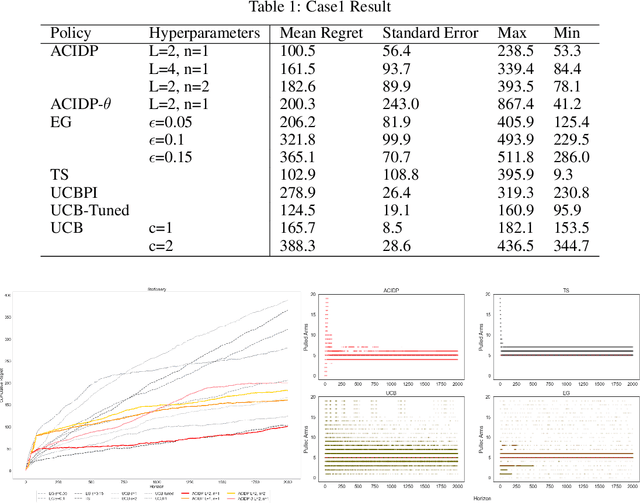

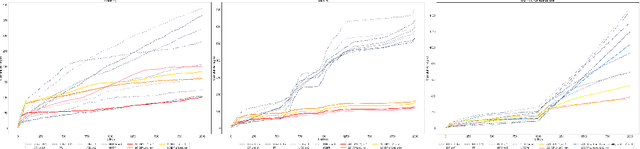

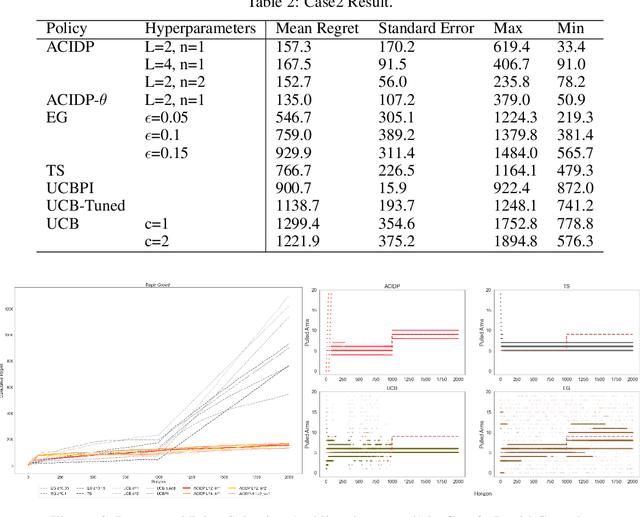

Non-Stationary Dynamic Pricing Via Actor-Critic Information-Directed Pricing

Aug 19, 2022

This paper presents a novel non-stationary dynamic pricing algorithm design, where pricing agents face incomplete demand information and market environment shifts. The agents run price experiments to learn about each product's demand curve and the profit-maximizing price, while remaining aware of market environment shifts to avoid high opportunity costs from offering sub-optimal prices. The proposed ACIDP extends information-directed sampling (IDS) algorithms from statistical machine learning to include microeconomic choice theory, with a novel pricing strategy auditing procedure to escape sub-optimal pricing after a market environment shift. The proposed ACIDP outperforms competing bandit algorithms, including Upper Confidence Bound (UCB) and Thompson sampling (TS), in a series of market environment shifts.
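For context on the bandit baselines named in the abstract above, the following is a minimal sketch of the standard UCB1 rule applied to a small price grid. It is not ACIDP and is not taken from the paper: the function `ucb1_pricing`, the price grid, the purchase probabilities, and the deterministic revenue function are all hypothetical, and the environment is stationary, so it does not model market shifts.

```python
import math

def ucb1_pricing(prices, revenue_fn, rounds):
    """Run standard UCB1 over a fixed price grid; return pull counts per price.

    revenue_fn(price) must return a reward scaled to [0, 1].
    """
    counts = [0] * len(prices)
    totals = [0.0] * len(prices)
    for t in range(1, rounds + 1):
        if t <= len(prices):
            # Initialization: offer every price once.
            arm = t - 1
        else:
            # Pick the price with the highest mean reward plus exploration bonus.
            arm = max(
                range(len(prices)),
                key=lambda i: totals[i] / counts[i]
                + math.sqrt(2 * math.log(t) / counts[i]),
            )
        totals[arm] += revenue_fn(prices[arm])
        counts[arm] += 1
    return counts

# Hypothetical demand model: purchase probability falls as the price rises.
prices = [1.0, 2.0, 3.0]
purchase_prob = {1.0: 0.9, 2.0: 0.6, 3.0: 0.2}

def expected_revenue(price):
    # Expected revenue, normalized by the largest price so it lies in [0, 1].
    return price * purchase_prob[price] / max(prices)

counts = ucb1_pricing(prices, expected_revenue, rounds=200)
```

In this toy setting the middle price has the highest expected revenue, so UCB1 never offers it less often than either alternative. A stationary rule like this has no mechanism for reacting to a demand shift, which is the gap the abstract says ACIDP targets.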

M-SENSE: Modeling Narrative Structure in Short Personal Narratives Using Protagonist's Mental Representations

Feb 18, 2023

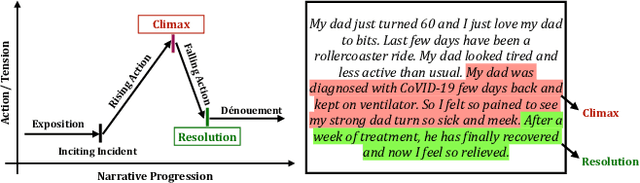

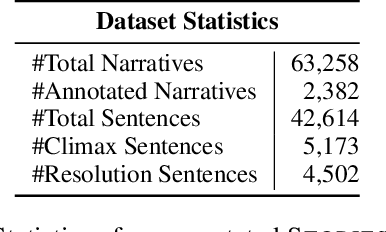

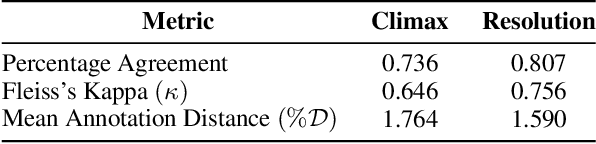

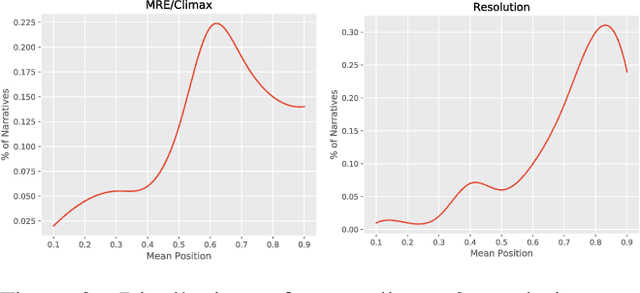

Narrative is a ubiquitous component of human communication. Understanding its structure plays a critical role in a wide variety of applications, ranging from simple comparative analyses to enhanced narrative retrieval, comprehension, or reasoning capabilities. Prior research in narratology has highlighted the importance of studying the links between cognitive and linguistic aspects of narratives for effective comprehension. This interdependence is related to the textual semantics and mental language in narratives, referring to characters' motivations, feelings or emotions, and beliefs. However, this interdependence is hardly explored for modeling narratives. In this work, we propose the task of automatically detecting prominent elements of the narrative structure by analyzing the role of characters' inferred mental state along with linguistic information at the syntactic and semantic levels. We introduce a STORIES dataset of short personal narratives containing manual annotations of key elements of narrative structure, specifically climax and resolution. To this end, we implement a computational model that leverages the protagonist's mental state information obtained from a pre-trained model trained on social commonsense knowledge and integrates their representations with contextual semantic embeddings using a multi-feature fusion approach. Evaluating against prior zero-shot and supervised baselines, we find that our model is able to achieve significant improvements in the task of identifying climax and resolution.

Time Series Forecasting via Semi-Asymmetric Convolutional Architecture with Global Atrous Sliding Window

Jan 31, 2023

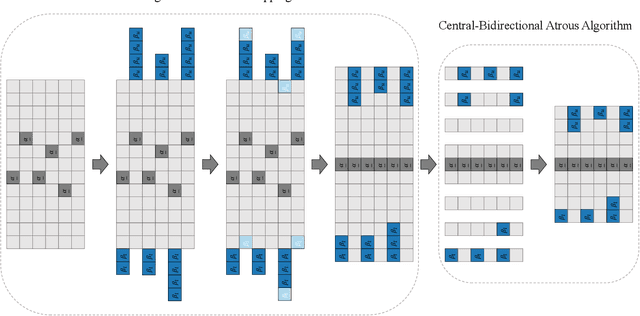

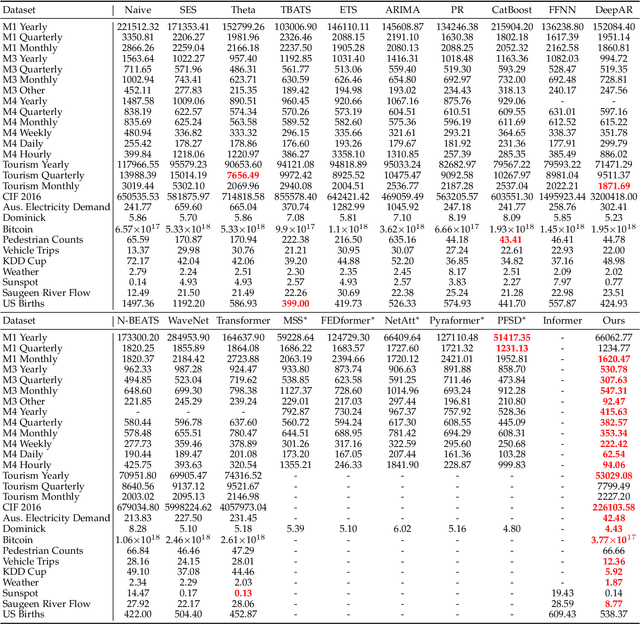

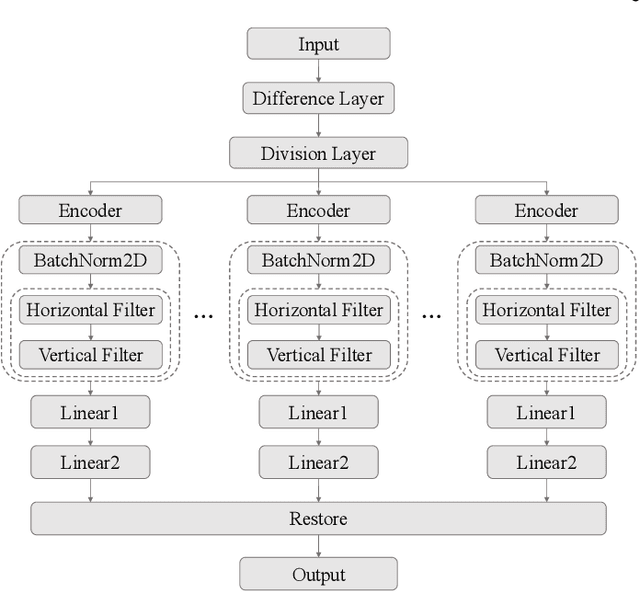

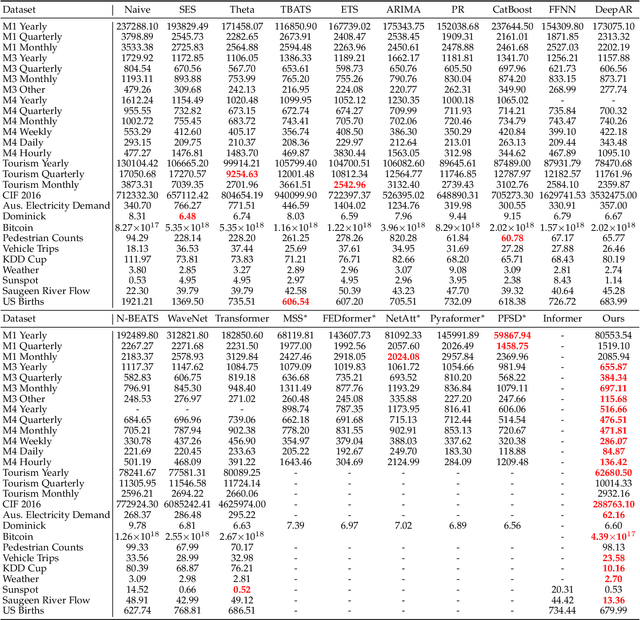

The method proposed in this paper addresses the problem of time series forecasting. Although some carefully designed models achieve excellent prediction performance, how to extract more useful information and make accurate predictions is still an open issue. Most modern models focus only on a short range of information, which is a serious weakness for problems such as time series forecasting that need to capture long-term characteristics. The main concern of this work is therefore to further mine the relationship between the local and global information contained in time series in order to produce more precise predictions. To this end, we make three main contributions that are experimentally verified to have performance advantages. First, the original time series is transformed into a difference sequence, which serves as input to the proposed model. Second, we introduce the global atrous sliding window into the forecasting model; it draws on the concept of fuzzy time series to associate relevant global information with temporal data within a time period, and it uses a central-bidirectional atrous algorithm to capture underlying related features, ensuring the validity and consistency of the captured data. Third, we devise semi-asymmetric convolution, a variation of the widely used asymmetric convolution, to extract relationships among adjacent elements and their associated global features more flexibly, with adjustable convolution ranges in the vertical and horizontal directions. The proposed model achieves state-of-the-art results on most of the time series datasets considered, compared with competitive modern models.
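The first contribution above, feeding a difference sequence to the model instead of the raw series, can be illustrated with a short sketch. The abstract does not specify the order of differencing or how forecasts are mapped back, so first-order differencing and the helper names `difference` and `undifference` are assumptions made here for illustration.

```python
from itertools import accumulate

def difference(series):
    """Return first-order differences: d[t] = x[t + 1] - x[t]."""
    return [b - a for a, b in zip(series, series[1:])]

def undifference(first_value, diffs):
    """Invert difference(): rebuild the series from its first value."""
    return list(accumulate(diffs, initial=first_value))

series = [10, 12, 15, 14, 18]
diffs = difference(series)                 # one element shorter than series
restored = undifference(series[0], diffs)  # recovers the original values
```

A model trained on `diffs` predicts changes, not levels, so a forecast is turned back into a level by adding the predicted change to the last observed value.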

DECOMPL: Decompositional Learning with Attention Pooling for Group Activity Recognition from a Single Volleyball Image

Mar 11, 2023

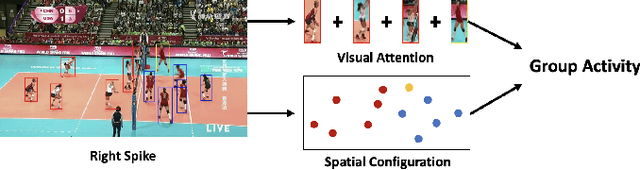

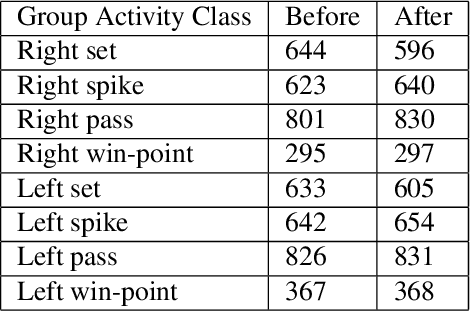

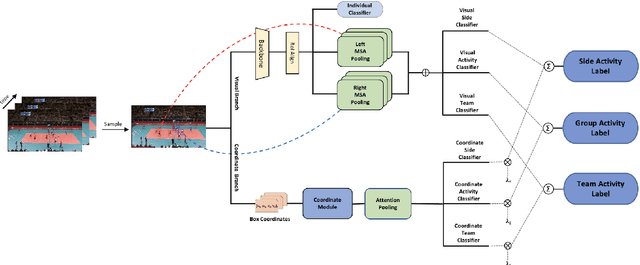

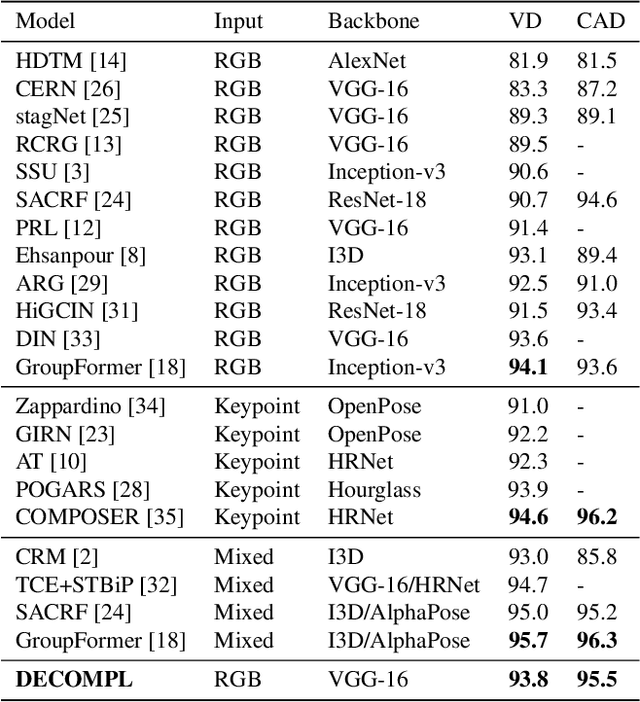

Group Activity Recognition (GAR) aims to detect the activity performed by multiple actors in a scene. Prior works model the spatio-temporal features based on the RGB, optical flow or keypoint data types. However, using temporality together with these data types increases the computational complexity significantly. Our hypothesis is that by using only the RGB data without temporality, the performance can be maintained with a negligible loss in accuracy. To that end, we propose a novel GAR technique for volleyball videos, DECOMPL, which consists of two complementary branches. In the visual branch, it extracts the features selectively using attention pooling. In the coordinate branch, it considers the current configuration of the actors and extracts the spatial information from the box coordinates. Moreover, we analyzed the Volleyball dataset, on which the recent literature is mostly based, and found that its labeling scheme degrades the group concept in the activities to the level of individual actors. We manually reannotated the dataset in a systematic manner to emphasize the group concept. Experimental results on the Volleyball as well as Collective Activity (from another domain, i.e., not volleyball) datasets demonstrated the effectiveness of the proposed model DECOMPL, which delivered the best/second best GAR performance with the reannotations/original annotations among the comparable state-of-the-art techniques. Our code, results and new annotations will be made available through GitHub after the revision process.

Learning Grounded Vision-Language Representation for Versatile Understanding in Untrimmed Videos

Mar 11, 2023

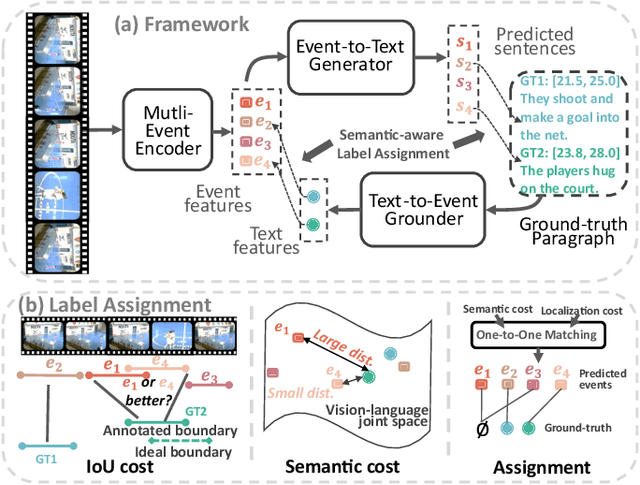

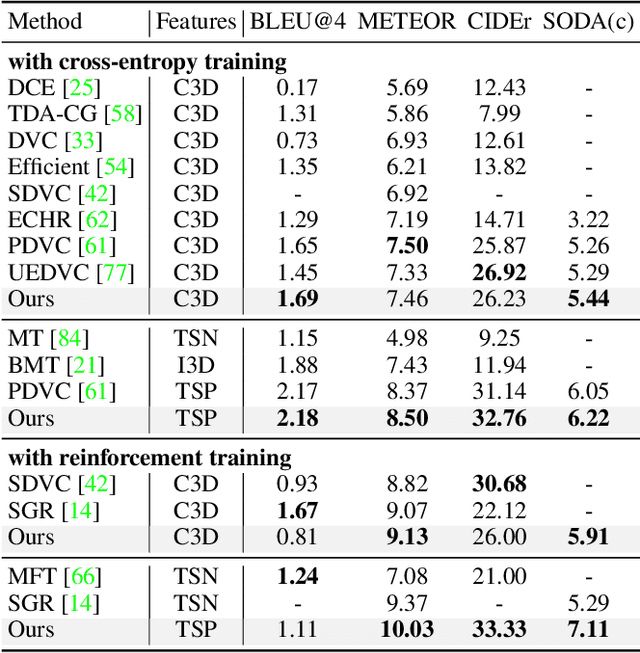

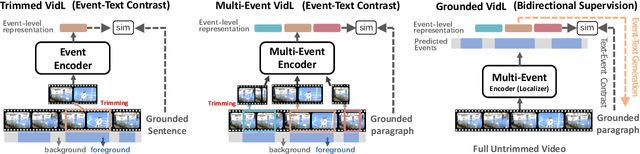

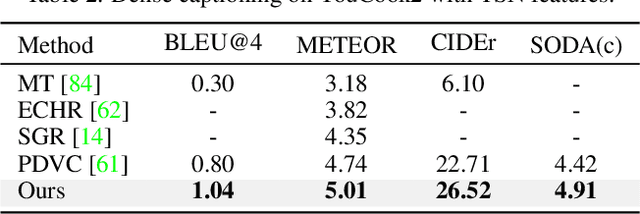

Joint video-language learning has received increasing attention in recent years. However, existing works mainly focus on single or multiple trimmed video clips (events), which makes human-annotated event boundaries necessary during inference. To break away from the ties, we propose a grounded vision-language learning framework for untrimmed videos, which automatically detects informative events and effectively excavates the alignments between multi-sentence descriptions and corresponding event segments. Instead of coarse-level video-language alignments, we present two dual pretext tasks to encourage fine-grained segment-level alignments, i.e., text-to-event grounding (TEG) and event-to-text generation (ETG). TEG learns to adaptively ground the possible event proposals given a set of sentences by estimating the cross-modal distance in a joint semantic space. Meanwhile, ETG aims to reconstruct (generate) the matched texts given event proposals, encouraging the event representation to retain meaningful semantic information. To encourage accurate label assignment between the event set and the text set, we propose a novel semantic-aware cost to mitigate the sub-optimal matching results caused by ambiguous boundary annotations. Our framework is easily extensible to tasks covering visually-grounded language understanding and generation. We achieve state-of-the-art dense video captioning performance on ActivityNet Captions, YouCook2 and YouMakeup, and competitive performance on several other language generation and understanding tasks. Our method also achieved 1st place in both the MTVG and MDVC tasks of the PIC 4th Challenge.

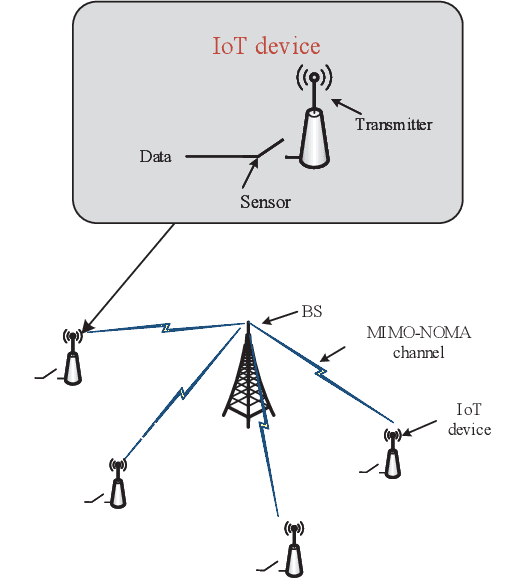

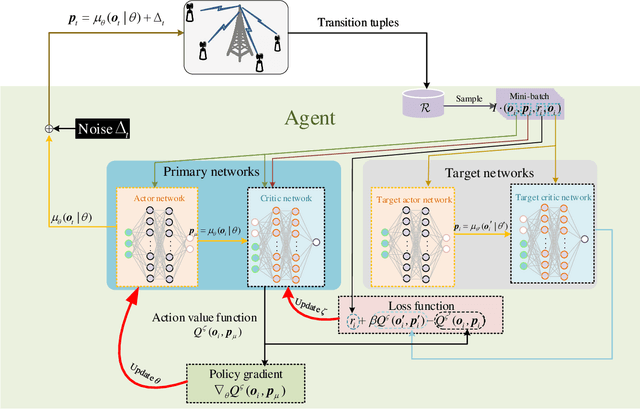

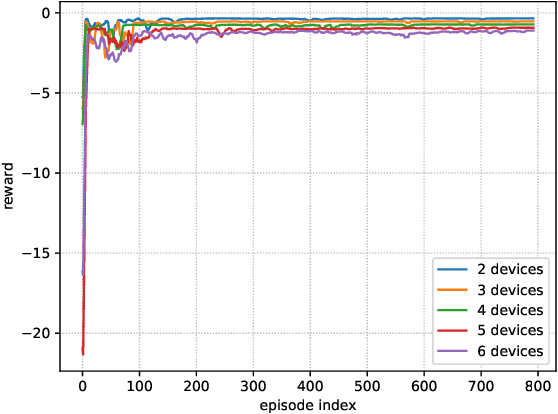

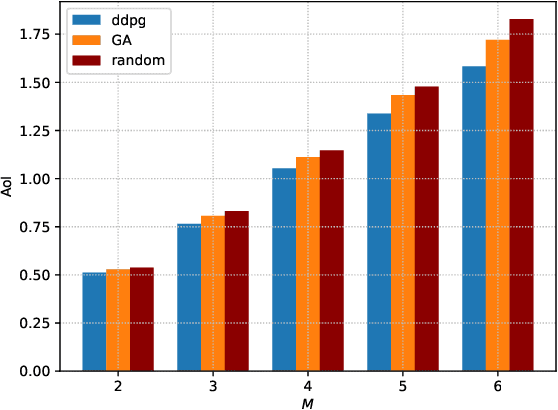

Deep Reinforcement Learning Based Power Allocation for Minimizing AoI and Energy Consumption in MIMO-NOMA IoT Systems

Mar 11, 2023

Multi-input multi-output and non-orthogonal multiple access (MIMO-NOMA) internet-of-things (IoT) systems can markedly improve channel capacity and spectrum efficiency to support real-time applications. Age of information (AoI) is an important metric for real-time applications, but no existing work has minimized the AoI of a MIMO-NOMA IoT system, which motivates this work. In a MIMO-NOMA IoT system, the base station (BS) determines the sample collection requirements and allocates the transmission power for each IoT device. Each device determines whether to sample data according to the sample collection requirements and uses the allocated power to transmit the sampled data to the BS over the MIMO-NOMA channel. Afterwards, the BS employs the successive interference cancelation (SIC) technique to decode the signal of the data transmitted by each device. The sample collection requirements and power allocation affect the AoI and energy consumption of the system. It is critical to determine the optimal policy, including sample collection requirements and power allocation, to minimize the AoI and energy consumption of the MIMO-NOMA IoT system, where the transmission rate is not constant in the SIC process and the noise is stochastic in the MIMO-NOMA channel. In this paper, we propose an optimal power allocation based on deep reinforcement learning (DRL) to minimize the AoI and energy consumption of the MIMO-NOMA IoT system. Extensive simulations are carried out to demonstrate the superiority of the optimal power allocation.
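As a rough illustration of the AoI metric discussed above, the sketch below tracks the age of a single device in a simplified slotted model. It is not the system model of the paper: the function `aoi_trajectory`, the assumption that a delivered sample resets the age to 1, and the example delivery pattern are all hypothetical, and channel effects, SIC decoding and power allocation are ignored.

```python
def aoi_trajectory(delivered, initial_age=1):
    """Return the age of information after each time slot.

    delivered[t] is True when a fresh sample reaches the base station in
    slot t. Assumption: a delivery resets the age to 1; otherwise the age
    grows by one slot.
    """
    ages = []
    age = initial_age
    for ok in delivered:
        age = 1 if ok else age + 1
        ages.append(age)
    return ages

# Hypothetical delivery pattern for one device over five slots.
delivered = [False, False, True, False, True]
ages = aoi_trajectory(delivered)
average_age = sum(ages) / len(ages)
```

Under this toy model, delivering more often lowers the average age, but each transmission costs energy; that is the trade-off the abstract says the DRL policy optimizes.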
