Facial Recognition

Facial recognition is an AI-based technique for identifying or confirming an individual's identity using their face. It maps facial features from an image or video and then compares the information with a collection of known faces to find a match.

Papers and Code

Autonomous AI Surveillance: Multimodal Deep Learning for Cognitive and Behavioral Monitoring

Jul 02, 2025

This study presents a novel classroom surveillance system that integrates multiple modalities, including drowsiness detection, mobile phone usage tracking, and face recognition, to assess student attentiveness with enhanced precision. The system leverages the YOLOv8 model to detect both mobile phone usage and sleep (Ghatge et al., 2024), while facial recognition is achieved through LResNet Occ FC body tracking using YOLO and MTCNN (Durai et al., 2024). These models work in synergy to provide comprehensive, real-time monitoring, offering insights into student engagement and behavior (S et al., 2023). The framework is trained on specialized datasets, such as the RMFD dataset for face recognition and a Roboflow dataset for mobile phone detection. The extensive evaluation of the system shows promising results: sleep detection achieves 97.42% mAP@50, face recognition achieves 86.45% validation accuracy, and mobile phone detection reaches 85.89% mAP@50. The system is implemented within a core PHP web application and utilizes ESP32-CAM hardware for seamless data capture (Neto et al., 2024). This integrated approach not only enhances classroom monitoring, but also ensures automatic attendance recording via face recognition as students remain seated in the classroom, offering scalability for diverse educational environments (Banada, 2025).
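
The mAP@50 figures quoted above count a detection as correct when its box overlaps a ground-truth box with an intersection-over-union (IoU) of at least 0.5. The sketch below illustrates only that matching criterion, not the paper's evaluation code; the function names and the `(x1, y1, x2, y2)` box layout are assumptions made for the example.

```python
def iou(box_a, box_b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlap rectangle (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def matches_at_50(pred_box, gt_box):
    """Hypothetical helper: True if the prediction counts as a hit at IoU >= 0.5."""
    return iou(pred_box, gt_box) >= 0.5
```

Average precision is then computed per class from the ranked list of hits and misses, and mAP@50 is the mean over classes; that aggregation step is omitted here.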

CAST-Phys: Contactless Affective States Through Physiological signals Database

Jul 08, 2025

In recent years, affective computing and its applications have become a fast-growing research topic. Despite significant advancements, the lack of affective multi-modal datasets remains a major bottleneck in developing accurate emotion recognition systems. Furthermore, the use of contact-based devices during emotion elicitation often unintentionally influences the emotional experience, reducing or altering the genuine spontaneous emotional response. This limitation highlights the need for methods capable of extracting affective cues from multiple modalities without physical contact, such as remote physiological emotion recognition. To address this, we present the Contactless Affective States Through Physiological Signals Database (CAST-Phys), a novel high-quality dataset explicitly designed for multi-modal remote physiological emotion recognition using facial and physiological cues. The dataset includes diverse physiological signals, such as photoplethysmography (PPG), electrodermal activity (EDA), and respiration rate (RR), alongside high-resolution uncompressed facial video recordings, enabling the potential for remote signal recovery. Our analysis highlights the crucial role of physiological signals in realistic scenarios where facial expressions alone may not provide sufficient emotional information. Furthermore, we demonstrate the potential of remote multi-modal emotion recognition by evaluating the impact of individual and fused modalities, showcasing its effectiveness in advancing contactless emotion recognition technologies.

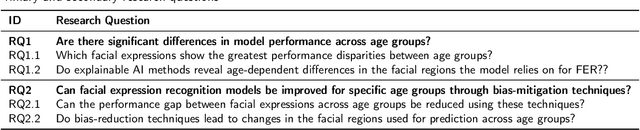

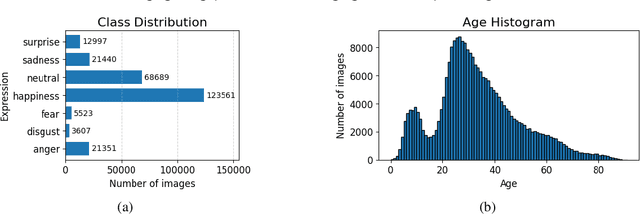

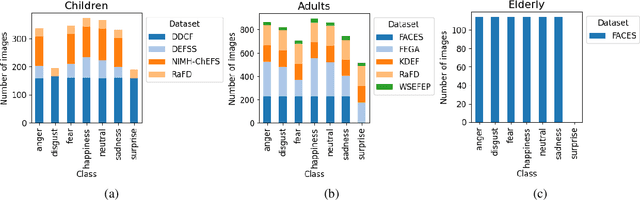

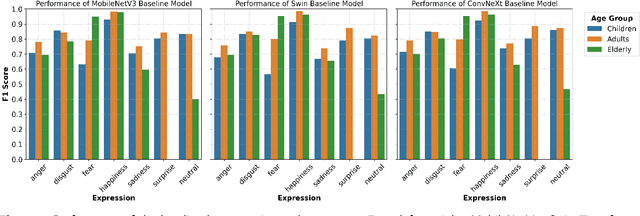

Bridging the gap in FER: addressing age bias in deep learning

Jul 10, 2025

Facial Expression Recognition (FER) systems based on deep learning have achieved impressive performance in recent years. However, these models often exhibit demographic biases, particularly with respect to age, which can compromise their fairness and reliability. In this work, we present a comprehensive study of age-related bias in deep FER models, with a particular focus on the elderly population. We first investigate whether recognition performance varies across age groups, which expressions are most affected, and whether model attention differs depending on age. Using Explainable AI (XAI) techniques, we identify systematic disparities in expression recognition and attention patterns, especially for "neutral", "sadness", and "anger" in elderly individuals. Based on these findings, we propose and evaluate three bias mitigation strategies: Multi-task Learning, Multi-modal Input, and Age-weighted Loss. Our models are trained on a large-scale dataset, AffectNet, with automatically estimated age labels and validated on balanced benchmark datasets that include underrepresented age groups. Results show consistent improvements in recognition accuracy for elderly individuals, particularly for the most error-prone expressions. Saliency heatmap analysis reveals that models trained with age-aware strategies attend to more relevant facial regions for each age group, helping to explain the observed improvements. These findings suggest that age-related bias in FER can be effectively mitigated using simple training modifications, and that even approximate demographic labels can be valuable for promoting fairness in large-scale affective computing systems.
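
One of the three mitigation strategies named above, an age-weighted loss, can be illustrated with a weighted cross-entropy in which samples from under-represented age groups count more. This is a minimal sketch under assumptions: the abstract does not give the exact formulation, and the function name, group labels, and weights below are hypothetical.

```python
import math


def age_weighted_cross_entropy(probs, labels, age_groups, group_weights):
    """Weighted mean cross-entropy; each sample is scaled by its age-group weight.

    probs: per-sample lists of predicted class probabilities
    labels: per-sample true class indices
    age_groups: per-sample age-group keys (hypothetical labels)
    group_weights: mapping from age-group key to a positive weight
    """
    total = 0.0
    weight_sum = 0.0
    for p, y, group in zip(probs, labels, age_groups):
        w = group_weights[group]
        # Clamp the probability to avoid log(0).
        total += -w * math.log(max(p[y], 1e-12))
        weight_sum += w
    return total / weight_sum
```

With weights such as `{"adult": 1.0, "elderly": 3.0}`, errors on elderly faces contribute three times as much to the loss as errors on adult faces, which pushes training toward the group the model handles worst.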

Facial Recognition Leveraging Generative Adversarial Networks

May 17, 2025

Face recognition performance based on deep learning heavily relies on large-scale training data, which is often difficult to acquire in practical applications. To address this challenge, this paper proposes a GAN-based data augmentation method with three key contributions: (1) a residual-embedded generator to alleviate gradient vanishing/exploding problems, (2) an Inception ResNet-V1 based FaceNet discriminator for improved adversarial training, and (3) an end-to-end framework that jointly optimizes data generation and recognition performance. Experimental results demonstrate that our approach achieves stable training dynamics and significantly improves face recognition accuracy by 12.7% on the LFW benchmark compared to baseline methods, while maintaining good generalization capability with limited training samples.

2D-3D Attention and Entropy for Pose Robust 2D Facial Recognition

May 14, 2025

Despite recent advances in facial recognition, there remains a fundamental issue concerning degradations in performance due to substantial perspective (pose) differences between enrollment and query (probe) imagery. Therefore, we propose a novel domain adaptive framework to facilitate improved performances across large discrepancies in pose by enabling image-based (2D) representations to infer properties of inherently pose invariant point cloud (3D) representations. Specifically, our proposed framework achieves better pose invariance by using (1) a shared (joint) attention mapping to emphasize common patterns that are most correlated between 2D facial images and 3D facial data and (2) a joint entropy regularizing loss to promote better consistency, enhancing correlations among the intersecting 2D and 3D representations, by leveraging both attention maps. This framework is evaluated on FaceScape and ARL-VTF datasets, where it outperforms competitive methods by achieving profile (90°+) TAR @ 1% FAR improvements of at least 7.1% and 1.57%, respectively.
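
The reported metric, TAR @ 1% FAR, is the true accept rate at the decision threshold where at most 1% of impostor comparisons are falsely accepted. The following sketch shows one common way to compute it from similarity scores; it is not the paper's evaluation code, and the function name and tie-handling rule (accept only scores strictly above the threshold) are assumptions.

```python
import math


def tar_at_far(genuine_scores, impostor_scores, target_far):
    """True accept rate at the lowest threshold whose false accept rate <= target_far.

    Higher scores mean "more similar". A comparison is accepted when its
    score is strictly greater than the threshold.
    """
    impostors = sorted(impostor_scores, reverse=True)
    # Maximum number of impostor comparisons we may accept.
    allowed = math.floor(target_far * len(impostors) + 1e-9)
    if allowed >= len(impostors):
        threshold = float("-inf")
    else:
        # The (allowed + 1)-th highest impostor score; scores above it
        # number at most `allowed`.
        threshold = impostors[allowed]
    accepted = sum(1 for s in genuine_scores if s > threshold)
    return accepted / len(genuine_scores)
```

For example, with ten impostor scores and `target_far=0.1`, one impostor may be accepted, so the threshold sits at the second-highest impostor score and the function returns the fraction of genuine pairs scoring above it.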

Color histogram equalization and fine-tuning to improve expression recognition of (partially occluded) faces on sign language datasets

Jul 27, 2025

The goal of this investigation is to quantify to what extent computer vision methods can correctly classify facial expressions on a sign language dataset. We extend our experiments by recognizing expressions using only the upper or lower part of the face, which is needed to further investigate the difference in emotion manifestation between hearing and deaf subjects. To take into account the peculiar color profile of a dataset, our method introduces a color normalization stage based on histogram equalization and fine-tuning. The results show the ability to correctly recognize facial expressions with 83.8% mean sensitivity and very little variance (0.042) among classes. As with humans, recognition of expressions from the lower half of the face (79.6%) is higher than that from the upper half (77.9%). Notably, the classification accuracy from the upper half of the face is higher than human level.
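
The color normalization stage mentioned above is based on histogram equalization, which remaps intensities so that their cumulative distribution becomes roughly uniform. The sketch below applies the standard equalization formula independently to each RGB channel; the abstract does not say which color space or variant the authors use, so the per-channel choice and both function names are assumptions.

```python
def equalize_channel(pixels, levels=256):
    """Histogram-equalize a flat list of integer intensities in [0, levels)."""
    if not pixels:
        return []
    hist = [0] * levels
    for v in pixels:
        hist[v] += 1
    # Cumulative distribution of intensities.
    cdf = []
    running = 0
    for count in hist:
        running += count
        cdf.append(running)
    cdf_min = next(c for c in cdf if c > 0)
    n = len(pixels)
    if n == cdf_min:
        # Constant channel: nothing to stretch.
        return list(pixels)
    scale = (levels - 1) / (n - cdf_min)
    lookup = [round(max(c - cdf_min, 0) * scale) for c in cdf]
    return [lookup[v] for v in pixels]


def equalize_rgb(image):
    """Equalize each channel of an image given as a flat list of (r, g, b) tuples."""
    channels = [equalize_channel([px[i] for px in image]) for i in range(3)]
    return list(zip(*channels))
```

Equalizing channels independently can shift hues; equalizing only a luminance channel is a common alternative when color fidelity matters.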

Accuracy and Fairness of Facial Recognition Technology in Low-Quality Police Images: An Experiment With Synthetic Faces

May 20, 2025

Facial recognition technology (FRT) is increasingly used in criminal investigations, yet most evaluations of its accuracy rely on high-quality images, unlike those often encountered by law enforcement. This study examines how five common forms of image degradation (contrast, brightness, motion blur, pose shift, and resolution) affect FRT accuracy and fairness across demographic groups. Using synthetic faces generated by StyleGAN3 and labeled with FairFace, we simulate degraded images and evaluate performance using Deepface with ArcFace loss in 1:N identification tasks. We perform an experiment and find that false positive rates peak near baseline image quality, while false negatives increase as degradation intensifies, especially with blur and low resolution. Error rates are consistently higher for women and Black individuals, with Black females most affected. These disparities raise concerns about fairness and reliability when FRT is used in real-world investigative contexts. Nevertheless, even under the most challenging conditions and for the most affected subgroups, FRT accuracy remains substantially higher than that of many traditional forensic methods. This suggests that, if appropriately validated and regulated, FRT should be considered a valuable investigative tool. However, algorithmic accuracy alone is not sufficient: we must also evaluate how FRT is used in practice, including user-driven data manipulation. Such cases underscore the need for transparency and oversight in FRT deployment to ensure both fairness and forensic validity.

AU-Blendshape for Fine-grained Stylized 3D Facial Expression Manipulation

Jul 16, 2025

While 3D facial animation has made impressive progress, challenges still exist in realizing fine-grained stylized 3D facial expression manipulation due to the lack of appropriate datasets. In this paper, we introduce the AUBlendSet, a 3D facial dataset based on AU-Blendshape representation for fine-grained facial expression manipulation across identities. AUBlendSet is a blendshape data collection based on 32 standard facial action units (AUs) across 500 identities, along with an additional set of facial postures annotated with detailed AUs. Based on AUBlendSet, we propose AUBlendNet to learn AU-Blendshape basis vectors for different character styles. AUBlendNet predicts, in parallel, the AU-Blendshape basis vectors of the corresponding style for a given identity mesh, thereby achieving stylized 3D emotional facial manipulation. We comprehensively validate the effectiveness of AUBlendSet and AUBlendNet through tasks such as stylized facial expression manipulation, speech-driven emotional facial animation, and emotion recognition data augmentation. Through a series of qualitative and quantitative experiments, we demonstrate the potential and importance of AUBlendSet and AUBlendNet in 3D facial animation tasks. To the best of our knowledge, AUBlendSet is the first dataset, and AUBlendNet is the first network for continuous 3D facial expression manipulation for any identity through facial AUs. Our source code is available at https://github.com/wslh852/AUBlendNet.git.
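
Blendshape representations such as the one described above are conventionally evaluated as a neutral mesh plus a weighted sum of per-unit vertex offsets. The sketch below shows that standard linear (delta) model with one offset set per action unit; whether AUBlendNet uses exactly this formulation is not stated in the abstract, and the function name and data layout are assumptions.

```python
def apply_blendshapes(neutral, basis, weights):
    """Deform a mesh with the linear blendshape model.

    neutral: list of (x, y, z) vertex positions
    basis: one list of (dx, dy, dz) vertex offsets per action unit
    weights: one activation per action unit, typically in [0, 1]
    """
    if len(basis) != len(weights):
        raise ValueError("one weight is required per basis vector")
    result = []
    for vertex_index, (x, y, z) in enumerate(neutral):
        for offsets, w in zip(basis, weights):
            dx, dy, dz = offsets[vertex_index]
            x += w * dx
            y += w * dy
            z += w * dz
        result.append((x, y, z))
    return result
```

Under this model, a network that predicts style-specific basis vectors only changes `basis`; the AU activations in `weights` keep the same meaning across character styles.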

FG 2025 TrustFAA: the First Workshop on Towards Trustworthy Facial Affect Analysis: Advancing Insights of Fairness, Explainability, and Safety (TrustFAA)

Jun 05, 2025

With the increasing prevalence and deployment of Emotion AI-powered facial affect analysis (FAA) tools, concerns about the trustworthiness of these systems have become more prominent. This first workshop on "Towards Trustworthy Facial Affect Analysis: Advancing Insights of Fairness, Explainability, and Safety (TrustFAA)" aims to bring together researchers who are investigating different challenges in relation to trustworthiness (such as interpretability, uncertainty, biases, and privacy) across various facial affect analysis tasks, including macro-/micro-expression recognition, facial action unit detection, other corresponding applications such as pain and depression detection, as well as human-robot interaction and collaboration. In alignment with FG2025's emphasis on ethics, as demonstrated by the inclusion of an Ethical Impact Statement requirement for this year's submissions, this workshop supports FG2025's efforts by encouraging research, discussion and dialogue on trustworthy FAA.

TKFNet: Learning Texture Key Factor Driven Feature for Facial Expression Recognition

May 15, 2025

Facial expression recognition (FER) in the wild remains a challenging task due to the subtle and localized nature of expression-related features, as well as the complex variations in facial appearance. In this paper, we introduce a novel framework that explicitly focuses on Texture Key Driver Factors (TKDF), localized texture regions that exhibit strong discriminative power across emotional categories. By carefully observing facial image patterns, we identify that certain texture cues, such as micro-changes in skin around the brows, eyes, and mouth, serve as primary indicators of emotional dynamics. To effectively capture and leverage these cues, we propose a FER architecture comprising a Texture-Aware Feature Extractor (TAFE) and Dual Contextual Information Filtering (DCIF). TAFE employs a ResNet-based backbone enhanced with multi-branch attention to extract fine-grained texture representations, while DCIF refines these features by filtering context through adaptive pooling and attention mechanisms. Experimental results on RAF-DB and KDEF datasets demonstrate that our method achieves state-of-the-art performance, verifying the effectiveness and robustness of incorporating TKDFs into FER pipelines.
