Yingwei Pan

Control3D: Towards Controllable Text-to-3D Generation

Nov 09, 2023Abstract:Recent remarkable advances in large-scale text-to-image diffusion models have inspired a significant breakthrough in text-to-3D generation, pursuing 3D content creation solely from a given text prompt. However, existing text-to-3D techniques lack a crucial ability in the creative process: interactively control and shape the synthetic 3D contents according to users' desired specifications (e.g., sketch). To alleviate this issue, we present the first attempt for text-to-3D generation conditioning on the additional hand-drawn sketch, namely Control3D, which enhances controllability for users. In particular, a 2D conditioned diffusion model (ControlNet) is remoulded to guide the learning of 3D scene parameterized as NeRF, encouraging each view of 3D scene aligned with the given text prompt and hand-drawn sketch. Moreover, we exploit a pre-trained differentiable photo-to-sketch model to directly estimate the sketch of the rendered image over synthetic 3D scene. Such estimated sketch along with each sampled view is further enforced to be geometrically consistent with the given sketch, pursuing better controllable text-to-3D generation. Through extensive experiments, we demonstrate that our proposal can generate accurate and faithful 3D scenes that align closely with the input text prompts and sketches.

ControlStyle: Text-Driven Stylized Image Generation Using Diffusion Priors

Nov 09, 2023

Abstract:Recently, the multimedia community has witnessed the rise of diffusion models trained on large-scale multi-modal data for visual content creation, particularly in the field of text-to-image generation. In this paper, we propose a new task for ``stylizing'' text-to-image models, namely text-driven stylized image generation, that further enhances editability in content creation. Given input text prompt and style image, this task aims to produce stylized images which are both semantically relevant to input text prompt and meanwhile aligned with the style image in style. To achieve this, we present a new diffusion model (ControlStyle) via upgrading a pre-trained text-to-image model with a trainable modulation network enabling more conditions of text prompts and style images. Moreover, diffusion style and content regularizations are simultaneously introduced to facilitate the learning of this modulation network with these diffusion priors, pursuing high-quality stylized text-to-image generation. Extensive experiments demonstrate the effectiveness of our ControlStyle in producing more visually pleasing and artistic results, surpassing a simple combination of text-to-image model and conventional style transfer techniques.

Transforming Radiance Field with Lipschitz Network for Photorealistic 3D Scene Stylization

Mar 23, 2023

Abstract:Recent advances in 3D scene representation and novel view synthesis have witnessed the rise of Neural Radiance Fields (NeRFs). Nevertheless, it is not trivial to exploit NeRF for the photorealistic 3D scene stylization task, which aims to generate visually consistent and photorealistic stylized scenes from novel views. Simply coupling NeRF with photorealistic style transfer (PST) will result in cross-view inconsistency and degradation of stylized view syntheses. Through a thorough analysis, we demonstrate that this non-trivial task can be simplified in a new light: When transforming the appearance representation of a pre-trained NeRF with Lipschitz mapping, the consistency and photorealism across source views will be seamlessly encoded into the syntheses. That motivates us to build a concise and flexible learning framework namely LipRF, which upgrades arbitrary 2D PST methods with Lipschitz mapping tailored for the 3D scene. Technically, LipRF first pre-trains a radiance field to reconstruct the 3D scene, and then emulates the style on each view by 2D PST as the prior to learn a Lipschitz network to stylize the pre-trained appearance. In view of that Lipschitz condition highly impacts the expressivity of the neural network, we devise an adaptive regularization to balance the reconstruction and stylization. A gradual gradient aggregation strategy is further introduced to optimize LipRF in a cost-efficient manner. We conduct extensive experiments to show the high quality and robust performance of LipRF on both photorealistic 3D stylization and object appearance editing.

Modality-Agnostic Debiasing for Single Domain Generalization

Mar 13, 2023

Abstract:Deep neural networks (DNNs) usually fail to generalize well to outside of distribution (OOD) data, especially in the extreme case of single domain generalization (single-DG) that transfers DNNs from single domain to multiple unseen domains. Existing single-DG techniques commonly devise various data-augmentation algorithms, and remould the multi-source domain generalization methodology to learn domain-generalized (semantic) features. Nevertheless, these methods are typically modality-specific, thereby being only applicable to one single modality (e.g., image). In contrast, we target a versatile Modality-Agnostic Debiasing (MAD) framework for single-DG, that enables generalization for different modalities. Technically, MAD introduces a novel two-branch classifier: a biased-branch encourages the classifier to identify the domain-specific (superficial) features, and a general-branch captures domain-generalized features based on the knowledge from biased-branch. Our MAD is appealing in view that it is pluggable to most single-DG models. We validate the superiority of our MAD in a variety of single-DG scenarios with different modalities, including recognition on 1D texts, 2D images, 3D point clouds, and semantic segmentation on 2D images. More remarkably, for recognition on 3D point clouds and semantic segmentation on 2D images, MAD improves DSU by 2.82\% and 1.5\% in accuracy and mIOU.

Semantic-Conditional Diffusion Networks for Image Captioning

Dec 06, 2022Abstract:Recent advances on text-to-image generation have witnessed the rise of diffusion models which act as powerful generative models. Nevertheless, it is not trivial to exploit such latent variable models to capture the dependency among discrete words and meanwhile pursue complex visual-language alignment in image captioning. In this paper, we break the deeply rooted conventions in learning Transformer-based encoder-decoder, and propose a new diffusion model based paradigm tailored for image captioning, namely Semantic-Conditional Diffusion Networks (SCD-Net). Technically, for each input image, we first search the semantically relevant sentences via cross-modal retrieval model to convey the comprehensive semantic information. The rich semantics are further regarded as semantic prior to trigger the learning of Diffusion Transformer, which produces the output sentence in a diffusion process. In SCD-Net, multiple Diffusion Transformer structures are stacked to progressively strengthen the output sentence with better visional-language alignment and linguistical coherence in a cascaded manner. Furthermore, to stabilize the diffusion process, a new self-critical sequence training strategy is designed to guide the learning of SCD-Net with the knowledge of a standard autoregressive Transformer model. Extensive experiments on COCO dataset demonstrate the promising potential of using diffusion models in the challenging image captioning task. Source code is available at \url{https://github.com/YehLi/xmodaler/tree/master/configs/image_caption/scdnet}.

SPE-Net: Boosting Point Cloud Analysis via Rotation Robustness Enhancement

Nov 15, 2022Abstract:In this paper, we propose a novel deep architecture tailored for 3D point cloud applications, named as SPE-Net. The embedded ``Selective Position Encoding (SPE)'' procedure relies on an attention mechanism that can effectively attend to the underlying rotation condition of the input. Such encoded rotation condition then determines which part of the network parameters to be focused on, and is shown to efficiently help reduce the degree of freedom of the optimization during training. This mechanism henceforth can better leverage the rotation augmentations through reduced training difficulties, making SPE-Net robust against rotated data both during training and testing. The new findings in our paper also urge us to rethink the relationship between the extracted rotation information and the actual test accuracy. Intriguingly, we reveal evidences that by locally encoding the rotation information through SPE-Net, the rotation-invariant features are still of critical importance in benefiting the test samples without any actual global rotation. We empirically demonstrate the merits of the SPE-Net and the associated hypothesis on four benchmarks, showing evident improvements on both rotated and unrotated test data over SOTA methods. Source code is available at https://github.com/ZhaofanQiu/SPE-Net.

3D Cascade RCNN: High Quality Object Detection in Point Clouds

Nov 15, 2022

Abstract:Recent progress on 2D object detection has featured Cascade RCNN, which capitalizes on a sequence of cascade detectors to progressively improve proposal quality, towards high-quality object detection. However, there has not been evidence in support of building such cascade structures for 3D object detection, a challenging detection scenario with highly sparse LiDAR point clouds. In this work, we present a simple yet effective cascade architecture, named 3D Cascade RCNN, that allocates multiple detectors based on the voxelized point clouds in a cascade paradigm, pursuing higher quality 3D object detector progressively. Furthermore, we quantitatively define the sparsity level of the points within 3D bounding box of each object as the point completeness score, which is exploited as the task weight for each proposal to guide the learning of each stage detector. The spirit behind is to assign higher weights for high-quality proposals with relatively complete point distribution, while down-weight the proposals with extremely sparse points that often incur noise during training. This design of completeness-aware re-weighting elegantly upgrades the cascade paradigm to be better applicable for the sparse input data, without increasing any FLOP budgets. Through extensive experiments on both the KITTI dataset and Waymo Open Dataset, we validate the superiority of our proposed 3D Cascade RCNN, when comparing to state-of-the-art 3D object detection techniques. The source code is publicly available at \url{https://github.com/caiqi/Cascasde-3D}.

Dynamic Temporal Filtering in Video Models

Nov 15, 2022Abstract:Video temporal dynamics is conventionally modeled with 3D spatial-temporal kernel or its factorized version comprised of 2D spatial kernel and 1D temporal kernel. The modeling power, nevertheless, is limited by the fixed window size and static weights of a kernel along the temporal dimension. The pre-determined kernel size severely limits the temporal receptive fields and the fixed weights treat each spatial location across frames equally, resulting in sub-optimal solution for long-range temporal modeling in natural scenes. In this paper, we present a new recipe of temporal feature learning, namely Dynamic Temporal Filter (DTF), that novelly performs spatial-aware temporal modeling in frequency domain with large temporal receptive field. Specifically, DTF dynamically learns a specialized frequency filter for every spatial location to model its long-range temporal dynamics. Meanwhile, the temporal feature of each spatial location is also transformed into frequency feature spectrum via 1D Fast Fourier Transform (FFT). The spectrum is modulated by the learnt frequency filter, and then transformed back to temporal domain with inverse FFT. In addition, to facilitate the learning of frequency filter in DTF, we perform frame-wise aggregation to enhance the primary temporal feature with its temporal neighbors by inter-frame correlation. It is feasible to plug DTF block into ConvNets and Transformer, yielding DTF-Net and DTF-Transformer. Extensive experiments conducted on three datasets demonstrate the superiority of our proposals. More remarkably, DTF-Transformer achieves an accuracy of 83.5% on Kinetics-400 dataset. Source code is available at \url{https://github.com/FuchenUSTC/DTF}.

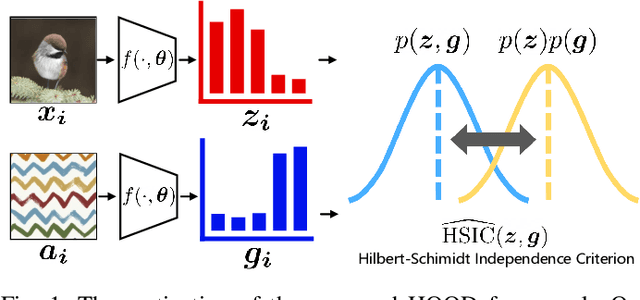

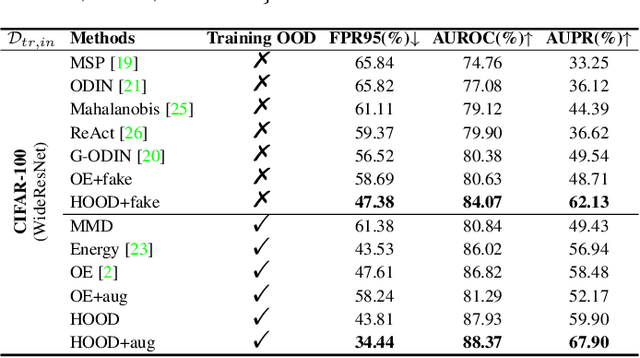

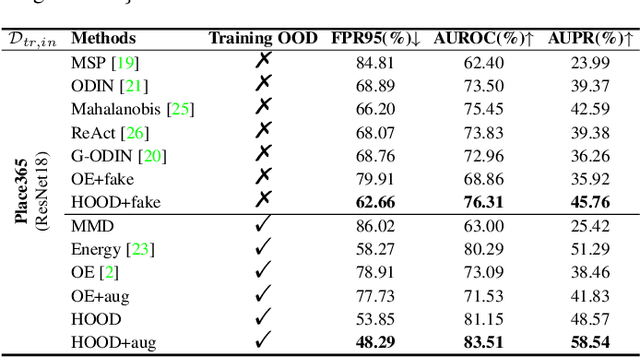

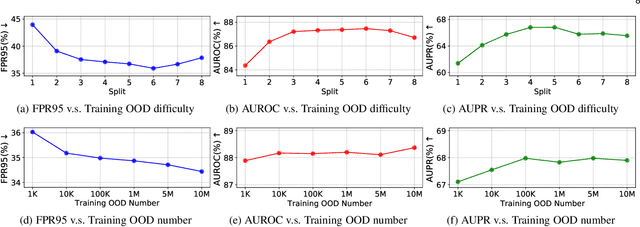

Out-of-Distribution Detection with Hilbert-Schmidt Independence Optimization

Sep 26, 2022

Abstract:Outlier detection tasks have been playing a critical role in AI safety. There has been a great challenge to deal with this task. Observations show that deep neural network classifiers usually tend to incorrectly classify out-of-distribution (OOD) inputs into in-distribution classes with high confidence. Existing works attempt to solve the problem by explicitly imposing uncertainty on classifiers when OOD inputs are exposed to the classifier during training. In this paper, we propose an alternative probabilistic paradigm that is both practically useful and theoretically viable for the OOD detection tasks. Particularly, we impose statistical independence between inlier and outlier data during training, in order to ensure that inlier data reveals little information about OOD data to the deep estimator during training. Specifically, we estimate the statistical dependence between inlier and outlier data through the Hilbert-Schmidt Independence Criterion (HSIC), and we penalize such metric during training. We also associate our approach with a novel statistical test during the inference time coupled with our principled motivation. Empirical results show that our method is effective and robust for OOD detection on various benchmarks. In comparison to SOTA models, our approach achieves significant improvement regarding FPR95, AUROC, and AUPR metrics. Code is available: \url{https://github.com/jylins/hood}.

Dual Vision Transformer

Jul 12, 2022

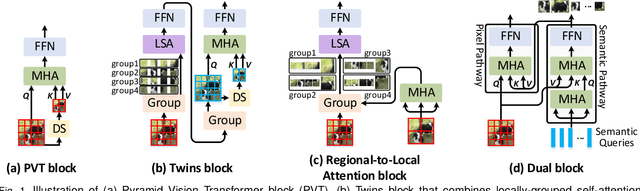

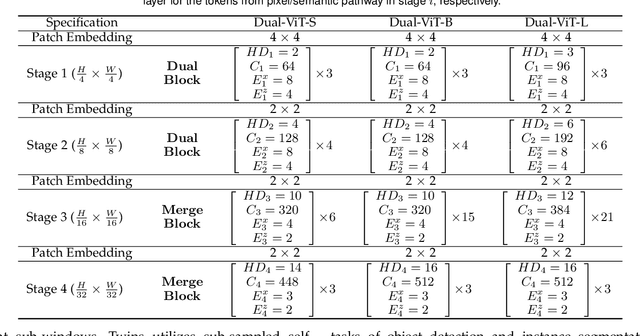

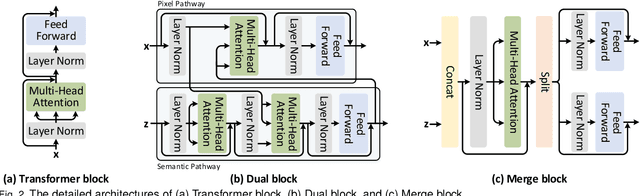

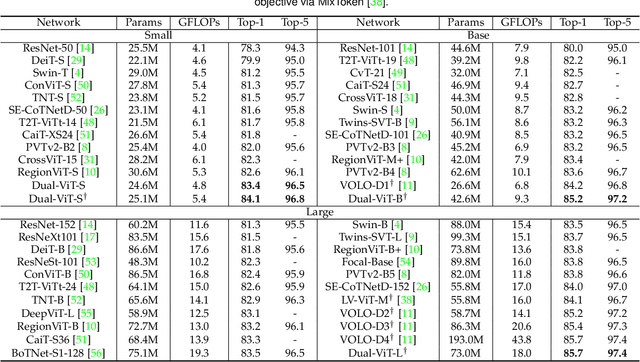

Abstract:Prior works have proposed several strategies to reduce the computational cost of self-attention mechanism. Many of these works consider decomposing the self-attention procedure into regional and local feature extraction procedures that each incurs a much smaller computational complexity. However, regional information is typically only achieved at the expense of undesirable information lost owing to down-sampling. In this paper, we propose a novel Transformer architecture that aims to mitigate the cost issue, named Dual Vision Transformer (Dual-ViT). The new architecture incorporates a critical semantic pathway that can more efficiently compress token vectors into global semantics with reduced order of complexity. Such compressed global semantics then serve as useful prior information in learning finer pixel level details, through another constructed pixel pathway. The semantic pathway and pixel pathway are then integrated together and are jointly trained, spreading the enhanced self-attention information in parallel through both of the pathways. Dual-ViT is henceforth able to reduce the computational complexity without compromising much accuracy. We empirically demonstrate that Dual-ViT provides superior accuracy than SOTA Transformer architectures with reduced training complexity. Source code is available at \url{https://github.com/YehLi/ImageNetModel}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge