Tomáš Krajník

Federated Reinforcement Learning for Collective Navigation of Robotic Swarms

Feb 02, 2022

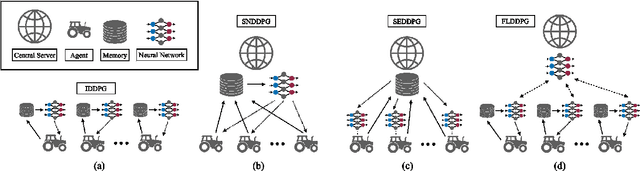

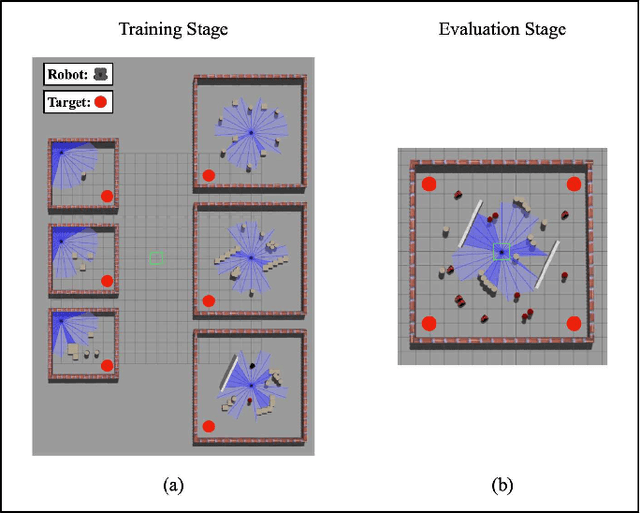

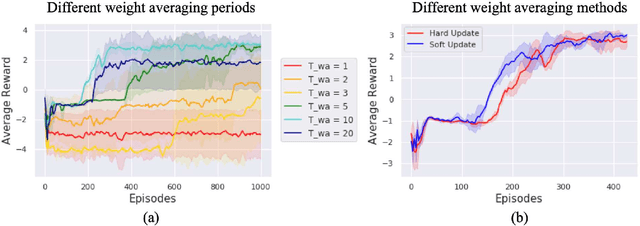

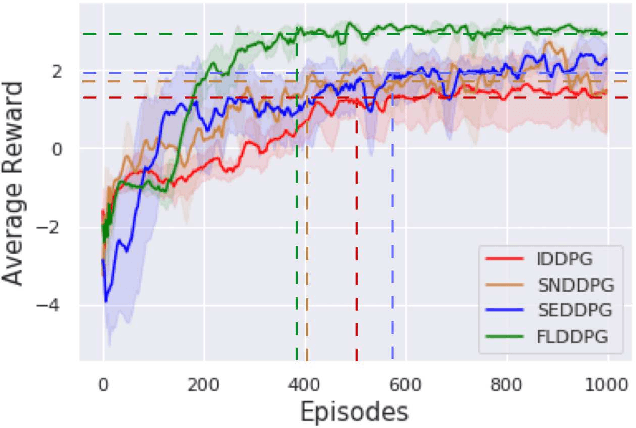

Abstract:The recent advancement of Deep Reinforcement Learning (DRL) contributed to robotics by allowing automatic controller design. Automatic controller design is a crucial approach for designing swarm robotic systems, which require more complex controller than a single robot system to lead a desired collective behaviour. Although DRL-based controller design method showed its effectiveness, the reliance on the central training server is a critical problem in the real-world environments where the robot-server communication is unstable or limited. We propose a novel Federated Learning (FL) based DRL training strategy for use in swarm robotic applications. As FL reduces the number of robot-server communication by only sharing neural network model weights, not local data samples, the proposed strategy reduces the reliance on the central server during controller training with DRL. The experimental results from the collective learning scenario showed that the proposed FL-based strategy dramatically reduced the number of communication by minimum 1600 times and even increased the success rate of navigation with the trained controller by 2.8 times compared to the baseline strategies that share a central server. The results suggest that our proposed strategy can efficiently train swarm robotic systems in the real-world environments with the limited robot-server communication, e.g. agri-robotics, underwater and damaged nuclear facilities.

System for multi-robotic exploration of underground environments CTU-CRAS-NORLAB in the DARPA Subterranean Challenge

Oct 12, 2021

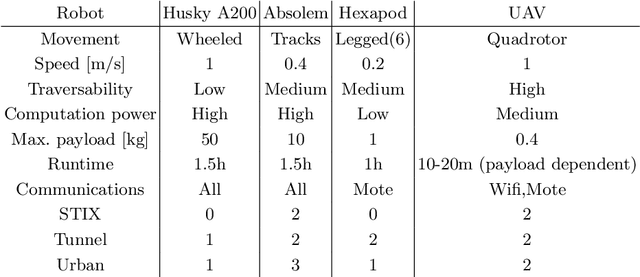

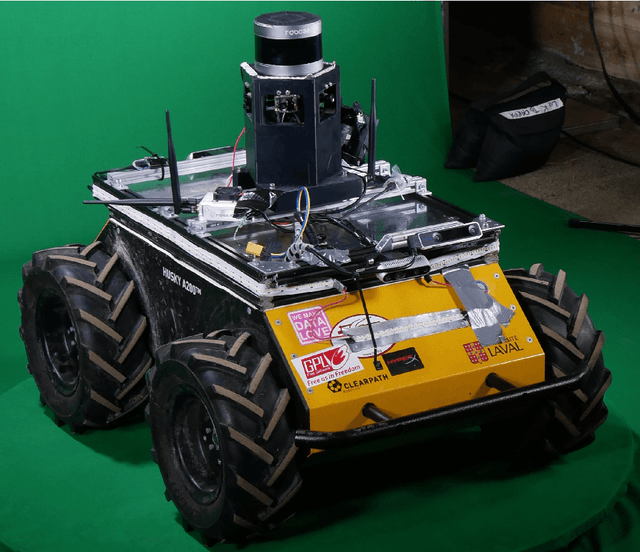

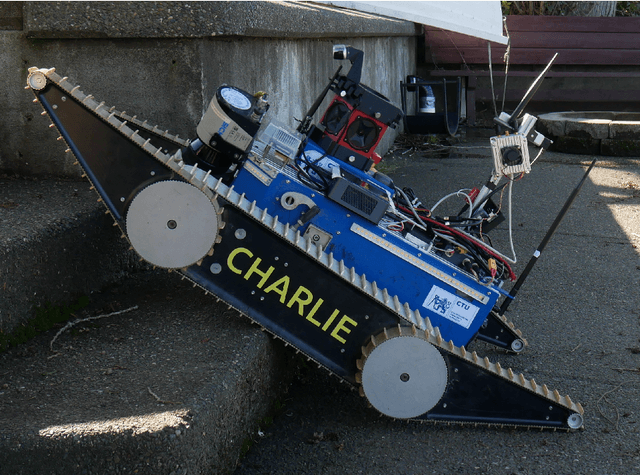

Abstract:We present a field report of CTU-CRAS-NORLAB team from the Subterranean Challenge (SubT) organised by the Defense Advanced Research Projects Agency (DARPA). The contest seeks to advance technologies that would improve the safety and efficiency of search-and-rescue operations in GPS-denied environments. During the contest rounds, teams of mobile robots have to find specific objects while operating in environments with limited radio communication, e.g. mining tunnels, underground stations or natural caverns. We present a heterogeneous exploration robotic system of the CTU-CRAS-NORLAB team, which achieved the third rank at the SubT Tunnel and Urban Circuit rounds and surpassed the performance of all other non-DARPA-funded teams. The field report describes the team's hardware, sensors, algorithms and strategies, and discusses the lessons learned by participating at the DARPA SubT contest.

Cooperative Pollution Source Localization and Cleanup with a Bio-inspired Swarm Robot Aggregation

Jul 22, 2019

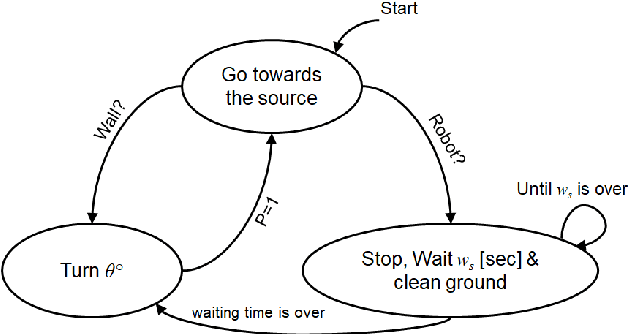

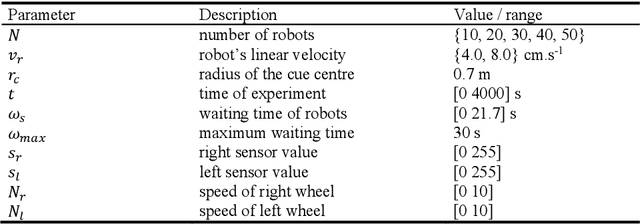

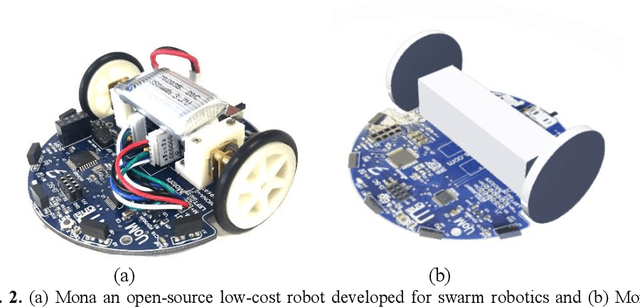

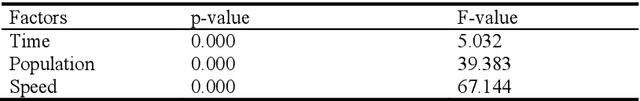

Abstract:Using robots for exploration of extreme and hazardous environments has the potential to significantly improve human safety. For example, robotic solutions can be deployed to find the source of a chemical leakage and clean the contaminated area. This paper demonstrates a proof-of-concept bio-inspired exploration method using a swarm robotic system, which is based on a combination of two bio-inspired behaviours: aggregation, and pheromone tracking. The main idea of the work presented is to follow pheromone trails to find the source of a chemical leakage and then carry out a decontamination task by aggregating at the critical zone. Using experiments conducted by a simulated model of a Mona robot, we evaluate the effects of population size and robot speed on the ability of the swarm in a decontamination task. The results indicate the feasibility of deploying robotic swarms in an exploration and cleaning task in an extreme environment.

Artificial Intelligence for Long-Term Robot Autonomy: A Survey

Jul 13, 2018

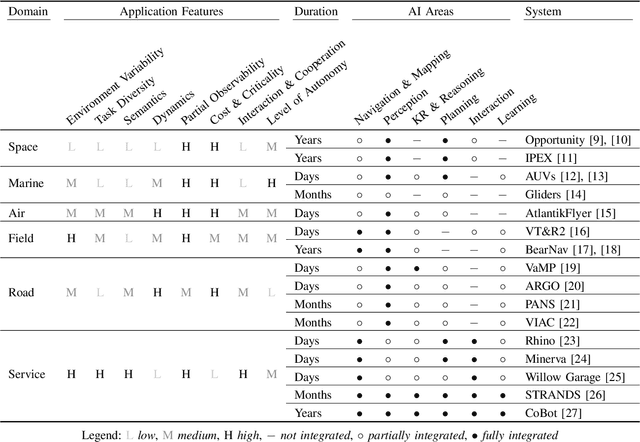

Abstract:Autonomous systems will play an essential role in many applications across diverse domains including space, marine, air, field, road, and service robotics. They will assist us in our daily routines and perform dangerous, dirty and dull tasks. However, enabling robotic systems to perform autonomously in complex, real-world scenarios over extended time periods (i.e. weeks, months, or years) poses many challenges. Some of these have been investigated by sub-disciplines of Artificial Intelligence (AI) including navigation & mapping, perception, knowledge representation & reasoning, planning, interaction, and learning. The different sub-disciplines have developed techniques that, when re-integrated within an autonomous system, can enable robots to operate effectively in complex, long-term scenarios. In this paper, we survey and discuss AI techniques as 'enablers' for long-term robot autonomy, current progress in integrating these techniques within long-running robotic systems, and the future challenges and opportunities for AI in long-term autonomy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge