Stefano Markidis

Making Room for AI: Multi-GPU Molecular Dynamics with Deep Potentials in GROMACS

Apr 08, 2026

Abstract:GROMACS is a de-facto standard for classical Molecular Dynamics (MD). The rise of AI-driven interatomic potentials that pursue near-quantum accuracy at MD throughput now poses a significant challenge: embedding neural-network inference into multi-GPU simulations while retaining high performance. In this work, we integrate the MLIP framework DeePMD-kit into GROMACS, enabling domain-decomposed, GPU-accelerated inference across multi-node systems. We extend the GROMACS NNPot interface with a DeePMD backend, and we introduce a domain decomposition layer decoupled from the main simulation. The inference is executed concurrently on all processes, with two MPI collectives used each step to broadcast coordinates and to aggregate and redistribute forces. We train an in-house DPA-1 model (1.6 M parameters) on a dataset of solvated protein fragments. We validate the implementation on a small protein system, then we benchmark the GROMACS-DeePMD integration with a 15,668-atom protein on NVIDIA A100 and AMD MI250x GPUs up to 32 devices. Strong-scaling efficiency reaches 66% at 16 devices and 40% at 32; weak-scaling efficiency is 80% up to 16 devices and reaches 48% (MI250x) and 40% (A100) at 32 devices. Profiling with the ROCm System profiler shows that >90% of the wall time is spent in DeePMD inference, while MPI collectives contribute <10%, primarily because they act as a global synchronization point. The principal bottlenecks are the irreducible ghost-atom cost set by the cutoff radius, confirmed by a simple throughput model, and load imbalance across ranks. These results demonstrate that production MD with near ab initio fidelity is feasible at scale in GROMACS.
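The ghost-atom argument in this abstract can be illustrated with a rough sketch. The abstract does not give the authors' throughput model, so the cubic-subdomain geometry below is an assumption, as are the function names, the atom density of 0.1 atoms per cubic angstrom, and the 6 angstrom cutoff. Only the 15,668-atom system size comes from the abstract.

```python
def strong_scaling_efficiency(t_ref, n_ref, t_n, n):
    """Strong-scaling efficiency for a fixed problem size.

    The ideal time on n devices is t_ref * n_ref / n; efficiency is the
    ratio of that ideal time to the measured time t_n.
    """
    return (t_ref * n_ref) / (t_n * n)


def ghost_atoms_per_rank(n_atoms, n_ranks, density, cutoff):
    """Hypothetical geometry: each rank owns a cubic subdomain and must also
    evaluate every atom inside a shell of thickness `cutoff` around it."""
    local = n_atoms / n_ranks
    side = (local / density) ** (1.0 / 3.0)        # subdomain edge length
    total = density * (side + 2.0 * cutoff) ** 3   # local + ghost atoms
    return total - local


def local_work_fraction(n_atoms, n_ranks, density, cutoff):
    """Fraction of per-rank inference work spent on locally owned atoms."""
    local = n_atoms / n_ranks
    ghost = ghost_atoms_per_rank(n_atoms, n_ranks, density, cutoff)
    return local / (local + ghost)


if __name__ == "__main__":
    # 15,668 atoms is from the abstract; density and cutoff are assumed values.
    for ranks in (1, 2, 4, 8, 16, 32):
        frac = local_work_fraction(15668, ranks, density=0.1, cutoff=6.0)
        print(f"{ranks:2d} ranks: local-work fraction {frac:.3f}")
```

Under these assumptions the ghost shell thickness stays fixed while the subdomain shrinks, so the share of useful work per rank falls as ranks are added, which matches the qualitative trend the abstract reports.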

Unsupervised Physics-Informed Operator Learning through Multi-Stage Curriculum Training

Feb 02, 2026

Abstract:Solving partial differential equations remains a central challenge in scientific machine learning. Neural operators offer a promising route by learning mappings between function spaces and enabling resolution-independent inference, yet they typically require supervised data. Physics-informed neural networks address this limitation through unsupervised training with physical constraints but often suffer from unstable convergence and limited generalization capability. To overcome these issues, we introduce a multi-stage physics-informed training strategy that achieves convergence by progressively enforcing boundary conditions in the loss landscape and subsequently incorporating interior residuals. At each stage the optimizer is re-initialized, acting as a continuation mechanism that restores stability and prevents gradient stagnation. We further propose the Physics-Informed Spline Fourier Neural Operator (PhIS-FNO), combining Fourier layers with Hermite spline kernels for smooth residual evaluation. Across canonical benchmarks, PhIS-FNO attains a level of accuracy comparable to that of supervised learning, using labeled information only along a narrow boundary region, establishing staged, spline-based optimization as a robust paradigm for physics-informed operator learning.

Discovering Governing Equations of Geomagnetic Storm Dynamics with Symbolic Regression

Apr 25, 2025

Abstract:Geomagnetic storms are large-scale disturbances of the Earth's magnetosphere driven by solar wind interactions, posing significant risks to space-based and ground-based infrastructure. The Disturbance Storm Time (Dst) index quantifies geomagnetic storm intensity by measuring global magnetic field variations. This study applies symbolic regression to derive data-driven equations describing the temporal evolution of the Dst index. We use historical data from the NASA OMNIweb database, including solar wind density, bulk velocity, convective electric field, dynamic pressure, and magnetic pressure. The PySR framework, an evolutionary algorithm-based symbolic regression library, is used to identify mathematical expressions linking dDst/dt to key solar wind parameters. The resulting models include a hierarchy of complexity levels and enable a comparison with well-established empirical models such as the Burton-McPherron-Russell and O'Brien-McPherron models. The best-performing symbolic regression models demonstrate superior accuracy in most cases, particularly during moderate geomagnetic storms, while maintaining physical interpretability. Performance evaluation on historical storm events includes the 2003 Halloween Storm, the 2015 St. Patrick's Day Storm, and a 2017 moderate storm. The results provide interpretable, closed-form expressions that capture nonlinear dependencies and thresholding effects in Dst evolution.
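For orientation, the Burton-McPherron-Russell model mentioned in this abstract is usually written in the following general form. The notation is an assumption chosen here for illustration and is not taken from the paper: $Dst^{*}$ is the pressure-corrected index, $Q(t)$ an injection term driven by the convective electric field, $\tau$ a decay time, $P_{\mathrm{dyn}}$ the solar wind dynamic pressure, and $b$, $c$ empirical constants.

```latex
\frac{d\,Dst^{*}}{dt} = Q(t) - \frac{Dst^{*}}{\tau},
\qquad
Dst^{*} = Dst - b\sqrt{P_{\mathrm{dyn}}} + c
```

The O'Brien-McPherron model keeps this structure but lets $\tau$ depend on the convective electric field rather than treating it as a constant.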

Adaptive PCA-Based Outlier Detection for Multi-Feature Time Series in Space Missions

Apr 22, 2025

Abstract:Analyzing multi-featured time series data is critical for space missions, making efficient event detection, potentially onboard, essential for automatic analysis. However, limited onboard computational resources and data downlink constraints necessitate robust methods for identifying regions of interest in real time. This work presents an adaptive outlier detection algorithm based on the reconstruction error of Principal Component Analysis (PCA) for feature reduction, designed explicitly for space mission applications. The algorithm adapts dynamically to evolving data distributions by using Incremental PCA, enabling deployment without a predefined model for all possible conditions. A pre-scaling process normalizes each feature's magnitude while preserving relative variance within feature types. We demonstrate the algorithm's effectiveness in detecting space plasma events, such as distinct space environments, dayside and nightside transient phenomena, and transition layers through NASA's MMS mission observations. Additionally, we apply the method to NASA's THEMIS data, successfully identifying a dayside transient using onboard-available measurements.
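The idea of flagging outliers by PCA reconstruction error can be sketched in a few lines. This is a simplified illustration rather than the authors' algorithm: it fits a single principal axis in one batch with power iteration instead of using Incremental PCA, it omits the pre-scaling step, and the synthetic data, the function names, and the mean-plus-three-standard-deviations threshold are all assumptions.

```python
import math
import random
import statistics


def feature_means(rows):
    """Per-feature mean of a list of equally sized feature vectors."""
    d = len(rows[0])
    return [sum(r[j] for r in rows) / len(rows) for j in range(d)]


def leading_component(rows, means, iters=200):
    """First principal axis via power iteration on the sample covariance."""
    d = len(means)
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    cov = [[sum(c[i] * c[j] for c in centered) / (len(rows) - 1)
            for j in range(d)] for i in range(d)]
    v = [1.0] * d
    for _ in range(iters):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return v


def reconstruction_error(row, means, axis):
    """Distance between a sample and its projection onto the principal axis."""
    c = [x - m for x, m in zip(row, means)]
    score = sum(ci * ai for ci, ai in zip(c, axis))
    residual = [ci - score * ai for ci, ai in zip(c, axis)]
    return math.sqrt(sum(r * r for r in residual))


# Synthetic two-feature data lying close to the line y = 2x (assumed example).
rng = random.Random(0)
rows = [[i / 50.0, 2.0 * i / 50.0 + rng.gauss(0.0, 0.05)] for i in range(100)]

means = feature_means(rows)
axis = leading_component(rows, means)
errors = [reconstruction_error(r, means, axis) for r in rows]
threshold = statistics.mean(errors) + 3.0 * statistics.stdev(errors)


def is_outlier(row):
    """Flag samples whose reconstruction error exceeds the threshold."""
    return reconstruction_error(row, means, axis) > threshold
```

A point far from the fitted axis, such as `[1.0, -2.0]`, gets a large reconstruction error and is flagged, while a point on the trend, such as `[0.5, 1.0]`, is not. An adaptive variant would update `means` and `axis` as new batches arrive.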

Optimizing FDTD Solvers for Electromagnetics: A Compiler-Guided Approach with High-Level Tensor Abstractions

Apr 12, 2025

Abstract:The Finite Difference Time Domain (FDTD) method is a widely used numerical technique for solving Maxwell's equations, particularly in computational electromagnetics and photonics. It enables accurate modeling of wave propagation in complex media and structures but comes with significant computational challenges. Traditional FDTD implementations rely on handwritten, platform-specific code that optimizes certain kernels while underperforming in others. The lack of portability increases development overhead and creates performance bottlenecks, limiting scalability across modern hardware architectures. To address these challenges, we introduce an end-to-end domain-specific compiler based on the MLIR/LLVM infrastructure for FDTD simulations. Our approach generates efficient and portable code optimized for diverse hardware platforms. We implement the three-dimensional FDTD kernel as operations on a 3D tensor abstraction with explicit computational semantics. High-level optimizations such as loop tiling, fusion, and vectorization are automatically applied by the compiler. We evaluate our customized code generation pipeline on Intel, AMD, and ARM platforms, achieving up to $10\times$ speedup over a baseline Python implementation using NumPy.
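To make the stencil structure of FDTD concrete, here is a minimal one-dimensional sketch in plain Python. It is not the paper's three-dimensional tensor kernel or its NumPy baseline. The normalized units, the grid size, the Courant number of 0.5, and the Gaussian source are assumptions chosen for illustration.

```python
import math


def fdtd_1d(n_cells=200, n_steps=100, courant=0.5):
    """Leapfrog update of Ez and Hy on a staggered 1D grid, normalized units.

    hy[i] sits between ez[i] and ez[i + 1]. The two end values of ez are
    never updated, which acts as a perfectly conducting boundary.
    """
    ez = [0.0] * n_cells
    hy = [0.0] * (n_cells - 1)
    source = n_cells // 2
    for step in range(n_steps):
        # Magnetic field update from the spatial difference of Ez.
        for i in range(n_cells - 1):
            hy[i] += courant * (ez[i + 1] - ez[i])
        # Electric field update from the spatial difference of Hy.
        for i in range(1, n_cells - 1):
            ez[i] += courant * (hy[i] - hy[i - 1])
        # Additive Gaussian pulse injected at the grid center.
        ez[source] += math.exp(-((step - 30) / 10.0) ** 2)
    return ez, hy


if __name__ == "__main__":
    ez, hy = fdtd_1d()
    print(max(abs(v) for v in ez))
```

The two inner loops are the kind of regular, nearest-neighbor updates that tiling, fusion, and vectorization target in the three-dimensional case.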

Discovering Partially Known Ordinary Differential Equations: a Case Study on the Chemical Kinetics of Cellulose Degradation

Apr 04, 2025

Abstract:The degree of polymerization (DP) is one of the indicators used to estimate the aging of polymer-based insulation systems, such as cellulose insulation in power components. The main degradation mechanisms in polymers are hydrolysis, pyrolysis, and oxidation. Combined, these mechanisms reduce the DP. However, data for these types of problems are usually scarce. This study analyzes insulation aging using cellulose degradation data from power transformers. The aging problem for the cellulose immersed in mineral oil inside power transformers is modeled with ordinary differential equations (ODEs). We recover the governing equations of the degradation system using Physics-Informed Neural Networks (PINNs) and symbolic regression. We apply PINNs to discover the Arrhenius equation's unknown parameters in the Ekenstam ODE describing cellulose contamination content and the material aging process related to temperature for synthetic data and real DP values. A modification of the Ekenstam ODE is given by Emsley's system of ODEs, where the rate constant expressed by the Arrhenius equation decreases in time with the new formulation. We use PINNs and symbolic regression to recover the functional form of one of the ODEs of the system and to identify an unknown parameter.
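As a small worked example of the model class discussed here, the Ekenstam relation is commonly written as 1/DP(t) - 1/DP(0) = k t, with the rate constant k given by the Arrhenius equation k = A exp(-Ea / (R T)). The sketch below evaluates that closed form. The function names and all numerical values (pre-exponential factor, activation energy, temperature, initial DP) are illustrative placeholders, not parameters from the paper.

```python
import math

R_GAS = 8.314  # universal gas constant, J/(mol K)


def arrhenius_rate(a_factor, activation_energy, temperature_k):
    """Arrhenius rate constant k = A * exp(-Ea / (R * T))."""
    return a_factor * math.exp(-activation_energy / (R_GAS * temperature_k))


def ekenstam_dp(dp0, k, t):
    """Degree of polymerization from 1/DP(t) - 1/DP0 = k * t."""
    return 1.0 / (1.0 / dp0 + k * t)


if __name__ == "__main__":
    # Placeholder values chosen only to produce a readable curve.
    k = arrhenius_rate(a_factor=1.0e8, activation_energy=111.0e3,
                       temperature_k=371.15)
    for hours in (0, 10_000, 50_000, 100_000):
        print(hours, round(ekenstam_dp(1000.0, k, hours), 1))
```

In a parameter-discovery setting such as the one described in the abstract, `a_factor` and `activation_energy` would be the unknowns fitted to measured DP values.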

AI in Space for Scientific Missions: Strategies for Minimizing Neural-Network Model Upload

Jun 20, 2024

Abstract:Artificial Intelligence (AI) has the potential to revolutionize space exploration by delegating several spacecraft decisions to an onboard AI instead of relying on ground control and predefined procedures. It is likely that there will be an AI/ML Processing Unit onboard the spacecraft running an inference engine. The neural network will have pre-installed parameters that can be updated onboard by uploading, by telecommands, parameters obtained by training on the ground. However, satellite uplinks have limited bandwidth and transmissions can be costly. Furthermore, a mission operating with a suboptimal neural network will miss out on valuable scientific data. Smaller networks can thereby decrease the uplink cost, while increasing the value of the scientific data that is downloaded. In this work, we evaluate and discuss the use of reduced-precision and bare-minimum neural networks to reduce the time for upload. As an example of an AI use case, we focus on NASA's Magnetospheric MultiScale (MMS) mission. We show how an AI onboard could be used in the Earth's magnetosphere to classify data to selectively downlink higher value data or to recognize a region-of-interest to trigger a burst-mode, collecting data at a high rate. Using a simple filtering scheme and algorithm, we show how the start and end of a region-of-interest can be detected in a stream of classifications. To provide the classifications, we use an established Convolutional Neural Network (CNN) trained to an accuracy >94%. We also show how the network can be reduced to a single linear layer and trained to the same accuracy as the established CNN, thereby reducing the overall size of the model by up to 98.9%. We further show how each network can be reduced by up to 75% of its original size, by using lower-precision formats to represent the network parameters, with a change in accuracy of less than 0.6 percentage points.
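The size arithmetic behind reduced-precision uploads can be sketched with the Python standard library. The abstract does not say which formats the authors used, so the choice of 16-bit floats and a symmetric 8-bit quantization below is an assumption, as are the function names and the random stand-in parameters.

```python
import random
import struct


def pack_float32(params):
    """Serialize parameters as little-endian 32-bit floats (4 bytes each)."""
    return struct.pack(f"<{len(params)}f", *params)


def pack_float16(params):
    """Serialize parameters as little-endian 16-bit floats (2 bytes each)."""
    return struct.pack(f"<{len(params)}e", *params)


def quantize_int8(params):
    """Symmetric linear quantization: one scale plus one signed byte per value."""
    scale = (max(abs(p) for p in params) / 127.0) or 1.0
    q = [max(-127, min(127, round(p / scale))) for p in params]
    return scale, struct.pack(f"<{len(q)}b", *q)


def dequantize_int8(scale, payload):
    """Recover approximate parameter values from the 8-bit payload."""
    return [scale * q for q in struct.unpack(f"<{len(payload)}b", payload)]


# Random stand-in for trained network parameters (assumed example data).
rng = random.Random(0)
params = [rng.uniform(-1.0, 1.0) for _ in range(1000)]

full = pack_float32(params)
half = pack_float16(params)
scale, int8_payload = quantize_int8(params)

print(len(full), len(half), len(int8_payload))  # payload sizes in bytes
print(1.0 - len(int8_payload) / len(full))      # fractional size reduction
```

Going from 32 bits to 8 bits per parameter gives a 75% smaller payload, ignoring the single scale value. Whether accuracy survives such a reduction depends on the network and has to be measured, as the abstract reports for its models.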

Opportunities for machine learning in scientific discovery

May 07, 2024

Abstract:Technological advancements have substantially increased computational power and data availability, enabling the application of powerful machine-learning (ML) techniques across various fields. However, our ability to leverage ML methods for scientific discovery, i.e., to obtain fundamental and formalized knowledge about natural processes, is still in its infancy. In this review, we explore how the scientific community can increasingly leverage ML techniques to achieve scientific discoveries. We observe that the applicability and opportunity of ML depend strongly on the nature of the problem domain, and whether we have full (e.g., turbulence), partial (e.g., computational biochemistry), or no (e.g., neuroscience) a-priori knowledge about the governing equations and physical properties of the system. Although challenges remain, principled use of ML is opening up new avenues for fundamental scientific discoveries. Throughout these diverse fields, there is a theme that ML is enabling researchers to embrace complexity in observational data that was previously intractable to classic analysis and numerical investigations.

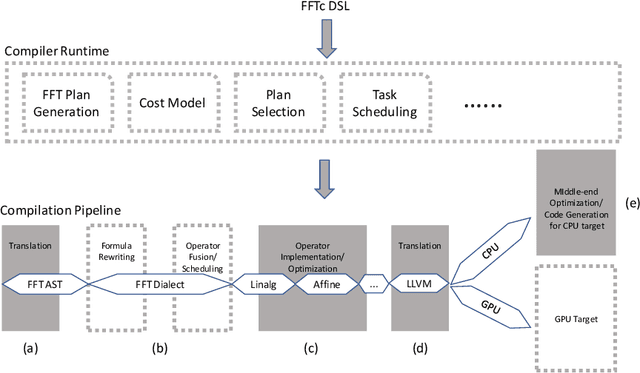

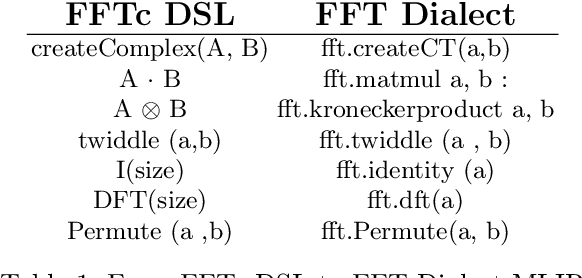

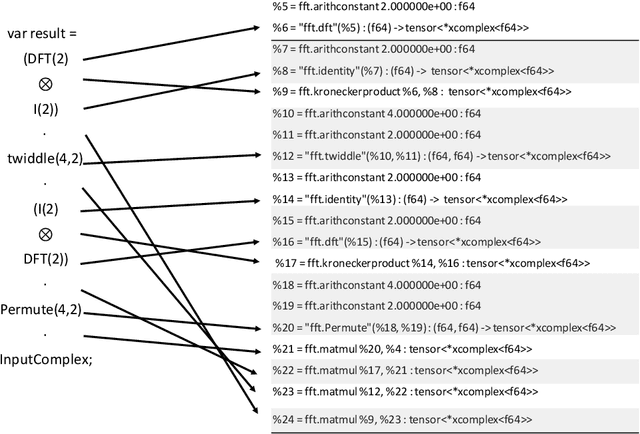

FFTc: An MLIR Dialect for Developing HPC Fast Fourier Transform Libraries

Jul 14, 2022

Abstract:Discrete Fourier Transform (DFT) libraries are among the most critical software components for scientific computing. Inspired by FFTW, a widely used library for DFT HPC calculations, we apply compiler technologies to the development of HPC Fourier transform libraries. In this work, we introduce FFTc, a domain-specific language, based on Multi-Level Intermediate Representation (MLIR), for expressing Fourier Transform algorithms. We present the initial design, implementation, and preliminary results of FFTc.

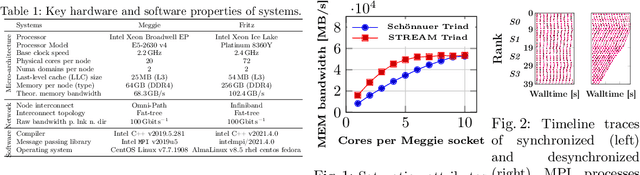

Exploring Techniques for the Analysis of Spontaneous Asynchronicity in MPI-Parallel Applications

May 27, 2022

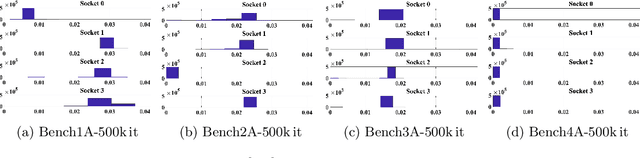

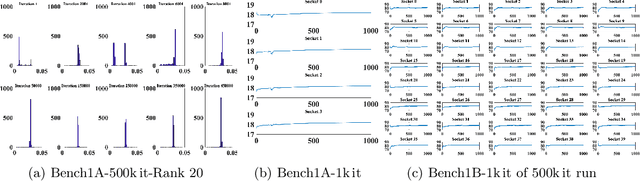

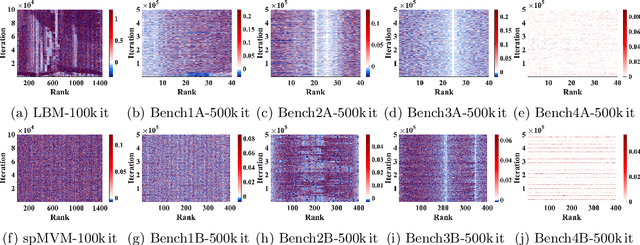

Abstract:This paper studies the utility of data analytics and machine learning techniques for identifying, classifying, and characterizing the dynamics of large-scale parallel (MPI) programs. To this end, we run microbenchmarks and realistic proxy applications with a regular compute-communicate structure on two different supercomputing platforms and choose the per-process performance and MPI time per time step as relevant observables. Using principal component analysis, clustering techniques, correlation functions, and a new "phase space plot," we show how desynchronization patterns (or lack thereof) can be readily identified from a data set that is much smaller than a full MPI trace. Our methods also lead the way towards a more general classification of parallel program dynamics.
