Steven W. D. Chien

Higgs Boson Classification: Brain-inspired BCPNN Learning with StreamBrain

Aug 17, 2021

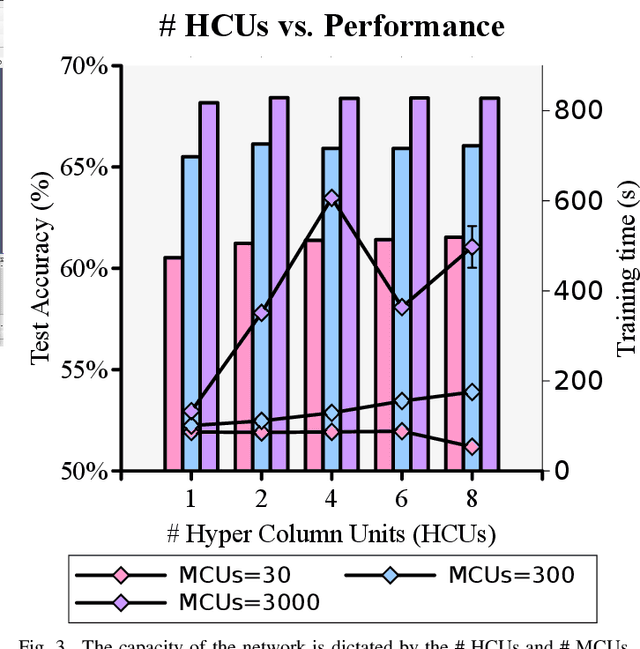

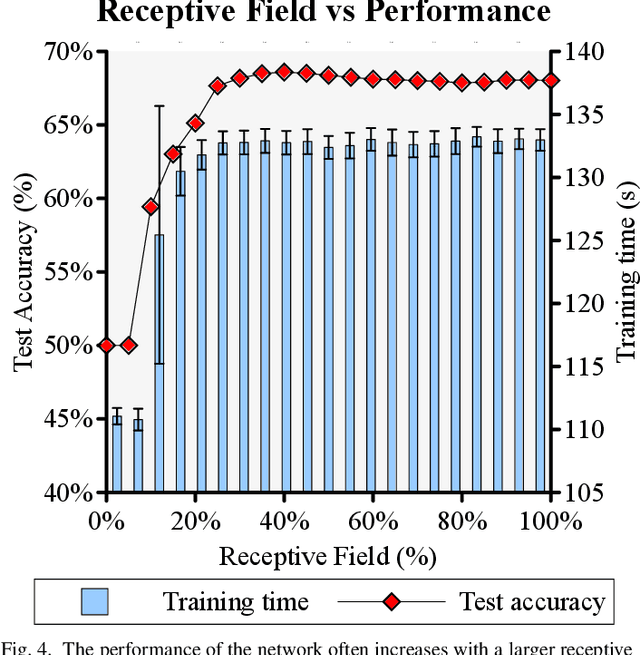

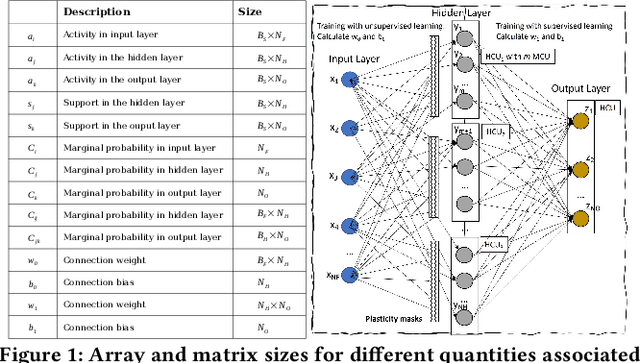

Abstract: One of the most promising approaches for data analysis and exploration of large data sets is Machine Learning techniques that are inspired by brain models. Such methods use alternative learning rules that are potentially more efficient than established learning rules. In this work, we focus on the potential of brain-inspired ML for exploiting High-Performance Computing (HPC) resources to solve ML problems: we discuss the BCPNN and an HPC implementation called StreamBrain, its computational cost, and its suitability for HPC systems. As an example, we use StreamBrain to analyze the Higgs Boson dataset from High Energy Physics and discriminate between background and signal classes in collisions at high-energy particle colliders. Overall, we reach up to 69.15% accuracy and 76.4% Area Under the Curve (AUC) performance.
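As an illustration of the two metrics quoted in the abstract, the following minimal sketch computes accuracy and AUC for a binary signal/background classifier. It is not taken from StreamBrain; the function names, the 0.5 decision threshold, and the toy data are assumptions made for this example only.

```python
def accuracy(labels, scores, threshold=0.5):
    """Fraction of examples whose thresholded score matches the label.

    The 0.5 threshold is an assumption for this sketch.
    """
    predictions = [1 if s >= threshold else 0 for s in scores]
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison.

    AUC equals the probability that a randomly chosen signal example (label 1)
    receives a higher score than a randomly chosen background example (label 0),
    with ties counted as one half.
    """
    signal = [s for s, y in zip(scores, labels) if y == 1]
    background = [s for s, y in zip(scores, labels) if y == 0]
    if not signal or not background:
        raise ValueError("both classes must be present")
    wins = 0.0
    for s in signal:
        for b in background:
            if s > b:
                wins += 1.0
            elif s == b:
                wins += 0.5
    return wins / (len(signal) * len(background))


# Toy data (hypothetical, not from the Higgs Boson dataset).
toy_labels = [0, 0, 1, 1]
toy_scores = [0.1, 0.4, 0.35, 0.8]
print(accuracy(toy_labels, toy_scores), roc_auc(toy_labels, toy_scores))
```

The pairwise form is quadratic in the number of examples, so it suits small illustrations only; a rank-based implementation would be preferable for a dataset of realistic size.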

StreamBrain: An HPC Framework for Brain-like Neural Networks on CPUs, GPUs and FPGAs

Jun 09, 2021

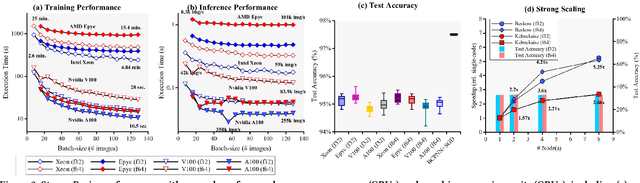

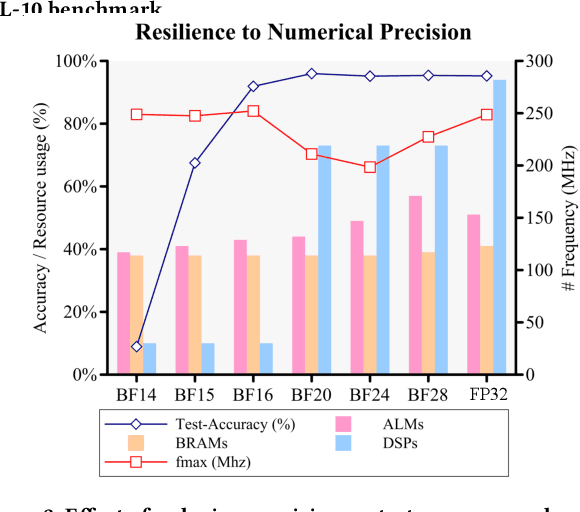

Abstract: The modern deep learning method based on backpropagation has surged in popularity and has been used in multiple domains and application areas. At the same time, there are other -- less-known -- machine learning algorithms with a mature and solid theoretical foundation whose performance remains unexplored. One such example is the brain-like Bayesian Confidence Propagation Neural Network (BCPNN). In this paper, we introduce StreamBrain -- a framework that allows neural networks based on BCPNN to be practically deployed in High-Performance Computing systems. StreamBrain is a domain-specific language (DSL), similar in concept to existing machine learning (ML) frameworks, and supports backends for CPUs, GPUs, and even FPGAs. We empirically demonstrate that StreamBrain can train on the well-known ML benchmark dataset MNIST within seconds, and we are the first to demonstrate BCPNN on STL-10-size networks. We also show how StreamBrain can be used to train with custom floating-point formats and illustrate the impact of using different bfloat variations on BCPNN using FPGAs.
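To give a feel for what a reduced-precision "bfloat variation" does to a value, the sketch below emulates a float32 number with a shortened mantissa by zeroing its low-order mantissa bits. This is only an illustration: the function name is hypothetical, truncation (rather than round-to-nearest) is an assumption, and nothing here reflects how StreamBrain or its FPGA backend actually implements custom formats.

```python
import struct


def truncate_mantissa(value, mantissa_bits):
    """Emulate a reduced-mantissa float by truncating a float32.

    float32 has 1 sign bit, 8 exponent bits and 23 mantissa bits.
    Keeping the top `mantissa_bits` mantissa bits and zeroing the rest
    gives a bfloat-like value; mantissa_bits=7 matches the bfloat16 layout.
    Truncation toward zero is an assumption of this sketch.
    """
    if not 0 <= mantissa_bits <= 23:
        raise ValueError("mantissa_bits must be between 0 and 23")
    # Reinterpret the float32 bit pattern as an unsigned 32-bit integer.
    (bits,) = struct.unpack("<I", struct.pack("<f", value))
    dropped = 23 - mantissa_bits
    mask = (0xFFFFFFFF >> dropped) << dropped
    # Reinterpret the masked bit pattern as a float32 again.
    (result,) = struct.unpack("<f", struct.pack("<I", bits & mask))
    return result


# The same input under progressively narrower mantissas.
for bits in (23, 10, 7, 4):
    print(bits, truncate_mantissa(3.14159, bits))
```

Sweeping `mantissa_bits` in this way shows how the representable value drifts from the float32 original as precision is removed, which is the kind of effect a study of different bfloat variations would need to quantify.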

Automated classification of plasma regions using 3D particle energy distribution

Aug 27, 2019

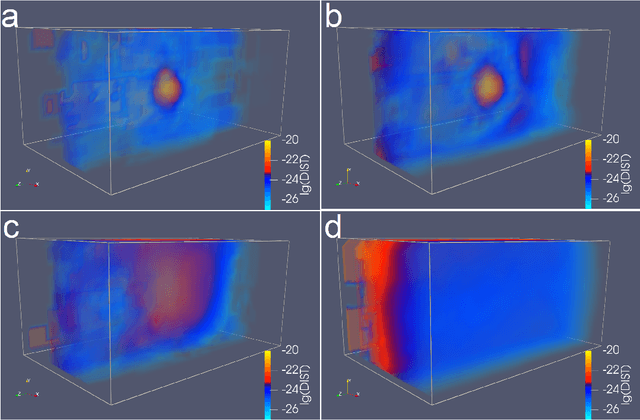

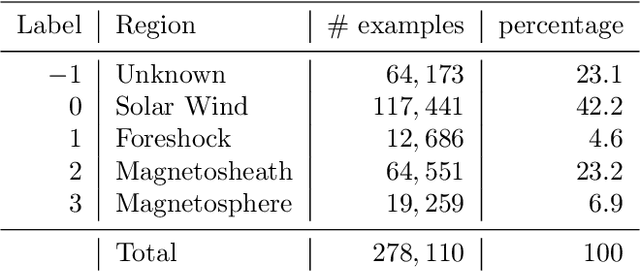

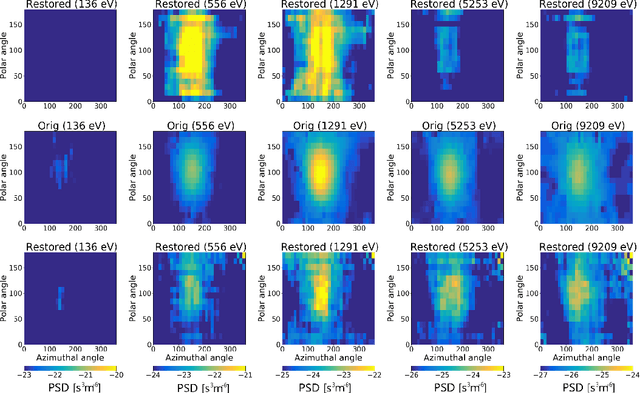

Abstract: Even though automatic classification and interpretation would be highly desired features for the Magnetospheric Multiscale mission (MMS), the gold rush era in machine learning has yet to reach the science done with observations collected by MMS. We investigate the properties of the ion sky maps produced by the Dual Ion Spectrometers (DIS) from the Fast Plasma Investigation (FPI). Running Principal Component Analysis (PCA) on a mixed subset of the data suggests that more than 500 components are needed to cover 80% of the variance. Hence, simple machine learning techniques might not be able to classify plasma regions. Using a three-dimensional (3D) convolutional autoencoder (3D-CAE) reduces the data dimensionality by a factor of 128 while still maintaining a decent-quality energy distribution. However, k-means clustering computed over the compressed data is not capable of separating measurements according to the physical properties of the plasma. A three-dimensional convolutional neural network (3D-CNN), trained on a rather small number of human-labelled training examples, is able to predict plasma regions with 99% accuracy. The low-probability predictions of the 3D-CNN reveal the mixed-state regions, such as the magnetopause or bow shock, which are of key interest to researchers of the MMS mission. The 3D-CNN and data processing software could easily be deployed on ground-based computers and provide classification for the whole MMS database. Data processing through the trained 3D-CNN is fast and efficient, opening up the possibility of deployment in data centers or in situ operation onboard the spacecraft.
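The PCA statement in the abstract (how many components are needed to cover 80% of the variance) corresponds to a cumulative sum over the per-component variances. A minimal sketch of that step follows; the function name and the toy variances are assumptions for illustration, and the per-component variances are taken as already computed by some PCA routine.

```python
def components_for_variance(component_variances, target=0.8):
    """Smallest number of principal components whose cumulative
    variance reaches `target` as a fraction of the total variance.

    `component_variances` are per-component variances (PCA eigenvalues);
    they are sorted in descending order here in case the caller did not.
    """
    if not 0.0 < target <= 1.0:
        raise ValueError("target must be in (0, 1]")
    variances = sorted(component_variances, reverse=True)
    total = sum(variances)
    if total <= 0:
        raise ValueError("total variance must be positive")
    running = 0.0
    for count, variance in enumerate(variances, start=1):
        running += variance
        if running / total >= target:
            return count
    # Only reachable through floating-point round-off when target is 1.0.
    return len(variances)


# Toy variances (hypothetical): cumulative fractions are 0.4, 0.7, 0.9, 1.0.
print(components_for_variance([4.0, 3.0, 2.0, 1.0], target=0.8))
```

A slowly decaying variance spectrum pushes this count up, which is the situation the abstract describes when more than 500 components are required.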
