Simon Doclo

Signal Processing Group, University of Oldenburg, Oldenburg, Germany, Cluster of Excellence Hearing4all

In-the-Loop Training of Deep Feedback Cancellation for Hearing Aids

Jun 02, 2026

Abstract: Acoustic feedback limits the maximum gain in hearing aids. In addition to several approaches based on adaptive filtering, a deep-neural-network-based feedback cancellation (DFC) approach has recently been proposed, which is trained in an open-loop framework. Since open-loop-trained DFC (DFC-OL) can become unstable during inference at high gains, in this paper we propose an in-the-loop-trained DFC (DFC-IL) that integrates the DFC directly into the optimisation loop. This allows the model to be exposed to unstable conditions during training. A two-stage training strategy, involving pre-training on stable systems and fine-tuning on a wider gain range, enables DFC-IL to learn robust howling reduction. Experimental results on measured feedback paths demonstrate that in scenarios with small gains, the proposed DFC-IL performs similarly to DFC-OL, and both exceed the performance of adaptive filters. In scenarios with high amplification gains, DFC-IL clearly outperforms DFC-OL by maintaining system stability.
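
The adaptive-filter baselines mentioned above commonly identify the feedback path with a normalized LMS (NLMS) update. The sketch below is a minimal open-loop illustration under stated assumptions: a known white loudspeaker signal, a short hypothetical FIR feedback path, and no incoming speech. It deliberately ignores the closed-loop correlation problem and is not the DFC method.

```python
import numpy as np

def nlms_feedback_estimate(u, y, num_taps, mu=0.5, eps=1e-8):
    """Estimate an FIR feedback path from loudspeaker signal u to microphone
    signal y with the NLMS update (open-loop sketch)."""
    w = np.zeros(num_taps)        # current feedback path estimate
    buf = np.zeros(num_taps)      # most recent loudspeaker samples, newest first
    e = np.zeros(len(u))          # feedback-compensated microphone signal
    for n in range(len(u)):
        buf = np.roll(buf, 1)
        buf[0] = u[n]
        e[n] = y[n] - w @ buf                        # subtract estimated feedback
        w += mu * e[n] * buf / (buf @ buf + eps)     # normalized gradient step
    return w, e

rng = np.random.default_rng(0)
true_path = np.array([0.0, 0.3, -0.2, 0.1])   # hypothetical feedback path
u = rng.standard_normal(5000)                 # white loudspeaker signal (assumption)
y = np.convolve(u, true_path)[: len(u)]       # noise-free microphone signal
w_hat, _ = nlms_feedback_estimate(u, y, num_taps=4)
print(np.round(w_hat, 3))
```

In a real hearing aid the loudspeaker signal is a delayed, amplified copy of the microphone signal. That correlation biases this estimator, which is one reason closed-loop behaviour matters at high gains.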

Flexible Multi-Channel Target Speaker Extraction Using Geometry-Conditioned Spatially Selective Non-linear Filters

May 18, 2026

Abstract: Recently, a spatially selective non-linear filter (SSF) has been proposed for target speaker extraction, using the target direction-of-arrival (DOA) as a spatial cue. Since learned intermediate features are tied to the microphone geometry, the performance of the SSF degrades significantly when evaluated on mismatched array geometries. In this paper, we propose a geometry-conditioned SSF (GC-SSF), which incorporates a geometry-conditioning branch based on FiLM layers. Furthermore, we propose a feature that jointly encodes the DOA and the microphone positions (DOA-MPE). The conditioning branch modulates the intermediate feature maps of the SSF using the DOA-MPE feature to capture the spatial relationship between the microphone positions and the target speaker. Experimental results across circular, uniform linear, and random microphone arrays show that the proposed GC-SSF generalizes better to mismatched geometries while maintaining high spatial selectivity, demonstrating its ability to effectively adapt the filtering process to different array geometries.
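
A FiLM layer scales and shifts each feature channel with parameters predicted from a conditioning vector: out = gamma(c) * x + beta(c). The NumPy sketch below shows only this generic mechanism. It assumes linear predictors for gamma and beta and uses a random vector as a hypothetical stand-in for the DOA-MPE feature, whose exact construction is not given here.

```python
import numpy as np

def film(features, cond, W_gamma, b_gamma, W_beta, b_beta):
    """Feature-wise linear modulation: scale and shift each channel of
    `features` (channels x frames) using parameters predicted from `cond`."""
    gamma = W_gamma @ cond + b_gamma      # per-channel scale, shape (channels,)
    beta = W_beta @ cond + b_beta         # per-channel shift, shape (channels,)
    return gamma[:, None] * features + beta[:, None]

rng = np.random.default_rng(1)
C, T, D = 8, 100, 6                       # channels, frames, conditioning size (hypothetical)
features = rng.standard_normal((C, T))    # intermediate feature map of the filter
cond = rng.standard_normal(D)             # stand-in for a DOA/geometry encoding
out = film(features, cond,
           rng.standard_normal((C, D)), np.ones(C),
           rng.standard_normal((C, D)), np.zeros(C))
print(out.shape)  # (8, 100)
```

Initialising the scale bias to one and the shift bias to zero makes the layer close to an identity mapping when the conditioning weights are small. This is a common, but here assumed, design choice.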

Distributed Multichannel Wiener Filtering for Wireless Acoustic Sensor Networks

Mar 10, 2026

Abstract: In a wireless acoustic sensor network (WASN), devices (i.e., nodes) can collaborate through distributed algorithms to collectively perform audio signal processing tasks. This paper focuses on the distributed estimation of node-specific desired speech signals using network-wide Wiener filtering. The objective is to match the performance of a centralized system that would have access to all microphone signals, while reducing the communication bandwidth usage of the algorithm. Existing solutions, such as the distributed adaptive node-specific signal estimation (DANSE) algorithm, converge towards the multichannel Wiener filter (MWF) which solves a centralized linear minimum mean square error (LMMSE) signal estimation problem. However, they do so iteratively, which can be slow and impractical. Many solutions also assume that all nodes observe the same set of sources of interest, which is often not the case in practice. To overcome these limitations, we propose the distributed multichannel Wiener filter (dMWF) for fully connected WASNs. The dMWF is non-iterative and optimal even when nodes observe different sets of sources. In this algorithm, nodes exchange neighbor-pair-specific, low-dimensional (fused) signals estimating the contribution of sources observed by both nodes in the pair. We formally prove the optimality of dMWF and demonstrate its performance in simulated speech enhancement experiments. The proposed algorithm is shown to outperform DANSE in terms of objective metrics after short operation times, highlighting the benefit of its iterationless design.
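
For reference, the centralized MWF that DANSE converges to and that dMWF aims to match can be written per frequency bin as w = R_yy^{-1} R_xx e_ref. Here R_xx = R_yy - R_nn, assuming speech and noise are uncorrelated, and the estimate is w^H y. The sketch below assumes the covariance matrices are known and a single hypothetical source. It does not implement the distributed signal exchange of dMWF.

```python
import numpy as np

def centralized_mwf(R_yy, R_nn, ref=0):
    """LMMSE (multichannel Wiener) filter estimating the desired speech
    component at microphone `ref`; the estimate is w^H y."""
    R_xx = R_yy - R_nn                     # desired-speech covariance
    e_ref = np.zeros(R_yy.shape[0])
    e_ref[ref] = 1.0
    return np.linalg.solve(R_yy, R_xx @ e_ref)

rng = np.random.default_rng(2)
M = 4
a = rng.standard_normal(M) + 1j * rng.standard_normal(M)  # hypothetical acoustic transfer function
phi_s, sigma2 = 2.0, 0.5                                   # speech PSD and white-noise PSD (assumed)
R_nn = sigma2 * np.eye(M)
R_yy = phi_s * np.outer(a, a.conj()) + R_nn
w = centralized_mwf(R_yy, R_nn, ref=0)
```

For a single source in white noise, this reduces to the closed form phi_s * conj(a_ref) * a / (sigma2 + phi_s * ||a||^2), which the check below uses.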

DNN-Based Online Source Counting Based on Spatial Generalized Magnitude Squared Coherence

Jan 28, 2026

Abstract: The number of active sound sources is a key parameter in many acoustic signal processing tasks, such as source localization, source separation, and multi-microphone speech enhancement. This paper proposes a novel method for online source counting by detecting changes in the number of active sources based on spatial coherence. The proposed method exploits the fact that a single coherent source in spatially white background noise yields high spatial coherence, whereas only noise results in low spatial coherence. By applying a spatial whitening operation, the source counting problem is reformulated as a change detection task, aiming to identify the time frames when the number of active sources changes. The method leverages the generalized magnitude-squared coherence as a measure to quantify spatial coherence, providing features for a compact neural network trained to detect source count changes framewise. Simulation results with binaural hearing aids in reverberant acoustic scenes with up to 4 speakers and background noise demonstrate the effectiveness of the proposed method for online source counting.
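
The coherence feature can be illustrated with one common definition of the generalized magnitude-squared coherence (GMSC): (lambda_max(C) - 1) / (M - 1), where C is the covariance matrix normalized to unit diagonal. It equals 0 for spatially white noise and 1 for a single fully coherent source. This sketch assumes a known noise covariance for the whitening step, uses hypothetical matrices, and omits the neural change detector.

```python
import numpy as np

def whiten(R_y, R_n):
    """Spatially whiten R_y with the Cholesky factor of R_n (R_n = L L^H)."""
    L_inv = np.linalg.inv(np.linalg.cholesky(R_n))
    return L_inv @ R_y @ L_inv.conj().T

def gmsc(R):
    """GMSC of an M x M covariance matrix: (lambda_max(C) - 1) / (M - 1)."""
    d = 1.0 / np.sqrt(np.real(np.diag(R)))
    C = d[:, None] * R * d[None, :]        # coherence matrix with unit diagonal
    lam_max = np.linalg.eigvalsh(C)[-1]    # eigvalsh returns ascending eigenvalues
    return (lam_max - 1.0) / (R.shape[0] - 1)

R_n = np.diag([1.0, 2.0, 0.5, 1.5])                   # hypothetical noise covariance
a = np.exp(1j * np.array([0.0, 0.7, 1.9, -2.4]))      # hypothetical source transfer function
R_one_source = 100.0 * np.outer(a, a.conj()) + R_n    # one strong source plus noise
g_noise = gmsc(whiten(R_n, R_n))                      # noise only: close to 0
g_source = gmsc(whiten(R_one_source, R_n))            # one source: close to 1
```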

Reference Microphone Selection for Guided Source Separation based on the Normalized L-p Norm

Oct 31, 2025

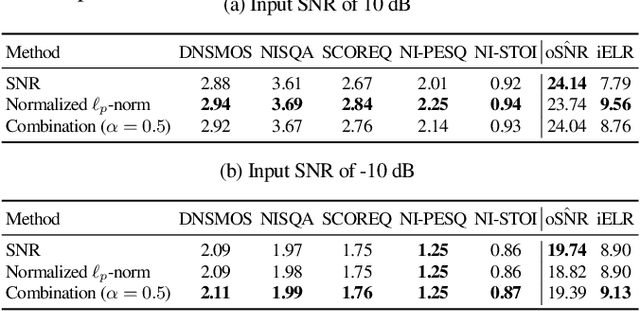

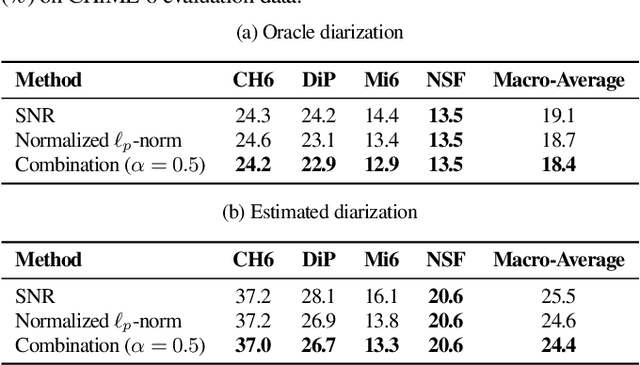

Abstract: Guided Source Separation (GSS) is a popular front-end for distant automatic speech recognition (ASR) systems using spatially distributed microphones. When considering spatially distributed microphones, the choice of reference microphone may have a large influence on the quality of the output signal and the downstream ASR performance. In GSS-based speech enhancement, reference microphone selection is typically performed using the signal-to-noise ratio (SNR), which is optimal for noise reduction but may neglect differences in early-to-late-reverberant ratio (ELR) across microphones. In this paper, we propose two reference microphone selection methods for GSS-based speech enhancement that are based on the normalized $\ell_p$-norm, either using only the normalized $\ell_p$-norm or combining the normalized $\ell_p$-norm and the SNR to account for both differences in SNR and ELR across microphones. Experimental evaluation using a CHiME-8 distant ASR system shows that the proposed $\ell_p$-norm-based methods outperform the baseline method, reducing the macro-average word error rate.
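
The idea of scoring microphones with a normalized norm can be illustrated as follows. Several assumptions apply, none confirmed by the abstract: the normalization is taken to be ||x||_p / ||x||_2 of the STFT magnitudes with p < 2, a smaller value is taken to indicate a sparser (less reverberant) signal, and the selection rule simply picks the minimum. The exact definition, p, and the SNR combination in the paper may differ. Both helper functions are hypothetical.

```python
import numpy as np

def normalized_lp_norm(X, p=1.0):
    """Illustrative normalization ||x||_p / ||x||_2 of flattened STFT
    magnitudes; for p < 2 it is smaller for sparser signals."""
    x = np.abs(np.asarray(X)).ravel()
    return np.sum(x ** p) ** (1.0 / p) / np.linalg.norm(x)

def select_reference(stfts, p=1.0):
    """Hypothetical rule: choose the microphone with the sparsest STFT."""
    scores = [normalized_lp_norm(X, p) for X in stfts]
    return int(np.argmin(scores)), scores

rng = np.random.default_rng(5)
mask = rng.random((257, 100)) < 0.1
dry = np.abs(rng.standard_normal((257, 100))) * mask   # sparse "close-mic" magnitudes
rev = dry.copy()
for t in range(1, rev.shape[1]):
    rev[:, t] += 0.8 * rev[:, t - 1]                   # crude exponential smearing as a reverberation stand-in
idx, scores = select_reference([dry, rev])
```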

I-DCCRN-VAE: An Improved Deep Representation Learning Framework for Complex VAE-based Single-channel Speech Enhancement

Oct 14, 2025

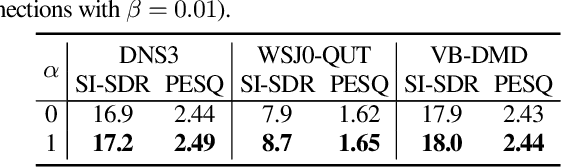

Abstract: Recently, a complex variational autoencoder (VAE)-based single-channel speech enhancement system based on the DCCRN architecture has been proposed. In this system, a noise suppression VAE (NSVAE) learns to extract clean speech representations from noisy speech using pretrained clean speech and noise VAEs with skip connections. In this paper, we improve DCCRN-VAE by incorporating three key modifications: 1) removing the skip connections in the pretrained VAEs to encourage more informative speech and noise latent representations; 2) using a $\beta$-VAE in pretraining to better balance reconstruction and latent space regularization; and 3) using an NSVAE that generates both speech and noise latent representations. Experiments show that the proposed system achieves performance comparable to the DCCRN and DCCRN-VAE baselines on the matched DNS3 dataset but outperforms the baselines on mismatched datasets (WSJ0-QUT, VoiceBank-DEMAND), demonstrating improved generalization ability. In addition, an ablation study shows that similar performance can be achieved with classical fine-tuning instead of adversarial training, resulting in a simpler training pipeline.
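
A $\beta$-VAE weights the KL regularization term of the VAE loss by a factor $\beta$, trading reconstruction accuracy against latent-space regularity. The sketch below assumes a real-valued diagonal Gaussian posterior, a standard normal prior, and a squared-error reconstruction term. The system described above uses complex-valued representations, so this only illustrates the weighting itself.

```python
import numpy as np

def beta_vae_loss(x, x_hat, mu, logvar, beta=1.0):
    """Reconstruction error plus beta-weighted KL divergence between the
    diagonal Gaussian posterior N(mu, exp(logvar)) and N(0, I)."""
    recon = np.sum((x - x_hat) ** 2)                            # squared-error reconstruction term
    kl = 0.5 * np.sum(mu ** 2 + np.exp(logvar) - 1.0 - logvar)  # closed-form Gaussian KL
    return recon + beta * kl, recon, kl

total, recon, kl = beta_vae_loss(np.ones(3), np.zeros(3),
                                 mu=np.array([1.0, 0.0]), logvar=np.zeros(2), beta=4.0)
```

With beta = 1 this is the standard (Gaussian) VAE objective. Larger beta pushes the posterior closer to the prior.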

A Steered Response Power Method for Sound Source Localization With Generic Acoustic Models

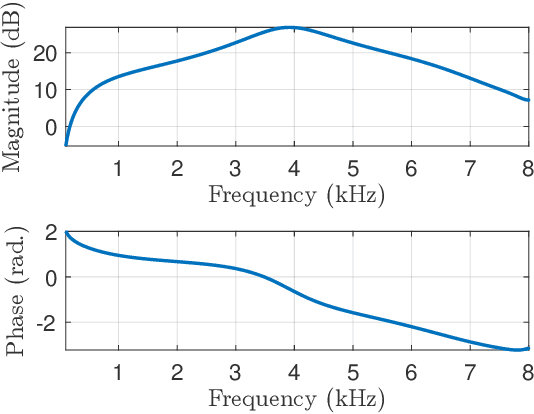

Sep 19, 2025

Abstract: The steered response power (SRP) method is one of the most popular approaches for acoustic source localization with microphone arrays. It is often based on simplifying acoustic assumptions, such as an omnidirectional sound source in the far field of the microphone array(s), free-field propagation, and spatially uncorrelated noise. In reality, however, there are many acoustic scenarios where such assumptions are violated. This paper proposes a generalization of the conventional SRP method that allows generic acoustic models to be applied for localization with arbitrary microphone constellations. These models may consider, for instance, level differences in distributed microphones, the directivity of sources and receivers, or acoustic shadowing effects. Moreover, measured acoustic transfer functions may also be used as the acoustic model. We show that the delay-and-sum beamforming of the conventional SRP is not optimal for localization with generic acoustic models. To address this, we propose a generalized SRP beamforming criterion that considers generic acoustic models and spatially correlated noise, and derive an optimal SRP beamformer. Furthermore, we propose and analyze appropriate frequency weightings. Unlike the conventional SRP, the proposed method can jointly exploit observed level and time differences between the microphone signals to infer the source location. Realistic simulations of three different microphone setups with speech under various noise conditions indicate that the proposed method can significantly reduce the mean localization error compared to the conventional SRP; in particular, a reduction of more than 60% can be achieved in noisy conditions.
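
For comparison, the conventional delay-and-sum SRP that the paper generalizes can be sketched for a single frame. The sketch assumes PHAT weighting, a 2-D free-field point-source (spherical-wave) delay model, a hypothetical distributed 4-microphone array, and a synthetic purely delayed source. The generic acoustic models and the optimal SRP beamformer proposed in the paper are not implemented.

```python
import numpy as np

C_SOUND = 343.0  # speed of sound in m/s

def srp_map(Y, freqs, mic_pos, candidates, phat=True):
    """Conventional delay-and-sum SRP for one frame.
    Y: (M, K) spectra, freqs: (K,) in Hz, mic_pos: (M, 2), candidates: (G, 2) in m."""
    if phat:
        Y = Y / (np.abs(Y) + 1e-12)                                   # PHAT: keep phase only
    power = np.empty(len(candidates))
    for g, x in enumerate(candidates):
        tau = np.linalg.norm(mic_pos - x, axis=1) / C_SOUND           # propagation delays (M,)
        steer = np.exp(-2j * np.pi * freqs[None, :] * tau[:, None])   # free-field phase model (M, K)
        power[g] = np.sum(np.abs(np.sum(np.conj(steer) * Y, axis=0)) ** 2)  # align, sum, square
    return power

rng = np.random.default_rng(3)
mic_pos = np.array([[1.0, 1.0], [1.0, 3.0], [3.0, 1.0], [3.0, 3.0]])  # hypothetical array
gx, gy = np.meshgrid(np.arange(0.0, 4.01, 0.5), np.arange(0.0, 4.01, 0.5))
candidates = np.column_stack([gx.ravel(), gy.ravel()])
freqs = np.linspace(100.0, 4000.0, 128)
x_true = np.array([2.5, 1.0])
S = rng.standard_normal(128) + 1j * rng.standard_normal(128)          # source spectrum
tau_true = np.linalg.norm(mic_pos - x_true, axis=1) / C_SOUND
Y = S[None, :] * np.exp(-2j * np.pi * freqs[None, :] * tau_true[:, None])
power = srp_map(Y, freqs, mic_pos, candidates)
print(candidates[np.argmax(power)])
```

Because PHAT discards magnitudes and the steering vectors contain only phases, this baseline uses time differences alone. That is exactly the limitation the abstract contrasts with jointly exploiting level and time differences.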

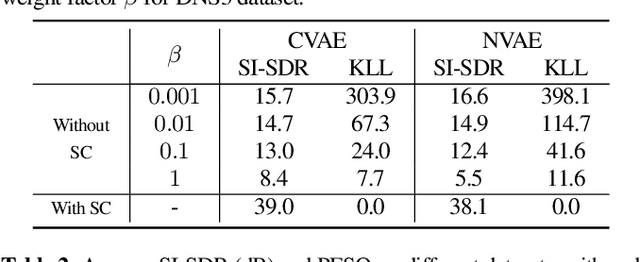

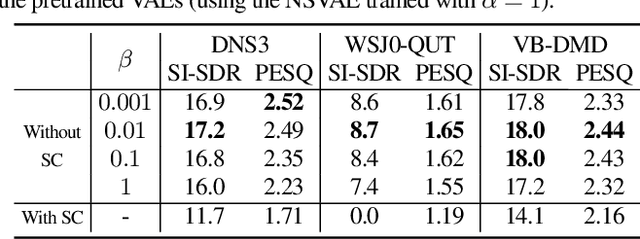

Investigation of Speech and Noise Latent Representations in Single-channel VAE-based Speech Enhancement

Aug 07, 2025

Abstract: Recently, a variational autoencoder (VAE)-based single-channel speech enhancement system using Bayesian permutation training has been proposed, which uses two pretrained VAEs to obtain latent representations for speech and noise. Based on these pretrained VAEs, a noisy VAE learns to generate speech and noise latent representations from noisy speech for speech enhancement. Modifying the pretrained VAE loss terms affects the pretrained speech and noise latent representations. In this paper, we investigate how these different representations affect speech enhancement performance. Experiments on the DNS3, WSJ0-QUT, and VoiceBank-DEMAND datasets show that a latent space where speech and noise representations are clearly separated significantly improves performance over standard VAEs, which produce overlapping speech and noise representations.

Closed-Form Successive Relative Transfer Function Vector Estimation based on Blind Oblique Projection Incorporating Noise Whitening

Aug 06, 2025

Abstract: Relative transfer functions (RTFs) of sound sources play a crucial role in beamforming, enabling effective noise and interference suppression. This paper addresses the challenge of estimating the RTF vectors of multiple sound sources online in noisy and reverberant environments, for the specific scenario where sources activate successively. While the RTF vector of the first source can be estimated straightforwardly, the main challenge arises in estimating the RTF vectors of subsequent sources during segments where multiple sources are simultaneously active. The blind oblique projection (BOP) method has been proposed to estimate the RTF vector of a newly activating source by optimally blocking this source. However, this method faces several limitations: high computational complexity due to its reliance on iterative gradient descent optimization, the introduction of random additional vectors, which can negatively impact performance, and the assumption of a high signal-to-noise ratio (SNR). To overcome these limitations, in this paper we propose three extensions to the BOP method. First, we derive a closed-form solution for optimizing the BOP cost function, significantly reducing computational complexity. Second, we introduce orthogonal additional vectors instead of random vectors, enhancing RTF vector estimation accuracy. Third, we incorporate noise handling techniques inspired by covariance subtraction and whitening, increasing robustness in low SNR conditions. To provide a frame-by-frame estimate of the source activity pattern, required by both the conventional BOP method and the proposed method, we propose a spatial-coherence-based online source counting method. Simulations are performed with real-world reverberant noisy recordings featuring 3 successively activating speakers, with and without a priori knowledge of the source activity pattern.
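
The covariance-whitening idea mentioned above can be illustrated for the simplest case: a single active source, such as the first source before others activate. The sketch assumes known noisy and noise-only covariance matrices, with hypothetical values. It is the textbook single-source covariance-whitening estimator, not the proposed closed-form BOP extension for multiple sources.

```python
import numpy as np

def rtf_covariance_whitening(R_y, R_n, ref=0):
    """Estimate a single source's RTF vector from noisy (R_y) and noise-only
    (R_n) covariance matrices via covariance whitening."""
    L = np.linalg.cholesky(R_n)                  # R_n = L L^H
    L_inv = np.linalg.inv(L)
    R_w = L_inv @ R_y @ L_inv.conj().T           # whitened noisy covariance
    _, V = np.linalg.eigh(R_w)                   # eigenvalues in ascending order
    h = L @ V[:, -1]                             # de-whiten the principal eigenvector
    return h / h[ref]                            # normalize to the reference microphone

rng = np.random.default_rng(4)
M = 4
a = rng.standard_normal(M) + 1j * rng.standard_normal(M)     # hypothetical acoustic transfer function
B = rng.standard_normal((M, M)) + 1j * rng.standard_normal((M, M))
R_n = B @ B.conj().T + 0.1 * np.eye(M)                       # hypothetical noise covariance (Hermitian PD)
R_y = 3.0 * np.outer(a, a.conj()) + R_n                      # one source plus noise
h_hat = rtf_covariance_whitening(R_y, R_n)
```

Whitening turns the correlated noise into an identity matrix, so the principal eigenvector of R_w is proportional to L^{-1} a. Multiplying by L recovers a up to scale, and normalizing by the reference element yields the RTF vector.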

Binaural Localization Model for Speech in Noise

Jul 26, 2025

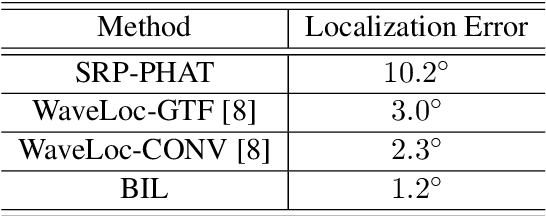

Abstract: Binaural acoustic source localization is important to human listeners for spatial awareness, communication and safety. In this paper, an end-to-end binaural localization model for speech in noise is presented. A lightweight convolutional recurrent network that localizes sound in the frontal azimuthal plane for noisy reverberant binaural signals is introduced. The model incorporates additive internal ear noise to represent the frequency-dependent hearing threshold of a typical listener. The localization performance of the model is compared with the steered response power algorithm, and the use of the model as a measure of interaural cue preservation for binaural speech enhancement methods is studied. A listening test was performed to compare the performance of the model with human localization of speech in noisy conditions.