Sethu Vijayakumar

A behavioural transformer for effective collaboration between a robot and a non-stationary human

Jul 25, 2023

Abstract:A key challenge in human-robot collaboration is the non-stationarity created by humans due to changes in their behaviour. This alters environmental transitions and hinders human-robot collaboration. We propose a principled meta-learning framework to explore how robots could better predict human behaviour, and thereby deal with issues of non-stationarity. On the basis of this framework, we developed Behaviour-Transform (BeTrans). BeTrans is a conditional transformer that enables a robot agent to adapt quickly to new human agents with non-stationary behaviours, due to its notable performance with sequential data. We trained BeTrans on simulated human agents with different systematic biases in collaborative settings. We used an original customisable environment to show that BeTrans effectively collaborates with simulated human agents and adapts faster to non-stationary simulated human agents than SOTA techniques.

Online Multi-Contact Receding Horizon Planning via Value Function Approximation

Jun 12, 2023

Abstract:Planning multi-contact motions in a receding horizon fashion requires a value function to guide the planning with respect to the future, e.g., building momentum to traverse large obstacles. Traditionally, the value function is approximated by computing trajectories in a prediction horizon (never executed) that foresees the future beyond the execution horizon. However, given the non-convex dynamics of multi-contact motions, this approach is computationally expensive. To enable online Receding Horizon Planning (RHP) of multi-contact motions, we find efficient approximations of the value function. Specifically, we propose a trajectory-based and a learning-based approach. In the former, namely RHP with Multiple Levels of Model Fidelity, we approximate the value function by computing the prediction horizon with a convex relaxed model. In the latter, namely Locally-Guided RHP, we learn an oracle to predict local objectives for locomotion tasks, and we use these local objectives to construct local value functions for guiding a short-horizon RHP. We evaluate both approaches in simulation by planning centroidal trajectories of a humanoid robot walking on moderate slopes, and on large slopes where the robot cannot maintain static balance. Our results show that locally-guided RHP achieves the best computation efficiency (95\%-98.6\% cycles converge online). This computation advantage enables us to demonstrate online receding horizon planning of our real-world humanoid robot Talos walking in dynamic environments that change on-the-fly.

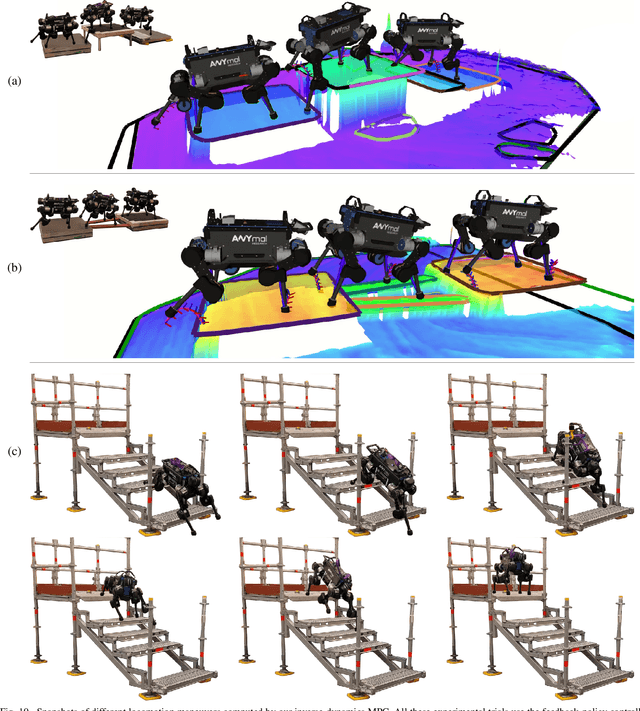

Perceptive Locomotion through Whole-Body MPC and Optimal Region Selection

May 15, 2023

Abstract:Real-time synthesis of legged locomotion maneuvers in challenging industrial settings is still an open problem, requiring simultaneous determination of footsteps locations several steps ahead while generating whole-body motions close to the robot's limits. State estimation and perception errors impose the practical constraint of fast re-planning motions in a model predictive control (MPC) framework. We first observe that the computational limitation of perceptive locomotion pipelines lies in the combinatorics of contact surface selection. Re-planning contact locations on selected surfaces can be accomplished at MPC frequencies (50-100 Hz). Then, whole-body motion generation typically follows a reference trajectory for the robot base to facilitate convergence. We propose removing this constraint to robustly address unforeseen events such as contact slipping, by leveraging a state-of-the-art whole-body MPC (Croccodyl). Our contributions are integrated into a complete framework for perceptive locomotion, validated under diverse terrain conditions, and demonstrated in challenging trials that push the robot's actuation limits, as well as in the ICRA 2023 quadruped challenge simulation.

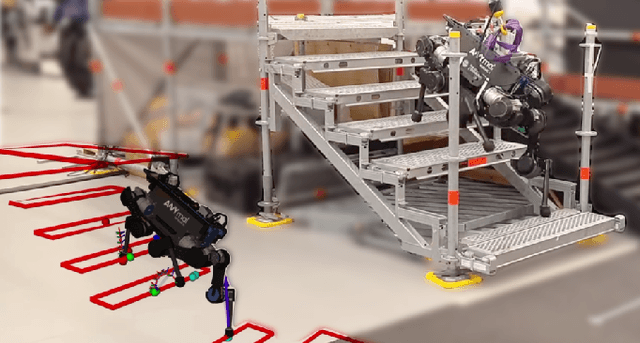

Topology-Based MPC for Automatic Footstep Placement and Contact Surface Selection

Mar 24, 2023

Abstract:State-of-the-art approaches to footstep planning assume reduced-order dynamics when solving the combinatorial problem of selecting contact surfaces in real time. However, in exchange for computational efficiency, these approaches ignore joint torque limits and limb dynamics. In this work, we address these limitations by presenting a topology-based approach that enables~\gls{mpc} to simultaneously plan full-body motions, torque commands, footstep placements, and contact surfaces in real time. To determine if a robot's foot is inside a contact surface, we borrow the winding number concept from topology. We then use this winding number and potential field to create a contact-surface penalty function. By using this penalty function,~\gls{mpc} can select a contact surface from all candidate surfaces in the vicinity and determine footstep placements within it. We demonstrate the benefits of our approach by showing the impact of considering full-body dynamics, which includes joint torque limits and limb dynamics, on the selection of footstep placements and contact surfaces. Furthermore, we validate the feasibility of deploying our topology-based approach in an~\gls{mpc} scheme and explore its potential capabilities through a series of experimental and simulation trials.

* 7 pages, 6 figures

RGB-D-Inertial SLAM in Indoor Dynamic Environments with Long-term Large Occlusion

Mar 23, 2023Abstract:This work presents a novel RGB-D-inertial dynamic SLAM method that can enable accurate localisation when the majority of the camera view is occluded by multiple dynamic objects over a long period of time. Most dynamic SLAM approaches either remove dynamic objects as outliers when they account for a minor proportion of the visual input, or detect dynamic objects using semantic segmentation before camera tracking. Therefore, dynamic objects that cause large occlusions are difficult to detect without prior information. The remaining visual information from the static background is also not enough to support localisation when large occlusion lasts for a long period. To overcome these problems, our framework presents a robust visual-inertial bundle adjustment that simultaneously tracks camera, estimates cluster-wise dense segmentation of dynamic objects and maintains a static sparse map by combining dense and sparse features. The experiment results demonstrate that our method achieves promising localisation and object segmentation performance compared to other state-of-the-art methods in the scenario of long-term large occlusion.

OpTaS: An Optimization-based Task Specification Library for Trajectory Optimization and Model Predictive Control

Jan 31, 2023

Abstract:This paper presents OpTaS, a task specification Python library for Trajectory Optimization (TO) and Model Predictive Control (MPC) in robotics. Both TO and MPC are increasingly receiving interest in optimal control and in particular handling dynamic environments. While a flurry of software libraries exists to handle such problems, they either provide interfaces that are limited to a specific problem formulation (e.g. TracIK, CHOMP), or are large and statically specify the problem in configuration files (e.g. EXOTica, eTaSL). OpTaS, on the other hand, allows a user to specify custom nonlinear constrained problem formulations in a single Python script allowing the controller parameters to be modified during execution. The library provides interface to several open source and commercial solvers (e.g. IPOPT, SNOPT, KNITRO, SciPy) to facilitate integration with established workflows in robotics. Further benefits of OpTaS are highlighted through a thorough comparison with common libraries. An additional key advantage of OpTaS is the ability to define optimal control tasks in the joint space, task space, or indeed simultaneously. The code for OpTaS is easily installed via pip, and the source code with examples can be found at https://github.com/cmower/optas.

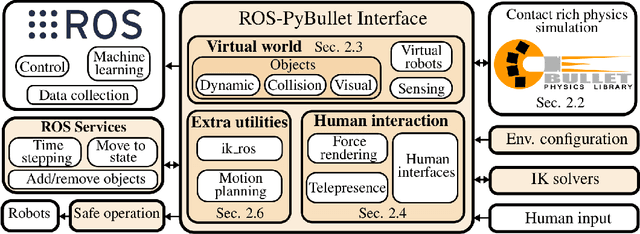

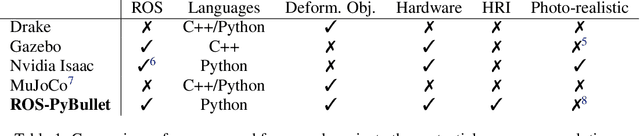

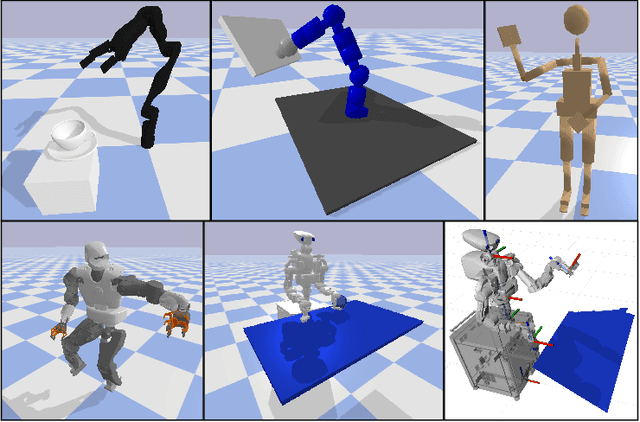

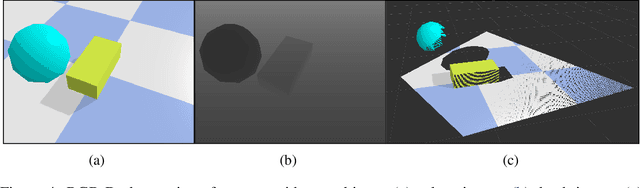

ROS-PyBullet Interface: A Framework for Reliable Contact Simulation and Human-Robot Interaction

Oct 13, 2022

Abstract:Reliable contact simulation plays a key role in the development of (semi-)autonomous robots, especially when dealing with contact-rich manipulation scenarios, an active robotics research topic. Besides simulation, components such as sensing, perception, data collection, robot hardware control, human interfaces, etc. are all key enablers towards applying machine learning algorithms or model-based approaches in real world systems. However, there is a lack of software connecting reliable contact simulation with the larger robotics ecosystem (i.e. ROS, Orocos), for a more seamless application of novel approaches, found in the literature, to existing robotic hardware. In this paper, we present the ROS-PyBullet Interface, a framework that provides a bridge between the reliable contact/impact simulator PyBullet and the Robot Operating System (ROS). Furthermore, we provide additional utilities for facilitating Human-Robot Interaction (HRI) in the simulated environment. We also present several use-cases that highlight the capabilities and usefulness of our framework. Please check our video, source code, and examples included in the supplementary material. Our full code base is open source and can be found at https://github.com/cmower/ros_pybullet_interface.

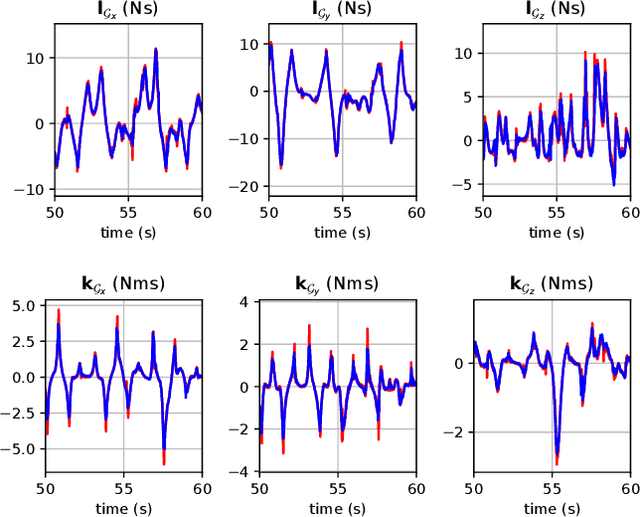

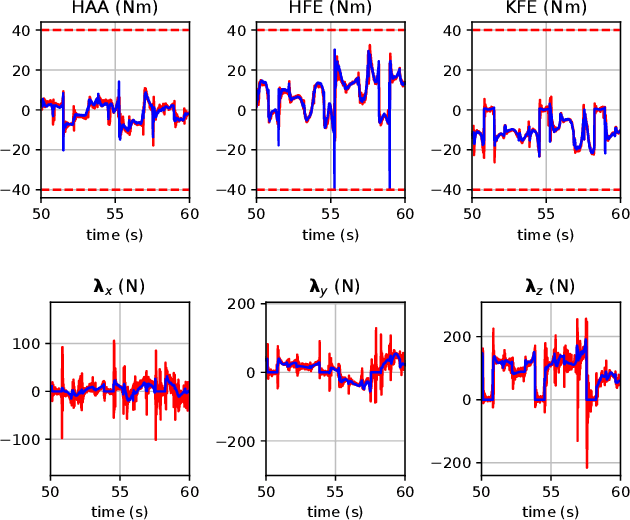

Inverse-Dynamics MPC via Nullspace Resolution

Sep 12, 2022

Abstract:Optimal control (OC) using inverse dynamics provides numerical benefits such as coarse optimization, cheaper computation of derivatives, and a high convergence rate. However, in order to take advantage of these benefits in model predictive control (MPC) for legged robots, it is crucial to handle its large number of equality constraints efficiently. To accomplish this, we first (i) propose a novel approach to handle equality constraints based on nullspace parametrization. Our approach balances optimality, and both dynamics and equality-constraint feasibility appropriately, which increases the basin of attraction to good local minima. To do so, we then (ii) adapt our feasibility-driven search by incorporating a merit function. Furthermore, we introduce (iii) a condensed formulation of the inverse dynamics that considers arbitrary actuator models. We also develop (iv) a novel MPC based on inverse dynamics within a perception locomotion framework. Finally, we present (v) a theoretical comparison of optimal control with the forward and inverse dynamics, and evaluate both numerically. Our approach enables the first application of inverse-dynamics MPC on hardware, resulting in state-of-the-art dynamic climbing on the ANYmal robot. We benchmark it over a wide range of robotics problems and generate agile and complex maneuvers. We show the computational reduction of our nullspace resolution and condensed formulation (up to 47.3%). We provide evidence of the benefits of our approach by solving coarse optimization problems with a high convergence rate (up to 10 Hz of discretization). Our algorithm is publicly available inside CROCODDYL.

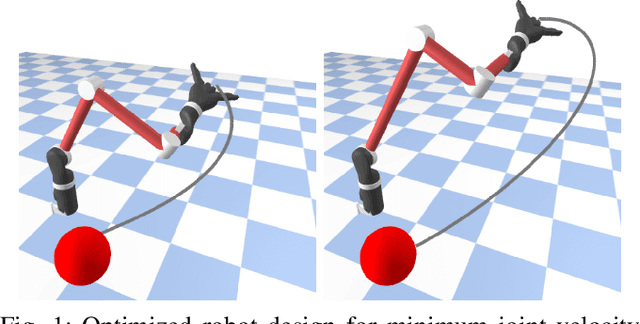

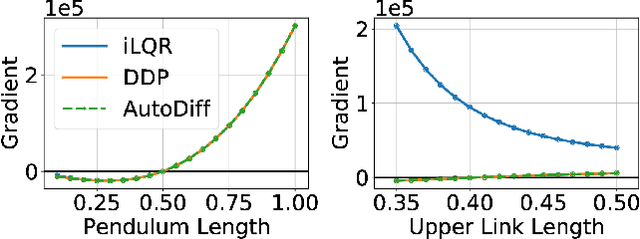

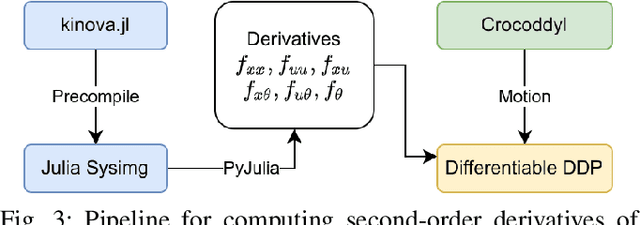

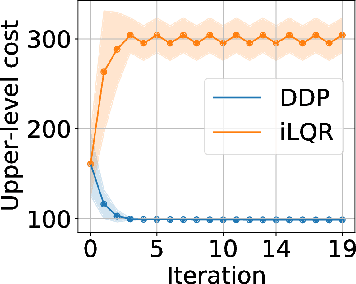

Differentiable Optimal Control via Differential Dynamic Programming

Sep 02, 2022

Abstract:Robot design optimization, imitation learning and system identification share a common problem which requires optimization over robot or task parameters at the same time as optimizing the robot motion. To solve these problems, we can use differentiable optimal control for which the gradients of the robot's motion with respect to the parameters are required. We propose a method to efficiently compute these gradients analytically via the differential dynamic programming (DDP) algorithm using sensitivity analysis (SA). We show that we must include second-order dynamics terms when computing the gradients. However, we do not need to include them when computing the motion. We validate our approach on the pendulum and double pendulum systems. Furthermore, we compare against using the derivatives of the iterative linear quadratic regulator (iLQR), which ignores these second-order terms everywhere, on a co-design task for the Kinova arm, where we optimize the link lengths of the robot for a target reaching task. We show that optimizing using iLQR gradients diverges as ignoring the second-order dynamics affects the computation of the derivatives. Instead, optimizing using DDP gradients converges to the same optimum for a range of initial designs allowing our formulation to scale to complex systems.

Sparse-Dense Motion Modelling and Tracking for Manipulation without Prior Object Models

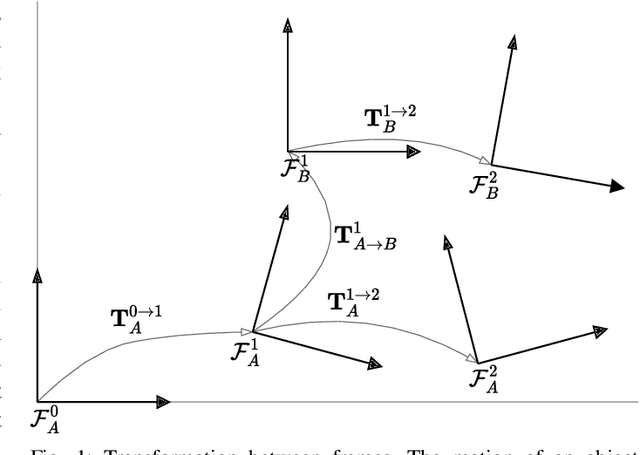

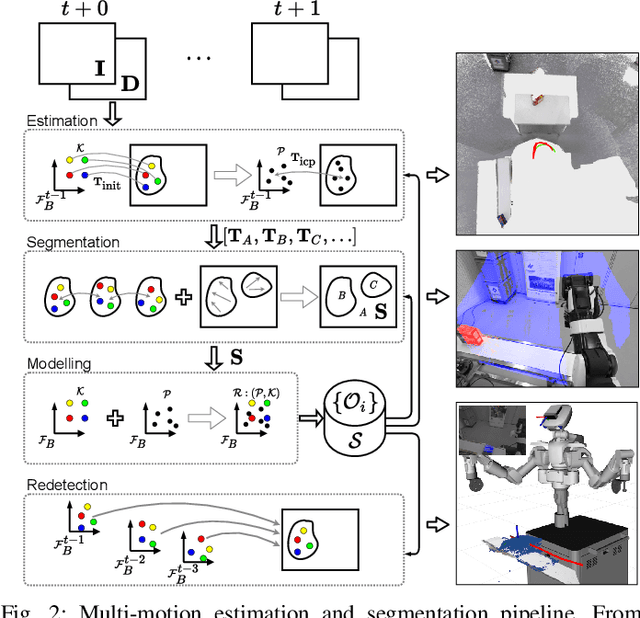

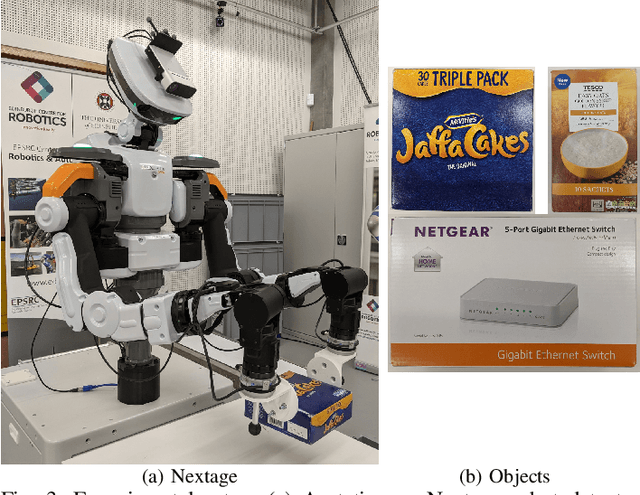

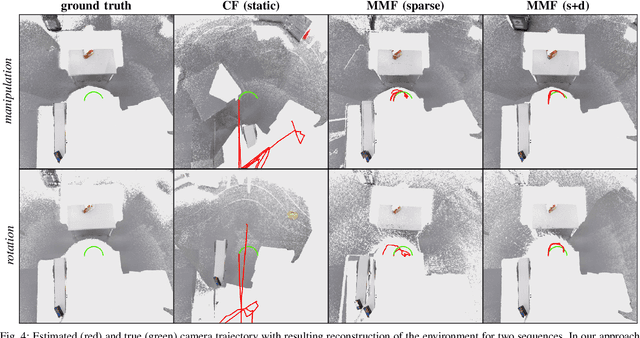

Apr 25, 2022

Abstract:This work presents an approach for modelling and tracking previously unseen objects for robotic grasping tasks. Using the motion of objects in a scene, our approach segments rigid entities from the scene and continuously tracks them to create a dense and sparse model of the object and the environment. While the dense tracking enables interaction with these models, the sparse tracking makes this robust against fast movements and allows to redetect already modelled objects. The evaluation on a dual-arm grasping task demonstrates that our approach 1) enables a robot to detect new objects online without a prior model and to grasp these objects using only a simple parameterisable geometric representation, and 2) is much more robust compared to the state of the art methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge