Qian Du

Synthetic Aperture Radar Image Change Detection via Layer Attention-Based Noise-Tolerant Network

Aug 09, 2022

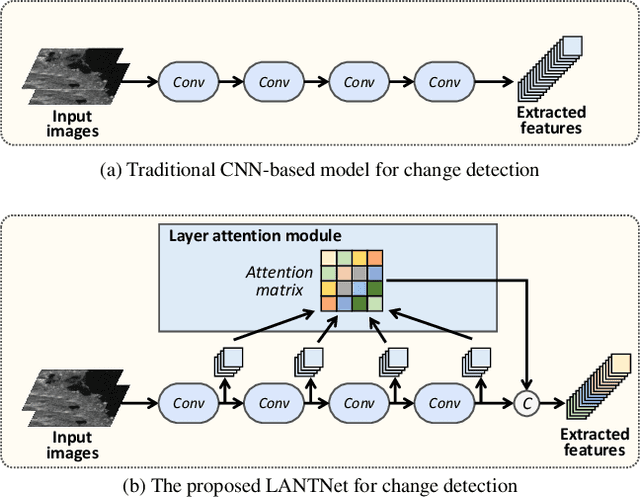

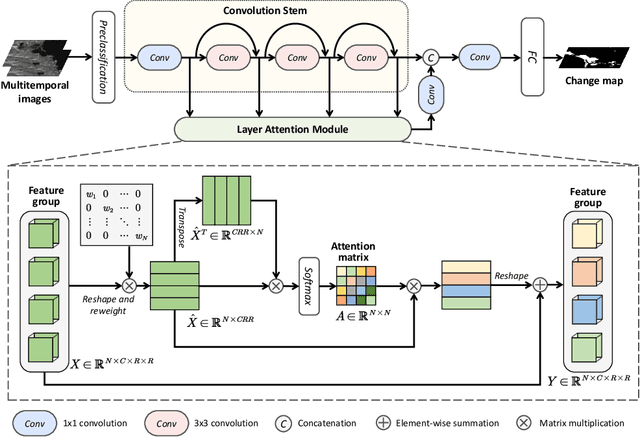

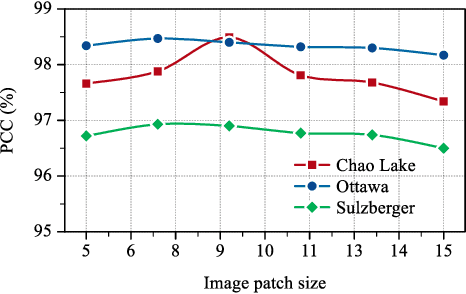

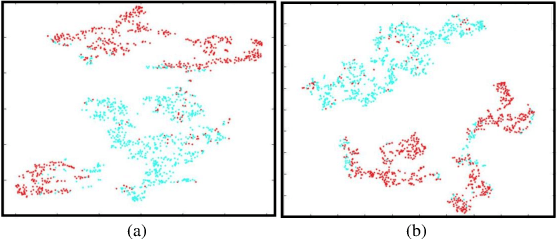

Abstract: Recently, change detection methods for synthetic aperture radar (SAR) images based on convolutional neural networks (CNNs) have gained increasing research attention. However, existing CNN-based methods neglect the interactions among multilayer convolutions, and errors in the preclassification restrict network optimization. To this end, we propose a layer attention-based noise-tolerant network, termed LANTNet. In particular, we design a layer attention module that adaptively weights the features of different convolution layers. In addition, we design a noise-tolerant loss function that effectively suppresses the impact of noisy labels. Therefore, the model is insensitive to noisy labels in the preclassification results. Experimental results on three SAR datasets show that the proposed LANTNet outperforms several state-of-the-art methods. The source code is available at https://github.com/summitgao/LANTNet

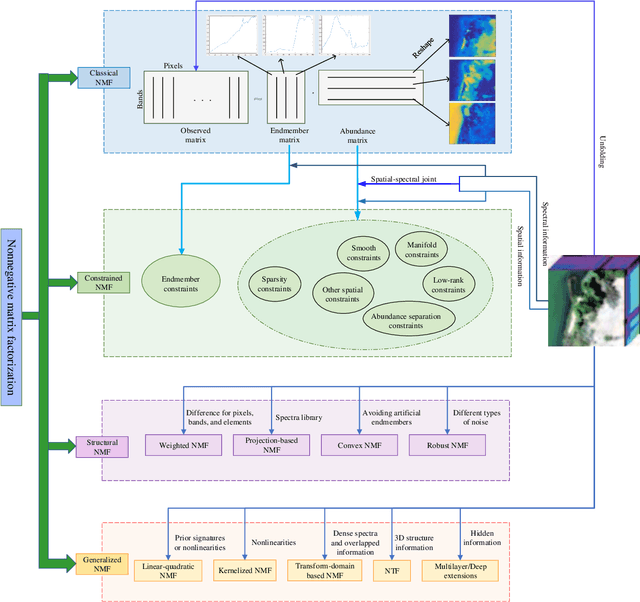

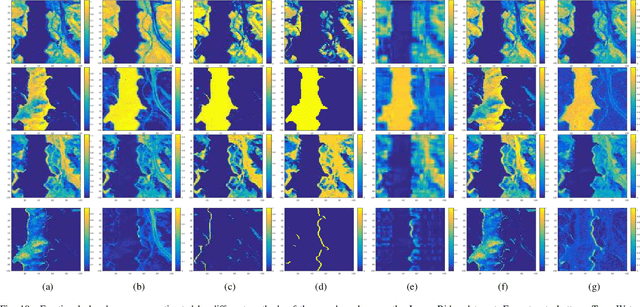

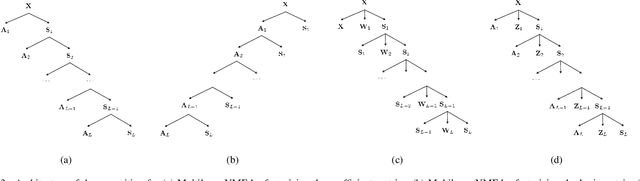

Hyperspectral Unmixing Based on Nonnegative Matrix Factorization: A Comprehensive Review

May 20, 2022

Abstract: Hyperspectral unmixing is an important technique that estimates a set of endmembers and their corresponding abundances from a hyperspectral image (HSI). Nonnegative matrix factorization (NMF) plays an increasingly significant role in solving this problem. In this article, we present a comprehensive survey of the NMF-based methods proposed for hyperspectral unmixing. Taking the NMF model as a baseline, we show how to improve NMF by utilizing the main properties of HSIs (e.g., spectral, spatial, and structural information). We categorize three important development directions: constrained NMF, structured NMF, and generalized NMF. Furthermore, several experiments are conducted to illustrate the effectiveness of the associated algorithms. Finally, we conclude the article with possible future directions, with the aim of providing guidelines and inspiration to promote the development of hyperspectral unmixing.
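For readers unfamiliar with the baseline model the survey builds on, the following is a minimal sketch of plain NMF unmixing, assuming the linear mixing model Y ≈ E A with Y of shape (bands, pixels). It uses the standard multiplicative updates for the Frobenius objective, not any specific constrained, structured, or generalized variant from the survey. The function name `nmf_unmix`, the synthetic data, and all parameter values are illustrative assumptions, and the abundance sum-to-one constraint is deliberately not enforced.

```python
import numpy as np


def nmf_unmix(Y, num_endmembers, num_iters=300, eps=1e-9, seed=0):
    """Factor a nonnegative matrix Y (bands x pixels) as E @ A.

    E (bands x num_endmembers) plays the role of endmember spectra and
    A (num_endmembers x pixels) the role of abundances. Hypothetical
    helper: plain multiplicative updates, no sum-to-one constraint.
    """
    rng = np.random.default_rng(seed)
    bands, pixels = Y.shape
    E = rng.random((bands, num_endmembers)) + eps
    A = rng.random((num_endmembers, pixels)) + eps
    for _ in range(num_iters):
        # Multiplicative updates keep both factors nonnegative.
        A *= (E.T @ Y) / (E.T @ E @ A + eps)
        E *= (Y @ A.T) / (E @ A @ A.T + eps)
    return E, A


# Synthetic example (assumed data): 3 endmembers, 50 bands, 400 pixels.
rng = np.random.default_rng(1)
E_true = rng.random((50, 3))
A_true = rng.dirichlet(np.ones(3), size=400).T  # each column sums to one
Y = E_true @ A_true

E_est, A_est = nmf_unmix(Y, num_endmembers=3)
rel_error = np.linalg.norm(Y - E_est @ A_est) / np.linalg.norm(Y)
print(f"relative reconstruction error: {rel_error:.4f}")
```

Because plain NMF is non-unique, the recovered factors need not match the true endmembers. Resolving this ambiguity with extra constraints is the motivation for the variants the survey categorizes.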

A Survey on Hyperspectral Image Restoration: From the View of Low-Rank Tensor Approximation

May 18, 2022

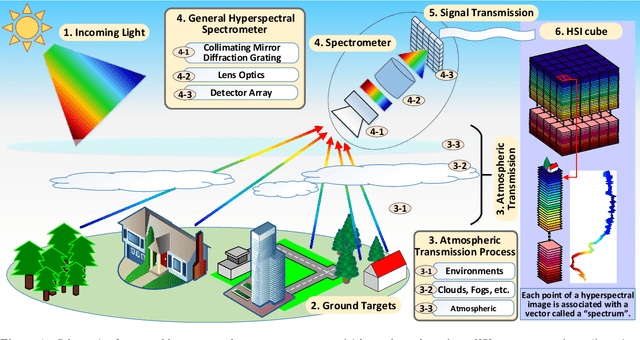

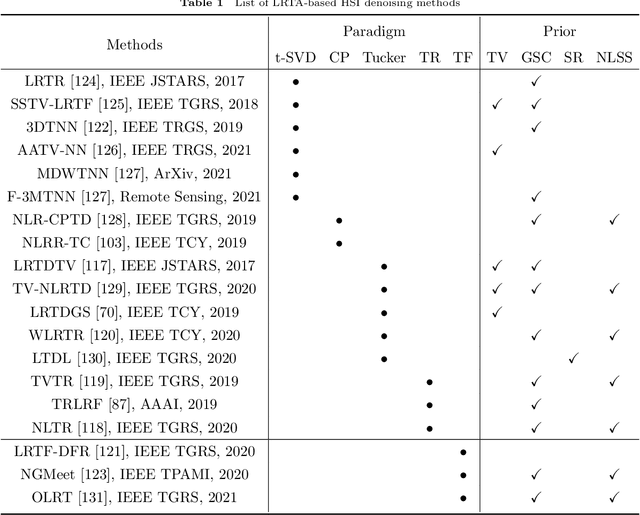

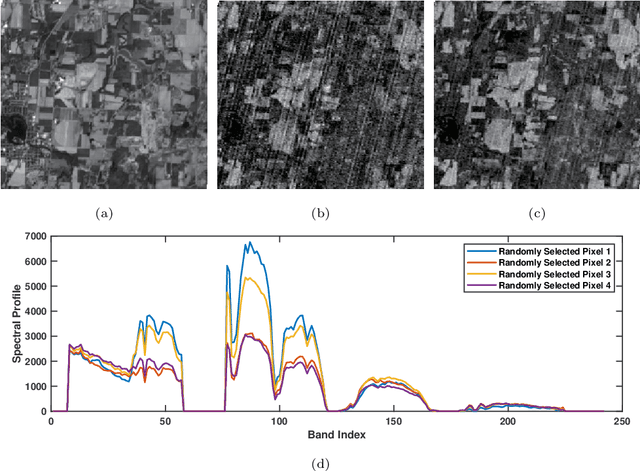

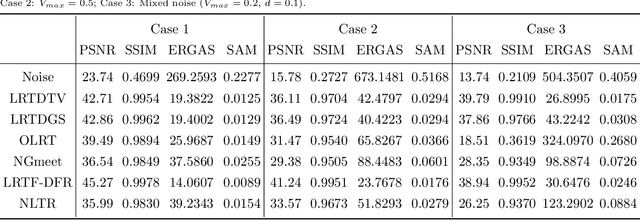

Abstract: The ability to capture fine, discriminative spectral information enables hyperspectral images (HSIs) to observe, detect, and identify objects with subtle spectral discrepancies. However, the captured HSIs may not represent the true distribution of ground objects, and the reflectance received at imaging instruments may be degraded owing to environmental disturbances, atmospheric effects, and sensor hardware limitations. These degradations include but are not limited to: complex noise (i.e., Gaussian noise, impulse noise, sparse stripes, and their mixtures), heavy stripes, dead lines, cloud and shadow occlusion, blurring, spatial-resolution degradation, and spectral absorption. They dramatically reduce the quality and usefulness of HSIs. Low-rank tensor approximation (LRTA) is an emerging technique that has gained much attention in the HSI restoration community, with an ever-growing theoretical foundation and pivotal technological innovation. Compared to low-rank matrix approximation (LRMA), LRTA can characterize the more complex intrinsic structure of high-order data and offers more efficient learning abilities, and it has been established to address the convex and non-convex inverse optimization problems induced by HSI restoration. This survey attempts to present a sophisticated, cutting-edge, and comprehensive technical review of LRTA for HSI restoration, focusing on the following six topics: denoising, destriping, inpainting, deblurring, super-resolution, and fusion. The theoretical development and variants of LRTA techniques are also elaborated. For each topic, state-of-the-art restoration methods are compared by assessing their performance both quantitatively and visually. Open issues and challenges are also presented, including model formulation, algorithm design, prior exploration, and application concerning the interpretation requirements.
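To make the idea of low-rank tensor approximation concrete, here is a minimal sketch of one simple LRTA scheme, a truncated higher-order SVD (Tucker-style projection), applied to a synthetic 3-D cube with Gaussian noise. It is an illustration under stated assumptions, not a method taken from the survey: the helper names `unfold` and `truncated_hosvd`, the cube size, the ranks, and the noise level are all hypothetical choices.

```python
import numpy as np


def unfold(tensor, mode):
    """Matricize a tensor along one mode (rows index that mode)."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)


def truncated_hosvd(tensor, ranks):
    """Project each mode onto its leading left singular vectors."""
    approx = tensor
    for mode, rank in enumerate(ranks):
        U, _, _ = np.linalg.svd(unfold(tensor, mode), full_matrices=False)
        projector = U[:, :rank] @ U[:, :rank].T
        # Apply the projector along `mode`, then restore the axis order.
        approx = np.moveaxis(
            np.tensordot(projector, approx, axes=(1, mode)), 0, mode
        )
    return approx


# Synthetic cube (assumed data): height x width x bands, low Tucker rank.
rng = np.random.default_rng(0)
core = rng.standard_normal((3, 3, 2))
U1 = rng.standard_normal((20, 3))
U2 = rng.standard_normal((20, 3))
U3 = rng.standard_normal((30, 2))
clean = np.einsum("abc,ia,jb,kc->ijk", core, U1, U2, U3)
noisy = clean + 0.1 * rng.standard_normal(clean.shape)

denoised = truncated_hosvd(noisy, ranks=(3, 3, 2))
err_noisy = np.linalg.norm(noisy - clean)
err_denoised = np.linalg.norm(denoised - clean)
print(f"error before: {err_noisy:.3f}, after: {err_denoised:.3f}")
```

Unlike a matrix (LRMA) approach, which would flatten the cube into a single bands-by-pixels matrix, this projection exploits low-rank structure along the two spatial modes and the spectral mode at once.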

Adaptive Cross-Attention-Driven Spatial-Spectral Graph Convolutional Network for Hyperspectral Image Classification

Apr 12, 2022

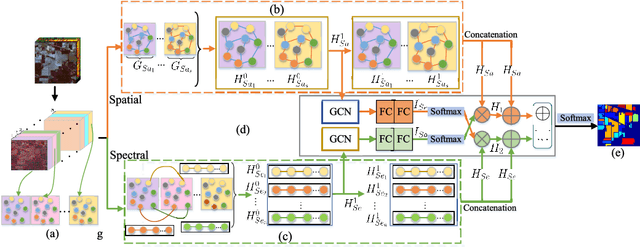

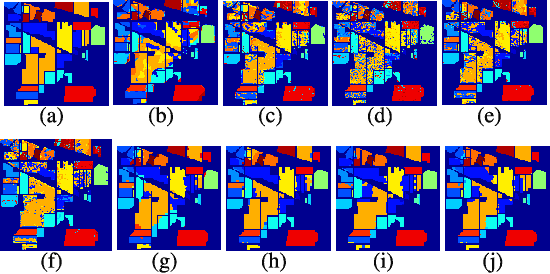

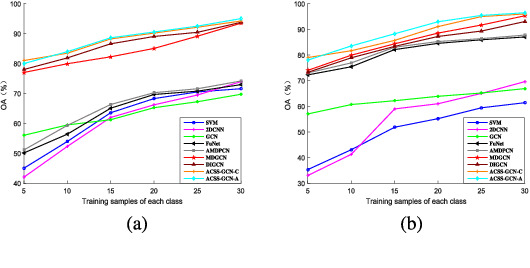

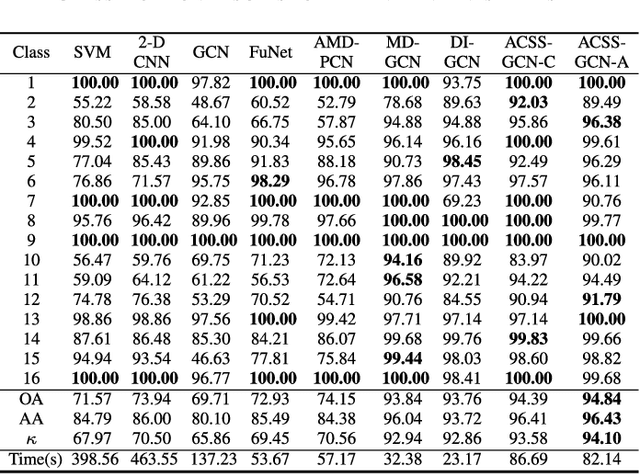

Abstract: Recently, graph convolutional networks (GCNs) have been developed to explore the spatial relationships between pixels, achieving better classification performance on hyperspectral images (HSIs). However, these methods fail to sufficiently leverage the relationships between spectral bands in HSI data. As such, we propose an adaptive cross-attention-driven spatial-spectral graph convolutional network (ACSS-GCN), which is composed of a spatial GCN (Sa-GCN) subnetwork, a spectral GCN (Se-GCN) subnetwork, and a graph cross-attention fusion module (GCAFM). Specifically, Sa-GCN and Se-GCN are proposed to extract the spatial and spectral features by modeling correlations between spatial pixels and between spectral bands, respectively. Then, by integrating an attention mechanism into graph information aggregation, the GCAFM, which consists of a spatial graph attention block, a spectral graph attention block, and a fusion block, is designed to fuse the spatial and spectral features and suppress noise interference in Sa-GCN and Se-GCN. Moreover, the idea of the adaptive graph is introduced to explore an optimal graph through backpropagation during the training process. Experiments on two HSI data sets show that the proposed method achieves better performance than other classification methods.

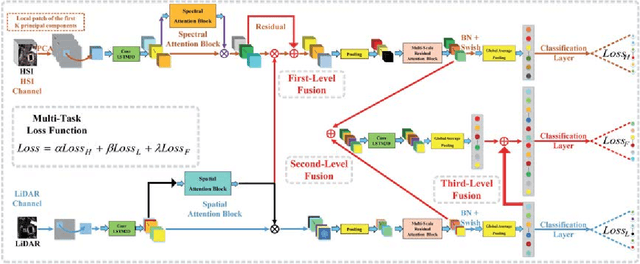

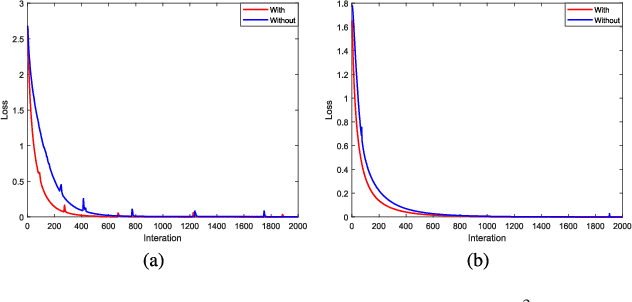

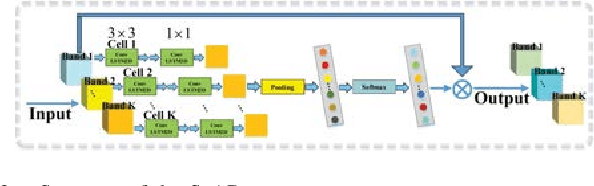

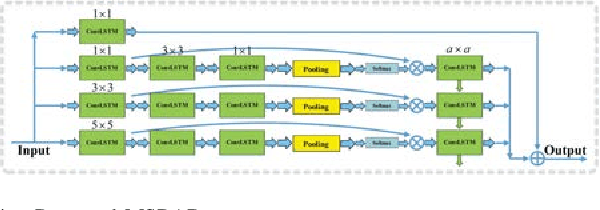

A3CLNN: Spatial, Spectral and Multiscale Attention ConvLSTM Neural Network for Multisource Remote Sensing Data Classification

Apr 09, 2022

Abstract: The problem of effectively exploiting the information from multiple data sources has become a relevant but challenging research topic in remote sensing. In this paper, we propose a new approach to exploit the complementarity of two data sources: hyperspectral images (HSIs) and light detection and ranging (LiDAR) data. Specifically, we develop a new dual-channel spatial, spectral and multiscale attention convolutional long short-term memory neural network (called dual-channel A3CLNN) for feature extraction and classification of multisource remote sensing data. Spatial, spectral and multiscale attention mechanisms are first designed for HSI and LiDAR data in order to learn spectral- and spatial-enhanced feature representations, and to represent multiscale information for different classes. In the designed fusion network, a novel composite attention learning mechanism (combined with a three-level fusion strategy) is used to fully integrate the features in these two data sources. Finally, inspired by the idea of transfer learning, a novel stepwise training strategy is designed to yield a final classification result. Our experimental results, conducted on several multisource remote sensing data sets, demonstrate that the newly proposed dual-channel A3CLNN exhibits better feature representation ability (leading to more competitive classification performance) than other state-of-the-art methods.

* 16 pages, 10 figures

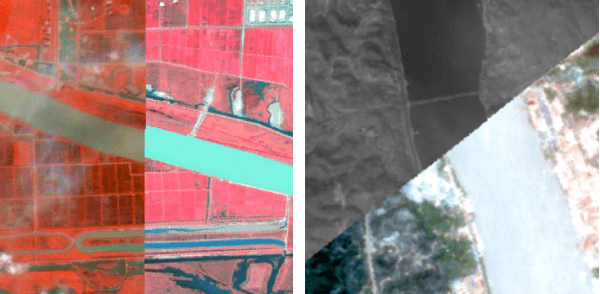

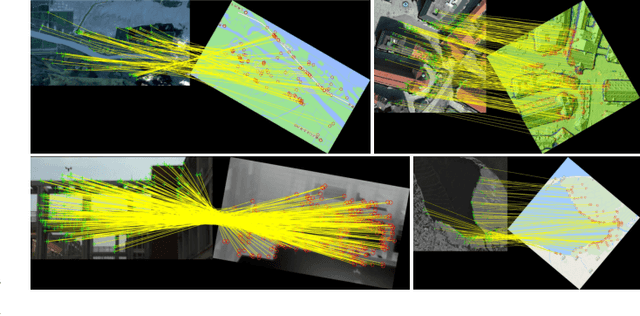

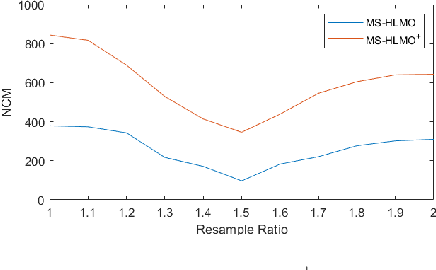

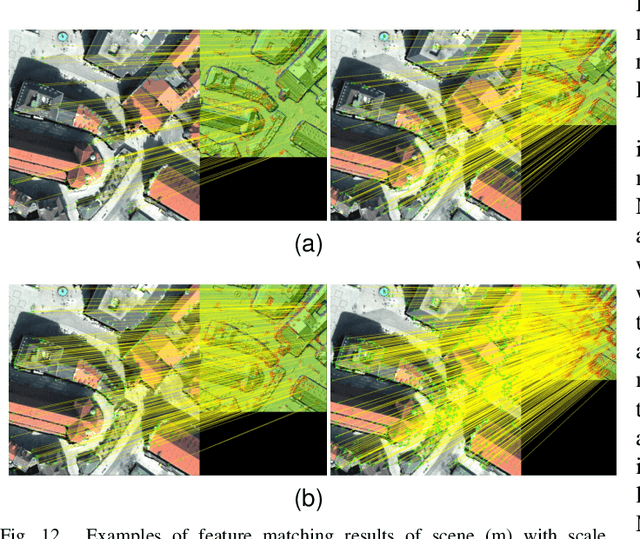

MS-HLMO: Multi-scale Histogram of Local Main Orientation for Remote Sensing Image Registration

Apr 01, 2022

Abstract: Multi-source image registration is challenging due to intensity, rotation, and scale differences among the images. Considering the characteristics and differences of multi-source remote sensing images, a feature-based registration algorithm named Multi-scale Histogram of Local Main Orientation (MS-HLMO) is proposed. Harris corner detection is first adopted to generate feature points. The HLMO feature of each Harris feature point is extracted on a Partial Main Orientation Map (PMOM) with a Generalized Gradient Location and Orientation Histogram-like (GGLOH) feature descriptor, which provides high intensity, rotation, and scale invariance. The feature points are matched through a multi-scale matching strategy. Comprehensive experiments on 17 multi-source remote sensing scenes demonstrate that the proposed MS-HLMO and its simplified version MS-HLMO$^+$ outperform other competitive registration algorithms in terms of effectiveness and generalization.
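The first stage named above, Harris corner detection, can be sketched as follows. This is a simplified illustration only: it uses a box window instead of the Gaussian window common in practice, and it does not cover the PMOM, GGLOH, or multi-scale matching stages of MS-HLMO. The helper names `box_sum` and `harris_response`, the constant `k = 0.04`, and the toy image are assumptions made for the example.

```python
import numpy as np


def box_sum(values, radius=1):
    """Sum each pixel's (2*radius+1)^2 neighborhood, zero-padded."""
    padded = np.pad(values, radius)
    out = np.zeros(values.shape, dtype=float)
    size = 2 * radius + 1
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + values.shape[0], dx:dx + values.shape[1]]
    return out


def harris_response(image, k=0.04, radius=1):
    """Harris corner response R = det(M) - k * trace(M)^2 per pixel."""
    grad_y, grad_x = np.gradient(image.astype(float))
    # Entries of the structure tensor M, accumulated over the window.
    s_xx = box_sum(grad_x * grad_x, radius)
    s_yy = box_sum(grad_y * grad_y, radius)
    s_xy = box_sum(grad_x * grad_y, radius)
    det = s_xx * s_yy - s_xy ** 2
    trace = s_xx + s_yy
    return det - k * trace ** 2


# Toy image (assumed data): a bright square on a dark background.
image = np.zeros((20, 20))
image[5:15, 5:15] = 1.0
response = harris_response(image)

corner, edge, flat = response[5, 5], response[5, 10], response[10, 10]
print(f"corner: {corner:.4f}, edge: {edge:.4f}, flat: {flat:.4f}")
```

The response is positive at the square's corner, negative along its edge, and zero in the flat interior, which is the property that lets corners be selected as feature points by thresholding.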

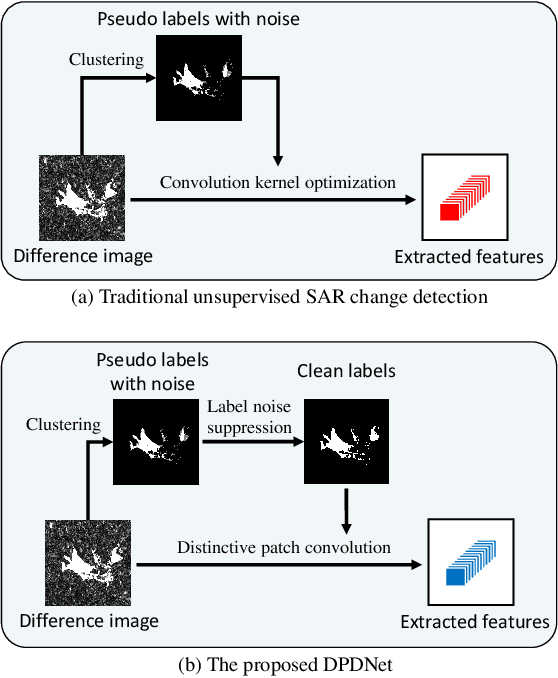

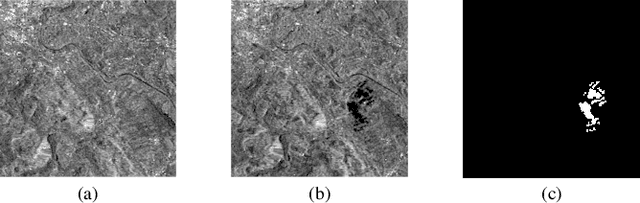

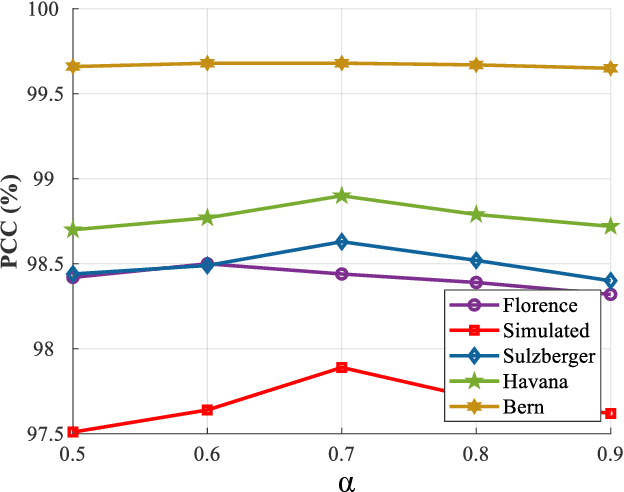

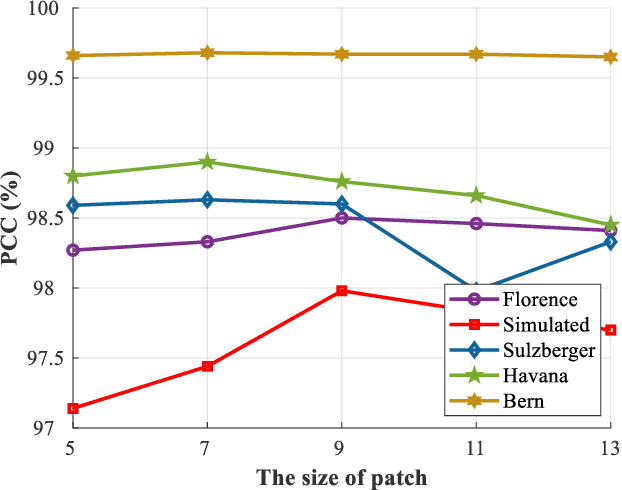

Change Detection from Synthetic Aperture Radar Images via Dual Path Denoising Network

Mar 13, 2022

Abstract: Benefiting from the rapid and sustained development of synthetic aperture radar (SAR) sensors, change detection from SAR images has received increasing attention over the past few years. Existing unsupervised deep learning-based methods have made great efforts to exploit robust feature representations, but they take considerable time to optimize parameters. Besides, these methods use clustering to obtain pseudo-labels for training, and the pseudo-labeled samples often involve errors, which can be considered "label noise". To address these issues, we propose a Dual Path Denoising Network (DPDNet) for SAR image change detection. In particular, we introduce random label propagation to clean the label noise involved in preclassification. We also propose distinctive patch convolution for feature representation learning to reduce the time consumption. Specifically, the attention mechanism is used to select distinctive pixels in the feature maps, and patches around these pixels are selected as convolution kernels. Consequently, the DPDNet does not require a great number of training samples for parameter optimization, and its computational efficiency is greatly enhanced. Extensive experiments have been conducted on five SAR datasets to verify the proposed DPDNet. The experimental results demonstrate that our method outperforms several state-of-the-art methods in change detection results.

SSCU-Net: Spatial-Spectral Collaborative Unmixing Network for Hyperspectral Images

Mar 12, 2022

Abstract: Linear spectral unmixing is an essential technique in hyperspectral image processing and interpretation. In recent years, deep learning-based approaches have shown great promise in hyperspectral unmixing; in particular, unsupervised unmixing methods based on autoencoder networks are a recent trend. The autoencoder model, which automatically learns low-dimensional representations (abundances) and reconstructs data with their corresponding bases (endmembers), has achieved superior performance in hyperspectral unmixing. In this article, we explore the effective utilization of spatial and spectral information in autoencoder-based unmixing networks. Important findings on the use of spatial and spectral information in the autoencoder framework are discussed. Inspired by these findings, we propose a spatial-spectral collaborative unmixing network, called SSCU-Net, which learns a spatial autoencoder network and a spectral autoencoder network in an end-to-end manner to more effectively improve the unmixing performance. SSCU-Net is a two-stream deep network with an alternating architecture, where the two autoencoder networks are efficiently trained in a collaborative way to estimate endmembers and abundances. Meanwhile, we propose a new spatial autoencoder network by introducing a superpixel segmentation method based on abundance information, which greatly facilitates the use of spatial information and improves the accuracy of the unmixing network. Moreover, extensive ablation studies are carried out to investigate the performance gain of SSCU-Net. Experimental results on both synthetic and real hyperspectral data sets illustrate the effectiveness and competitiveness of the proposed SSCU-Net compared with several state-of-the-art hyperspectral unmixing methods.

Change Detection from Synthetic Aperture Radar Images via Graph-Based Knowledge Supplement Network

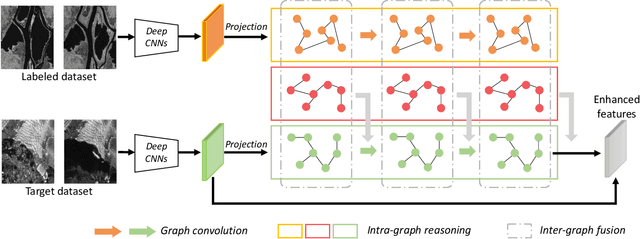

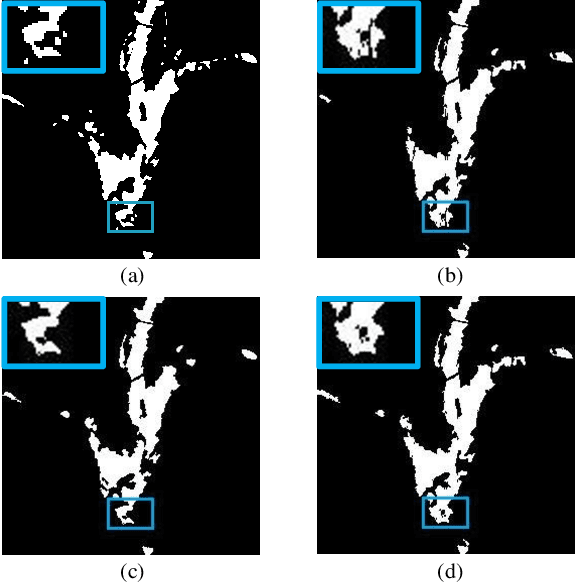

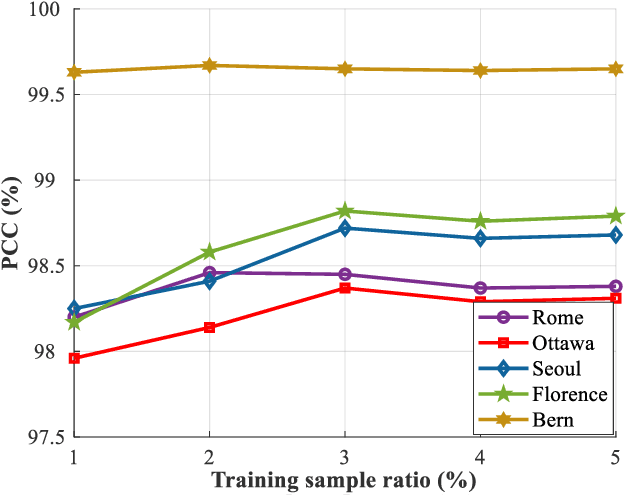

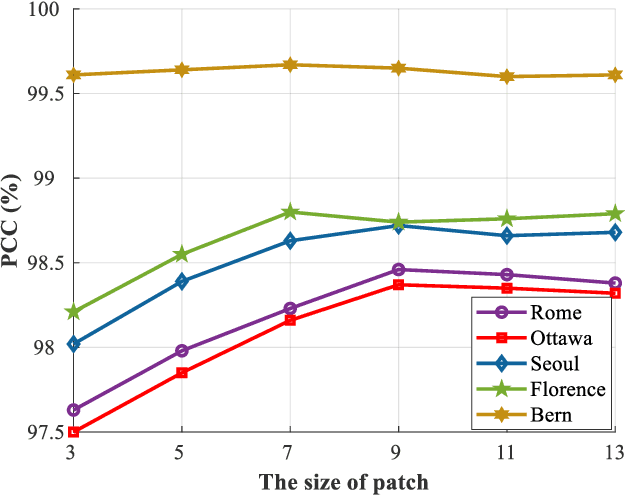

Feb 09, 2022

Abstract: Synthetic aperture radar (SAR) image change detection is a vital yet challenging task in the field of remote sensing image analysis. Most previous works adopt a self-supervised method which uses pseudo-labeled samples to guide subsequent training and testing. However, deep networks commonly require many high-quality samples for parameter optimization. The noise in pseudo-labels inevitably affects the final change detection performance. To solve the problem, we propose a Graph-based Knowledge Supplement Network (GKSNet). To be more specific, we extract discriminative information from the existing labeled dataset as additional knowledge, to suppress the adverse effects of noisy samples to some extent. Afterwards, we design a graph transfer module to distill contextual information attentively from the labeled dataset to the target dataset, which bridges feature correlation between datasets. To validate the proposed method, we conducted extensive experiments on four SAR datasets, which demonstrated the superiority of the proposed GKSNet as compared to several state-of-the-art baselines. Our codes are available at https://github.com/summitgao/SAR_CD_GKSNet.

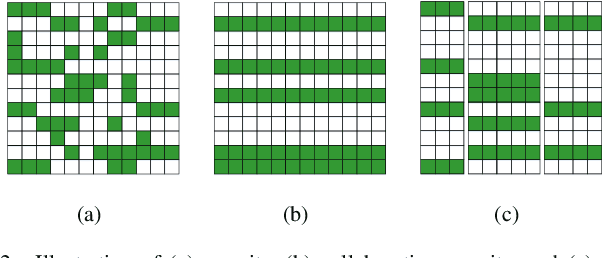

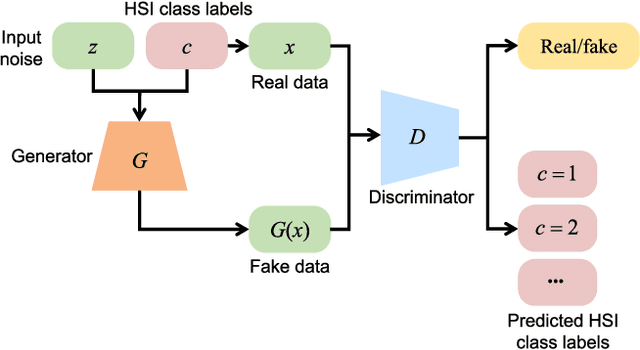

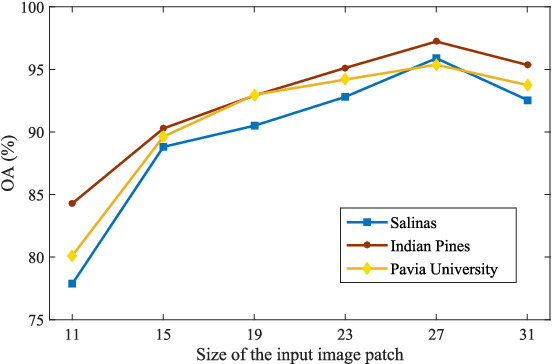

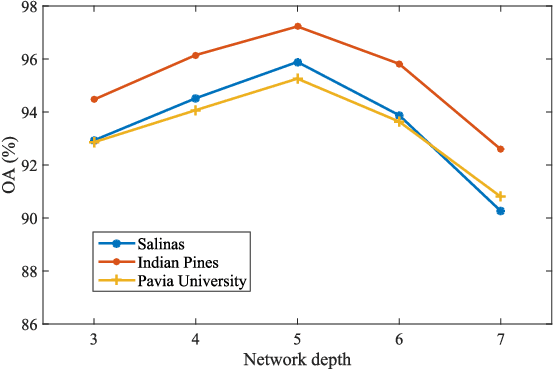

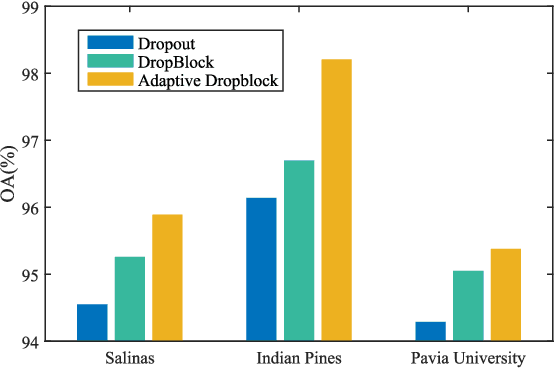

Adaptive DropBlock Enhanced Generative Adversarial Networks for Hyperspectral Image Classification

Jan 22, 2022

Abstract: In recent years, hyperspectral image (HSI) classification based on generative adversarial networks (GANs) has achieved great progress. GAN-based classification methods can mitigate the limited training sample dilemma to some extent. However, several studies have pointed out that existing GAN-based HSI classification methods are heavily affected by the imbalanced training data problem. The discriminator in a GAN always contradicts itself and tries to associate fake labels with the minority-class samples, and thus impairs the classification performance. Another critical issue is mode collapse in GAN-based methods. The generator is only capable of producing samples within a narrow scope of the data space, which severely hinders the advancement of GAN-based HSI classification methods. In this paper, we propose Adaptive DropBlock-enhanced Generative Adversarial Networks (ADGAN) for HSI classification. First, to solve the imbalanced training data problem, we adjust the discriminator to be a single classifier, so that it does not contradict itself. Second, an adaptive DropBlock (AdapDrop) is proposed as a regularization method employed in the generator and discriminator to alleviate the mode collapse issue. AdapDrop generates drop masks with adaptive shapes instead of a fixed-size region, which alleviates the limitations of DropBlock in dealing with ground objects of various shapes. Experimental results on three HSI datasets demonstrate that the proposed ADGAN achieves superior performance over state-of-the-art GAN-based methods. Our codes are available at https://github.com/summitgao/HC_ADGAN
