Paolo Gamba

Hyperspectral Imaging

Aug 11, 2025

Abstract:Hyperspectral imaging (HSI) is an advanced sensing modality that simultaneously captures spatial and spectral information, enabling non-invasive, label-free analysis of material, chemical, and biological properties. This Primer presents a comprehensive overview of HSI, from the underlying physical principles and sensor architectures to key steps in data acquisition, calibration, and correction. We summarize common data structures and highlight classical and modern analysis methods, including dimensionality reduction, classification, spectral unmixing, and AI-driven techniques such as deep learning. Representative applications across Earth observation, precision agriculture, biomedicine, industrial inspection, cultural heritage, and security are also discussed, emphasizing HSI's ability to uncover sub-visual features for advanced monitoring, diagnostics, and decision-making. Persistent challenges, such as hardware trade-offs, acquisition variability, and the complexity of high-dimensional data, are examined alongside emerging solutions, including computational imaging, physics-informed modeling, cross-modal fusion, and self-supervised learning. Best practices for dataset sharing, reproducibility, and metadata documentation are further highlighted to support transparency and reuse. Looking ahead, we explore future directions toward scalable, real-time, and embedded HSI systems, driven by sensor miniaturization, self-supervised learning, and foundation models. As HSI evolves into a general-purpose, cross-disciplinary platform, it holds promise for transformative applications in science, technology, and society.

A Deep Learning Architecture for Land Cover Mapping Using Spatio-Temporal Sentinel-1 Features

Mar 10, 2025

Abstract:Land Cover (LC) mapping using satellite imagery is critical for environmental monitoring and management. Deep Learning (DL), particularly Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), have revolutionized this field by enhancing the accuracy of classification tasks. In this work, a novel approach combining a transformer-based Swin-Unet architecture with seasonal synthesized spatio-temporal images has been employed to classify LC types using spatio-temporal features extracted from Sentinel-1 (S1) Synthetic Aperture Radar (SAR) data, organized into seasonal clusters. The study focuses on three distinct regions - Amazonia, Africa, and Siberia - and evaluates the model performance across diverse ecoregions within these areas. By utilizing seasonal feature sequences instead of dense temporal sequences, notable performance improvements have been achieved, especially in regions with temporal data gaps like Siberia, where S1 data distribution is uneven and non-uniform. The results demonstrate the effectiveness and the generalization capabilities of the proposed methodology in achieving high overall accuracy (O.A.) values, even in regions with limited training data.

Benchmarking of a new data splitting method on volcanic eruption data

Oct 08, 2024

Abstract:In this paper, a novel method for data splitting is presented: an iterative procedure divides the input dataset of volcanic eruption, chosen as the proposed use case, into two parts using a dissimilarity index calculated on the cumulative histograms of these two parts. The Cumulative Histogram Dissimilarity (CHD) index is introduced as part of the design. Based on the obtained results the proposed model in this case, compared to both Random splitting and K-means implemented over different configurations, achieves the best performance, with a slightly higher number of epochs. However, this demonstrates that the model can learn more deeply from the input dataset, which is attributable to the quality of the splitting. In fact, each model was trained with early stopping, suitable in case of overfitting, and the higher number of epochs in the proposed method demonstrates that early stopping did not detect overfitting, and consequently, the learning was optimal.

SEN12-WATER: A New Dataset for Hydrological Applications and its Benchmarking

Sep 25, 2024

Abstract:Climate change and increasing droughts pose significant challenges to water resource management around the world. These problems lead to severe water shortages that threaten ecosystems, agriculture, and human communities. To advance the fight against these challenges, we present a new dataset, SEN12-WATER, along with a benchmark using a novel end-to-end Deep Learning (DL) framework for proactive drought-related analysis. The dataset, identified as a spatiotemporal datacube, integrates SAR polarization, elevation, slope, and multispectral optical bands. Our DL framework enables the analysis and estimation of water losses over time in reservoirs of interest, revealing significant insights into water dynamics for drought analysis by examining temporal changes in physical quantities such as water volume. Our methodology takes advantage of the multitemporal and multimodal characteristics of the proposed dataset, enabling robust generalization and advancing understanding of drought, contributing to climate change resilience and sustainable water resource management. The proposed framework involves, among the several components, speckle noise removal from SAR data, a water body segmentation through a U-Net architecture, the time series analysis, and the predictive capability of a Time-Distributed-Convolutional Neural Network (TD-CNN). Results are validated through ground truth data acquired on-ground via dedicated sensors and (tailored) metrics, such as Precision, Recall, Intersection over Union, Mean Squared Error, Structural Similarity Index Measure and Peak Signal-to-Noise Ratio.

From Graphs to Qubits: A Critical Review of Quantum Graph Neural Networks

Aug 12, 2024

Abstract:Quantum Graph Neural Networks (QGNNs) represent a novel fusion of quantum computing and Graph Neural Networks (GNNs), aimed at overcoming the computational and scalability challenges inherent in classical GNNs that are powerful tools for analyzing data with complex relational structures but suffer from limitations such as high computational complexity and over-smoothing in large-scale applications. Quantum computing, leveraging principles like superposition and entanglement, offers a pathway to enhanced computational capabilities. This paper critically reviews the state-of-the-art in QGNNs, exploring various architectures. We discuss their applications across diverse fields such as high-energy physics, molecular chemistry, finance and earth sciences, highlighting the potential for quantum advantage. Additionally, we address the significant challenges faced by QGNNs, including noise, decoherence, and scalability issues, proposing potential strategies to mitigate these problems. This comprehensive review aims to provide a foundational understanding of QGNNs, fostering further research and development in this promising interdisciplinary field.

Quanv4EO: Empowering Earth Observation by means of Quanvolutional Neural Networks

Jul 24, 2024

Abstract:A significant amount of remotely sensed data is generated daily by many Earth observation (EO) spaceborne and airborne sensors over different countries of our planet. Different applications use those data, such as natural hazard monitoring, global climate change, urban planning, and more. Many challenges are brought by the use of these big data in the context of remote sensing applications. In recent years, employment of machine learning (ML) and deep learning (DL)-based algorithms have allowed a more efficient use of these data but the issues in managing, processing, and efficiently exploiting them have even increased since classical computers have reached their limits. This article highlights a significant shift towards leveraging quantum computing techniques in processing large volumes of remote sensing data. The proposed Quanv4EO model introduces a quanvolution method for preprocessing multi-dimensional EO data. First its effectiveness is demonstrated through image classification tasks on MNIST and Fashion MNIST datasets, and later on, its capabilities on remote sensing image classification and filtering are shown. Key findings suggest that the proposed model not only maintains high precision in image classification but also shows improvements of around 5\% in EO use cases compared to classical approaches. Moreover, the proposed framework stands out for its reduced parameter size and the absence of training quantum kernels, enabling better scalability for processing massive datasets. These advancements underscore the promising potential of quantum computing in addressing the limitations of classical algorithms in remote sensing applications, offering a more efficient and effective alternative for image data classification and analysis.

QSpeckleFilter: a Quantum Machine Learning approach for SAR speckle filtering

Feb 02, 2024

Abstract:The use of Synthetic Aperture Radar (SAR) has greatly advanced our capacity for comprehensive Earth monitoring, providing detailed insights into terrestrial surface use and cover regardless of weather conditions, and at any time of day or night. However, SAR imagery quality is often compromised by speckle, a granular disturbance that poses challenges in producing accurate results without suitable data processing. In this context, the present paper explores the cutting-edge application of Quantum Machine Learning (QML) in speckle filtering, harnessing quantum algorithms to address computational complexities. We introduce here QSpeckleFilter, a novel QML model for SAR speckle filtering. The proposed method compared to a previous work from the same authors showcases its superior performance in terms of Peak Signal-to-Noise Ratio (PSNR) and Structural Similarity Index Measure (SSIM) on a testing dataset, and it opens new avenues for Earth Observation (EO) applications.

A Hybrid MLP-Quantum approach in Graph Convolutional Neural Networks for Oceanic Nino Index prediction

Jan 29, 2024Abstract:This paper explores an innovative fusion of Quantum Computing (QC) and Artificial Intelligence (AI) through the development of a Hybrid Quantum Graph Convolutional Neural Network (HQGCNN), combining a Graph Convolutional Neural Network (GCNN) with a Quantum Multilayer Perceptron (MLP). The study highlights the potentialities of GCNNs in handling global-scale dependencies and proposes the HQGCNN for predicting complex phenomena such as the Oceanic Nino Index (ONI). Preliminary results suggest the model potential to surpass state-of-the-art (SOTA). The code will be made available with the paper publication.

Spectral Superresolution of Multispectral Imagery with Joint Sparse and Low-Rank Learning

Jul 28, 2020

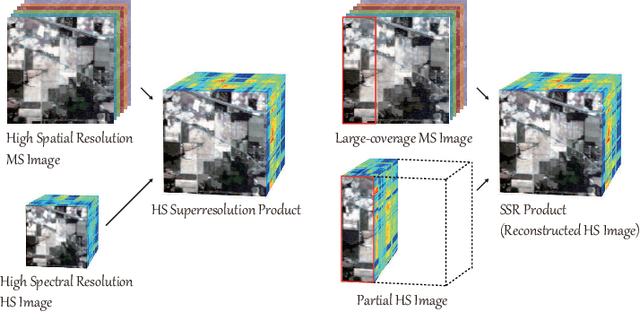

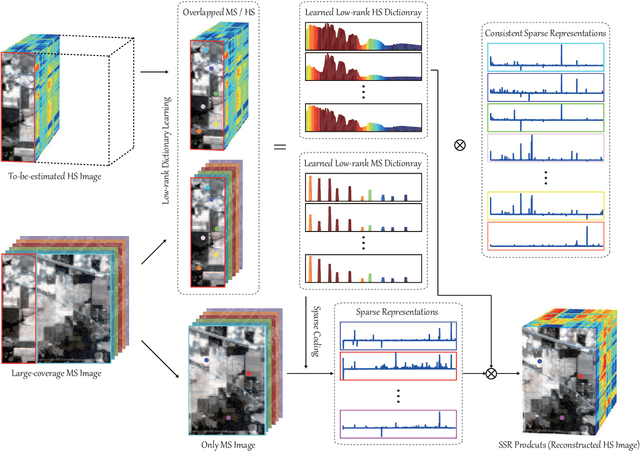

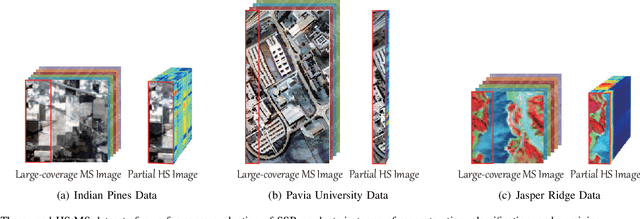

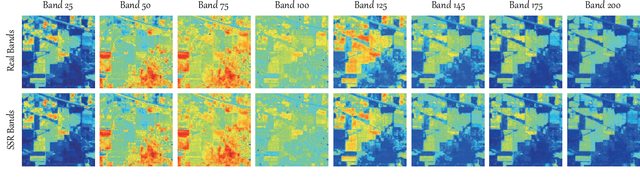

Abstract:Extensive attention has been widely paid to enhance the spatial resolution of hyperspectral (HS) images with the aid of multispectral (MS) images in remote sensing. However, the ability in the fusion of HS and MS images remains to be improved, particularly in large-scale scenes, due to the limited acquisition of HS images. Alternatively, we super-resolve MS images in the spectral domain by the means of partially overlapped HS images, yielding a novel and promising topic: spectral superresolution (SSR) of MS imagery. This is challenging and less investigated task due to its high ill-posedness in inverse imaging. To this end, we develop a simple but effective method, called joint sparse and low-rank learning (J-SLoL), to spectrally enhance MS images by jointly learning low-rank HS-MS dictionary pairs from overlapped regions. J-SLoL infers and recovers the unknown hyperspectral signals over a larger coverage by sparse coding on the learned dictionary pair. Furthermore, we validate the SSR performance on three HS-MS datasets (two for classification and one for unmixing) in terms of reconstruction, classification, and unmixing by comparing with several existing state-of-the-art baselines, showing the effectiveness and superiority of the proposed J-SLoL algorithm. Furthermore, the codes and datasets will be available at: https://github.com/danfenghong/IEEE\_TGRS\_J-SLoL, contributing to the RS community.

Multi-feature combined cloud and cloud shadow detection in GaoFen-1 wide field of view imagery

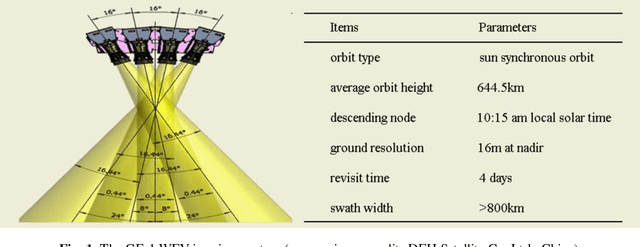

Feb 05, 2017

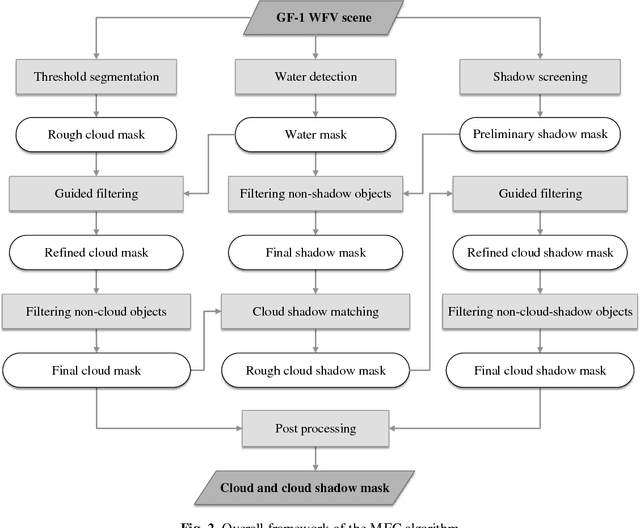

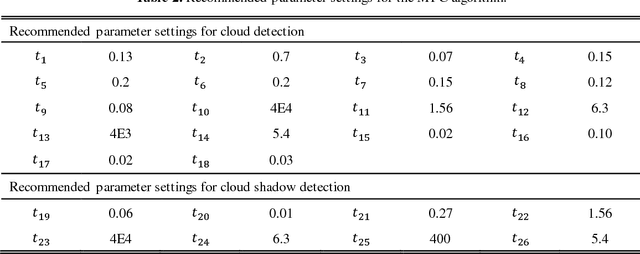

Abstract:The wide field of view (WFV) imaging system onboard the Chinese GaoFen-1 (GF-1) optical satellite has a 16-m resolution and four-day revisit cycle for large-scale Earth observation. The advantages of the high temporal-spatial resolution and the wide field of view make the GF-1 WFV imagery very popular. However, cloud cover is an inevitable problem in GF-1 WFV imagery, which influences its precise application. Accurate cloud and cloud shadow detection in GF-1 WFV imagery is quite difficult due to the fact that there are only three visible bands and one near-infrared band. In this paper, an automatic multi-feature combined (MFC) method is proposed for cloud and cloud shadow detection in GF-1 WFV imagery. The MFC algorithm first implements threshold segmentation based on the spectral features and mask refinement based on guided filtering to generate a preliminary cloud mask. The geometric features are then used in combination with the texture features to improve the cloud detection results and produce the final cloud mask. Finally, the cloud shadow mask can be acquired by means of the cloud and shadow matching and follow-up correction process. The method was validated using 108 globally distributed scenes. The results indicate that MFC performs well under most conditions, and the average overall accuracy of MFC cloud detection is as high as 96.8%. In the contrastive analysis with the official provided cloud fractions, MFC shows a significant improvement in cloud fraction estimation, and achieves a high accuracy for the cloud and cloud shadow detection in the GF-1 WFV imagery with fewer spectral bands. The proposed method could be used as a preprocessing step in the future to monitor land-cover change, and it could also be easily extended to other optical satellite imagery which has a similar spectral setting.

* This manuscript has been accepted for publication in Remote Sensing of Environment, vol. 191, pp.342-358, 2017. (http://www.sciencedirect.com/science/article/pii/S003442571730038X)

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge