Lennart Svensson

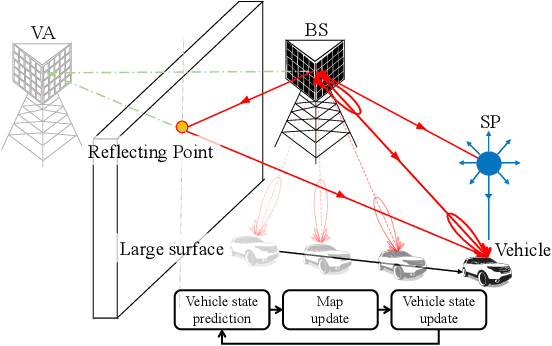

Doppler Exploitation in Bistatic mmWave Radio SLAM

Aug 22, 2022

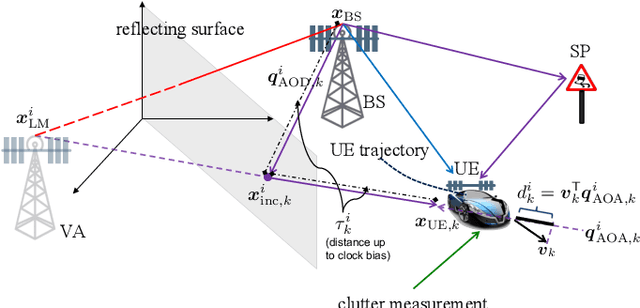

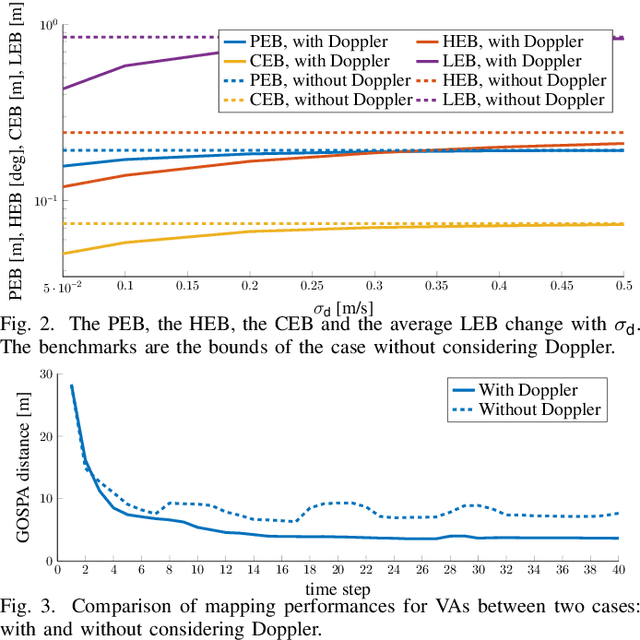

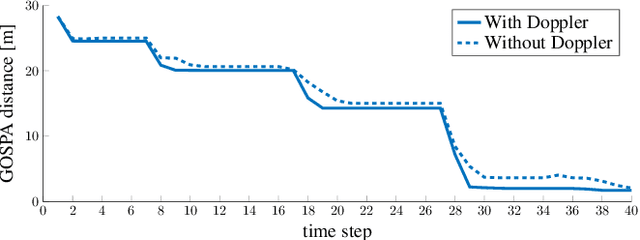

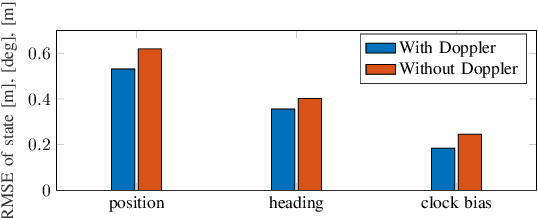

Abstract: Networks in 5G and beyond utilize millimeter wave (mmWave) radio signals, large bandwidths, and large antenna arrays, which create opportunities for jointly localizing the user equipment and mapping the propagation environment, termed simultaneous localization and mapping (SLAM). Existing approaches mainly rely on delays and angles and ignore the Doppler, although it contains geometric information. In this paper, we study the benefits of exploiting Doppler in SLAM by deriving the posterior Cramér-Rao bounds (PCRBs) and formulating the extended Kalman-Poisson multi-Bernoulli sequential filtering solution with Doppler as one of the involved measurements. Both theoretical PCRB analysis and simulation results demonstrate the efficacy of utilizing Doppler.

Trajectory PMB Filters for Extended Object Tracking Using Belief Propagation

Jul 20, 2022

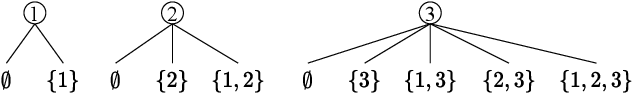

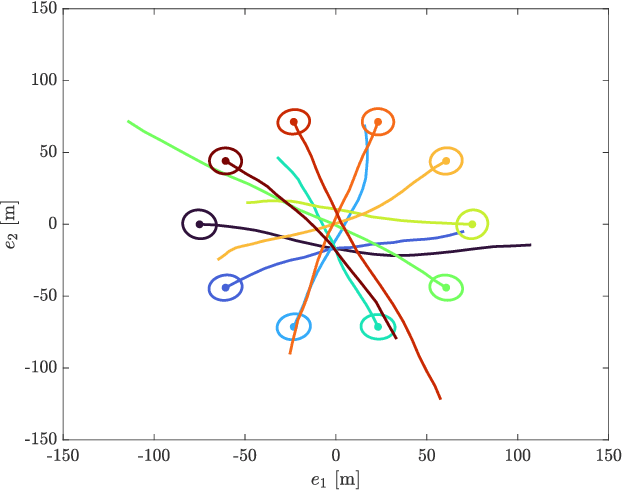

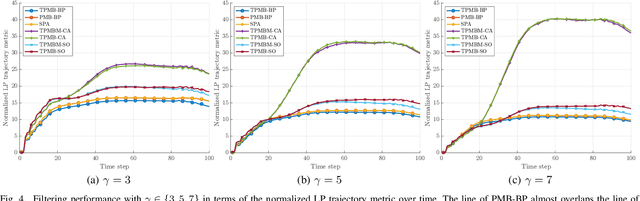

Abstract: In this paper, we propose a Poisson multi-Bernoulli (PMB) filter for extended object tracking (EOT), which directly estimates the set of object trajectories, using belief propagation (BP). The proposed filter propagates a PMB density on the posterior of sets of trajectories through the filtering recursions over time, where the PMB mixture (PMBM) posterior after the update step is approximated as a PMB. The efficient PMB approximation relies on several important theoretical contributions. First, we present a PMBM conjugate prior on the posterior of sets of trajectories for a generalized measurement model, in which each object generates an independent set of measurements. The PMBM density is a conjugate prior in the sense that both the prediction and the update steps preserve the PMBM form of the density. Second, we present a factor graph representation of the joint posterior of the PMBM set of trajectories and association variables for the Poisson spatial measurement model. Importantly, leveraging the PMBM conjugacy and the factor graph formulation enables an elegant treatment of undetected objects via a Poisson point process and efficient inference on sets of trajectories using BP, where the approximate marginal densities in the PMB approximation can be obtained without enumeration of different data association hypotheses. To achieve this, we present a particle-based implementation of the proposed filter, where smoothed trajectory estimates, if desired, can be obtained via single-object particle smoothing methods, and its performance for EOT with ellipsoidal shapes is evaluated in a simulation study.

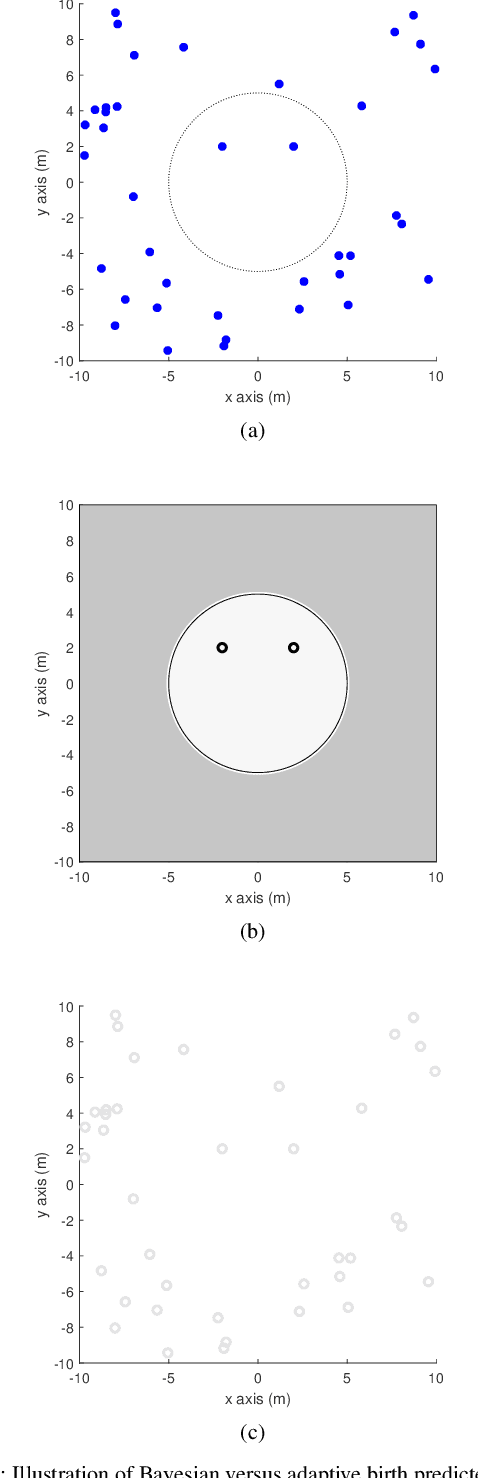

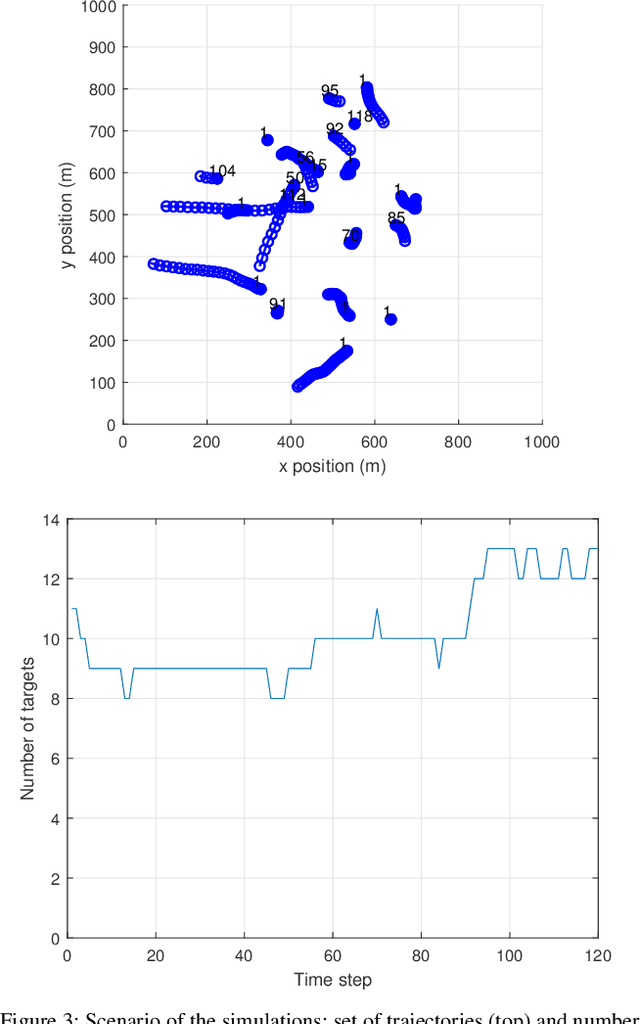

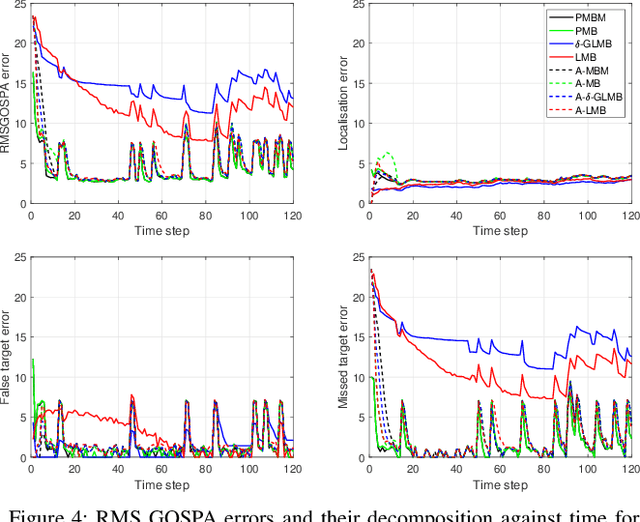

A comparison between PMBM Bayesian track initiation and labelled RFS adaptive birth

Jul 13, 2022

Abstract: This paper provides a comparative analysis between the adaptive birth model used in the labelled random finite set literature and the track initiation in the Poisson multi-Bernoulli mixture (PMBM) filter, with point-target models. The PMBM track initiation is obtained via Bayes' rule applied to the predicted PMBM density, and creates one Bernoulli component for each received measurement, representing that this measurement may be clutter or a detection from a new target. Adaptive birth mimics this procedure by creating a Bernoulli component for each measurement using a different rule to determine the probability of existence and a user-defined single-target density. This paper first provides an analysis of the differences that arise in track initiation based on isolated measurements. Then, it shows that adaptive birth underestimates the number of objects present in the surveillance area under common modelling assumptions. Finally, we provide numerical simulations to further illustrate the differences.

* MATLAB implementations of PMBM filters can be found at https://github.com/Agarciafernandez/MTT and https://github.com/yuhsuansia
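
To make the "clutter or new target" interpretation concrete, the following is a minimal sketch of how the existence probability of a measurement-initiated Bernoulli component is commonly computed in point-target PMBM filters: the target-originated intensity at the measurement divided by the total (clutter plus target) intensity. The scalar state, the direct measurement model, the single-Gaussian intensity of undetected targets, and all function and parameter names are assumptions made for illustration; they are not taken from the paper or from the linked repositories, and the adaptive birth rule is not reproduced here.

```python
import math


def gaussian_pdf(z, mean, var):
    """Density of a scalar Gaussian N(z; mean, var)."""
    return math.exp(-0.5 * (z - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def new_bernoulli_existence(z, clutter_intensity, ppp_weight, ppp_mean,
                            ppp_var, meas_var, p_detect):
    """Existence probability of the Bernoulli component created by measurement z.

    Assumptions (illustrative only): scalar state observed directly with
    additive Gaussian noise, constant detection probability, and a
    single-Gaussian Poisson intensity for undetected targets.
    """
    # Intensity of first detections at z coming from undetected targets.
    e = p_detect * ppp_weight * gaussian_pdf(z, ppp_mean, ppp_var + meas_var)
    # Bayes' rule: share of the total intensity at z explained by a new target.
    return e / (clutter_intensity + e)


# A measurement close to where undetected targets are expected, and one far away.
print(new_bernoulli_existence(0.0, 0.1, 1.0, 0.0, 1.0, 1.0, 0.9))
print(new_bernoulli_existence(8.0, 0.1, 1.0, 0.0, 1.0, 1.0, 0.9))
```

Under these assumptions, a measurement in a region where undetected targets are likely yields a high existence probability, while an isolated measurement far from that region is attributed almost entirely to clutter.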

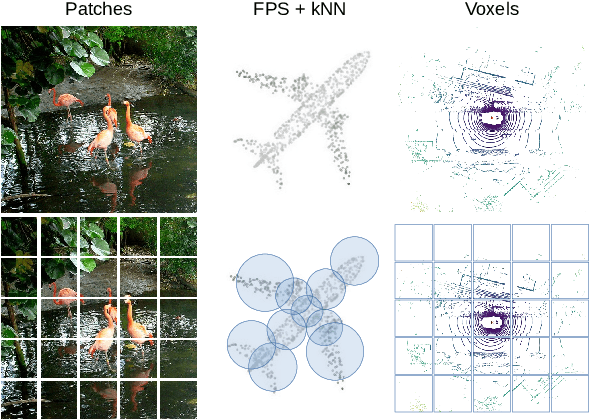

Masked Autoencoders for Self-Supervised Learning on Automotive Point Clouds

Jul 01, 2022

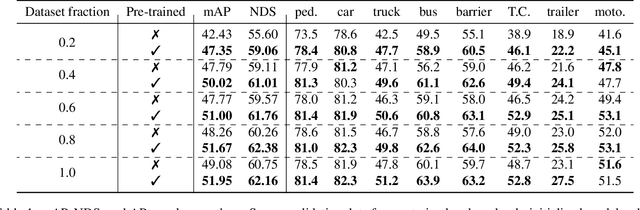

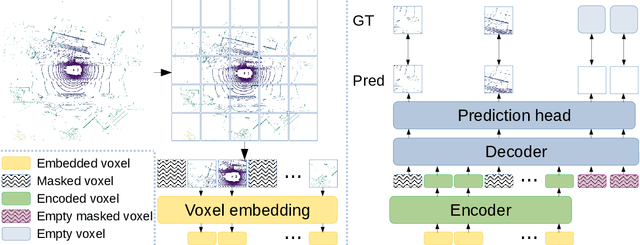

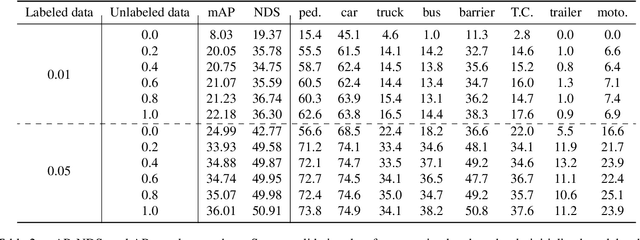

Abstract: Masked autoencoding has become a successful pre-training paradigm for Transformer models for text, images, and recently, point clouds. Raw automotive datasets are a suitable candidate for self-supervised pre-training as they are generally cheap to collect compared to annotations for tasks like 3D object detection (OD). However, development of masked autoencoders for point clouds has focused solely on synthetic and indoor data. Consequently, existing methods have tailored their representations and models toward point clouds that are small and dense and have homogeneous point density. In this work, we study masked autoencoding for point clouds in an automotive setting, which are sparse and for which the point density can vary drastically among objects in the same scene. To this end, we propose Voxel-MAE, a simple masked autoencoding pre-training scheme designed for voxel representations. We pre-train the backbone of a Transformer-based 3D object detector to reconstruct masked voxels and to distinguish between empty and non-empty voxels. Our method improves the 3D OD performance by 1.75 mAP points and 1.05 NDS on the challenging nuScenes dataset. Compared to existing self-supervised methods for automotive data, Voxel-MAE displays up to $2\times$ performance increase. Further, we show that by pre-training with Voxel-MAE, we require only 40% of the annotated data to outperform a randomly initialized equivalent. Code will be released.
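
As a rough illustration of the masking step on a voxel representation, the sketch below voxelizes a synthetic point cloud and hides a random subset of the non-empty voxels. The voxel size, the mask ratio, the uniform random masking strategy, and the helper names `voxelize` and `random_voxel_mask` are all assumptions chosen for illustration; the abstract does not specify them, and the reconstruction and empty/non-empty classification objectives are not implemented here.

```python
import numpy as np


def voxelize(points, voxel_size):
    """Map 3D points to integer voxel indices and return the unique non-empty voxels."""
    indices = np.floor(points / voxel_size).astype(np.int64)
    return np.unique(indices, axis=0)


def random_voxel_mask(num_voxels, mask_ratio, rng):
    """Boolean mask marking which non-empty voxels are hidden from the encoder."""
    num_masked = int(round(mask_ratio * num_voxels))
    mask = np.zeros(num_voxels, dtype=bool)
    mask[rng.choice(num_voxels, size=num_masked, replace=False)] = True
    return mask


rng = np.random.default_rng(0)
points = rng.uniform(-10.0, 10.0, size=(500, 3))  # synthetic stand-in for a lidar sweep
voxels = voxelize(points, voxel_size=2.0)
mask = random_voxel_mask(len(voxels), mask_ratio=0.7, rng=rng)

visible, hidden = voxels[~mask], voxels[mask]
print(len(voxels), len(visible), len(hidden))
```

In a pre-training loop of this kind, only the visible voxels would be passed to the encoder, and the hidden ones would serve as reconstruction targets.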

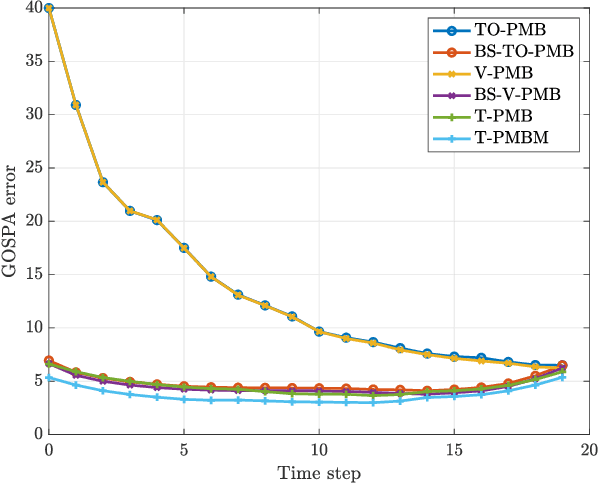

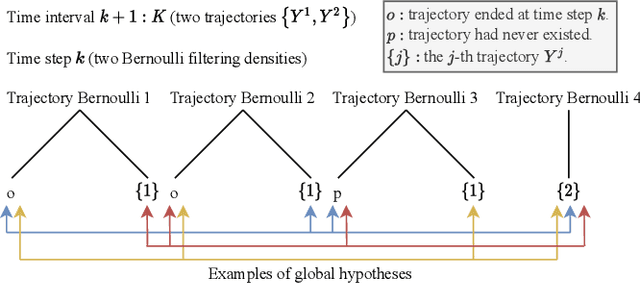

Multiple Object Trajectory Estimation Using Backward Simulation

Jun 16, 2022

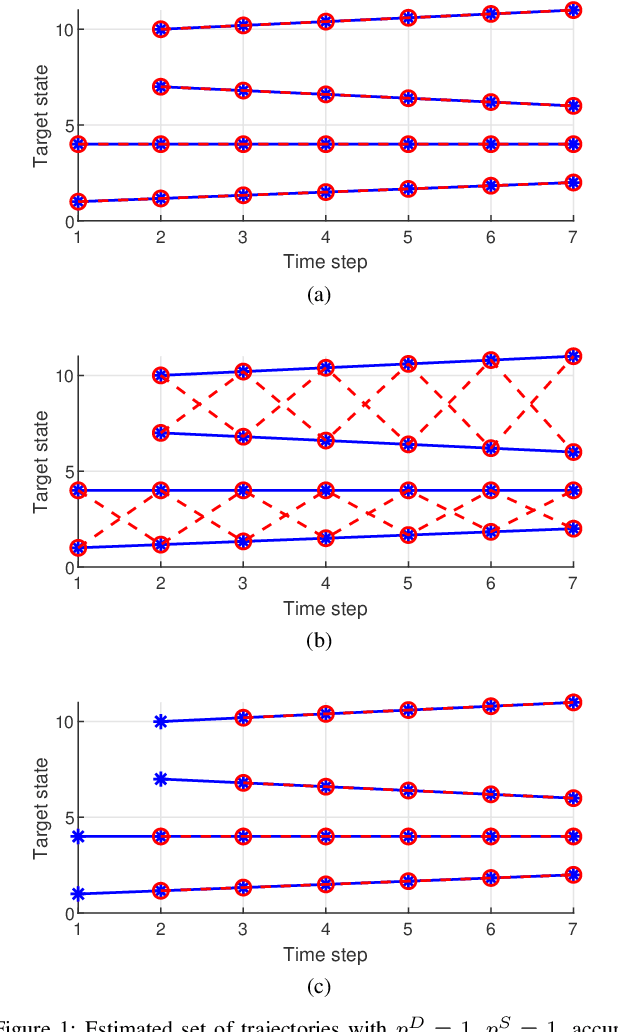

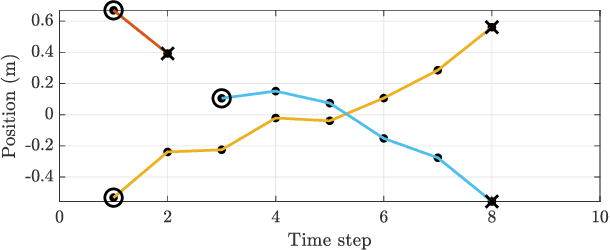

Abstract: This paper presents a general solution for computing the multi-object posterior for sets of trajectories from a sequence of multi-object (unlabelled) filtering densities and a multi-object dynamic model. Importantly, the proposed solution opens an avenue of trajectory estimation possibilities for multi-object filters that do not explicitly estimate trajectories. In this paper, we first derive a general multi-trajectory backward smoothing equation based on random finite sets of trajectories. Then we show how to sample sets of trajectories using backward simulation for Poisson multi-Bernoulli filtering densities, and develop a tractable implementation based on ranked assignment. The performance of the resulting multi-trajectory particle smoothers is evaluated in a simulation study, and the results demonstrate that they have superior performance in comparison to several state-of-the-art multi-object filters and smoothers.

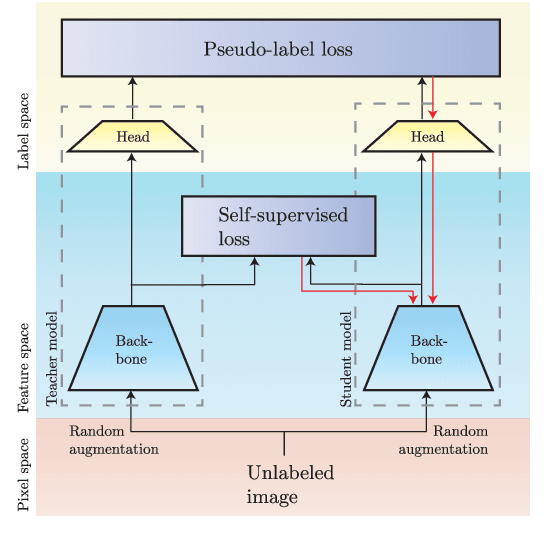

DoubleMatch: Improving Semi-Supervised Learning with Self-Supervision

May 11, 2022

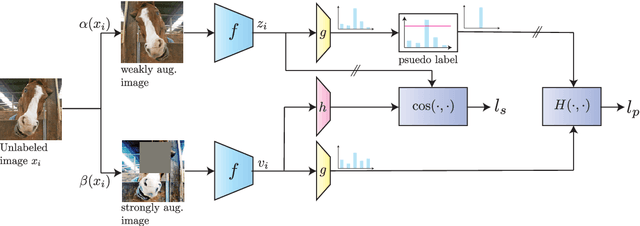

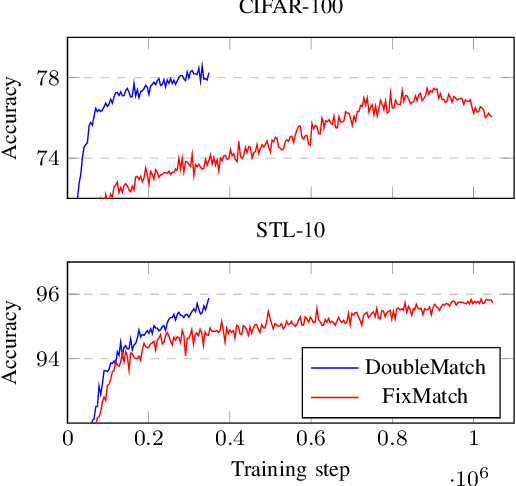

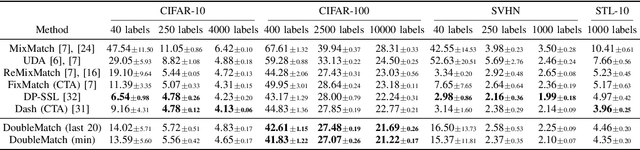

Abstract: Following the success of supervised learning, semi-supervised learning (SSL) is now becoming increasingly popular. SSL is a family of methods that, in addition to a labeled training set, also use a sizable collection of unlabeled data for fitting a model. Most of the recent successful SSL methods are based on pseudo-labeling approaches: letting confident model predictions act as training labels. While these methods have shown impressive results on many benchmark datasets, a drawback of this approach is that not all unlabeled data are used during training. We propose a new SSL algorithm, DoubleMatch, which combines the pseudo-labeling technique with a self-supervised loss, enabling the model to utilize all unlabeled data in the training process. We show that this method achieves state-of-the-art accuracies on multiple benchmark datasets while also reducing training times compared to existing SSL methods. Code is available at https://github.com/walline/doublematch.
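
The drawback mentioned above, that confidence-based pseudo-labeling leaves part of the unlabeled data unused, can be seen in a small sketch. The function name `pseudo_label_mask`, the threshold of 0.95, and the example probabilities are assumptions for illustration and are not taken from the DoubleMatch code; the self-supervised loss itself is not shown.

```python
import numpy as np


def pseudo_label_mask(probs, threshold=0.95):
    """Return hard pseudo-labels and a mask of confident predictions.

    probs: array of shape (n_samples, n_classes), each row a class distribution.
    threshold: illustrative confidence cut-off (an assumption).
    """
    confidence = probs.max(axis=1)
    labels = probs.argmax(axis=1)
    mask = confidence >= threshold
    return labels, mask


# Hypothetical model predictions on four unlabeled samples.
probs = np.array([
    [0.98, 0.01, 0.01],
    [0.40, 0.35, 0.25],
    [0.02, 0.97, 0.01],
    [0.50, 0.30, 0.20],
])
labels, mask = pseudo_label_mask(probs)
print(labels[mask], mask.mean())
```

Only the samples selected by the mask would contribute to a pseudo-label loss; the remaining samples are ignored unless an additional loss, such as a self-supervised one, is applied to every unlabeled sample.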

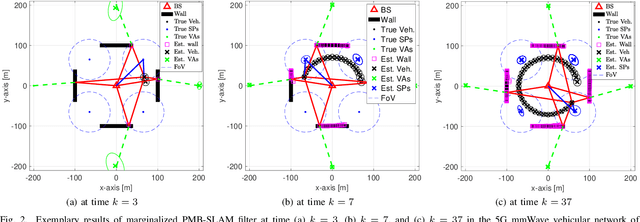

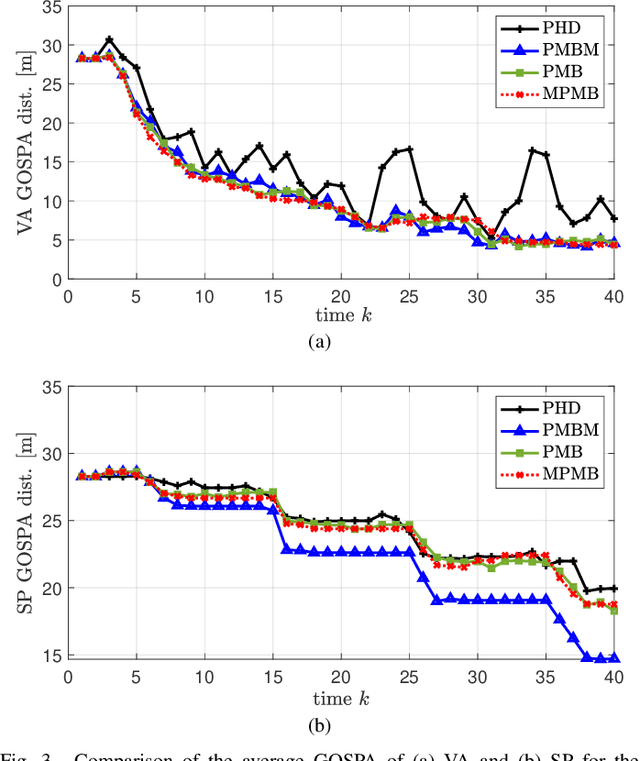

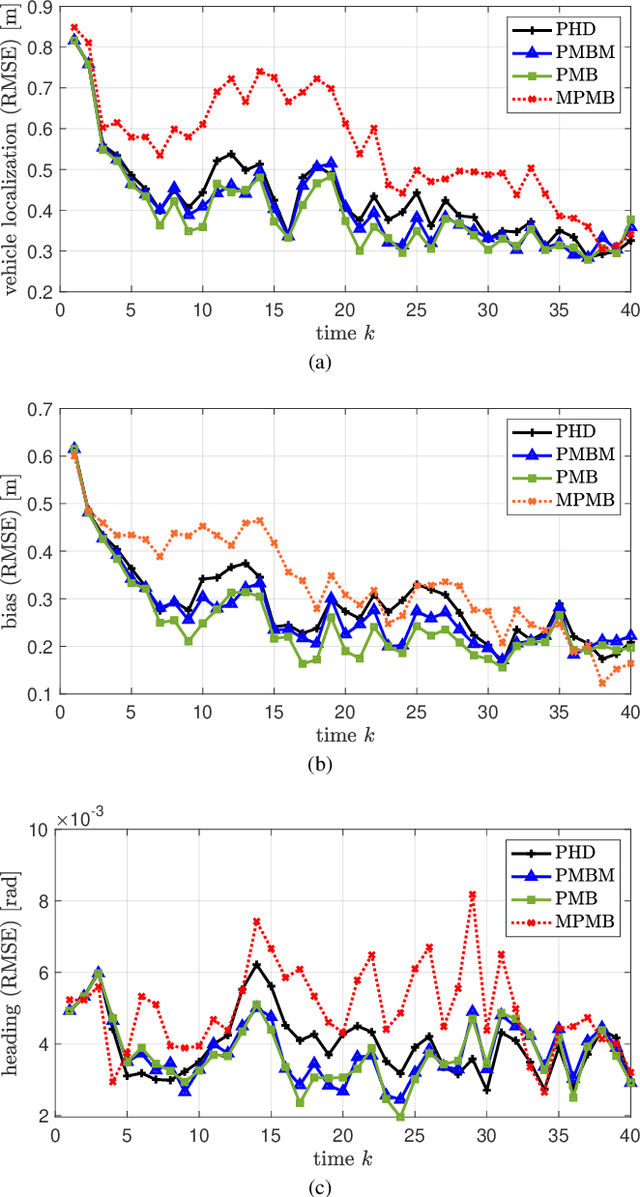

PMBM-based SLAM Filters in 5G mmWave Vehicular Networks

May 05, 2022

Abstract: Radio-based vehicular simultaneous localization and mapping (SLAM) aims to localize vehicles while mapping the landmarks in the environment. We propose a sequence of three Poisson multi-Bernoulli mixture (PMBM) based SLAM filters, which handle the entire SLAM problem in a theoretically optimal manner. The complexity of the three proposed SLAM filters is progressively reduced while sustaining high accuracy by deriving SLAM density approximations with the marginalization of nuisance parameters (either vehicle state or data association). Firstly, the PMBM SLAM filter serves as the foundation, for which we provide the first complete description based on a Rao-Blackwellized particle filter. Secondly, the Poisson multi-Bernoulli (PMB) SLAM filter is based on the standard reduction from PMBM to PMB, but involves a novel interpretation based on auxiliary variables and a relation to Bethe free energy. Finally, using the same auxiliary variable argument, we derive a marginalized PMB SLAM filter, which avoids particles and is instead implemented with a low-complexity cubature Kalman filter. We evaluate the three proposed SLAM filters in comparison with the probability hypothesis density (PHD) SLAM filter in 5G mmWave vehicular networks and show the computation-performance trade-off between them.

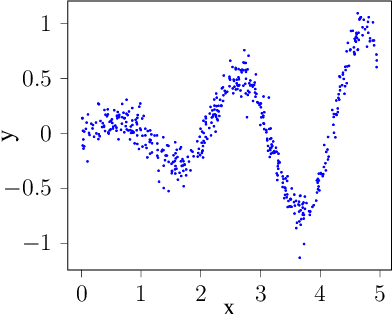

Active Learning with Weak Labels for Gaussian Processes

Apr 18, 2022

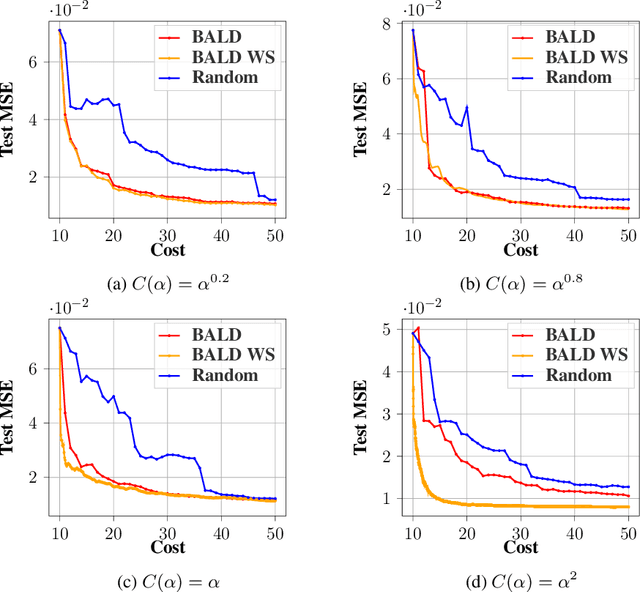

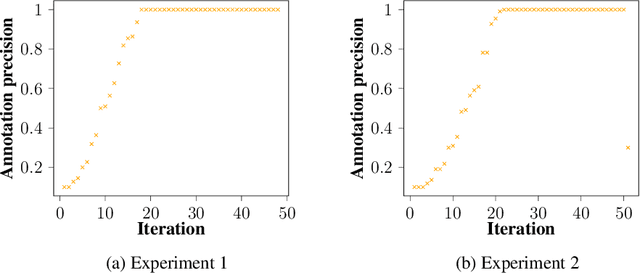

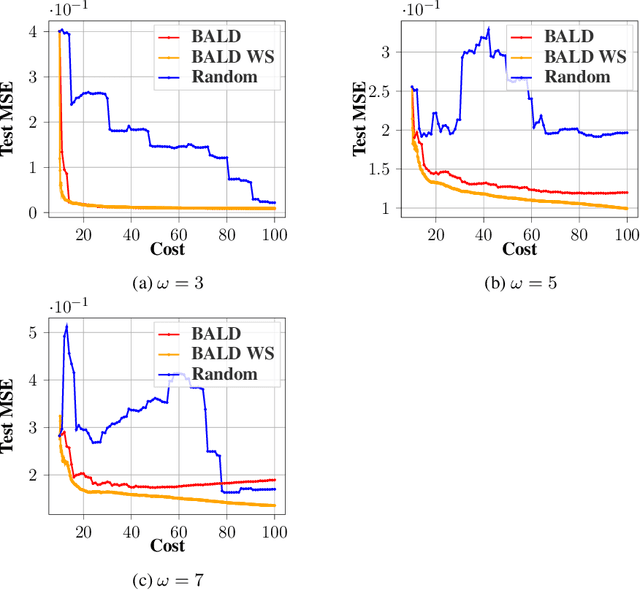

Abstract: Annotating data for supervised learning can be costly. When the annotation budget is limited, active learning can be used to select and annotate those observations that are likely to give the most gain in model performance. We propose an active learning algorithm that, in addition to selecting which observation to annotate, selects the precision of the annotation that is acquired. Assuming that annotations with low precision are cheaper to obtain, this allows the model to explore a larger part of the input space, with the same annotation costs. We build our acquisition function on the previously proposed BALD objective for Gaussian Processes, and empirically demonstrate the gains of being able to adjust the annotation precision in the active learning loop.

Object Detection as Probabilistic Set Prediction

Mar 16, 2022

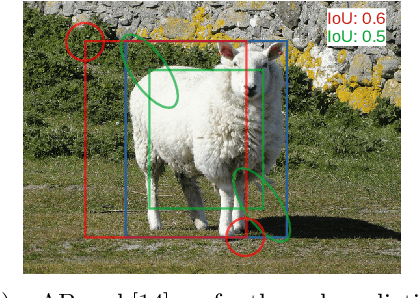

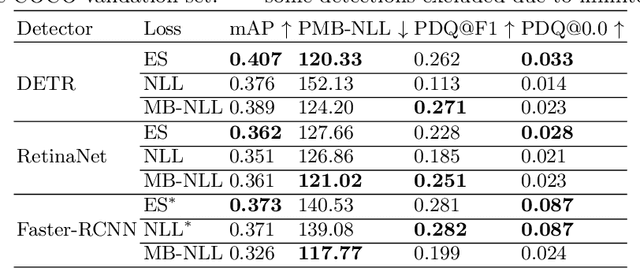

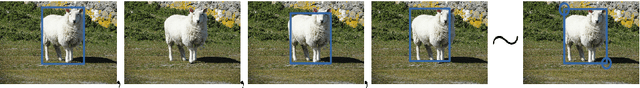

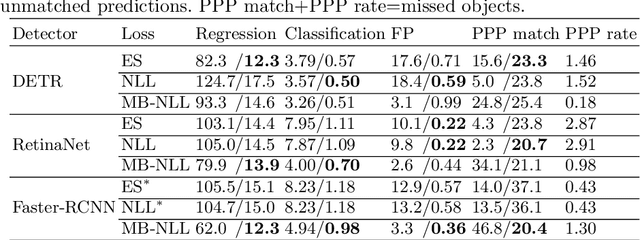

Abstract: Accurate uncertainty estimates are essential for deploying deep object detectors in safety-critical systems. The development and evaluation of probabilistic object detectors have been hindered by shortcomings in existing performance measures, which tend to involve arbitrary thresholds or limit the detector's choice of distributions. In this work, we propose to view object detection as a set prediction task where detectors predict the distribution over the set of objects. Using the negative log-likelihood for random finite sets, we present a proper scoring rule for evaluating and training probabilistic object detectors. The proposed method can be applied to existing probabilistic detectors, is free from thresholds, and enables fair comparison between architectures. Three different types of detectors are evaluated on the COCO dataset. Our results indicate that the training of existing detectors is optimized toward non-probabilistic metrics. We hope to encourage the development of new object detectors that can accurately estimate their own uncertainty. Code will be released.

Can Deep Learning be Applied to Model-Based Multi-Object Tracking?

Feb 16, 2022

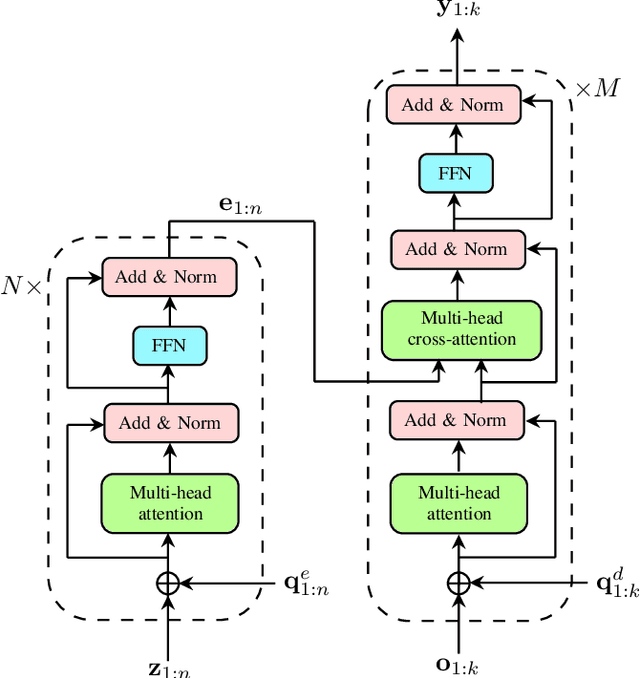

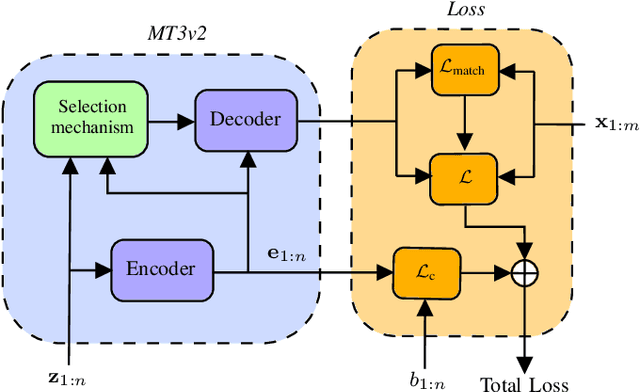

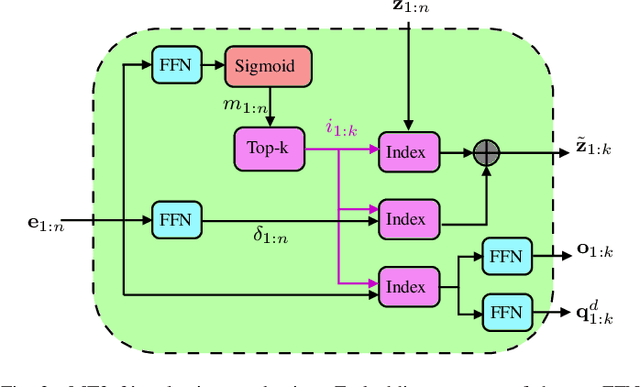

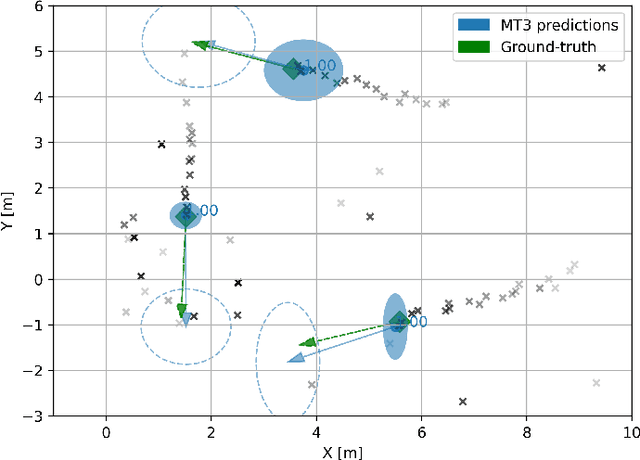

Abstract: Multi-object tracking (MOT) is the problem of tracking the state of an unknown and time-varying number of objects using noisy measurements, with important applications such as autonomous driving, tracking animal behavior, defense systems, and others. In recent years, deep learning (DL) has been increasingly used in MOT for improving tracking performance, but mostly in settings where the measurements are high-dimensional and there are no available models of the measurement likelihood and the object dynamics. The model-based setting instead has not attracted as much attention, and it is still unclear if DL methods can outperform traditional model-based Bayesian methods, which are the state of the art (SOTA) in this context. In this paper, we propose a Transformer-based DL tracker and evaluate its performance in the model-based setting, comparing it to SOTA model-based Bayesian methods in a variety of different tasks. Our results show that the proposed DL method can match the performance of the model-based methods in simple tasks, while outperforming them when the task gets more complicated, either due to an increase in the data association complexity, or to stronger nonlinearities of the models of the environment.
