Jian Bai

OPTIAGENT: A Physics-Driven Agentic Framework for Automated Optical Design

Feb 27, 2026

Abstract: Optical design is the process of configuring optical elements to precisely manipulate light for high-fidelity imaging. It is inherently a highly non-convex optimization problem that relies heavily on human heuristic expertise and domain-specific knowledge. While Large Language Models (LLMs) possess extensive optical knowledge, their ability to apply that knowledge to designing lens systems remains significantly constrained. This work represents the first attempt to employ LLMs in the field of optical design. We bridge the expertise gap by enabling users without formal optical training to successfully develop functional lens systems. Concretely, we curate a comprehensive dataset for training and evaluation, named OptiDesignQA, which encompasses both classical lens systems sourced from standard optical textbooks and novel configurations generated by automated design algorithms. Furthermore, we inject domain-specific optical expertise into the LLM through a hybrid objective of full-system synthesis and lens completion. To align the model with optical principles, we employ Group Relative Policy Optimization Done Right (DrGRPO), guided by an Optical Lexicographic Reward, for physics-driven policy alignment. This reward system incorporates structural format rewards, physical feasibility rewards, light-manipulation accuracy, and LLM-based heuristics. Finally, our model integrates with specialized optical optimization routines for end-to-end fine-tuning and precision refinement. We benchmark the proposed method against both traditional optimization-based automated design algorithms and LLM counterparts, and experimental results show that our method outperforms them.
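The abstract names DrGRPO and the Optical Lexicographic Reward but does not define them. The Python sketch below is an illustration under stated assumptions, not the authors' implementation: the reward gates on the components listed in the abstract in an assumed priority order (format, then physical feasibility, then accuracy plus heuristics), and the advantage follows the Dr. GRPO formulation of subtracting the group's mean reward without dividing by its standard deviation. `Scores`, `lexicographic_reward`, the band offsets, and the 0.1 heuristic weight are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Scores:
    # Hypothetical per-sample evaluation results; not the paper's definitions.
    format_ok: bool    # structural format check passed
    feasible: bool     # physical feasibility check passed
    accuracy: float    # light-manipulation accuracy score in [0, 1]
    heuristic: float   # LLM-judged heuristic score in [0, 1]


def lexicographic_reward(s: Scores) -> float:
    """Gated reward: each tier only counts once higher-priority tiers pass.

    Assumed order: format > feasibility > (accuracy, heuristic). The bands
    [0], [1], [2, 3.1] guarantee that a sample passing a higher tier always
    outranks one that fails it. Within the top band, accuracy and heuristic
    are blended with an assumed weight rather than ordered strictly.
    """
    if not s.format_ok:
        return 0.0
    if not s.feasible:
        return 1.0
    return 2.0 + s.accuracy + 0.1 * s.heuristic


def dr_grpo_advantages(rewards: list[float]) -> list[float]:
    """Group-relative advantages in the Dr. GRPO style: r_i - mean(r), no std scaling."""
    baseline = mean(rewards)
    return [r - baseline for r in rewards]
```

For a group of sampled designs, the rewards are computed per sample and then centered on the group mean, so designs that pass more tiers receive positive advantages relative to their siblings.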

Towards Real-world Lens Active Alignment with Unlabeled Data via Domain Adaptation

Jan 08, 2026

Abstract: Active Alignment (AA) is a key technology for the large-scale automated assembly of high-precision optical systems. Compared with labor-intensive per-model on-device calibration, a digital-twin pipeline built on optical simulation offers a substantial advantage in generating large-scale labeled data. However, complex imaging conditions induce a domain gap between simulation and real-world images, limiting the generalization of simulation-trained models. To address this, we propose augmenting a simulation baseline with a small number of unlabeled real-world images captured at random misalignment positions, mitigating the gap from a domain adaptation perspective. We introduce Domain Adaptive Active Alignment (DA3), which uses an autoregressive domain transformation generator and an adversarial feature alignment strategy to distill real-world domain information via self-supervised learning. This enables the extraction of domain-invariant image degradation features for robust misalignment prediction. Experiments on two lens types show that DA3 improves accuracy by 46% over a purely simulation-based pipeline. Notably, it approaches the performance achieved with precisely labeled real-world data collected on 3 lens samples, while reducing on-device data collection time by 98.7%. The results demonstrate that domain adaptation effectively endows simulation-trained models with robust real-world performance, validating the digital-twin pipeline as a practical way to significantly improve the efficiency of large-scale optical assembly.

Towards Single-Lens Controllable Depth-of-Field Imaging via All-in-Focus Aberration Correction and Monocular Depth Estimation

Sep 15, 2024

Abstract: Controllable Depth-of-Field (DoF) imaging produces striking visual effects but typically relies on heavy and expensive high-end lenses. With the growing demand for mobile scenarios, a lightweight solution based on Minimalist Optical Systems (MOS) is desirable. This work addresses two major limitations of MOS, namely severe optical aberrations and uncontrollable DoF, to achieve single-lens controllable DoF imaging via computational methods. We propose a Depth-aware Controllable DoF Imaging (DCDI) framework equipped with All-in-Focus (AiF) aberration correction and monocular depth estimation, in which the recovered image and the corresponding depth map are used to produce imaging results under the diverse DoFs of any high-end lens via patch-wise convolution. To address depth-varying optical degradation, we introduce a Depth-aware Degradation-adaptive Training (DA2T) scheme. At the dataset level, a Depth-aware Aberration MOS (DAMOS) dataset is established based on the simulation of Point Spread Functions (PSFs) under different object distances. Additionally, we design two plug-and-play depth-aware mechanisms that embed depth information into aberration image recovery to better handle depth-aware degradation. Furthermore, we propose a storage-efficient Omni-Lens-Field model to represent the 4D PSF library of various lenses. With the predicted depth map, the recovered image, and the depth-aware PSF map inferred by Omni-Lens-Field, single-lens controllable DoF imaging is achieved. Comprehensive experimental results demonstrate that the proposed framework improves recovery performance and attains strong single-lens controllable DoF imaging results, providing a pioneering baseline for this field. The source code and the established dataset will be publicly available at https://github.com/XiaolongQian/DCDI.
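The abstract describes rendering DoF effects by patch-wise convolution of the recovered all-in-focus image with depth-dependent PSFs, but the PSF model itself comes from Omni-Lens-Field and is not specified here. The NumPy sketch below illustrates only the patch-wise idea, assuming a hypothetical Gaussian PSF whose width is proportional to |1/focus − 1/depth| (a rough thin-lens defocus proxy valid when the focus distance is much larger than the focal length). `gaussian_psf`, `render_dof`, and the `strength` parameter are illustrative names, not part of DCDI.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def gaussian_psf(sigma: float, size: int = 9) -> np.ndarray:
    """Normalized size x size Gaussian kernel; sigma <= 0 yields a delta (no blur)."""
    if sigma <= 0:
        k = np.zeros((size, size))
        k[size // 2, size // 2] = 1.0
        return k
    ax = np.arange(size) - size // 2
    xx, yy = np.meshgrid(ax, ax)
    k = np.exp(-(xx**2 + yy**2) / (2 * sigma**2))
    return k / k.sum()


def convolve_same(img: np.ndarray, k: np.ndarray) -> np.ndarray:
    """2D convolution of a grayscale image; edge padding keeps the input size."""
    r = k.shape[0] // 2
    windows = sliding_window_view(np.pad(img, r, mode="edge"), k.shape)
    return np.einsum("ijkl,kl->ij", windows, k[::-1, ::-1])


def render_dof(aif: np.ndarray, depth: np.ndarray, focus: float,
               strength: float = 2.0, patch: int = 16) -> np.ndarray:
    """Blur each patch with the PSF for its mean depth (depths must be positive).

    Hypothetical PSF width: sigma = strength * |1/focus - 1/depth|.
    The whole image is convolved per patch so borders see real neighbors;
    this is simple but wasteful, and kept that way for clarity.
    """
    out = np.empty_like(aif, dtype=float)
    h, w = aif.shape
    for y in range(0, h, patch):
        for x in range(0, w, patch):
            d = depth[y:y + patch, x:x + patch].mean()
            sigma = strength * abs(1.0 / focus - 1.0 / d)
            blurred = convolve_same(aif, gaussian_psf(sigma))
            out[y:y + patch, x:x + patch] = blurred[y:y + patch, x:x + patch]
    return out
```

Patches at the focus depth receive a delta PSF and pass through unchanged, while patches farther from it are blurred more strongly; swapping in a different lens only changes how the PSF is chosen per patch.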

A Flexible Framework for Universal Computational Aberration Correction via Automatic Lens Library Generation and Domain Adaptation

Sep 09, 2024

Abstract: Emerging universal Computational Aberration Correction (CAC) paradigms offer an inspiring route to lightweight, high-quality imaging without repeated data preparation and model training for each new lens design. However, the training databases in these approaches, i.e., the lens libraries (LensLibs), offer limited coverage of real-world aberration behaviors. In this work, we set up OmniLens, a framework for universal CAC that considers both generalization ability and flexibility. OmniLens extends universal CAC to a broader concept in which a base model is trained for three cases: zero-shot CAC with the pre-trained model, few-shot CAC that fine-tunes on a small amount of lens-specific data, and domain-adaptive CAC for lenses whose descriptions are unknown. As OmniLens's data foundation, we first propose an Evolution-based Automatic Optical Design (EAOD) pipeline that constructs a LensLib automatically, called AODLib, whose diversity is enriched by an evolution framework with comprehensive constraints and a hybrid optimization strategy for achieving realistic aberration behaviors. For network design, we introduce high-quality codebook priors as guidance for zero-shot and few-shot CAC, which enhances the model's generalization ability and speeds its convergence in the few-shot case. Furthermore, based on statistical observations of dark channel priors in optical degradation, we design an unsupervised regularization term that adapts the base model to a target lens with unknown descriptions using its aberration images without ground truth. We validate OmniLens on 4 manually designed low-end lenses with various structures and aberration behaviors. Remarkably, the base model trained on AODLib exhibits strong generalization, achieving 97% of the lens-specific performance in a zero-shot setting.
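For reference, the dark channel of an image (from the dark channel prior) is the per-pixel minimum over color channels followed by a minimum filter over a local window; blur tends to make it less sparse. The abstract does not give OmniLens's regularization term, so the NumPy sketch below assumes, purely for illustration, a penalty equal to the mean dark-channel value of the restored image. `dark_channel_penalty` and the default window size are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def dark_channel(img: np.ndarray, window: int = 15) -> np.ndarray:
    """Dark channel of an H x W x 3 image with values in [0, 1].

    Takes the per-pixel minimum over the color channels, then a minimum
    filter over a window x window neighborhood. Edge padding keeps the
    output at H x W; the window size must be odd.
    """
    if window % 2 == 0:
        raise ValueError("window must be odd")
    min_rgb = img.min(axis=2)
    pad = window // 2
    padded = np.pad(min_rgb, pad, mode="edge")
    # Shape (H, W, window, window): one local neighborhood per output pixel.
    patches = sliding_window_view(padded, (window, window))
    return patches.min(axis=(2, 3))


def dark_channel_penalty(restored: np.ndarray, window: int = 15) -> float:
    """Hypothetical regularizer: mean dark-channel value of a restored image.

    Minimizing it favors a sparser dark channel, as sharp natural images
    tend to have; this form is an assumption, not OmniLens's exact term.
    """
    return float(dark_channel(restored, window).mean())
```

In an adaptation loop, a term of this kind would be evaluated on the model's outputs for unlabeled aberration images; training through it would require re-expressing the same min operations in an autodiff framework.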

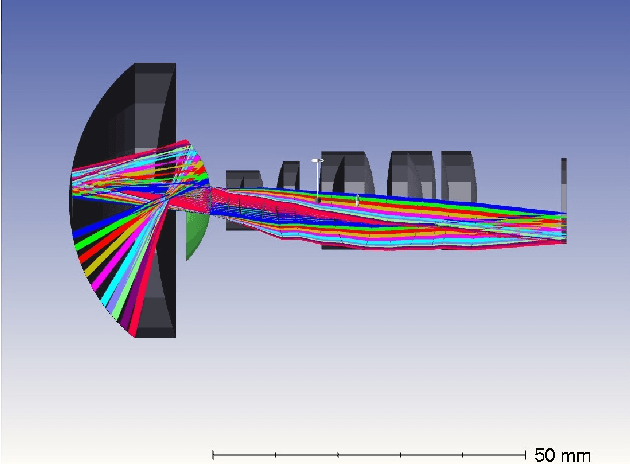

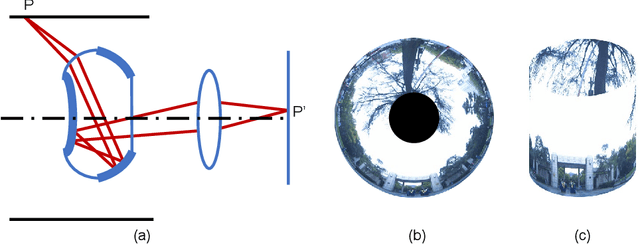

Design, analysis, and manufacturing of a glass-plastic hybrid minimalist aspheric panoramic annular lens

May 05, 2024

Abstract: We propose a high-performance glass-plastic hybrid minimalist aspheric panoramic annular lens (ASPAL) to overcome several major limitations of the traditional panoramic annular lens (PAL), such as large size, high weight, and system complexity. The field of view (FoV) of the ASPAL is 360° × (35°–110°), and its imaging quality is close to the diffraction limit. This large-FoV ASPAL is composed of only 4 lenses. Moreover, we establish a physical structure model of PAL using the ray tracing method and study the influence of its physical parameters on the compactness ratio. In addition, to evaluate local tolerances of annular surfaces, we propose a tolerance analysis method suited to ASPAL. This method can effectively analyze surface irregularities on annular surfaces and provides clear guidance on manufacturing tolerances for ASPAL. Benefiting from high-precision glass molding and injection molding techniques for aspheric lens manufacturing, we manufactured 20 ASPALs in small batches. An ASPAL prototype weighs only 8.5 g. Our framework provides promising insights for applying panoramic systems in space- and weight-constrained environmental sensing scenarios such as intelligent security, micro-UAVs, and micro-robots.

Coarse-to-fine Hybrid 3D Mapping System with Co-calibrated Omnidirectional Camera and Non-repetitive LiDAR

Feb 08, 2023

Abstract: This paper presents a novel 3D mapping robot with an omnidirectional field-of-view (FoV) sensor suite composed of a non-repetitive LiDAR and an omnidirectional camera. Exploiting the non-repetitive scanning pattern of the LiDAR, we propose an automatic, targetless co-calibration method that simultaneously calibrates the intrinsic parameters of the omnidirectional camera and the extrinsic parameters between the camera and the LiDAR, a crucial step in bringing color and texture information to point clouds in surveying and mapping tasks. The intrinsic and extrinsic results are compared with target-based intrinsic calibration and mutual information (MI)-based extrinsic calibration, respectively. With this co-calibrated sensor suite, the hybrid mapping robot integrates both an odometry-based mapping mode and a stationary mapping mode. We further propose a new coarse-to-fine mapping workflow: efficient, coarse mapping of the global environment in the odometry-based mode; planning of viewpoints in the region of interest (ROI) based on the coarse map (building on previous work); and navigation to each viewpoint for finer, more precise stationary scanning and mapping of the ROI. The fine map is stitched into the global coarse map, yielding a result that is more efficient than conventional stationary approaches and more precise than emerging odometry-based approaches.

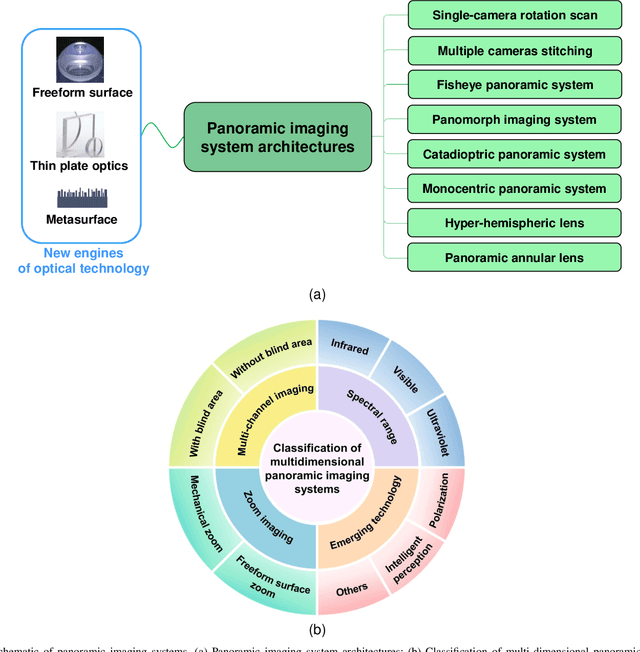

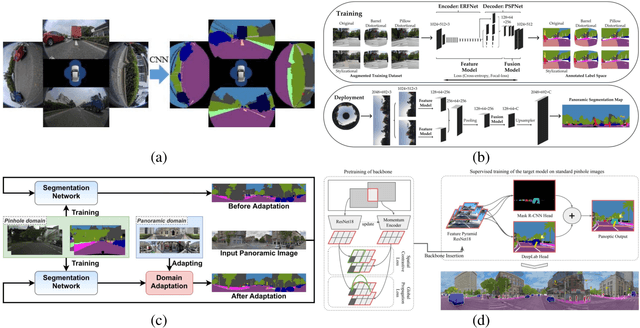

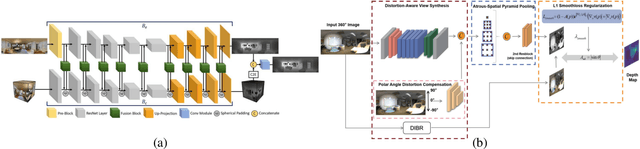

Review on Panoramic Imaging and Its Applications in Scene Understanding

May 11, 2022

Abstract: With the rapid development of high-speed communication and artificial intelligence technologies, human perception of real-world scenes is no longer limited to small Field of View (FoV) and low-dimensional scene detection devices. Panoramic imaging is emerging as the next generation of innovative intelligent instruments for environmental perception and measurement. Beyond satisfying the need for large-FoV photographic imaging, however, panoramic imaging instruments are expected to offer high resolution, no blind areas, miniaturization, and multi-dimensional intelligent perception, and to be combined with artificial intelligence methods toward the next generation of intelligent instruments, enabling deeper understanding and more holistic perception of 360-degree real-world surroundings. Fortunately, recent advances in freeform surfaces, thin-plate optics, and metasurfaces provide innovative approaches to human perception of the environment, offering promising ideas beyond conventional optical imaging. In this review, we begin by introducing the basic principles of panoramic imaging systems, and then describe the architectures, features, and functions of various panoramic imaging systems. Afterwards, we discuss in detail the broad application prospects and design potential of freeform surfaces, thin-plate optics, and metasurfaces in panoramic imaging. We then analyze in detail how these techniques can help enhance the performance of panoramic imaging systems. We further offer a detailed analysis of applications of panoramic imaging in scene understanding for autonomous driving and robotics, spanning panoramic semantic image segmentation, panoramic depth estimation, panoramic visual localization, and more. Finally, we offer a perspective on the future potential and research directions of panoramic imaging instruments.

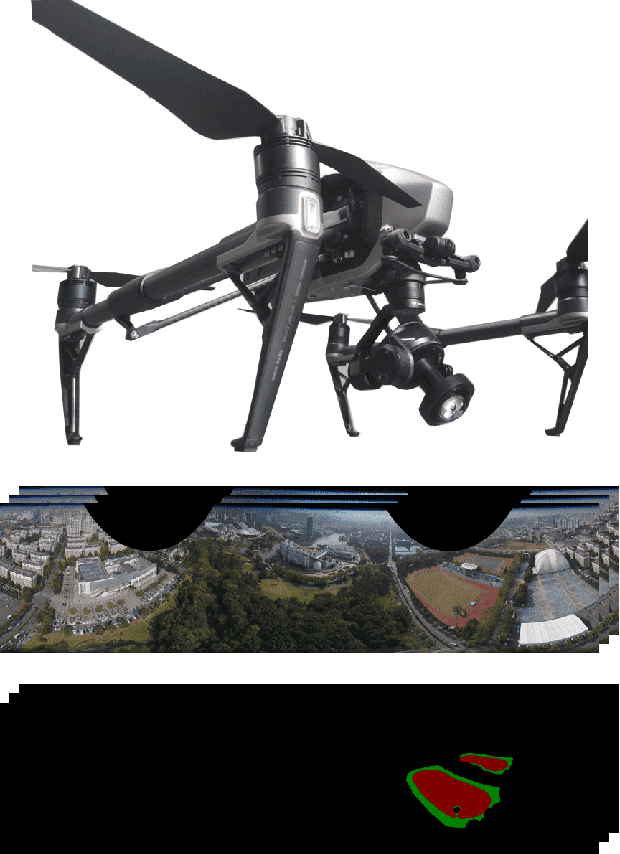

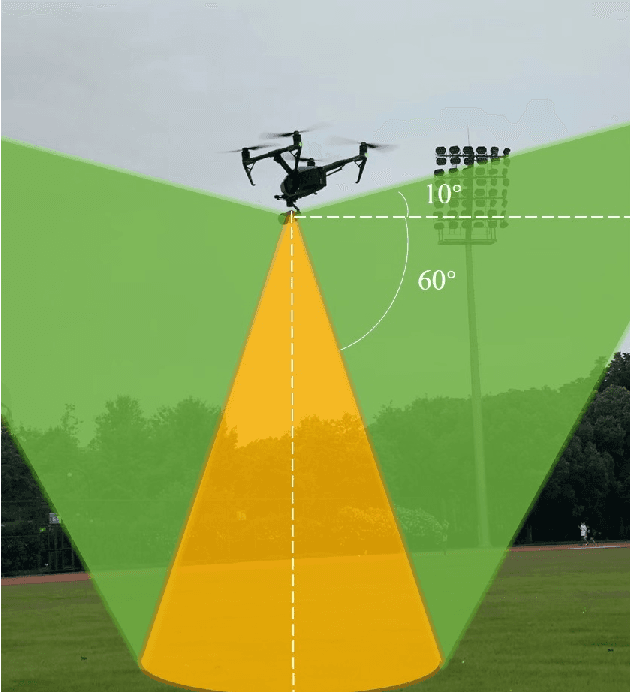

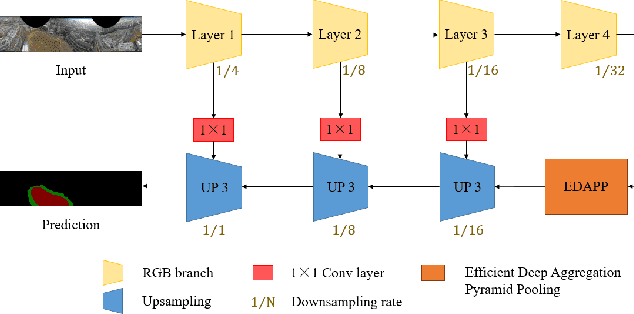

Aerial-PASS: Panoramic Annular Scene Segmentation in Drone Videos

May 15, 2021

Abstract: Aerial pixel-wise scene perception of the surrounding environment is an important task for UAVs (Unmanned Aerial Vehicles). Previous works mainly adopt conventional pinhole cameras or fisheye cameras as the imaging device. However, these imaging systems cannot simultaneously achieve a large Field of View (FoV), small size, and low weight. To this end, we design a UAV system with a Panoramic Annular Lens (PAL), which is small, lightweight, and has a 360-degree annular FoV. A lightweight panoramic annular semantic segmentation neural network is designed to achieve high-accuracy, real-time scene parsing. In addition, we present Aerial-PASS, the first drone-perspective panoramic scene segmentation dataset, with annotated labels of track, field, and others. A comprehensive set of experiments shows that the designed system performs satisfactorily in aerial panoramic scene parsing. In particular, our proposed model strikes an excellent trade-off between segmentation performance and inference speed, as validated on both public street-scene datasets and our established aerial-scene dataset.

Panoramic annular SLAM with loop closure and global optimization

Feb 26, 2021

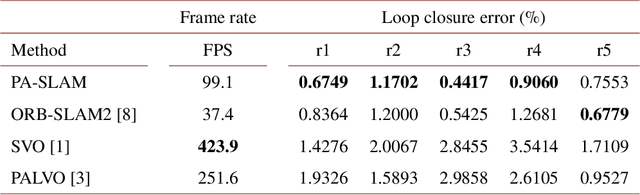

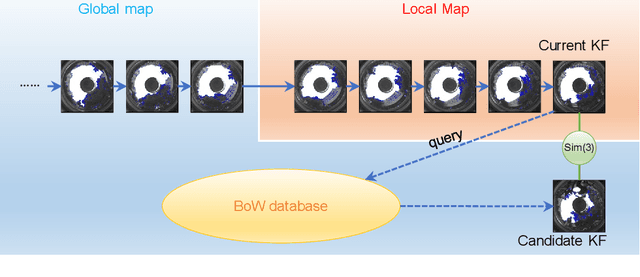

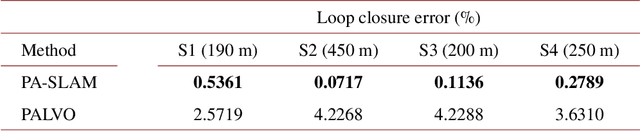

Abstract: In this paper, we propose PA-SLAM, a monocular panoramic annular visual SLAM system with loop closure and global optimization. A hybrid point selection strategy is put forward in the tracking front-end, which ensures repeatability of keypoints and enables loop closure detection based on the bag-of-words approach. Every detected loop candidate is verified geometrically and the $Sim(3)$ relative pose constraint is estimated to perform pose graph optimization and global bundle adjustment in the back-end. A comprehensive set of experiments on real-world datasets demonstrates that the hybrid point selection strategy allows reliable loop closure detection, and the accumulated error and scale drift have been significantly reduced via global optimization, enabling PA-SLAM to reach state-of-the-art accuracy while maintaining high robustness and efficiency.
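A $Sim(3)$ element combines a scale $s$, a rotation $R$, and a translation $t$, acting on a point as $p \mapsto sRp + t$; the scale factor is what allows a loop-closure constraint to correct scale drift in a monocular system. The NumPy sketch below shows the group operations (composition, inverse, and the relative transform between two poses). It is a generic illustration of the math, not PA-SLAM's implementation, and the `Sim3` class and `relative` helper are hypothetical names.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class Sim3:
    s: float         # scale factor
    R: np.ndarray    # 3x3 rotation matrix
    t: np.ndarray    # translation vector, shape (3,)

    def apply(self, p: np.ndarray) -> np.ndarray:
        """Transform a point: p -> s R p + t."""
        return self.s * self.R @ p + self.t

    def __matmul__(self, other: "Sim3") -> "Sim3":
        """Composition: (a @ b).apply(p) == a.apply(b.apply(p))."""
        return Sim3(self.s * other.s,
                    self.R @ other.R,
                    self.s * self.R @ other.t + self.t)

    def inverse(self) -> "Sim3":
        """Inverse transform: p -> (1/s) R^T (p - t)."""
        R_inv = self.R.T
        return Sim3(1.0 / self.s, R_inv, -(R_inv @ self.t) / self.s)


def relative(T_a: Sim3, T_b: Sim3) -> Sim3:
    """Relative transform T_ab = T_a^{-1} T_b, e.g. a loop-closure constraint."""
    return T_a.inverse() @ T_b
```

In a pose graph, each loop closure would contribute one such relative $Sim(3)$ constraint between the two matched keyframes, and optimization distributes the correction, including scale, along the trajectory.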

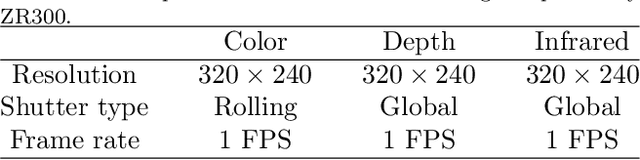

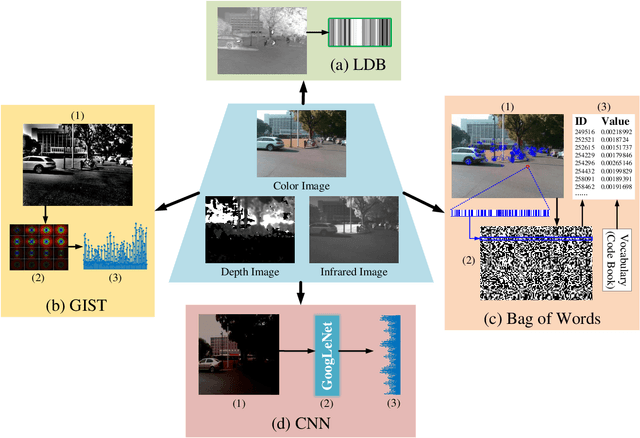

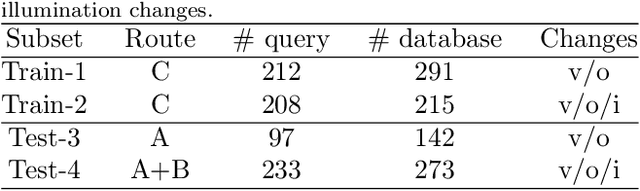

OpenMPR: Recognize Places Using Multimodal Data for People with Visual Impairments

Sep 15, 2019

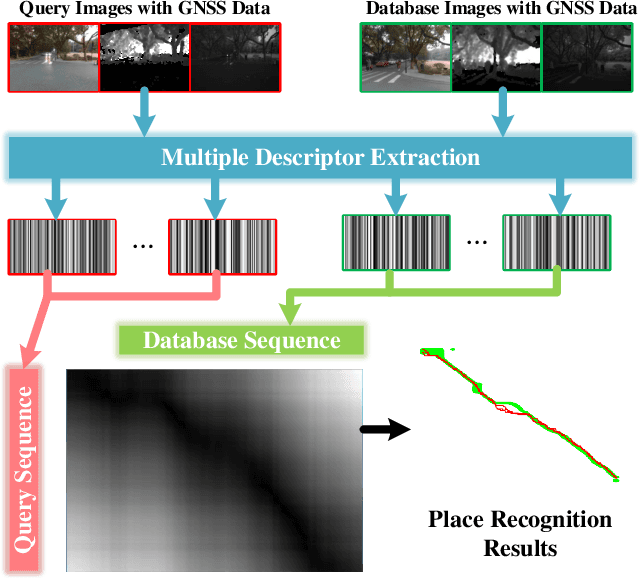

Abstract: Place recognition plays a crucial role in navigational assistance and is also a challenging problem in assistive technology. Place recognition is prone to erroneous localization owing to various changes between database and query images. Targeting wearable assistive devices for visually impaired people, we propose OpenMPR, an open-source place recognition algorithm that uses multimodal data to address the challenges of place recognition. Compared with conventional place recognition, OpenMPR not only leverages multiple effective descriptors but also assigns different weights to those descriptors in image matching. By incorporating GNSS data into the algorithm, cone-based sequence searching is used for robust place recognition. The experiments show that the proposed algorithm handles place recognition in real-world scenarios and surpasses state-of-the-art algorithms in terms of assistive navigation performance. On the real-world testing dataset, the online OpenMPR achieves 88.7% precision at 100% recall without illumination changes, and 57.8% precision at 99.3% recall with illumination changes. OpenMPR is available at https://github.com/chengricky/OpenMultiPR.
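The abstract states that OpenMPR combines several descriptors and weights them differently during image matching, but it does not give the weighting scheme. The NumPy sketch below assumes a simple form for illustration: each descriptor yields a query-by-database distance matrix, each matrix is min-max normalized, and the matrices are summed with per-descriptor weights before choosing the best match. The descriptor names, weights, and the `fuse_distances` and `best_matches` helpers are hypothetical; the GNSS data and cone-based sequence search are not modeled.

```python
import numpy as np


def fuse_distances(dists: dict[str, np.ndarray],
                   weights: dict[str, float]) -> np.ndarray:
    """Weighted sum of per-descriptor distance matrices (queries x database).

    Each matrix is min-max normalized to [0, 1] first, so descriptors with
    different distance scales contribute comparably before weighting.
    """
    if not dists:
        raise ValueError("need at least one descriptor")
    fused = np.zeros_like(next(iter(dists.values())), dtype=float)
    for name, d in dists.items():
        span = d.max() - d.min()
        norm = (d - d.min()) / span if span > 0 else np.zeros_like(d, dtype=float)
        fused += weights[name] * norm
    return fused


def best_matches(fused: np.ndarray) -> np.ndarray:
    """Index of the lowest fused distance (best database match) per query."""
    return fused.argmin(axis=1)
```

Changing the weights shifts which descriptor dominates a match, which is the lever the abstract describes; a sequence search would then operate on the fused matrix rather than on single-image argmins.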
