Charles C. Kemp

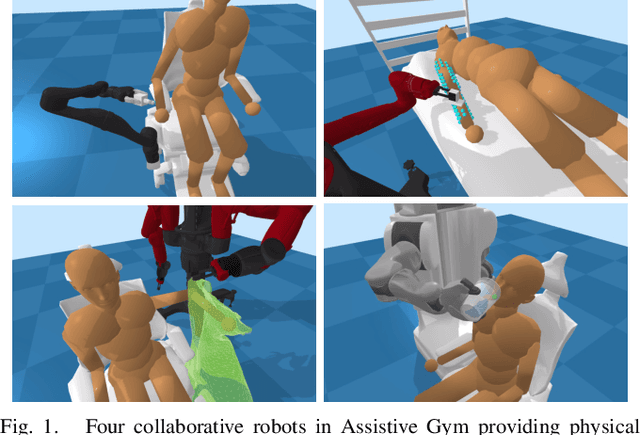

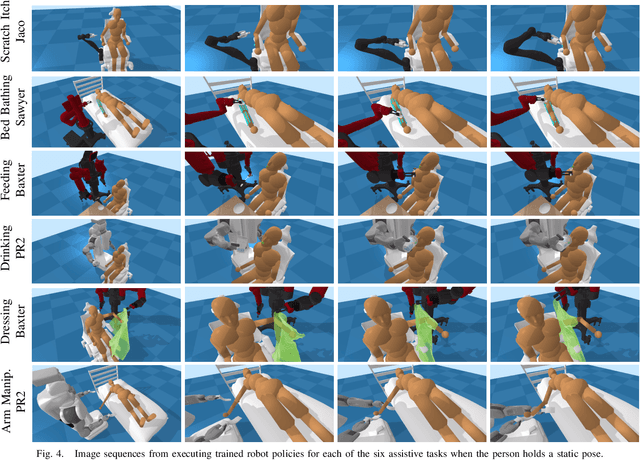

Assistive Gym: A Physics Simulation Framework for Assistive Robotics

Oct 10, 2019

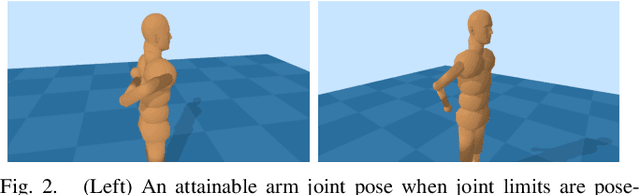

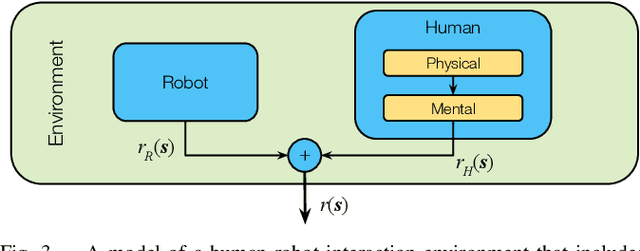

Abstract: Autonomous robots have the potential to serve as versatile caregivers that improve quality of life for millions of people worldwide. Yet, conducting research in this area presents numerous challenges, including the risks of physical interaction between people and robots. Physics simulations have been used to optimize and train robots for physical assistance, but have typically focused on a single task. In this paper, we present Assistive Gym, an open source physics simulation framework for assistive robots that models multiple tasks. It includes six simulated environments in which a robotic manipulator can attempt to assist a person with activities of daily living (ADLs): itch scratching, drinking, feeding, body manipulation, dressing, and bathing. Assistive Gym models a person's physical capabilities and preferences for assistance, which are used to provide a reward function. We present baseline policies trained using reinforcement learning for four different commercial robots in the six environments. We demonstrate that modeling human motion results in better assistance and we compare the performance of different robots. Overall, we show that Assistive Gym is a promising tool for assistive robotics research.

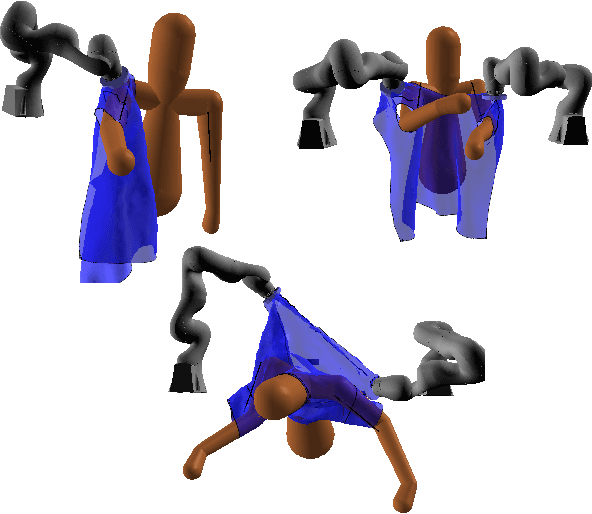

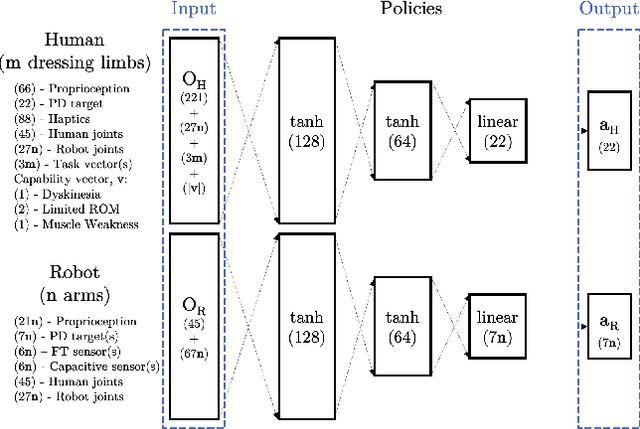

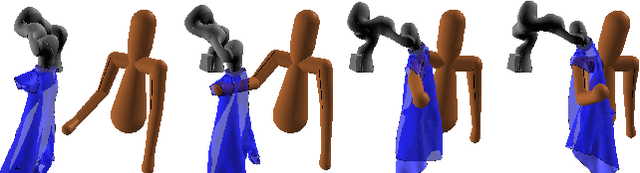

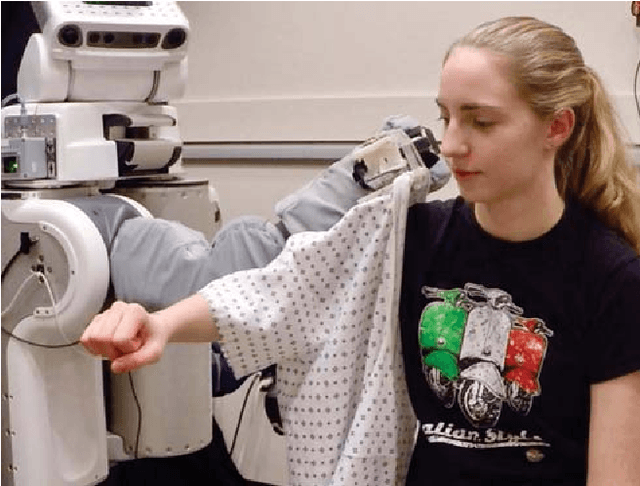

Modeling Collaboration for Robot-assisted Dressing Tasks

Sep 14, 2019

Abstract: We investigated the application of haptic-aware feedback control and deep reinforcement learning to robot-assisted dressing in simulation. We did so by modeling both human and robot control policies as separate neural networks and training them both via TRPO. We show that co-optimization, training separate human and robot control policies simultaneously, can be a valid approach to finding successful strategies for human/robot cooperation on assisted dressing tasks. Typical tasks are putting on one or both sleeves of a hospital gown or pulling on a T-shirt. We also present a method for modeling human dressing behavior under variations in capability, including unilateral muscle weakness, dyskinesia, and limited range of motion. Using this method and behavior model, we demonstrate discovery of successful strategies for a robot to assist humans with a variety of capability limitations.

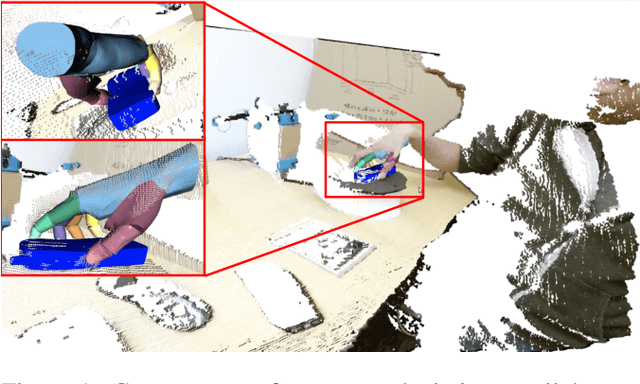

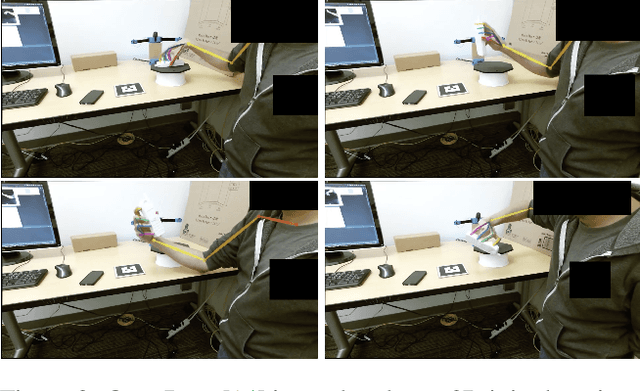

Towards Markerless Grasp Capture

Jul 17, 2019

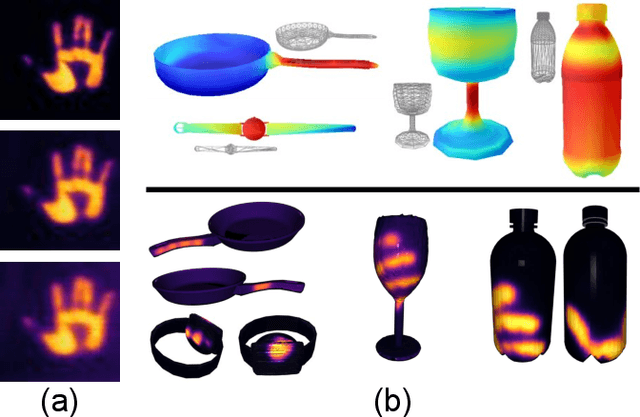

Abstract: Humans excel at grasping objects and manipulating them. Capturing human grasps is important for understanding grasping behavior and reconstructing it realistically in Virtual Reality (VR). However, grasp capture (capturing the pose of a hand grasping an object and orienting it w.r.t. the object) is difficult because of the complexity and diversity of the human hand, and occlusion. Reflective markers and magnetic trackers traditionally used to mitigate this difficulty introduce undesirable artifacts in images and can interfere with natural grasping behavior. We present preliminary work on a completely marker-less algorithm for grasp capture from a video depicting a grasp. We show how recent advances in 2D hand pose estimation can be used with well-established optimization techniques. Uniquely, our algorithm can also capture hand-object contact in detail and integrate it in the grasp capture process. This is work in progress; more details are available at https://contactdb.cc.gatech.edu/grasp_capture.html.

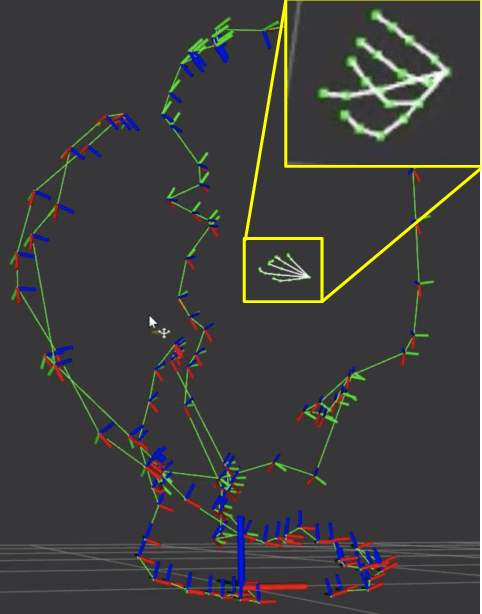

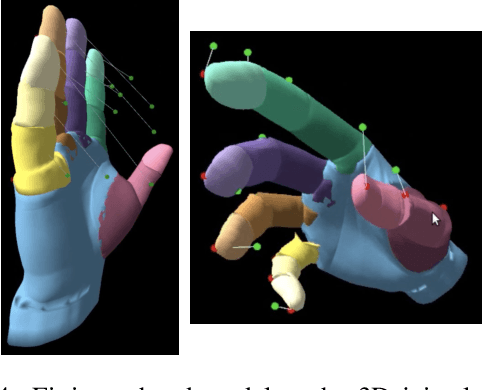

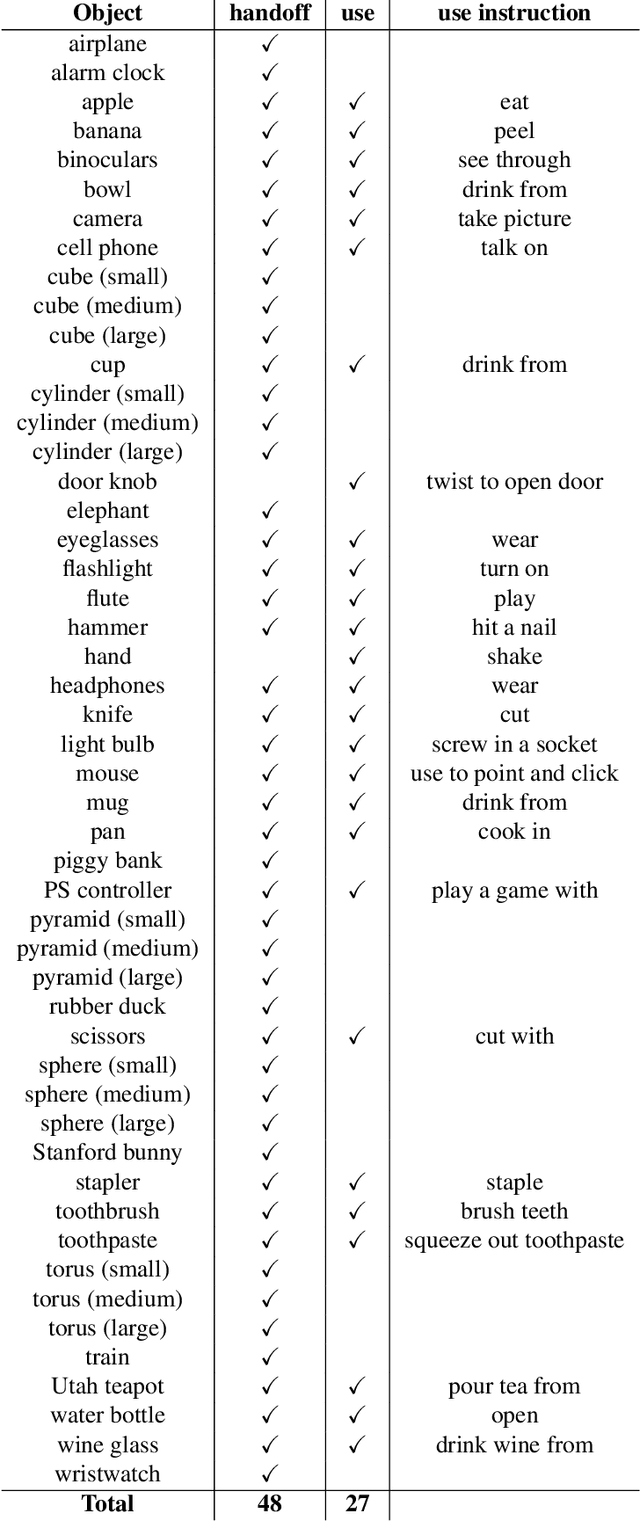

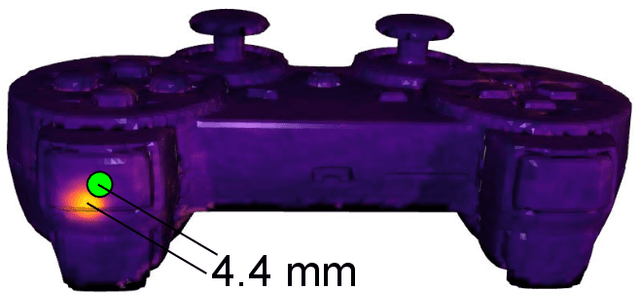

ContactDB: Analyzing and Predicting Grasp Contact via Thermal Imaging

Apr 15, 2019

Abstract: Grasping and manipulating objects is an important human skill. Since hand-object contact is fundamental to grasping, capturing it can lead to important insights. However, observing contact through external sensors is challenging because of occlusion and the complexity of the human hand. We present ContactDB, a novel dataset of contact maps for household objects that captures the rich hand-object contact that occurs during grasping, enabled by use of a thermal camera. Participants in our study grasped 3D printed objects with a post-grasp functional intent. ContactDB includes 3750 3D meshes of 50 household objects textured with contact maps and 375K frames of synchronized RGB-D+thermal images. To the best of our knowledge, this is the first large-scale dataset that records detailed contact maps for human grasps. Analysis of this data shows the influence of functional intent and object size on grasping, the tendency to touch/avoid 'active areas', and the high frequency of palm and proximal finger contact. Finally, we train state-of-the-art image translation and 3D convolution algorithms to predict diverse contact patterns from object shape. Data, code and models are available at https://contactdb.cc.gatech.edu.

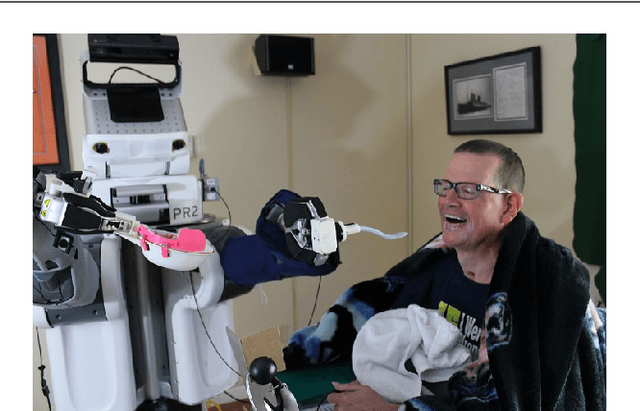

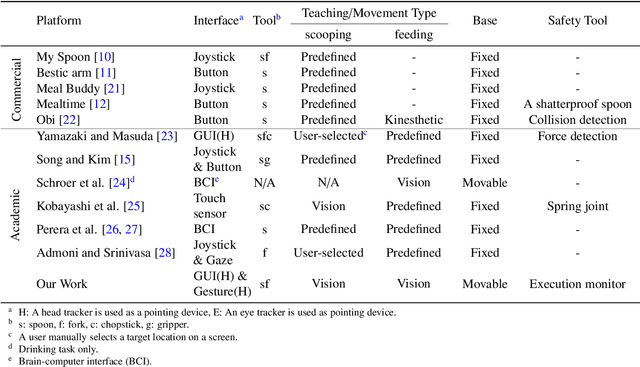

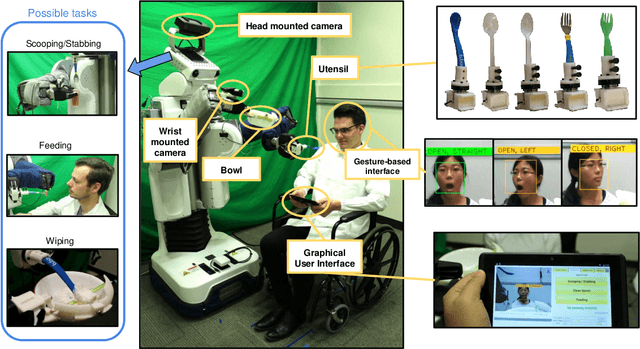

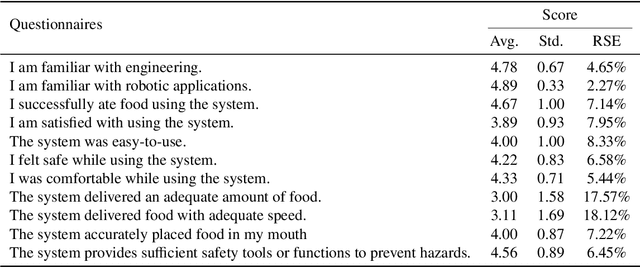

Toward Active Robot-Assisted Feeding with a General-Purpose Mobile Manipulator: Design, Evaluation, and Lessons Learned

Apr 07, 2019

Abstract: Eating is an essential activity of daily living (ADL) for staying healthy and living at home independently. Although numerous assistive devices have been introduced, many people with disabilities are still restricted from independent eating due to the devices' physical or perceptual limitations. In this work, we introduce a new meal-assistance system using a general-purpose mobile manipulator, a Willow Garage PR2, which has the potential to serve as a versatile form of assistive technology. Our active feeding framework enables the robot to autonomously deliver food to the user's mouth. In detail, our web-based user interface, visually-guided behaviors, and safety tools allow people with severe motor impairments to benefit from the robotic assistance. We evaluated our system with 10 able-bodied participants and 9 people with motor impairments. Both groups of participants successfully ate various foods using the system and reported high rates of success for the system's autonomous behaviors in a laboratory environment. Then, we performed an in-home evaluation with Henry Evans, a person with quadriplegia, at his house in California, USA. In general, Henry and the other people who operated the system reported that it was comfortable, safe, and easy to use. We discuss lessons learned and design insights from the user evaluations.

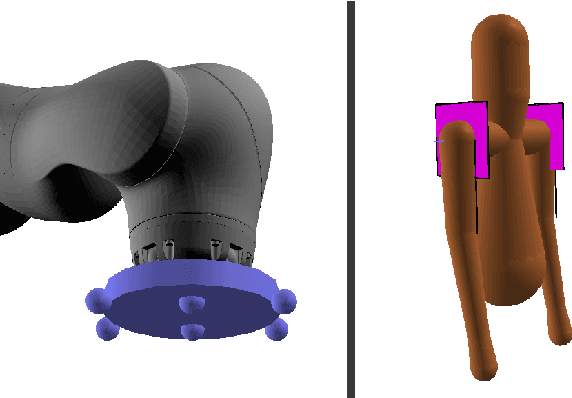

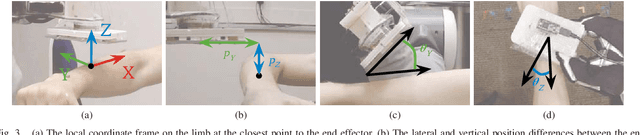

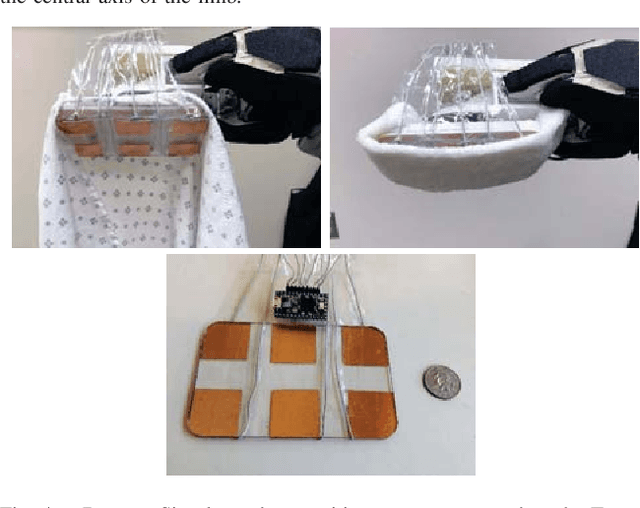

Multidimensional Capacitive Sensing for Robot-Assisted Dressing and Bathing

Apr 03, 2019

Abstract: Robotic assistance presents an opportunity to benefit the lives of many adults with physical disabilities, yet accurately sensing the human body and tracking human motion remain difficult for robots. We present a multidimensional capacitive sensing technique capable of sensing the local pose of a human limb in real time. This sensing approach is unaffected by many visual occlusions that obscure sight of a person's body during robotic assistance, while also allowing a robot to sense the human body through some conductive materials, such as wet cloth. Given measurements from this capacitive sensor, we train a neural network model to estimate the relative vertical and lateral position to the closest point on a person's limb, as well as the pitch and yaw orientation between a robot's end effector and the central axis of the limb. We demonstrate that a PR2 robot can use this sensing approach to assist with two activities of daily living: dressing and bathing. Our robot pulled the sleeve of a hospital gown onto participants' right arms, while using capacitive sensing with feedback control to track human motion. When assisting with bathing, the robot used capacitive sensing with a soft wet washcloth to follow the contours of a participant's limbs and clean the surface of the body. Overall, we find that multidimensional capacitive sensing presents a promising approach for robots to sense and track the human body during assistive tasks that require physical human-robot interaction.

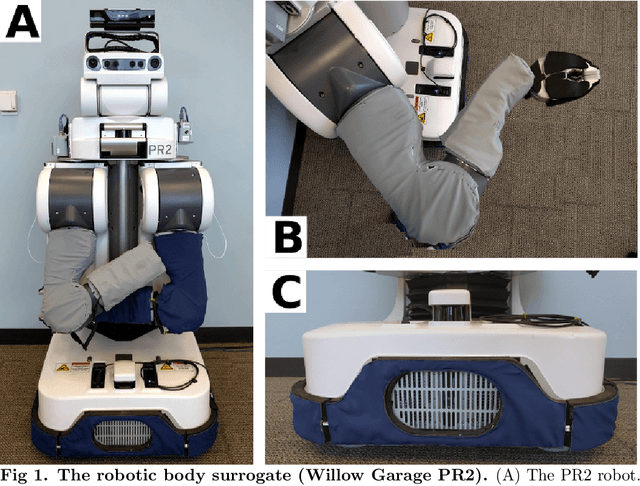

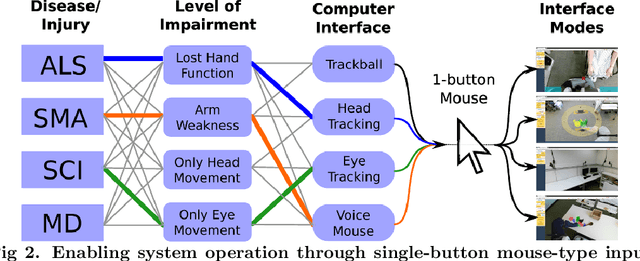

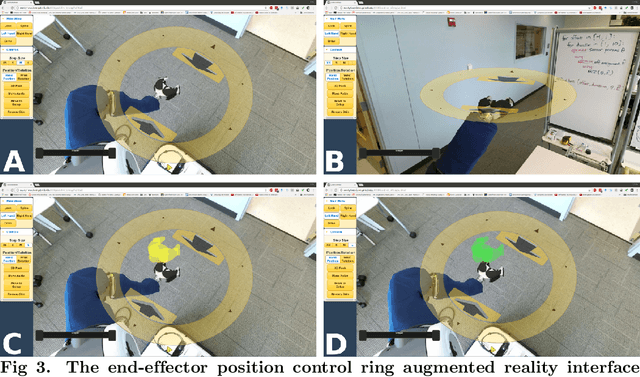

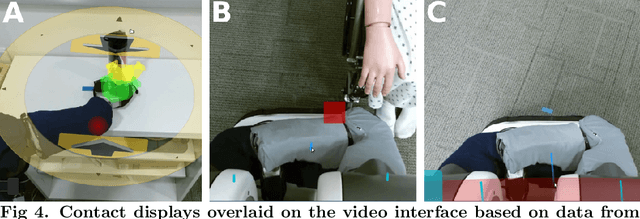

In-home and remote use of robotic body surrogates by people with profound motor deficits

Mar 22, 2019

Abstract: By controlling robots comparable to the human body, people with profound motor deficits could potentially perform a variety of physical tasks for themselves, improving their quality of life. The extent to which this is achievable has been unclear due to the lack of suitable interfaces by which to control robotic body surrogates and a dearth of studies involving substantial numbers of people with profound motor deficits. We developed a novel, web-based augmented reality interface that enables people with profound motor deficits to remotely control a PR2 mobile manipulator from Willow Garage, which is a human-scale, wheeled robot with two arms. We then conducted two studies to investigate the use of robotic body surrogates. In the first study, 15 novice users with profound motor deficits from across the United States controlled a PR2 in Atlanta, GA to perform a modified Action Research Arm Test (ARAT) and a simulated self-care task. Participants achieved clinically meaningful improvements on the ARAT and 12 of 15 participants (80%) successfully completed the simulated self-care task. Participants agreed that the robotic system was easy to use, was useful, and would provide a meaningful improvement in their lives. In the second study, one expert user with profound motor deficits had free use of a PR2 in his home for seven days. He performed a variety of self-care and household tasks, and also used the robot in novel ways. Taking both studies together, our results suggest that people with profound motor deficits can improve their quality of life using robotic body surrogates, and that they can gain benefit with only low-level robot autonomy and without invasive interfaces. However, methods to reduce the rate of errors and increase operational speed merit further investigation.

* 43 Pages, 13 Figures

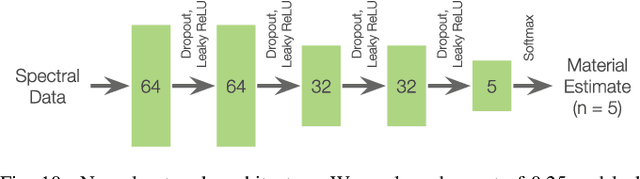

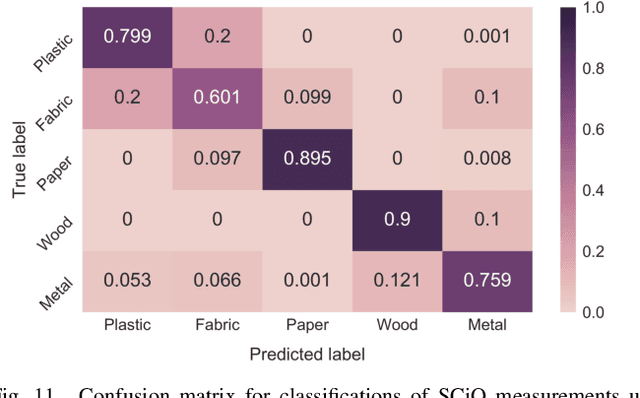

Classification of Household Materials via Spectroscopy

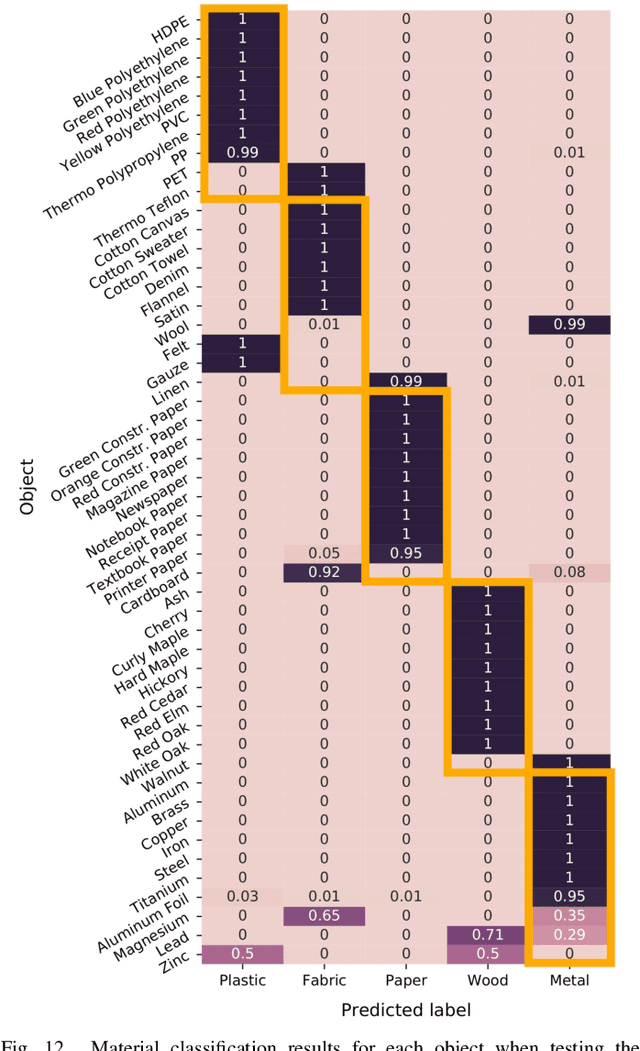

Jan 06, 2019

Abstract: Recognizing an object's material can inform a robot on the object's fragility or appropriate use. To estimate an object's material during manipulation, many prior works have explored the use of haptic sensing. In this paper, we explore a technique for robots to estimate the materials of objects using spectroscopy. We demonstrate that spectrometers provide several benefits for material recognition, including fast response times and accurate measurements with low noise. Furthermore, spectrometers do not require direct contact with an object. To explore this, we collected a dataset of spectral measurements from two commercially available spectrometers during which a robotic platform interacted with 50 flat material objects, and we show that a neural network model can accurately analyze these measurements. Due to the similarity between consecutive spectral measurements, our model achieved a material classification accuracy of 94.6% when given only one spectral sample per object. Similar to prior works with haptic sensors, we found that generalizing material recognition to new objects posed a greater challenge, for which we achieved an accuracy of 79.1% via leave-one-object-out cross-validation. Finally, we demonstrate how a PR2 robot can leverage spectrometers to estimate the materials of everyday objects found in the home. From this work, we find that spectroscopy poses a promising approach for material classification during robotic manipulation.
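The leave-one-object-out protocol mentioned in the abstract above can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the toy two-value "spectra", the object names, and the nearest-centroid classifier, which stands in for the paper's neural network. The point is only the split: all samples from one object are held out together, so the score reflects generalization to an unseen object.

```python
from collections import defaultdict
from math import dist


def nearest_centroid_predict(train, sample):
    """Return the label whose class centroid is closest to `sample`.

    `train` is a list of (features, label) pairs. This simple classifier
    is a stand-in for illustration, not the model used in the paper.
    """
    sums, counts = {}, defaultdict(int)
    for features, label in train:
        if label not in sums:
            sums[label] = [0.0] * len(features)
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    centroids = {lab: [s / counts[lab] for s in sums[lab]] for lab in sums}
    return min(centroids, key=lambda lab: dist(centroids[lab], sample))


def leave_one_object_out_accuracy(samples):
    """`samples` is a list of (object_id, features, label) triples."""
    object_ids = sorted({obj for obj, _, _ in samples})
    correct = total = 0
    for held_out in object_ids:
        # Train on every other object; test on all samples of the held-out one.
        train = [(f, lab) for obj, f, lab in samples if obj != held_out]
        test = [(f, lab) for obj, f, lab in samples if obj == held_out]
        for features, label in test:
            correct += nearest_centroid_predict(train, features) == label
            total += 1
    return correct / total


# Hypothetical toy data: two materials, two objects per material.
samples = [
    ("wood_1", [0.9, 0.1], "wood"),
    ("wood_1", [0.8, 0.2], "wood"),
    ("wood_2", [0.85, 0.15], "wood"),
    ("metal_1", [0.1, 0.9], "metal"),
    ("metal_2", [0.2, 0.8], "metal"),
    ("metal_2", [0.15, 0.95], "metal"),
]
accuracy = leave_one_object_out_accuracy(samples)
```

Splitting by object rather than by sample matters because consecutive measurements of the same object are similar; a per-sample split would let near-duplicates of a test sample leak into training.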

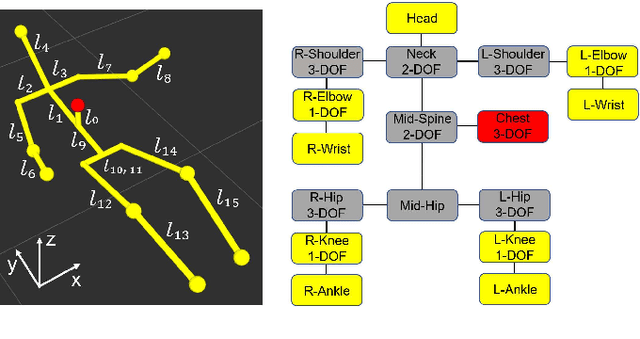

3D Human Pose Estimation on a Configurable Bed from a Pressure Image

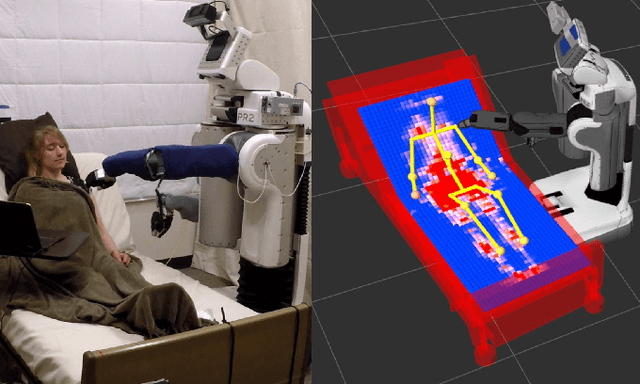

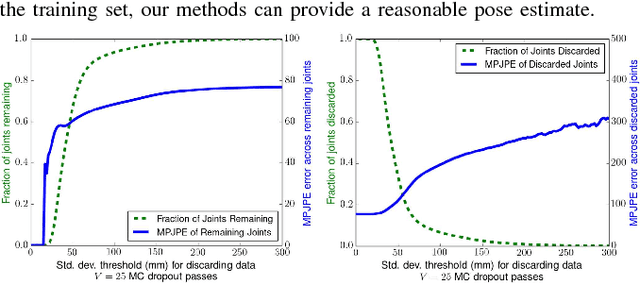

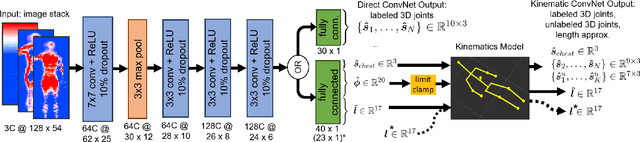

Aug 29, 2018

Abstract: Robots have the potential to assist people in bed, such as in healthcare settings, yet bedding materials like sheets and blankets can make observation of the human body difficult for robots. A pressure-sensing mat on a bed can provide pressure images that are relatively insensitive to bedding materials. However, prior work on estimating human pose from pressure images has been restricted to 2D pose estimates and flat beds. In this work, we present two convolutional neural networks to estimate the 3D joint positions of a person in a configurable bed from a single pressure image. The first network directly outputs 3D joint positions, while the second outputs a kinematic model that includes estimated joint angles and limb lengths. We evaluated our networks on data from 17 human participants with two bed configurations: supine and seated. Our networks achieved a mean joint position error of 77 mm when tested with data from people outside the training set, outperforming several baselines. We also present a simple mechanical model that provides insight into ambiguity associated with limbs raised off of the pressure mat, and demonstrate that Monte Carlo dropout can be used to estimate pose confidence in these situations. Finally, we provide a demonstration in which a mobile manipulator uses our network's estimated kinematic model to reach a location on a person's body in spite of the person being seated in a bed and covered by a blanket.
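As a rough illustration of the Monte Carlo dropout idea mentioned in the abstract above, the sketch below keeps dropout active at prediction time, runs many stochastic forward passes, and uses the spread of the outputs as a confidence signal. The single linear unit, the weights, and the function names are assumptions chosen to keep the example small; the paper applies the idea to convolutional networks.

```python
import random
from statistics import mean, pstdev


def dropout_forward(inputs, weights, drop_prob, rng):
    """One stochastic pass: randomly zero inputs and rescale the survivors."""
    keep = 1.0 - drop_prob
    total = 0.0
    for x, w in zip(inputs, weights):
        if rng.random() < keep:
            total += (x / keep) * w  # inverted-dropout scaling
    return total


def mc_dropout_estimate(inputs, weights, drop_prob=0.2, passes=200, seed=0):
    """Return (mean prediction, standard deviation) over repeated passes."""
    rng = random.Random(seed)
    outputs = [dropout_forward(inputs, weights, drop_prob, rng)
               for _ in range(passes)]
    return mean(outputs), pstdev(outputs)


# Hypothetical pressure readings and weights for a single output coordinate.
readings = [1.0, 2.0, 3.0]
weights = [0.5, 0.5, 0.5]
prediction, spread = mc_dropout_estimate(readings, weights, drop_prob=0.5)
```

A larger `spread` indicates that the prediction depends heavily on which units are dropped, which is one way to flag low-confidence estimates.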

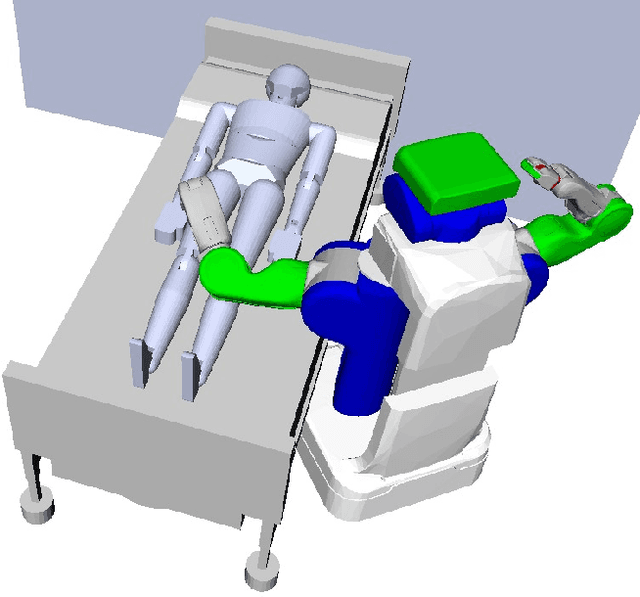

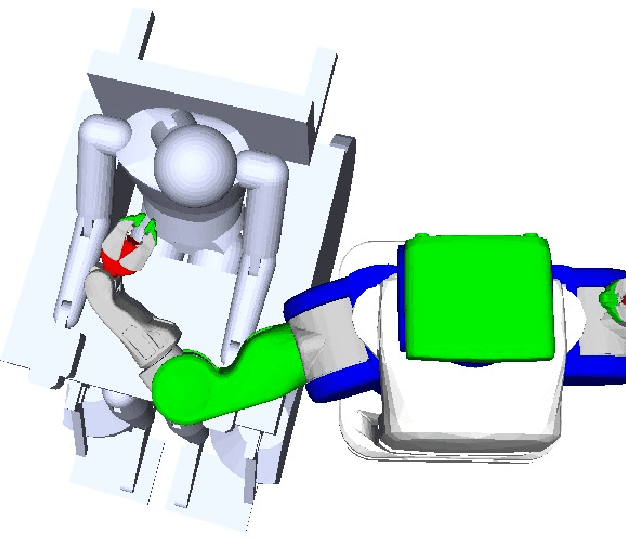

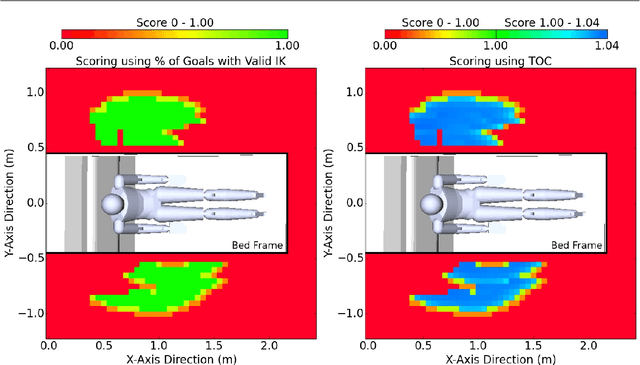

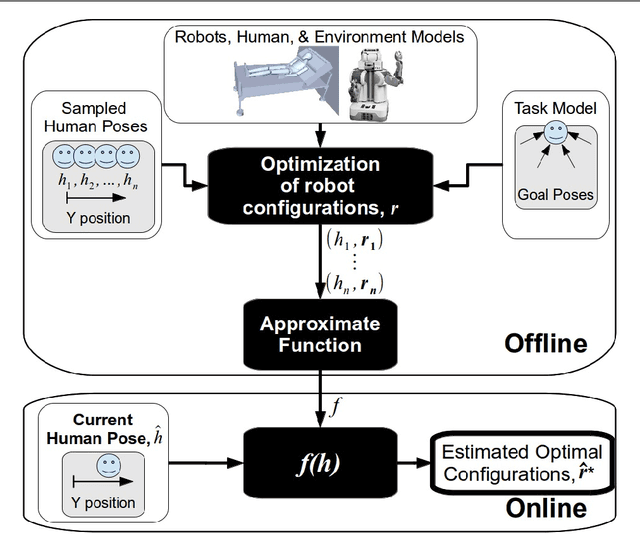

Task-centric Optimization of Configurations for Assistive Robots

Apr 19, 2018

Abstract: Robots can provide assistance to a human by moving objects to locations around the person's body. With a well chosen initial configuration, a robot can better reach locations important to an assistive task despite model error, pose uncertainty and other sources of variation. However, finding effective configurations can be challenging due to complex geometry, a large number of degrees of freedom, task complexity and other factors. We present task-centric optimization of robot configurations (TOC), which is an algorithm that finds configurations from which the robot can better reach task-relevant locations and handle task variation. Notably, TOC can return more than one configuration that, when used sequentially, enable a simulated assistive robot to reach more task-relevant locations. TOC performs substantial offline computation to generate a function that can be applied rapidly online to select robot configurations based on current observations. TOC explicitly models the task, environment, and user, and implicitly handles error using representations of robot dexterity. We evaluated TOC in simulation with a PR2 assisting a user with 9 assistive tasks in both a wheelchair and a robotic bed. TOC had an overall average success rate of 90.6% compared to 50.4%, 43.5%, and 58.9% for three baseline methods from the literature. We additionally demonstrate how TOC can find configurations for more than one robot and can be used to assist in designing or optimizing environments.
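The benefit of using more than one configuration sequentially, as described in the abstract above, can be seen in a small coverage sketch. This is not the TOC algorithm: it assumes a hypothetical, precomputed table of which goal locations each candidate configuration can reach, and simply searches exhaustively for the single configuration or pair whose combined reach covers the most goals. The configuration and goal names are invented for illustration.

```python
from itertools import combinations


def best_configurations(reachable, max_configs=2):
    """Pick up to `max_configs` configurations covering the most goals.

    `reachable` maps a configuration name to the set of goal ids it can
    reach. Ties are broken in favor of fewer configurations.
    """
    best_combo, best_covered = (), set()
    for k in range(1, max_configs + 1):
        for combo in combinations(sorted(reachable), k):
            covered = set().union(*(reachable[c] for c in combo))
            if len(covered) > len(best_covered):
                best_combo, best_covered = combo, covered
    return best_combo, best_covered


# Hypothetical reachability table for a robot positioned around a bed.
reachable = {
    "left_of_bed": {"knee_l", "shoulder_l", "mouth"},
    "right_of_bed": {"knee_r", "shoulder_r", "mouth"},
    "foot_of_bed": {"knee_l", "knee_r"},
}
combo, covered = best_configurations(reachable)
```

In this toy table no single configuration reaches more than three goals, while the pair `("left_of_bed", "right_of_bed")` reaches five.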
