Björn Ottersten

Interdisciplinary Centre for Security, Reliability and Trust

Quasi-Synchronous Random Access for Massive MIMO-Based LEO Satellite Constellations

Apr 10, 2023

Abstract:Low earth orbit (LEO) satellite constellation-enabled communication networks are expected to be an important part of many Internet of Things (IoT) deployments due to their unique advantage of providing seamless global coverage. In this paper, we investigate the random access problem in massive multiple-input multiple-output-based LEO satellite systems, where the multi-satellite cooperative processing mechanism is considered. Specifically, at edge satellite nodes, we conceive a training sequence padded multi-carrier system to overcome the issue of imperfect synchronization, where the training sequence is utilized to detect the devices' activity and estimate their channels. Considering the inherent sparsity of terrestrial-satellite links and the sporadic traffic feature of IoT terminals, we utilize the orthogonal approximate message passing-multiple measurement vector algorithm to estimate the delay coefficients and user terminal activity. To further utilize the structure of the receive array, a two-dimensional estimation of signal parameters via rotational invariance technique is performed for enhancing channel estimation. Finally, at the central server node, we propose a majority voting scheme to enhance activity detection by aggregating backhaul information from multiple satellites. Moreover, multi-satellite cooperative linear data detection and multi-satellite cooperative Bayesian dequantization data detection are proposed to cope with perfect and quantized backhaul, respectively. Simulation results verify the effectiveness of our proposed schemes in terms of channel estimation, activity detection, and data detection for quasi-synchronous random access in satellite systems.
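The majority voting step at the central server node can be illustrated with a minimal sketch. It assumes each satellite forwards one hard binary activity decision per device, and that ties count as inactive; the function name `majority_vote` and the example decisions are hypothetical and are not taken from the paper.

```python
import numpy as np

def majority_vote(decisions):
    """Fuse per-satellite activity decisions by strict majority.

    decisions: array of shape (num_satellites, num_devices) with 0/1 entries.
    Returns a boolean array of shape (num_devices,).
    Assumption: a tie is treated as "inactive".
    """
    decisions = np.asarray(decisions, dtype=bool)
    num_satellites = decisions.shape[0]
    votes = decisions.sum(axis=0)          # satellites that flagged each device
    return votes * 2 > num_satellites      # strict majority

# Hypothetical local decisions from three satellites for four devices.
local = np.array([
    [1, 0, 1, 0],
    [1, 0, 0, 0],
    [1, 1, 1, 0],
])
fused = majority_vote(local)
print(fused.tolist())
```

A device flagged by only one of three satellites is rejected, which is how the aggregation can suppress isolated false alarms from a single edge node.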

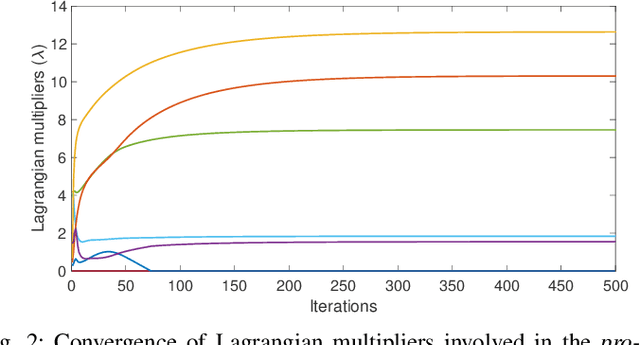

Energy-Efficient RIS-Enabled NOMA Communication for 6G LEO Satellite Networks

Mar 09, 2023

Abstract:This paper proposes an energy-efficient RIS-enabled NOMA communication framework for LEO satellite networks. The proposed framework simultaneously optimizes the transmit power of ground terminals at the LEO satellite and the passive beamforming at the RIS while ensuring the quality of service. Due to the nature of the considered system and optimization variables, the energy efficiency maximization problem is non-convex. In practice, it is very challenging to obtain the optimal solution for such problems. Therefore, we adopt an alternating optimization method to handle the joint optimization in two steps. In step 1, for any given phase shift vector, we calculate efficient power for ground terminals at the satellite using the Lagrangian dual method. Then, in step 2, given the transmit power, we design the passive beamforming for the RIS by solving a semi-definite program. Numerical results are provided to validate the proposed solution and demonstrate the benefits of the proposed optimization framework.

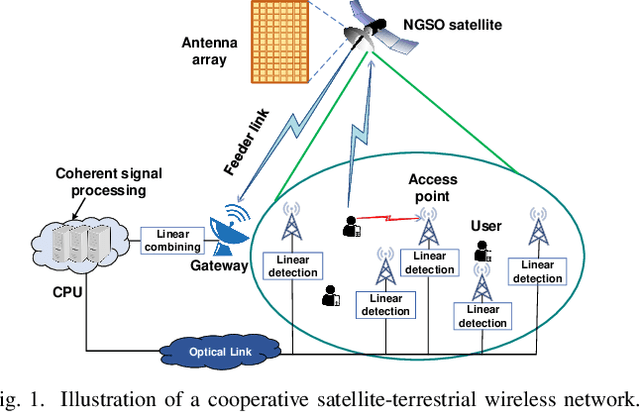

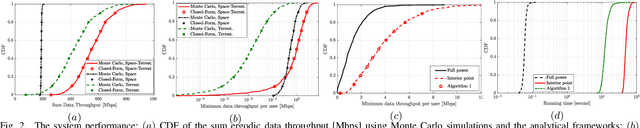

Space-Terrestrial Cooperation Over Spatially Correlated Channels Relying on Imperfect Channel Estimates: Uplink Performance Analysis and Optimization

Dec 21, 2022

Abstract:A whole suite of innovative technologies and architectures has emerged in response to the rapid growth of wireless traffic. This paper studies an integrated network design that boosts system capacity through cooperation between wireless access points (APs) and a satellite for enhancing the network's spectral efficiency. We first mathematically derive an achievable throughput expression for the uplink (UL) data transmission over spatially correlated Rician channels. Our generic achievable throughput expression is applicable for arbitrary received signal detection techniques under realistic imperfect channel estimates. A closed-form expression is then obtained for the ergodic UL data throughput when maximum ratio combining is utilized for detecting the desired signals. As for our resource allocation contributions, we formulate the max-min fairness and total transmit power optimization problems relying on the channel statistics for performing power allocation. The solution of each optimization problem is derived in the form of a low-complexity iterative design, in which each data power variable is updated relying on a closed-form expression. Our integrated hybrid network concept allows users to be served who might not otherwise be accommodated due to excessive data demands. The proposed algorithms allow us to address the congestion issues that appear when at least one user is served at a rate below the target. The mathematical analysis is also illustrated with the aid of our numerical results, which show the added benefits of considering the space links in terms of improving the ergodic data throughput. Furthermore, the proposed algorithms smoothly circumvent any potential congestion, especially in the face of high rate requirements and weak channel conditions.

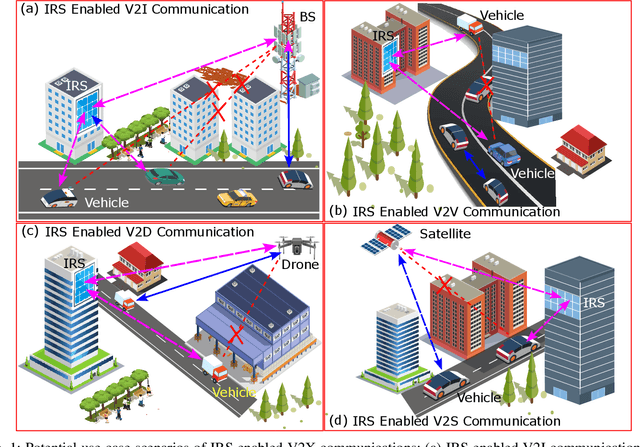

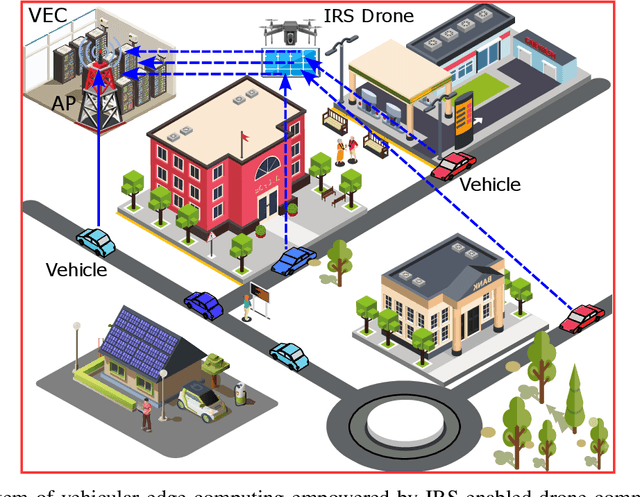

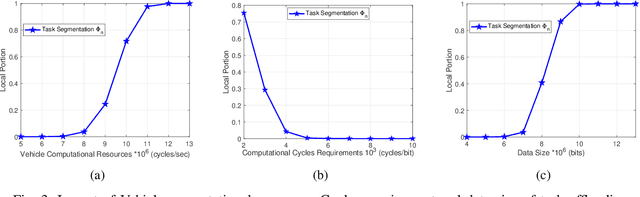

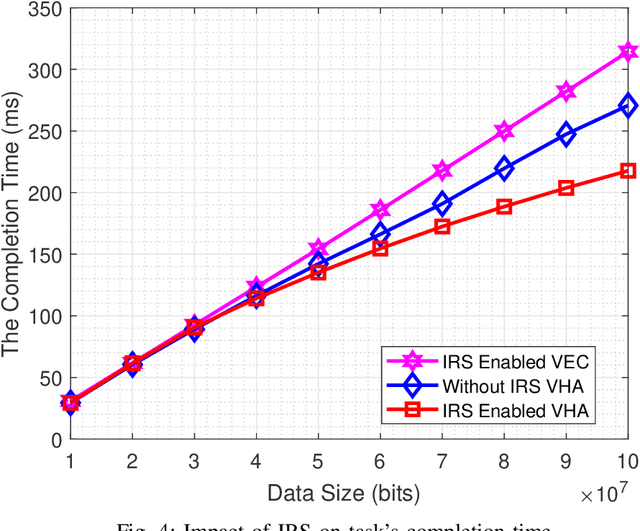

Opportunities for Intelligent Reflecting Surfaces in 6G-Empowered V2X Communications

Oct 02, 2022

Abstract:This paper first describes the introduction of 6G-empowered V2X communications and IRS technology. Then it discusses different use case scenarios of IRS enabled V2X communications and reports recent advances in the existing literature. Next, we focus our attention on the scenario of vehicular edge computing involving IRS enabled drone communications in order to reduce vehicle computational time via optimal computational and communication resource allocation. At the end, this paper highlights current challenges and discusses future perspectives of IRS enabled V2X communications in order to improve current work and spark new ideas.

Hybrid Beamforming in mmWave Dual-Function Radar-Communication Systems: Models, Technologies, and Challenges

Sep 13, 2022

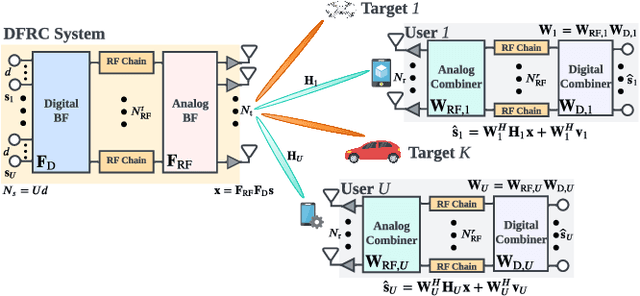

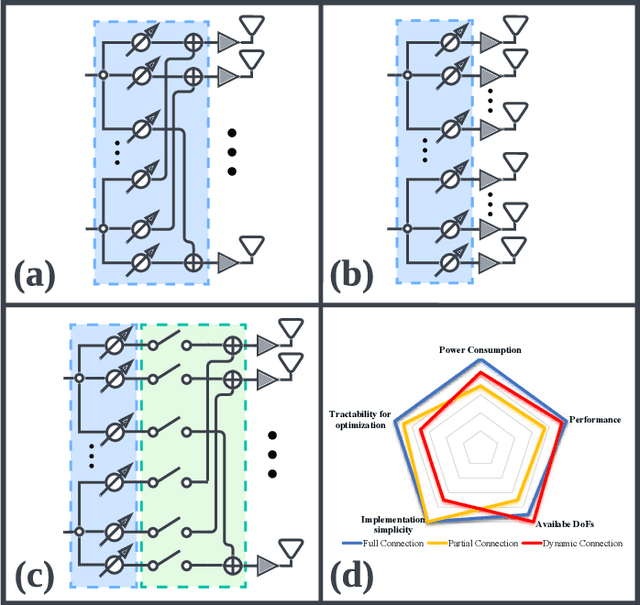

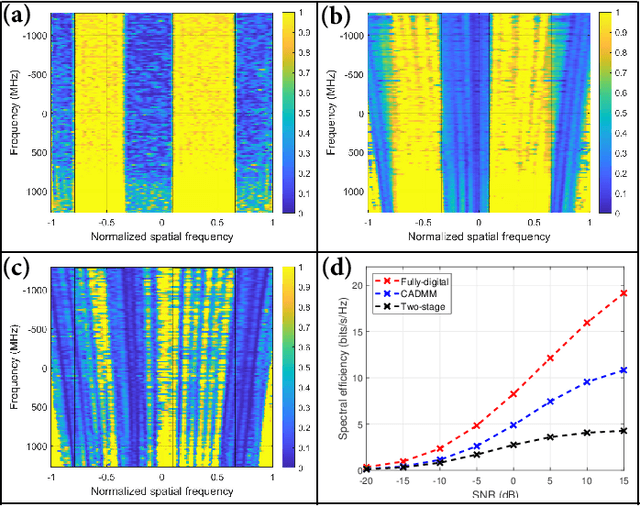

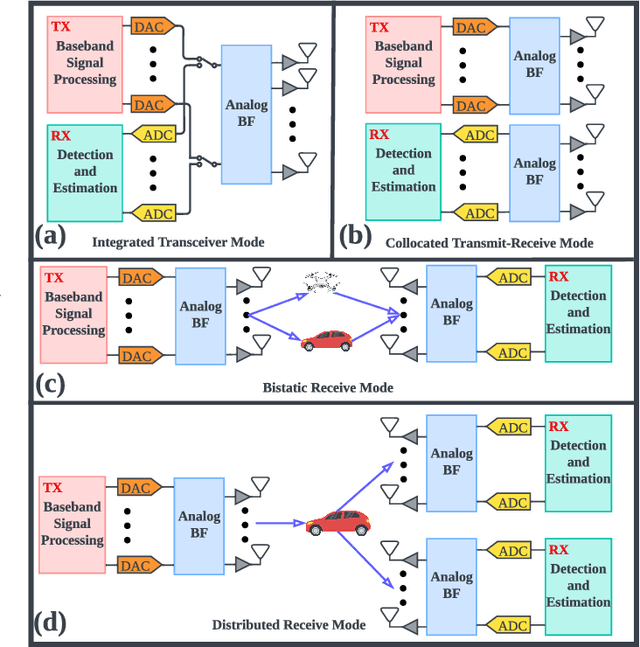

Abstract:As a promising technology in beyond-5G (B5G) and 6G, dual-function radar-communication (DFRC) aims to ensure both radar sensing and communication on a single integrated platform with unified signaling schemes. To achieve accurate sensing and reliable communication, large-scale arrays are anticipated to be implemented in such systems, which raises prominent issues of hardware cost and power consumption. To address these issues, hybrid beamforming (HBF), beyond its successful deployment in communication-only systems, could be a promising approach in the emerging DFRC ones. In this article, we investigate the development of HBF techniques for the DFRC system in a self-contained manner. Specifically, we first introduce the basics of the HBF-based DFRC system, with a focus on the system model and the different receive modes. Then we illustrate the corresponding design principles, which span from the performance metrics and optimization formulations to the design approaches and our preliminary results. Finally, potential extensions and key research opportunities, such as the combination with the reconfigurable intelligent surface, are discussed concisely.

Power Allocation for Space-Terrestrial Cooperation Systems with Statistical CSI

Sep 03, 2022

Abstract:This paper studies an integrated network design that boosts system capacity through cooperation between wireless access points (APs) and a satellite. By coherently combining the signals received by the central processing unit from the users through the space and terrestrial links, we mathematically derive an achievable throughput expression for the uplink (UL) data transmission over spatially correlated Rician channels. A closed-form expression is obtained when maximum ratio combining is employed to detect the desired signals. We formulate the max-min fairness and total transmit power optimization problems relying on the channel statistics to perform power allocation. The solution of each optimization problem is derived in the form of a low-complexity iterative design, in which each data power variable is updated based on a closed-form expression. The mathematical analysis is validated with numerical results showing the added benefits of considering a satellite link in terms of improving the ergodic data throughput.

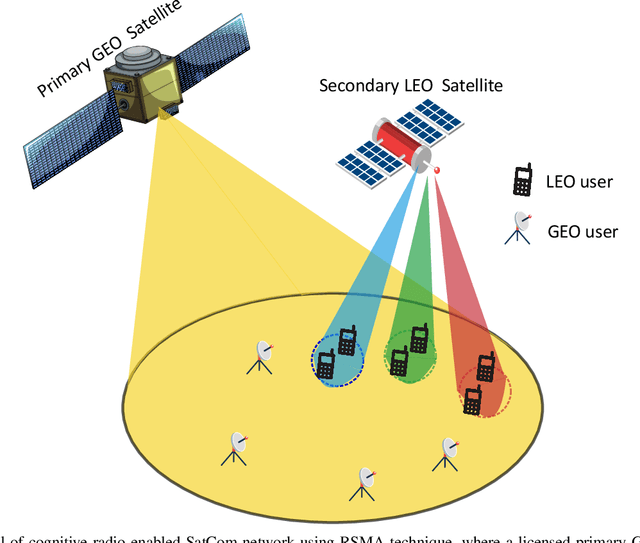

Rate Splitting Multiple Access for Next Generation Cognitive Radio Enabled LEO Satellite Networks

Aug 07, 2022

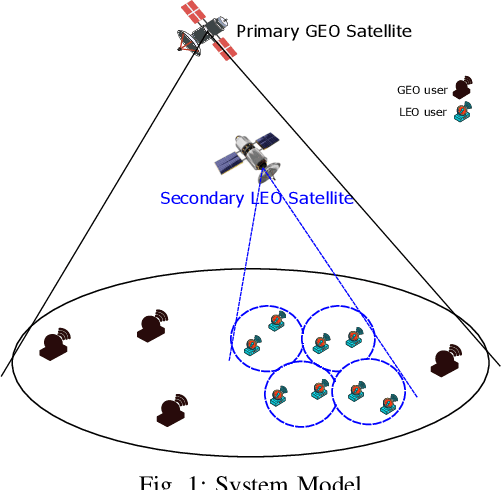

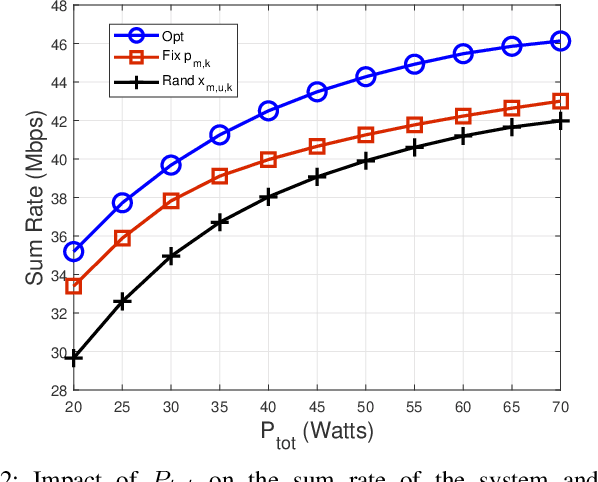

Abstract:This paper proposes a cognitive radio enabled LEO SatCom using RSMA radio access technique with the coexistence of GEO SatCom network. In particular, this work aims to maximize the sum rate of LEO SatCom by simultaneously optimizing the power budget over different beams, RSMA power allocation for users over each beam, and subcarrier user assignment while restricting the interference temperature to GEO SatCom. The problem of sum rate maximization is formulated as non-convex, where the global optimal solution is challenging to obtain. Thus, an efficient solution can be obtained in three steps: first we employ a successive convex approximation technique to reduce the complexity and make the problem more tractable. Second, for any given resource block user assignment, we adopt KKT conditions to calculate the transmit power over different beams and RSMA power allocation of users over each beam. Third, using the allocated power, we design an efficient algorithm based on the greedy approach for resource block user assignment. Numerical results demonstrate the benefits of the proposed optimization scheme compared to the benchmark schemes.
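The third step, greedy resource block user assignment under already-allocated power, can be sketched as follows. This is only an illustration under strong simplifying assumptions: a single beam, no rate splitting, no interference temperature constraint, and a rate of `log2(1 + p * g / noise)` per user and subcarrier. The function `greedy_assignment` and the gain values are hypothetical, not the paper's algorithm.

```python
import numpy as np

def greedy_assignment(gain, power, noise=1.0):
    """Give each subcarrier to the user with the highest achievable rate.

    gain:  (num_users, num_subcarriers) channel gains (hypothetical values).
    power: scalar or broadcastable array of transmit powers, assumed fixed
           by an earlier power-allocation step.
    Returns (owner, sum_rate), where owner[k] is the user on subcarrier k.
    """
    gain = np.asarray(gain, dtype=float)
    rate = np.log2(1.0 + power * gain / noise)     # per user, per subcarrier
    owner = rate.argmax(axis=0)                    # greedy choice per subcarrier
    sum_rate = rate[owner, np.arange(rate.shape[1])].sum()
    return owner, float(sum_rate)

# Two users, three subcarriers, unit power and unit noise.
gain = [[3.0, 1.0, 0.0],
        [1.0, 7.0, 1.0]]
owner, sum_rate = greedy_assignment(gain, power=1.0)
print(owner.tolist(), sum_rate)
```

Because each subcarrier is decided independently, the cost is linear in the number of user and subcarrier pairs, which is the usual appeal of a greedy rule over an exhaustive search of assignments.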

Rate Splitting Multiple Access for Cognitive Radio GEO-LEO Co-Existing Satellite Networks

Aug 04, 2022

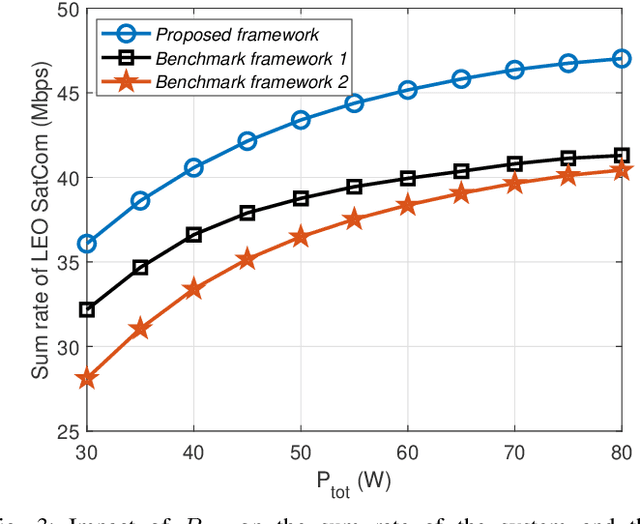

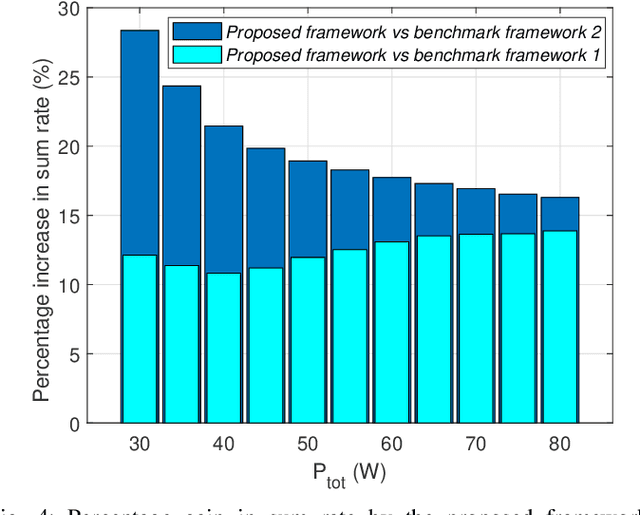

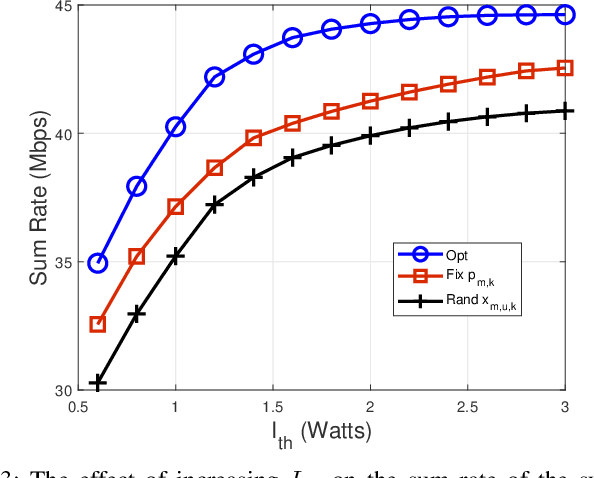

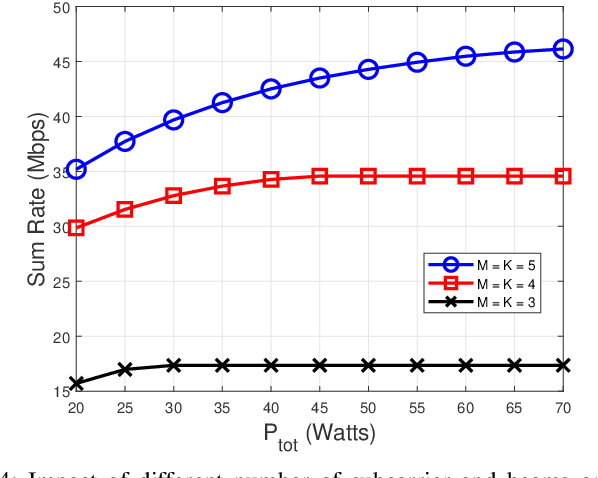

Abstract:LEO satellite communication has drawn particular attention recently due to its high data rate services and low round-trip latency. LEO satellites are low-cost to launch and can provide global coverage. However, spectrum scarcity might be one of the critical challenges in the growth of LEO satellites, imposing severe restrictions on the development of ground-space integrated networks. To address this issue, we propose RSMA for a CR enabled GEO-LEO coexisting satellite network. In particular, this work aims to maximize the system's sum rate by simultaneously optimizing the power allocation and subcarrier beam assignment of LEO satellite communication while restricting the interference temperature to GEO satellite users. The problem of sum rate maximization is formulated as non-convex, and a globally optimal solution is challenging to obtain. Therefore, we first employ the successive convex approximation technique to reduce the complexity and make the problem more tractable. Then, for the power allocation, we exploit the KKT conditions, and we adopt an efficient algorithm based on the greedy approach for subcarrier beam assignment. We also propose two suboptimal schemes with fixed power allocation and random subcarrier beam assignment as the benchmark. Results demonstrate the benefits of the proposed scheme compared to the benchmark schemes.

Joint Beam Placement and Load Balancing Optimization for Non-Geostationary Satellite Systems

Jul 29, 2022

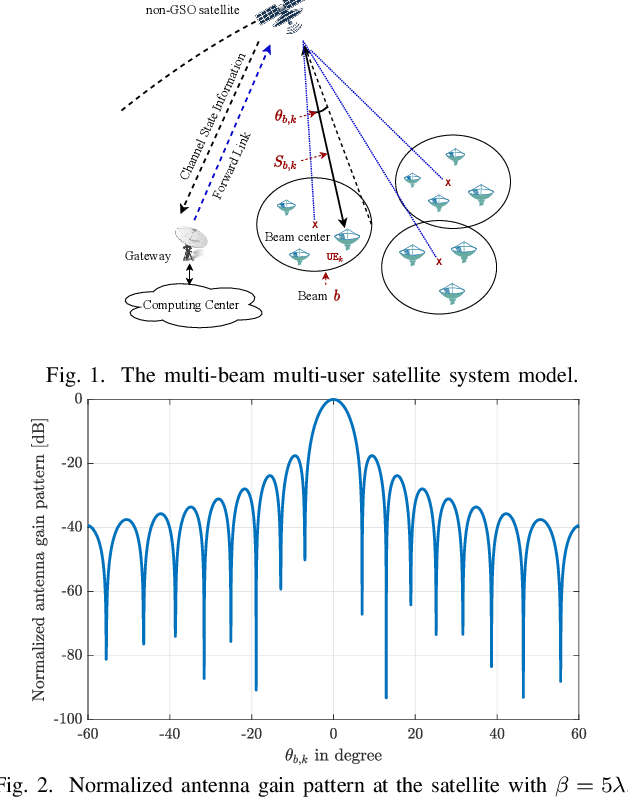

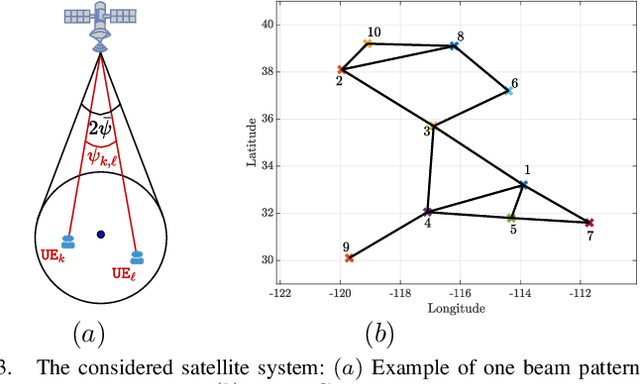

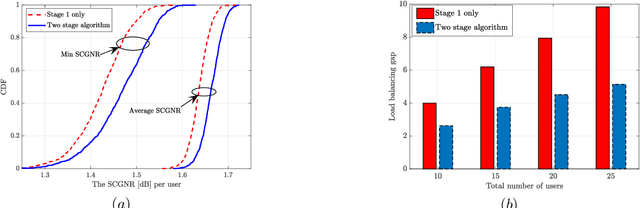

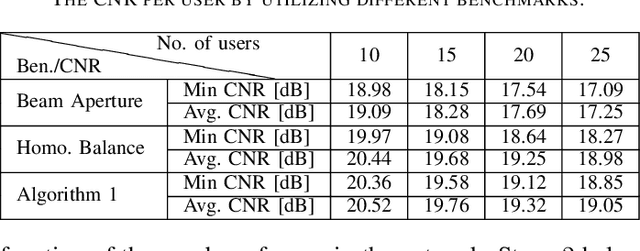

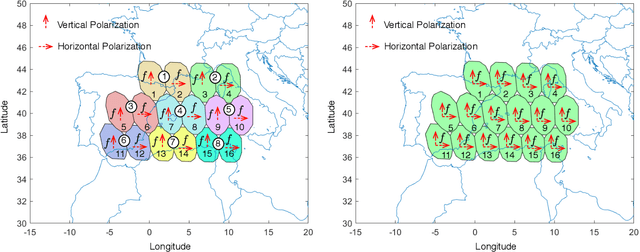

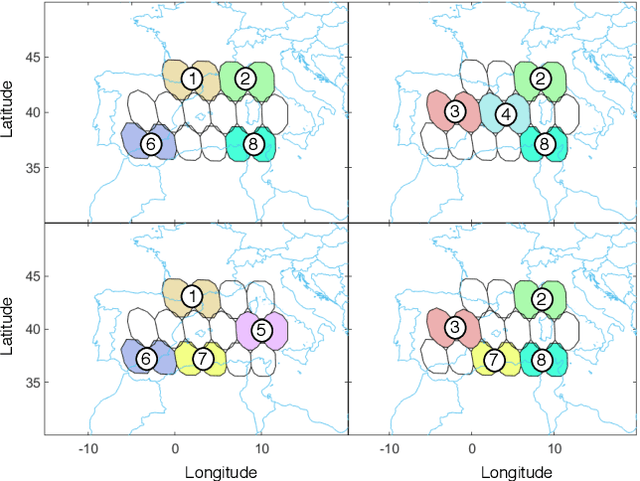

Abstract:Non-geostationary (Non-GSO) satellite constellations have emerged as a promising solution to enable ubiquitous high-speed low-latency broadband services by generating multiple spot-beams placed on the ground according to the user locations. However, there is an inherent trade-off between the number of active beams and the complexity of generating a large number of beams. This paper formulates and solves a joint beam placement and load balancing problem to carefully optimize the satellite beams and enhance the link budgets with a minimal number of active beams. We propose a two-stage algorithm design to overcome the combinatorial structure of the considered optimization problem, providing a solution in polynomial time. The first stage minimizes the number of active beams, while the second stage performs load balancing to distribute users in the coverage area of the active beams. Numerical results confirm the benefits of the proposed methodology both in carrier-to-noise ratio and multiplexed users per beam over other benchmarks.
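The two-stage structure can be illustrated with a simple stand-in heuristic; it is not the paper's algorithm. Stage 1 here uses a greedy set-cover rule over a hypothetical set of candidate beam centers with a fixed coverage radius, and stage 2 assigns each user to the least-loaded active beam that covers it. All names, coordinates, and the circular-footprint model are assumptions.

```python
import numpy as np

def place_beams(users, candidates, radius):
    """Stage 1 (greedy set cover): pick candidate beam centers until every
    user lies within `radius` of at least one active beam."""
    dist = np.linalg.norm(users[:, None, :] - candidates[None, :, :], axis=2)
    covers = dist <= radius                       # (num_users, num_candidates)
    uncovered = np.ones(len(users), dtype=bool)
    active = []
    while uncovered.any():
        gain = (covers & uncovered[:, None]).sum(axis=0)
        best = int(gain.argmax())                 # beam covering most new users
        if gain[best] == 0:
            raise ValueError("some users cannot be covered by any candidate")
        active.append(best)
        uncovered &= ~covers[:, best]
    return active, covers

def balance_load(covers, active):
    """Stage 2: assign users to the least-loaded covering beam, handling the
    users with the fewest options first."""
    load = {b: 0 for b in active}
    assignment = np.full(covers.shape[0], -1)
    options = covers[:, active].sum(axis=1)
    for u in np.argsort(options, kind="stable"):
        feasible = [b for b in active if covers[u, b]]
        chosen = min(feasible, key=lambda b: load[b])
        assignment[u] = chosen
        load[chosen] += 1
    return assignment, load

# Five users on a line and two candidate beam centers (hypothetical layout).
users = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 0.0], [2.2, 0.0], [3.0, 0.0]])
candidates = np.array([[1.0, 0.0], [3.0, 0.0]])
active, covers = place_beams(users, candidates, radius=1.5)
assignment, load = balance_load(covers, active)
print(active, assignment.tolist(), load)
```

In this layout the two users in the overlap region are split between the beams, so the loads end up at 3 and 2 rather than 4 and 1. Greedy set cover runs in polynomial time but does not guarantee the minimum number of beams in general.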

Joint Beam Hopping and Carrier Aggregation in High Throughput Multi-Beam Satellite Systems

Jun 24, 2022

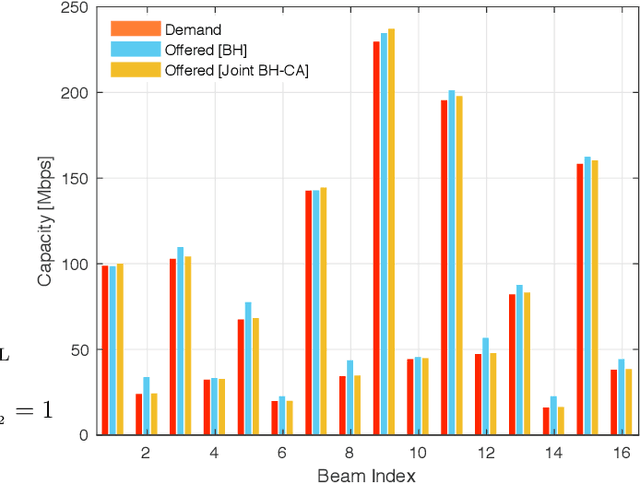

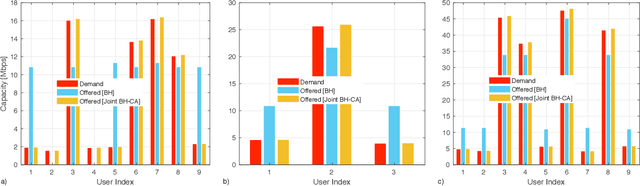

Abstract:Beam hopping (BH) and carrier aggregation (CA) are two promising technologies for next generation satellite communication systems to achieve several orders of magnitude increase in system capacity and to significantly improve the spectral efficiency. While BH allows great flexibility in adapting the offered capacity to the heterogeneous demand, CA further enhances the user quality-of-service (QoS) by allowing the user to pool resources from multiple adjacent beams. In this paper, we consider a multi-beam high throughput satellite (HTS) system that employs BH in conjunction with CA to capitalize on the mutual interplay between both techniques. Particularly, an innovative joint BH-CA scheme is proposed and analyzed in this work to utilize their individual competencies. This includes designing an efficient joint time-space beam illumination pattern for BH and a multi-user aggregation strategy for CA. Through this, user-carrier assignment, transponder filling-rates, beam hopping pattern, and illumination duration are all simultaneously optimized by formulating a joint optimization problem as a multi-objective mixed integer linear programming problem (MINLP). Simulation results are provided to corroborate our analysis, demonstrate the design tradeoffs, and point out the potential of the proposed joint BH-CA concept. Advantages of our BH-CA scheme versus the conventional BH method without CA are investigated and presented under the same system conditions.
