Ankit Prabhu

Heterogeneous Robot Collaboration in Unstructured Environments with Grounded Generative Intelligence

Oct 30, 2025Abstract:Heterogeneous robot teams operating in realistic settings often must accomplish complex missions requiring collaboration and adaptation to information acquired online. Because robot teams frequently operate in unstructured environments -- uncertain, open-world settings without prior maps -- subtasks must be grounded in robot capabilities and the physical world. While heterogeneous teams have typically been designed for fixed specifications, generative intelligence opens the possibility of teams that can accomplish a wide range of missions described in natural language. However, current large language model (LLM)-enabled teaming methods typically assume well-structured and known environments, limiting deployment in unstructured environments. We present SPINE-HT, a framework that addresses these limitations by grounding the reasoning abilities of LLMs in the context of a heterogeneous robot team through a three-stage process. Given language specifications describing mission goals and team capabilities, an LLM generates grounded subtasks which are validated for feasibility. Subtasks are then assigned to robots based on capabilities such as traversability or perception and refined given feedback collected during online operation. In simulation experiments with closed-loop perception and control, our framework achieves nearly twice the success rate compared to prior LLM-enabled heterogeneous teaming approaches. In real-world experiments with a Clearpath Jackal, a Clearpath Husky, a Boston Dynamics Spot, and a high-altitude UAV, our method achieves an 87\% success rate in missions requiring reasoning about robot capabilities and refining subtasks with online feedback. More information is provided at https://zacravichandran.github.io/SPINE-HT.

4D Metric-Semantic Mapping for Persistent Orchard Monitoring: Method and Dataset

Sep 29, 2024

Abstract:Automated persistent and fine-grained monitoring of orchards at the individual tree or fruit level helps maximize crop yield and optimize resources such as water, fertilizers, and pesticides while preventing agricultural waste. Towards this goal, we present a 4D spatio-temporal metric-semantic mapping method that fuses data from multiple sensors, including LiDAR, RGB camera, and IMU, to monitor the fruits in an orchard across their growth season. A LiDAR-RGB fusion module is designed for 3D fruit tracking and localization, which first segments fruits using a deep neural network and then tracks them using the Hungarian Assignment algorithm. Additionally, the 4D data association module aligns data from different growth stages into a common reference frame and tracks fruits spatio-temporally, providing information such as fruit counts, sizes, and positions. We demonstrate our method's accuracy in 4D metric-semantic mapping using data collected from a real orchard under natural, uncontrolled conditions with seasonal variations. We achieve a 3.1 percent error in total fruit count estimation for over 1790 fruits across 60 apple trees, along with accurate size estimation results with a mean error of 1.1 cm. The datasets, consisting of LiDAR, RGB, and IMU data of five fruit species captured across their growth seasons, along with corresponding ground truth data, will be made publicly available at: https://4d-metric-semantic-mapping.org/

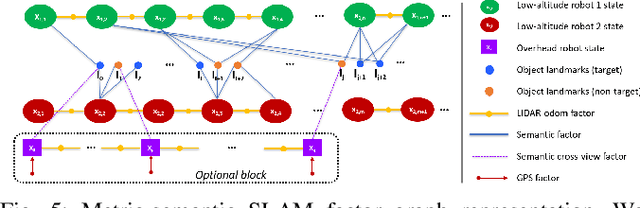

SlideSLAM: Sparse, Lightweight, Decentralized Metric-Semantic SLAM for Multi-Robot Navigation

Jun 25, 2024

Abstract:This paper develops a real-time decentralized metric-semantic Simultaneous Localization and Mapping (SLAM) approach that leverages a sparse and lightweight object-based representation to enable a heterogeneous robot team to autonomously explore 3D environments featuring indoor, urban, and forested areas without relying on GPS. We use a hierarchical metric-semantic representation of the environment, including high-level sparse semantic maps of object models and low-level voxel maps. We leverage the informativeness and viewpoint invariance of the high-level semantic map to obtain an effective semantics-driven place-recognition algorithm for inter-robot loop closure detection across aerial and ground robots with different sensing modalities. A communication module is designed to track each robot's observations and those of other robots within the communication range. Such observations are then used to construct a merged map. Our framework enables real-time decentralized operations onboard robots, allowing them to opportunistically leverage communication. We integrate and deploy our proposed framework on three types of aerial and ground robots. Extensive experimental results show an average localization error of 0.22 meters in position and -0.16 degrees in orientation, an object mapping F1 score of 0.92, and a communication packet size of merely 2-3 megabytes per kilometer trajectory with 1,000 landmarks. The project website can be found at https://xurobotics.github.io/slideslam/.

TreeScope: An Agricultural Robotics Dataset for LiDAR-Based Mapping of Trees in Forests and Orchards

Oct 03, 2023

Abstract:Data collection for forestry, timber, and agriculture currently relies on manual techniques which are labor-intensive and time-consuming. We seek to demonstrate that robotics offers improvements over these techniques and accelerate agricultural research, beginning with semantic segmentation and diameter estimation of trees in forests and orchards. We present TreeScope v1.0, the first robotics dataset for precision agriculture and forestry addressing the counting and mapping of trees in forestry and orchards. TreeScope provides LiDAR data from agricultural environments collected with robotics platforms, such as UAV and mobile robot platforms carried by vehicles and human operators. In the first release of this dataset, we provide ground-truth data with over 1,800 manually annotated semantic labels for tree stems and field-measured tree diameters. We share benchmark scripts for these tasks that researchers may use to evaluate the accuracy of their algorithms. Finally, we run our open-source diameter estimation and off-the-shelf semantic segmentation algorithms and share our baseline results.

Robust Localization of Aerial Vehicles via Active Control of Identical Ground Vehicles

Aug 13, 2023

Abstract:This paper addresses the problem of active collaborative localization in heterogeneous robot teams with unknown data association. It involves positioning a small number of identical unmanned ground vehicles (UGVs) at desired positions so that an unmanned aerial vehicle (UAV) can, through unlabelled measurements of UGVs, uniquely determine its global pose. We model the problem as a sequential two player game, in which the first player positions the UGVs and the second identifies the two distinct hypothetical poses of the UAV at which the sets of measurements to the UGVs differ by as little as possible. We solve the underlying problem from the vantage point of the first player for a subclass of measurement models using a mixture of local optimization and exhaustive search procedures. Real-world experiments with a team of UAV and UGVs show that our method can achieve centimeter-level global localization accuracy. We also show that our method consistently outperforms random positioning of UGVs by a large margin, with as much as a 90% reduction in position and angular estimation error. Our method can tolerate a significant amount of random as well as non-stochastic measurement noise. This indicates its potential for reliable state estimation on board size, weight, and power (SWaP) constrained UAVs. This work enables robust localization in perceptually-challenged GPS-denied environments, thus paving the road for large-scale multi-robot navigation and mapping.

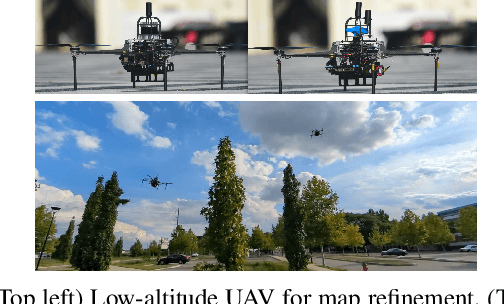

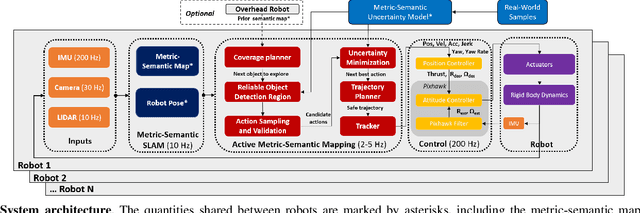

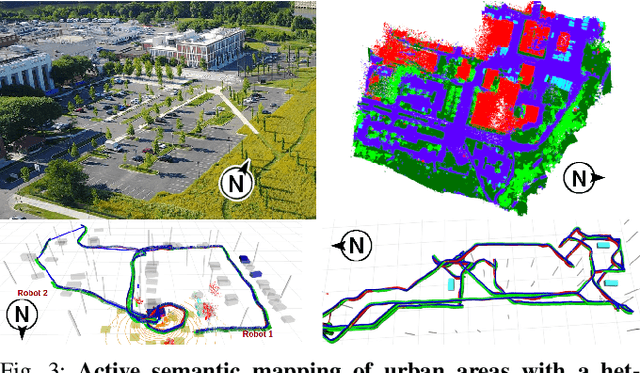

Active Metric-Semantic Mapping by Multiple Aerial Robots

Sep 24, 2022

Abstract:Traditional approaches for active mapping focus on building geometric maps. For most real-world applications, however, actionable information is related to semantically meaningful objects in the environment. We propose an approach to the active metric-semantic mapping problem that enables multiple heterogeneous robots to collaboratively build a map of the environment. The robots actively explore to minimize the uncertainties in both semantic (object classification) and geometric (object modeling) information. We represent the environment using informative but sparse object models, each consisting of a basic shape and a semantic class label, and characterize uncertainties empirically using a large amount of real-world data. Given a prior map, we use this model to select actions for each robot to minimize uncertainties. The performance of our algorithm is demonstrated through multi-robot experiments in diverse real-world environments. The proposed framework is applicable to a wide range of real-world problems, such as precision agriculture, infrastructure inspection, and asset mapping in factories. A demo video can be found at https://youtu.be/S86SgXi54oU.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge