"Time": models, code, and papers

A robust low data solution: dimension prediction of semiconductor nanorods

Oct 27, 2020

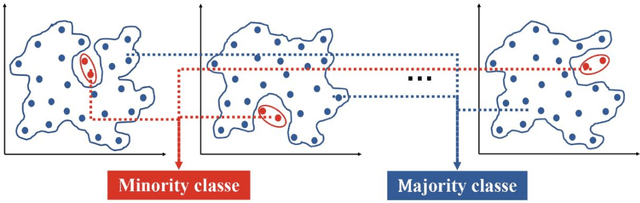

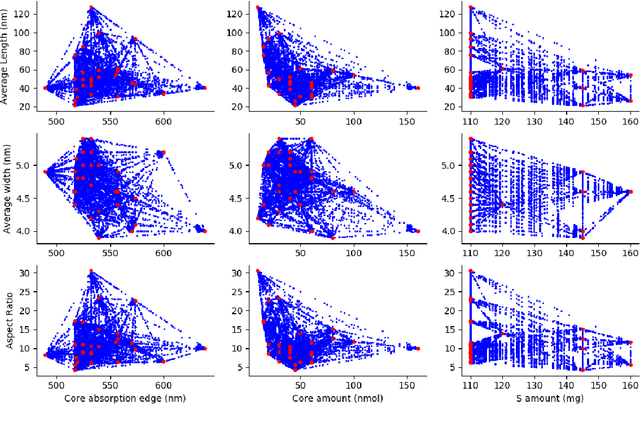

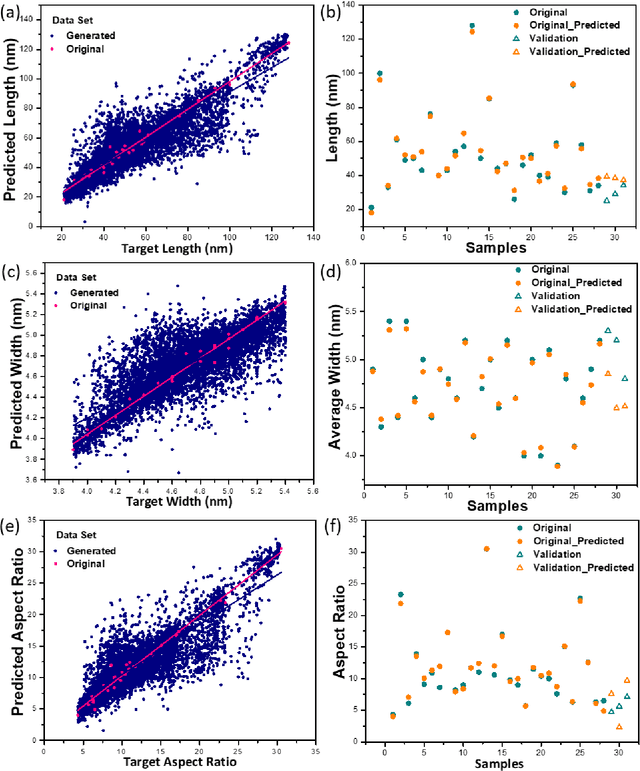

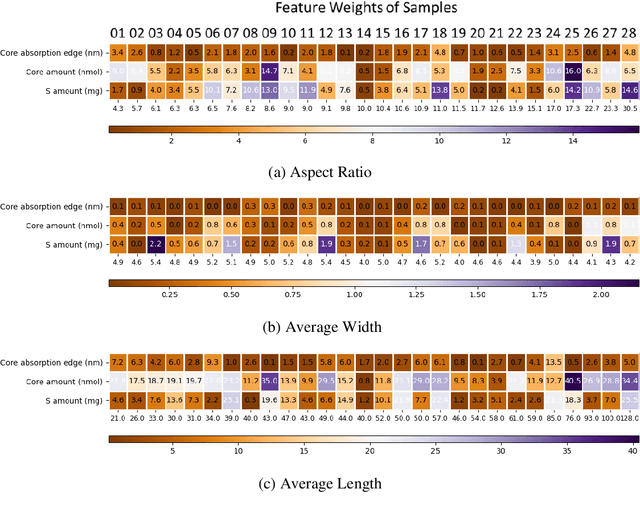

Precise control over the dimensions of nanocrystals is critical for tuning their properties for various applications. However, traditional control through experimental optimization is slow and tedious. Herein, a robust deep neural network-based regression algorithm is developed for precise prediction of the length, width, and aspect ratio of semiconductor nanorods (NRs). Because only limited experimental data are available (28 samples), the Synthetic Minority Oversampling Technique for regression (SMOTE-REG) is employed for the first time for data generation. A deep neural network is then used to build a regression model that predicts well on both the original and the generated data, which have similar distributions. The prediction model is further validated with additional experimental data, showing accurate prediction results. Additionally, Local Interpretable Model-Agnostic Explanations (LIME) is used to interpret the weight of each variable, which corresponds to its importance for the target dimension and is shown to correlate well with experimental observations.
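The oversampling idea in this abstract can be illustrated with a minimal sketch. It assumes the simplest SMOTE-style scheme for regression: a synthetic sample is an interpolation between a randomly chosen sample and one of its nearest neighbours, with the target interpolated by the same factor. The function name `smote_regression`, the parameter `k`, and the toy data are hypothetical, and the paper's exact SMOTE-REG variant may differ.

```python
import math
import random


def smote_regression(X, y, n_new, k=3, seed=0):
    """Create n_new synthetic (features, target) pairs by interpolating
    between a random sample and one of its k nearest neighbours.
    Illustrative only; not the paper's exact SMOTE-REG procedure."""
    rng = random.Random(seed)
    synthetic_X, synthetic_y = [], []
    for _ in range(n_new):
        i = rng.randrange(len(X))
        # Rank all other samples by Euclidean distance to sample i.
        neighbours = sorted(
            (j for j in range(len(X)) if j != i),
            key=lambda j: math.dist(X[i], X[j]),
        )[:k]
        j = rng.choice(neighbours)
        lam = rng.random()  # interpolation factor in [0, 1)
        synthetic_X.append([a + lam * (b - a) for a, b in zip(X[i], X[j])])
        # Assumption: the target is interpolated with the same factor.
        synthetic_y.append(y[i] + lam * (y[j] - y[i]))
    return synthetic_X, synthetic_y


# Toy data (hypothetical): two synthesis parameters -> one rod dimension.
X = [[1.0, 10.0], [2.0, 12.0], [3.0, 15.0], [4.0, 19.0]]
y = [5.0, 6.5, 8.0, 11.0]
new_X, new_y = smote_regression(X, y, n_new=10, k=2)
```

Because every synthetic point lies on a segment between two real samples, the generated data stay inside the range of the original measurements, which is consistent with the abstract's remark that the original and generated data have similar distributions.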

Improving solar wind forecasting using Data Assimilation

Dec 11, 2020

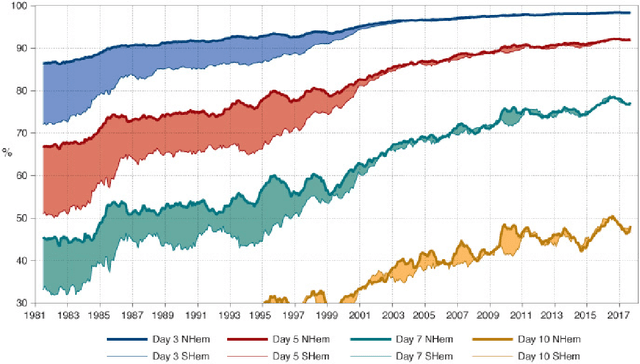

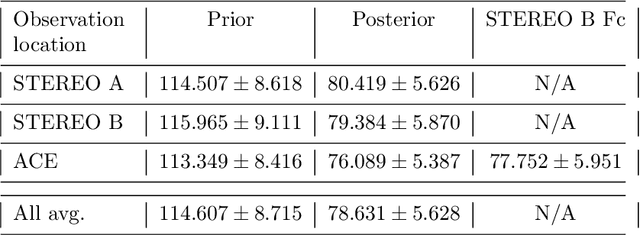

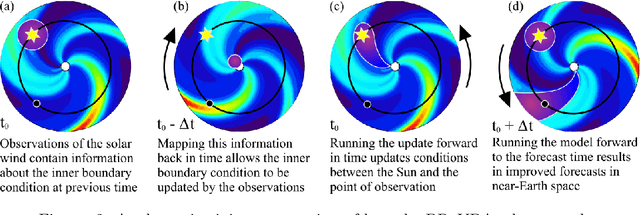

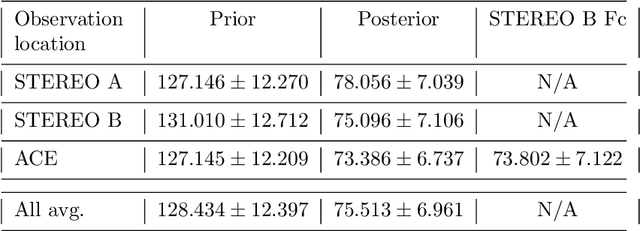

Data Assimilation (DA) has enabled huge improvements in the skill of terrestrial operational weather forecasting. In this study, we use a variational DA scheme with a computationally efficient solar wind model and in situ observations from STEREO A, STEREO B and ACE. This scheme enables solar wind observations far from the Sun, such as at 1 AU, to update and improve the inner boundary conditions of the solar wind model (at 30 solar radii). In this way, observational information can be used to improve estimates of the near-Earth solar wind, even when the observations are not directly downstream of the Earth. This allows improved initial conditions of the solar wind to be passed into forecasting models. To this effect, we employ the HUXt solar wind model to produce 27-day forecasts of the solar wind during the operational time of STEREO B (01/11/2007 to 30/09/2014). At ACE, we compare these DA forecasts to the corotation of STEREO B observations and find that the 27-day RMSEs for STEREO B corotation and DA forecasts are comparable. However, the DA forecast is shown to improve solar wind forecasts when STEREO B's latitude is offset from Earth. The DA scheme also improves the representation of the solar wind in the whole model domain between the Sun and the Earth, which will enable improved forecasting of CME arrival time and speed.

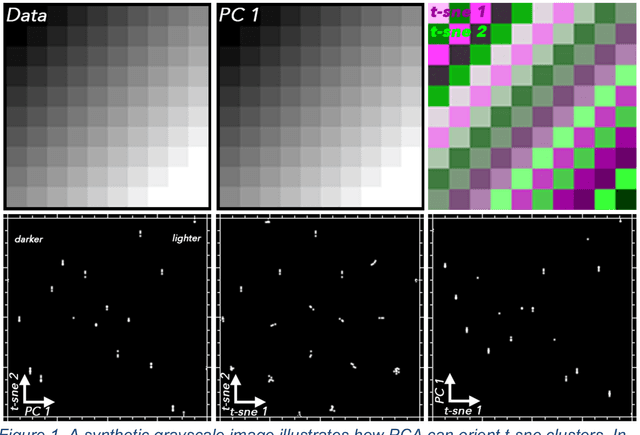

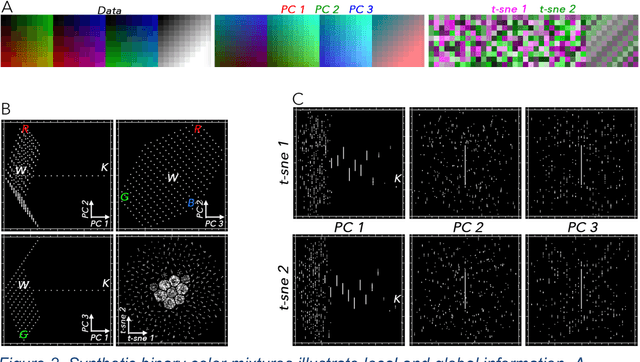

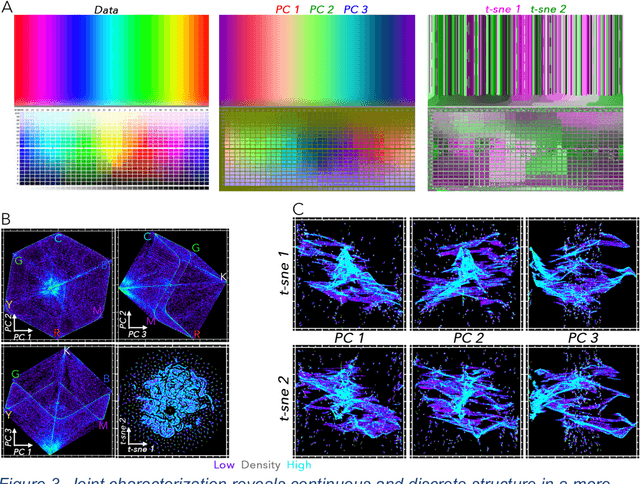

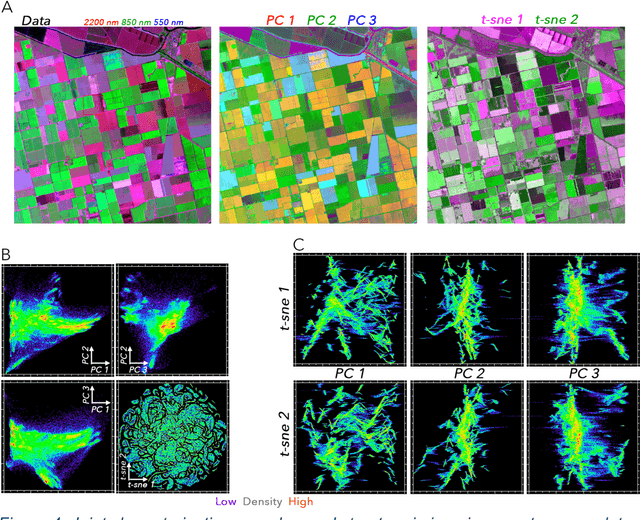

Joint Characterization of Multiscale Information in High Dimensional Data

Feb 18, 2021

High dimensional data can contain multiple scales of variance. Analysis tools that preferentially operate at one scale can be ineffective at capturing all the information present in this cross-scale complexity. We propose a multiscale joint characterization approach designed to exploit synergies between global and local approaches to dimensionality reduction. We illustrate this approach using Principal Component Analysis (PCA) to characterize global variance structure and t-distributed stochastic neighbor embedding (t-SNE) to characterize local variance structure. Using both synthetic images and real-world imaging spectroscopy data, we show that joint characterization is capable of detecting and isolating signals which are not evident from either PCA or t-SNE alone. Broadly, t-SNE is effective at rendering a randomly oriented low-dimensional map of local clusters, and PCA renders this map interpretable by providing global, physically meaningful structure. This approach is illustrated using imaging spectroscopy data, and may prove particularly useful for other geospatial data given robust local variance structure due to spatial autocorrelation and physical interpretability of global variance structure due to spectral properties of Earth surface materials. However, the fundamental premise could easily be extended to other high dimensional datasets, including image time series and non-image data.

Raspberry Pi Based Intelligent Robot that Recognizes and Places Puzzle Objects

Jan 20, 2021

In this study, congestive heart failure (CHF) patients are diagnosed using non-linear second-order difference plots (SODPs) obtained from raw ECG records sampled at 256 Hz and windowed with different durations. All of the data rows are labelled with the record they belong to, so that classification is more realistic. The SODPs are divided into quadrant regions of different radii, and the number of points falling in each quadrant is computed to extract feature vectors. Fisher's linear discriminant, Naive Bayes, and an artificial neural network are used as classifiers. The results are assessed with two validation methods: general k-fold cross-validation and patient-based cross-validation. It is shown that, using a neural network classifier with features obtained from SODPs, the constructed system can distinguish normal and CHF patients with a 100% accuracy rate.
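The feature extraction described above can be sketched as follows. A second-order difference plot pairs each first difference of the signal with the next one; the features are then point counts per quadrant. The function names, the choice of cumulative discs for the radius regions, and the handling of points lying on an axis are assumptions made for illustration, not details taken from the abstract.

```python
import math


def sodp_points(signal):
    """Second-order difference plot: pair each first difference
    x(n) = s(n+1) - s(n) with the next one y(n) = s(n+2) - s(n+1)."""
    d = [b - a for a, b in zip(signal, signal[1:])]
    return list(zip(d, d[1:]))


def quadrant_counts(points, radii):
    """For each radius, count the points inside that radius per quadrant.
    Returns a flat feature vector [Q1, Q2, Q3, Q4] per radius."""
    features = []
    for r in radii:
        counts = [0, 0, 0, 0]  # Q1, Q2, Q3, Q4
        for x, y in points:
            if math.hypot(x, y) > r:
                continue  # outside the current disc
            # Assumption: points on an axis go to the quadrant with >= 0.
            if x >= 0 and y >= 0:
                counts[0] += 1
            elif x < 0 and y >= 0:
                counts[1] += 1
            elif x < 0 and y < 0:
                counts[2] += 1
            else:
                counts[3] += 1
        features.extend(counts)
    return features


# Toy signal (hypothetical values, not ECG data).
points = sodp_points([0, 1, 3, 2, 2, 5])
features = quadrant_counts(points, radii=[2.5, 10])
```

The resulting vector has four entries per radius and could be passed to any of the classifiers named in the abstract.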

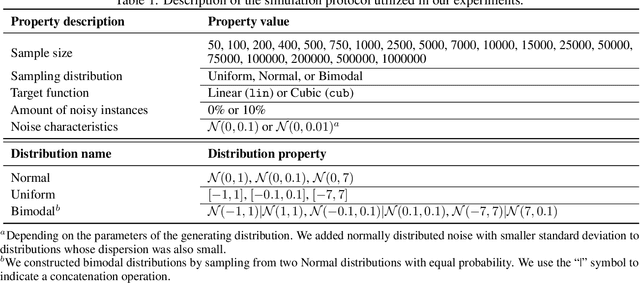

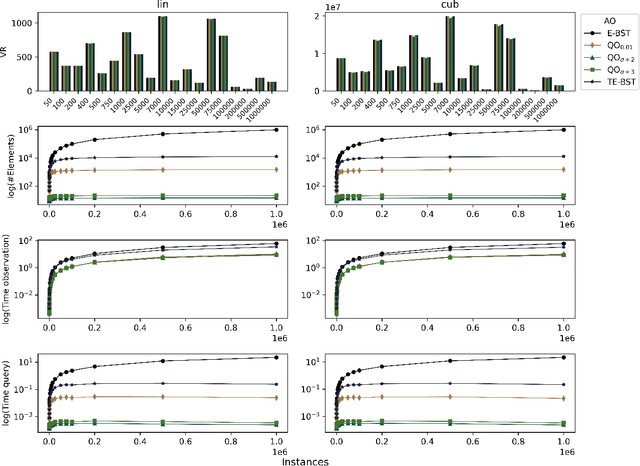

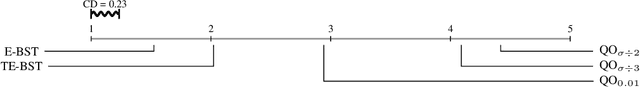

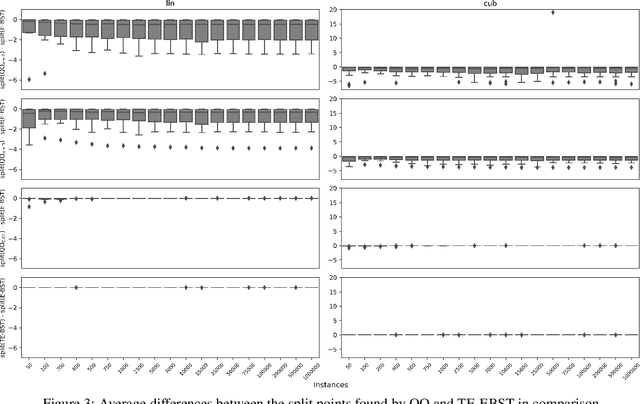

Using dynamical quantization to perform split attempts in online tree regressors

Dec 03, 2020

A central aspect of online decision tree solutions is evaluating the incoming data and enabling model growth. To do so, trees must deal with different kinds of input features and partition them to learn from the data. Numerical features are no exception, and they pose additional challenges compared to other kinds of features, as there is no trivial strategy to choose the best point to make a split decision. The problem is even more challenging in regression tasks because both the features and the target are continuous. Typical online solutions evaluate and store all the points monitored between split attempts, which goes against the constraints posed in real-time applications. In this paper, we introduce the Quantization Observer (QO), a simple yet effective hashing-based algorithm to monitor and evaluate split point candidates in numerical features for online tree regressors. QO can be easily integrated into incremental decision trees, such as Hoeffding Trees, and it has a monitoring cost of $O(1)$ per instance and a sub-linear cost to evaluate split candidates. Previous solutions had an $O(\log n)$ cost per insertion (in the best case) and a linear cost to evaluate split points. Our extensive experimental setup highlights QO's effectiveness in providing accurate split point suggestions while using much less memory and processing time than its competitors.
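A minimal sketch of a hashing-based observer in the spirit of QO is given below. It assumes that each feature value is hashed to a slot by `floor(x / radius)`, that each slot keeps running sums of the feature and target, and that split candidates are midpoints between the mean feature values of adjacent slots, scored by the summed squared error of the two sides. The class name, the `radius` parameter, and these scoring details are illustrative assumptions rather than the paper's specification.

```python
import math


class QuantizationObserver:
    """Illustrative hashing-based split observer for one numerical feature."""

    def __init__(self, radius):
        self.radius = radius
        self.slots = {}  # hash code -> [count, sum_x, sum_y, sum_y_squared]

    def update(self, x, y):
        """O(1) per instance: hash x to a slot and update its statistics."""
        code = math.floor(x / self.radius)
        slot = self.slots.setdefault(code, [0, 0.0, 0.0, 0.0])
        slot[0] += 1
        slot[1] += x
        slot[2] += y
        slot[3] += y * y

    def best_split(self):
        """Scan the slots in sorted order and return the threshold that
        minimises the summed squared error of the two partitions."""
        if len(self.slots) < 2:
            return None
        ordered = [self.slots[c] for c in sorted(self.slots)]
        tot_n = sum(s[0] for s in ordered)
        tot_y = sum(s[2] for s in ordered)
        tot_y2 = sum(s[3] for s in ordered)

        def sse(n, sy, sy2):
            return sy2 - sy * sy / n

        best_cost, best_threshold = math.inf, None
        n = sy = sy2 = 0.0
        for cur, nxt in zip(ordered, ordered[1:]):
            n += cur[0]
            sy += cur[2]
            sy2 += cur[3]
            cost = sse(n, sy, sy2) + sse(tot_n - n, tot_y - sy, tot_y2 - sy2)
            if cost < best_cost:
                best_cost = cost
                # Midpoint between the mean x of the two adjacent slots.
                best_threshold = (cur[1] / cur[0] + nxt[1] / nxt[0]) / 2
        return best_threshold


qo = QuantizationObserver(radius=0.5)
for x, y in [(0.1, 1.0), (0.2, 1.0), (0.3, 1.0), (0.4, 1.0),
             (2.1, 5.0), (2.2, 5.0), (2.3, 5.0)]:
    qo.update(x, y)
threshold = qo.best_split()
```

In this sketch the evaluation cost depends on the number of occupied slots rather than on the number of instances seen, which is the property the abstract highlights.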

Data Association Between Perception and V2V Communication Sensors

Jan 20, 2021

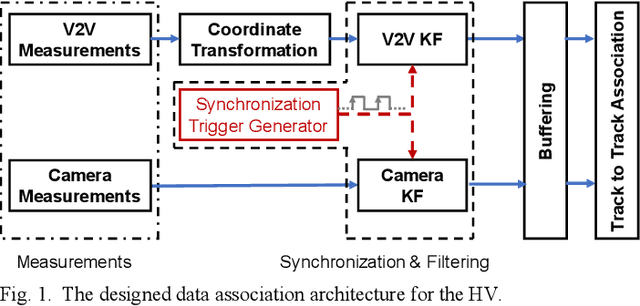

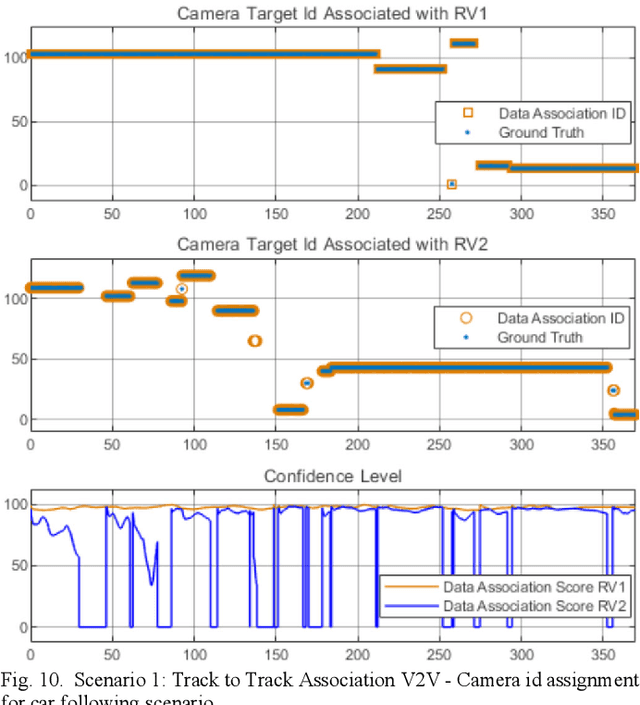

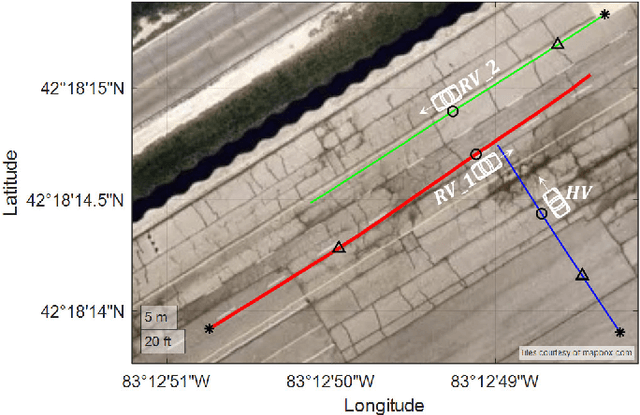

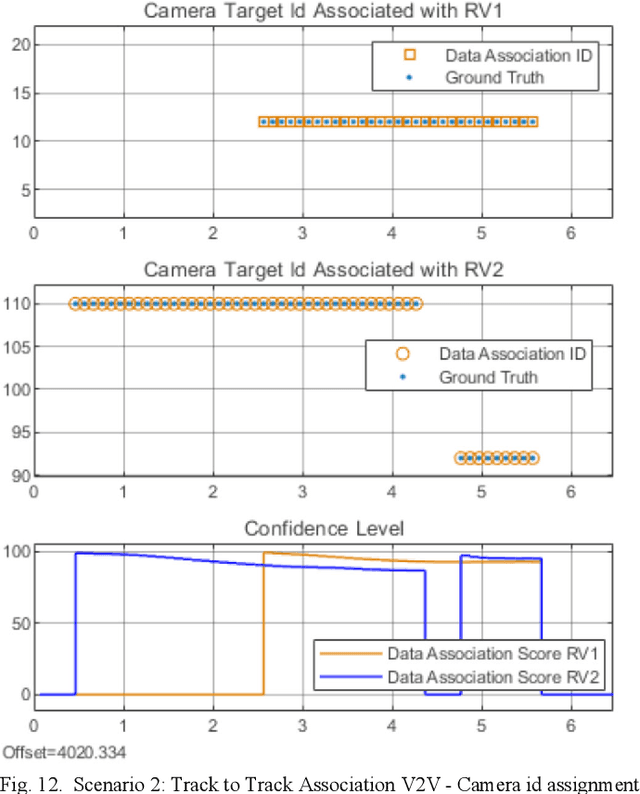

The connectivity between vehicles, infrastructure, and other traffic participants brings a new dimension to automotive safety applications. Soon all newly produced cars will have Vehicle to Everything (V2X) communication modems alongside the existing Advanced Driver Assistance Systems (ADAS). To use connectivity reliably as a safety feature alongside the standard ADAS functionality, it is essential to identify the different sensor measurements that belong to the same target (Data Association). Considering that the camera is the most common sensor available for ADAS, in this paper we present an experimental implementation of a Mahalanobis distance-based data association algorithm between the camera and the Vehicle to Vehicle (V2V) communication sensors. The implemented algorithm has low computational complexity and is capable of running in real time. One can use the presented algorithm for sensor fusion algorithms or higher-level decision-making applications in ADAS modules.
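Mahalanobis distance-based association can be sketched in a few lines for 2D positions. The sketch assumes a single shared 2x2 covariance for the position error, a gate of 9.21 on the squared distance (the 99% chi-square quantile for two degrees of freedom), and greedy nearest-first matching. The function names, the covariance values, and the matching strategy are assumptions for illustration; the paper's implementation may differ.

```python
def mahalanobis_sq(p, q, cov):
    """Squared Mahalanobis distance between 2D points p and q
    under a 2x2 covariance matrix cov."""
    dx, dy = p[0] - q[0], p[1] - q[1]
    (a, b), (c, d) = cov
    det = a * d - b * c
    # Uses the closed-form inverse of a 2x2 matrix: [[d, -b], [-c, a]] / det.
    return (dx * (d * dx - b * dy) + dy * (-c * dx + a * dy)) / det


def associate(camera, v2v, cov, gate=9.21):
    """Greedily match camera detections to V2V-reported positions.
    Returns {camera_index: v2v_index} for pairs inside the gate."""
    pairs = sorted(
        (mahalanobis_sq(c, v, cov), i, j)
        for i, c in enumerate(camera)
        for j, v in enumerate(v2v)
    )
    used_camera, used_v2v, matches = set(), set(), {}
    for dist_sq, i, j in pairs:
        if dist_sq > gate:
            break  # all remaining pairs are farther than the gate
        if i in used_camera or j in used_v2v:
            continue
        matches[i] = j
        used_camera.add(i)
        used_v2v.add(j)
    return matches


# Hypothetical positions in metres (longitudinal, lateral).
camera = [(10.0, 0.0), (30.0, 3.5)]
v2v = [(31.0, 3.0), (10.5, 0.2), (80.0, -3.5)]
cov = [[1.0, 0.0], [0.0, 0.25]]  # assumed position-error covariance
matches = associate(camera, v2v, cov)
```

Weighting the error by the covariance lets a small lateral offset count for more than the same longitudinal offset when lateral measurements are more precise, which plain Euclidean distance would not capture.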

Artificial Intelligence based Autonomous Molecular Design for Medical Therapeutic: A Perspective

Feb 10, 2021

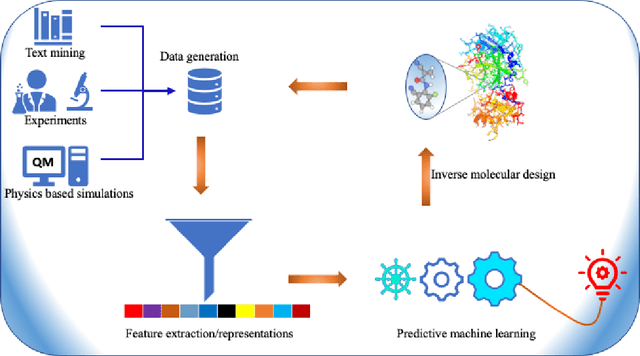

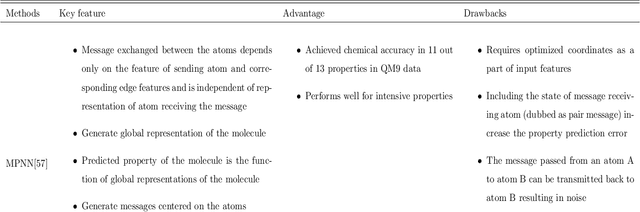

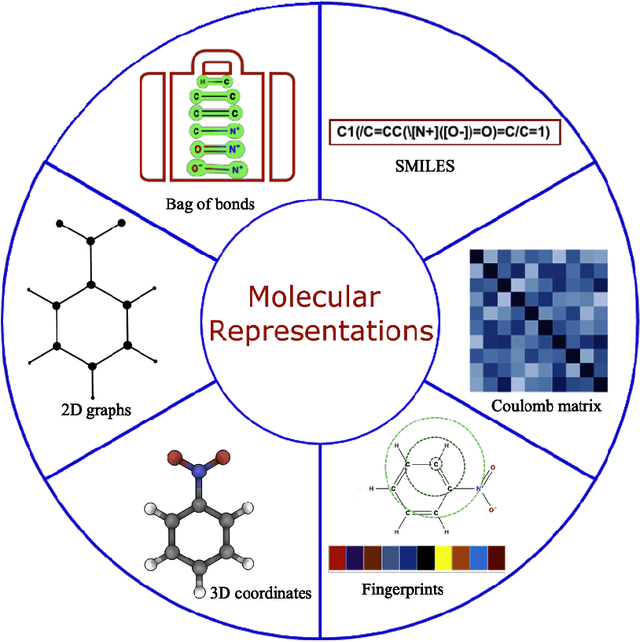

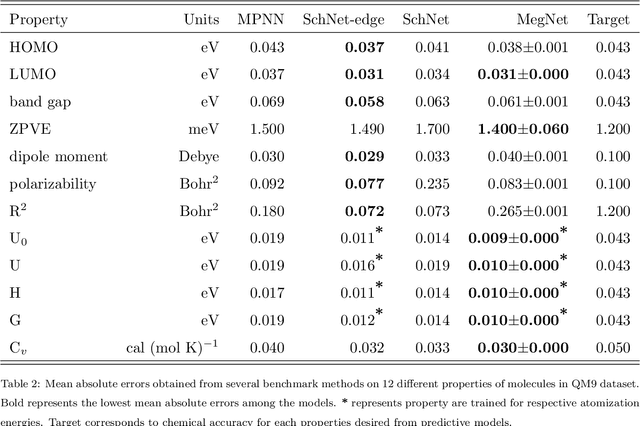

Domain-aware machine learning (ML) models have been increasingly adopted for accelerating small molecule therapeutic design in recent years. These models have been enabled by significant advancements in state-of-the-art artificial intelligence (AI) and computing infrastructures. Several ML architectures are predominantly and independently used either for predicting the properties of small molecules or for generating lead therapeutic candidates. Using these individual components synergistically and autonomously in closed loops, along with robust representation and data generation techniques, holds enormous promise for accelerating drug design, which is otherwise a time-consuming and expensive task. In this perspective, we present the most recent breakthroughs achieved by each of the components, and how such an autonomous AI and ML workflow can be realized to radically accelerate hit identification and lead optimization. Taken together, this could shorten the timeline for end-to-end antiviral discovery and optimization to weeks upon the arrival of a novel zoonotic transmission event. Our perspective serves as a guide for researchers to practice autonomous molecular design in therapeutic discovery.

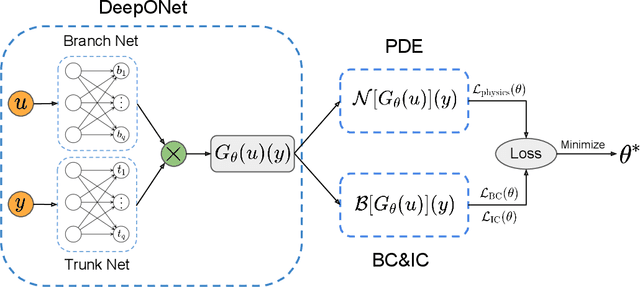

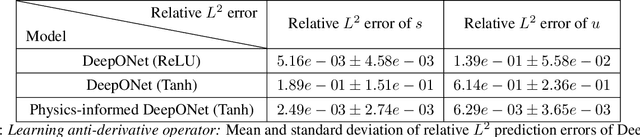

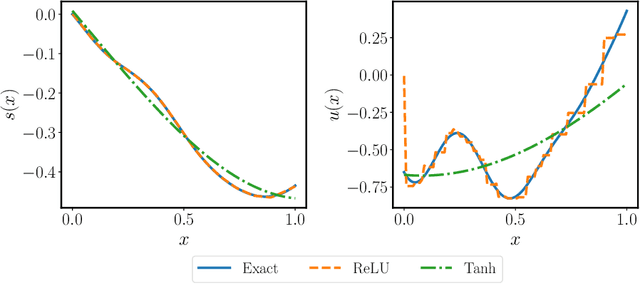

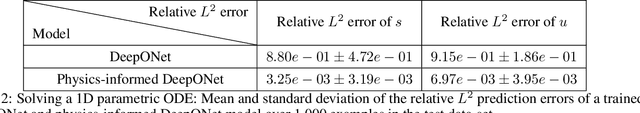

Learning the solution operator of parametric partial differential equations with physics-informed DeepOnets

Mar 19, 2021

Deep operator networks (DeepONets) are receiving increased attention thanks to their demonstrated capability to approximate nonlinear operators between infinite-dimensional Banach spaces. However, despite their remarkable early promise, they typically require large training data-sets consisting of paired input-output observations which may be expensive to obtain, while their predictions may not be consistent with the underlying physical principles that generated the observed data. In this work, we propose a novel model class coined as physics-informed DeepONets, which introduces an effective regularization mechanism for biasing the outputs of DeepONet models towards ensuring physical consistency. This is accomplished by leveraging automatic differentiation to impose the underlying physical laws via soft penalty constraints during model training. We demonstrate that this simple, yet remarkably effective extension can not only yield a significant improvement in the predictive accuracy of DeepONets, but also greatly reduce the need for large training data-sets. To this end, a remarkable observation is that physics-informed DeepONets are capable of solving parametric partial differential equations (PDEs) without any paired input-output observations, except for a set of given initial or boundary conditions. We illustrate the effectiveness of the proposed framework through a series of comprehensive numerical studies across various types of PDEs. Strikingly, a trained physics-informed DeepONet model can predict the solution of $\mathcal{O}(10^3)$ time-dependent PDEs in a fraction of a second, up to three orders of magnitude faster than a conventional PDE solver. The data and code accompanying this manuscript are publicly available at https://github.com/PredictiveIntelligenceLab/Physics-informed-DeepONets.
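A soft-penalty objective of the kind described above can be written in a generic form. This is a sketch with assumed notation, not the paper's exact formulation: $G_\theta(u)(y)$ denotes the network prediction for input function $u$ at location $y$, the PDE is written as $\mathcal{N}[u, s] = 0$, $(y_i^{b}, s_i^{b})$ are given initial or boundary values, $y_j^{r}$ are collocation points, and $\lambda$ is a hypothetical weighting factor.

$$
\mathcal{L}(\theta) = \frac{1}{N_b} \sum_{i=1}^{N_b} \left| G_\theta(u_i)(y_i^{b}) - s_i^{b} \right|^2 + \lambda \, \frac{1}{N_r} \sum_{j=1}^{N_r} \left| \mathcal{N}\big[u_j, G_\theta(u_j)\big](y_j^{r}) \right|^2
$$

The first term fits the known initial or boundary conditions. The second penalizes the PDE residual, with the derivatives inside $\mathcal{N}$ obtained by automatic differentiation of the network output with respect to $y$, so no paired input-output solution data are needed.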

Online Learning via Offline Greedy Algorithms: Applications in Market Design and Optimization

Feb 18, 2021

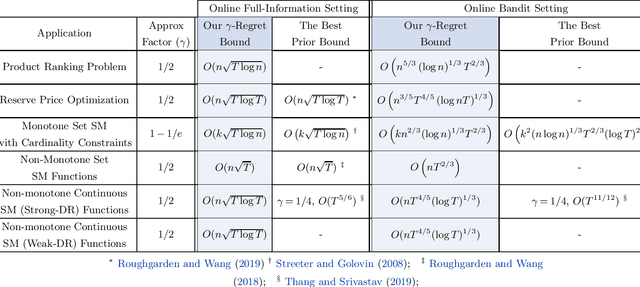

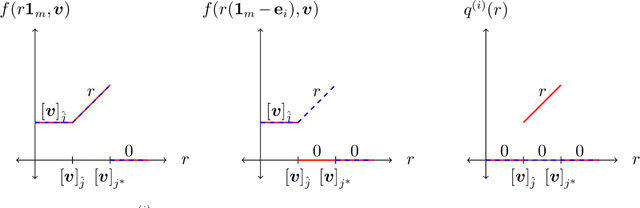

Motivated by online decision-making in time-varying combinatorial environments, we study the problem of transforming offline algorithms to their online counterparts. We focus on offline combinatorial problems that are amenable to a constant factor approximation using a greedy algorithm that is robust to local errors. For such problems, we provide a general framework that efficiently transforms offline robust greedy algorithms to online ones using Blackwell approachability. We show that the resulting online algorithms have $O(\sqrt{T})$ (approximate) regret under the full information setting. We further introduce a bandit extension of Blackwell approachability that we call Bandit Blackwell approachability. We leverage this notion to transform greedy robust offline algorithms into online ones with $O(T^{2/3})$ (approximate) regret in the bandit setting. Demonstrating the flexibility of our framework, we apply our offline-to-online transformation to several problems at the intersection of revenue management, market design, and online optimization, including product ranking optimization in online platforms, reserve price optimization in auctions, and submodular maximization. We show that our transformation, when applied to these applications, leads to new regret bounds or improves the current known bounds.

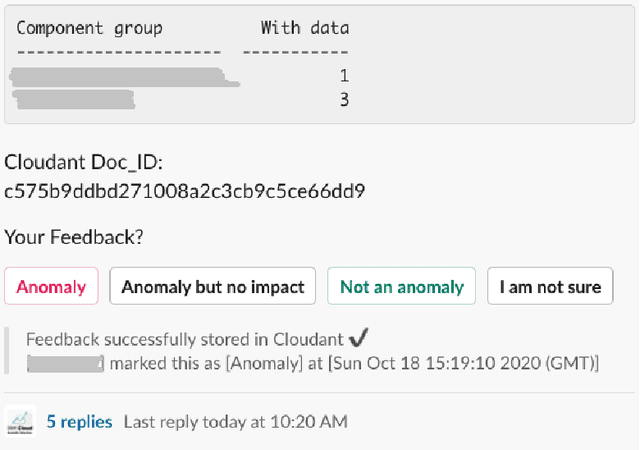

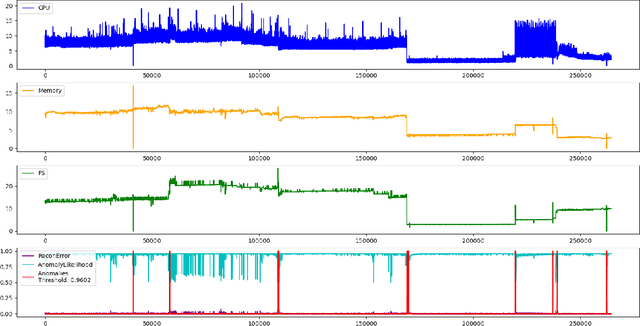

Anomaly Detection in a Large-scale Cloud Platform

Oct 21, 2020

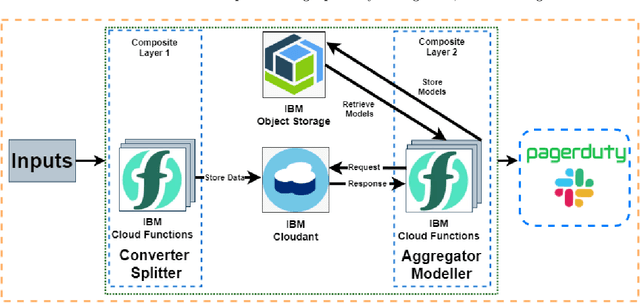

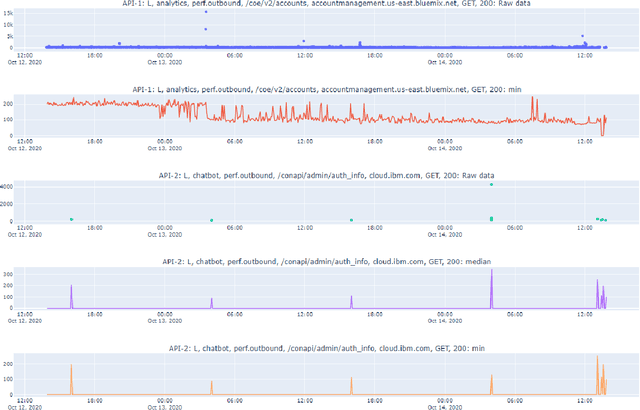

Cloud computing is ubiquitous: more and more companies are moving their workloads into the Cloud. However, this rise in popularity challenges Cloud service providers, as they need to monitor the quality of their ever-growing offerings effectively. To address the challenge, we designed and implemented an automated monitoring system for the IBM Cloud Platform. This monitoring system utilizes deep learning neural networks to detect anomalies in near-real-time in multiple Platform components simultaneously. After running the system for a year, we observed that the proposed solution frees the DevOps team's time and human resources from manually monitoring thousands of Cloud components. Moreover, it increases customer satisfaction by reducing the risk of Cloud outages. In this paper, we share our solution's architecture, implementation notes, and best practices that emerged while evolving the monitoring system. They can be leveraged by other researchers and practitioners to build anomaly detectors for complex systems.
