"Time": models, code, and papers

An electronic neuromorphic system for real-time detection of High Frequency Oscillations (HFOs) in intracranial EEG

Sep 23, 2020

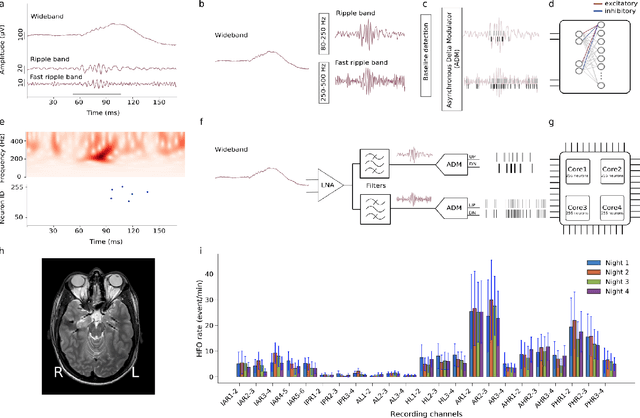

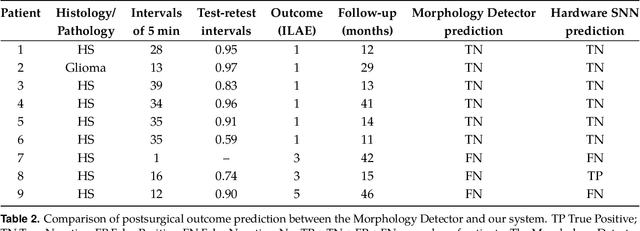

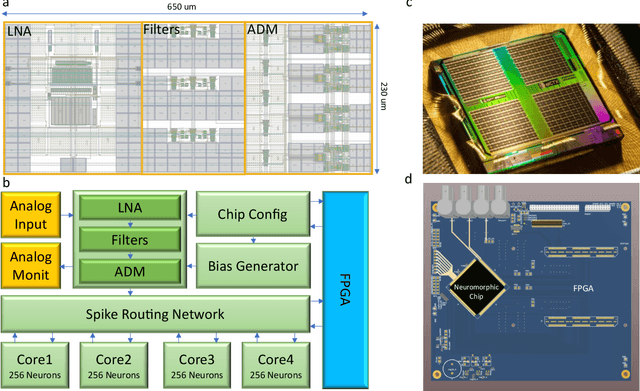

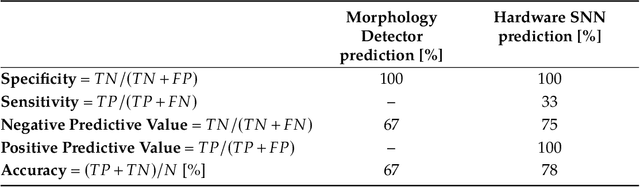

In this work, we present a neuromorphic system that combines for the first time a neural recording headstage with a signal-to-spike conversion circuit and a multi-core spiking neural network (SNN) architecture on the same die for recording, processing, and detecting High Frequency Oscillations (HFOs), which are biomarkers for the epileptogenic zone. The device was fabricated using a standard 0.18 $\mu$m CMOS technology node and has a total area of 99 mm$^{2}$. We demonstrate its application to HFO detection in the iEEG recorded from 9 patients with temporal lobe epilepsy who subsequently underwent epilepsy surgery. The total average power consumption of the chip during the detection task was 614.3 $\mu$W. We show how the neuromorphic system can reliably detect HFOs: the system predicts postsurgical seizure outcome with state-of-the-art accuracy, specificity, and sensitivity (78%, 100%, and 33%, respectively). This is the first feasibility study towards identifying relevant features in intracranial human data in real time, on-chip, using event-based processors and spiking neural networks. By providing "neuromorphic intelligence" to neural recording circuits, the proposed approach will pave the way for the development of systems that can detect HFO areas directly in the operating room and improve the seizure outcome of epilepsy surgery.
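To make the idea of signal-to-spike conversion concrete, here is a minimal software sketch of one common scheme, delta modulation, in which an UP or DOWN event is emitted each time the signal moves by a fixed threshold. The abstract does not say which encoding the chip uses, so the scheme, the function name `delta_encode`, the threshold, and the sample values are all assumptions for illustration.

```python
def delta_encode(samples, threshold):
    """Convert a sampled signal into UP (+1) / DOWN (-1) spike events.

    Illustrative delta-modulation encoder; not the chip's actual circuit.
    """
    events = []             # list of (sample_index, polarity)
    reference = samples[0]  # level at which the last spike was emitted
    for i, x in enumerate(samples[1:], start=1):
        # One UP spike per threshold step the signal has risen.
        while x - reference >= threshold:
            reference += threshold
            events.append((i, +1))
        # One DOWN spike per threshold step the signal has fallen.
        while reference - x >= threshold:
            reference -= threshold
            events.append((i, -1))
    return events


# Made-up samples, chosen only to exercise both polarities.
signal = [0.0, 0.5, 1.2, 1.0, 0.1, -0.6]
events = delta_encode(signal, threshold=0.5)
print(events)
```

Fast signal changes produce dense event bursts while flat segments produce none, which is what makes such encodings a natural front end for an event-driven spiking network.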

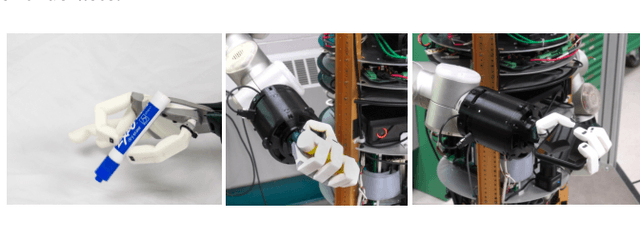

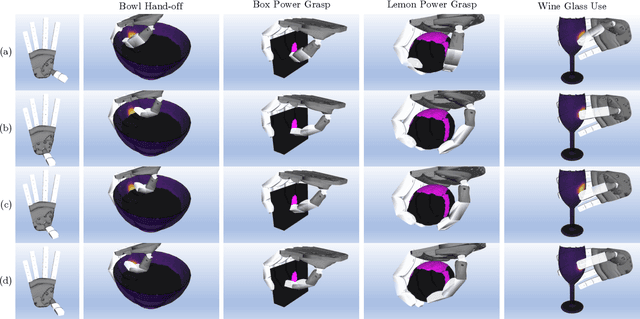

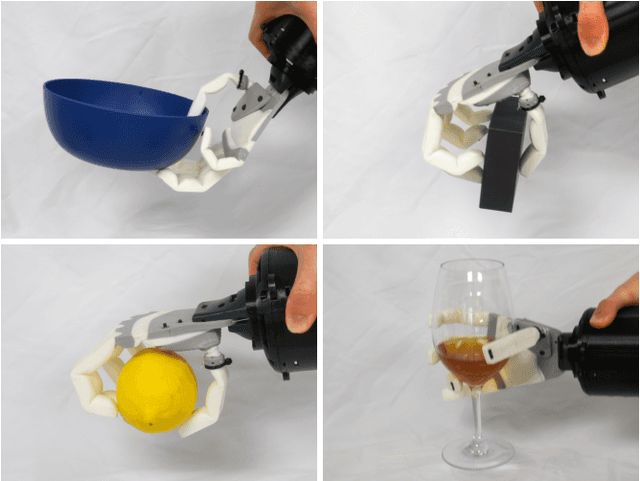

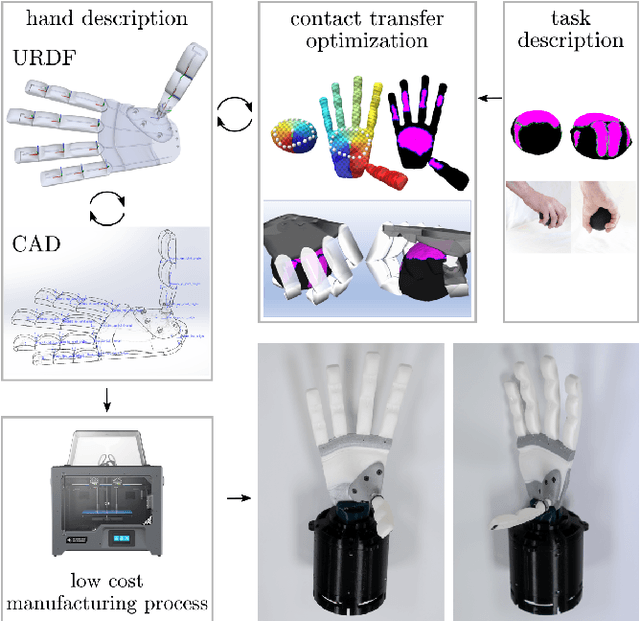

Towards Very Low-Cost Iterative Prototyping for Fully Printable Dexterous Soft Robotic Hands

Nov 02, 2021

The design and fabrication of soft robot hands is still a time-consuming and difficult process. Advances in rapid prototyping have accelerated the fabrication process significantly while introducing new complexities into the design process. In this work, we present an approach that utilizes novel low-cost fabrication techniques in conjunction with design tools helping soft hand designers to systematically take advantage of multi-material 3D printing to create dexterous soft robotic hands. While very low cost and lightweight, we show that generated designs are highly durable, surprisingly strong, and capable of dexterous grasping.

CSAW-M: An Ordinal Classification Dataset for Benchmarking Mammographic Masking of Cancer

Dec 02, 2021

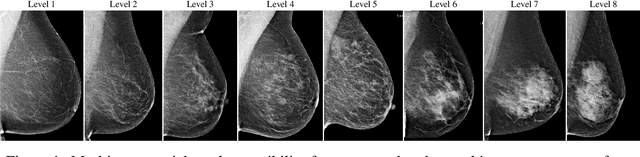

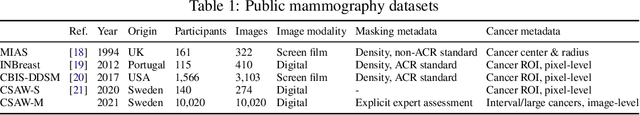

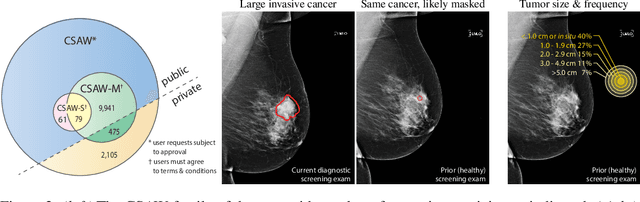

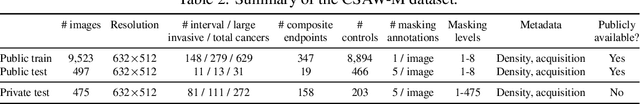

Interval and large invasive breast cancers, which are associated with worse prognosis than other cancers, are usually detected at a late stage due to false negative assessments of screening mammograms. The missed screening-time detection is commonly caused by the tumor being obscured by its surrounding breast tissues, a phenomenon called masking. To study and benchmark mammographic masking of cancer, in this work we introduce CSAW-M, the largest public mammographic dataset, collected from over 10,000 individuals and annotated with potential masking. In contrast to the previous approaches which measure breast image density as a proxy, our dataset directly provides annotations of masking potential assessments from five specialists. We also trained deep learning models on CSAW-M to estimate the masking level and showed that the estimated masking is significantly more predictive of screening participants diagnosed with interval and large invasive cancers -- without being explicitly trained for these tasks -- than its breast density counterparts.

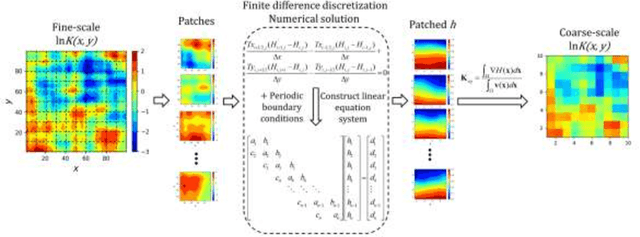

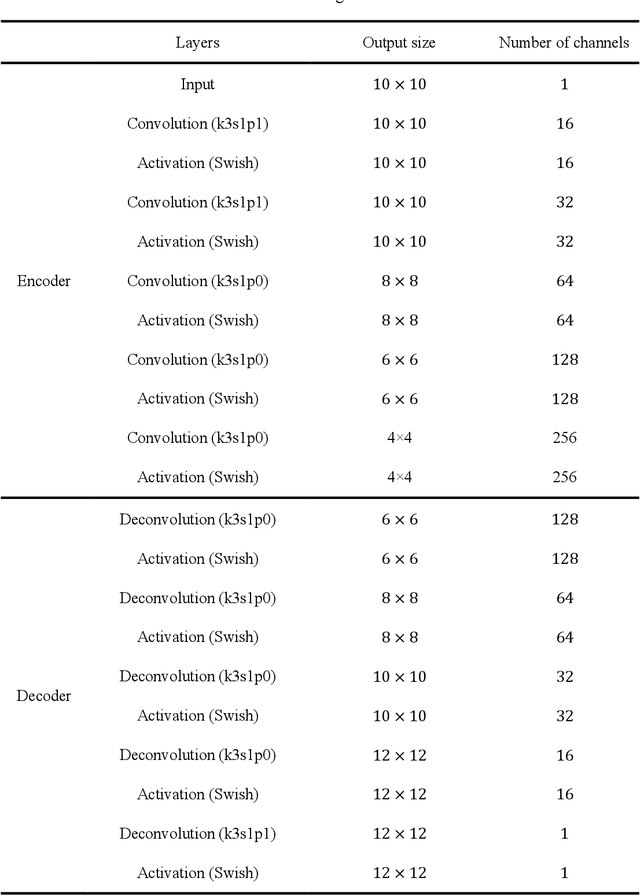

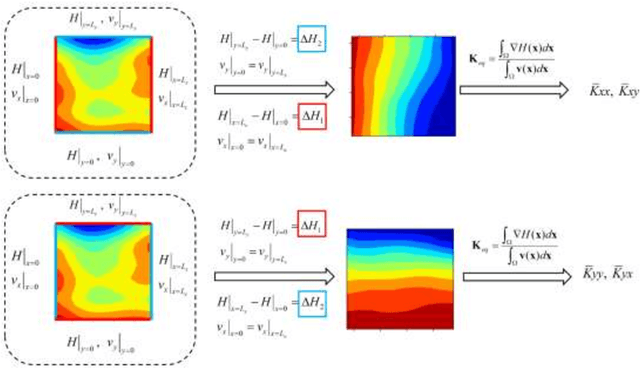

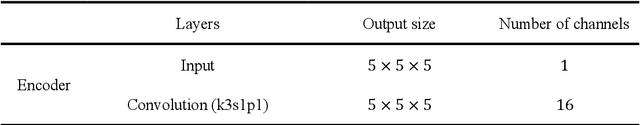

Deep-learning-based upscaling method for geologic models via theory-guided convolutional neural network

Dec 31, 2021

Large-scale or high-resolution geologic models usually comprise a huge number of grid blocks, which can be computationally demanding and time-consuming to solve with numerical simulators. Therefore, it is advantageous to upscale geologic models (e.g., hydraulic conductivity) from fine-scale (high-resolution grids) to coarse-scale systems. Numerical upscaling methods have been proven to be effective and robust for coarsening geologic models, but their efficiency remains to be improved. In this work, a deep-learning-based method is proposed to upscale the fine-scale geologic models, which can significantly improve upscaling efficiency. In the deep learning method, a deep convolutional neural network (CNN) is trained to approximate the relationship between the coarse grid of hydraulic conductivity fields and the hydraulic heads, which can then be utilized to replace the numerical solvers while solving the flow equations for each coarse block. In addition, physical laws (e.g., governing equations and periodic boundary conditions) can also be incorporated into the training process of the deep CNN model, which is termed the theory-guided convolutional neural network (TgCNN). With the physical information considered, the dependence of the deep learning models on the volume of training data can be reduced greatly. Several subsurface flow cases are introduced to test the performance of the proposed deep-learning-based upscaling method, including 2D and 3D cases, and isotropic and anisotropic cases. The results show that the deep learning method can provide upscaling accuracy equivalent to the numerical method, and efficiency can be improved significantly compared to numerical upscaling.
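As a small illustration of what an equivalent coarse-block conductivity means, the sketch below uses the textbook analytical special case of equally thick layers: flow across the layers gives the harmonic mean, and flow along them gives the arithmetic mean. This is not the numerical upscaling or the TgCNN surrogate described in the abstract, which solve flow equations on general heterogeneous blocks; the function names and values are hypothetical.

```python
def upscale_series(k_values):
    """Equivalent conductivity for flow perpendicular to equal-thickness layers."""
    return len(k_values) / sum(1.0 / k for k in k_values)  # harmonic mean


def upscale_parallel(k_values):
    """Equivalent conductivity for flow parallel to equal-thickness layers."""
    return sum(k_values) / len(k_values)  # arithmetic mean


# Two fine-scale cells with made-up conductivities.
fine_cells = [1.0, 4.0]
k_across = upscale_series(fine_cells)    # limited by the low-conductivity cell
k_along = upscale_parallel(fine_cells)   # dominated by the high-conductivity cell
print(k_across, k_along)
```

The two values bound the equivalent conductivity of a layered block, which is one reason general heterogeneous blocks need a flow solve (or a learned surrogate) rather than a simple average.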

Multi-Robot On-site Shared Analytics Information and Computing

Dec 13, 2021

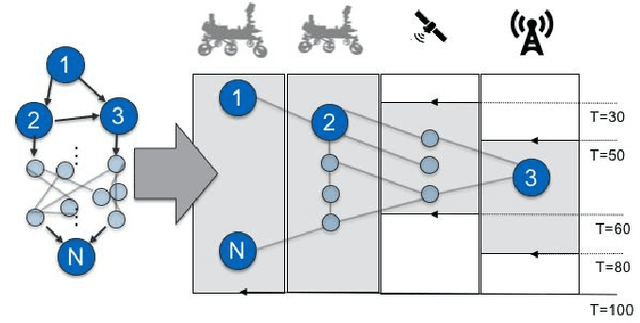

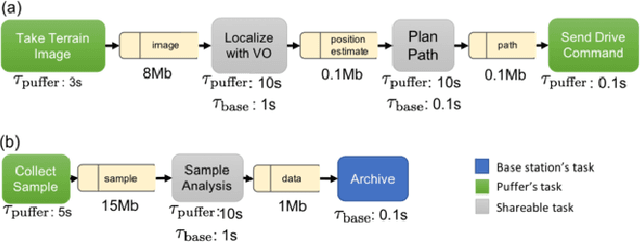

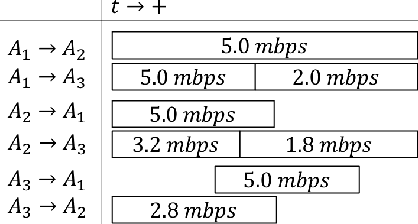

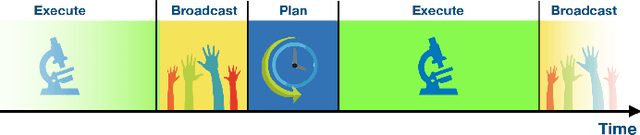

Computation load-sharing across a network of heterogeneous robots is a promising approach to increase robots' capabilities and efficiency as a team in extreme environments. However, in such environments, communication links may be intermittent and connections to the cloud or internet may be nonexistent. In this paper we introduce a communication-aware computation task scheduling problem for multi-robot systems and propose an integer linear program (ILP) that optimizes the allocation of computational tasks across a network of heterogeneous robots, accounting for the networked robots' computational capabilities and for available (and possibly time-varying) communication links. We consider scheduling of a set of inter-dependent required and optional tasks modeled by a dependency graph. We present a consensus-backed scheduling architecture for shared-world, distributed systems. We validate the ILP formulation and the distributed implementation on different computation platforms and in simulated scenarios with a bias towards lunar or planetary exploration scenarios. Our results show that the proposed implementation can optimize schedules to allow a threefold increase in the amount of rewarding tasks performed (e.g., science measurements) compared to an analogous system with no computational load-sharing.
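The flavor of this scheduling problem can be sketched on a toy instance. The code below is not the paper's ILP: it enumerates every assignment by brute force, ignores timing, and treats a communication link as a simple yes/no relation between two robots. Task names, costs, rewards, and capacities are invented for illustration.

```python
from itertools import product


def feasible(assign, tasks, robots, links):
    """Check required tasks, dependencies, links, and compute capacity."""
    load = {r: 0 for r in robots}
    for task, robot in assign.items():
        spec = tasks[task]
        if robot is None:
            if spec["required"]:
                return False  # required tasks must be scheduled
            continue
        load[robot] += spec["cost"]
        for dep in spec["deps"]:
            src = assign[dep]
            if src is None:
                return False  # a dependency was not scheduled
            if src != robot and frozenset((src, robot)) not in links:
                return False  # result cannot be sent between the robots
    return all(load[r] <= robots[r] for r in robots)


def best_schedule(tasks, robots, links):
    """Exhaustively search task-to-robot assignments for maximum reward."""
    names = list(tasks)
    best, best_reward = None, -1
    for choice in product([None] + list(robots), repeat=len(names)):
        assign = dict(zip(names, choice))
        if not feasible(assign, tasks, robots, links):
            continue
        reward = sum(tasks[t]["reward"] for t, r in assign.items() if r is not None)
        if reward > best_reward:
            best, best_reward = assign, reward
    return best, best_reward


robots = {"rover": 2, "lander": 4}  # hypothetical compute capacities
tasks = {
    "image": {"cost": 1, "reward": 0, "required": True, "deps": []},
    "map": {"cost": 3, "reward": 5, "required": False, "deps": ["image"]},
    "science": {"cost": 2, "reward": 3, "required": False, "deps": ["image"]},
}
linked = {frozenset(("rover", "lander"))}

plan, reward = best_schedule(tasks, robots, linked)
_, reward_no_link = best_schedule(tasks, robots, set())
print(plan, reward, reward_no_link)
```

With the link available, both optional tasks fit; without it, only one does. Exhaustive search grows exponentially with the number of tasks, which is why a solver-based formulation such as an ILP is used for realistic instances.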

Reinforcement Learning-Based Coverage Path Planning with Implicit Cellular Decomposition

Oct 18, 2021

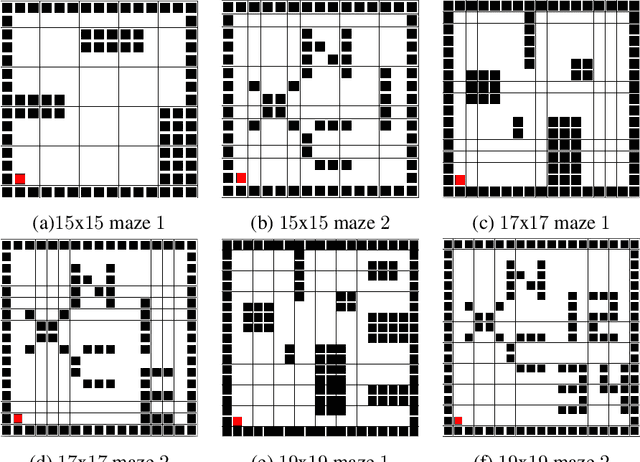

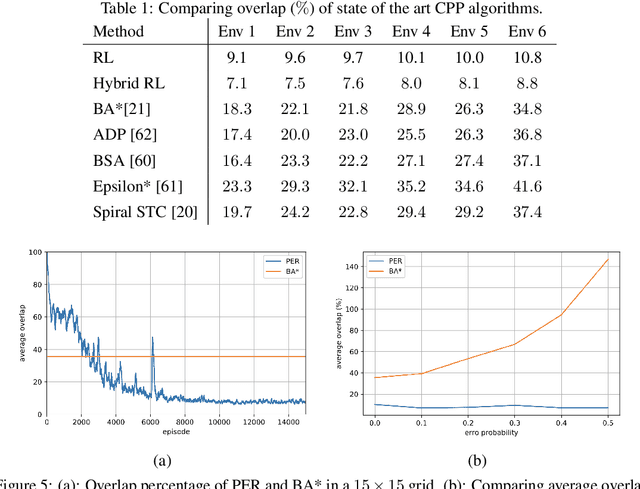

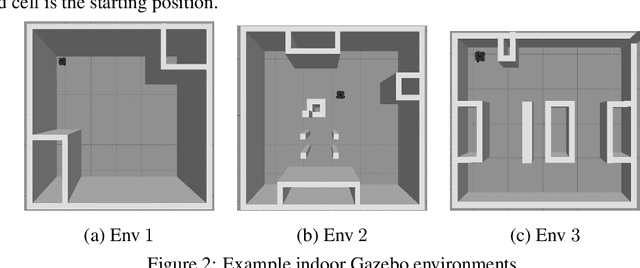

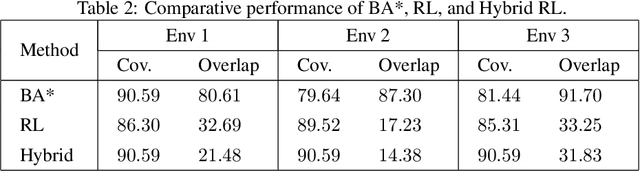

Coverage path planning in a generic known environment is shown to be NP-hard. When the environment is unknown, it becomes more challenging as the robot is required to rely on its online map information built during coverage for planning its path. A significant research effort focuses on designing heuristic or approximate algorithms that achieve reasonable performance. Such algorithms have sub-optimal performance in terms of covering the area or the cost of coverage, e.g., coverage time or energy consumption. In this paper, we provide a systematic analysis of the coverage problem and formulate it as an optimal stopping time problem, where the trade-off between coverage performance and its cost is explicitly accounted for. Next, we demonstrate that reinforcement learning (RL) techniques can be leveraged to solve the problem computationally. To this end, we provide some technical and practical considerations to facilitate the application of the RL algorithms and improve the efficiency of the solutions. Finally, through experiments in grid world environments and the Gazebo simulator, we show that reinforcement learning-based algorithms efficiently cover realistic unknown indoor environments and outperform the current state of the art.

Spatio-Temporal Graph Representation Learning for Fraudster Group Detection

Jan 07, 2022

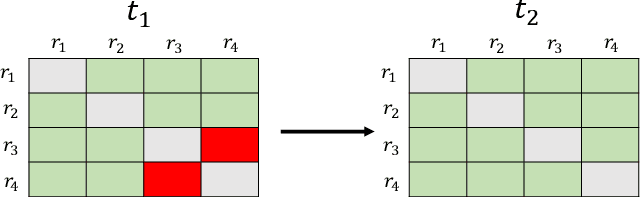

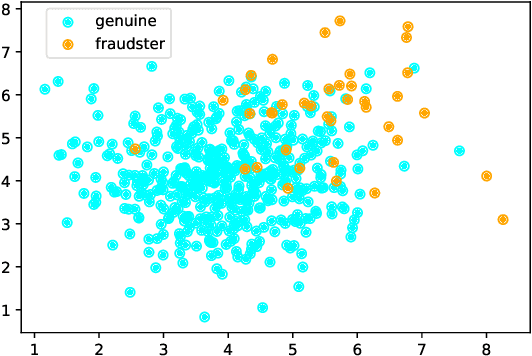

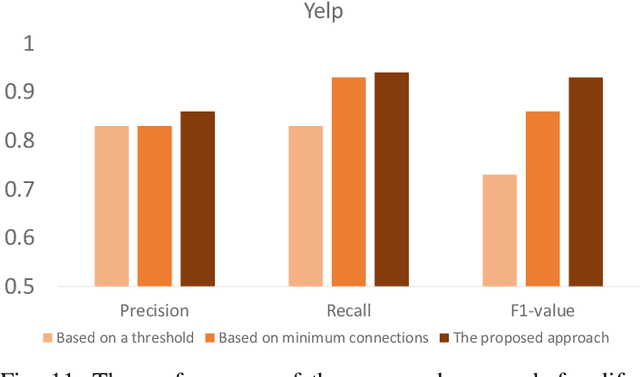

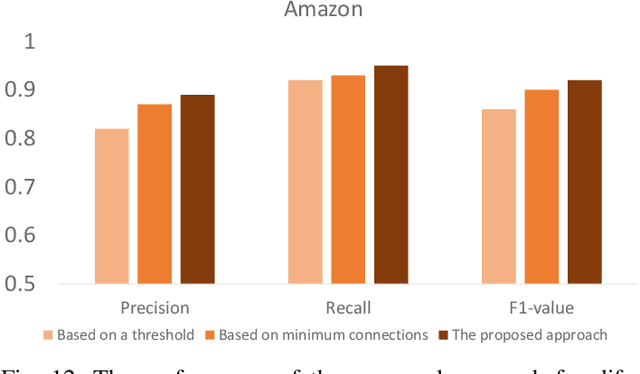

Motivated by potential financial gain, companies may hire fraudster groups to write fake reviews to either demote competitors or promote their own businesses. Such groups are considerably more successful in misleading customers, as people are more likely to be influenced by the opinion of a large group. To detect such groups, a common approach is to model fraudster groups as static networks, which overlooks the longitudinal behavior of individual reviewers and thus the dynamics of co-review relations among reviewers in a group. Hence, these approaches are incapable of excluding outlier reviewers, which are fraudsters intentionally camouflaging themselves in a group and genuine reviewers who happen to co-review in fraudster groups. To address this issue, in this work we capitalize on the effectiveness of the HIN-RNN in learning reviewers' representations while capturing the collaboration between reviewers: we first utilize the HIN-RNN to model the co-review relations of reviewers in a group within a fixed time window of 28 days. We refer to this as spatial relation learning representation to signify the generalisability of this work to other networked scenarios. Then we use an RNN on the spatial relations to predict the spatio-temporal relations of reviewers in the group. In the third step, a Graph Convolution Network (GCN) refines the reviewers' vector representations using these predicted relations. These refined representations are then used to remove outlier reviewers. The average of the remaining reviewers' representations is then fed to a simple fully connected layer to predict whether the group is a fraudster group or not. Exhaustive experiments with the proposed approach showed a 5% (4%), 12% (5%), 12% (5%) improvement over three of the most recent approaches on precision, recall, and F1-value over the Yelp (Amazon) dataset, respectively.
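The last two steps of the pipeline (remove outlier reviewers, then average the rest and apply a fully connected layer) can be sketched as follows. The abstract does not state how outliers are identified, so the distance-to-centroid rule, the threshold, the weights, and the example vectors here are all assumptions; in the paper the input vectors would be the GCN-refined representations.

```python
import math


def mean_vector(vectors):
    """Component-wise average of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def remove_outliers(vectors, max_dist):
    """Keep vectors within max_dist of the group centroid (assumed criterion)."""
    center = mean_vector(vectors)
    return [v for v in vectors if math.dist(v, center) <= max_dist]


def group_score(vectors, weights, bias):
    """Average the representations, then apply one linear unit with a sigmoid."""
    pooled = mean_vector(vectors)
    z = sum(w * x for w, x in zip(weights, pooled)) + bias
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical 2-D reviewer representations; the last one is far from the rest.
reviewers = [[1.0, 1.0], [1.2, 0.8], [0.9, 1.1], [5.0, -4.0]]
kept = remove_outliers(reviewers, max_dist=3.0)
score = group_score(kept, weights=[1.0, 1.0], bias=-1.0)
print(len(kept), round(score, 3))
```

Pooling by averaging keeps the classifier input the same size regardless of how many reviewers remain in the group after outlier removal.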

Real-time Lane detection and Motion Planning in Raspberry Pi and Arduino for an Autonomous Vehicle Prototype

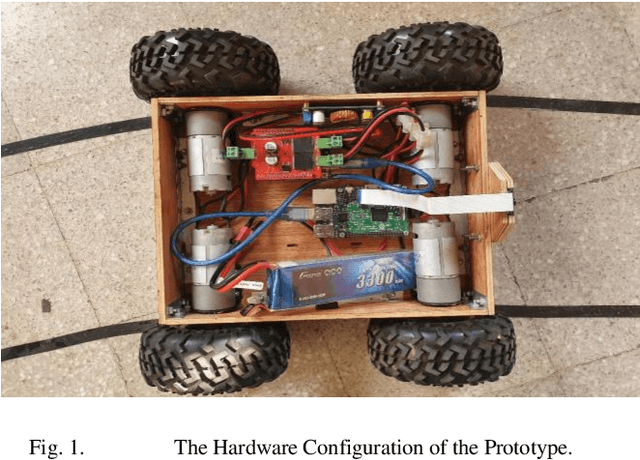

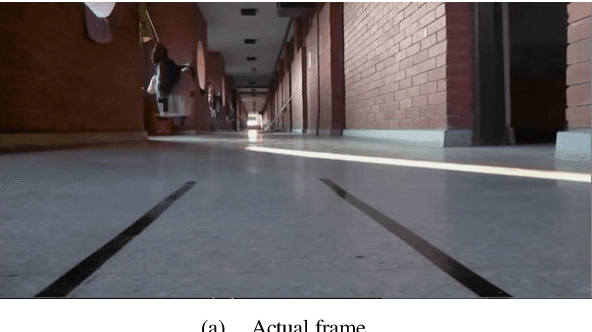

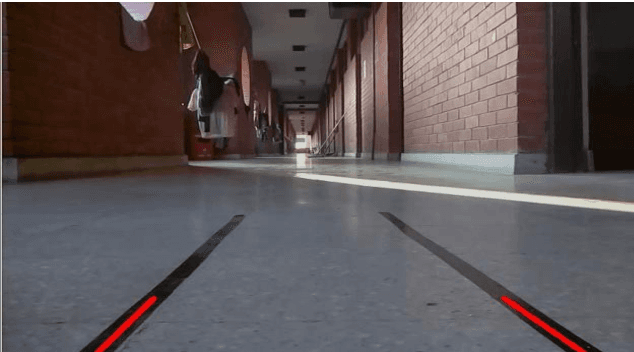

Sep 20, 2020

This paper discusses a vehicle prototype that recognizes street lanes and plans its motion accordingly without any human input. A Pi Camera 1.3 captures real-time video, which is then processed by a Raspberry Pi 3.0 Model B. The image processing algorithms are written in Python 3.7.4 with OpenCV 4.2. An Arduino Uno is used to run the PID algorithm that drives the motor controller, which in turn controls the wheels. The lanes are detected with the Canny edge detection algorithm and the Hough transformation. Elementary algebra is used to draw the detected lanes. After detection, the lanes are tracked using the Kalman filter prediction method. Then the midpoint of the two lanes is found, which gives the initial steering direction. This initial steering direction is further smoothed by using the Past Accumulation Average Method and the Kalman Filter Prediction Method. The prototype was tested in a controlled environment in real time. Results from comprehensive testing suggest that this prototype can detect road lanes and plan its motion successfully.
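The steering stage described above (lane midpoint, then averaging over past values, then Kalman filtering) can be sketched in plain Python. This assumes the two lane positions have already been extracted from the image, interprets the Past Accumulation Average Method as a moving average over recent offsets, and uses a scalar Kalman filter with a constant-value model; the class names, window size, and noise parameters are hypothetical.

```python
from collections import deque


def lane_midpoint(left_x, right_x):
    """Horizontal midpoint between the two detected lane lines (pixels)."""
    return (left_x + right_x) / 2.0


class ScalarKalman:
    """One-dimensional Kalman filter assuming a slowly varying constant state."""

    def __init__(self, process_var=0.01, measurement_var=1.0):
        self.x = None  # state estimate
        self.p = 1.0   # estimate variance
        self.q = process_var
        self.r = measurement_var

    def update(self, z):
        if self.x is None:  # initialise on the first measurement
            self.x = z
            return self.x
        self.p += self.q                  # predict: uncertainty grows
        gain = self.p / (self.p + self.r)
        self.x += gain * (z - self.x)     # correct towards the measurement
        self.p *= (1.0 - gain)
        return self.x


class SteeringSmoother:
    """Moving average over past offsets, followed by Kalman filtering."""

    def __init__(self, window=5):
        self.history = deque(maxlen=window)
        self.kalman = ScalarKalman()

    def step(self, left_x, right_x, image_center_x):
        offset = lane_midpoint(left_x, right_x) - image_center_x
        self.history.append(offset)
        averaged = sum(self.history) / len(self.history)
        return self.kalman.update(averaged)


smoother = SteeringSmoother()
first = smoother.step(100, 300, 160)   # raw offset 40 px
second = smoother.step(100, 400, 160)  # raw offset jumps to 90 px
print(first, second)
```

The smoothed output moves only part of the way towards a sudden jump in the raw offset, which damps steering jitter caused by a single noisy detection.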

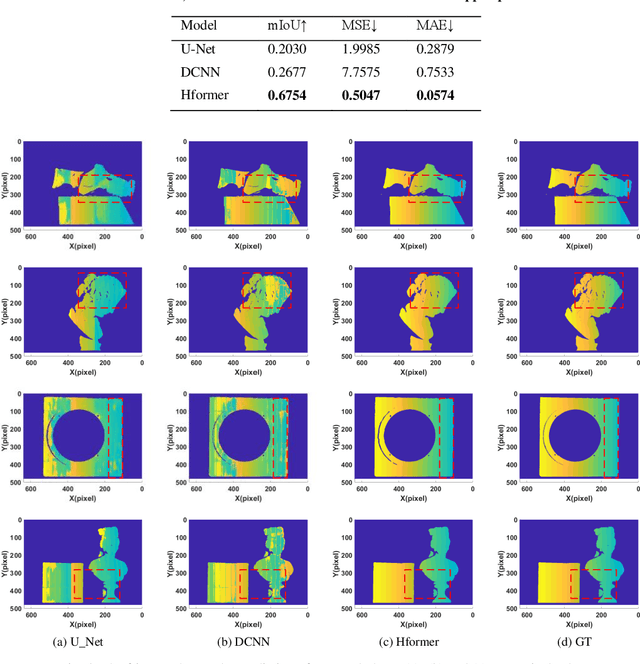

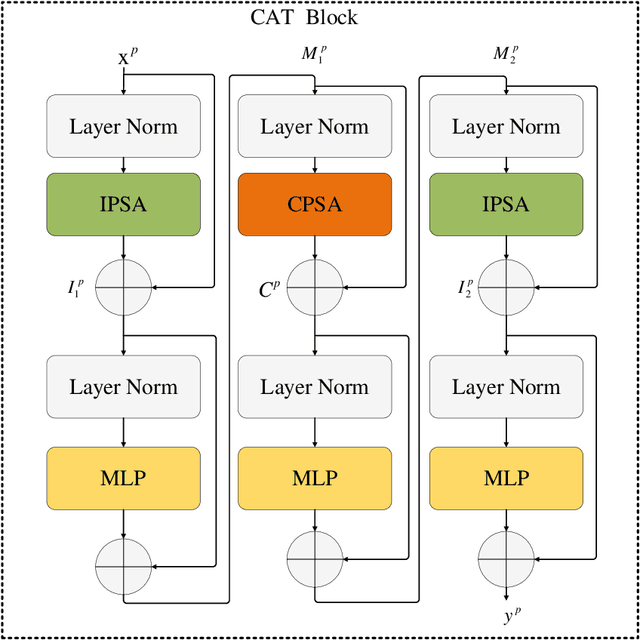

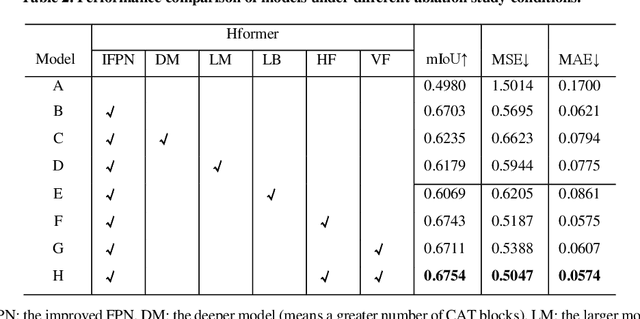

Hformer: Hybrid CNN-Transformer for Fringe Order Prediction in Phase Unwrapping of Fringe Projection

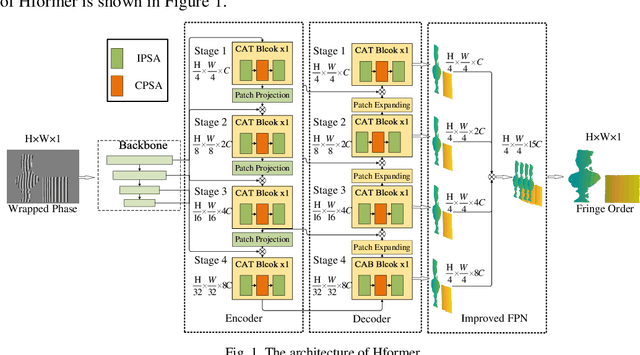

Dec 13, 2021

Recently, deep learning has attracted increasing attention in phase unwrapping for fringe projection three-dimensional (3D) measurement, with the aim of improving performance by leveraging powerful Convolutional Neural Network (CNN) models. In this paper, for the first time (to the best of our knowledge), we introduce the Transformer, which differs from the CNN, into phase unwrapping and propose the Hformer model, dedicated to phase unwrapping via fringe order prediction. The proposed model has a hybrid CNN-Transformer architecture that is mainly composed of a backbone, an encoder, and a decoder to take advantage of both the CNN and the Transformer. The encoder and decoder with cross attention are designed for the fringe order prediction. Experimental results show that the proposed Hformer model achieves better performance in fringe order prediction than CNN models such as U-Net and DCNN. Moreover, an ablation study on Hformer is conducted to verify the improved feature pyramid network (FPN) and the testing strategy with flipping in the predicted fringe order. Our work opens an alternative way for deep-learning-based phase unwrapping methods, which are dominated by CNNs in fringe projection 3D measurement.
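Fringe order prediction works because the absolute phase differs from the wrapped phase by an integer multiple of $2\pi$, that is, $\Phi = \varphi + 2\pi k$, where $k$ is the fringe order. The sketch below shows that relation on a few made-up phase values; here $k$ is computed from a known absolute phase, whereas in the paper a network predicts it per pixel. The function names are hypothetical.

```python
import math


def wrap(phase):
    """Wrap a phase value into the interval (-pi, pi]."""
    return math.atan2(math.sin(phase), math.cos(phase))


def fringe_order(absolute_phase):
    """Integer k such that absolute_phase = wrap(absolute_phase) + 2*pi*k."""
    return round((absolute_phase - wrap(absolute_phase)) / (2 * math.pi))


def unwrap_with_orders(wrapped, orders):
    """Recover absolute phase from wrapped phase and fringe orders."""
    return [phi + 2 * math.pi * k for phi, k in zip(wrapped, orders)]


# Made-up absolute phase values along one image row.
absolute = [0.5, 3.0, 6.0, 9.0, 12.5]
wrapped = [wrap(p) for p in absolute]
orders = [fringe_order(p) for p in absolute]
recovered = unwrap_with_orders(wrapped, orders)
print(orders)
```

Because $k$ is an integer per pixel, predicting it can be posed as a classification or segmentation task, which is what makes image-to-image networks applicable to phase unwrapping.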

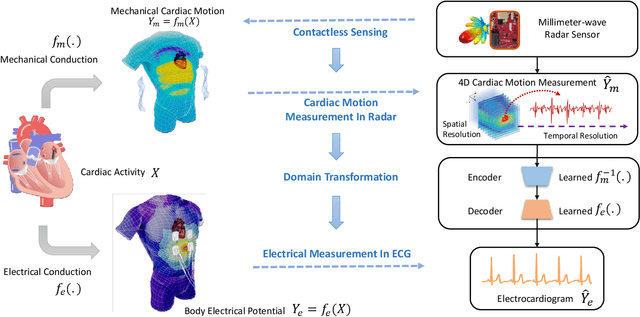

Contactless Electrocardiogram Monitoring with Millimeter Wave Radar

Dec 13, 2021

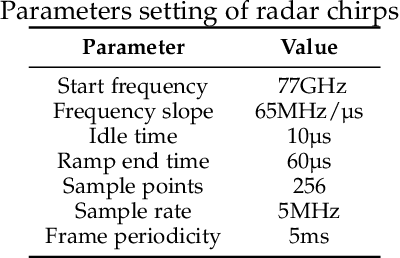

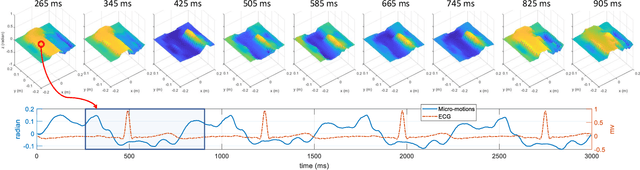

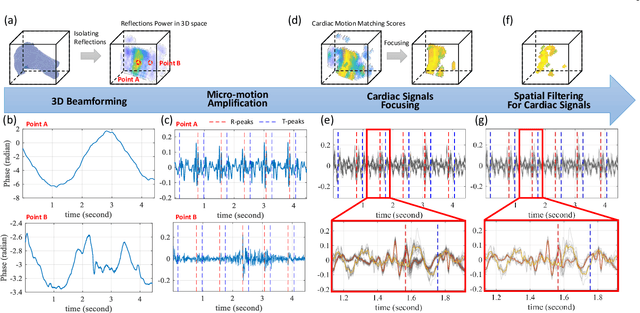

The electrocardiogram (ECG) has always been an important measurement scheme to assess and diagnose cardiovascular diseases. However, the role of ECG monitoring in clinical and daily-life practice is limited by intrusive equipment and inconvenient manual operation. Here we report the development of a prototype deep learning millimeter-wave radar system to measure cardiac activity and reconstruct the ECG without any contact. The system provides a hybrid pipeline of signal processing and deep learning that consists of a cardiac activity measurement algorithm operating on the RF signal and an interpretable neural network for ECG reconstruction, which incorporates domain knowledge of radio frequency (RF) signals and physiological models. The experimental results show that our contactless ECG measurements can time the Q-peaks, R-peaks, S-peaks, T-peaks, and R-R intervals with median errors of 14 ms, 3 ms, 8 ms, 10 ms, and 3 ms, respectively. In addition, the morphology analysis shows that our results achieve a median Pearson correlation of 90% and a median root-mean-square error of 0.081 mV compared to the ground truth ECG. These results indicate that the system has the potential for contactless, long-term, continuous, and accurate ECG monitoring, which could facilitate its use in a variety of clinical and daily-life environments.
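The two morphology metrics mentioned above, Pearson correlation and root-mean-square error, can be computed between a reconstructed waveform and a reference as sketched below. The sample values are synthetic and chosen only to exercise the functions; they are not data from the paper.

```python
import math


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def rmse(a, b):
    """Root-mean-square error between two equal-length sequences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


# Synthetic stand-ins for a reference ECG segment and its reconstruction (mV).
reference = [0.0, 0.1, 1.0, 0.2, 0.0]
reconstructed = [0.0, 0.2, 0.9, 0.2, 0.1]

r = pearson(reference, reconstructed)
err = rmse(reference, reconstructed)
print(round(r, 3), round(err, 4))
```

Pearson correlation measures agreement in waveform shape regardless of scale or offset, while RMSE measures amplitude error in the signal's own units, so the two metrics are complementary.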
