"Time": models, code, and papers

Dataset Bias in Human Activity Recognition

Jan 19, 2023

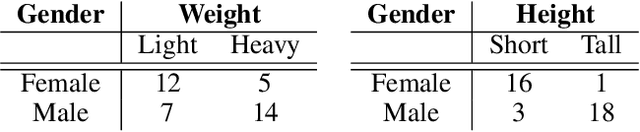

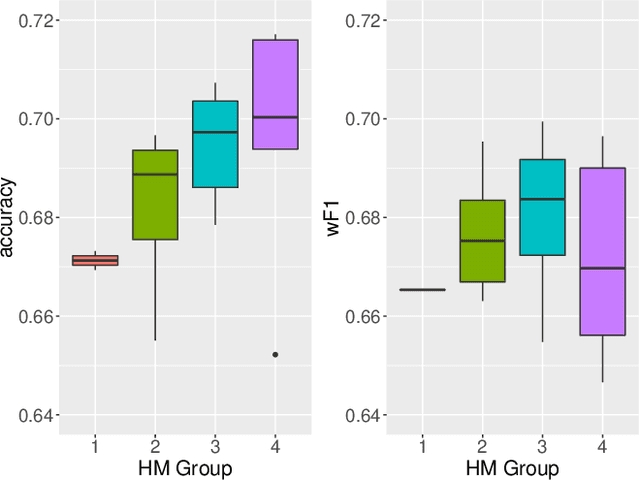

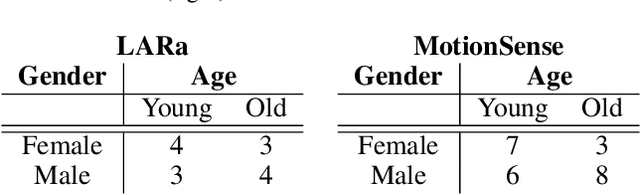

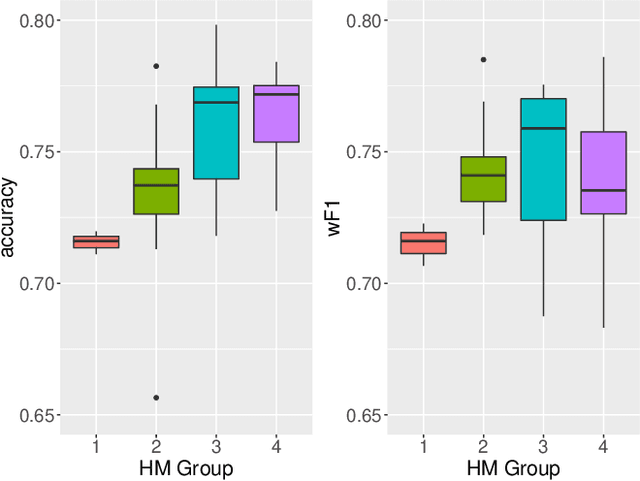

When creating multi-channel time-series datasets for Human Activity Recognition (HAR), researchers are faced with the issue of subject selection criteria. It is unknown what physical characteristics and/or soft-biometrics, such as age, height, and weight, need to be taken into account to train a classifier to achieve robustness towards heterogeneous populations in the training and testing data. This contribution statistically curates the training data to assess to what degree the physical characteristics of humans influence HAR performance. We evaluate the performance of a state-of-the-art convolutional neural network on two HAR datasets that vary in the sensors, activities, and recording for time-series HAR. The training data is intentionally biased with respect to human characteristics to determine the features that impact motion behaviour. The evaluations brought forth the impact of the subjects' characteristics on HAR. Thus, providing insights regarding the robustness of the classifier with respect to heterogeneous populations. The study is a step forward in the direction of fair and trustworthy artificial intelligence by attempting to quantify representation bias in multi-channel time series HAR data.

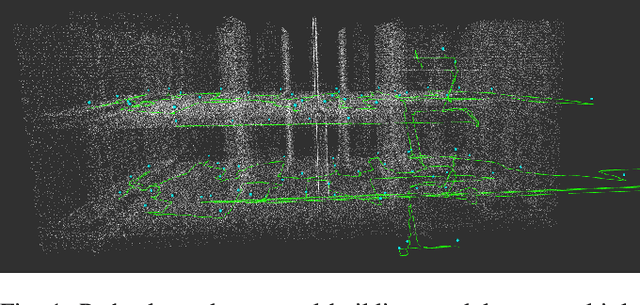

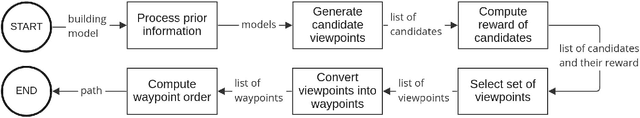

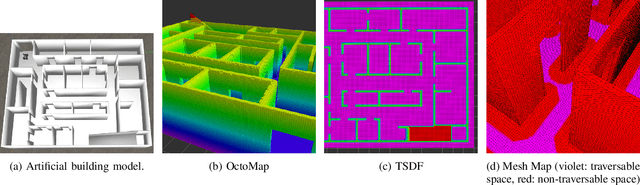

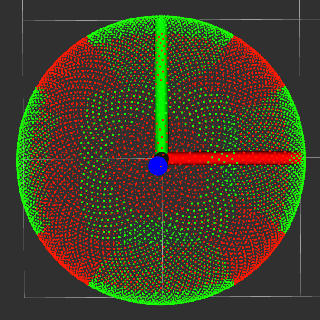

3D Coverage Path Planning for Efficient Construction Progress Monitoring

Feb 02, 2023

On construction sites, progress must be monitored continuously to ensure that the current state corresponds to the planned state in order to increase efficiency, safety and detect construction defects at an early stage. Autonomous mobile robots can document the state of construction with high data quality and consistency. However, finding a path that fully covers the construction site is a challenging task as it can be large, slowly changing over time, and contain dynamic objects. Existing approaches are either exploration approaches that require a long time to explore the entire building, object scanning approaches that are not suitable for large and complex buildings, or planning approaches that only consider 2D coverage. In this paper, we present a novel approach for planning an efficient 3D path for progress monitoring on large construction sites with multiple levels. By making use of an existing 3D model we ensure that all surfaces of the building are covered by the sensor payload such as a 360-degree camera or a lidar. This enables the consistent and reliable monitoring of construction site progress with an autonomous ground robot. We demonstrate the effectiveness of the proposed planner on an artificial and a real building model, showing that much shorter paths and better coverage are achieved than with a traditional exploration planner.

* Published in: 2022 IEEE International Symposium on Safety, Security, and Rescue Robotics (SSRR)

Joint Optimization of Base Station Clustering and Service Caching in User-Centric MEC

Feb 21, 2023

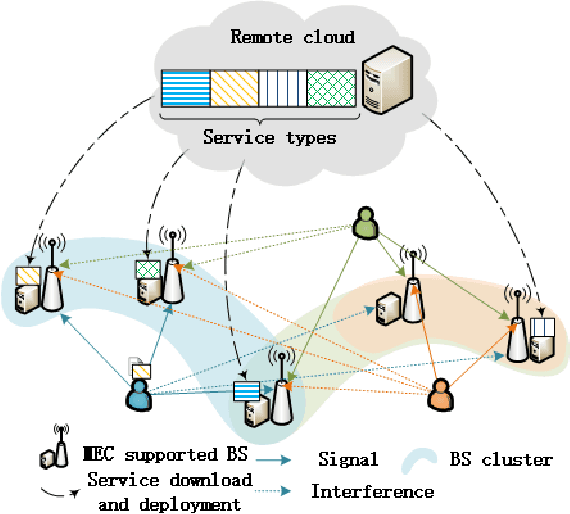

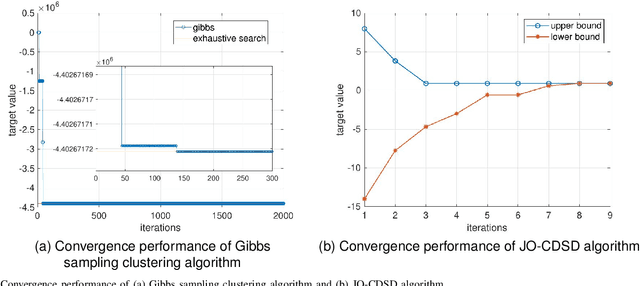

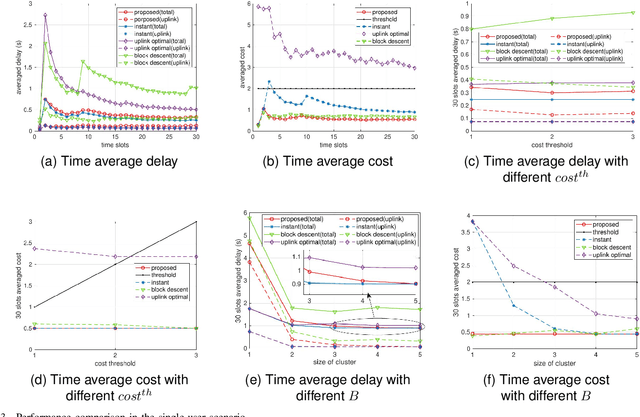

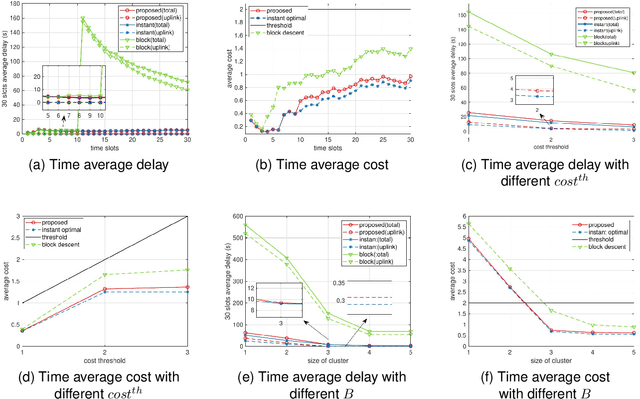

Edge service caching can effectively reduce the delay or bandwidth overhead for acquiring and initializing applications. To address single-base station (BS) transmission limitation and serious edge effect in traditional cellular-based edge service caching networks, in this paper, we proposed a novel user-centric edge service caching framework where each user is jointly provided with edge caching and wireless transmission services by a specific BS cluster instead of a single BS. To minimize the long-term average delay under the constraint of the caching cost, a mixed integer non-linear programming (MINLP) problem is formulated by jointly optimizing the BS clustering and service caching decisions. To tackle the problem, we propose JO-CDSD, an efficiently joint optimization algorithm based on Lyapunov optimization and generalized benders decomposition (GBD). In particular, the long-term optimization problem can be transformed into a primal problem and a master problem in each time slot that is much simpler to solve. The near-optimal clustering and caching strategy can be obtained through solving the primal and master problem alternately. Extensive simulations show that the proposed joint optimization algorithm outperforms other algorithms and can effectively reduce the long-term delay by at most $93.75% and caching cost by at most $53.12%.

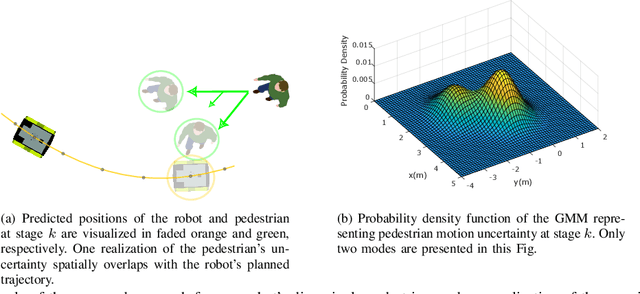

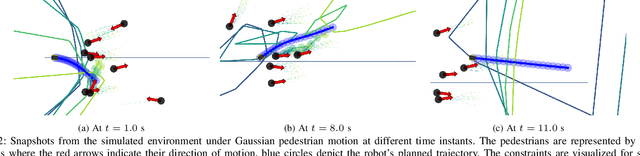

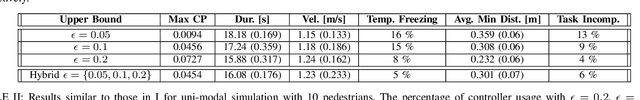

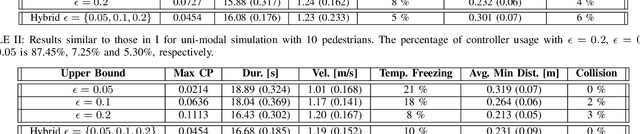

Probabilistic Risk Assessment for Chance-Constrained Collision Avoidance in Uncertain Dynamic Environments

Feb 21, 2023

Balancing safety and efficiency when planning in crowded scenarios with uncertain dynamics is challenging where it is imperative to accomplish the robot's mission without incurring any safety violations. Typically, chance constraints are incorporated into the planning problem to provide probabilistic safety guarantees by imposing an upper bound on the collision probability of the planned trajectory. Yet, this results in overly conservative behavior on the grounds that the gap between the obtained risk and the specified upper limit is not explicitly restricted. To address this issue, we propose a real-time capable approach to quantify the risk associated with planned trajectories obtained from multiple probabilistic planners, running in parallel, with different upper bounds of the acceptable risk level. Based on the evaluated risk, the least conservative plan is selected provided that its associated risk is below a specified threshold. In such a way, the proposed approach provides probabilistic safety guarantees by attaining a closer bound to the specified risk, while being applicable to generic uncertainties of moving obstacles. We demonstrate the efficiency of our proposed approach, by improving the performance of a state-of-the-art probabilistic planner, in simulations and experiments using a mobile robot in an environment shared with humans.

USR: Unsupervised Separated 3D Garment and Human Reconstruction via Geometry and Semantic Consistency

Feb 21, 2023

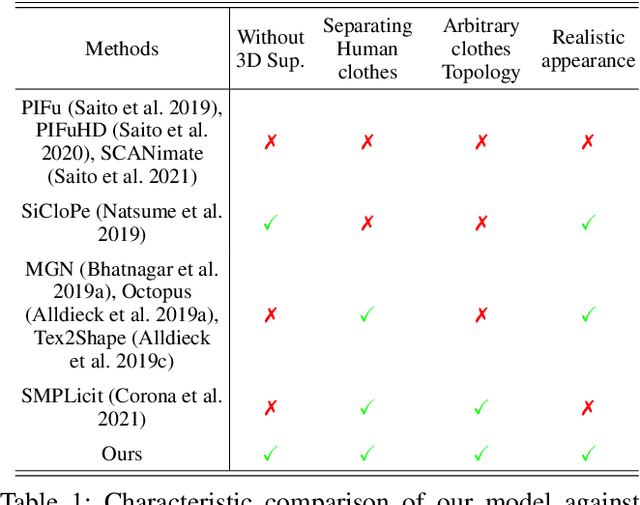

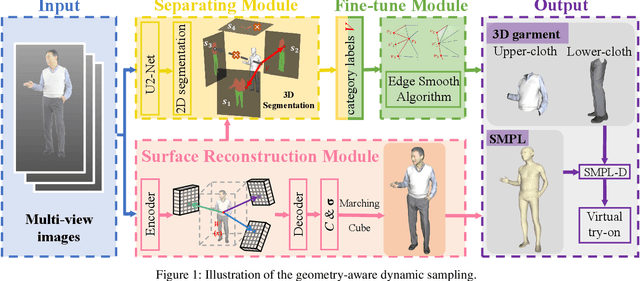

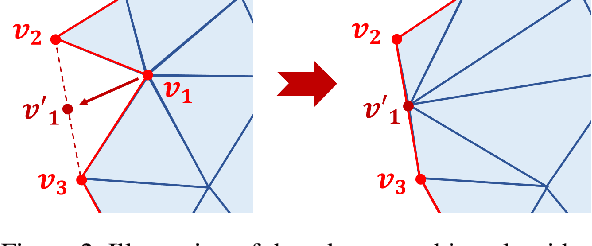

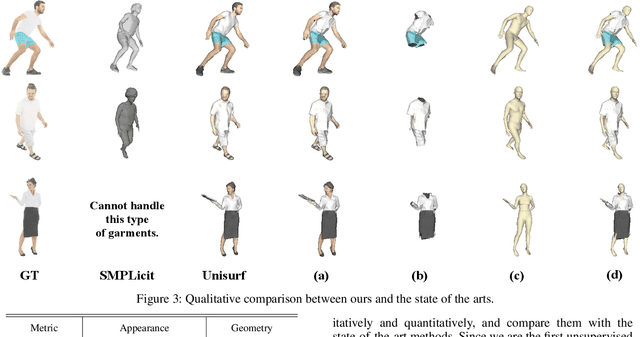

Dressed people reconstruction from images is a popular task with promising applications in the creative media and game industry. However, most existing methods reconstruct the human body and garments as a whole with the supervision of 3D models, which hinders the downstream interaction tasks and requires hard-to-obtain data. To address these issues, we propose an unsupervised separated 3D garments and human reconstruction model (USR), which reconstructs the human body and authentic textured clothes in layers without 3D models. More specifically, our method proposes a generalized surface-aware neural radiance field to learn the mapping between sparse multi-view images and geometries of the dressed people. Based on the full geometry, we introduce a Semantic and Confidence Guided Separation strategy (SCGS) to detect, segment, and reconstruct the clothes layer, leveraging the consistency between 2D semantic and 3D geometry. Moreover, we propose a Geometry Fine-tune Module to smooth edges. Extensive experiments on our dataset show that comparing with state-of-the-art methods, USR achieves improvements on both geometry and appearance reconstruction while supporting generalizing to unseen people in real time. Besides, we also introduce SMPL-D model to show the benefit of the separated modeling of clothes and the human body that allows swapping clothes and virtual try-on.

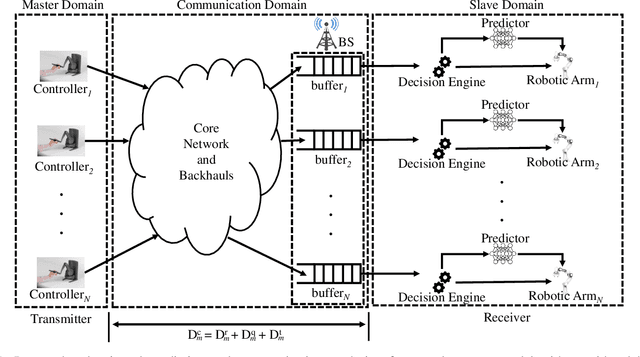

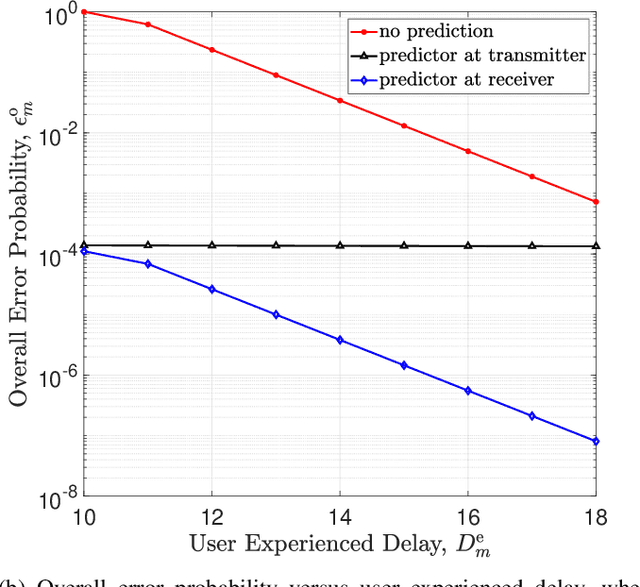

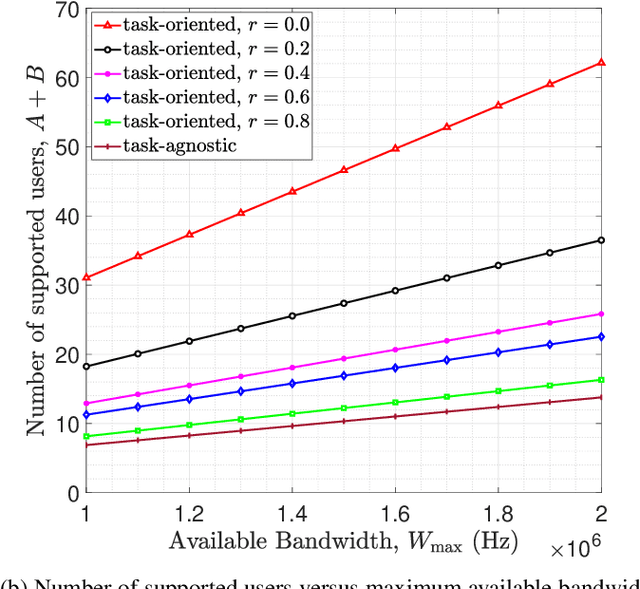

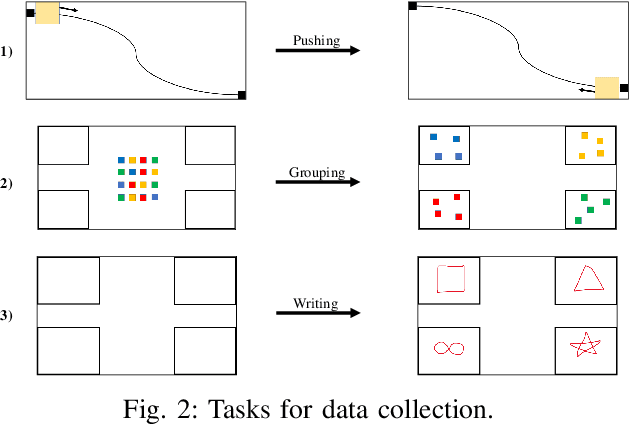

Task-Oriented Prediction and Communication Co-Design for Haptic Communications

Feb 21, 2023

Prediction has recently been considered as a promising approach to meet low-latency and high-reliability requirements in long-distance haptic communications. However, most of the existing methods did not take features of tasks and the relationship between prediction and communication into account. In this paper, we propose a task-oriented prediction and communication co-design framework, where the reliability of the system depends on prediction errors and packet losses in communications. The goal is to minimize the required radio resources subject to the low-latency and high-reliability requirements of various tasks. Specifically, we consider the just noticeable difference (JND) as a performance metric for the haptic communication system. We collect experiment data from a real-world teleoperation testbed and use time-series generative adversarial networks (TimeGAN) to generate a large amount of synthetic data. This allows us to obtain the relationship between the JND threshold, prediction horizon, and the overall reliability including communication reliability and prediction reliability. We take 5G New Radio as an example to demonstrate the proposed framework and optimize bandwidth allocation and data rates of devices. Our numerical and experimental results show that the proposed framework can reduce wireless resource consumption up to 77.80% compared with a task-agnostic benchmark.

Reinforcement Learning in a Birth and Death Process: Breaking the Dependence on the State Space

Feb 21, 2023

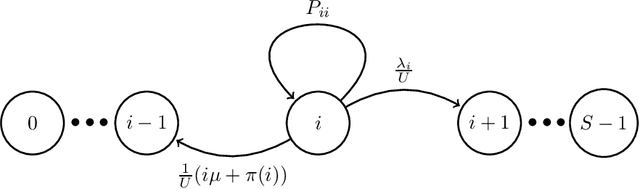

In this paper, we revisit the regret of undiscounted reinforcement learning in MDPs with a birth and death structure. Specifically, we consider a controlled queue with impatient jobs and the main objective is to optimize a trade-off between energy consumption and user-perceived performance. Within this setting, the \emph{diameter} $D$ of the MDP is $\Omega(S^S)$, where $S$ is the number of states. Therefore, the existing lower and upper bounds on the regret at time$T$, of order $O(\sqrt{DSAT})$ for MDPs with $S$ states and $A$ actions, may suggest that reinforcement learning is inefficient here. In our main result however, we exploit the structure of our MDPs to show that the regret of a slightly-tweaked version of the classical learning algorithm {\sc Ucrl2} is in fact upper bounded by $\tilde{\mathcal{O}}(\sqrt{E_2AT})$ where $E_2$ is related to the weighted second moment of the stationary measure of a reference policy. Importantly, $E_2$ is bounded independently of $S$. Thus, our bound is asymptotically independent of the number of states and of the diameter. This result is based on a careful study of the number of visits performed by the learning algorithm to the states of the MDP, which is highly non-uniform.

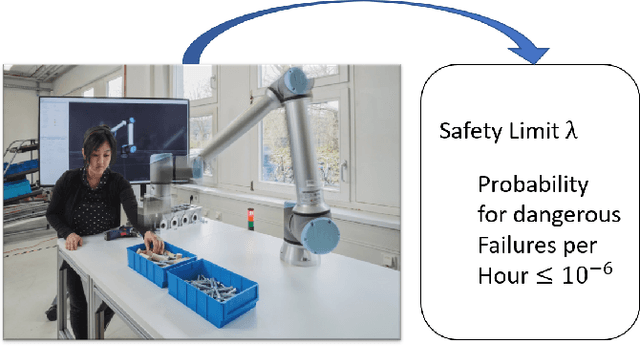

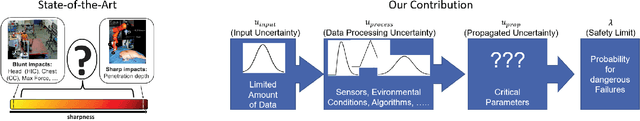

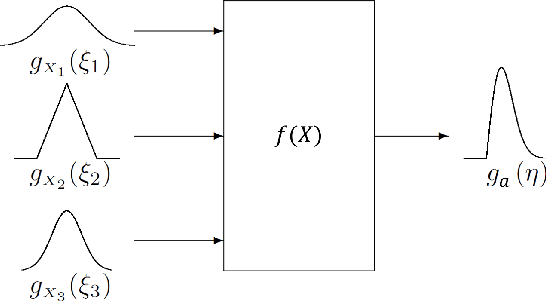

Safety Evaluation of Robot Systems via Uncertainty Quantification

Feb 21, 2023

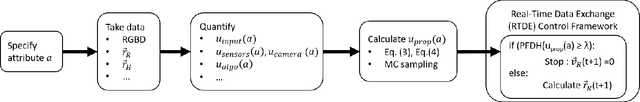

In this paper, we present an approach for quantifying the propagated uncertainty of robot systems in an online and data-driven manner. Especially in Human-Robot Collaboration, keeping track of the safety compliance during run time is essential: Misclassifying dangerous situations as safe might result in severe accidents. According to official regulations (eg, ISO standards), safety in industrial robot applications depends on critical parameters, such as the distance and relative velocity between humans and robots. However, safety can only be assured given a measure for the reliability of these parameters. While different risk detection and mitigation approaches exist in literature, a measure that can be used to evaluate safety limits online, and succinctly implies whether a situation is safe or dangerous, is missing to date. Motivated by this, we introduce a generalizable method for calculating the propagated measurement uncertainty of arbitrary parameters, that captures the accumulated uncertainty originating from sensory devices and environmental disturbances of the system. To show that our approach delivers correct results, we perform validation experiments in simulation. In addition, we employ our method in two real-world settings and demonstrate how quantifying the propagated uncertainty of critical parameters facilitates assessing safety online in Human-Robot Collaboration.

Analysis of the Effect of Time Delay for Unmanned Aerial Vehicles with Applications to Vision Based Navigation

Sep 05, 2022

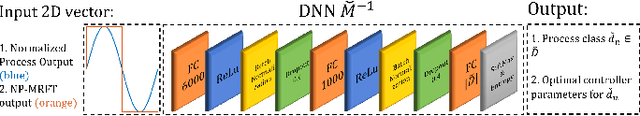

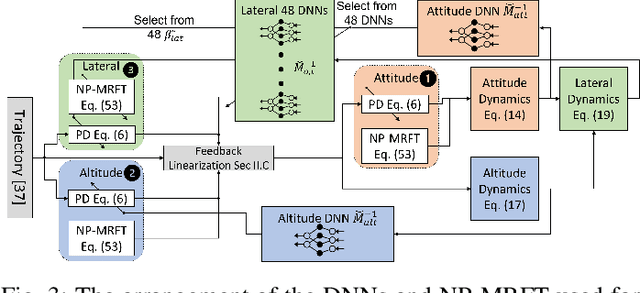

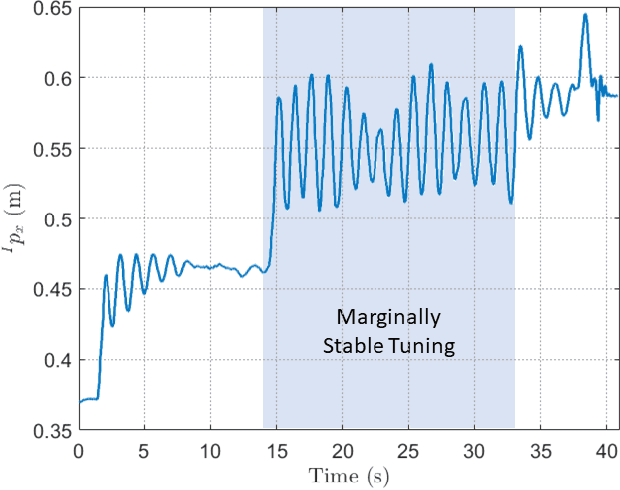

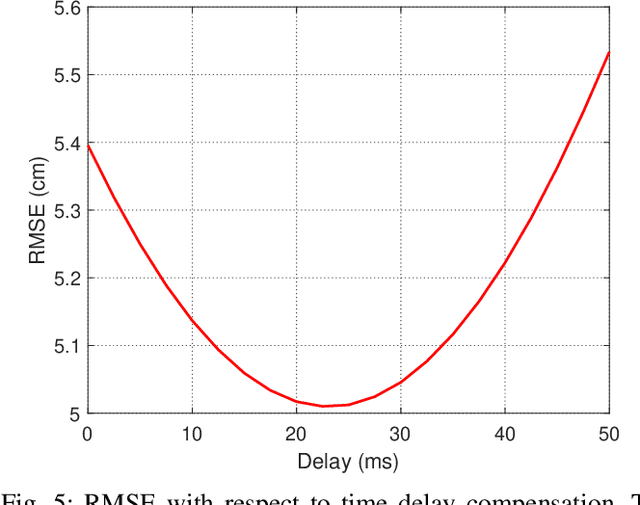

In this paper, we analyze the effect of time delay dynamics on controller design for Unmanned Aerial Vehicles (UAVs) with vision based navigation. Time delay is an inevitable phenomenon in cyber-physical systems, and has important implications on controller design and trajectory generation for UAVs. The impact of time delay on UAV dynamics increases with the use of the slower vision based navigation stack. We show that the existing models in the literature, which exclude time delay, are unsuitable for controller tuning since a trivial solution for minimizing an error cost functional always exists. The trivial solution that we identify suggests use of infinite controller gains to achieve optimal performance, which contradicts practical findings. We avoid such shortcomings by introducing a novel nonlinear time delay model for UAVs, and then obtain a set of linear decoupled models corresponding to each of the UAV control loops. The cost functional of the linearized time delay model of angular and altitude dynamics is analyzed, and in contrast to the delay-free models, we show the existence of finite optimal controller parameters. Due to the use of time delay models, we experimentally show that the proposed model accurately represents system stability limits. Due to time delay consideration, we achieved a tracking results of RMSE 5.01 cm when tracking a lemniscate trajectory with a peak velocity of 2.09 m/s using visual odometry (VO) based UAV navigation, which is on par with the state-of-the-art.

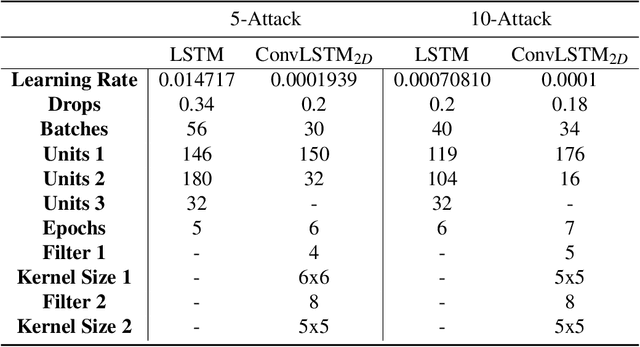

Edge-Based Detection and Localization of Adversarial Oscillatory Load Attacks Orchestrated By Compromised EV Charging Stations

Feb 24, 2023

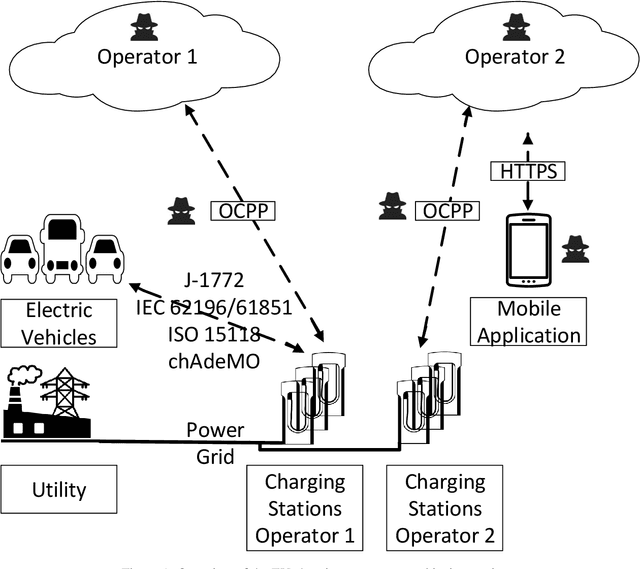

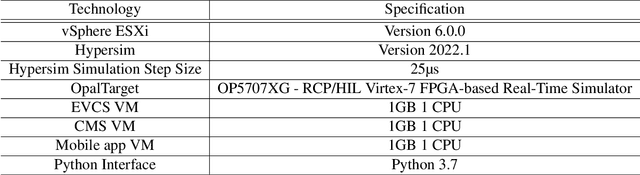

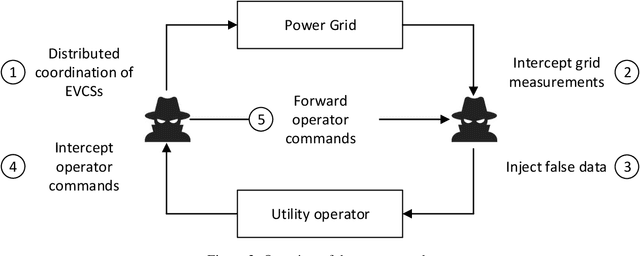

In this paper, we investigate an edge-based approach for the detection and localization of coordinated oscillatory load attacks initiated by exploited EV charging stations against the power grid. We rely on the behavioral characteristics of the power grid in the presence of interconnected EVCS while combining cyber and physical layer features to implement deep learning algorithms for the effective detection of oscillatory load attacks at the EVCS. We evaluate the proposed detection approach by building a real-time test bed to synthesize benign and malicious data, which was generated by analyzing real-life EV charging data collected during recent years. The results demonstrate the effectiveness of the implemented approach with the Convolutional Long-Short Term Memory model producing optimal classification accuracy (99.4\%). Moreover, our analysis results shed light on the impact of such detection mechanisms towards building resiliency into different levels of the EV charging ecosystem while allowing power grid operators to localize attacks and take further mitigation measures. Specifically, we managed to decentralize the detection mechanism of oscillatory load attacks and create an effective alternative for operator-centric mechanisms to mitigate multi-operator and MitM oscillatory load attacks against the power grid. Finally, we leverage the created test bed to evaluate a distributed mitigation technique, which can be deployed on public/private charging stations to average out the impact of oscillatory load attacks while allowing the power system to recover smoothly within 1 second with minimal overhead.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge