Zhe Yu

From Retinal Evidence to Safe Decisions: RETINA-SAFE and ECRT for Hallucination Risk Triage in Medical LLMs

Apr 07, 2026Abstract:Hallucinations in medical large language models (LLMs) remain a safety-critical issue, particularly when available evidence is insufficient or conflicting. We study this problem in diabetic retinopathy (DR) decision settings and introduce RETINA-SAFE, an evidence-grounded benchmark aligned with retinal grading records, comprising 12,522 samples. RETINA-SAFE is organized into three evidence-relation tasks: E-Align (evidence-consistent), E-Conflict (evidence-conflicting), and E-Gap (evidence-insufficient). We further propose ECRT (Evidence-Conditioned Risk Triage), a two-stage white-box detection framework: Stage 1 performs Safe/Unsafe risk triage, and Stage 2 refines unsafe cases into contradiction-driven versus evidence-gap risks. ECRT leverages internal representation and logit shifts under CTX/NOCTX conditions, with class-balanced training for robust learning. Under evidence-grouped (not patient-disjoint) splits across multiple backbones, ECRT provides strong Stage-1 risk triage and explicit subtype attribution, improves Stage-1 balanced accuracy by +0.15 to +0.19 over external uncertainty and self-consistency baselines and by +0.02 to +0.07 over the strongest adapted supervised baseline, and consistently exceeds a single-stage white-box ablation on Stage-1 balanced accuracy. These findings support white-box internal signals grounded in retinal evidence as a practical route to interpretable medical LLM risk triage.

LatentAudit: Real-Time White-Box Faithfulness Monitoring for Retrieval-Augmented Generation with Verifiable Deployment

Apr 07, 2026Abstract:Retrieval-augmented generation (RAG) mitigates hallucination but does not eliminate it: a deployed system must still decide, at inference time, whether its answer is actually supported by the retrieved evidence. We introduce LatentAudit, a white-box auditor that pools mid-to-late residual-stream activations from an open-weight generator and measures their Mahalanobis distance to the evidence representation. The resulting quadratic rule requires no auxiliary judge model, runs at generation time, and is simple enough to calibrate on a small held-out set. We show that residual-stream geometry carries a usable faithfulness signal, that this signal survives architecture changes and realistic retrieval failures, and that the same rule remains amenable to public verification. On PubMedQA with Llama-3-8B, LatentAudit reaches 0.942 AUROC with 0.77,ms overhead. Across three QA benchmarks and five model families (Llama-2/3, Qwen-2.5/3, Mistral), the monitor remains stable; under a four-way stress test with contradictions, retrieval misses, and partial-support noise, it reaches 0.9566--0.9815 AUROC on PubMedQA and 0.9142--0.9315 on HotpotQA. At 16-bit fixed-point precision, the audit rule preserves 99.8% of the FP16 AUROC, enabling Groth16-based public verification without revealing model weights or activations. Together, these results position residual-stream geometry as a practical basis for real-time RAG faithfulness monitoring and optional verifiable deployment.

Modeling Art Evaluations from Comparative Judgments: A Deep Learning Approach to Predicting Aesthetic Preferences

Jan 30, 2026Abstract:Modeling human aesthetic judgments in visual art presents significant challenges due to individual preference variability and the high cost of obtaining labeled data. To reduce cost of acquiring such labels, we propose to apply a comparative learning framework based on pairwise preference assessments rather than direct ratings. This approach leverages the Law of Comparative Judgment, which posits that relative choices exhibit less cognitive burden and greater cognitive consistency than direct scoring. We extract deep convolutional features from painting images using ResNet-50 and develop both a deep neural network regression model and a dual-branch pairwise comparison model. We explored four research questions: (RQ1) How does the proposed deep neural network regression model with CNN features compare to the baseline linear regression model using hand-crafted features? (RQ2) How does pairwise comparative learning compare to regression-based prediction when lacking access to direct rating values? (RQ3) Can we predict individual rater preferences through within-rater and cross-rater analysis? (RQ4) What is the annotation cost trade-off between direct ratings and comparative judgments in terms of human time and effort? Our results show that the deep regression model substantially outperforms the baseline, achieving up to $328\%$ improvement in $R^2$. The comparative model approaches regression performance despite having no access to direct rating values, validating the practical utility of pairwise comparisons. However, predicting individual preferences remains challenging, with both within-rater and cross-rater performance significantly lower than average rating prediction. Human subject experiments reveal that comparative judgments require $60\%$ less annotation time per item, demonstrating superior annotation efficiency for large-scale preference modeling.

Modeling Image-Caption Rating from Comparative Judgments

Jan 30, 2026Abstract:Rating the accuracy of captions in describing images is time-consuming and subjective for humans. In contrast, it is often easier for people to compare two captions and decide which one better matches a given image. In this work, we propose a machine learning framework that models such comparative judgments instead of direct ratings. The model can then be applied to rank unseen image-caption pairs in the same way as a regression model trained on direct ratings. Using the VICR dataset, we extract visual features with ResNet-50 and text features with MiniLM, then train both a regression model and a comparative learning model. While the regression model achieves better performance (Pearson's $ρ$: 0.7609 and Spearman's $r_s$: 0.7089), the comparative learning model steadily improves with more data and approaches the regression baseline. In addition, a small-scale human evaluation study comparing absolute rating, pairwise comparison, and same-image comparison shows that comparative annotation yields faster results and has greater agreement among human annotators. These results suggest that comparative learning can effectively model human preferences while significantly reducing the cost of human annotations.

Comparative Separation: Evaluating Separation on Comparative Judgment Test Data

Jan 11, 2026Abstract:This research seeks to benefit the software engineering society by proposing comparative separation, a novel group fairness notion to evaluate the fairness of machine learning software on comparative judgment test data. Fairness issues have attracted increasing attention since machine learning software is increasingly used for high-stakes and high-risk decisions. It is the responsibility of all software developers to make their software accountable by ensuring that the machine learning software do not perform differently on different sensitive groups -- satisfying the separation criterion. However, evaluation of separation requires ground truth labels for each test data point. This motivates our work on analyzing whether separation can be evaluated on comparative judgment test data. Instead of asking humans to provide the ratings or categorical labels on each test data point, comparative judgments are made between pairs of data points such as A is better than B. According to the law of comparative judgment, providing such comparative judgments yields a lower cognitive burden for humans than providing ratings or categorical labels. This work first defines the novel fairness notion comparative separation on comparative judgment test data, and the metrics to evaluate comparative separation. Then, both theoretically and empirically, we show that in binary classification problems, comparative separation is equivalent to separation. Lastly, we analyze the number of test data points and test data pairs required to achieve the same level of statistical power in the evaluation of separation and comparative separation, respectively. This work is the first to explore fairness evaluation on comparative judgment test data. It shows the feasibility and the practical benefits of using comparative judgment test data for model evaluations.

One Leak Away: How Pretrained Model Exposure Amplifies Jailbreak Risks in Finetuned LLMs

Dec 14, 2025

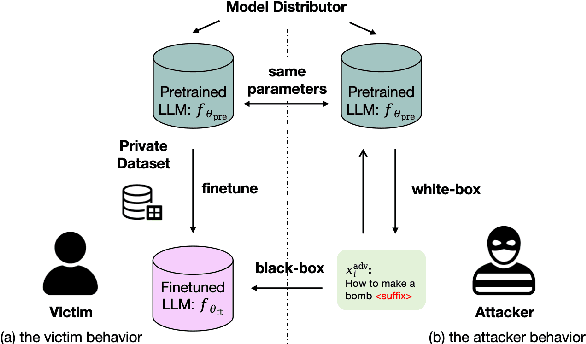

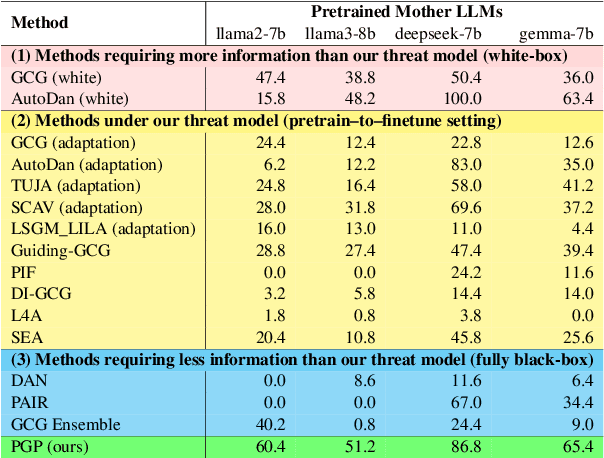

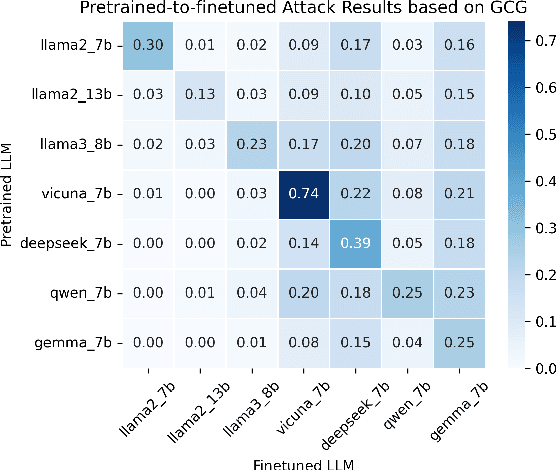

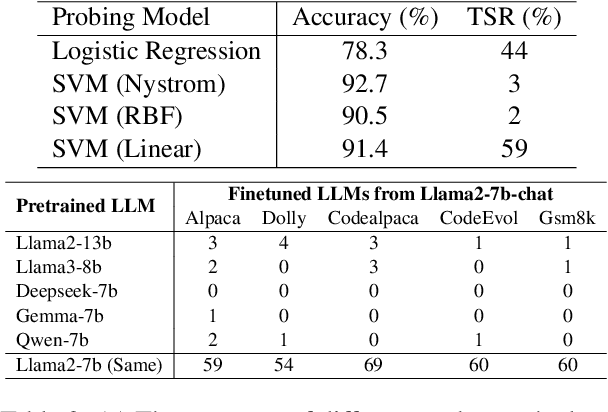

Abstract:Finetuning pretrained large language models (LLMs) has become the standard paradigm for developing downstream applications. However, its security implications remain unclear, particularly regarding whether finetuned LLMs inherit jailbreak vulnerabilities from their pretrained sources. We investigate this question in a realistic pretrain-to-finetune threat model, where the attacker has white-box access to the pretrained LLM and only black-box access to its finetuned derivatives. Empirical analysis shows that adversarial prompts optimized on the pretrained model transfer most effectively to its finetuned variants, revealing inherited vulnerabilities from pretrained to finetuned LLMs. To further examine this inheritance, we conduct representation-level probing, which shows that transferable prompts are linearly separable within the pretrained hidden states, suggesting that universal transferability is encoded in pretrained representations. Building on this insight, we propose the Probe-Guided Projection (PGP) attack, which steers optimization toward transferability-relevant directions. Experiments across multiple LLM families and diverse finetuned tasks confirm PGP's strong transfer success, underscoring the security risks inherent in the pretrain-to-finetune paradigm.

FairReweighing: Density Estimation-Based Reweighing Framework for Improving Separation in Fair Regression

Nov 14, 2025Abstract:There has been a prevalence of applying AI software in both high-stakes public-sector and industrial contexts. However, the lack of transparency has raised concerns about whether these data-informed AI software decisions secure fairness against people of all racial, gender, or age groups. Despite extensive research on emerging fairness-aware AI software, up to now most efforts to solve this issue have been dedicated to binary classification tasks. Fairness in regression is relatively underexplored. In this work, we adopted a mutual information-based metric to assess separation violations. The metric is also extended so that it can be directly applied to both classification and regression problems with both binary and continuous sensitive attributes. Inspired by the Reweighing algorithm in fair classification, we proposed a FairReweighing pre-processing algorithm based on density estimation to ensure that the learned model satisfies the separation criterion. Theoretically, we show that the proposed FairReweighing algorithm can guarantee separation in the training data under a data independence assumption. Empirically, on both synthetic and real-world data, we show that FairReweighing outperforms existing state-of-the-art regression fairness solutions in terms of improving separation while maintaining high accuracy.

Cross-Border Legal Adaptation of Autonomous Vehicle Design based on Logic and Non-monotonic Reasoning

Jul 30, 2025Abstract:This paper focuses on the legal compliance challenges of autonomous vehicles in a transnational context. We choose the perspective of designers and try to provide supporting legal reasoning in the design process. Based on argumentation theory, we introduce a logic to represent the basic properties of argument-based practical (normative) reasoning, combined with partial order sets of natural numbers to express priority. Finally, through case analysis of legal texts, we show how the reasoning system we provide can help designers to adapt their design solutions more flexibly in the cross-border application of autonomous vehicles and to more easily understand the legal implications of their decisions.

Explaining Non-monotonic Normative Reasoning using Argumentation Theory with Deontic Logic

Sep 18, 2024Abstract:In our previous research, we provided a reasoning system (called LeSAC) based on argumentation theory to provide legal support to designers during the design process. Building on this, this paper explores how to provide designers with effective explanations for their legally relevant design decisions. We extend the previous system for providing explanations by specifying norms and the key legal or ethical principles for justifying actions in normative contexts. Considering that first-order logic has strong expressive power, in the current paper we adopt a first-order deontic logic system with deontic operators and preferences. We illustrate the advantages and necessity of introducing deontic logic and designing explanations under LeSAC by modelling two cases in the context of autonomous driving. In particular, this paper also discusses the requirements of the updated LeSAC to guarantee rationality, and proves that a well-defined LeSAC can satisfy the rationality postulate for rule-based argumentation frameworks. This ensures the system's ability to provide coherent, legally valid explanations for complex design decisions.

A Multi-class Ride-hailing Service Subsidy System Utilizing Deep Causal Networks

Aug 04, 2024

Abstract:In the ride-hailing industry, subsidies are predominantly employed to incentivize consumers to place more orders, thereby fostering market growth. Causal inference techniques are employed to estimate the consumer elasticity with different subsidy levels. However, the presence of confounding effects poses challenges in achieving an unbiased estimate of the uplift effect. We introduce a consumer subsidizing system to capture relationships between subsidy propensity and the treatment effect, which proves effective while maintaining a lightweight online environment.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge