Youcan Yan

A Haptic-Based Proximity Sensing System for Buried Object in Granular Material

Nov 26, 2024

Abstract: Perceiving the proximity of objects buried in granular materials is important, especially for applications such as minesweeping. However, because particles are opaque and have complex properties, existing proximity sensors suffer from high hardware costs due to sophisticated equipment and from a high burden on users due to unintuitive results. In this paper, we propose a simple yet effective proximity sensing system for buried objects based on the haptic feedback from the interaction between the sensor and the granules. We study and exploit a characteristic phenomenon of granular materials, the failure wedge zone, and combine it with a machine learning method, Gaussian process regression, to identify the force signal changes induced by the proximity of objects and thereby achieve near-field perception. Furthermore, we design a novel trajectory that controls the probe as it searches through the granules, enabling perception over a wide range. Our proximity sensing system can also adaptively determine optimal parameters for robust operation in different particles. Experiments demonstrate that our system can perceive buried objects 0.5 to 7 cm in advance across various materials.
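The idea of using Gaussian process regression to flag a force change can be illustrated with a minimal sketch. Everything specific here is an assumption rather than the paper's implementation: the RBF kernel, the fixed hyperparameters (`length`, `var`, `noise`), the three-standard-deviation threshold, and the function names `gp_predict` and `is_force_anomaly` are all hypothetical. The abstract states only that the system determines its parameters adaptively, which this sketch does not do.

```python
import math

def rbf(a, b, length=1.0, var=1.0):
    """Squared-exponential kernel between two scalar time stamps."""
    return var * math.exp(-0.5 * ((a - b) / length) ** 2)

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x

def gp_predict(ts, fs, t_new, length=1.0, var=1.0, noise=0.05):
    """Predictive mean and standard deviation of the force at time t_new."""
    mean_f = sum(fs) / len(fs)  # constant prior mean (assumed)
    K = [[rbf(a, b, length, var) + (noise ** 2 if i == j else 0.0)
          for j, b in enumerate(ts)] for i, a in enumerate(ts)]
    k_star = [rbf(t_new, a, length, var) for a in ts]
    alpha = solve(K, [f - mean_f for f in fs])
    mu = mean_f + sum(k * a for k, a in zip(k_star, alpha))
    v = solve(K, k_star)
    var_pred = var + noise ** 2 - sum(k * x for k, x in zip(k_star, v))
    return mu, math.sqrt(max(var_pred, 0.0))

def is_force_anomaly(ts, fs, t_new, f_new, k=3.0, **gp_kwargs):
    """Flag a reading that rises more than k predictive std devs above the mean."""
    mu, sd = gp_predict(ts, fs, t_new, **gp_kwargs)
    return f_new > mu + k * sd
```

In use, `ts` and `fs` would hold a recent window of time stamps and measured forces, and each new reading would be checked with `is_force_anomaly` before being appended to the window. Only upward deviations are flagged, on the assumption that an approaching object raises the resistance force.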

GRAINS: Proximity Sensing of Objects in Granular Materials

Jul 18, 2023

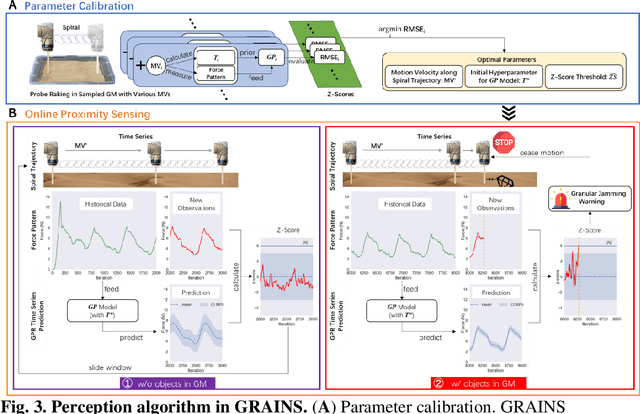

Abstract: Proximity sensing detects an object's presence without contact. However, research has rarely explored proximity sensing in granular materials (GM), because GM blocks visual access and has complex properties. In this paper, we propose a granular-material-embedded autonomous proximity sensing system (GRAINS) based on three granular phenomena (fluidization, jamming, and the failure wedge zone). GRAINS can automatically sense objects buried beneath GM in real time (at least ~20 Hz) and perceive them 0.5 to 7 cm ahead in different granules without the use of vision or touch. We introduce a new spiral trajectory for the probe raking in GM, combining linear and circular motions, inspired by a common granular fluidization technique. Based on the observation that the force rises when granular jamming occurs in the failure wedge zone in front of the probe during raking, we employ Gaussian process regression to continuously learn and predict the force patterns and to detect the force anomaly caused by granular jamming, which indicates the proximity of a buried object. Finally, we apply GRAINS to an exploration strategy guided by Bayesian optimization to successfully localize underground objects and outline their distribution using proximity sensing, without contact or digging. This work offers a simple yet reliable method with potential for safe operation when building habitation infrastructure on an alien planet without human intervention.
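A trajectory that combines linear and circular motion can be sketched as a circle whose centre advances at constant speed. This parameterization is an assumption made for illustration; the abstract does not give the paper's actual equations, and the function name and parameters below (`advance_speed`, `radius`, `angular_speed`) are hypothetical.

```python
import math

def spiral_raking_path(advance_speed, radius, angular_speed, duration, dt):
    """Return (x, y) waypoints for a circle of the given radius whose
    centre moves along +x at advance_speed (an assumed parameterization)."""
    steps = int(round(duration / dt))
    path = []
    for i in range(steps + 1):
        t = i * dt
        x = advance_speed * t + radius * math.cos(angular_speed * t)
        y = radius * math.sin(angular_speed * t)
        path.append((x, y))
    return path
```

For example, `spiral_raking_path(0.01, 0.02, 2 * math.pi, 1.0, 0.05)` yields 21 waypoints covering one full revolution while the circle centre advances 1 cm, if the units are taken as metres and seconds.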

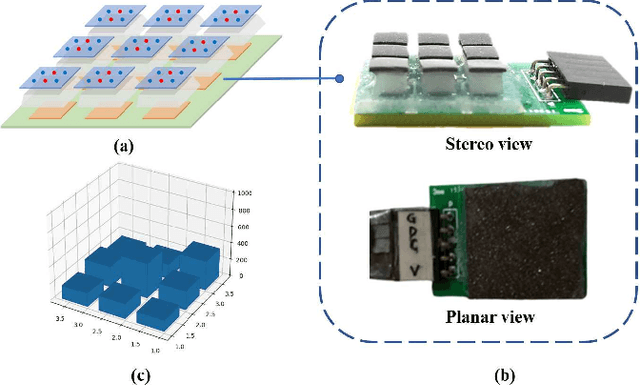

Polymer-Based Self-Calibrated Optical Fiber Tactile Sensor

Mar 01, 2023

Abstract: Human skin can accurately sense self-decoupled normal and shear forces when in contact with objects of different sizes. Although many soft and conformable tactile sensors for robotic applications can decouple normal and shear forces, the impact of the size of the contacting object on the force calibration model has commonly been ignored. Here, using the principle that contact force can be derived from the light power loss in a soft optical fiber core, we present a soft tactile sensor that decouples normal and shear forces and calibrates the measurement results based on the object size, by designing a two-layered, woven, polymer-based optical fiber anisotropic structure embedded in a soft elastomer. Based on the anisotropic response of the optical fibers, we developed a linear calibration algorithm to simultaneously measure the size of the contact object and the decoupled normal and shear forces calibrated for the object size. By calibrating the sensor at the robotic arm tip, we show that robots can reconstruct the force vector with an average accuracy of 0.15 N for normal forces, 0.17 N for shear forces along the X-axis, and 0.18 N for shear forces along the Y-axis, within a sensing range of 0-2 N in all directions, and can measure object size with an average accuracy of 0.4 mm, within a test indenter diameter range of 5-12 mm.

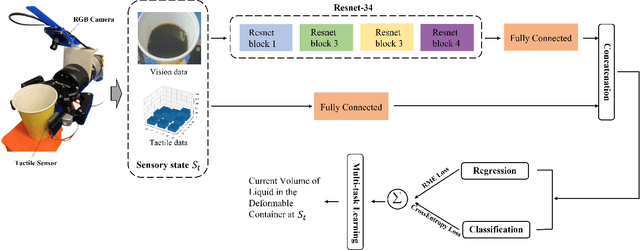

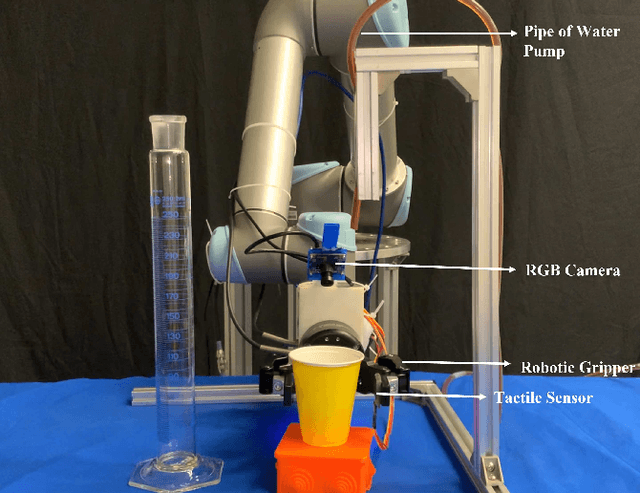

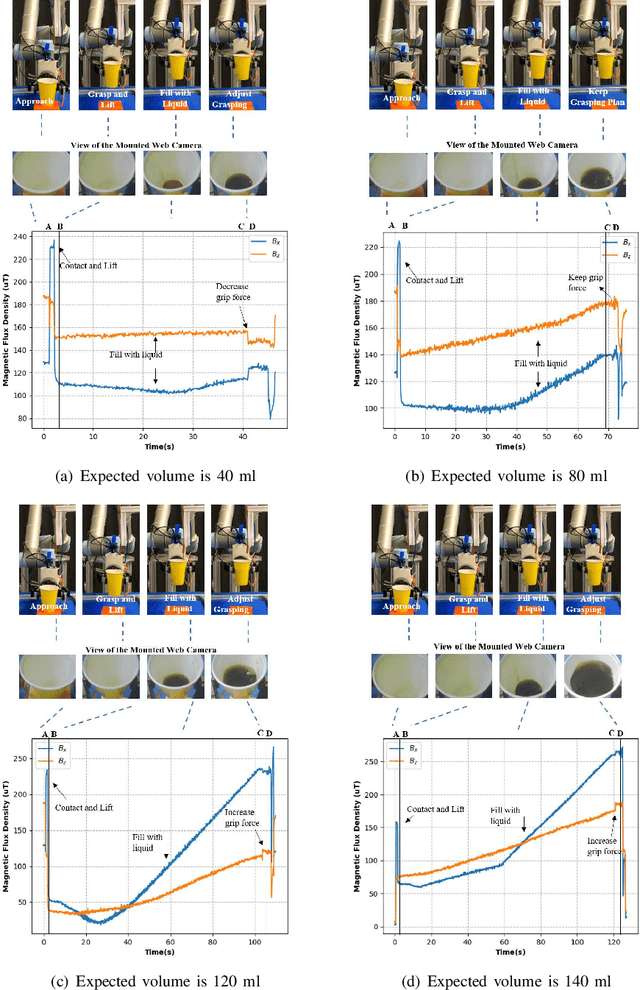

Visual-tactile sensing for Real-time liquid Volume Estimation in Grasping

Feb 23, 2022

Abstract: We propose a deep visuo-tactile model for real-time estimation of the liquid inside a deformable container in a proprioceptive way. We fuse two sensory modalities, i.e., the raw visual inputs from the RGB camera and the tactile cues from our specific tactile sensor, without any extra sensor calibrations. The robotic system is controlled and adjusted in real time based on the estimation model. The main contributions and novelties of our work are as follows: 1) We explore a proprioceptive way of estimating liquid volume by developing an end-to-end predictive model with multi-modal convolutional networks, which achieves high precision, with an error of around 2 ml in the experimental validation. 2) We propose a multi-task learning architecture that jointly considers the losses from both classification and regression tasks, and we comparatively evaluate the performance of each variant on the collected data and on the actual robotic platform. 3) We use the proprioceptive robotic system to accurately serve and control the requested volume of liquid, which flows continuously into a deformable container in real time. 4) We adaptively adjust the grasping plan to achieve more stable grasping and manipulation according to the real-time liquid volume prediction.
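A loss that considers both classification and regression can be sketched as a weighted sum of the two terms. The abstract does not specify the loss form, the weighting, or how volumes are grouped into classes, so the softmax cross-entropy, the squared error, and the `reg_weight` parameter below are assumptions chosen for illustration, and `multitask_loss` is a hypothetical name.

```python
import math

def multitask_loss(class_logits, true_class, predicted_ml, true_ml, reg_weight=1.0):
    """Softmax cross-entropy over volume classes plus a weighted squared
    error on the predicted volume in ml (both terms are assumed forms)."""
    # Numerically stable log-sum-exp for the cross-entropy term.
    m = max(class_logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in class_logits))
    cross_entropy = log_sum - class_logits[true_class]
    squared_error = (predicted_ml - true_ml) ** 2
    return cross_entropy + reg_weight * squared_error
```

The weight `reg_weight` trades off the two tasks; because the squared error is in ml squared while the cross-entropy is unitless, some rescaling of this kind is usually needed for neither term to dominate.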
