Qionghai Dai

ImmersiveNeRF: Hybrid Radiance Fields for Unbounded Immersive Light Field Reconstruction

Sep 04, 2023

Abstract: This paper proposes a hybrid radiance field representation for unbounded immersive light field reconstruction that supports high-quality rendering and aggressive view extrapolation. The key idea is to first formally separate the foreground and the background and then adaptively balance their learning during training. To this end, we represent the foreground and background as two separate radiance fields with two different spatial mapping strategies. We further propose an adaptive sampling strategy and a segmentation regularizer for clearer segmentation and more robust convergence. Finally, we contribute a novel immersive light field dataset, named THUImmersive, which captures multiple neighboring viewpoints of the same scene and thus has the potential to support 6DoF immersive rendering over a much larger space than existing datasets, to stimulate research and AR/VR applications in the immersive light field domain. Extensive experiments demonstrate the strong performance of our method for unbounded immersive light field reconstruction.

SHoP: A Deep Learning Framework for Solving High-order Partial Differential Equations

May 17, 2023

Abstract: Solving partial differential equations (PDEs) is a fundamental problem in computational science with wide applications in both scientific and engineering research. Due to their universal approximation property, neural networks are widely used to approximate the solutions of PDEs. However, existing works are incapable of solving high-order PDEs because higher-order derivatives are computed with insufficient accuracy, and the final network is a black box without explicit explanation. To address these issues, we propose a deep learning framework to solve high-order PDEs, named SHoP. Specifically, we derive the high-order derivative rule for neural networks to obtain the derivatives quickly and accurately; moreover, we expand the network into a Taylor series, providing an explicit solution for the PDEs. We conduct experimental validations on four high-order PDEs with different dimensions, showing that we can solve high-order PDEs efficiently and accurately.
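
The two ideas named in the abstract (closed-form high-order derivatives of a network and a Taylor expansion of that network) can be illustrated on a toy case. The sketch below is not SHoP's derivative rule; it assumes a hypothetical one-hidden-layer tanh network with scalar input, u(x) = sum_j w2[j] * tanh(w1[j] * x + b1[j]), and uses the standard fact that the n-th derivative of tanh(z) is a polynomial P_n in tanh(z), with P_0(t) = t and P_{n+1}(t) = P_n'(t) * (1 - t^2). All function names and weights are illustrative.

```python
import math

def tanh_derivative_polys(max_order):
    """polys[n] holds the coefficients (lowest degree first) of P_n, where
    d^n/dz^n tanh(z) = P_n(tanh(z)), P_0(t) = t, P_{n+1}(t) = P_n'(t) * (1 - t^2)."""
    polys = [[0.0, 1.0]]
    for _ in range(max_order):
        p = polys[-1]
        dp = [k * p[k] for k in range(1, len(p))]  # coefficients of P_n'(t)
        nxt = [0.0] * (len(dp) + 2)
        for k, c in enumerate(dp):
            nxt[k] += c       # term from multiplying by 1
            nxt[k + 2] -= c   # term from multiplying by -t^2
        polys.append(nxt)
    return polys

def eval_poly(coeffs, t):
    return sum(c * t ** k for k, c in enumerate(coeffs))

def network(x, w1, b1, w2):
    """Toy network u(x) = sum_j w2[j] * tanh(w1[j] * x + b1[j])."""
    return sum(a * math.tanh(w * x + b) for w, b, a in zip(w1, b1, w2))

def network_derivatives(x0, w1, b1, w2, max_order):
    """Exact derivatives u^(n)(x0) for n = 0..max_order via the chain rule:
    u^(n)(x) = sum_j w2[j] * w1[j]^n * P_n(tanh(w1[j] * x + b1[j]))."""
    polys = tanh_derivative_polys(max_order)
    derivs = []
    for n in range(max_order + 1):
        total = 0.0
        for w, b, a in zip(w1, b1, w2):
            t = math.tanh(w * x0 + b)
            total += a * (w ** n) * eval_poly(polys[n], t)
        derivs.append(total)
    return derivs

def taylor_eval(derivs, x0, x):
    """Evaluate the truncated Taylor series of the network around x0."""
    return sum(d / math.factorial(n) * (x - x0) ** n for n, d in enumerate(derivs))

if __name__ == "__main__":
    w1, b1, w2 = [0.7, -1.3], [0.1, 0.4], [1.5, -0.8]  # arbitrary illustrative weights
    derivs = network_derivatives(0.2, w1, b1, w2, max_order=8)
    print("network:", network(0.3, w1, b1, w2))
    print("taylor: ", taylor_eval(derivs, 0.2, 0.3))
```

Close to the expansion point the truncated series tracks the network closely, which is the sense in which a Taylor expansion turns a black-box network into an explicit polynomial expression.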

CUTS+: High-dimensional Causal Discovery from Irregular Time-series

May 10, 2023

Abstract: Causal discovery in time-series is a fundamental problem in the machine learning community, enabling causal reasoning and decision-making in complex scenarios. Recently, researchers have successfully discovered causality by combining neural networks with Granger causality, but the performance of these methods degrades substantially on high-dimensional data because of highly redundant network designs and huge causal graphs. Moreover, missing entries in the observations further hamper causal structure learning. To overcome these limitations, we propose CUTS+, which is built on the Granger-causality-based causal discovery method CUTS and improves scalability by introducing a technique called Coarse-to-fine-discovery (C2FD) and leveraging a message-passing-based graph neural network (MPGNN). Compared to previous methods on simulated, quasi-real, and real datasets, we show that CUTS+ largely improves the causal discovery performance on high-dimensional data with different types of irregular sampling.

Super-NeRF: View-consistent Detail Generation for NeRF super-resolution

Apr 26, 2023

Abstract: The neural radiance field (NeRF) has achieved remarkable success in modeling 3D scenes and synthesizing high-fidelity novel views. However, existing NeRF-based methods focus more on making full use of the image resolution to generate novel views and pay less attention to generating details under limited input resolution. By analogy with the extensive use of image super-resolution, NeRF super-resolution is an effective way to generate a high-resolution implicit representation of 3D scenes and has great potential for applications. So far, this important topic remains under-explored. In this paper, we propose a NeRF super-resolution method, named Super-NeRF, to generate high-resolution NeRF from only low-resolution inputs. Given multi-view low-resolution images, Super-NeRF constructs a consistency-controlling super-resolution module to generate view-consistent high-resolution details for NeRF. Specifically, an optimizable latent code is introduced for each low-resolution input image to control the 2D super-resolution images so that they converge to a view-consistent output. The latent codes of the low-resolution images are optimized jointly with the target Super-NeRF representation to fully utilize the view consistency constraint inherent in NeRF construction. We verify the effectiveness of Super-NeRF on synthetic, real-world, and AI-generated NeRF datasets. Super-NeRF achieves state-of-the-art NeRF super-resolution performance on high-resolution detail generation and cross-view consistency.

Hi Sheldon! Creating Deep Personalized Characters from TV Shows

Apr 09, 2023

Abstract: Imagine an interesting multimodal interactive scenario in which you can see, hear, and chat with an AI-generated digital character who is capable of behaving like Sheldon from The Big Bang Theory, as a DEEP copy from appearance to personality. Towards this fantastic multimodal chatting scenario, we propose a novel task, named Deep Personalized Character Creation (DPCC): creating multimodal chat personalized characters from multimodal data such as TV shows. Specifically, given a single- or multi-modality input (text, audio, video), the goal of DPCC is to generate a multi-modality (text, audio, video) response that matches the personality of a specific character such as Sheldon and is also of high quality. To support this novel task, we further collect a character-centric multimodal dialogue dataset, named Deep Personalized Character Dataset (DPCD), from TV shows. DPCD contains character-specific multimodal dialogue data of ~10k utterances and ~6 hours of audio/video per character, which is around 10 times larger than existing related datasets. On DPCD, we present a baseline method for the DPCC task and create 5 Deep personalized digital Characters (DeepCharacters) from Big Bang TV Shows. We conduct both subjective and objective experiments to evaluate the multimodal responses from DeepCharacters in terms of characterization and quality. The results demonstrate that, on our collected DPCD dataset, the proposed baseline can create personalized digital characters that generate multimodal responses. Our collected DPCD dataset, the data collection code, and our baseline will be published soon.

CUTS: Neural Causal Discovery from Irregular Time-Series Data

Feb 15, 2023

Abstract: Causal discovery from time-series data has been a central task in machine learning. Recently, Granger causality inference has been gaining momentum due to its good explainability and high compatibility with emerging deep neural networks. However, most existing methods assume structured input data and degrade greatly when encountering data with randomly missing entries or non-uniform sampling frequencies, which hampers their application in real scenarios. To address this issue, we present CUTS, a neural Granger causal discovery algorithm that jointly imputes unobserved data points and builds causal graphs by plugging two mutually boosting modules into an iterative framework: (i) Latent data prediction stage: a Delayed Supervision Graph Neural Network (DSGNN) is designed to hallucinate and register unstructured data, which might be of high dimension and have a complex distribution; (ii) Causal graph fitting stage: a causal adjacency matrix is built from the imputed data under a sparse penalty. Experiments show that CUTS effectively infers causal graphs from unstructured time-series data, with significantly superior performance to existing methods. Our approach constitutes a promising step towards applying causal discovery to real applications with non-ideal observations.

* https://openreview.net/forum?id=UG8bQcD3Emv
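
For readers unfamiliar with the Granger notion of causality that CUTS builds on, the following minimal sketch may help. It is not the CUTS algorithm: it assumes fully observed, regularly sampled data and a lag-1 linear model, and it simply measures how much the one-step prediction error of one series drops when the lagged values of another series are added as a regressor. The function names and the simulated system are hypothetical.

```python
import random

def least_squares_mse(rows, targets):
    """Fit targets ~ rows by ordinary least squares (no intercept) and
    return the mean squared residual. Solves the normal equations directly."""
    k = len(rows[0])
    A = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    b = [sum(r[i] * t for r, t in zip(rows, targets)) for i in range(k)]
    # Gaussian elimination with partial pivoting
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, k):
            f = A[r][col] / A[col][col]
            for c in range(col, k):
                A[r][c] -= f * A[col][c]
            b[r] -= f * b[col]
    # Back substitution
    w = [0.0] * k
    for i in reversed(range(k)):
        w[i] = (b[i] - sum(A[i][j] * w[j] for j in range(i + 1, k))) / A[i][i]
    residuals = [t - sum(wi * ri for wi, ri in zip(w, r)) for r, t in zip(rows, targets)]
    return sum(e * e for e in residuals) / len(residuals)

def granger_gain(cause, effect):
    """Relative drop in lag-1 prediction error of `effect` when the lagged
    `cause` is added to the regressors. Values near 0 suggest no Granger effect."""
    y = effect[1:]
    restricted = [[effect[t]] for t in range(len(effect) - 1)]
    full = [[effect[t], cause[t]] for t in range(len(effect) - 1)]
    return 1.0 - least_squares_mse(full, y) / least_squares_mse(restricted, y)

def simulate(n=2000, seed=0):
    """Hypothetical two-variable system in which x drives y but not vice versa."""
    rng = random.Random(seed)
    x, y = [0.0], [0.0]
    for _ in range(n - 1):
        x_next = 0.5 * x[-1] + rng.gauss(0, 1)
        y_next = 0.3 * y[-1] + 0.8 * x[-1] + rng.gauss(0, 1)
        x.append(x_next)
        y.append(y_next)
    return x, y

if __name__ == "__main__":
    x, y = simulate()
    print("gain x -> y:", granger_gain(x, y))
    print("gain y -> x:", granger_gain(y, x))
```

In the simulated system the gain in the x -> y direction is clearly larger than in the reverse direction. Handling missing entries, irregular sampling, and nonlinear dynamics is precisely what this linear sketch leaves out.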

DarkVision: A Benchmark for Low-light Image/Video Perception

Jan 16, 2023

Abstract: Imaging and perception in photon-limited scenarios are necessary for various applications, e.g., night surveillance or photography, high-speed photography, and autonomous driving. In these cases, cameras suffer from a low signal-to-noise ratio, which degrades the image quality severely and poses challenges for downstream high-level vision tasks like object detection and recognition. Data-driven methods have achieved enormous success in both image restoration and high-level vision tasks. However, the lack of a high-quality benchmark dataset with accurate task-specific annotations for photon-limited images/videos heavily delays research progress. In this paper, we contribute the first multi-illuminance, multi-camera, low-light dataset, named DarkVision, serving both image enhancement and object detection. We provide bright and dark pairs with pixel-wise registration, in which the bright counterpart provides a reliable reference for restoration and annotation. The dataset consists of bright-dark pairs of 900 static scenes with objects from 15 categories, and 32 dynamic scenes with 4-category objects. For each scene, images/videos were captured at 5 illuminance levels using three cameras of different grades, and average photons can be reliably estimated from the calibration data for quantitative studies. The static-scene images and dynamic videos respectively contain around 7,344 and 320,667 instances in total. With DarkVision, we established baselines for image/video enhancement and object detection using representative algorithms. To demonstrate an exemplary application of DarkVision, we propose two simple yet effective approaches for improving performance in video enhancement and object detection respectively. We believe DarkVision will advance the state of the art in both imaging and related computer vision tasks in low-light environments.

TINC: Tree-structured Implicit Neural Compression

Nov 17, 2022

Abstract: Implicit neural representation (INR) can describe target scenes with high fidelity using a small number of parameters, and is emerging as a promising data compression technique. However, INR intrinsically has limited spectrum coverage, and it is non-trivial to remove redundancy in diverse complex data effectively. Preliminary studies can only exploit either global or local correlation in the target data and thus achieve limited performance. In this paper, we propose Tree-structured Implicit Neural Compression (TINC) to build compact representations for local regions and extract the shared features of these local representations in a hierarchical manner. Specifically, we use MLPs to fit the partitioned local regions, and these MLPs are organized in a tree structure to share parameters according to spatial distance. The parameter sharing scheme not only ensures continuity between adjacent regions, but also jointly removes local and non-local redundancy. Extensive experiments show that TINC improves the compression fidelity of INR and shows impressive compression capabilities compared with commercial tools and other deep learning based methods. Besides, the approach is highly flexible and can be tailored to different data and parameter settings. All the reproducible code will be released on GitHub.

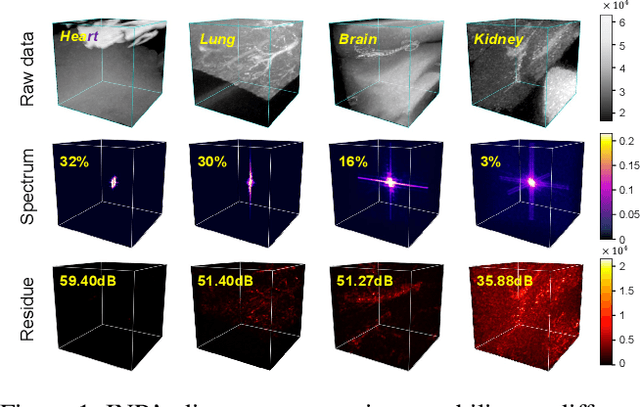

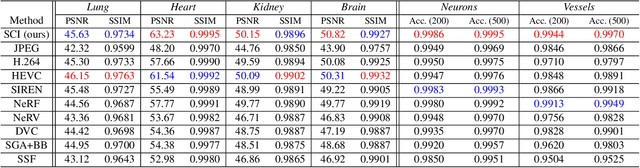

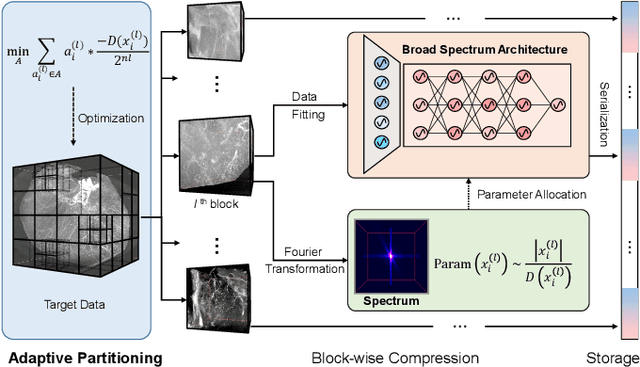

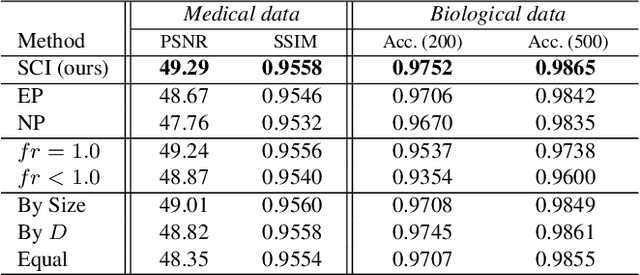

SCI: A spectrum concentrated implicit neural compression for biomedical data

Sep 30, 2022

Abstract: The massive collection and explosive growth of medical data demand effective compression for efficient storage, transmission, and sharing. Readily available visual data compression techniques have been studied extensively but are tailored for natural images/videos, and thus show limited performance on medical data, which have different characteristics. Emerging implicit neural representation (INR) is gaining momentum and shows high promise for fitting diverse visual data in a target-data-specific manner, but a general compression scheme covering diverse medical data is so far absent. To address this issue, we first derive a mathematical explanation for INR's spectrum concentration property and an analytical insight into the design of compression-oriented INR architectures. Further, we design a funnel-shaped neural network capable of covering the broad spectrum of complex medical data and achieving a high compression ratio. Based on this design, we conduct compression via optimization under a given budget and propose an adaptive compression approach, SCI, which adaptively partitions the target data into blocks matching the concentrated spectrum envelope of the adopted INR, and allocates parameters to achieve high representation accuracy under a given compression ratio. The experiments show SCI's superior performance over conventional techniques and wide applicability across diverse medical data.

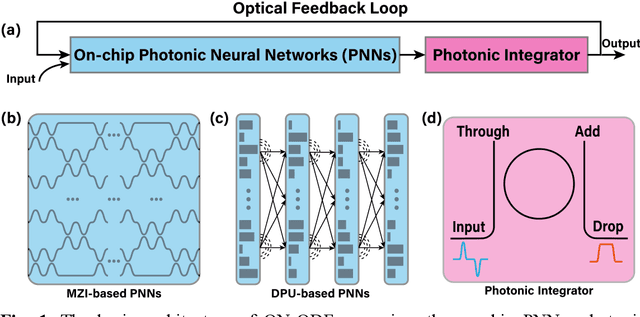

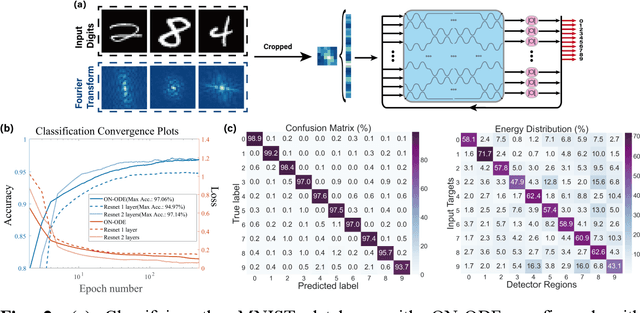

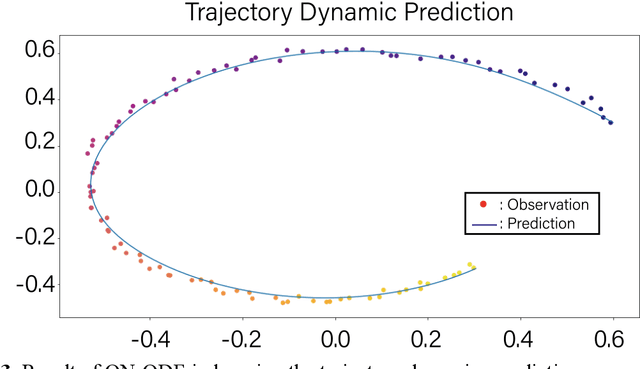

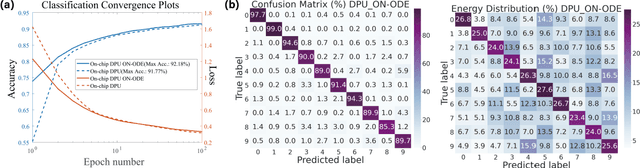

Optical Neural Ordinary Differential Equations

Sep 26, 2022

Abstract: Increasing the number of layers of on-chip photonic neural networks (PNNs) is essential to improve their model performance. However, successively cascading hidden layers results in larger integrated photonic chip areas. To address this issue, we propose the optical neural ordinary differential equations (ON-ODE) architecture that parameterizes the continuous dynamics of hidden layers with optical ODE solvers. The ON-ODE comprises the PNNs followed by a photonic integrator and an optical feedback loop, which can be configured to represent residual neural networks (ResNet) and recurrent neural networks with effectively reduced chip area occupancy. For the interference-based optoelectronic nonlinear hidden layer, numerical experiments demonstrate that the single-hidden-layer ON-ODE can achieve approximately the same accuracy as the two-layer optical ResNet in image classification tasks. Besides, the ON-ODE improves the model classification accuracy for the diffraction-based all-optical linear hidden layer. The time-dependent dynamics property of ON-ODE is further applied to trajectory prediction with high accuracy.
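
The link between an ODE solver with feedback and a ResNet can be made concrete with a small numerical sketch. It is a software illustration only and does not model the photonic hardware: it assumes a hypothetical tanh layer as the hidden-layer function f and relies on the standard observation that a residual update h + f(h) is one forward-Euler step of dh/dt = f(h) with unit step size. Reusing a single layer inside the integration loop therefore reproduces a weight-tied stack of residual blocks.

```python
import math

def layer(h, weights, bias):
    """Hidden-layer function f(h) = tanh(W h + b); a stand-in for one physical layer."""
    return [math.tanh(sum(w * x for w, x in zip(row, h)) + b)
            for row, b in zip(weights, bias)]

def resnet_forward(h, weights, bias, num_blocks):
    """Weight-tied ResNet: apply h <- h + f(h) num_blocks times."""
    for _ in range(num_blocks):
        f = layer(h, weights, bias)
        h = [x + fx for x, fx in zip(h, f)]
    return h

def euler_ode_forward(h, weights, bias, t_end, num_steps):
    """Forward-Euler integration of dh/dt = f(h) from t = 0 to t_end.
    The same single layer is reused at every step, like a feedback loop."""
    dt = t_end / num_steps
    for _ in range(num_steps):
        f = layer(h, weights, bias)
        h = [x + dt * fx for x, fx in zip(h, f)]
    return h

if __name__ == "__main__":
    weights = [[0.5, -0.2], [0.1, 0.3]]  # arbitrary illustrative parameters
    bias = [0.0, 0.1]
    h0 = [1.0, -1.0]
    print("2-block ResNet:      ", resnet_forward(h0, weights, bias, 2))
    print("Euler, 2 unit steps: ", euler_ode_forward(h0, weights, bias, 2.0, 2))
    print("Euler, 1000 steps:   ", euler_ode_forward(h0, weights, bias, 2.0, 1000))
```

With unit steps the two forward passes coincide, while smaller steps approach the continuous dynamics that a single reused layer can represent.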
