Munchurl Kim

Novel View Synthesis with View-Dependent Effects from a Single Image

Dec 13, 2023

Abstract:In this paper, we are the first to consider view-dependent effects in single image-based novel view synthesis (NVS). To this end, we propose to exploit the camera motion priors in NVS to model view-dependent appearance or effects (VDE) as the negative disparity in the scene. Recognizing that specularities "follow" the camera motion, we infuse VDEs into the input images by aggregating input pixel colors along the negative depth region of the epipolar lines. We also propose a 'relaxed volumetric rendering' approximation that allows computing the densities in a single pass, improving efficiency for NVS from single images. Our method can learn single-image NVS from image sequences only, making it, for the first time, a completely self-supervised learning method that requires neither depth nor camera pose annotations. We present extensive experimental results and show that our proposed method can learn NVS with VDEs, outperforming the SOTA single-view NVS methods on the RealEstate10k and MannequinChallenge datasets.

COMPASS: High-Efficiency Deep Image Compression with Arbitrary-scale Spatial Scalability

Sep 11, 2023

Abstract:Recently, neural network (NN)-based image compression has been actively studied and has shown impressive performance in comparison to traditional methods. However, most of the works have focused on non-scalable image compression (single-layer coding), while spatially scalable image compression has drawn less attention although it has many applications. In this paper, we propose a novel NN-based spatially scalable image compression method, called COMPASS, which supports arbitrary-scale spatial scalability. COMPASS has a very flexible structure where the number of layers and their respective scale factors can be arbitrarily determined during inference. To reduce the spatial redundancy between adjacent layers for arbitrary scale factors, COMPASS adopts an inter-layer arbitrary scale prediction method, called LIFF, based on implicit neural representation. We propose a combined RD loss function to effectively train multiple layers. Experimental results show that COMPASS achieves BD-rate gains of up to -58.33% and -47.17% compared to SHVC and the state-of-the-art NN-based spatially scalable image compression method, respectively, for various combinations of scale factors. COMPASS also shows comparable or even better coding efficiency than single-layer coding for various scale factors.

Modernizing Old Photos Using Multiple References via Photorealistic Style Transfer

Apr 10, 2023

Abstract:This paper is the first to present old photo modernization using multiple references by performing stylization and enhancement in a unified manner. In order to modernize old photos, we propose a novel multi-reference-based old photo modernization (MROPM) framework consisting of a network, MROPM-Net, and a novel synthetic data generation scheme. MROPM-Net stylizes old photos using multiple references via photorealistic style transfer (PST) and further enhances the results to produce modern-looking images. Meanwhile, the synthetic data generation scheme trains the network to effectively utilize multiple references to perform modernization. To evaluate the performance, we propose a new old photos benchmark dataset (CHD) consisting of diverse natural indoor and outdoor scenes. Extensive experiments show that the proposed method outperforms other baselines in performing modernization on real old photos, even though no old photos were used during training. Moreover, our method can appropriately select styles from multiple references for each semantic region in the old photo to further improve the modernization performance.

Selective compression learning of latent representations for variable-rate image compression

Nov 08, 2022

Abstract:Recently, many neural network-based image compression methods have shown promising results superior to the existing tool-based conventional codecs. However, most of them are trained as separate models for different target bit rates, thus increasing the model complexity. Therefore, several studies have been conducted on learned compression that supports variable rates with single models, but they require additional network modules, layers, or inputs that often lead to complexity overhead, or do not provide sufficient coding efficiency. In this paper, we are the first to propose a selective compression method that partially encodes the latent representations in a fully generalized manner for deep learning-based variable-rate image compression. The proposed method adaptively determines essential representation elements for compression of different target quality levels. For this, we first generate a 3D importance map from the nature of the input content to represent the underlying importance of the representation elements. The 3D importance map is then adjusted for different target quality levels using importance adjustment curves. The adjusted 3D importance map is finally converted into a 3D binary mask to determine the essential representation elements for compression. The proposed method can be easily integrated with the existing compression models with a negligible amount of overhead increase. Our method can also enable continuously variable-rate compression via simple interpolation of the importance adjustment curves among different quality levels. The extensive experimental results show that the proposed method can achieve compression efficiency comparable to that of the separately trained reference compression models and can reduce decoding time owing to the selective compression. The sample codes are publicly available at https://github.com/JooyoungLeeETRI/SCR.
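
The importance-map pipeline described in this abstract (adjust, binarize, select, interpolate) can be sketched roughly as follows. This is only an illustrative sketch, not the authors' implementation: the power-law form of the adjustment curve, the 0.5 threshold, and every function name here are assumptions made for the example.

```python
import numpy as np

def adjust_importance(importance, gamma):
    # ASSUMPTION: the adjustment curve is a power law on values in [0, 1].
    # A larger gamma pushes values toward 0, so fewer elements survive.
    return np.clip(importance, 0.0, 1.0) ** gamma

def binary_mask(importance, gamma, threshold=0.5):
    # ASSUMPTION: binarization is a fixed threshold on the adjusted map.
    return adjust_importance(importance, gamma) >= threshold

def interpolate_gamma(gamma_a, gamma_b, t):
    # Linear interpolation between the curve parameters of two quality
    # levels, giving an intermediate (continuous) rate point.
    return (1.0 - t) * gamma_a + t * gamma_b

def select_elements(latent, mask):
    # Only the elements marked essential would be entropy-coded.
    return latent[mask]

def restore_elements(selected, mask):
    # Decoder side: put decoded elements back, zeros everywhere else.
    restored = np.zeros(mask.shape, dtype=selected.dtype)
    restored[mask] = selected
    return restored

rng = np.random.default_rng(0)
importance = rng.random((4, 8, 8))             # toy 3D importance map (C, H, W)
latent = rng.integers(-5, 6, size=(4, 8, 8))   # toy quantized latent

mask_low = binary_mask(importance, gamma=3.0)   # low quality: few elements
mask_high = binary_mask(importance, gamma=0.5)  # high quality: many elements
mask_mid = binary_mask(importance, interpolate_gamma(3.0, 0.5, 0.5))

restored = restore_elements(select_elements(latent, mask_high), mask_high)
print(mask_low.sum(), mask_mid.sum(), mask_high.sum())
```

Because the assumed curve is monotonic, the mask for a lower quality level is always a subset of the mask for a higher one, which is what makes interpolating between levels well behaved in this toy setting.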

Realistic Bokeh Effect Rendering on Mobile GPUs, Mobile AI & AIM 2022 challenge: Report

Nov 07, 2022

Abstract:As mobile cameras with compact optics are unable to produce a strong bokeh effect, lots of interest is now devoted to deep learning-based solutions for this task. In this Mobile AI challenge, the target was to develop an efficient end-to-end AI-based bokeh effect rendering approach that can run on modern smartphone GPUs using TensorFlow Lite. The participants were provided with a large-scale EBB! bokeh dataset consisting of 5K shallow / wide depth-of-field image pairs captured using the Canon 7D DSLR camera. The runtime of the resulting models was evaluated on the Kirin 9000's Mali GPU that provides excellent acceleration results for the majority of common deep learning ops. A detailed description of all models developed in this challenge is provided in this paper.

Layered Depth Refinement with Mask Guidance

Jun 07, 2022

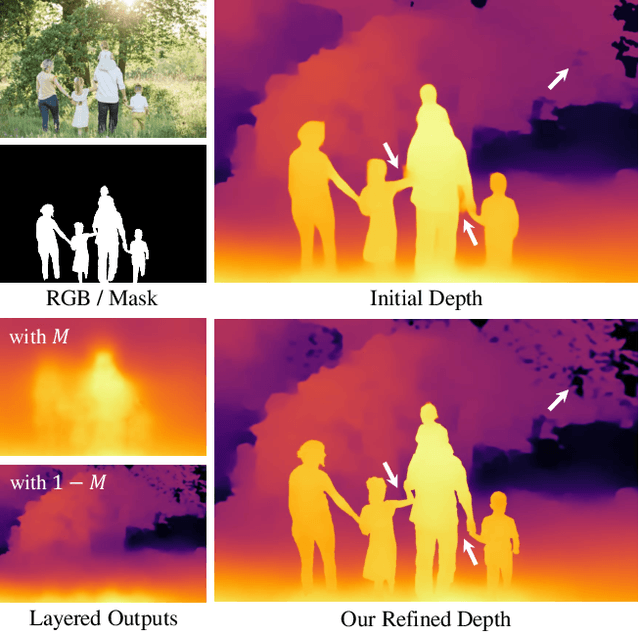

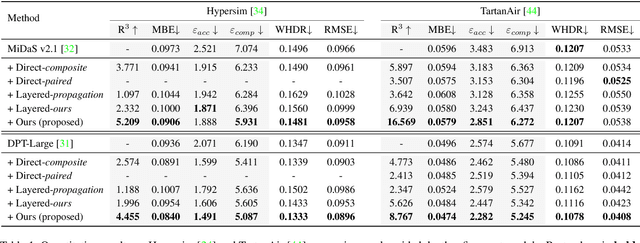

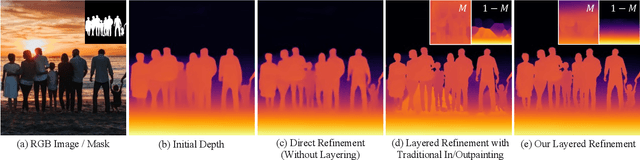

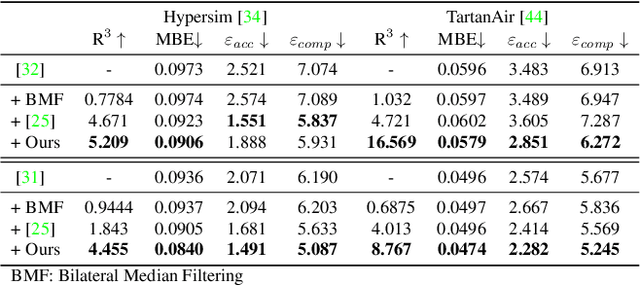

Abstract:Depth maps are used in a wide range of applications from 3D rendering to 2D image effects such as Bokeh. However, those predicted by single image depth estimation (SIDE) models often fail to capture isolated holes in objects and/or have inaccurate boundary regions. Meanwhile, high-quality masks are much easier to obtain, using commercial auto-masking tools or off-the-shelf methods of segmentation and matting or even by manual editing. Hence, in this paper, we formulate a novel problem of mask-guided depth refinement that utilizes a generic mask to refine the depth prediction of SIDE models. Our framework performs layered refinement and inpainting/outpainting, decomposing the depth map into two separate layers signified by the mask and the inverse mask. As datasets with both depth and mask annotations are scarce, we propose a self-supervised learning scheme that uses arbitrary masks and RGB-D datasets. We empirically show that our method is robust to different types of masks and initial depth predictions, accurately refining depth values in inner and outer mask boundary regions. We further analyze our model with an ablation study and demonstrate results on real applications. More information can be found at https://sooyekim.github.io/MaskDepth/ .
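
The layered decomposition mentioned in this abstract can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not the paper's network: `fill_layer` is a hypothetical stand-in that fills the hidden region of each layer with the mean of its visible pixels, where the actual method would use learned inpainting/outpainting.

```python
import numpy as np

def fill_layer(depth, valid):
    # HYPOTHETICAL stand-in for learned inpainting/outpainting: pixels
    # outside `valid` are replaced by the mean depth of the valid pixels.
    fill_value = depth[valid].mean()
    return np.where(valid, depth, fill_value)

def composite(inside_layer, outside_layer, mask):
    # Recombine the two layers: the mask picks the inside layer, the
    # inverse mask picks the outside layer.
    return np.where(mask, inside_layer, outside_layer)

# Toy depth map: a far object (depth 5) in front of a near background (depth 1).
depth = np.array([[1.0, 1.0, 5.0],
                  [1.0, 5.0, 5.0]])
mask = depth > 3.0                         # generic binary mask of the object

inside_layer = fill_layer(depth, mask)     # object layer, outpainted
outside_layer = fill_layer(depth, ~mask)   # background layer, inpainted
merged = composite(inside_layer, outside_layer, mask)
```

Each layer is free to take any value in the region the other layer owns, since the composite only reads a layer where its own mask is active. That is why the two layers can be processed separately before being merged.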

Positional Information is All You Need: A Novel Pipeline for Self-Supervised SVDE from Videos

May 18, 2022

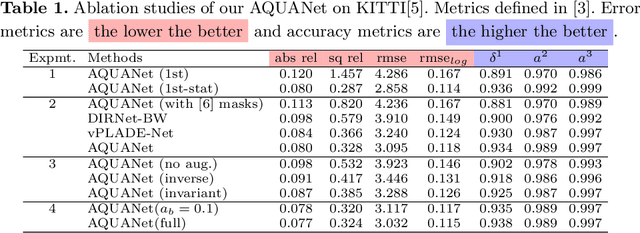

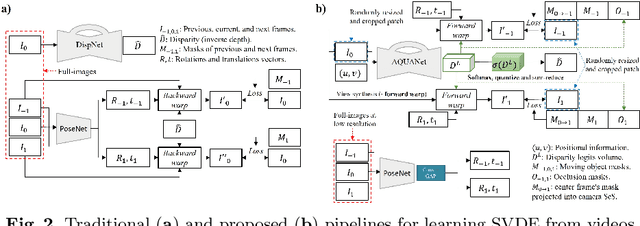

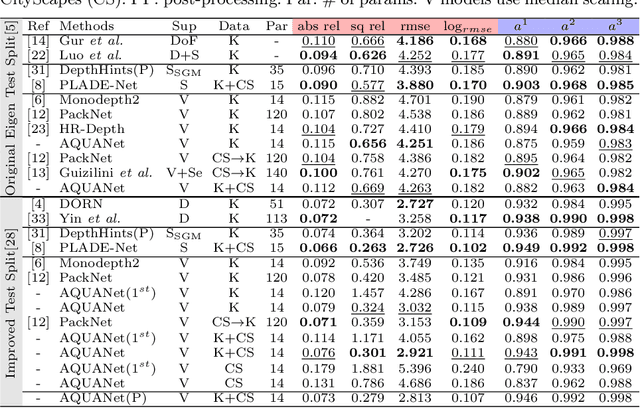

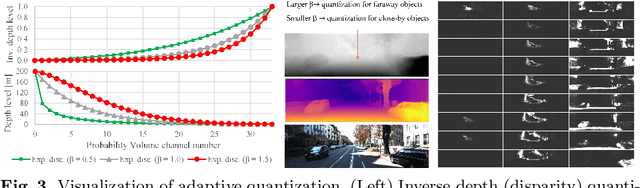

Abstract:Recently, much attention has been drawn to learning the underlying 3D structures of a scene from monocular videos in a fully self-supervised fashion. One of the most challenging aspects of this task is handling the independently moving objects as they break the rigid-scene assumption. For the first time, we show that pixel positional information can be exploited to learn SVDE (Single View Depth Estimation) from videos. Our proposed moving object (MO) masks, which are induced by shifted positional information (SPI) and referred to as 'SPIMO' masks, are very robust and consistently remove the independently moving objects in the scenes, allowing for better learning of SVDE from videos. Additionally, we introduce a new adaptive quantization scheme that assigns the best per-pixel quantization curve for our depth discretization. Finally, we employ existing boosting techniques in a new way to further self-supervise the depth of the moving objects. With these features, our pipeline is robust against moving objects and generalizes well to high-resolution images, even when trained with small patches, yielding state-of-the-art (SOTA) results with almost 8.5x fewer parameters than the previous works that learn from videos. We present extensive experiments on KITTI and CityScapes that show the effectiveness of our method.

DeMFI: Deep Joint Deblurring and Multi-Frame Interpolation with Flow-Guided Attentive Correlation and Recursive Boosting

Nov 19, 2021

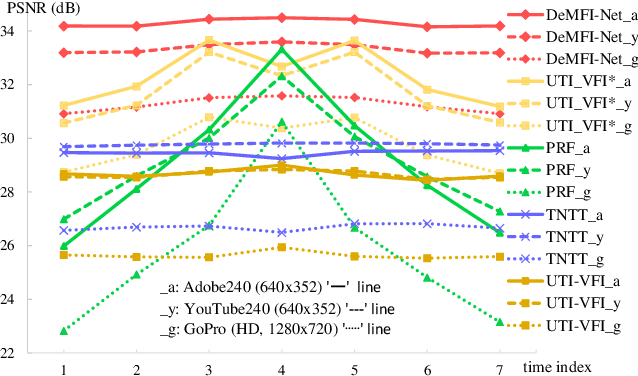

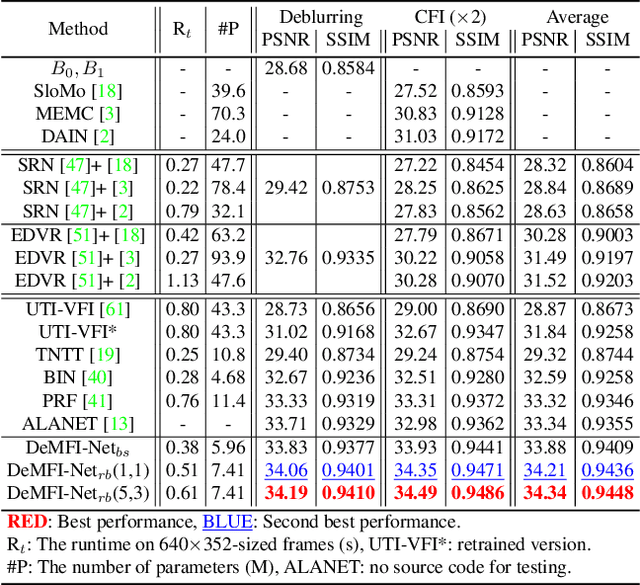

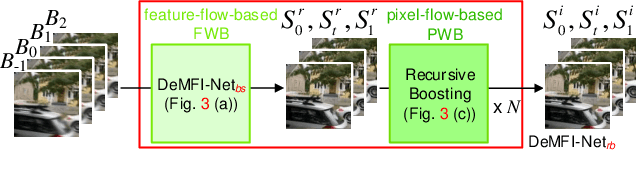

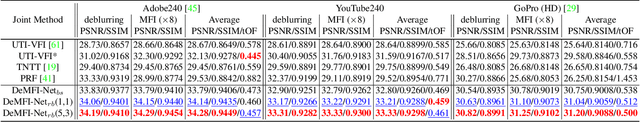

Abstract:In this paper, we propose a novel joint deblurring and multi-frame interpolation (DeMFI) framework, called DeMFI-Net, which accurately converts blurry videos of lower frame rate to sharp videos at higher frame rate in terms of multi-frame interpolation (MFI), based on a flow-guided attentive-correlation-based feature bolstering (FAC-FB) module and recursive boosting (RB). The DeMFI-Net jointly performs deblurring and MFI, where its baseline version performs feature-flow-based warping with the FAC-FB module to obtain a sharp interpolated frame as well as to deblur the two center input frames. Moreover, its extended version further improves the joint task performance based on pixel-flow-based warping with GRU-based RB. Our FAC-FB module effectively gathers the distributed blurry pixel information over blurry input frames in the feature domain to improve the overall joint performance, and it is computationally efficient since its attentive correlation is only focused pointwise. As a result, our DeMFI-Net achieves state-of-the-art (SOTA) performance on diverse datasets with significant margins compared to the recent SOTA methods, for both deblurring and MFI. All source codes including pretrained DeMFI-Net are publicly available at https://github.com/JihyongOh/DeMFI.

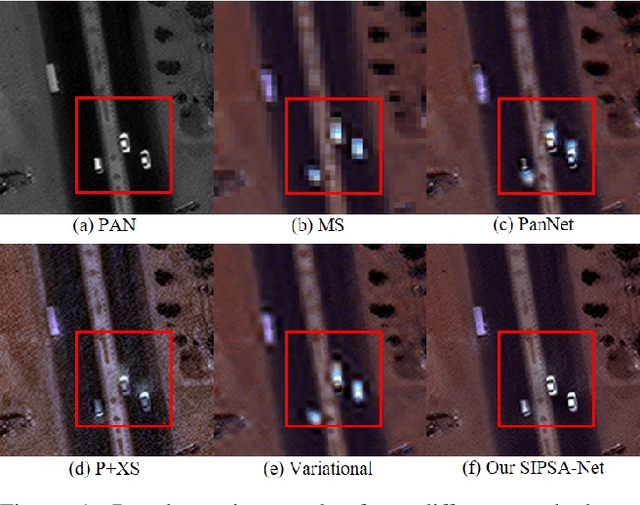

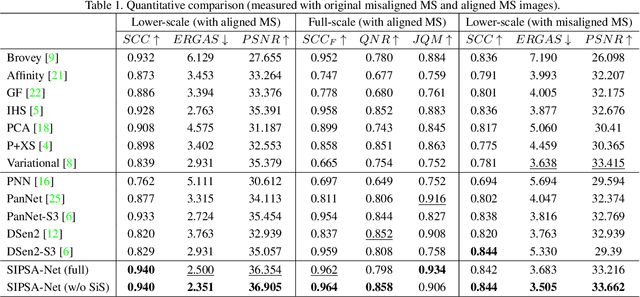

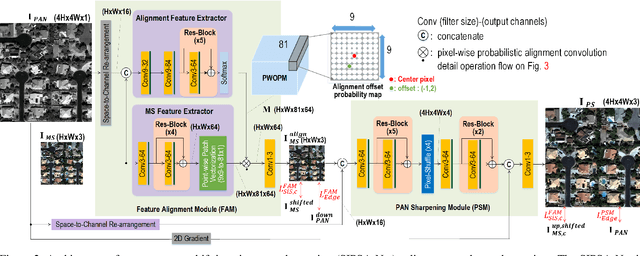

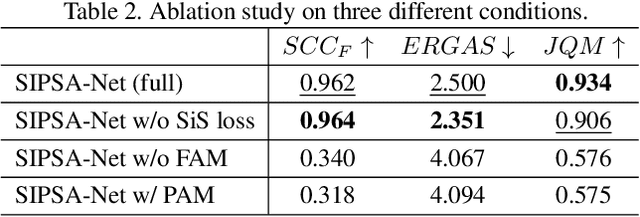

SIPSA-Net: Shift-Invariant Pan Sharpening with Moving Object Alignment for Satellite Imagery

May 06, 2021

Abstract:Pan-sharpening is a process of merging a high-resolution (HR) panchromatic (PAN) image and its corresponding low-resolution (LR) multi-spectral (MS) image to create an HR-MS and pan-sharpened image. However, due to the different sensors' locations, characteristics and acquisition time, PAN and MS image pairs often tend to have various amounts of misalignment. Conventional deep-learning-based methods that were trained with such misaligned PAN-MS image pairs suffer from diverse artifacts such as double-edge and blur artifacts in the resultant PAN-sharpened images. In this paper, we propose a novel framework called shift-invariant pan-sharpening with moving object alignment (SIPSA-Net), which is the first method to take into account such large misalignment of moving object regions for PAN sharpening. The SIPSA-Net has a feature alignment module (FAM) that can adjust one feature to be aligned to another feature, even between the two different PAN and MS domains. For better alignment in pan-sharpened images, a shift-invariant spectral loss is newly designed, which ignores the inherent misalignment in the original MS input, thereby having the same effect as optimizing the spectral loss with a well-aligned MS image. Extensive experimental results show that our SIPSA-Net can generate pan-sharpened images with remarkable improvements in terms of visual quality and alignment, compared to the state-of-the-art methods.

Exploiting Global and Local Attentions for Heavy Rain Removal on Single Images

Apr 16, 2021

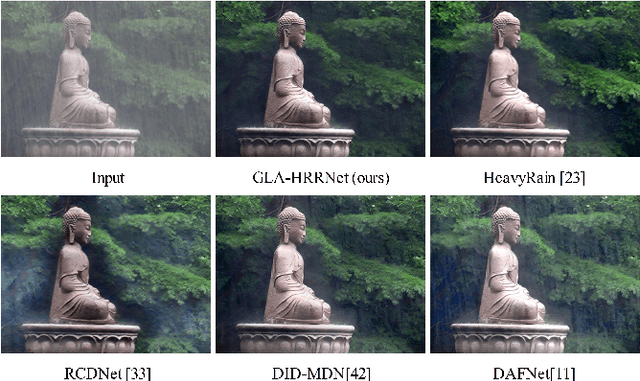

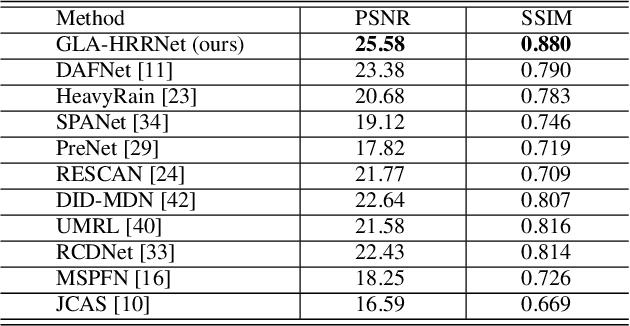

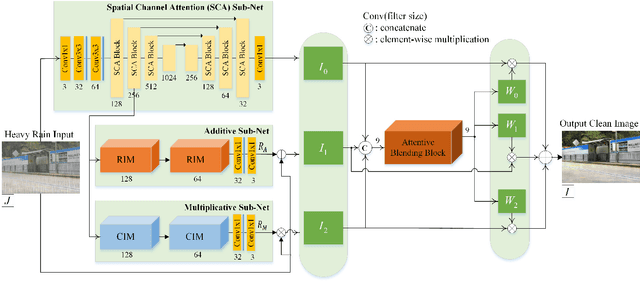

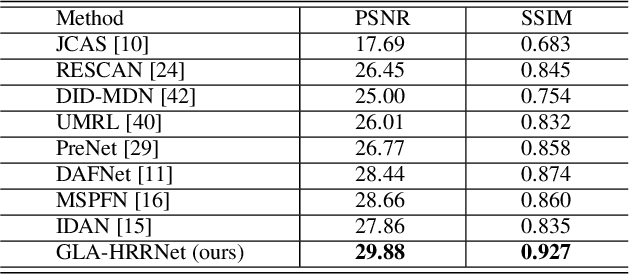

Abstract:Heavy rain removal from a single image is the task of simultaneously eliminating rain streaks and fog, which can dramatically degrade the quality of captured images. Most existing rain removal methods do not generalize well for the heavy rain case. In this work, we propose a novel network architecture consisting of three sub-networks to remove heavy rain from a single image without estimating rain streaks and fog separately. The first sub-net, a U-net-based architecture that incorporates our Spatial Channel Attention (SCA) blocks, extracts global features that provide sufficient contextual information needed to remove atmospheric distortions caused by rain and fog. The second sub-net learns the additive residues information, which is useful in removing rain streak artifacts via our proposed Residual Inception Modules (RIM). The third sub-net, the multiplicative sub-net, adopts our Channel-attentive Inception Modules (CIM) and learns the essential brighter local features, which are not effectively extracted in the SCA and additive sub-nets, by modulating the local pixel intensities in the derained images. Our three clean image results are then combined via an attentive blending block to generate the final clean image. Our method with SCA, RIM, and CIM significantly outperforms the previous state-of-the-art single-image deraining methods on the synthetic datasets and shows considerably cleaner and sharper derained estimates on the real image datasets. We present extensive experiments and ablation studies supporting each of our method's contributions on both synthetic and real image datasets.
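
The final blending step described in this abstract, which combines three clean-image estimates, could look roughly like the sketch below. The abstract does not specify how the attentive blending block works, so the per-pixel softmax weighting, the tensor shapes, and the function names are all assumptions made for illustration. In a real network the scores would be predicted by learned layers.

```python
import numpy as np

def softmax(scores, axis=0):
    # Numerically stable softmax along the given axis.
    shifted = scores - scores.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def attentive_blend(estimates, scores):
    # ASSUMPTION: blending is a per-pixel convex combination of estimates.
    # estimates: (K, H, W, C) candidate clean images
    # scores:    (K, H, W, 1) unnormalized per-pixel attention scores
    weights = softmax(scores, axis=0)
    return (weights * estimates).sum(axis=0)

# Three toy estimates: constant images with values 0.0, 0.5 and 1.0.
levels = np.array([0.0, 0.5, 1.0]).reshape(3, 1, 1, 1)
estimates = np.ones((3, 2, 2, 3)) * levels

uniform_scores = np.zeros((3, 2, 2, 1))
blended_uniform = attentive_blend(estimates, uniform_scores)   # plain average

peaked_scores = np.zeros((3, 2, 2, 1))
peaked_scores[2] = 50.0                                        # favor estimate 3
blended_peaked = attentive_blend(estimates, peaked_scores)
```

With uniform scores the blend reduces to a plain average, and with one dominant score it approaches that single estimate, so the output always stays within the per-pixel range of the inputs.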
