Hai Zhu

Learning Interaction-Aware Trajectory Predictions for Decentralized Multi-Robot Motion Planning in Dynamic Environments

Feb 23, 2021

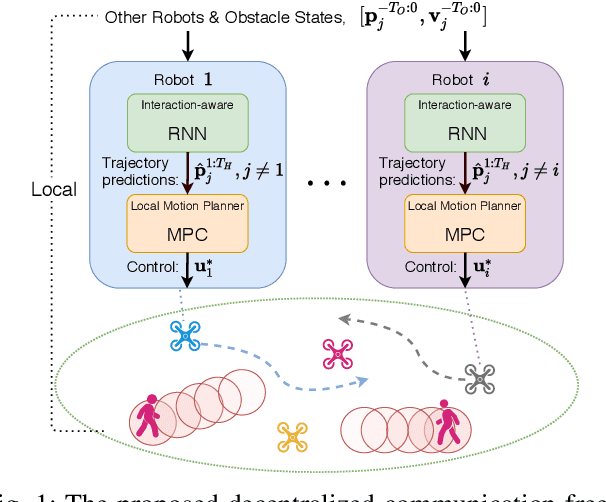

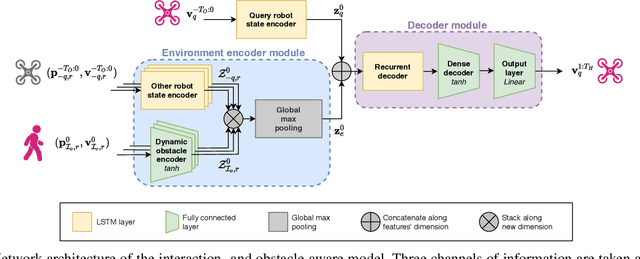

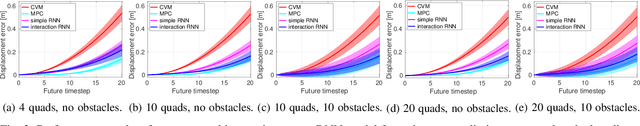

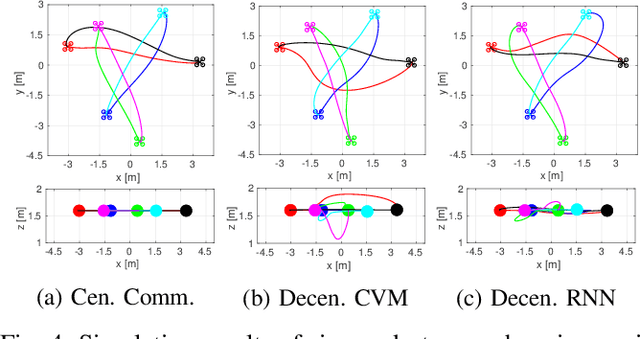

Abstract:This paper presents a data-driven decentralized trajectory optimization approach for multi-robot motion planning in dynamic environments. When navigating in a shared space, each robot needs accurate motion predictions of neighboring robots to achieve predictive collision avoidance. These motion predictions can be obtained among robots by sharing their future planned trajectories with each other via communication. However, such communication may not be available nor reliable in practice. In this paper, we introduce a novel trajectory prediction model based on recurrent neural networks (RNN) that can learn multi-robot motion behaviors from demonstrated trajectories generated using a centralized sequential planner. The learned model can run efficiently online for each robot and provide interaction-aware trajectory predictions of its neighbors based on observations of their history states. We then incorporate the trajectory prediction model into a decentralized model predictive control (MPC) framework for multi-robot collision avoidance. Simulation results show that our decentralized approach can achieve a comparable level of performance to a centralized planner while being communication-free and scalable to a large number of robots. We also validate our approach with a team of quadrotors in real-world experiments.

Edge Computing Assisted Autonomous Flight for UAV: Synergies between Vision and Communications

Dec 10, 2020

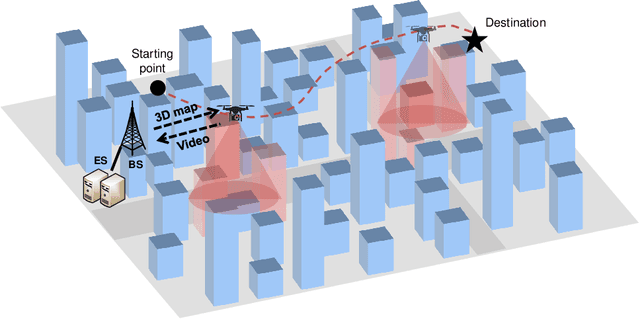

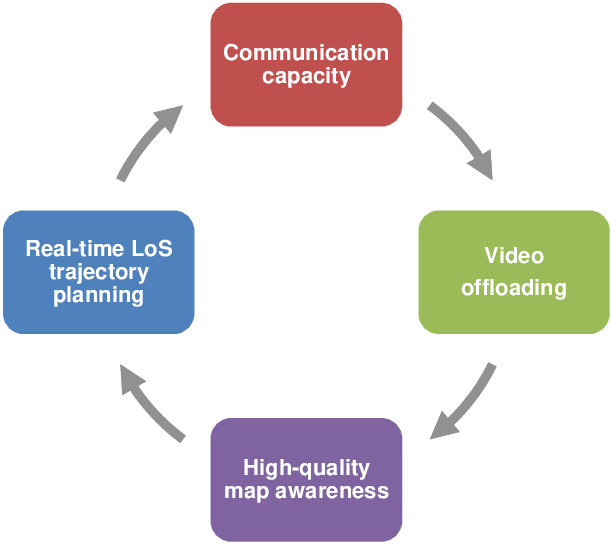

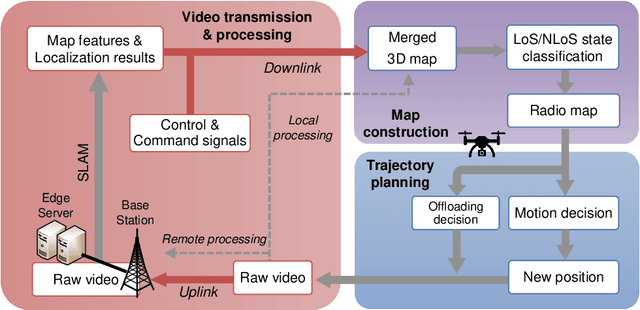

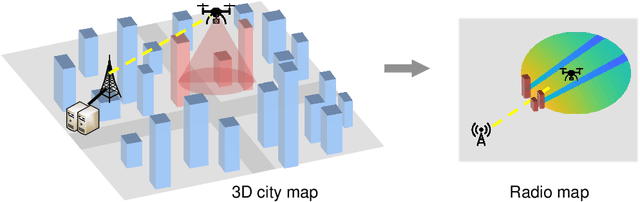

Abstract:Autonomous flight for UAVs relies on visual information for avoiding obstacles and ensuring a safe collision-free flight. In addition to visual clues, safe UAVs often need connectivity with the ground station. In this paper, we study the synergies between vision and communications for edge computing-enabled UAV flight. By proposing a framework of Edge Computing Assisted Autonomous Flight (ECAAF), we illustrate that vision and communications can interact with and assist each other with the aid of edge computing and offloading, and further speed up the UAV mission completion. ECAAF consists of three functionalities that are discussed in detail: edge computing for 3D map acquisition, radio map construction from the 3D map, and online trajectory planning. During ECAAF, the interactions of communication capacity, video offloading, 3D map quality, and channel state of the trajectory form a positive feedback loop. Simulation results verify that the proposed method can improve mission performance by enhancing connectivity. Finally, we conclude with some future research directions.

Social-VRNN: One-Shot Multi-modal Trajectory Prediction for Interacting Pedestrians

Oct 18, 2020

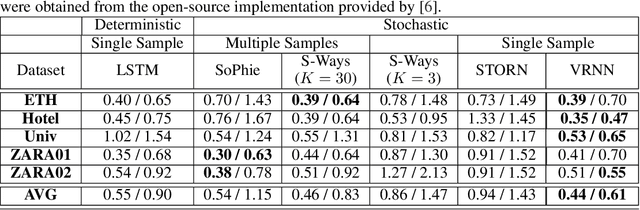

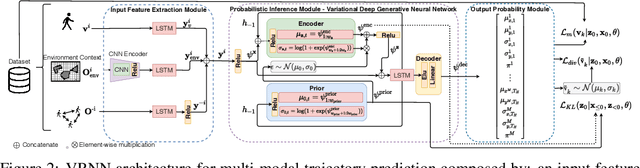

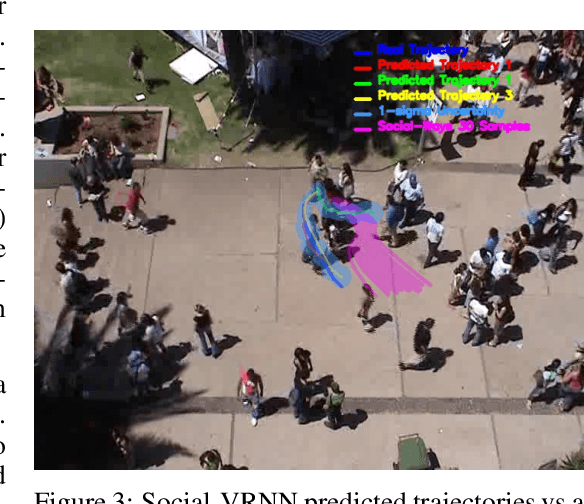

Abstract:Prediction of human motions is key for safe navigation of autonomous robots among humans. In cluttered environments, several motion hypotheses may exist for a pedestrian, due to its interactions with the environment and other pedestrians. Previous works for estimating multiple motion hypotheses require a large number of samples which limits their applicability in real-time motion planning. In this paper, we present a variational learning approach for interaction-aware and multi-modal trajectory prediction based on deep generative neural networks. Our approach can achieve faster convergence and requires significantly fewer samples comparing to state-of-the-art methods. Experimental results on real and simulation data show that our model can effectively learn to infer different trajectories. We compare our method with three baseline approaches and present performance results demonstrating that our generative model can achieve higher accuracy for trajectory prediction by producing diverse trajectories.

* Accepted, 12 pages, 4 figures

With Whom to Communicate: Learning Efficient Communication for Multi-Robot Collision Avoidance

Sep 25, 2020

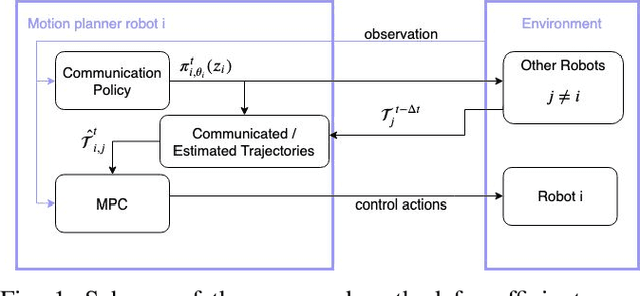

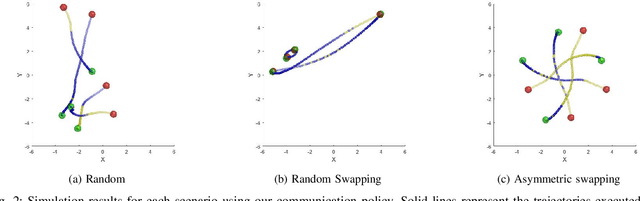

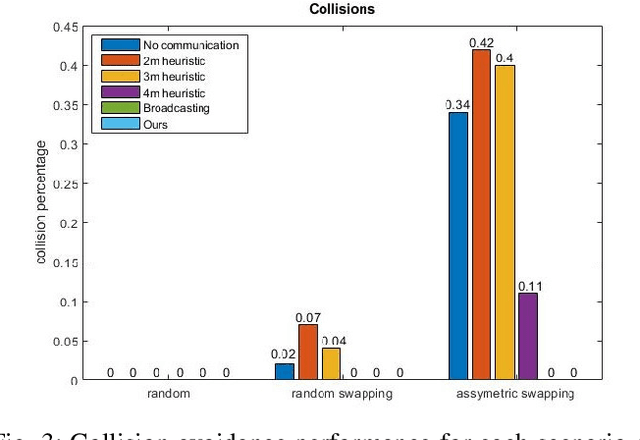

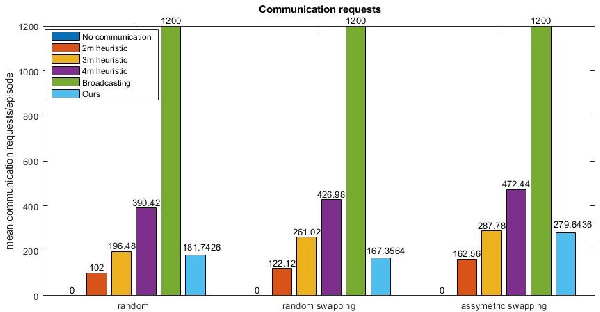

Abstract:Decentralized multi-robot systems typically perform coordinated motion planning by constantly broadcasting their intentions as a means to cope with the lack of a central system coordinating the efforts of all robots. Especially in complex dynamic environments, the coordination boost allowed by communication is critical to avoid collisions between cooperating robots. However, the risk of collision between a pair of robots fluctuates through their motion and communication is not always needed. Additionally, constant communication makes much of the still valuable information shared in previous time steps redundant. This paper presents an efficient communication method that solves the problem of "when" and with "whom" to communicate in multi-robot collision avoidance scenarios. In this approach, every robot learns to reason about other robots' states and considers the risk of future collisions before asking for the trajectory plans of other robots. We evaluate and verify the proposed communication strategy in simulation with four quadrotors and compare it with three baseline strategies: non-communicating, broadcasting and a distance-based method broadcasting information with quadrotors within a predefined distance.

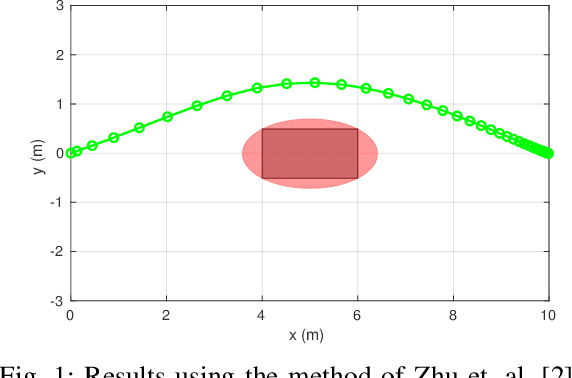

Comment on "A Real-Time Approach for Chance-Constrained Motion Planning with Dynamic Obstacles"

Jun 04, 2020

Abstract:This comment presents the results of using chance-constrained model predictive control (MPC) to solve a one-horizon benchmark collision avoidance problem.

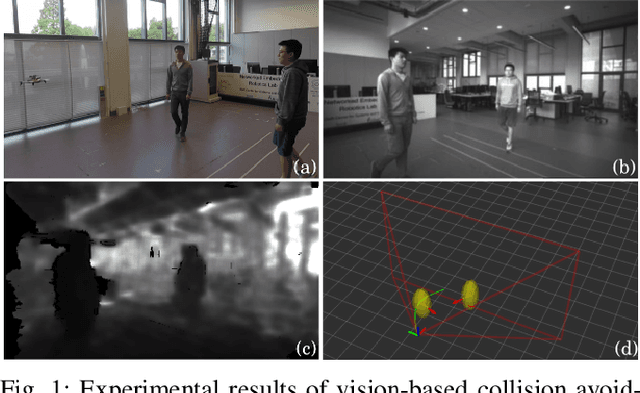

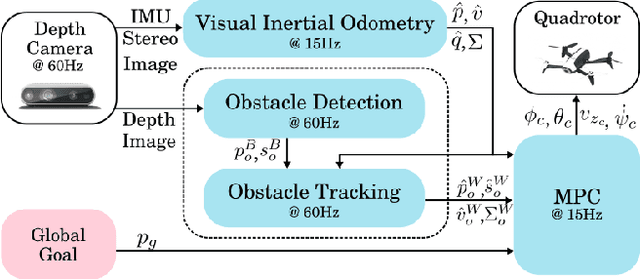

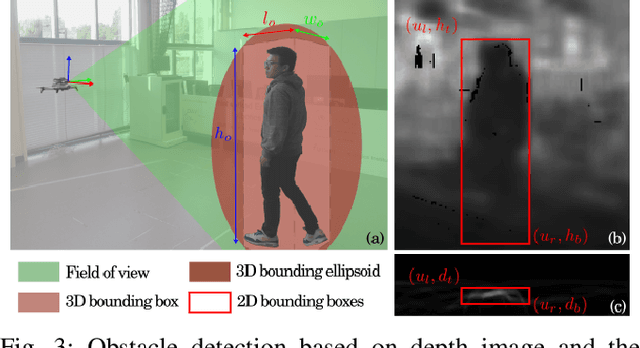

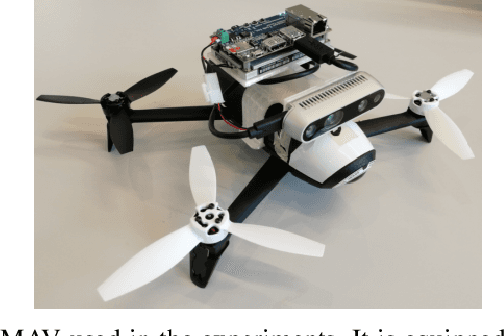

Robust Vision-based Obstacle Avoidance for Micro Aerial Vehicles in Dynamic Environments

Feb 13, 2020

Abstract:In this paper, we present an on-board vision-based approach for avoidance of moving obstacles in dynamic environments. Our approach relies on an efficient obstacle detection and tracking algorithm based on depth image pairs, which provides the estimated position, velocity and size of the obstacles. Robust collision avoidance is achieved by formulating a chance-constrained model predictive controller (CC-MPC) to ensure that the collision probability between the micro aerial vehicle (MAV) and each moving obstacle is below a specified threshold. The method takes into account MAV dynamics, state estimation and obstacle sensing uncertainties. The proposed approach is implemented on a quadrotor equipped with a stereo camera and is tested in a variety of environments, showing effective on-line collision avoidance of moving obstacles.

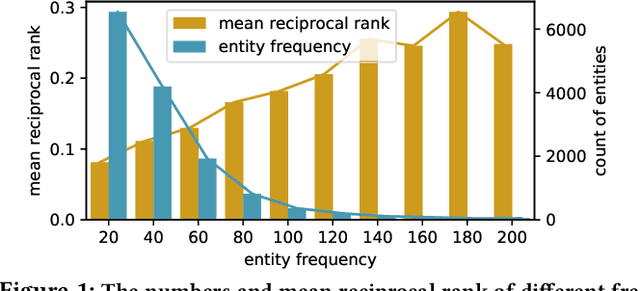

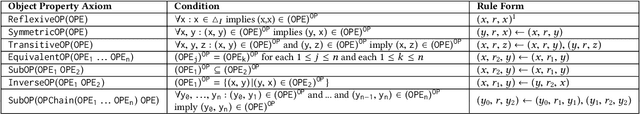

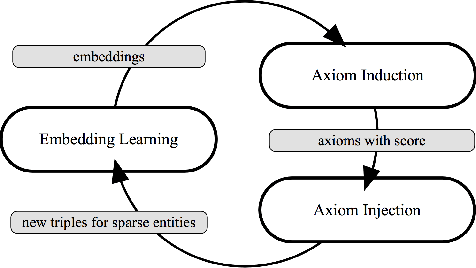

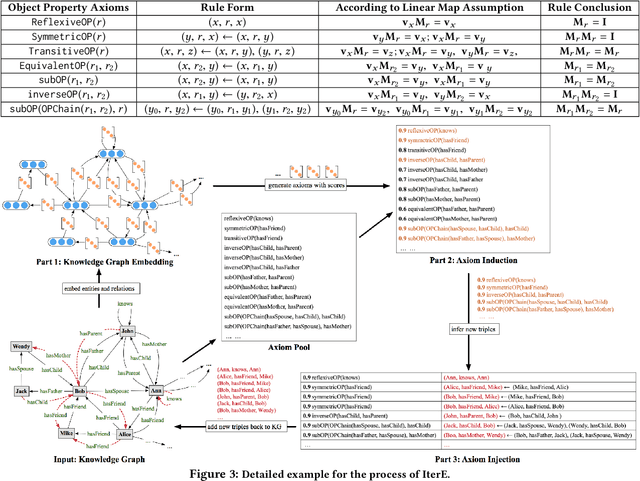

Iteratively Learning Embeddings and Rules for Knowledge Graph Reasoning

Mar 21, 2019

Abstract:Reasoning is essential for the development of large knowledge graphs, especially for completion, which aims to infer new triples based on existing ones. Both rules and embeddings can be used for knowledge graph reasoning and they have their own advantages and difficulties. Rule-based reasoning is accurate and explainable but rule learning with searching over the graph always suffers from efficiency due to huge search space. Embedding-based reasoning is more scalable and efficient as the reasoning is conducted via computation between embeddings, but it has difficulty learning good representations for sparse entities because a good embedding relies heavily on data richness. Based on this observation, in this paper we explore how embedding and rule learning can be combined together and complement each other's difficulties with their advantages. We propose a novel framework IterE iteratively learning embeddings and rules, in which rules are learned from embeddings with proper pruning strategy and embeddings are learned from existing triples and new triples inferred by rules. Evaluations on embedding qualities of IterE show that rules help improve the quality of sparse entity embeddings and their link prediction results. We also evaluate the efficiency of rule learning and quality of rules from IterE compared with AMIE+, showing that IterE is capable of generating high quality rules more efficiently. Experiments show that iteratively learning embeddings and rules benefit each other during learning and prediction.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge