Fangqiang Ding

Hidden Gems: 4D Radar Scene Flow Learning Using Cross-Modal Supervision

Mar 17, 2023

Abstract:This work proposes a novel approach to 4D radar-based scene flow estimation via cross-modal learning. Our approach is motivated by the co-located sensing redundancy in modern autonomous vehicles. Such redundancy implicitly provides various forms of supervision cues to the radar scene flow estimation. Specifically, we introduce a multi-task model architecture for the identified cross-modal learning problem and propose loss functions to opportunistically engage scene flow estimation using multiple cross-modal constraints for effective model training. Extensive experiments show the state-of-the-art performance of our method and demonstrate the effectiveness of cross-modal supervised learning to infer more accurate 4D radar scene flow. We also show its usefulness to two subtasks - motion segmentation and ego-motion estimation. Our source code will be available on https://github.com/Toytiny/CMFlow.

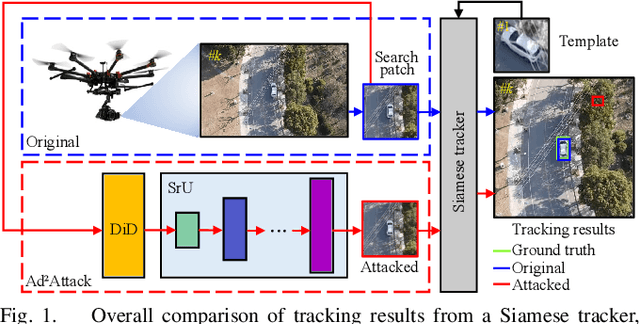

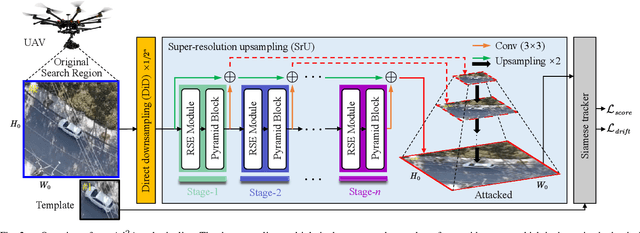

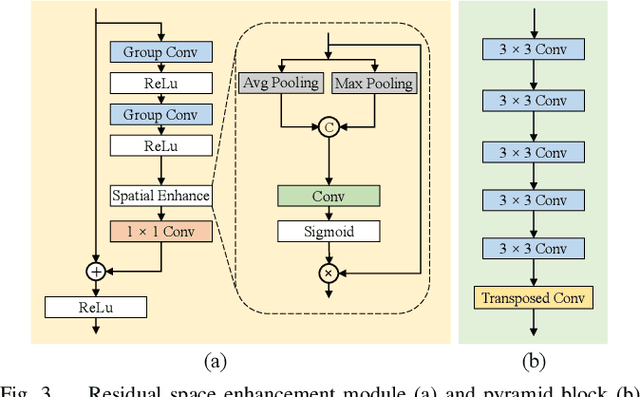

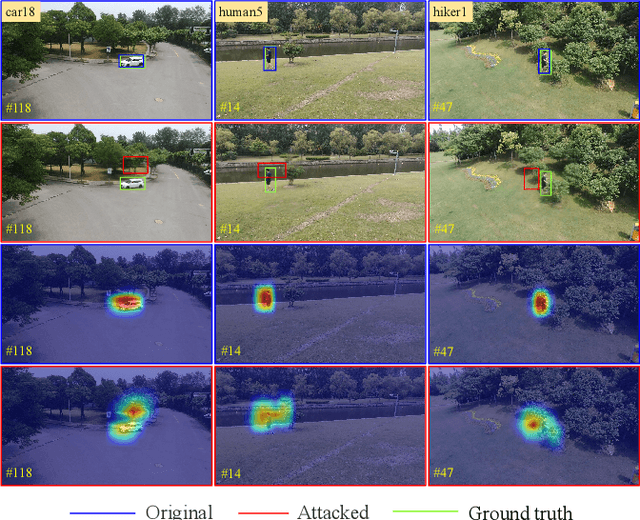

Ad2Attack: Adaptive Adversarial Attack on Real-Time UAV Tracking

Mar 03, 2022

Abstract:Visual tracking is adopted to extensive unmanned aerial vehicle (UAV)-related applications, which leads to a highly demanding requirement on the robustness of UAV trackers. However, adding imperceptible perturbations can easily fool the tracker and cause tracking failures. This risk is often overlooked and rarely researched at present. Therefore, to help increase awareness of the potential risk and the robustness of UAV tracking, this work proposes a novel adaptive adversarial attack approach, i.e., Ad$^2$Attack, against UAV object tracking. Specifically, adversarial examples are generated online during the resampling of the search patch image, which leads trackers to lose the target in the following frames. Ad$^2$Attack is composed of a direct downsampling module and a super-resolution upsampling module with adaptive stages. A novel optimization function is proposed for balancing the imperceptibility and efficiency of the attack. Comprehensive experiments on several well-known benchmarks and real-world conditions show the effectiveness of our attack method, which dramatically reduces the performance of the most advanced Siamese trackers.

Self-Supervised Scene Flow Estimation with 4D Automotive Radar

Mar 02, 2022

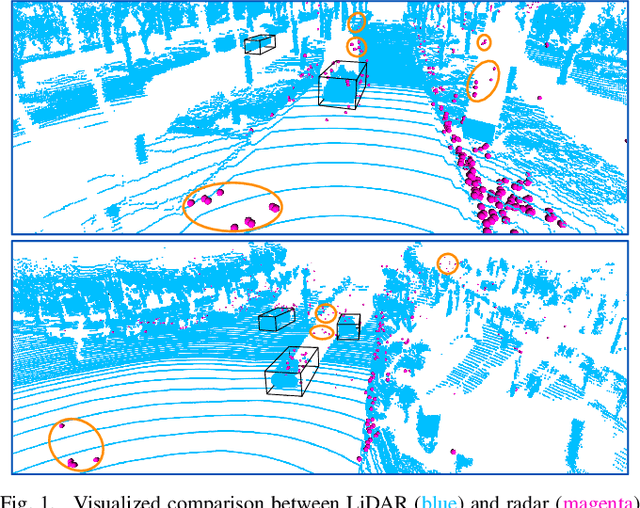

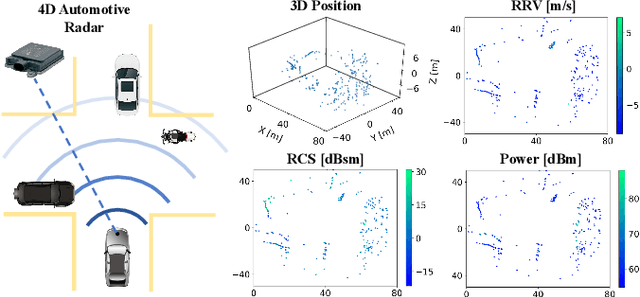

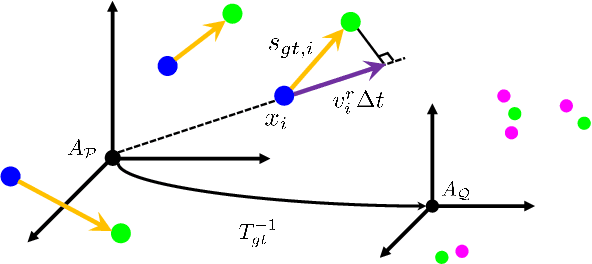

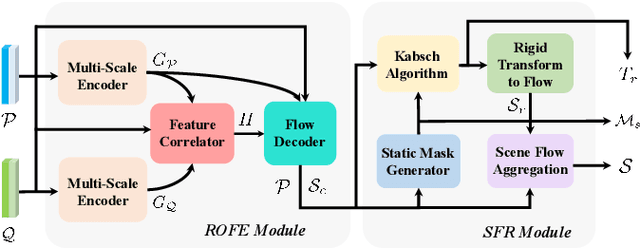

Abstract:Scene flow allows autonomous vehicles to reason about the arbitrary motion of multiple independent objects which is the key to long-term mobile autonomy. While estimating the scene flow from LiDAR has progressed recently, it remains largely unknown how to estimate the scene flow from a 4D radar - an increasingly popular automotive sensor for its robustness against adverse weather and lighting conditions. Compared with the LiDAR point clouds, radar data are drastically sparser, noisier and in much lower resolution. Annotated datasets for radar scene flow are also in absence and costly to acquire in the real world. These factors jointly pose the radar scene flow estimation as a challenging problem. This work aims to address the above challenges and estimate scene flow from 4D radar point clouds by leveraging self-supervised learning. A robust scene flow estimation architecture and three novel losses are bespoken designed to cope with intractable radar data. Real-world experimental results validate that our method is able to robustly estimate the radar scene flow in the wild and effectively supports the downstream task of motion segmentation.

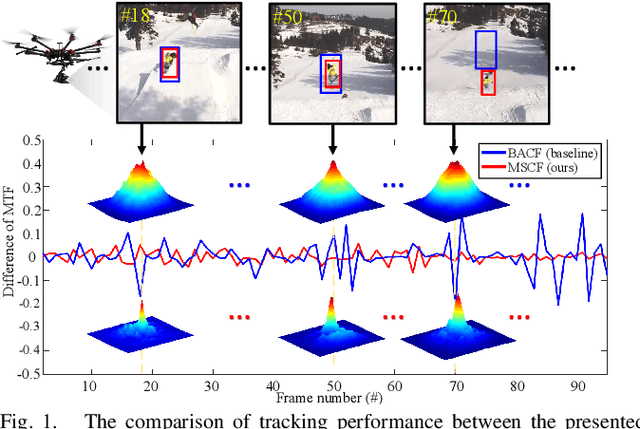

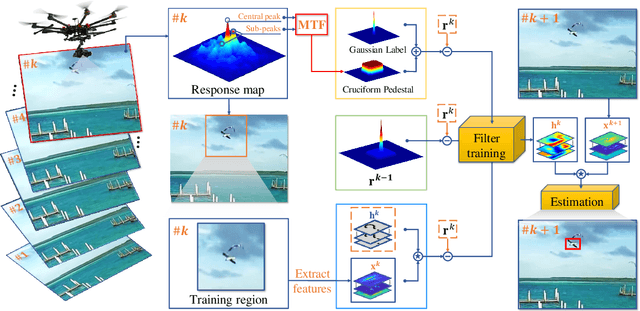

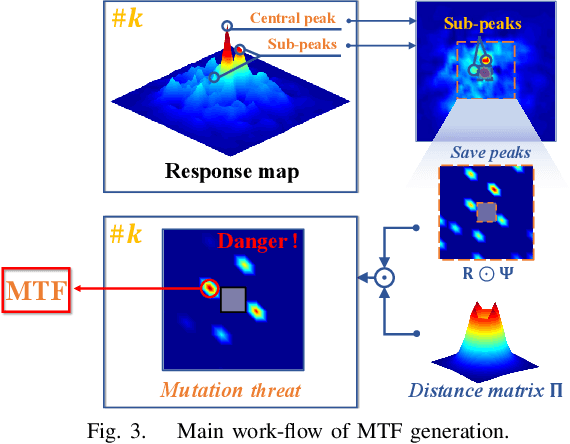

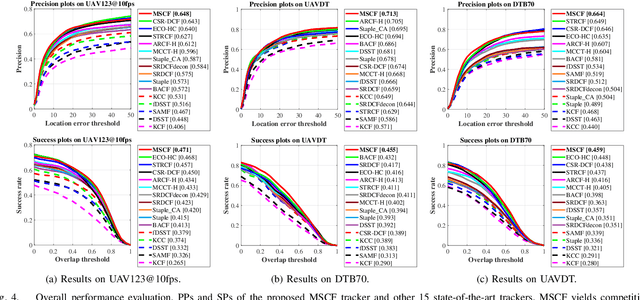

Mutation Sensitive Correlation Filter for Real-Time UAV Tracking with Adaptive Hybrid Label

Jun 15, 2021

Abstract:Unmanned aerial vehicle (UAV) based visual tracking has been confronted with numerous challenges, e.g., object motion and occlusion. These challenges generally introduce unexpected mutations of target appearance and result in tracking failure. However, prevalent discriminative correlation filter (DCF) based trackers are insensitive to target mutations due to a predefined label, which concentrates on merely the centre of the training region. Meanwhile, appearance mutations caused by occlusion or similar objects usually lead to the inevitable learning of wrong information. To cope with appearance mutations, this paper proposes a novel DCF-based method to enhance the sensitivity and resistance to mutations with an adaptive hybrid label, i.e., MSCF. The ideal label is optimized jointly with the correlation filter and remains temporal consistency. Besides, a novel measurement of mutations called mutation threat factor (MTF) is applied to correct the label dynamically. Considerable experiments are conducted on widely used UAV benchmarks. The results indicate that the performance of MSCF tracker surpasses other 26 state-of-the-art DCF-based and deep-based trackers. With a real-time speed of _38 frames/s, the proposed approach is sufficient for UAV tracking commissions.

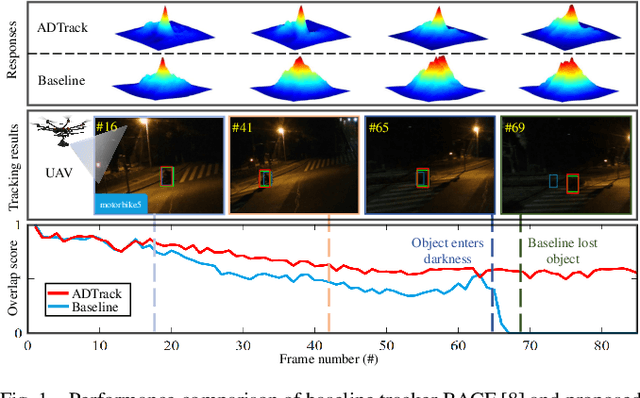

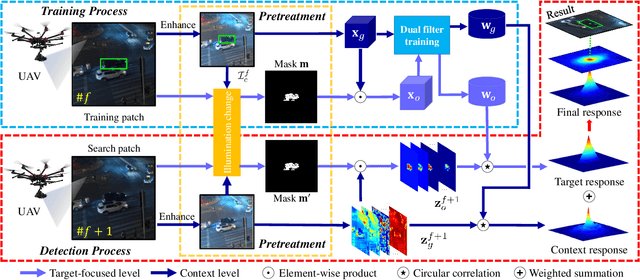

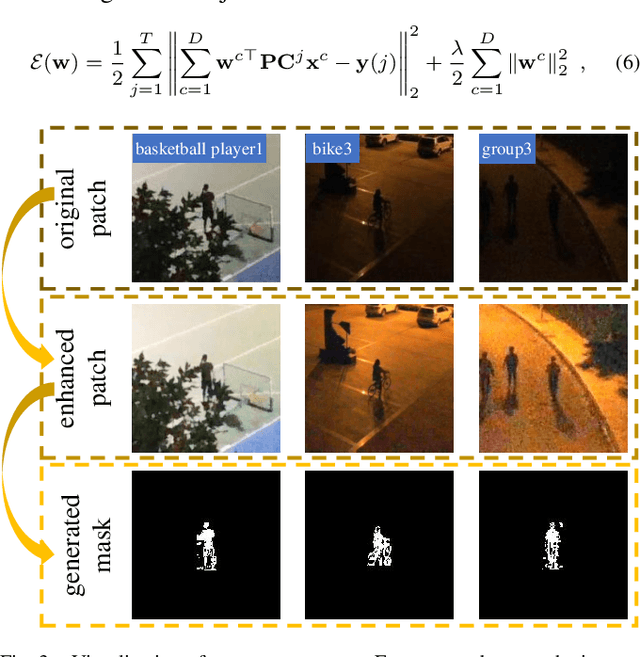

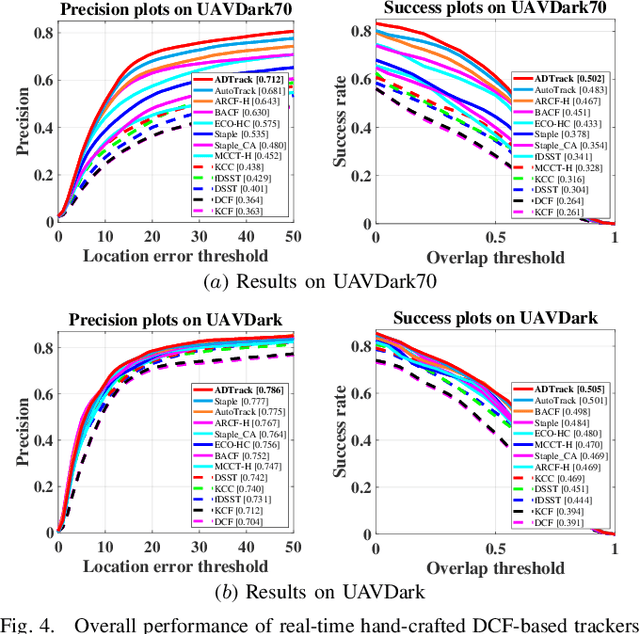

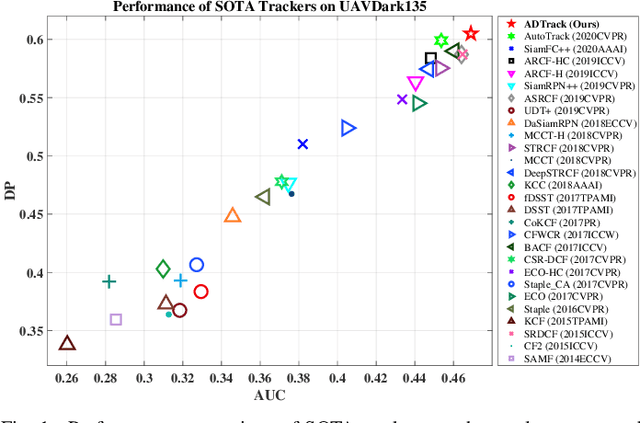

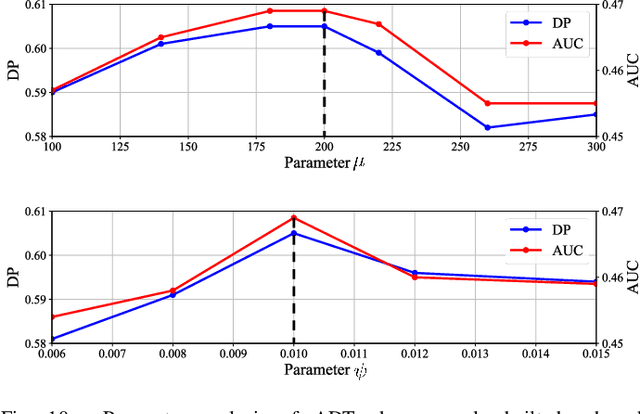

ADTrack: Target-Aware Dual Filter Learning for Real-Time Anti-Dark UAV Tracking

Jun 04, 2021

Abstract:Prior correlation filter (CF)-based tracking methods for unmanned aerial vehicles (UAVs) have virtually focused on tracking in the daytime. However, when the night falls, the trackers will encounter more harsh scenes, which can easily lead to tracking failure. In this regard, this work proposes a novel tracker with anti-dark function (ADTrack). The proposed method integrates an efficient and effective low-light image enhancer into a CF-based tracker. Besides, a target-aware mask is simultaneously generated by virtue of image illumination variation. The target-aware mask can be applied to jointly train a target-focused filter that assists the context filter for robust tracking. Specifically, ADTrack adopts dual regression, where the context filter and the target-focused filter restrict each other for dual filter learning. Exhaustive experiments are conducted on typical dark sceneries benchmark, consisting of 37 typical night sequences from authoritative benchmarks, i.e., UAVDark, and our newly constructed benchmark UAVDark70. The results have shown that ADTrack favorably outperforms other state-of-the-art trackers and achieves a real-time speed of 34 frames/s on a single CPU, greatly extending robust UAV tracking to night scenes.

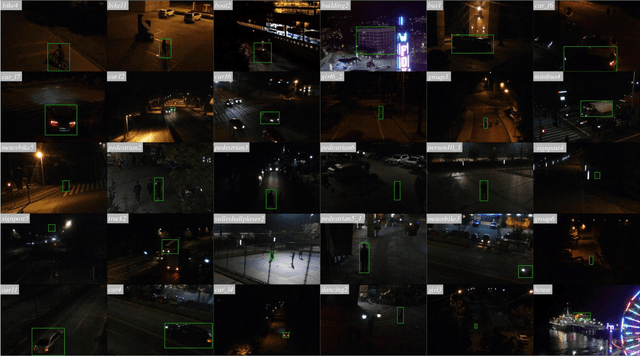

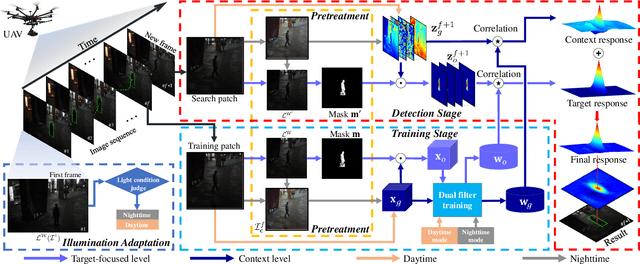

All-Day Object Tracking for Unmanned Aerial Vehicle

Jan 24, 2021

Abstract:Visual object tracking, which is representing a major interest in image processing field, has facilitated numerous real world applications. Among them, equipping unmanned aerial vehicle (UAV) with real time robust visual trackers for all day aerial maneuver, is currently attracting incremental attention and has remarkably broadened the scope of applications of object tracking. However, prior tracking methods have merely focused on robust tracking in the well-illuminated scenes, while ignoring trackers' capabilities to be deployed in the dark. In darkness, the conditions can be more complex and harsh, easily posing inferior robust tracking or even tracking failure. To this end, this work proposed a novel discriminative correlation filter based tracker with illumination adaptive and anti dark capability, namely ADTrack. ADTrack firstly exploits image illuminance information to enable adaptability of the model to the given light condition. Then, by virtue of an efficient and effective image enhancer, ADTrack carries out image pretreatment, where a target aware mask is generated. Benefiting from the mask, ADTrack aims to solve a dual regression problem where dual filters, i.e., the context filter and target focused filter, are trained with mutual constraint. Thus ADTrack is able to maintain continuously favorable performance in all-day conditions. Besides, this work also constructed one UAV nighttime tracking benchmark UAVDark135, comprising of more than 125k manually annotated frames, which is also very first UAV nighttime tracking benchmark. Exhaustive experiments are extended on authoritative daytime benchmarks, i.e., UAV123 10fps, DTB70, and the newly built dark benchmark UAVDark135, which have validated the superiority of ADTrack in both bright and dark conditions on a single CPU.

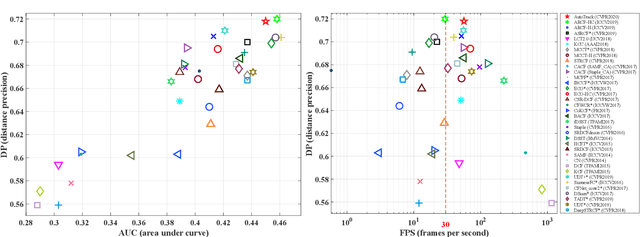

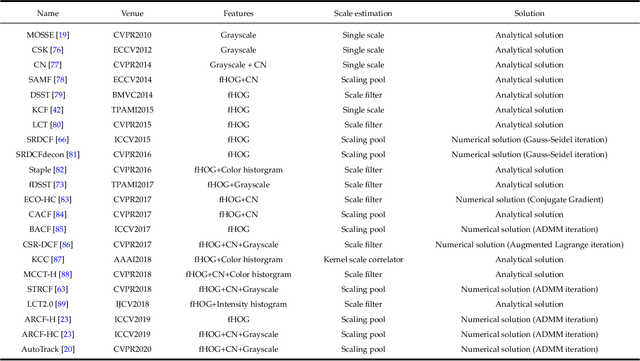

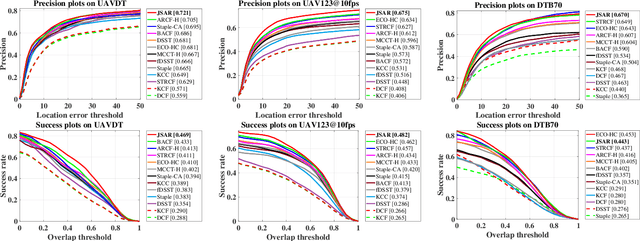

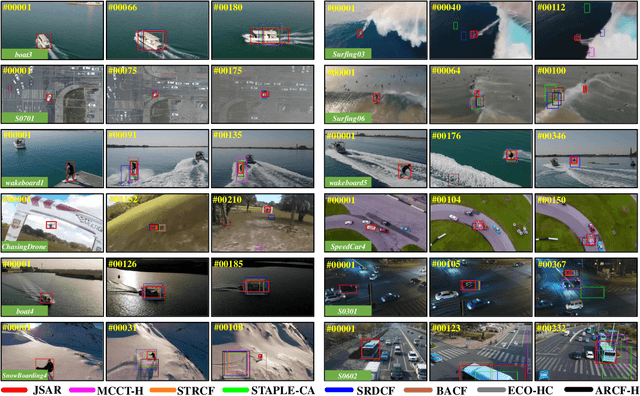

Correlation Filter for UAV-Based Aerial Tracking: A Review and Experimental Evaluation

Oct 31, 2020

Abstract:Aerial tracking, which has exhibited its omnipresent dedication and splendid performance, is one of the most active applications in the remote sensing field. Especially, unmanned aerial vehicle (UAV)-based remote sensing system, equipped with a visual tracking approach, has been widely used in aviation, navigation, agriculture, transportation, and public security, etc. As is mentioned above, the UAV-based aerial tracking platform has been gradually developed from research to practical application stage, reaching one of the main aerial remote sensing technologies in the future. However, due to real-world challenging situations, the vibration of the UAV's mechanical structure (especially under strong wind conditions), and limited computation resources, accuracy, robustness, and high efficiency are all crucial for the onboard tracking methods. Recently, the discriminative correlation filter (DCF)-based trackers have stood out for their high computational efficiency and appealing robustness on a single CPU, and have flourished in the UAV visual tracking community. In this work, the basic framework of the DCF-based trackers is firstly generalized, based on which, 20 state-of-the-art DCF-based trackers are orderly summarized according to their innovations for soloving various issues. Besides, exhaustive and quantitative experiments have been extended on various prevailing UAV tracking benchmarks, i.e., UAV123, UAV123_10fps, UAV20L, UAVDT, DTB70, and VisDrone2019-SOT, which contain 371,625 frames in total. The experiments show the performance, verify the feasibility, and demonstrate the current challenges of DCF-based trackers onboard UAV tracking. Finally, comprehensive conclusions on open challenges and directions for future research is presented.

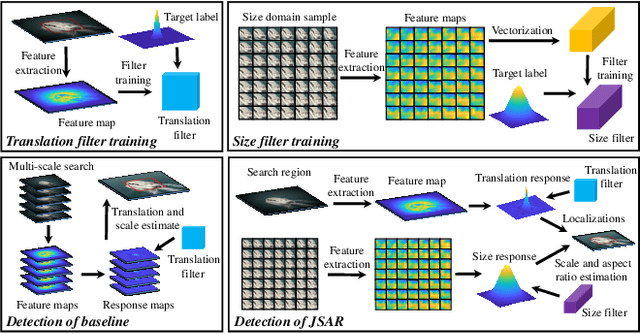

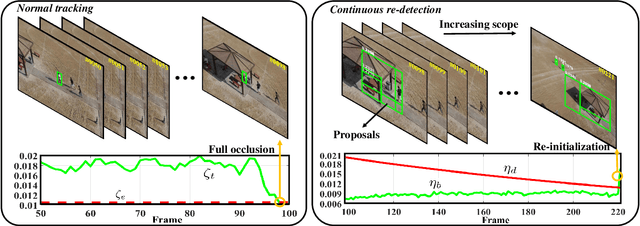

Automatic Failure Recovery and Re-Initialization for Online UAV Tracking with Joint Scale and Aspect Ratio Optimization

Aug 10, 2020

Abstract:Current unmanned aerial vehicle (UAV) visual tracking algorithms are primarily limited with respect to: (i) the kind of size variation they can deal with, (ii) the implementation speed which hardly meets the real-time requirement. In this work, a real-time UAV tracking algorithm with powerful size estimation ability is proposed. Specifically, the overall tracking task is allocated to two 2D filters: (i) translation filter for location prediction in the space domain, (ii) size filter for scale and aspect ratio optimization in the size domain. Besides, an efficient two-stage re-detection strategy is introduced for long-term UAV tracking tasks. Large-scale experiments on four UAV benchmarks demonstrate the superiority of the presented method which has computation feasibility on a low-cost CPU.

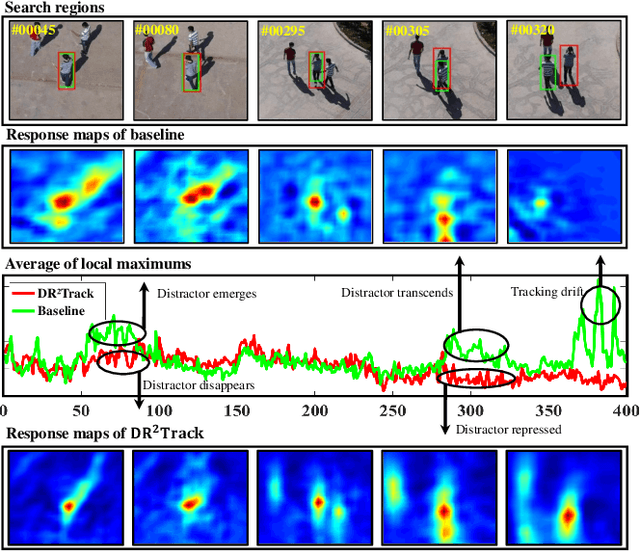

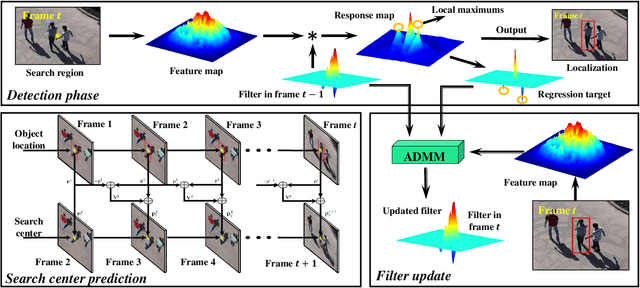

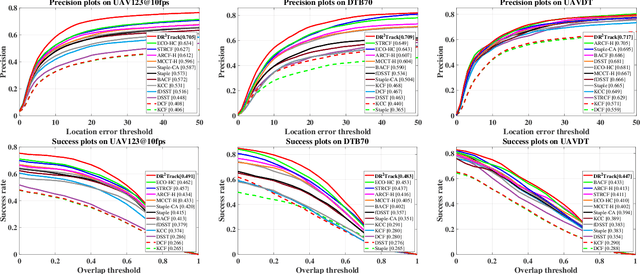

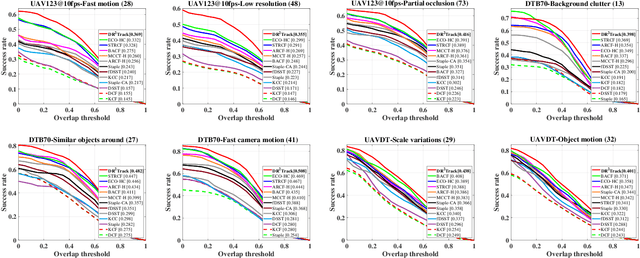

DR^2Track: Towards Real-Time Visual Tracking for UAV via Distractor Repressed Dynamic Regression

Aug 10, 2020

Abstract:Visual tracking has yielded promising applications with unmanned aerial vehicle (UAV). In literature, the advanced discriminative correlation filter (DCF) type trackers generally distinguish the foreground from the background with a learned regressor which regresses the implicit circulated samples into a fixed target label. However, the predefined and unchanged regression target results in low robustness and adaptivity to uncertain aerial tracking scenarios. In this work, we exploit the local maximum points of the response map generated in the detection phase to automatically locate current distractors. By repressing the response of distractors in the regressor learning, we can dynamically and adaptively alter our regression target to leverage the tracking robustness as well as adaptivity. Substantial experiments conducted on three challenging UAV benchmarks demonstrate both excellent performance and extraordinary speed (~50fps on a cheap CPU) of our tracker.

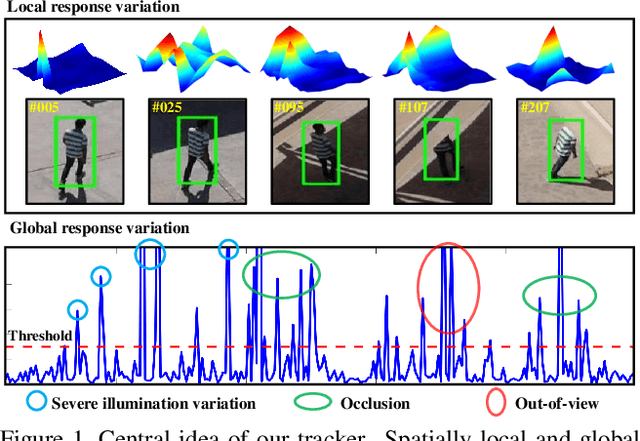

AutoTrack: Towards High-Performance Visual Tracking for UAV with Automatic Spatio-Temporal Regularization

Mar 29, 2020

Abstract:Most existing trackers based on discriminative correlation filters (DCF) try to introduce predefined regularization term to improve the learning of target objects, e.g., by suppressing background learning or by restricting change rate of correlation filters. However, predefined parameters introduce much effort in tuning them and they still fail to adapt to new situations that the designer did not think of. In this work, a novel approach is proposed to online automatically and adaptively learn spatio-temporal regularization term. Spatially local response map variation is introduced as spatial regularization to make DCF focus on the learning of trust-worthy parts of the object, and global response map variation determines the updating rate of the filter. Extensive experiments on four UAV benchmarks have proven the superiority of our method compared to the state-of-the-art CPU- and GPU-based trackers, with a speed of ~60 frames per second running on a single CPU. Our tracker is additionally proposed to be applied in UAV localization. Considerable tests in the indoor practical scenarios have proven the effectiveness and versatility of our localization method. The code is available at https://github.com/vision4robotics/AutoTrack.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge