Emmanuel Senft

Registering Articulated Objects With Human-in-the-loop Corrections

Mar 11, 2022

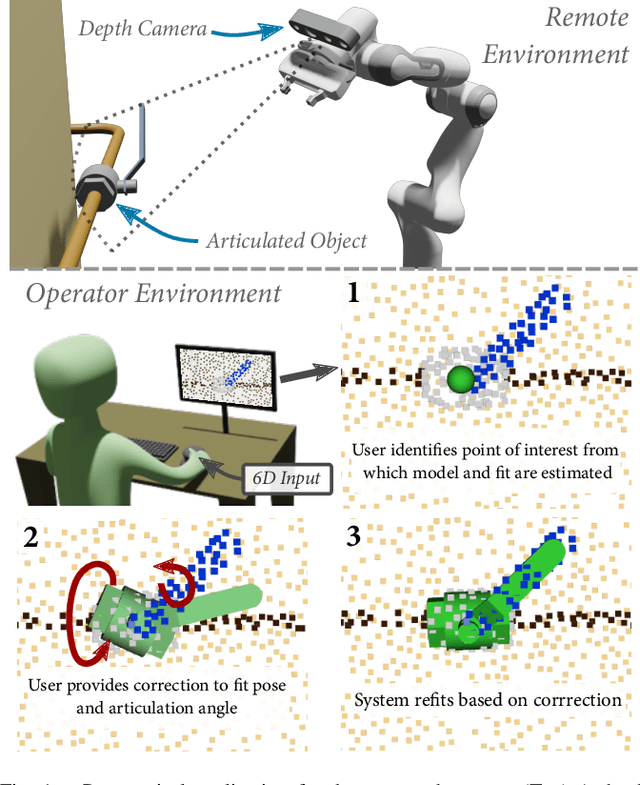

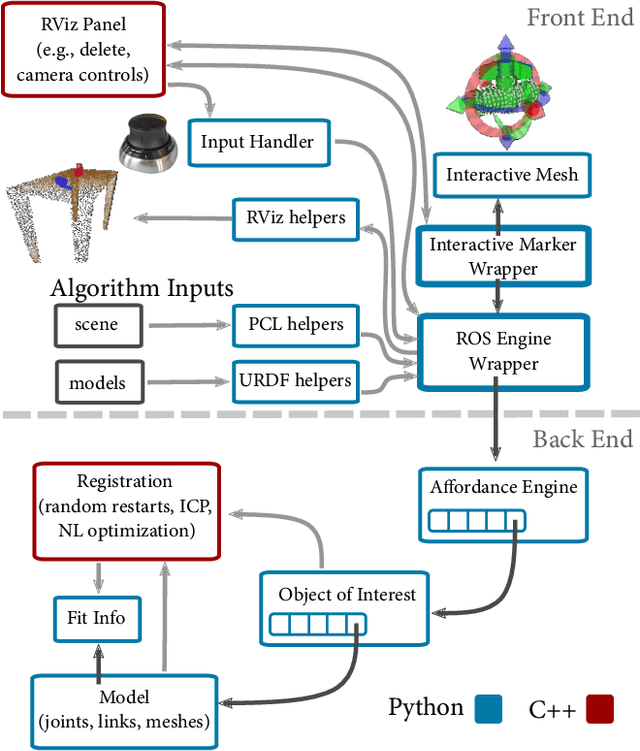

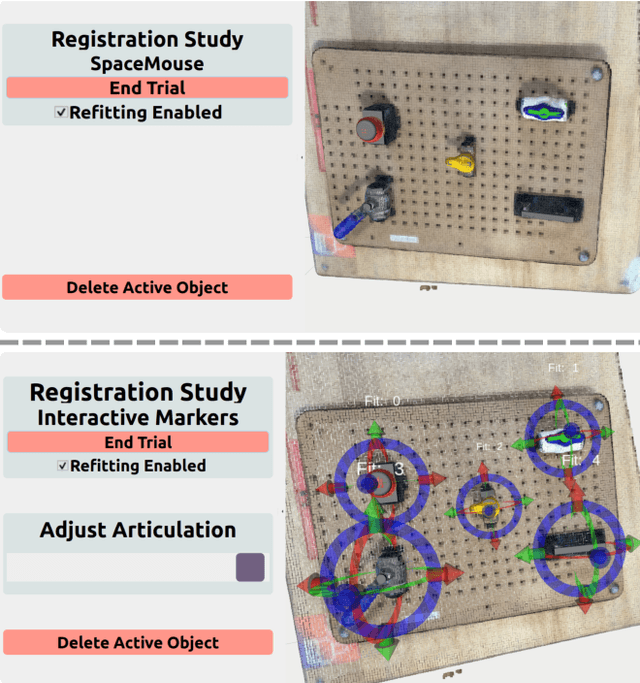

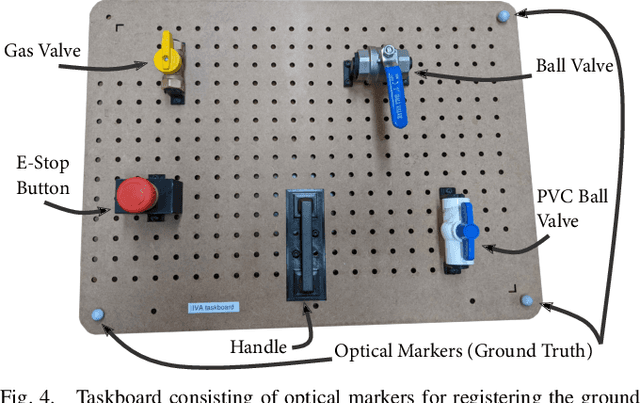

Abstract: Remotely programming robots to execute tasks often relies on registering objects of interest in the robot's environment. Frequently, these tasks involve articulating objects such as opening or closing a valve. However, existing human-in-the-loop methods for registering objects do not consider articulations and the corresponding impact on the geometry of the object, which can cause the methods to fail. In this work, we present an approach where the registration system attempts to automatically determine the object model, pose, and articulation for user-selected points using a nonlinear iterative closest point algorithm. When the automated fitting is incorrect, the operator can iteratively intervene with corrections, after which the system will refit the object. We present an implementation of our fitting procedure and evaluate it with a user study showing that it can improve user performance, measured by time on task and task load, as well as ease of use and usefulness, compared to a manual registration approach. We also present a situated example that demonstrates the integration of our method in an end-to-end system for articulating a remote valve.
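As a rough illustration of the iterative closest point idea mentioned in the abstract, the following is a minimal sketch of a plain rigid 2D ICP, not the nonlinear, articulation-aware fitting the paper describes. All names (`icp_2d`, `best_rigid_2d`, the toy L-shaped `model`) are hypothetical and chosen only for this example.

```python
import math

def nearest(point, candidates):
    # Closest candidate to `point` by squared Euclidean distance.
    return min(candidates, key=lambda c: (c[0] - point[0]) ** 2 + (c[1] - point[1]) ** 2)

def transform(points, theta, tx, ty):
    # Rotate each point by theta, then translate by (tx, ty).
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]

def best_rigid_2d(src, dst):
    # Closed-form least-squares rotation and translation for paired 2D points.
    n = len(src)
    csx, csy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    cdx, cdy = sum(p[0] for p in dst) / n, sum(p[1] for p in dst) / n
    dot = cross = 0.0
    for (sx, sy), (dx, dy) in zip(src, dst):
        ax, ay, bx, by = sx - csx, sy - csy, dx - cdx, dy - cdy
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    return theta, cdx - (c * csx - s * csy), cdy - (s * csx + c * csy)

def icp_2d(model, scan, iterations=20):
    # Alternate between nearest-neighbour matching and rigid refitting.
    current = list(model)
    for _ in range(iterations):
        matches = [nearest(p, scan) for p in current]
        theta, tx, ty = best_rigid_2d(current, matches)
        current = transform(current, theta, tx, ty)
    return current

# Toy example: an L-shaped model and a slightly rotated, shifted "scan" of it.
model = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (0.0, 1.0), (0.0, 2.0)]
scan = transform(model, 0.1, 0.2, -0.1)
fitted = icp_2d(model, scan)
residual = max(math.dist(p, nearest(p, scan)) for p in fitted)
```

In a human-in-the-loop setting such as the one described, an operator correction could be thought of as supplying a better starting pose before the loop is rerun; that mapping is an assumption of this sketch rather than a detail taken from the abstract.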

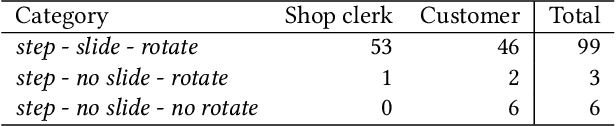

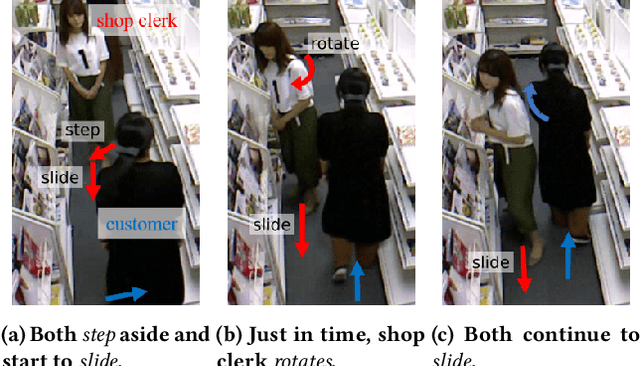

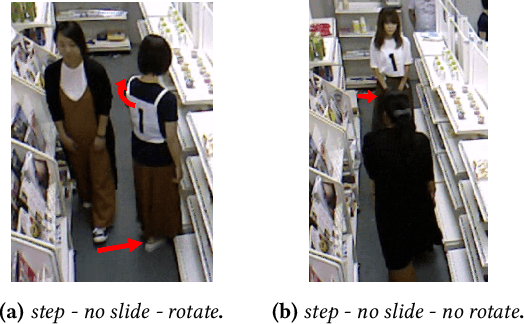

Would You Mind Me if I Pass by You? Socially-Appropriate Behaviour for an Omni-based Social Robot in Narrow Environment

Jan 27, 2022

Abstract: Interacting physically with robots and sharing an environment with them leads to situations where humans and robots have to cross each other in narrow corridors. In these cases, the robot has to make space for the human to pass. From observation of human-human crossing behaviours, we isolated two main factors in this avoiding behaviour: body rotation and sliding motion. We implemented a robot controller able to vary these factors and explored how this variation affected people's perception. Results from a within-participants study involving 23 participants show that people prefer a robot rotating its body when crossing them. Additionally, a sliding motion is rated as being warmer. These results show the importance of social avoidance when interacting with humans.

* Accepted at HRI 2020, March 24-26, 2020, Virtual Event, UK. (https://dl.acm.org/doi/pdf/10.1145/3319502.3374812)

AI-HRI 2021 Proceedings

Sep 23, 2021

Abstract: The Artificial Intelligence (AI) for Human-Robot Interaction (HRI) Symposium has been a successful venue of discussion and collaboration since 2014. During that time, these symposia provided a fertile ground for numerous collaborations and pioneered many discussions revolving around trust in HRI, XAI for HRI, service robots, interactive learning, and more. This year, we aim to review the achievements of the AI-HRI community in the last decade, identify the challenges ahead, and welcome new researchers who wish to take part in this growing community. Taking this wide perspective, this year there will be no single theme to lead the symposium and we encourage AI-HRI submissions from across disciplines and research interests. Moreover, with the rising interest in AR and VR as part of an interaction and following the difficulties in running physical experiments during the pandemic, this year we specifically encourage researchers to submit works that do not include a physical robot in their evaluation, but promote HRI research in general. In addition, acknowledging that ethics is an inherent part of human-robot interaction, we encourage submissions of works on ethics for HRI. Over the course of the two-day meeting, we will host a collaborative forum for discussion of current efforts in AI-HRI, with additional talks focused on the topics of ethics in HRI and ubiquitous HRI.

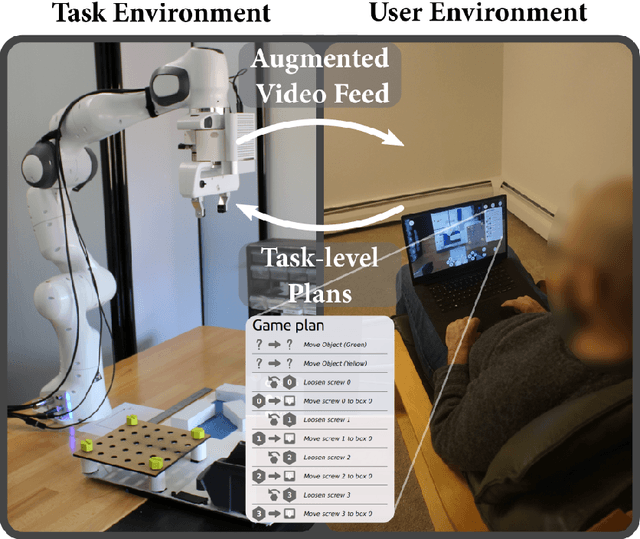

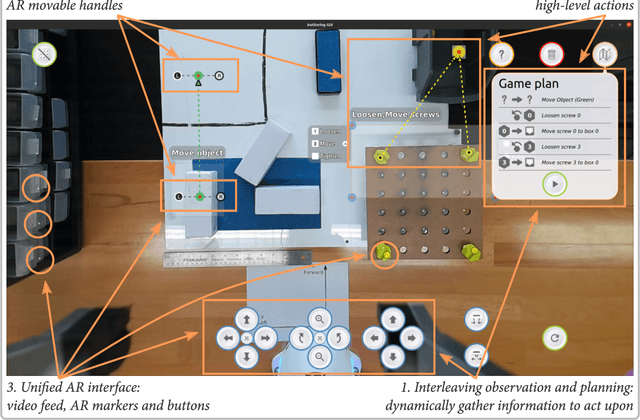

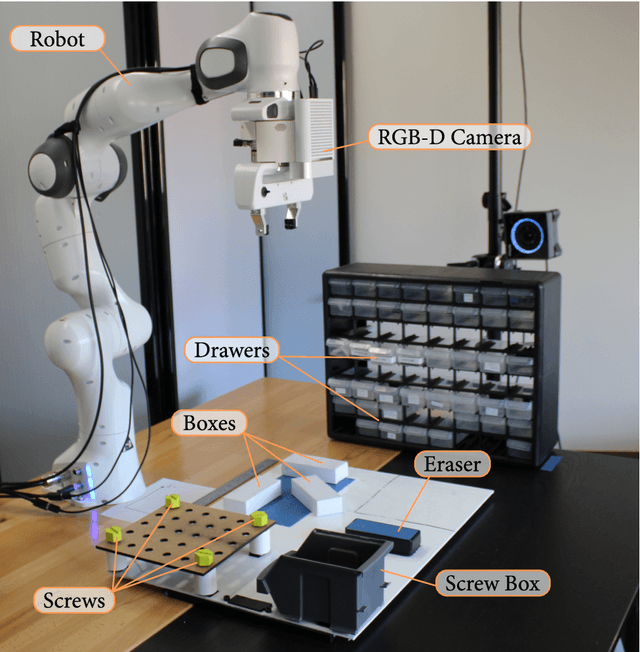

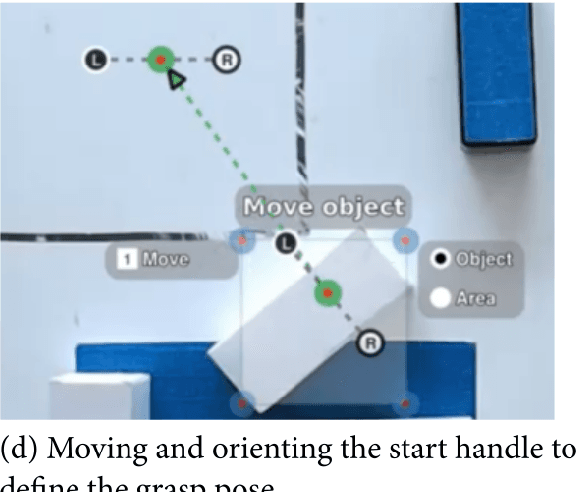

Task-Level Authoring for Remote Robot Teleoperation

Sep 06, 2021

Abstract: Remote teleoperation of robots can broaden the reach of domain specialists across a wide range of industries such as home maintenance, health care, light manufacturing, and construction. However, current direct control methods are impractical, and existing tools for programming robots remotely have focused on users with significant robotic experience. Extending robot remote programming to end users, i.e., users who are experts in a domain but novices in robotics, requires tools that balance the rich features necessary for complex teleoperation tasks with ease of use. The primary challenge to usability is that novice users are unable to specify complete and robust task plans to allow a robot to perform duties autonomously, particularly in highly variable environments. Our solution is to allow operators to specify shorter sequences of high-level commands, which we call task-level authoring, to create periods of variable robot autonomy. This approach allows inexperienced users to create robot behaviors in uncertain environments by interleaving exploration, specification of behaviors, and execution as separate steps. End users are able to break down the specification of tasks and adapt to the current needs of the interaction and environments, combining the reactivity of direct control with asynchronous operation. In this paper, we describe a prototype system contextualized in light manufacturing and its empirical validation in a user study where 18 participants with some programming experience were able to perform a variety of complex telemanipulation tasks with little training. Our results show that our approach allowed users to create flexible periods of autonomy and solve rich manipulation tasks. Furthermore, participants significantly preferred our system over comparable, more direct interfaces, demonstrating the potential of our approach.

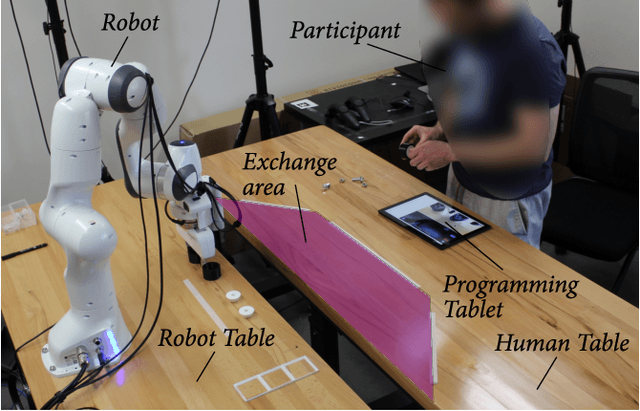

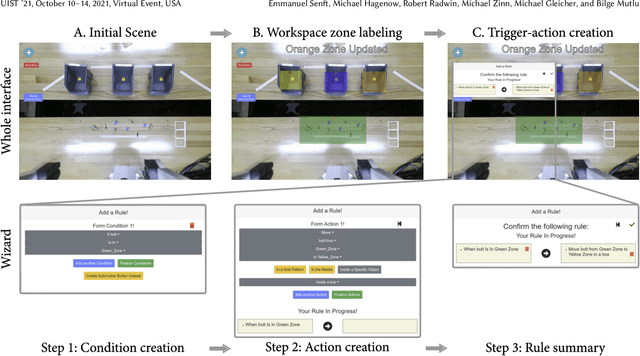

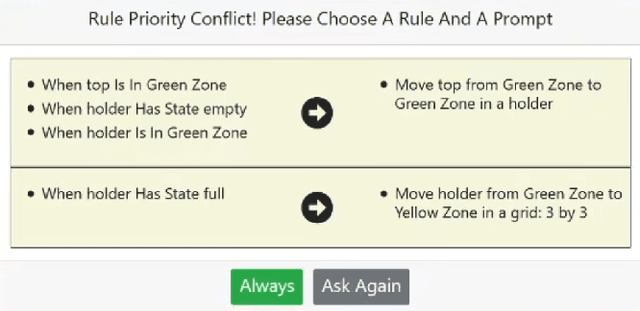

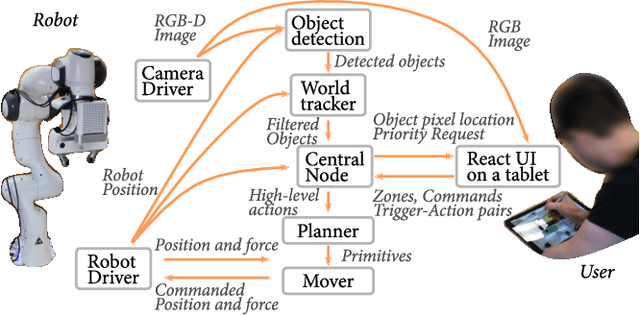

Situated Live Programming for Human-Robot Collaboration

Aug 08, 2021

Abstract: We present situated live programming for human-robot collaboration, an approach that enables users with limited programming experience to program collaborative applications for human-robot interaction. Allowing end users, such as shop floor workers, to program collaborative robots themselves would make it easy to "retask" robots from one process to another, facilitating their adoption by small and medium enterprises. Our approach builds on the paradigm of trigger-action programming (TAP) by allowing end users to create rich interactions through simple trigger-action pairings. It enables end users to iteratively create, edit, and refine a reactive robot program while executing partial programs. This live programming approach enables the user to utilize the task space and objects by incrementally specifying situated trigger-action pairs, substantially lowering the barrier to entry for programming or reprogramming robots for collaboration. We instantiate situated live programming in an authoring system where users can create trigger-action programs by annotating an augmented video feed from the robot's perspective and assign robot actions to trigger conditions. We evaluated this system in a study where participants (n = 10) developed robot programs for solving collaborative light-manufacturing tasks. Results showed that users with little programming experience were able to program HRC tasks in an interactive fashion and our situated live programming approach further supported individualized strategies and workflows. We conclude by discussing opportunities and limitations of the proposed approach, our system implementation, and our study and discuss a roadmap for expanding this approach to a broader range of tasks and applications.
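The trigger-action pairing described in this abstract can be sketched in a few lines. This is a minimal illustration only, assuming a dictionary-based world state; the `Rule` and `LiveProgram` names, the state keys, and the action strings are all hypothetical and do not come from the authors' system.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

State = Dict[str, object]

@dataclass
class Rule:
    name: str
    trigger: Callable[[State], bool]  # condition evaluated on the current state
    action: Callable[[State], str]    # returns a description of the robot action

class LiveProgram:
    """A reactive program that can be extended while it is running."""

    def __init__(self) -> None:
        self.rules: List[Rule] = []

    def add_rule(self, rule: Rule) -> None:
        self.rules.append(rule)

    def step(self, state: State) -> List[str]:
        # Fire every rule whose trigger holds for this state, in insertion order.
        return [rule.action(state) for rule in self.rules if rule.trigger(state)]

program = LiveProgram()
program.add_rule(Rule("pick", lambda s: s.get("part_in_zone") is True, lambda s: "pick part"))

# Execute the partial program once.
first = program.step({"part_in_zone": True, "hand_nearby": False})

# Add a second rule between steps, then execute again on a new state.
program.add_rule(Rule("wait", lambda s: s.get("hand_nearby") is True, lambda s: "pause motion"))
second = program.step({"part_in_zone": True, "hand_nearby": True})
```

Adding a rule between calls to `step` mirrors, in a very reduced form, the idea of refining a program while partial programs are executing.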

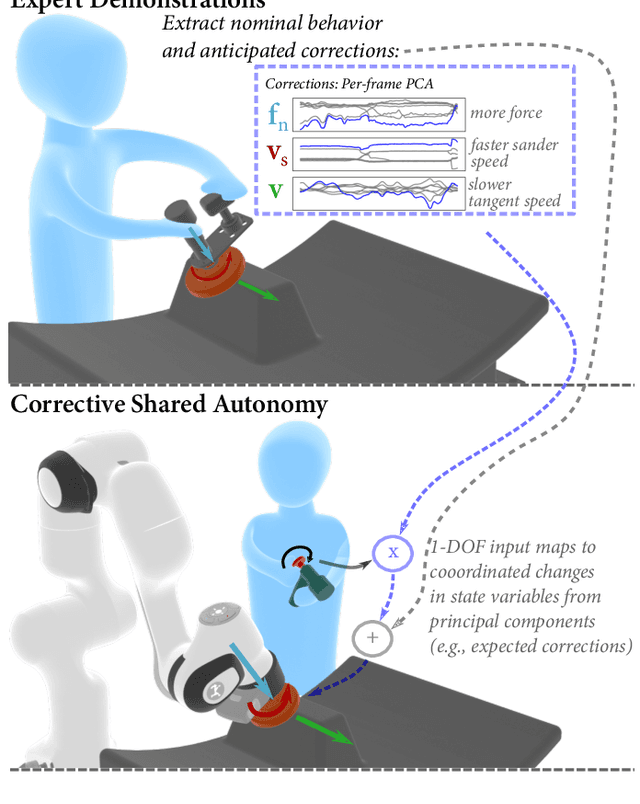

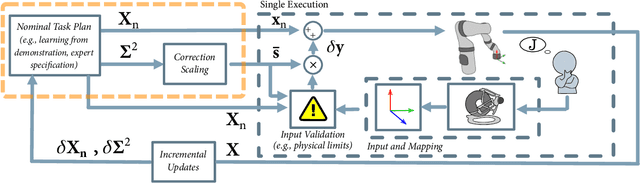

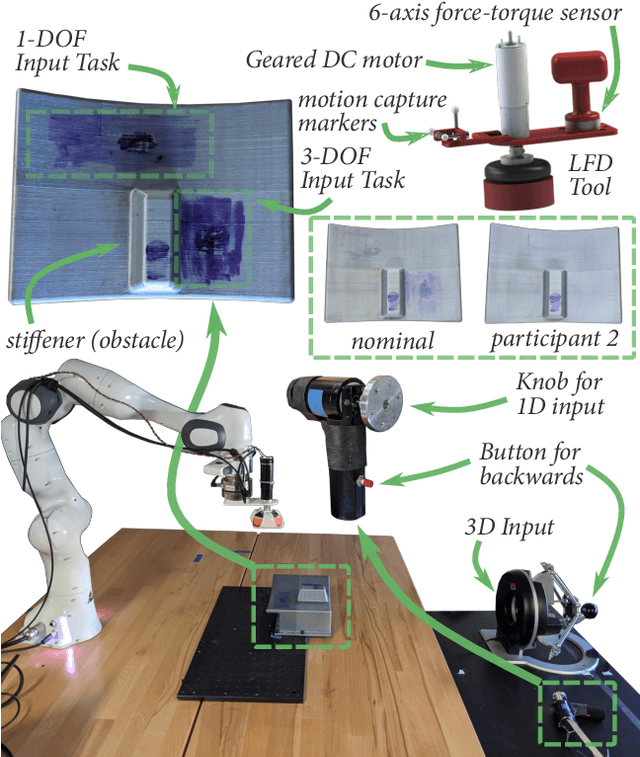

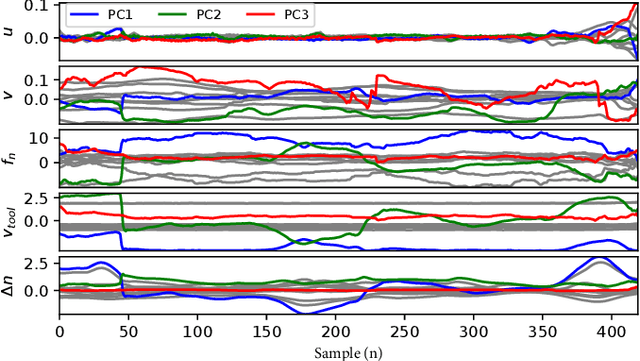

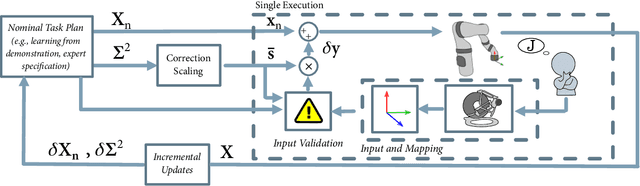

Informing Real-time Corrections in Corrective Shared Autonomy Through Expert Demonstrations

Jul 10, 2021

Abstract: Corrective Shared Autonomy is a method where human corrections are layered on top of an otherwise autonomous robot behavior. Specifically, a Corrective Shared Autonomy system leverages an external controller to allow corrections across a range of task variables (e.g., spinning speed of a tool, applied force, path) to address the specific needs of a task. However, this inherent flexibility makes the choice of what corrections to allow at any given instant difficult to determine. This choice of corrections includes determining appropriate robot state variables, scaling for these variables, and a way to allow a user to specify the corrections in an intuitive manner. This paper enables efficient Corrective Shared Autonomy by providing an automated solution based on Learning from Demonstration to both extract the nominal behavior and address these core problems. Our evaluation shows that this solution enables users to successfully complete a surface cleaning task, identifies different strategies users employed in applying corrections, and points to future improvements for our solution.

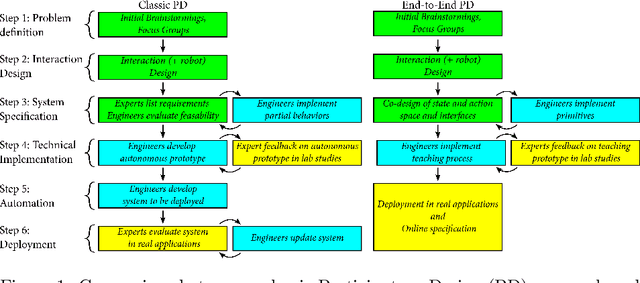

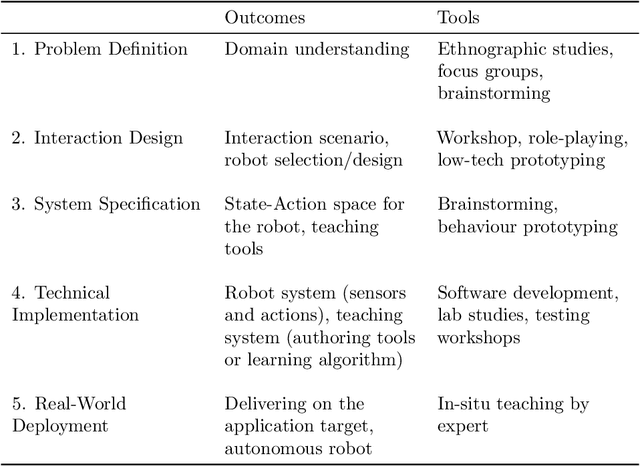

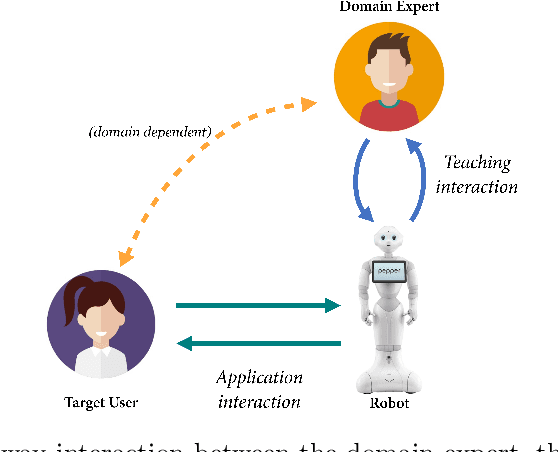

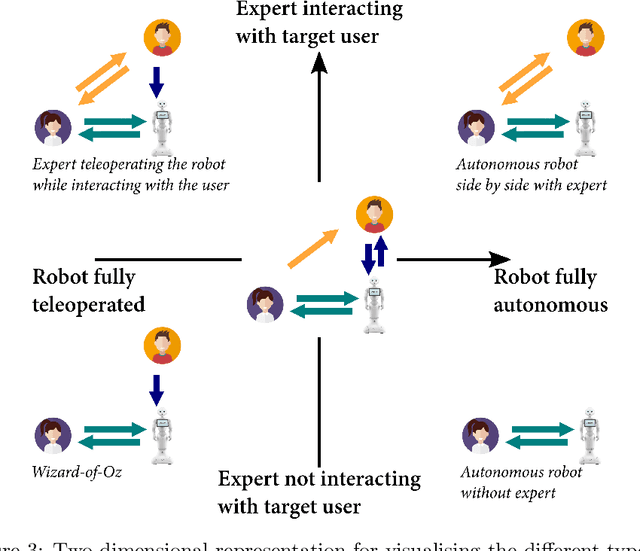

LEADOR: A Method for End-to-End Participatory Design of Autonomous Social Robots

May 14, 2021

Abstract: Participatory Design (PD) in Human-Robot Interaction (HRI) typically remains limited to the early phases of development, with subsequent robot behaviours then being hardcoded by engineers or utilised in Wizard-of-Oz (WoZ) systems that rarely achieve autonomy. We present LEADOR (Led-by-Experts Automation and Design Of Robots), an end-to-end PD methodology for domain expert co-design, automation and evaluation of social robots. LEADOR starts with typical PD to co-design the interaction specifications and state and action space of the robot. It then replaces traditional offline programming or WoZ by an in-situ, online teaching phase where the domain expert can live-program or teach the robot how to behave while being embedded in the interaction context. We believe that this live teaching can be best achieved by adding a learning component to a WoZ setup, to capture experts' implicit knowledge, as they intuitively respond to the dynamics of the situation. The robot progressively learns an appropriate, expert-approved policy, ultimately leading to full autonomy, even in sensitive and/or ill-defined environments. However, LEADOR is agnostic to the exact technical approach used to facilitate this learning process. The extensive inclusion of the domain expert(s) in robot design represents established responsible innovation practice, lending credibility to the system both during the teaching phase and when operating autonomously. The combination of this expert inclusion with the focus on in-situ development also means LEADOR supports a mutual shaping approach to social robotics. We draw on two previously published, foundational works from which this (generalisable) methodology has been derived in order to demonstrate the feasibility and worth of this approach, provide concrete examples of its application, and identify limitations and opportunities when applying this framework in new environments.

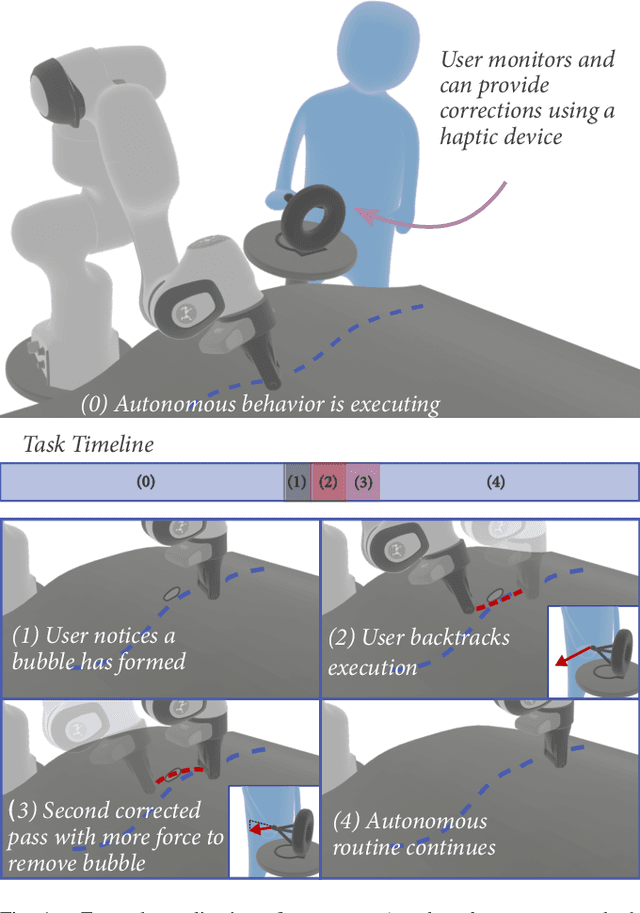

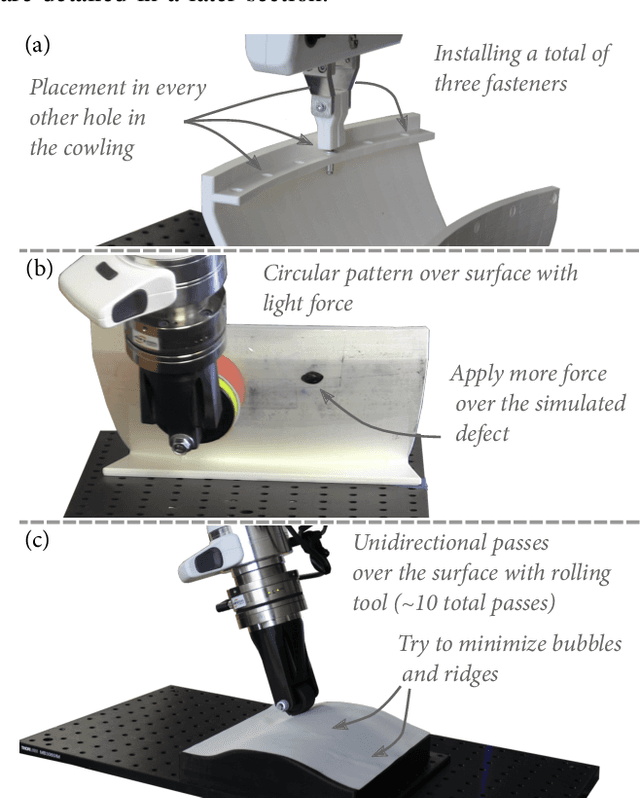

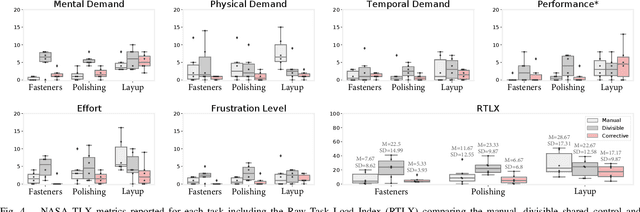

Corrective Shared Autonomy for Addressing Task Variability

Feb 14, 2021

Abstract: Many tasks, particularly those involving interaction with the environment, are characterized by high variability, making robotic autonomy difficult. One flexible solution is to introduce the input of a human with superior experience and cognitive abilities as part of a shared autonomy policy. However, current methods for shared autonomy are not designed to address the wide range of necessary corrections (e.g., positions, forces, execution rate, etc.) that the user may need to provide to address task variability. In this paper, we present corrective shared autonomy, where users provide corrections to key robot state variables on top of an otherwise autonomous task model. We provide an instantiation of this shared autonomy paradigm and demonstrate its viability and benefits such as low user effort and physical demand via a system-level user study on three tasks involving variability situated in aircraft manufacturing.
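The idea of layering user corrections on top of a nominal autonomous behavior can be illustrated with a small sketch. It assumes, purely for illustration, that each correctable state variable has a fixed scale and that the user input is normalized to the range [-1, 1]; the function name, variable names, and numbers are hypothetical and are not taken from the paper.

```python
from typing import Dict

def apply_corrections(
    nominal: Dict[str, float],
    user_input: Dict[str, float],
    scale: Dict[str, float],
) -> Dict[str, float]:
    """Add scaled, clipped user corrections to the nominal robot state."""
    corrected = dict(nominal)
    for name, value in user_input.items():
        # Clip the raw input so a correction never exceeds its allowed range.
        clipped = max(-1.0, min(1.0, value))
        corrected[name] = nominal[name] + scale[name] * clipped
    return corrected

# Nominal command from the autonomous task model (illustrative values).
nominal = {"force_n": 10.0, "rate": 1.0}
# Maximum correction allowed per variable (an assumption of this sketch).
scale = {"force_n": 5.0, "rate": 0.5}

# The user pushes the force correction halfway; the rate is left untouched.
more_force = apply_corrections(nominal, {"force_n": 0.5}, scale)

# An out-of-range input is clipped to the maximum allowed correction.
faster = apply_corrections(nominal, {"rate": 2.0}, scale)
```

Variables the user does not touch keep their nominal values, so the robot continues its autonomous behavior wherever no correction is given.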

Proceedings of the AI-HRI Symposium at AAAI-FSS 2020

Nov 11, 2020

Abstract: The Artificial Intelligence (AI) for Human-Robot Interaction (HRI) Symposium has been a successful venue of discussion and collaboration since 2014. In that time, the related topic of trust in robotics has been rapidly growing, with major research efforts at universities and laboratories across the world. Indeed, many of the past participants in AI-HRI have been or are now involved with research into trust in HRI. While trust has no consensus definition, it is regularly associated with predictability, reliability, inciting confidence, and meeting expectations. Furthermore, it is generally believed that trust is crucial for adoption of both AI and robotics, particularly when transitioning technologies from the lab to industrial, social, and consumer applications. However, how does trust apply to the specific situations we encounter in the AI-HRI sphere? Is the notion of trust in AI the same as that in HRI? We see a growing need for research that lives directly at the intersection of AI and HRI that is serviced by this symposium. Over the course of the two-day meeting, we propose to create a collaborative forum for discussion of current efforts in trust for AI-HRI, with a sub-session focused on the related topic of explainable AI (XAI) for HRI.

Proceedings of the AI-HRI Symposium at AAAI-FSS 2019

Sep 19, 2019

Abstract: The past few years have seen rapid progress in the development of service robots. Universities and companies alike have launched major research efforts toward the deployment of ambitious systems designed to aid human operators performing a variety of tasks. These robots are intended to make those who may otherwise need to live in assisted care facilities more independent, to help workers perform their jobs, or simply to make life more convenient. Service robots provide a powerful platform on which to study Artificial Intelligence (AI) and Human-Robot Interaction (HRI) in the real world. Research sitting at the intersection of AI and HRI is crucial to the success of service robots if they are to fulfill their mission. This symposium seeks to highlight research enabling robots to effectively interact with people autonomously while modeling, planning, and reasoning about the environment that the robot operates in and the tasks that it must perform. AI-HRI deals with the challenge of interacting with humans in environments that are relatively unstructured or which are structured around people rather than machines, as well as the possibility that the robot may need to interact naturally with people rather than through teach pendants, programming, or similar interfaces.