Charles C. Kemp

A Model that Predicts the Material Recognition Performance of Thermal Tactile Sensing

Nov 04, 2017

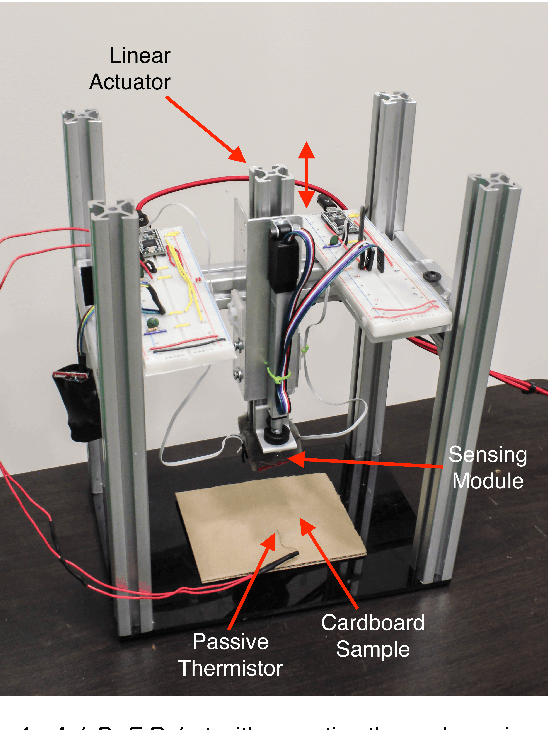

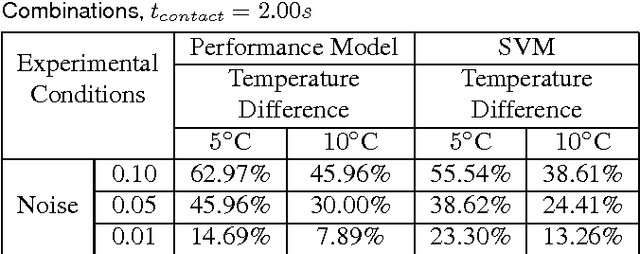

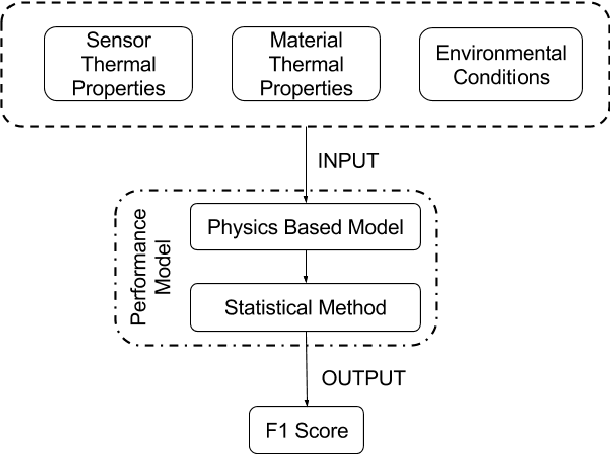

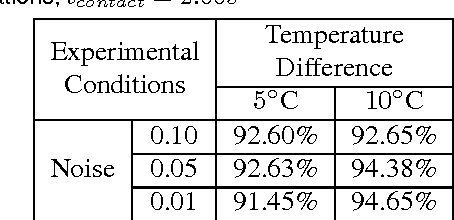

Abstract: Tactile sensing can enable a robot to infer properties of its surroundings, such as the material of an object. Heat-transfer-based sensing can be used for material recognition due to differences in the thermal properties of materials. While data-driven methods have shown promise for this recognition problem, many factors can influence performance, including sensor noise, the initial temperatures of the sensor and the object, the thermal effusivities of the materials, and the duration of contact. We present a physics-based mathematical model that predicts material recognition performance given these factors. Our model uses semi-infinite solids and a statistical method to calculate an F1 score for binary material recognition. We evaluated our method using simulated contact with 69 materials and data collected by a real robot with 12 materials. Our model predicted the material recognition performance of a support vector machine (SVM) with 96% accuracy for the simulated data, with 92% accuracy for real-world data with constant initial sensor temperatures, and with 91% accuracy for real-world data with varied initial sensor temperatures. Using our model, we also provide insight into the roles of various factors in recognition performance, such as the temperature difference between the sensor and the object. Overall, our results suggest that our model could be used to help design better thermal sensors for robots and enable robots to use them more effectively.
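As a rough illustration of the quantities this abstract names, the sketch below uses the textbook result for two semi-infinite solids brought into contact, where the interface temperature is the effusivity-weighted mean of the two initial temperatures, together with the standard F1 definition. It is not the paper's model, which the abstract does not spell out. The function names and the numeric values in the usage lines are hypothetical placeholders, not measured material properties.

```python
import math


def effusivity(k, rho, c):
    """Thermal effusivity sqrt(k * rho * c) from conductivity k,
    density rho, and specific heat capacity c (SI units assumed)."""
    return math.sqrt(k * rho * c)


def contact_temperature(t_sensor, t_object, e_sensor, e_object):
    """Interface temperature of two semi-infinite solids in ideal contact:
    the effusivity-weighted mean of the two initial temperatures."""
    return (e_sensor * t_sensor + e_object * t_object) / (e_sensor + e_object)


def f1_score(tp, fp, fn):
    """F1 score for a binary task from true positives, false positives,
    and false negatives (harmonic mean of precision and recall)."""
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Illustrative placeholder values only (not taken from the paper).
t_low = contact_temperature(35.0, 25.0, e_sensor=500.0, e_object=500.0)
t_high = contact_temperature(35.0, 25.0, e_sensor=500.0, e_object=4500.0)
```

With equal effusivities the interface settles midway between the two initial temperatures. A higher-effusivity object pulls the interface closer to the object's temperature, which is the kind of difference a thermal sensor could exploit to tell materials apart.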

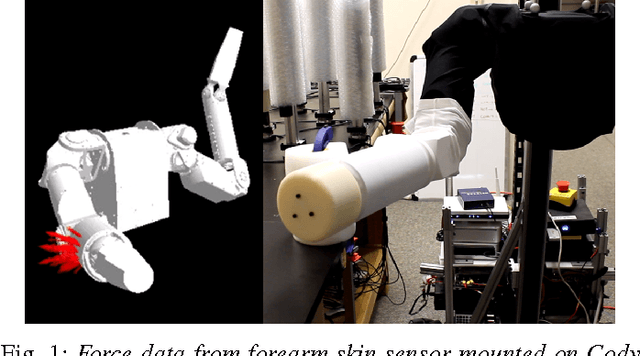

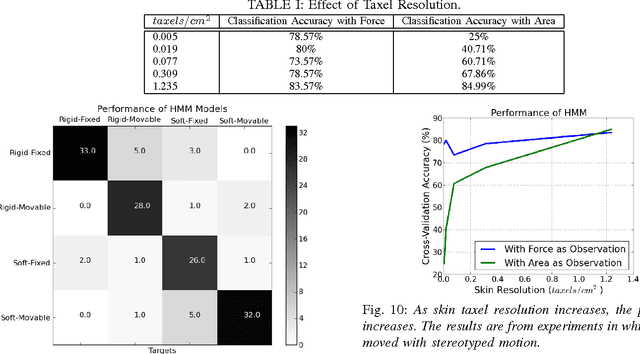

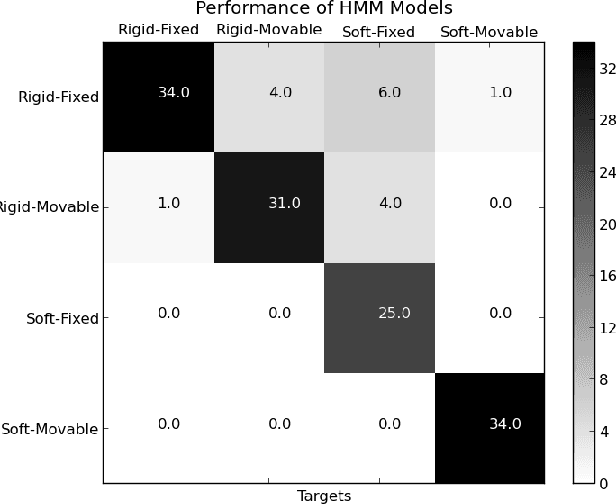

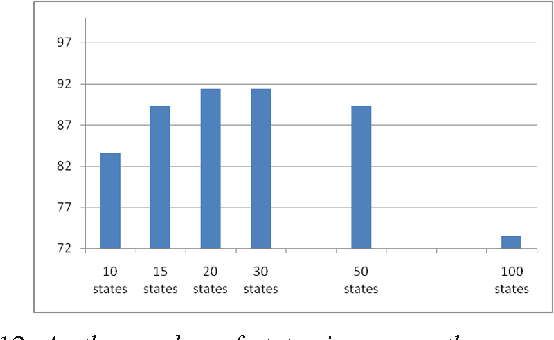

Inferring Object Properties with a Tactile Sensing Array Given Varying Joint Stiffness and Velocity

Nov 04, 2017

Abstract: Whole-arm tactile sensing enables a robot to sense contact and infer contact properties across its entire arm. Within this paper, we demonstrate that using data-driven methods, a humanoid robot can infer mechanical properties of objects from contact with its forearm during a simple reaching motion. A key issue is the extent to which the performance of data-driven methods can generalize to robot actions that differ from those used during training. To investigate this, we developed an idealized physics-based lumped element model of a robot with a compliant joint making contact with an object. Using this physics-based model, we performed experiments with varied robot, object, and environment parameters. We also collected data from a tactile-sensing forearm on a real robot as it made contact with various objects during a simple reaching motion with varied arm velocities and joint stiffnesses. The robot used one-nearest-neighbor (1-NN) classifiers, hidden Markov models (HMMs), and long short-term memory (LSTM) networks to infer two object properties (hard vs. soft and moved vs. unmoved) based on features of time-varying tactile sensor data (maximum force, contact area, and contact motion). We found that, in contrast to 1-NN, the performance of LSTMs (with sufficient data availability) and multivariate HMMs successfully generalized to new robot motions with distinct velocities and joint stiffnesses. Compared to single features, using multiple features gave the best results for experiments with both physics-based models and a real robot.

A Multimodal Anomaly Detector for Robot-Assisted Feeding Using an LSTM-based Variational Autoencoder

Nov 02, 2017

Abstract: The detection of anomalous executions is valuable for reducing potential hazards in assistive manipulation. Multimodal sensory signals can be helpful for detecting a wide range of anomalies. However, the fusion of high-dimensional and heterogeneous modalities is a challenging problem. We introduce a long short-term memory based variational autoencoder (LSTM-VAE) that fuses signals and reconstructs their expected distribution. We also introduce an LSTM-VAE-based detector using a reconstruction-based anomaly score and a state-based threshold. For evaluations with 1,555 robot-assisted feeding executions including 12 representative types of anomalies, our detector had a higher area under the receiver operating characteristic curve (AUC), 0.8710, than 5 other baseline detectors from the literature. We also show that the multimodal fusion through the LSTM-VAE is effective by comparing our detector with 17 raw sensory signals versus 4 hand-engineered features.

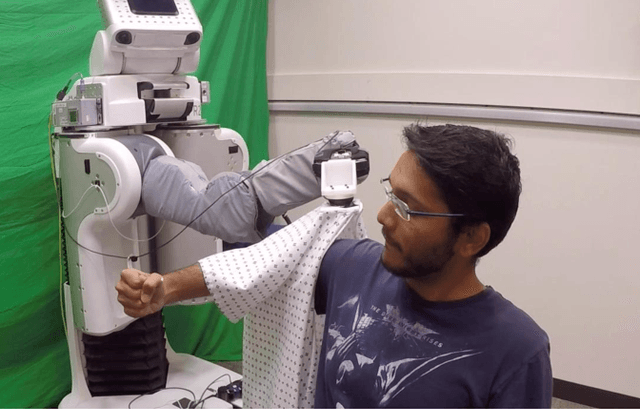

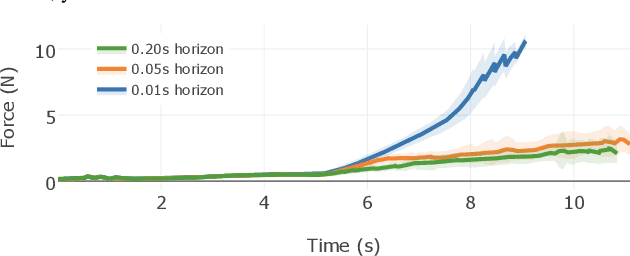

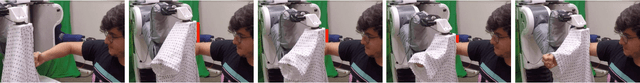

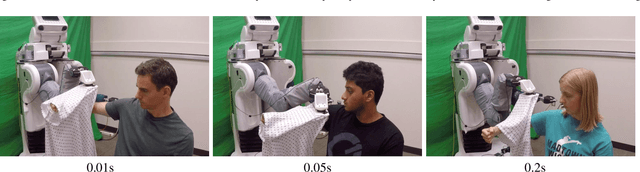

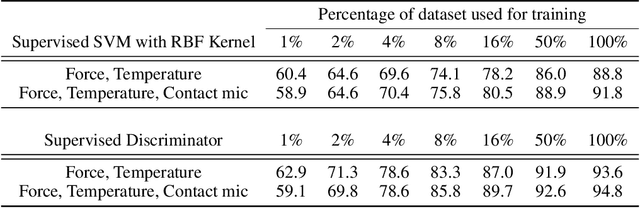

Deep Haptic Model Predictive Control for Robot-Assisted Dressing

Oct 27, 2017

Abstract: Robot-assisted dressing offers an opportunity to benefit the lives of many people with disabilities, such as some older adults. However, robots currently lack common sense about the physical implications of their actions on people. The physical implications of dressing are complicated by non-rigid garments, which can result in a robot indirectly applying high forces to a person's body. We present a deep recurrent model that, when given a proposed action by the robot, predicts the forces a garment will apply to a person's body. We also show that a robot can provide better dressing assistance by using this model with model predictive control. The predictions made by our model only use haptic and kinematic observations from the robot's end effector, which are readily attainable. Collecting training data from real-world physical human-robot interaction can be time-consuming, costly, and put people at risk. Instead, we train our predictive model using data collected in an entirely self-supervised fashion from a physics-based simulation. We evaluated our approach with a PR2 robot that attempted to pull a hospital gown onto the arms of 10 human participants. With a 0.2s prediction horizon, our controller succeeded at high rates and lowered applied force while navigating the garment around a person's fist and elbow without getting caught. Shorter prediction horizons resulted in significantly reduced performance with the sleeve catching on the participants' fists and elbows, demonstrating the value of our model's predictions. These behaviors of mitigating catches emerged from our deep predictive model and the controller objective function, which primarily penalizes high forces.

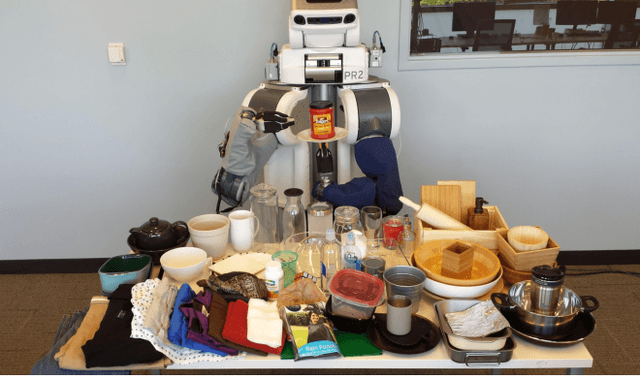

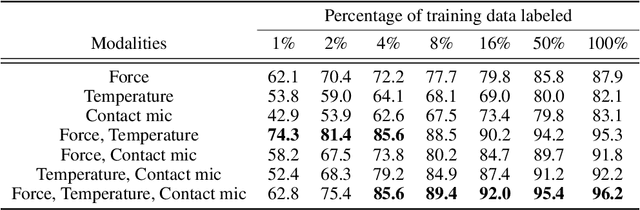

Semi-Supervised Haptic Material Recognition for Robots using Generative Adversarial Networks

Oct 26, 2017

Abstract: Material recognition enables robots to incorporate knowledge of material properties into their interactions with everyday objects. For example, material recognition opens up opportunities for clearer communication with a robot, such as "bring me the metal coffee mug", and recognizing plastic versus metal is crucial when using a microwave or oven. However, collecting labeled training data with a robot is often more difficult than collecting unlabeled data. We present a semi-supervised learning approach for material recognition that uses generative adversarial networks (GANs) with haptic features such as force, temperature, and vibration. Our approach achieves state-of-the-art results and enables a robot to estimate the material class of household objects with ~90% accuracy when 92% of the training data are unlabeled. We explore how well this approach can recognize the material of new objects and we discuss challenges facing generalization. To motivate learning from unlabeled training data, we also compare results against several common supervised learning classifiers. In addition, we have released the dataset used for this work, which consists of time-series haptic measurements from a robot that conducted thousands of interactions with 72 household objects.

* 11 pages, 6 figures, 6 tables, 1st Conference on Robot Learning (CoRL 2017)

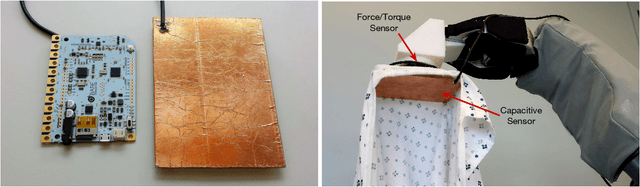

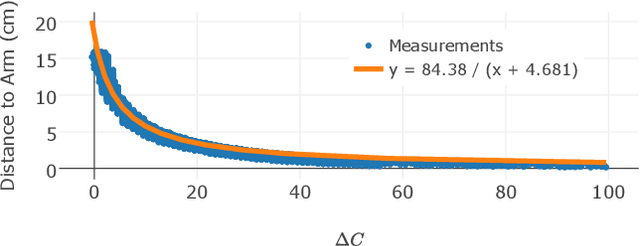

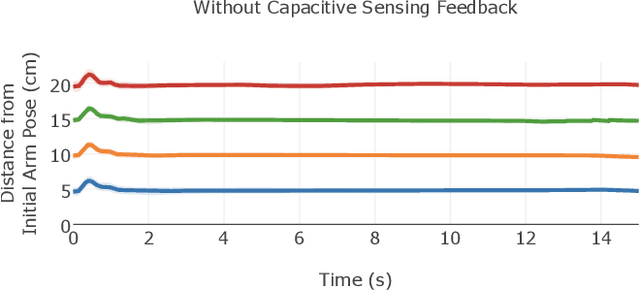

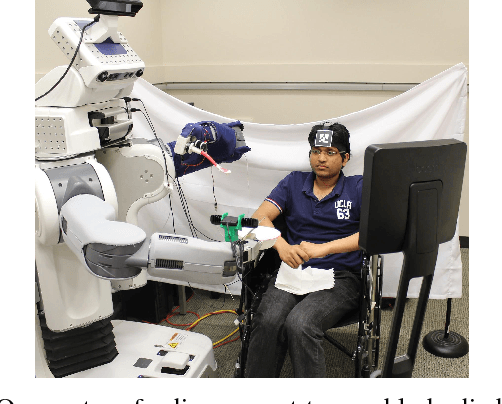

Tracking Human Pose During Robot-Assisted Dressing using Single-Axis Capacitive Proximity Sensing

Sep 22, 2017

Abstract: Dressing is a fundamental task of everyday living and robots offer an opportunity to assist people with motor impairments. While several robotic systems have explored robot-assisted dressing, few have considered how a robot can manage errors in human pose estimation, or adapt to human motion in real time during dressing assistance. In addition, estimating pose changes due to human motion can be challenging with vision-based techniques since dressing is often intended to visually occlude the body with clothing. We present a method to track a person's pose in real time using capacitive proximity sensing. This sensing approach gives direct estimates of distance with low latency, has a high signal-to-noise ratio, and has low computational requirements. Using our method, a robot can adjust for errors in the estimated pose of a person and physically follow the contours and movements of the person while providing dressing assistance. As part of an evaluation of our method, the robot successfully pulled the sleeve of a hospital gown and a cardigan onto the right arms of 10 human participants, despite arm motions and large errors in the initially estimated pose of the person's arm. We also show that a capacitive sensor is unaffected by visual occlusion of the body and can sense a person's body through fabric clothing.

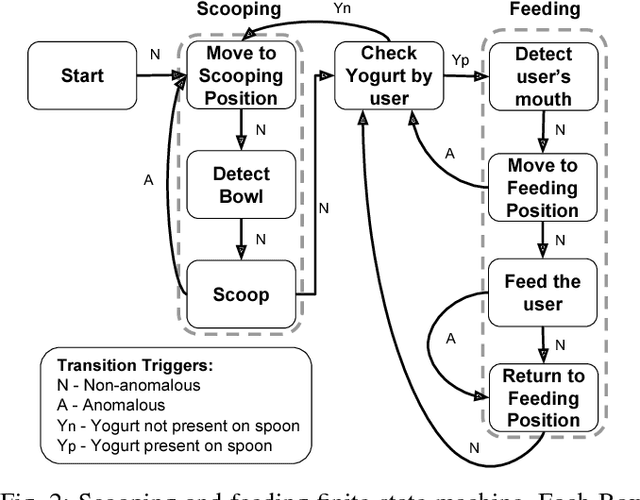

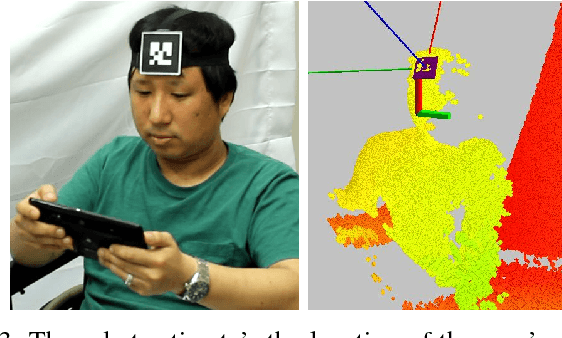

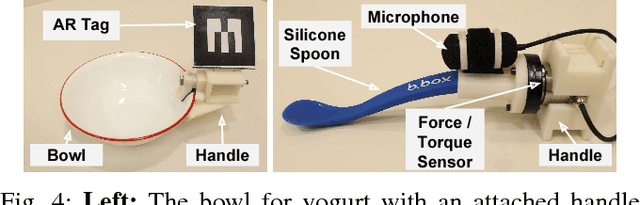

Towards Assistive Feeding with a General-Purpose Mobile Manipulator

May 25, 2016

Abstract: General-purpose mobile manipulators have the potential to serve as a versatile form of assistive technology. However, their complexity creates challenges, including the risk of being too difficult to use. We present a proof-of-concept robotic system for assistive feeding that consists of a Willow Garage PR2, a high-level web-based interface, and specialized autonomous behaviors for scooping and feeding yogurt. As a step towards use by people with disabilities, we evaluated our system with 5 able-bodied participants. All 5 successfully ate yogurt using the system and reported high rates of success for the system's autonomous behaviors. Also, Henry Evans, a person with severe quadriplegia, operated the system remotely to feed an able-bodied person. In general, people who operated the system reported that it was easy to use, including Henry. The feeding system also incorporates corrective actions designed to be triggered either autonomously or by the user. In an offline evaluation using data collected with the feeding system, a new version of our multimodal anomaly detection system outperformed prior versions.

* This short 4-page paper was accepted and presented as a poster on May 16, 2016 at the ICRA 2016 workshop on 'Human-Robot Interfaces for Enhanced Physical Interactions', organized by Arash Ajoudani, Barkan Ugurlu, Panagiotis Artemiadis, and Jun Morimoto. It was peer reviewed by one reviewer.

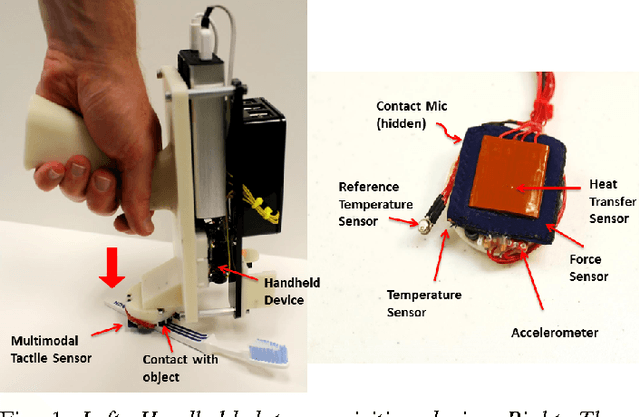

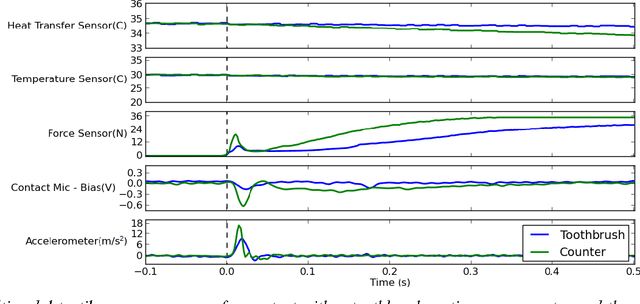

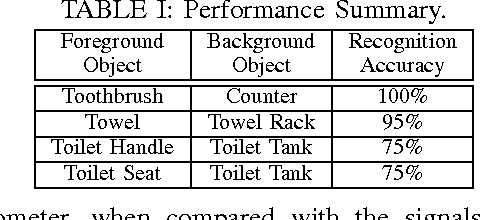

A Handheld Device for the In Situ Acquisition of Multimodal Tactile Sensing Data

Nov 12, 2015

Abstract: Multimodal tactile sensing could potentially enable robots to improve their performance at manipulation tasks by rapidly discriminating between task-relevant objects. Data-driven approaches to this tactile perception problem show promise, but there is a dearth of suitable training data. In this two-page paper, we present a portable handheld device for the efficient acquisition of multimodal tactile sensing data from objects in their natural settings, such as homes. The multimodal tactile sensor on the device integrates a fabric-based force sensor, a contact microphone, an accelerometer, temperature sensors, and a heating element. We briefly introduce our approach, describe the device, and demonstrate feasibility through an evaluation with a small data set that we captured by making contact with 7 task-relevant objects in a bathroom of a person's home.

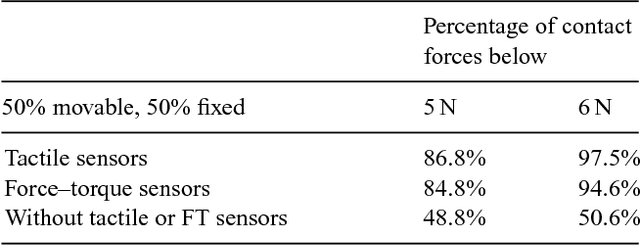

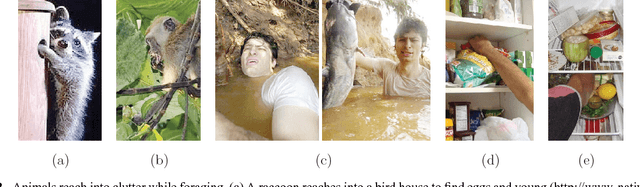

Manipulation in Clutter with Whole-Arm Tactile Sensing

Apr 23, 2013

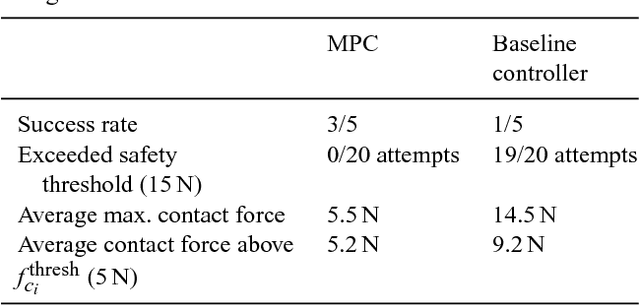

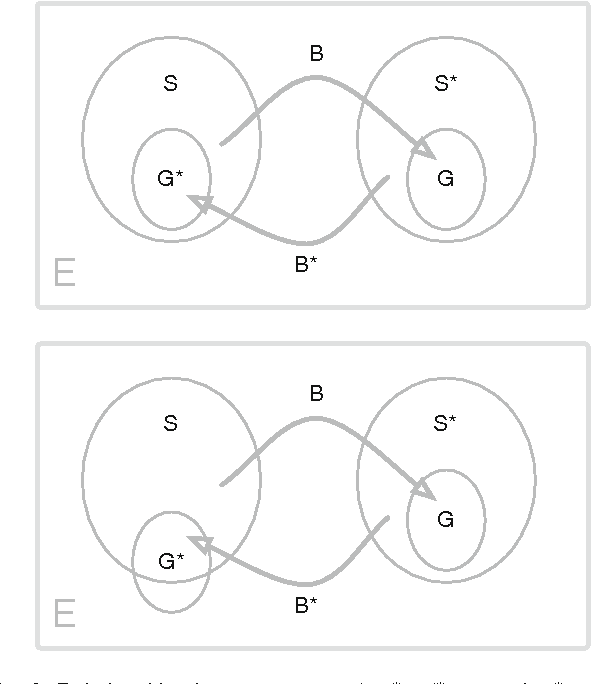

Abstract: We begin this paper by presenting our approach to robot manipulation, which emphasizes the benefits of making contact with the world across the entire manipulator. We assume that low contact forces are benign, and focus on the development of robots that can control their contact forces during goal-directed motion. Inspired by biology, we assume that the robot has low-stiffness actuation at its joints, and tactile sensing across the entire surface of its manipulator. We then describe a novel controller that exploits these assumptions. The controller only requires haptic sensing and does not need an explicit model of the environment prior to contact. It also handles multiple contacts across the surface of the manipulator. The controller uses model predictive control (MPC) with a time horizon of length one, and a linear quasi-static mechanical model that it constructs at each time step. We show that this controller enables both real and simulated robots to reach goal locations in high clutter with low contact forces. Our experiments include tests using a real robot with a novel tactile sensor array on its forearm reaching into simulated foliage and a cinder block. In our experiments, robots made contact across their entire arms while pushing aside movable objects, deforming compliant objects, and perceiving the world.

* This is the first version of a paper that we submitted to the International Journal of Robotics Research on December 31, 2011 and uploaded to our website on January 16, 2012.

Autonomously Learning to Visually Detect Where Manipulation Will Succeed

Dec 31, 2012

Abstract: Visual features can help predict if a manipulation behavior will succeed at a given location. For example, the success of a behavior that flips light switches depends on the location of the switch. Within this paper, we present methods that enable a mobile manipulator to autonomously learn a function that takes an RGB image and a registered 3D point cloud as input and returns a 3D location at which a manipulation behavior is likely to succeed. Given a pair of manipulation behaviors that can change the state of the world between two sets (e.g., light switch up and light switch down), classifiers that detect when each behavior has been successful, and an initial hint as to where one of the behaviors will be successful, the robot autonomously trains a pair of support vector machine (SVM) classifiers by trying out the behaviors at locations in the world and observing the results. When an image feature vector associated with a 3D location is provided as input to one of the SVMs, the SVM predicts if the associated manipulation behavior will be successful at the 3D location. To evaluate our approach, we performed experiments with a PR2 robot from Willow Garage in a simulated home using behaviors that flip a light switch, push a rocker-type light switch, and operate a drawer. By using active learning, the robot efficiently learned SVMs that enabled it to consistently succeed at these tasks. After training, the robot also continued to learn in order to adapt in the event of failure.