Alexander Mathis

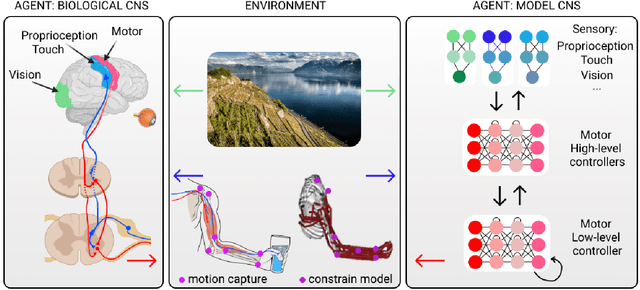

DMAP: a Distributed Morphological Attention Policy for Learning to Locomote with a Changing Body

Sep 28, 2022

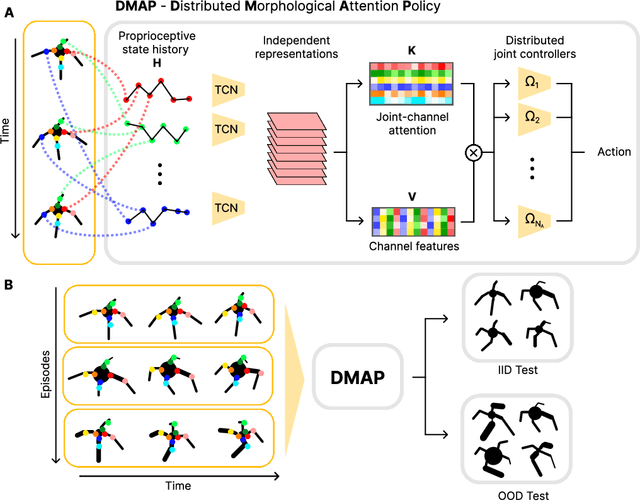

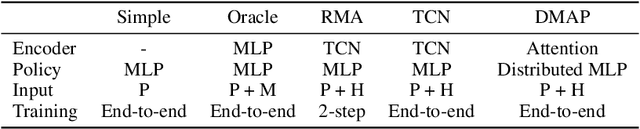

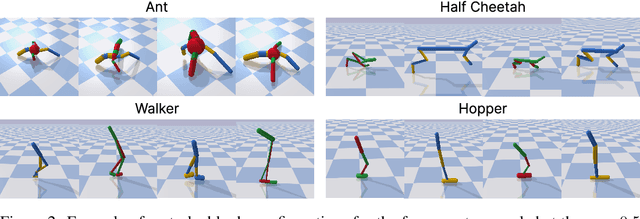

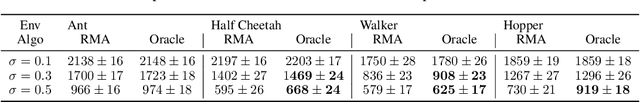

Abstract:Biological and artificial agents need to deal with constant changes in the real world. We study this problem in four classical continuous control environments, augmented with morphological perturbations. Learning to locomote when the length and the thickness of different body parts vary is challenging, as the control policy is required to adapt to the morphology to successfully balance and advance the agent. We show that a control policy based on the proprioceptive state performs poorly with highly variable body configurations, while an (oracle) agent with access to a learned encoding of the perturbation performs significantly better. We introduce DMAP, a biologically-inspired, attention-based policy network architecture. DMAP combines independent proprioceptive processing, a distributed policy with individual controllers for each joint, and an attention mechanism, to dynamically gate sensory information from different body parts to different controllers. Despite not having access to the (hidden) morphology information, DMAP can be trained end-to-end in all the considered environments, overall matching or surpassing the performance of an oracle agent. Thus DMAP, implementing principles from biological motor control, provides a strong inductive bias for learning challenging sensorimotor tasks. Overall, our work corroborates the power of these principles in challenging locomotion tasks.

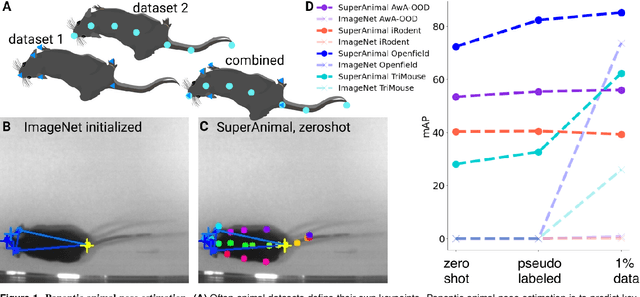

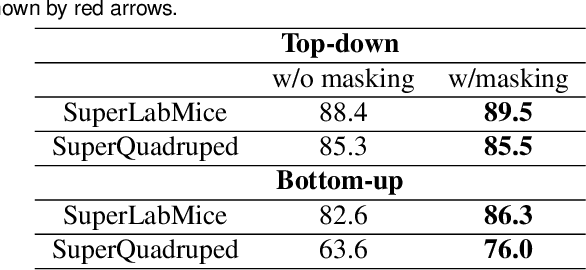

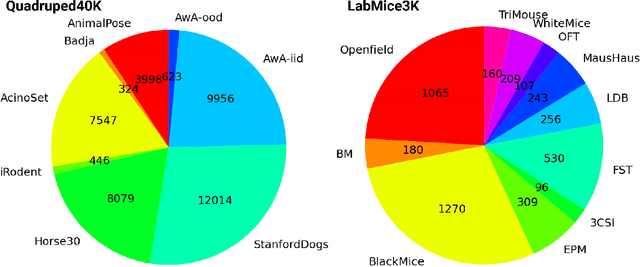

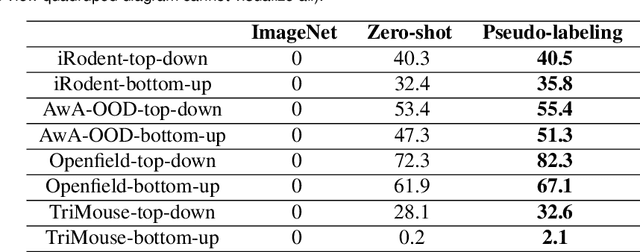

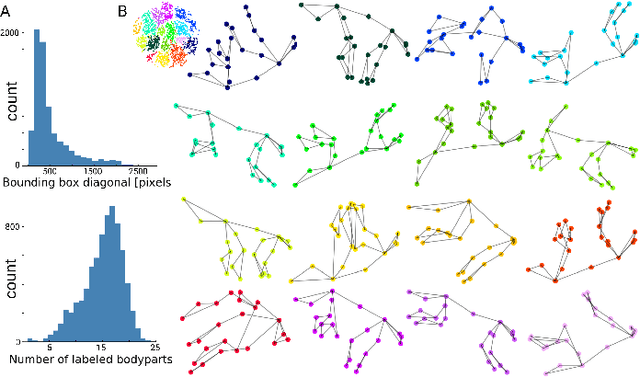

Panoptic animal pose estimators are zero-shot performers

Mar 14, 2022

Abstract:Animal pose estimation is critical in applications ranging from life science research, agriculture, to veterinary medicine. Compared to human pose estimation, the performance of animal pose estimation is limited by the size of available datasets and the generalization of a model across datasets. Typically different keypoints are labeled regardless of whether the species are the same or not, leaving animal pose datasets to have disjoint or partially overlapping keypoints. As a consequence, a model cannot be used as a plug-and-play solution across datasets. This reality motivates us to develop panoptic animal pose estimation models that are able to predict keypoints defined in all datasets. In this work we propose a simple yet effective way to merge differentially labeled datasets to obtain the largest quadruped and lab mouse pose dataset. Using a gradient masking technique, so called SuperAnimal-models are able to predict keypoints that are distributed across datasets and exhibit strong zero-shot performance. The models can be further improved by (pseudo) labeled fine-tuning. These models outperform ImageNet-initialized models.

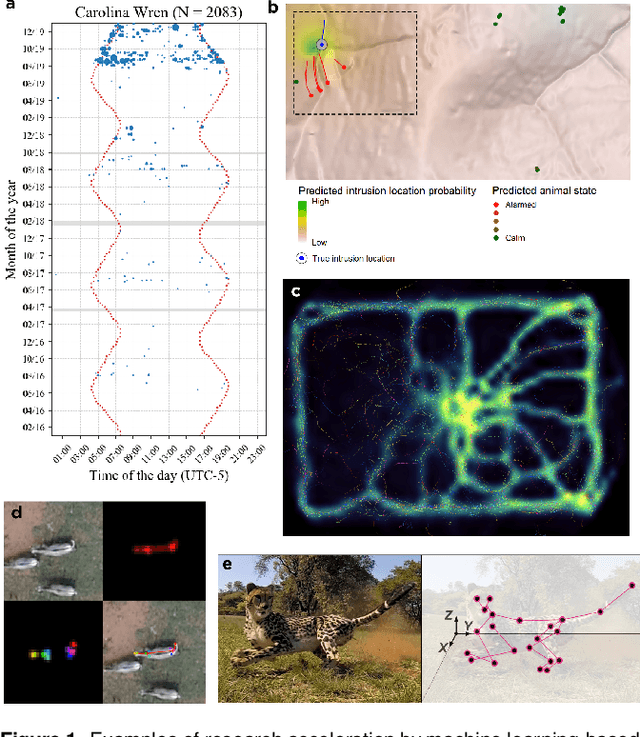

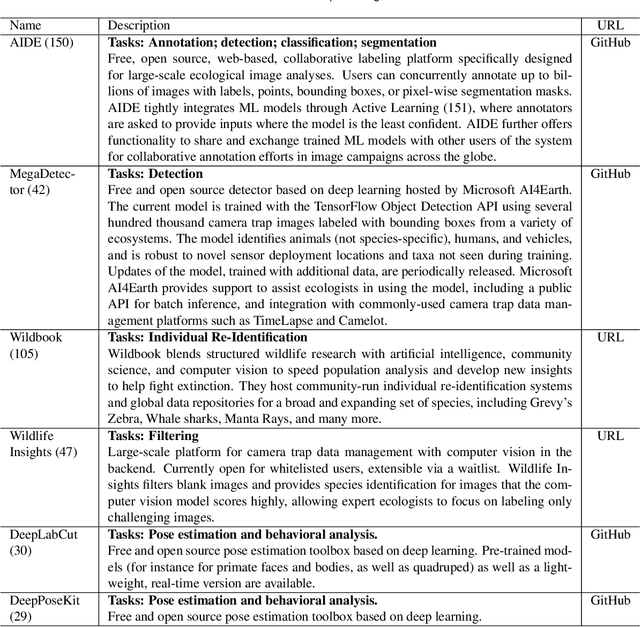

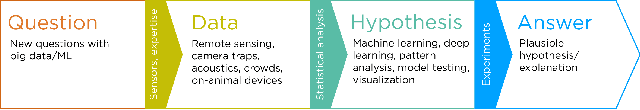

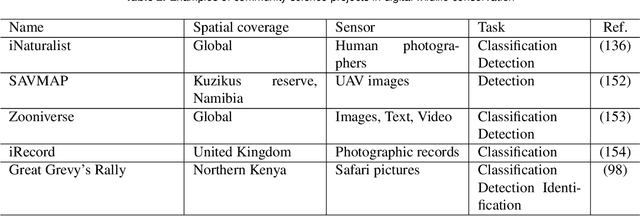

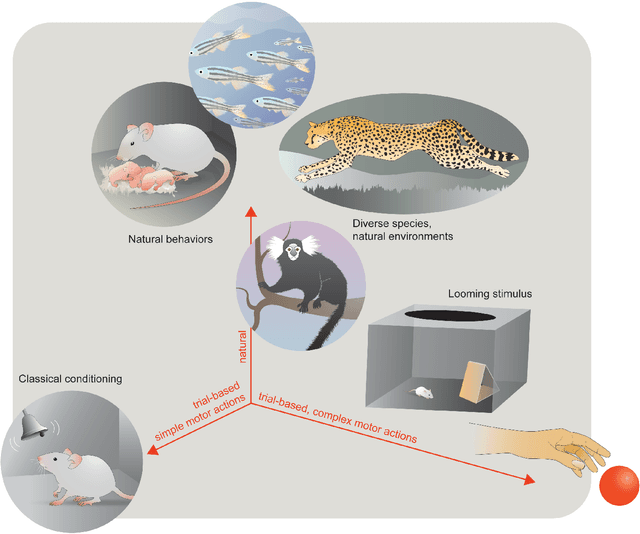

Seeing biodiversity: perspectives in machine learning for wildlife conservation

Oct 25, 2021

Abstract:Data acquisition in animal ecology is rapidly accelerating due to inexpensive and accessible sensors such as smartphones, drones, satellites, audio recorders and bio-logging devices. These new technologies and the data they generate hold great potential for large-scale environmental monitoring and understanding, but are limited by current data processing approaches which are inefficient in how they ingest, digest, and distill data into relevant information. We argue that machine learning, and especially deep learning approaches, can meet this analytic challenge to enhance our understanding, monitoring capacity, and conservation of wildlife species. Incorporating machine learning into ecological workflows could improve inputs for population and behavior models and eventually lead to integrated hybrid modeling tools, with ecological models acting as constraints for machine learning models and the latter providing data-supported insights. In essence, by combining new machine learning approaches with ecological domain knowledge, animal ecologists can capitalize on the abundance of data generated by modern sensor technologies in order to reliably estimate population abundances, study animal behavior and mitigate human/wildlife conflicts. To succeed, this approach will require close collaboration and cross-disciplinary education between the computer science and animal ecology communities in order to ensure the quality of machine learning approaches and train a new generation of data scientists in ecology and conservation.

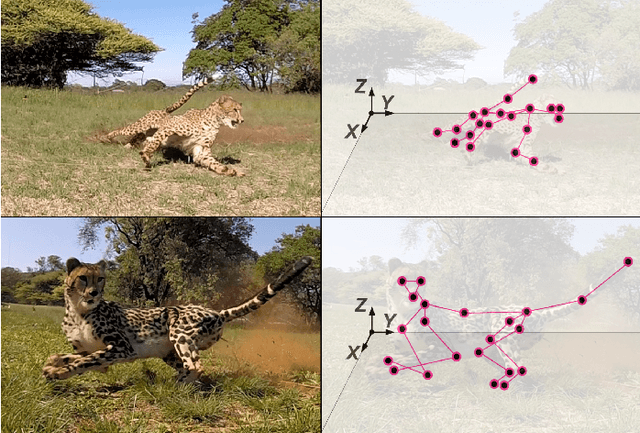

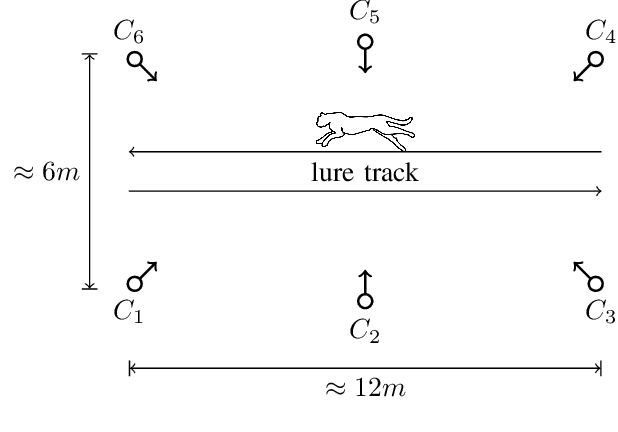

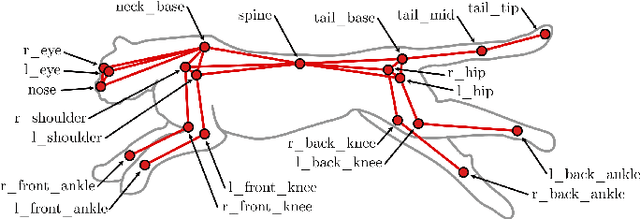

AcinoSet: A 3D Pose Estimation Dataset and Baseline Models for Cheetahs in the Wild

Mar 24, 2021

Abstract:Animals are capable of extreme agility, yet understanding their complex dynamics, which have ecological, biomechanical and evolutionary implications, remains challenging. Being able to study this incredible agility will be critical for the development of next-generation autonomous legged robots. In particular, the cheetah (acinonyx jubatus) is supremely fast and maneuverable, yet quantifying its whole-body 3D kinematic data during locomotion in the wild remains a challenge, even with new deep learning-based methods. In this work we present an extensive dataset of free-running cheetahs in the wild, called AcinoSet, that contains 119,490 frames of multi-view synchronized high-speed video footage, camera calibration files and 7,588 human-annotated frames. We utilize markerless animal pose estimation to provide 2D keypoints. Then, we use three methods that serve as strong baselines for 3D pose estimation tool development: traditional sparse bundle adjustment, an Extended Kalman Filter, and a trajectory optimization-based method we call Full Trajectory Estimation. The resulting 3D trajectories, human-checked 3D ground truth, and an interactive tool to inspect the data is also provided. We believe this dataset will be useful for a diverse range of fields such as ecology, neuroscience, robotics, biomechanics as well as computer vision.

End-to-End Trainable Multi-Instance Pose Estimation with Transformers

Mar 22, 2021

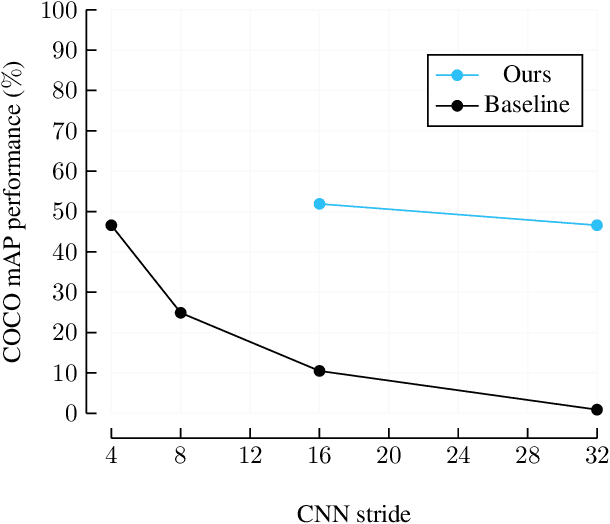

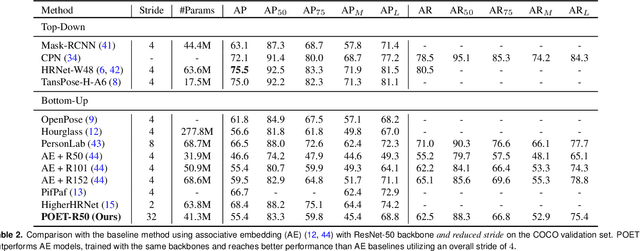

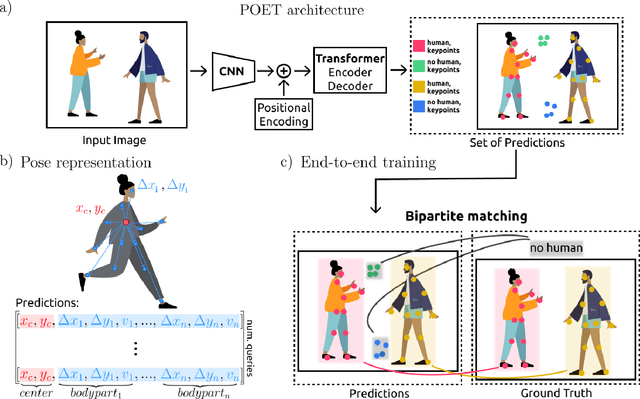

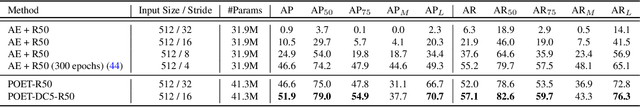

Abstract:We propose a new end-to-end trainable approach for multi-instance pose estimation by combining a convolutional neural network with a transformer. We cast multi-instance pose estimation from images as a direct set prediction problem. Inspired by recent work on end-to-end trainable object detection with transformers, we use a transformer encoder-decoder architecture together with a bipartite matching scheme to directly regress the pose of all individuals in a given image. Our model, called POse Estimation Transformer (POET), is trained using a novel set-based global loss that consists of a keypoint loss, a keypoint visibility loss, a center loss and a class loss. POET reasons about the relations between detected humans and the full image context to directly predict the poses in parallel. We show that POET can achieve high accuracy on the challenging COCO keypoint detection task. To the best of our knowledge, this model is the first end-to-end trainable multi-instance human pose estimation method.

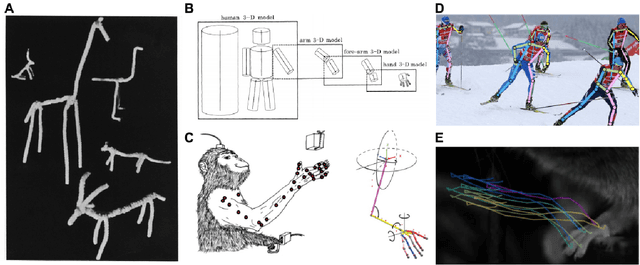

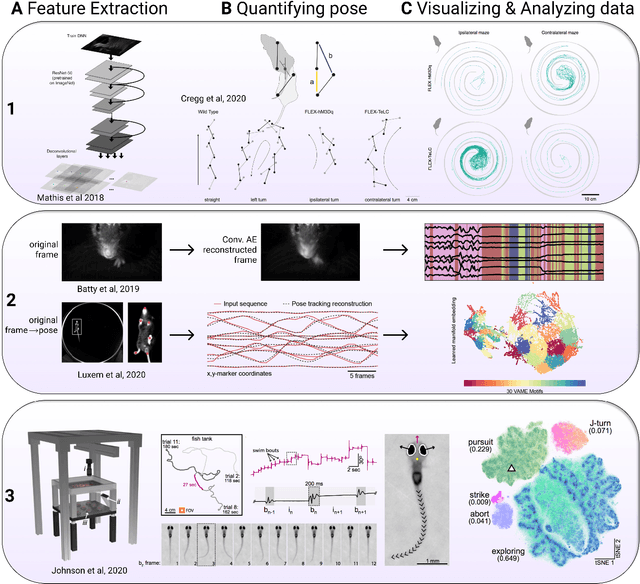

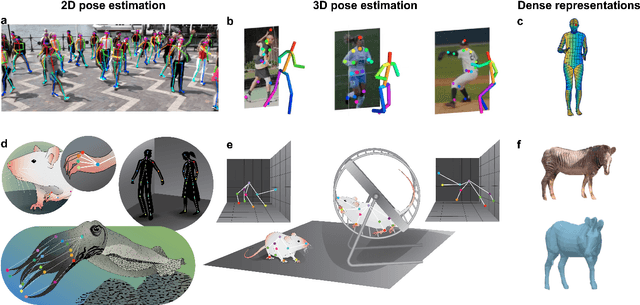

Measuring and modeling the motor system with machine learning

Mar 22, 2021

Abstract:The utility of machine learning in understanding the motor system is promising a revolution in how to collect, measure, and analyze data. The field of movement science already elegantly incorporates theory and engineering principles to guide experimental work, and in this review we discuss the growing use of machine learning: from pose estimation, kinematic analyses, dimensionality reduction, and closed-loop feedback, to its use in understanding neural correlates and untangling sensorimotor systems. We also give our perspective on new avenues where markerless motion capture combined with biomechanical modeling and neural networks could be a new platform for hypothesis-driven research.

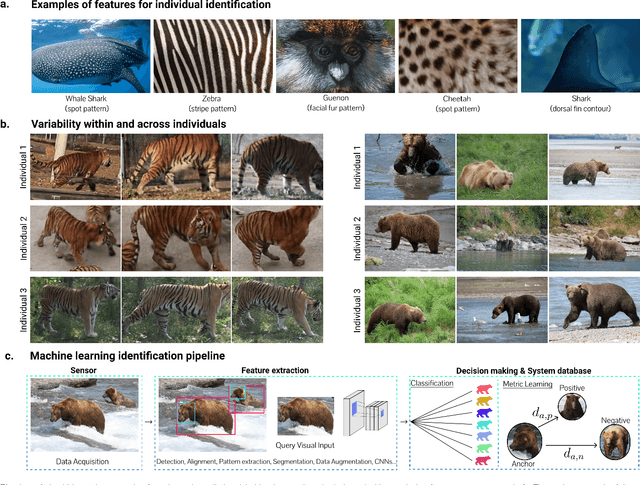

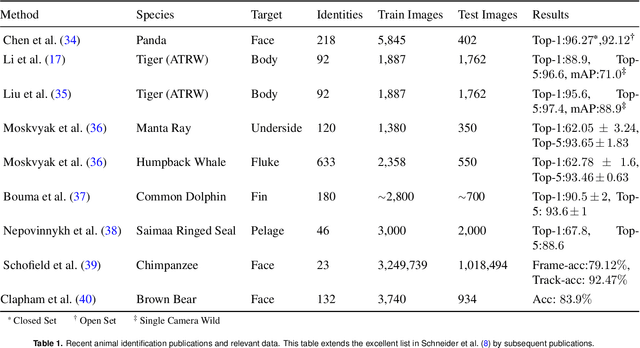

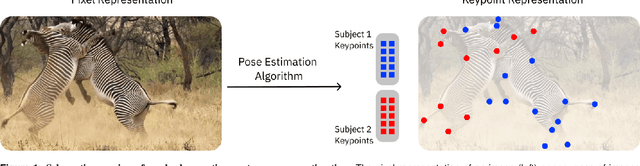

Perspectives on individual animal identification from biology and computer vision

Feb 28, 2021

Abstract:Identifying individual animals is crucial for many biological investigations. In response to some of the limitations of current identification methods, new automated computer vision approaches have emerged with strong performance. Here, we review current advances of computer vision identification techniques to provide both computer scientists and biologists with an overview of the available tools and discuss their applications. We conclude by offering recommendations for starting an animal identification project, illustrate current limitations and propose how they might be addressed in the future.

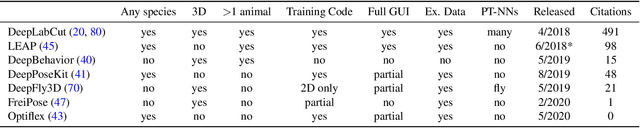

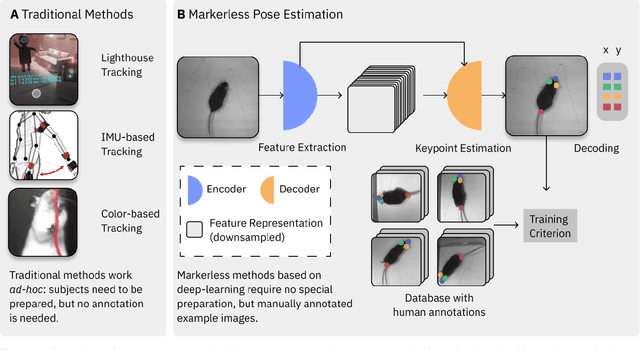

A Primer on Motion Capture with Deep Learning: Principles, Pitfalls and Perspectives

Sep 02, 2020

Abstract:Extracting behavioral measurements non-invasively from video is stymied by the fact that it is a hard computational problem. Recent advances in deep learning have tremendously advanced predicting posture from videos directly, which quickly impacted neuroscience and biology more broadly. In this primer we review the budding field of motion capture with deep learning. In particular, we will discuss the principles of those novel algorithms, highlight their potential as well as pitfalls for experimentalists, and provide a glimpse into the future.

Deep learning tools for the measurement of animal behavior in neuroscience

Oct 18, 2019

Abstract:Recent advances in computer vision have made accurate, fast and robust measurement of animal behavior a reality. In the past years powerful tools specifically designed to aid the measurement of behavior have come to fruition. Here we discuss how capturing the postures of animals - pose estimation - has been rapidly advancing with new deep learning methods. While challenges still remain, we envision that the fast-paced development of new deep learning tools will rapidly change the landscape of realizable real-world neuroscience.

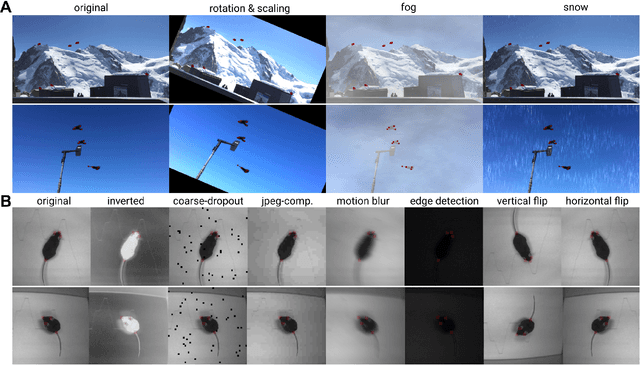

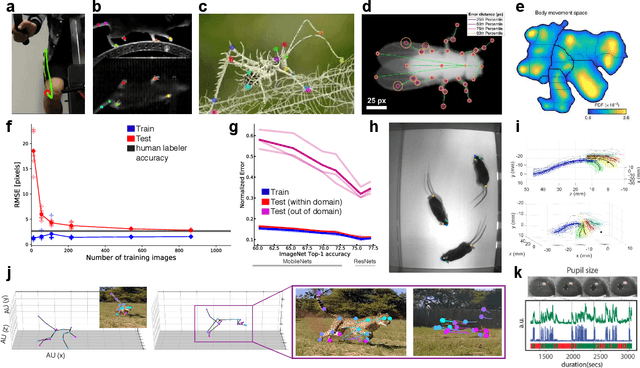

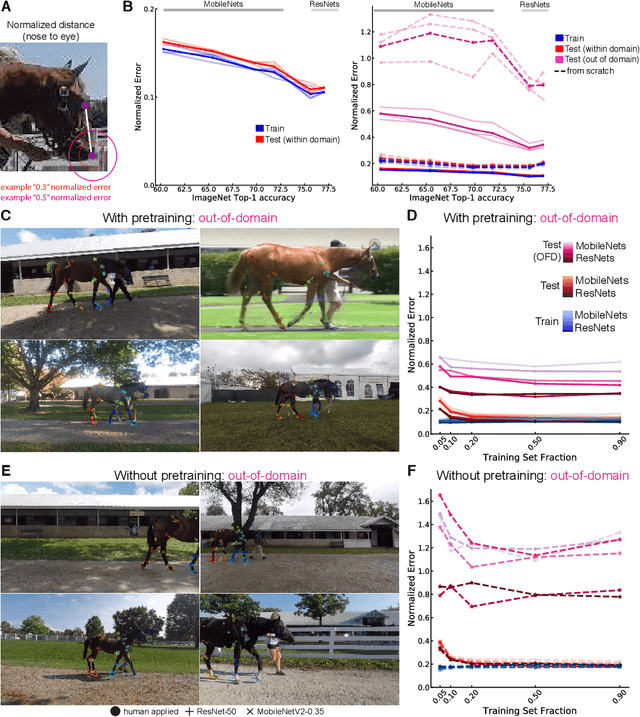

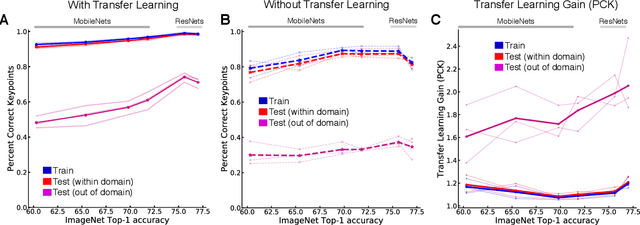

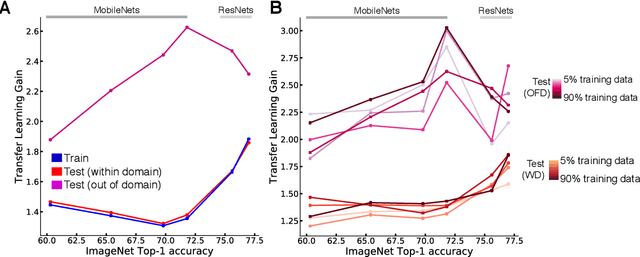

Pretraining boosts out-of-domain robustness for pose estimation

Sep 24, 2019

Abstract:Deep neural networks are highly effective tools for human and animal pose estimation. However, robustness to out-of-domain data remains a challenge. Here, we probe the transfer and generalization ability for pose estimation with two architecture classes (MobileNetV2s and ResNets) pretrained on ImageNet. We generated a novel dataset of 30 horses that allowed for both within-domain and out-of-domain (unseen horse) testing. We find that pretraining on ImageNet strongly improves out-of-domain performance. Moreover, we show that for both pretrained and networks trained from scratch, better ImageNet-performing architectures perform better for pose estimation, with a substantial improvement on out-of-domain data when pretrained. Collectively, our results demonstrate that transfer learning is particularly beneficial for out-of-domain robustness.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge