"Time": models, code, and papers

Top 10 BraTS 2020 challenge solution: Brain tumor segmentation with self-ensembled, deeply-supervised 3D-Unet like neural networks

Oct 30, 2020

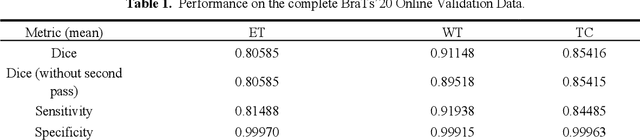

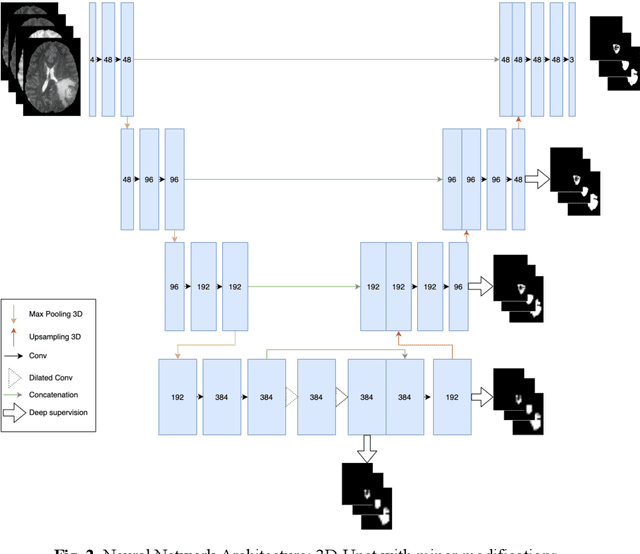

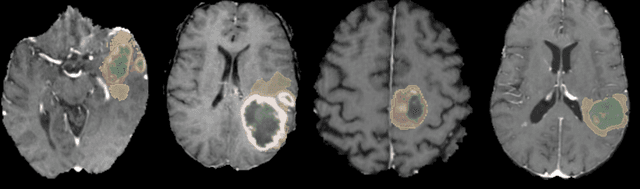

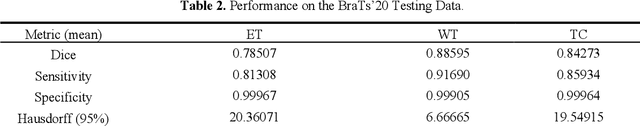

Brain tumor segmentation is a critical task for patient's disease management. To this end, we trained multiple U-net like neural networks, mainly with deep supervision and stochastic weight averaging, on the Multimodal Brain Tumor Segmentation Challenge (BraTS) 2020 training dataset, in a cross-validated fashion. Final brain tumor segmentations were produced by first averaging independently two sets of models, and then custom merging the labelmaps to account for individual performance of each set. Our performance on the online validation dataset with test time augmentation were as follows: Dice of 0.81, 0.91 and 0.85; Hausdorff (95%) of 20.6, 4,3, 5.7 mm for the enhancing tumor, whole tumor and tumor core, respectively. Similarly, our ensemble achieved a Dice of 0.79, 0.89 and 0.84, as well as Hausdorff (95%) of 20.4, 6.7 and 19.5mm on the final test dataset. More complicated training schemes and neural network architectures were investigated, without significant performance gain, at the cost of greatly increased training time. While relatively straightforward, our approach yielded good and balanced performance for each tumor subregions. Our solution is open sourced at https://github.com/lescientifik/xxxxx.

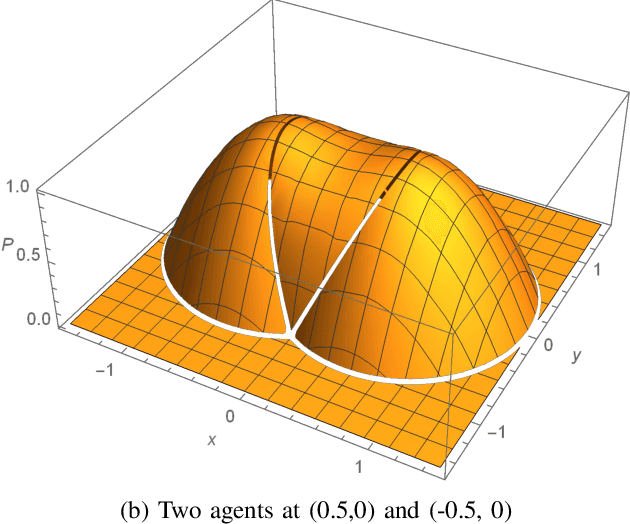

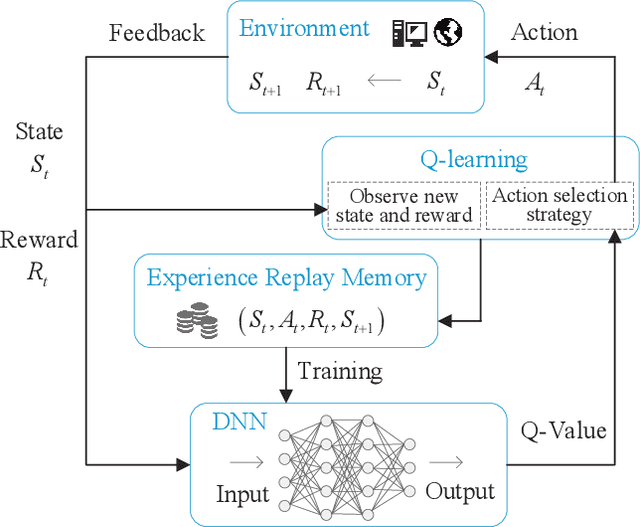

Path Design and Resource Management for NOMA enhanced Indoor Intelligent Robots

Nov 26, 2020

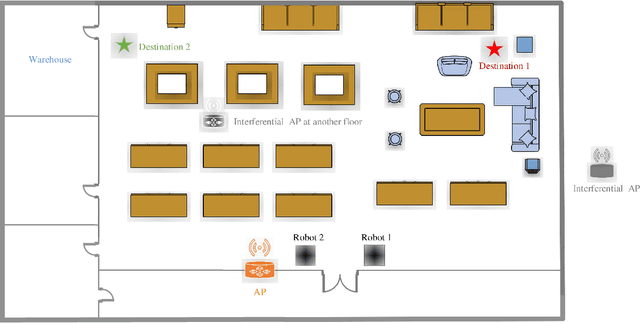

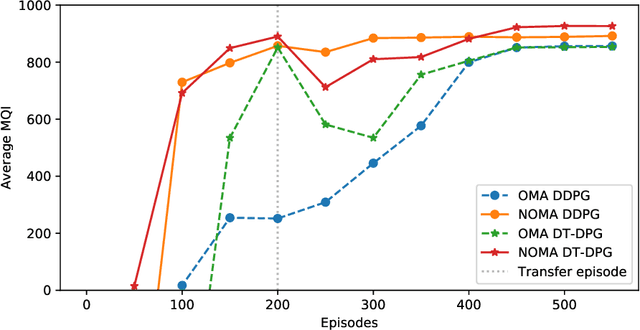

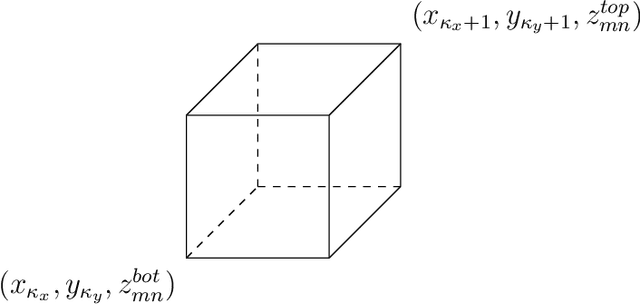

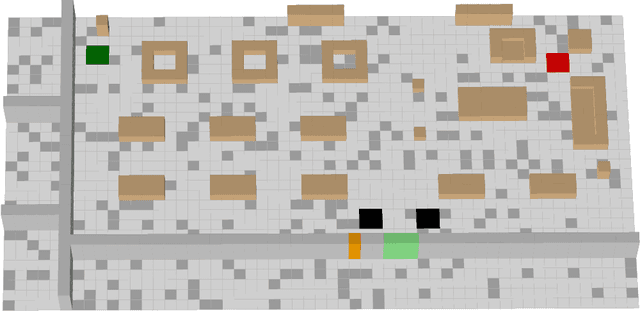

A communication enabled indoor intelligent robots (IRs) service framework is proposed, where non-orthogonal multiple access (NOMA) technique is adopted to enable highly reliable communications. In cooperation with the ultramodern indoor channel model recently proposed by the International Telecommunication Union (ITU), the Lego modeling method is proposed, which can deterministically describe the indoor layout and channel state in order to construct the radio map. The investigated radio map is invoked as a virtual environment to train the reinforcement learning agent, which can save training time and hardware costs. Build on the proposed communication model, motions of IRs who need to reach designated mission destinations and their corresponding down-link power allocation policy are jointly optimized to maximize the mission efficiency and communication reliability of IRs. In an effort to solve this optimization problem, a novel reinforcement learning approach named deep transfer deterministic policy gradient (DT-DPG) algorithm is proposed. Our simulation results demonstrate that 1) With the aid of NOMA techniques, the communication reliability of IRs is effectively improved; 2) The radio map is qualified to be a virtual training environment, and its statistical channel state information improves training efficiency by about 30%; 3) The proposed DT-DPG algorithm is superior to the conventional deep deterministic policy gradient (DDPG) algorithm in terms of optimization performance, training time, and anti-local optimum ability.

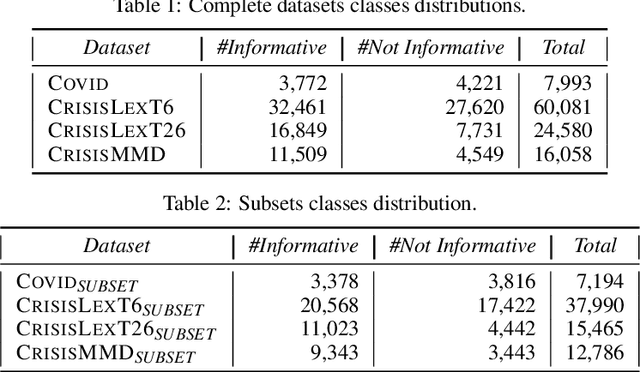

On Informative Tweet Identification For Tracking Mass Events

Jan 14, 2021

Twitter has been heavily used as an important channel for communicating and discussing about events in real-time. In such major events, many uninformative tweets are also published rapidly by many users, making it hard to follow the events. In this paper, we address this problem by investigating machine learning methods for automatically identifying informative tweets among those that are relevant to a target event. We examine both traditional approaches with a rich set of handcrafted features and state of the art approaches with automatically learned features. We further propose a hybrid model that leverages both the handcrafted features and the automatically learned ones. Our experiments on several large datasets of real-world events show that the latter approaches significantly outperform the former and our proposed model performs the best, suggesting highly effective mechanisms for tracking mass events.

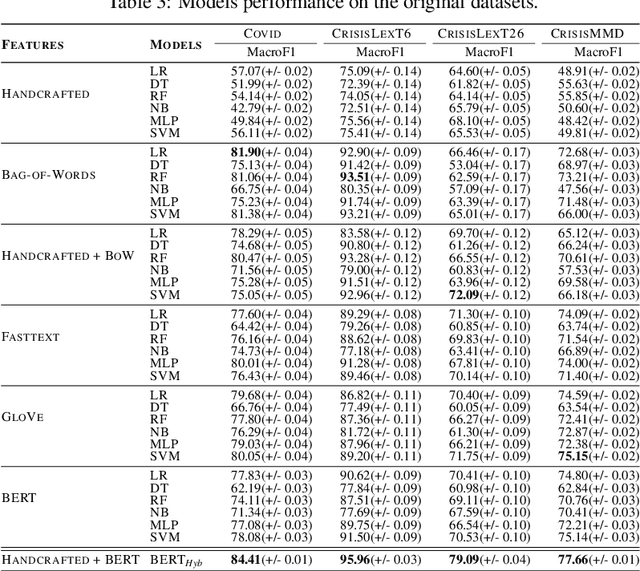

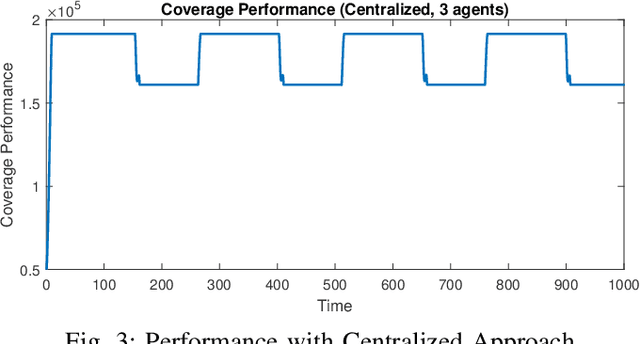

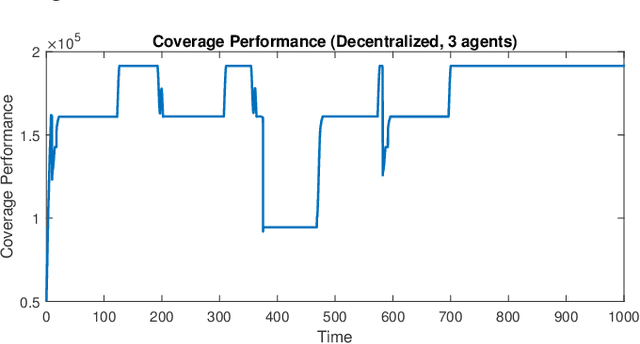

Comparison of Centralized and Decentralized Approaches in Cooperative Coverage Problems with Energy-Constrained Agents

Aug 26, 2020

A multi-agent coverage problem is considered with energy-constrained agents. The objective of this paper is to compare the coverage performance between centralized and decentralized approaches. To this end, a near-optimal centralized coverage control method is developed under energy depletion and repletion constraints. The optimal coverage formation corresponds to the locations of agents where the coverage performance is maximized. The optimal charging formation corresponds to the locations of agents with one agent fixed at the charging station and the remaining agents maximizing the coverage performance. We control the behavior of this cooperative multi-agent system by switching between the optimal coverage formation and the optimal charging formation. Finally, the optimal dwell times at coverage locations, charging time, and agent trajectories are determined so as to maximize coverage over a given time interval. In particular, our controller guarantees that at any time there is at most one agent leaving the team for energy repletion.

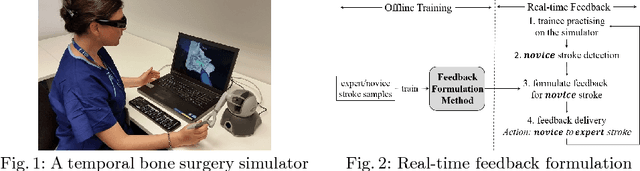

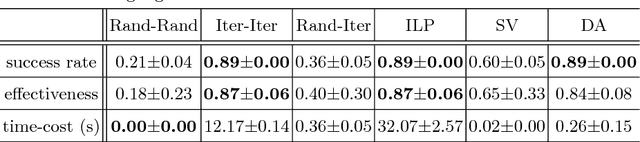

Providing Effective Real-time Feedback in Simulation-based Surgical Training

Jun 30, 2017

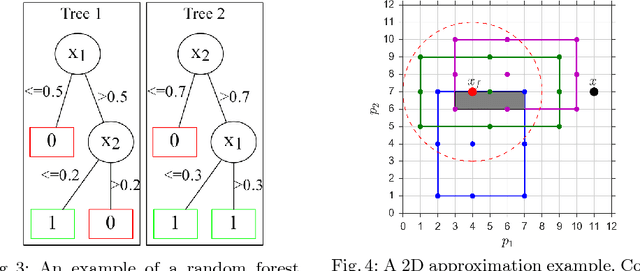

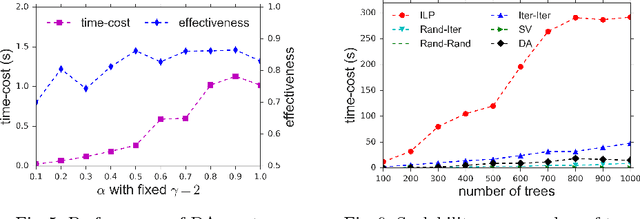

Virtual reality simulation is becoming popular as a training platform in surgical education. However, one important aspect of simulation-based surgical training that has not received much attention is the provision of automated real-time performance feedback to support the learning process. Performance feedback is actionable advice that improves novice behaviour. In simulation, automated feedback is typically extracted from prediction models trained using data mining techniques. Existing techniques suffer from either low effectiveness or low efficiency resulting in their inability to be used in real-time. In this paper, we propose a random forest based method that finds a balance between effectiveness and efficiency. Experimental results in a temporal bone surgery simulation show that the proposed method is able to extract highly effective feedback at a high level of efficiency.

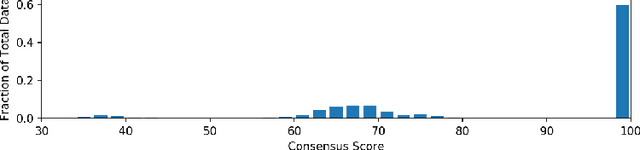

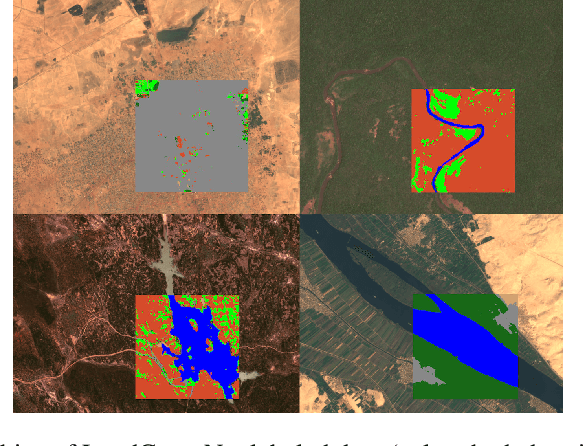

LandCoverNet: A global benchmark land cover classification training dataset

Dec 05, 2020

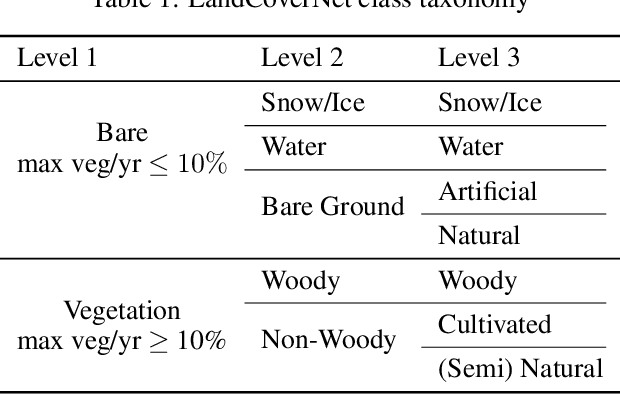

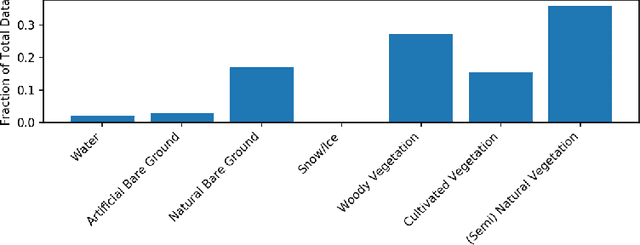

Regularly updated and accurate land cover maps are essential for monitoring 14 of the 17 Sustainable Development Goals. Multispectral satellite imagery provide high-quality and valuable information at global scale that can be used to develop land cover classification models. However, such a global application requires a geographically diverse training dataset. Here, we present LandCoverNet, a global training dataset for land cover classification based on Sentinel-2 observations at 10m spatial resolution. Land cover class labels are defined based on annual time-series of Sentinel-2, and verified by consensus among three human annotators.

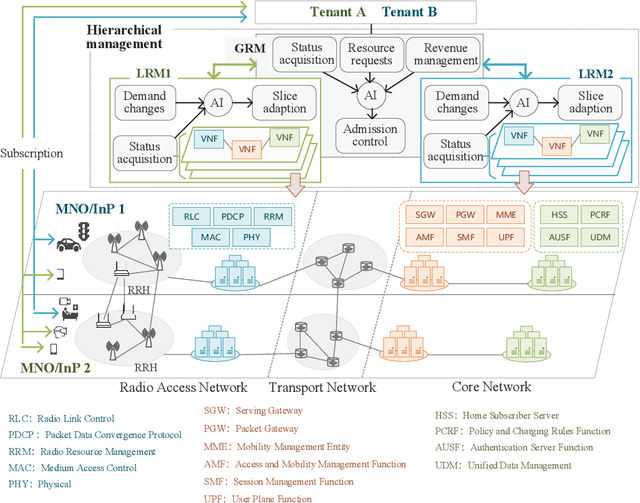

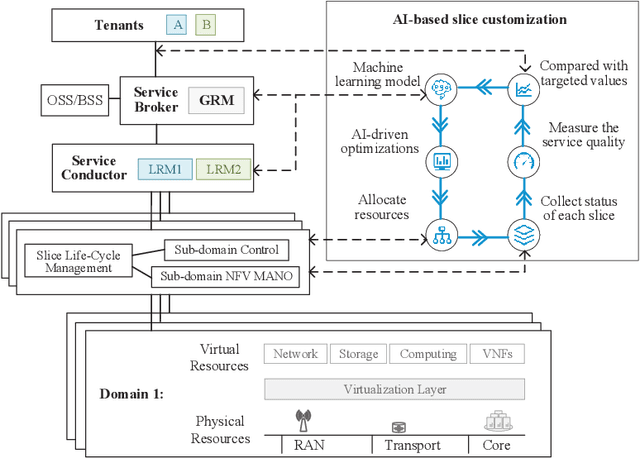

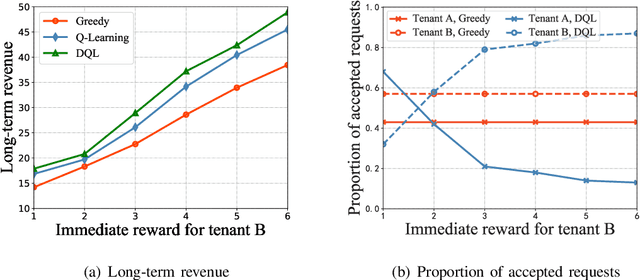

Customized Slicing for 6G: Enforcing Artificial Intelligence on Resource Management

Feb 21, 2021

Next generation wireless networks are expected to support diverse vertical industries and offer countless emerging use cases. To satisfy stringent requirements of diversified services, network slicing is developed, which enables service-oriented resource allocation by tailoring the infrastructure network into multiple logical networks. However, there are still some challenges in cross-domain multi-dimensional resource management for end-to-end (E2E) slices under the dynamic and uncertain environment. Trading off the revenue and cost of resource allocation while guaranteeing service quality is significant to tenants. Therefore, this article introduces a hierarchical resource management framework, utilizing deep reinforcement learning in admission control of resource requests from different tenants and resource adjustment within admitted slices for each tenant. Particularly, we first discuss the challenges in customized resource management of 6G. Second, the motivation and background are presented to explain why artificial intelligence (AI) is applied in resource customization of multi-tenant slicing. Third, E2E resource management is decomposed into two problems, multi-dimensional resource allocation decision based on slice-level feedback and real-time slice adaption aimed at avoiding service quality degradation. Simulation results demonstrate the effectiveness of AI-based customized slicing. Finally, several significant challenges that need to be addressed in practical implementation are investigated.

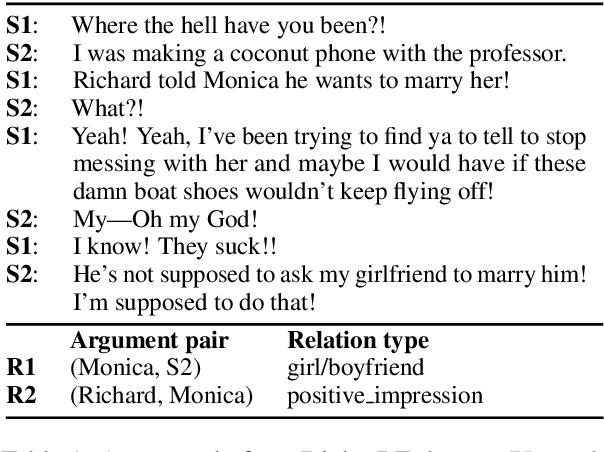

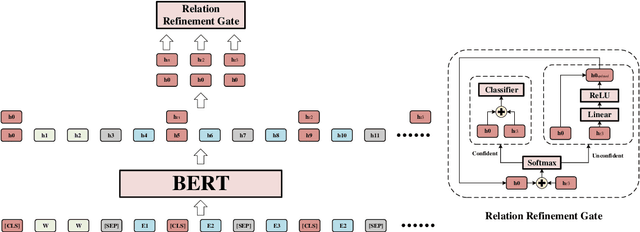

An Embarrassingly Simple Model for Dialogue Relation Extraction

Dec 27, 2020

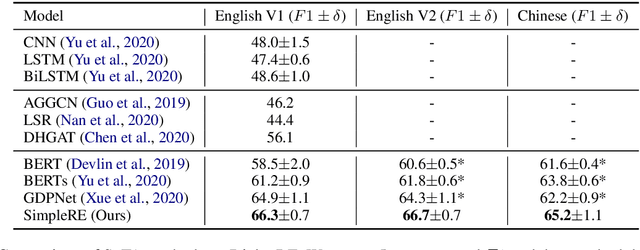

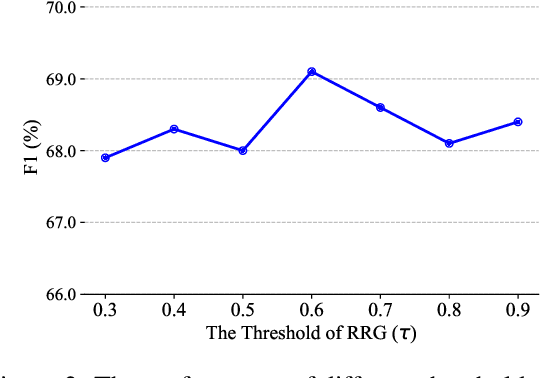

Dialogue relation extraction (RE) is to predict the relation type of two entities mentioned in a dialogue. In this paper, we model Dialogue RE as a multi-label classification task and propose a simple yet effective model named SimpleRE. SimpleRE captures the interrelations among multiple relations in a dialogue through a novel input format, BERT Relation Token Sequence (BRS). In BRS, multiple [CLS] tokens are used to capture different relations between different pairs of entities. A Relation Refinement Gate (RRG) is designed to extract relation-specific semantic representation adaptively. Experiments on DialogRE show that SimpleRE achieves the best performance with much shorter training time. SimpleRE outperforms all direct baselines on sentence-level RE without using external resources.

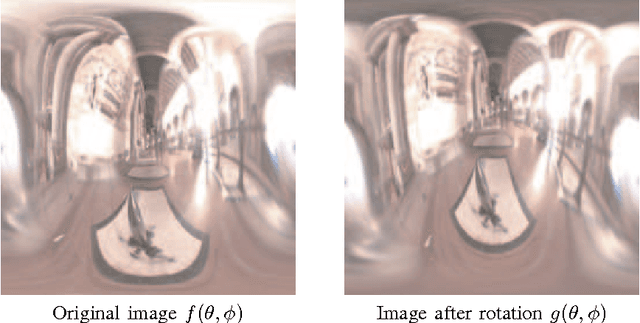

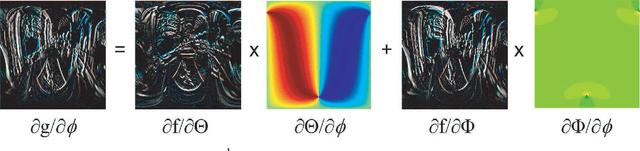

Non-Linear Phase-Shifting of Haar Wavelets for Run-Time All-Frequency Lighting

May 20, 2017

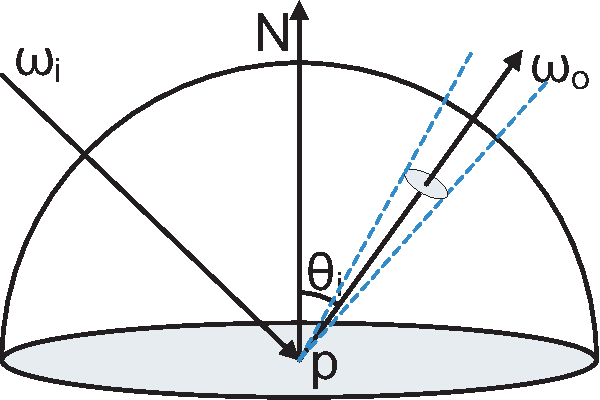

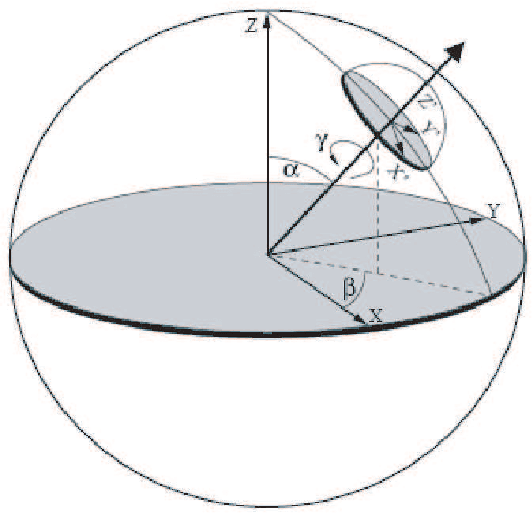

This paper focuses on real-time all-frequency image-based rendering using an innovative solution for run-time computation of light transport. The approach is based on new results derived for non-linear phase shifting in the Haar wavelet domain. Although image-based methods for real-time rendering of dynamic glossy objects have been proposed, they do not truly scale to all possible frequencies and high sampling rates without trading storage, glossiness, or computational time, while varying both lighting and viewpoint. This is due to the fact that current approaches are limited to precomputed radiance transfer (PRT), which is prohibitively expensive in terms of memory requirements and real-time rendering when both varying light and viewpoint changes are required together with high sampling rates for high frequency lighting of glossy material. On the other hand, current methods cannot handle object rotation, which is one of the paramount issues for all PRT methods using wavelets. This latter problem arises because the precomputed data are defined in a global coordinate system and encoded in the wavelet domain, while the object is rotated in a local coordinate system. At the root of all the above problems is the lack of efficient run-time solution to the nontrivial problem of rotating wavelets (a non-linear phase-shift), which we solve in this paper.

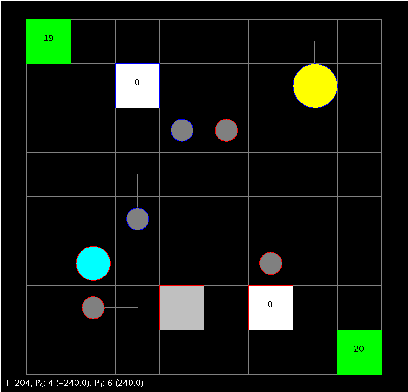

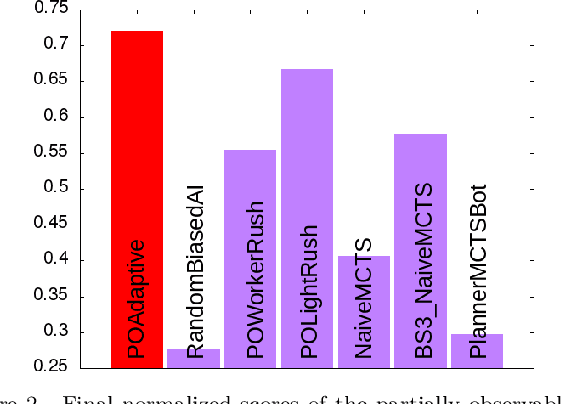

Constrained optimization under uncertainty for decision-making problems: Application to Real-Time Strategy games

Jan 07, 2019

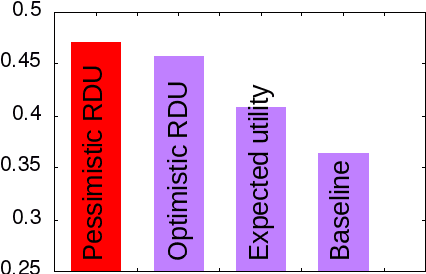

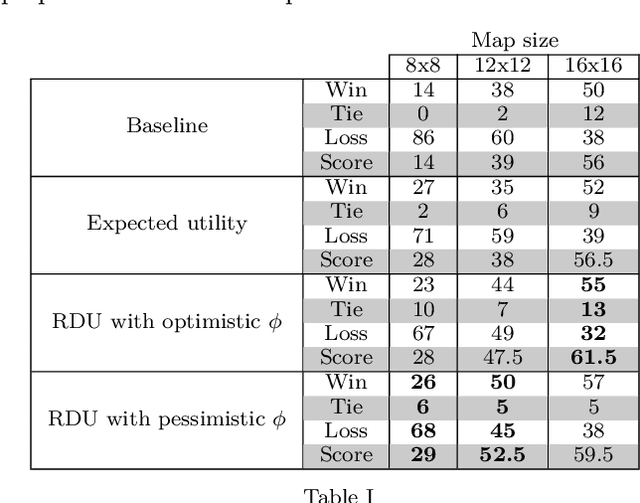

Decision-making problems can be modeled as combinatorial optimization problems with Constraint Programming formalisms such as Constrained Optimization Problems. However, few Constraint Programming formalisms can deal with both optimization and uncertainty at the same time, and none of them are convenient to model problems we tackle in this paper. Here, we propose a way to deal with combinatorial optimization problems under uncertainty within the classical Constrained Optimization Problems formalism by injecting the Rank Dependent Utility from decision theory. We also propose a proof of concept of our method to show it is implementable and can solve concrete decision-making problems using a regular constraint solver, and propose a bot that won the partially observable track of the 2018 {\mu}RTS AI competition. Our result shows it is possible to handle uncertainty with regular Constraint Programming solvers, without having to define a new formalism neither to develop dedicated solvers. This brings new perspective to tackle uncertainty in Constraint Programming.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge