"Time": models, code, and papers

Complexity-based Financial Stress Evaluation

Dec 05, 2022

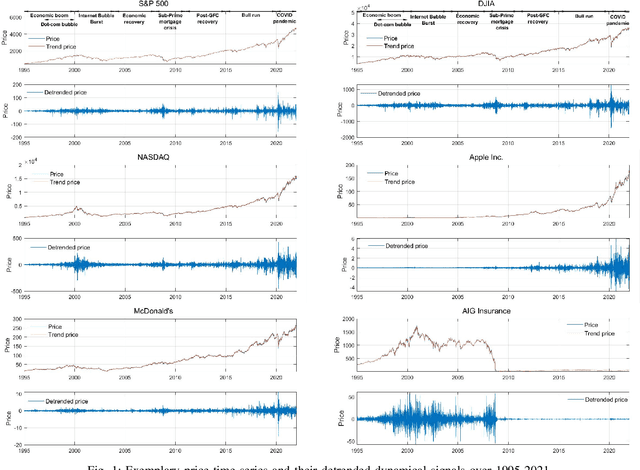

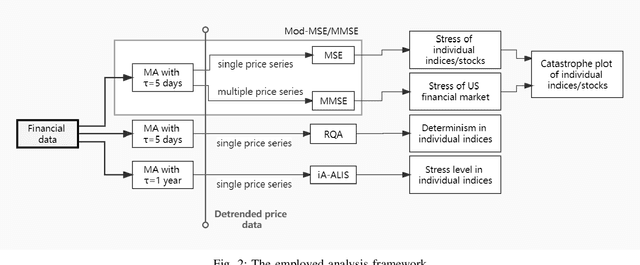

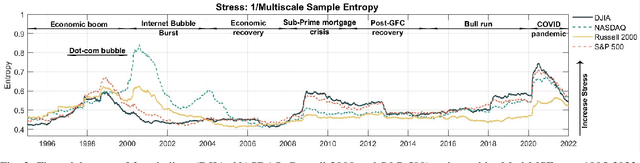

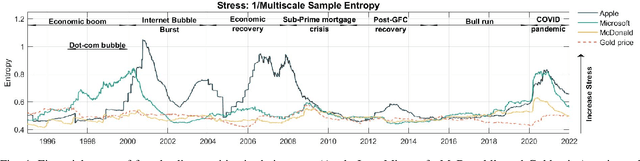

Financial markets typically exhibit dynamically complex properties as they undergo continuous interactions with economic and environmental factors. The Efficient Market Hypothesis indicates a rich difference in the structural complexity of security prices between normal (stable markets) and abnormal (financial crises) situations. Considering the analogy between market undulation of price time series and physical stress of bio-signals, we investigate whether stress indices in bio-systems can be adopted and modified so as to measure 'standard stress' in financial markets. This is achieved by employing structural complexity analysis, based on variants of univariate and multivariate sample entropy, to estimate the stress level of both financial markets on the whole and the performance of the individual financial indices. Further, we propose a novel graphical framework to establish the sensitivity of individual assets and stock markets to financial crises. This is achieved through Catastrophe Theory and entropy-based stress evaluations indicating the unique performance of each index/individual stock in response to different crises. Four major indices and four individual equities with gold prices are considered over the past 32 years from 1991-2021. Our findings based on nonlinear analyses and the proposed framework support the Efficient Market Hypothesis and reveal the relations among economic indices and within each price time series.
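The abstract above relies on sample entropy as its measure of structural complexity. As a rough illustration only, the following is a minimal univariate sketch of the standard SampEn(m, r) definition. It is not the authors' implementation: the paper uses univariate and multivariate variants, and the function name, the default `m`, and the absolute tolerance `r` are assumptions made for this example.

```python
import math


def sample_entropy(series, m=2, r=0.2):
    """Sample entropy SampEn(m, r) using the Chebyshev distance.

    `r` is an absolute tolerance here (an assumption for this sketch);
    in practice it is often set relative to the series' standard deviation.
    """
    n = len(series)

    def count_matches(length):
        # Use the same n - m starting points for both template lengths
        # so that the two counts are comparable.
        templates = [series[i:i + length] for i in range(n - m)]
        count = 0
        for i in range(len(templates)):
            for j in range(i + 1, len(templates)):
                distance = max(abs(a - b) for a, b in zip(templates[i], templates[j]))
                if distance <= r:
                    count += 1
        return count

    b = count_matches(m)      # matching template pairs of length m
    a = count_matches(m + 1)  # matching template pairs of length m + 1
    if a == 0 or b == 0:
        return float("inf")   # undefined ratio: treated as maximal irregularity
    return -math.log(a / b)
```

A perfectly regular series (constant or strictly periodic) yields a value of zero, while irregular series yield larger values, which is the contrast a complexity-based stress index builds on.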

Time Series Anomaly Detection via Reinforcement Learning-Based Model Selection

May 23, 2022

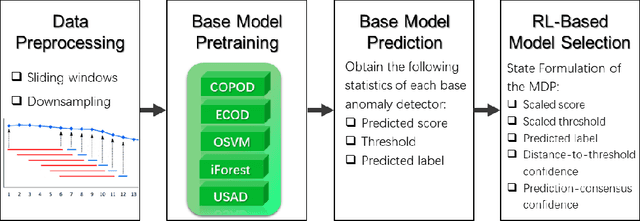

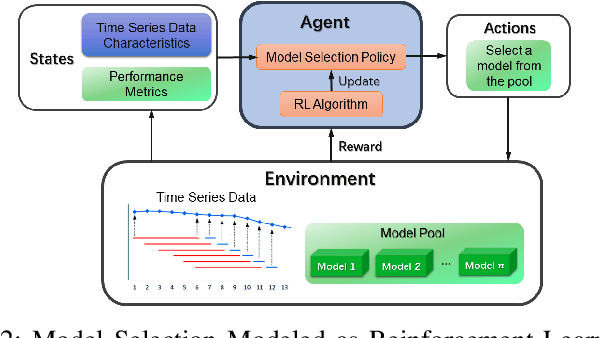

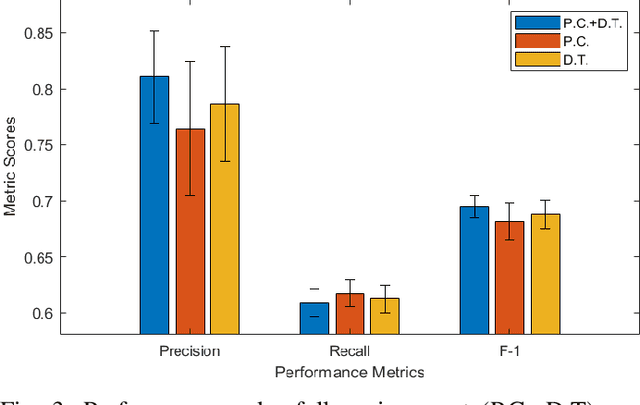

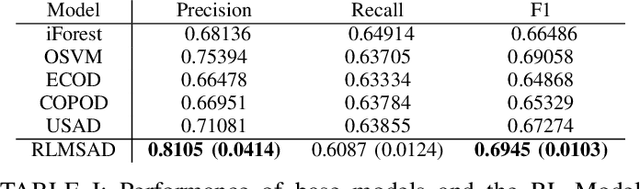

Time series anomaly detection is of critical importance for the reliable and efficient operation of real-world systems. Many anomaly detection models have been developed throughout the years based on various assumptions regarding anomaly characteristics. However, due to the complex nature of real-world data, different anomalies within a time series usually have diverse profiles supporting different anomaly assumptions, making it difficult to find a single anomaly detector that can consistently beat all other models. In this work, to harness the benefits of different base models, we assume that a pool of anomaly detection models is accessible and propose to utilize reinforcement learning to dynamically select a candidate model from these base models. Experiments on real-world data demonstrate that the proposed strategy can outperform all baseline models in terms of overall performance.
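To make the idea of learning which detector to select more concrete, here is a deliberately simplified sketch. It swaps in an epsilon-greedy multi-armed bandit, which is far simpler than a full reinforcement learning agent and is not the method described in the abstract. The function name, the `rewards_per_step` layout, and the `initial_value` parameter are all assumptions for illustration.

```python
import random


def select_models(rewards_per_step, epsilon=0.1, initial_value=0.0, seed=0):
    """Epsilon-greedy selection among a pool of detectors.

    rewards_per_step[t][k] is the (hypothetical) reward that detector k
    would earn at time step t; only the chosen detector's reward is observed.
    """
    rng = random.Random(seed)
    n_models = len(rewards_per_step[0])
    counts = [0] * n_models
    values = [initial_value] * n_models  # running reward estimate per detector
    choices = []
    for rewards in rewards_per_step:
        if rng.random() < epsilon:
            k = rng.randrange(n_models)  # explore: pick a random detector
        else:
            k = max(range(n_models), key=lambda i: values[i])  # exploit
        counts[k] += 1
        values[k] += (rewards[k] - values[k]) / counts[k]  # incremental mean
        choices.append(k)
    return choices, values
```

With optimistic initial values and no exploration, the selector tries each detector once and then settles on the one that keeps earning reward.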

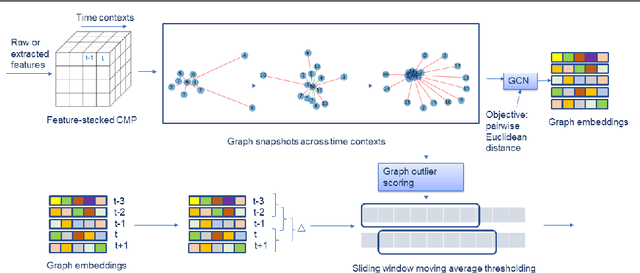

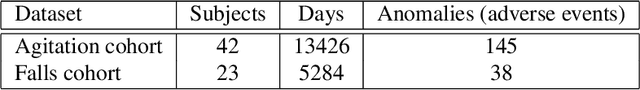

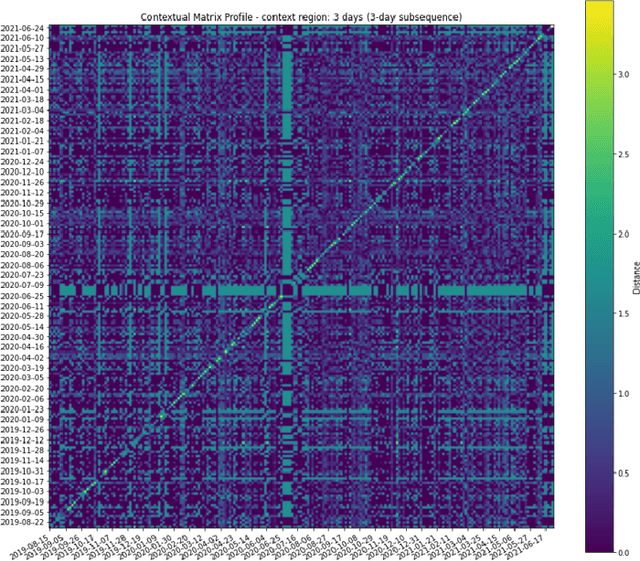

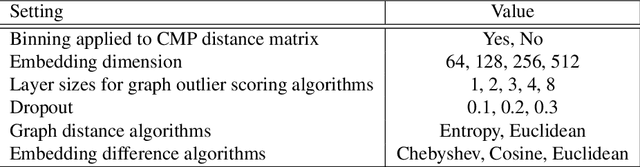

G-CMP: Graph-enhanced Contextual Matrix Profile for unsupervised anomaly detection in sensor-based remote health monitoring

Nov 29, 2022

Sensor-based remote health monitoring is used in industrial, urban and healthcare settings to monitor ongoing operation of equipment and human health. An important aim is to intervene early if anomalous events or adverse health is detected. In the wild, these anomaly detection approaches are challenged by noise, label scarcity, high dimensionality, explainability and wide variability in operating environments. The Contextual Matrix Profile (CMP) is a configurable 2-dimensional version of the Matrix Profile (MP) that uses the distance matrix of all subsequences of a time series to discover patterns and anomalies. The CMP is shown to enhance the effectiveness of the MP and other SOTA methods at detecting, visualising and interpreting true anomalies in noisy real-world data from different domains. It excels at zooming out and identifying temporal patterns at configurable time scales. However, the CMP does not address cross-sensor information, and cannot scale to high-dimensional data. We propose a novel, self-supervised graph-based approach for temporal anomaly detection that works on context graphs generated from the CMP distance matrix. The learned graph embeddings encode the anomalous nature of a time context. In addition, we evaluate other graph outlier algorithms for the same task. Because our pipeline is modular, graph construction, generation of graph embeddings, and pattern recognition logic can all be chosen based on the specific pattern detection application. We verified the effectiveness of graph-based anomaly detection and compared it with the CMP and three state-of-the-art methods on two real-world healthcare datasets with different anomalies. Our proposed method demonstrated better recall, alert rate and generalisability.
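As background for the distance-matrix idea mentioned above, the sketch below computes a plain Matrix Profile by brute force: for every subsequence, the z-normalized Euclidean distance to its nearest non-overlapping neighbour. It is not the CMP or the graph-based method from the abstract, and the function names and the exclusion-zone size (`m // 2`) are assumptions chosen for this example.

```python
import math


def znorm(window):
    """Z-normalize a window; a constant window maps to all zeros."""
    mean = sum(window) / len(window)
    std = math.sqrt(sum((x - mean) ** 2 for x in window) / len(window))
    if std == 0:
        return [0.0] * len(window)
    return [(x - mean) / std for x in window]


def matrix_profile(series, m):
    """Brute-force Matrix Profile for subsequences of length m."""
    subsequences = [znorm(series[i:i + m]) for i in range(len(series) - m + 1)]
    exclusion = m // 2  # skip trivial matches that overlap the query heavily
    profile = []
    for i, a in enumerate(subsequences):
        best = math.inf
        for j, b in enumerate(subsequences):
            if abs(i - j) <= exclusion:
                continue
            distance = math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))
            best = min(best, distance)
        profile.append(best)
    return profile
```

Subsequences that repeat elsewhere in the series get a profile value near zero, while a subsequence with no close match stands out with a large value, which is why high profile values are commonly read as anomaly candidates.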

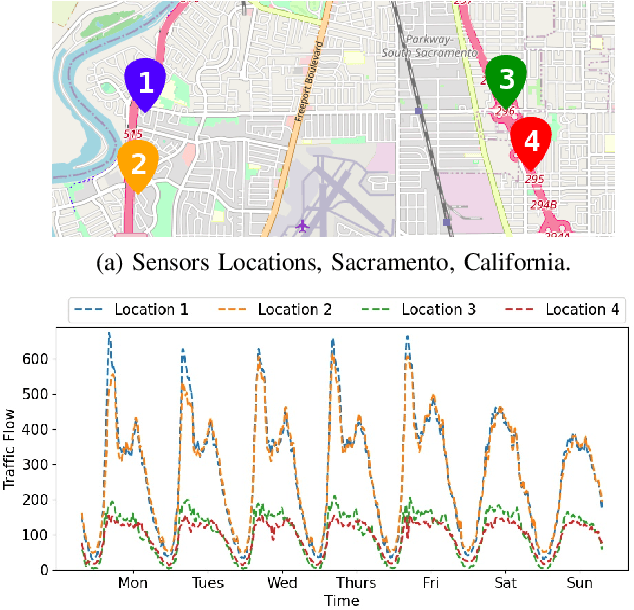

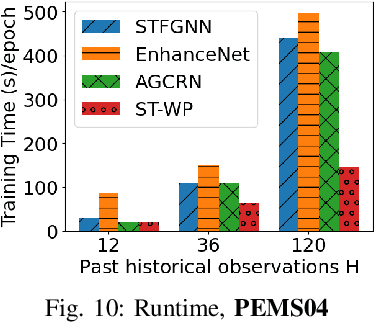

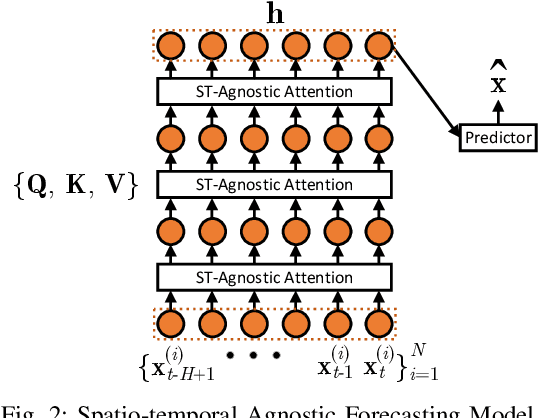

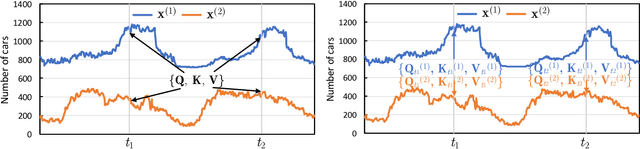

Towards Spatio-Temporal Aware Traffic Time Series Forecasting--Full Version

Apr 05, 2022

Traffic time series forecasting is challenging due to complex spatio-temporal dynamics: time series from different locations often have distinct patterns, and for the same time series, patterns may vary across time; for example, certain periods of a day show stronger temporal correlations. Although recent forecasting models, in particular deep learning based models, show promising results, they suffer from being spatio-temporal agnostic. Such spatio-temporal agnostic models employ a shared parameter space irrespective of the time series locations and the time periods, and they assume that the temporal patterns are similar across locations and do not evolve across time, which may not always hold, thus leading to sub-optimal results. In this work, we propose a framework that aims at turning spatio-temporal agnostic models into spatio-temporal aware models. To do so, we encode time series from different locations into stochastic variables, from which we generate location-specific and time-varying model parameters to better capture the spatio-temporal dynamics. We show how to integrate the framework with canonical attentions to enable spatio-temporal aware attentions. Next, to compensate for the additional overhead introduced by the spatio-temporal aware model parameter generation process, we propose a novel window attention scheme, which helps reduce the complexity from quadratic to linear, making spatio-temporal aware attentions also have competitive efficiency. We show strong empirical evidence on four traffic time series datasets, where the proposed spatio-temporal aware attentions outperform state-of-the-art methods in terms of accuracy and efficiency. This is an extended version of "Towards Spatio-Temporal Aware Traffic Time Series Forecasting", to appear in ICDE 2022 [1], including additional experimental results.
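The claim that windowing reduces attention cost from quadratic to linear can be illustrated with a generic local attention sketch. This is not the window attention scheme proposed in the paper; it only shows that restricting each position to a fixed-size window makes the number of attention scores grow as n times the window size instead of n squared. Tokens are plain scalars to keep the example short, and all names are assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def window_attention(queries, keys, values, window):
    """Attention restricted to non-overlapping windows of scalar tokens.

    Returns the outputs and the number of attention scores computed.
    """
    outputs, n_scores = [], 0
    n = len(queries)
    for start in range(0, n, window):
        end = min(start + window, n)
        for i in range(start, end):
            # Each query only scores the keys inside its own window.
            scores = [queries[i] * keys[j] for j in range(start, end)]
            n_scores += len(scores)
            weights = softmax(scores)
            outputs.append(sum(w * values[j] for w, j in zip(weights, range(start, end))))
    return outputs, n_scores
```

For a sequence of length n and a fixed window size w, the score count is n * w, so doubling n doubles the work rather than quadrupling it; setting `window` to n recovers full attention with n * n scores.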

Multidimensional Service Quality Scoring System

Dec 09, 2022

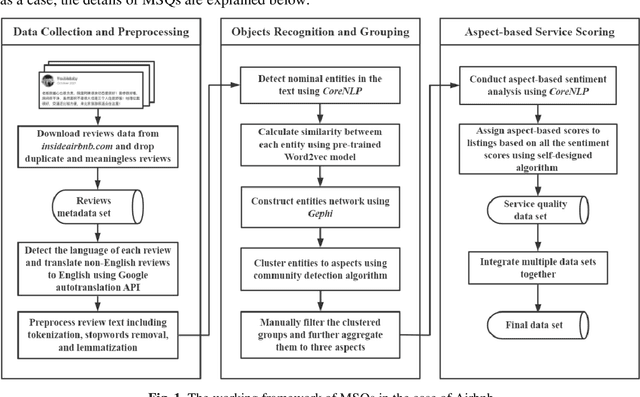

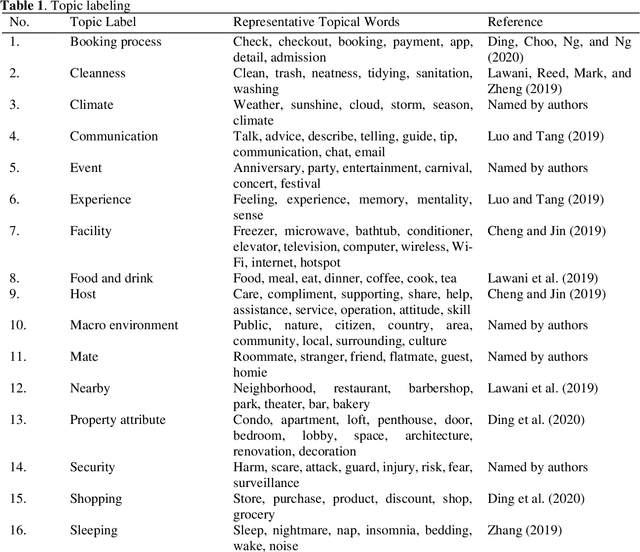

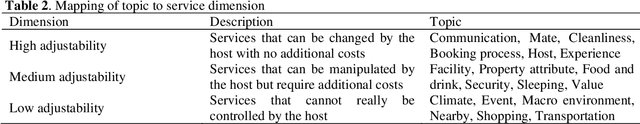

This supplementary paper aims to introduce the Multidimensional Service Quality Scoring System (MSQs), a review-based method for quantifying host service quality mentioned and employed in the paper Exit and transition: Exploring the survival status of Airbnb listings in a time of professionalization. MSQs is not an end-to-end implementation and is essentially composed of three pipelines, namely Data Collection and Preprocessing, Objects Recognition and Grouping, and Aspect-based Service Scoring. Using the study mentioned above as a case, the technical details of MSQs are explained in this article.

Continual Learning Approaches for Anomaly Detection

Dec 21, 2022

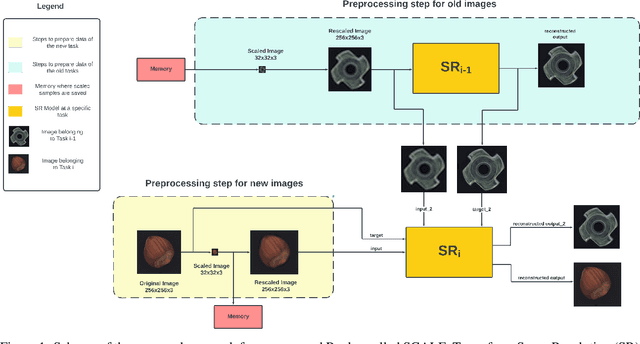

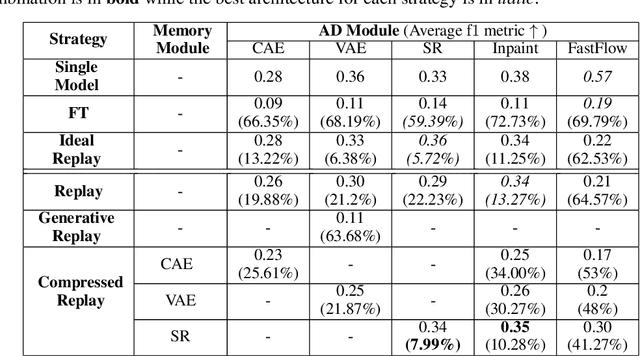

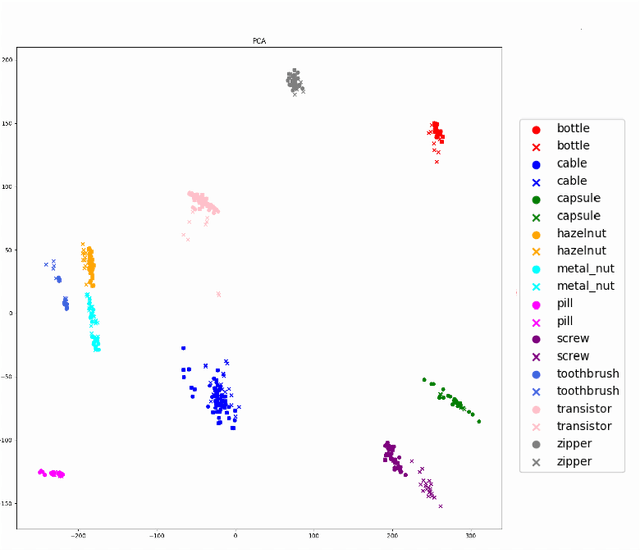

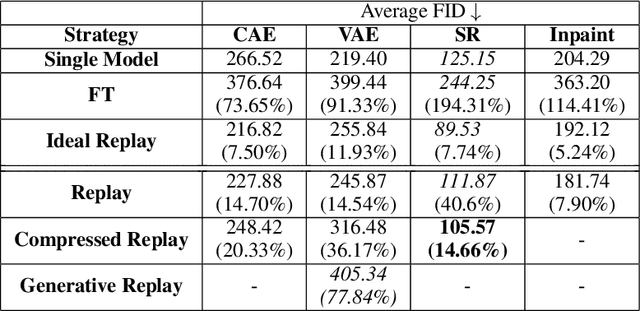

Anomaly Detection is a relevant problem that arises in numerous real-world applications, especially when dealing with images. However, there has been little research for this task in the Continual Learning setting. In this work, we introduce a novel approach called SCALE (SCALing is Enough) to perform Compressed Replay in a framework for Anomaly Detection in the Continual Learning setting. The proposed technique scales and compresses the original images using a Super Resolution model which, to the best of our knowledge, is studied for the first time in the Continual Learning setting. SCALE can achieve a high level of compression while maintaining a high level of image reconstruction quality. In conjunction with other Anomaly Detection approaches, it can achieve optimal results. To validate the proposed approach, we use a real-world dataset of images with pixel-based anomalies, with the aim of providing a reliable benchmark for Anomaly Detection in the context of Continual Learning, serving as a foundation for further advancements in the field.

The Ties that matter: From the perspective of Similarity Measure in Online Social Networks

Dec 21, 2022

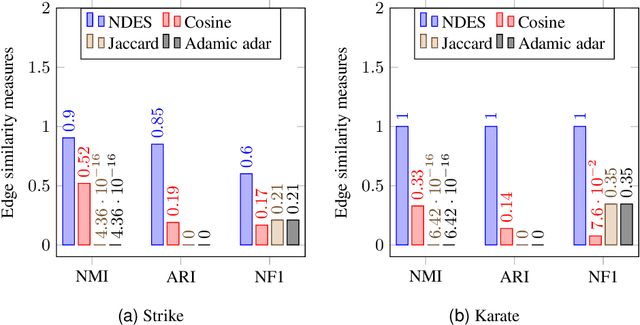

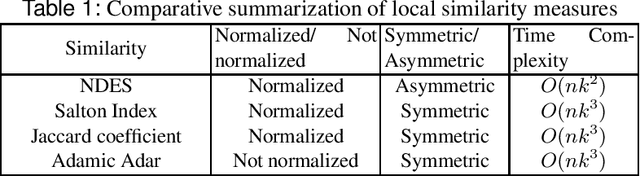

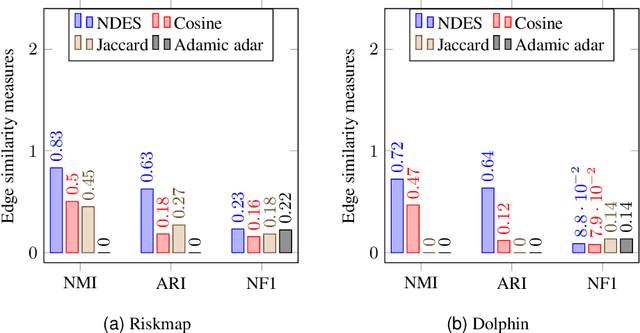

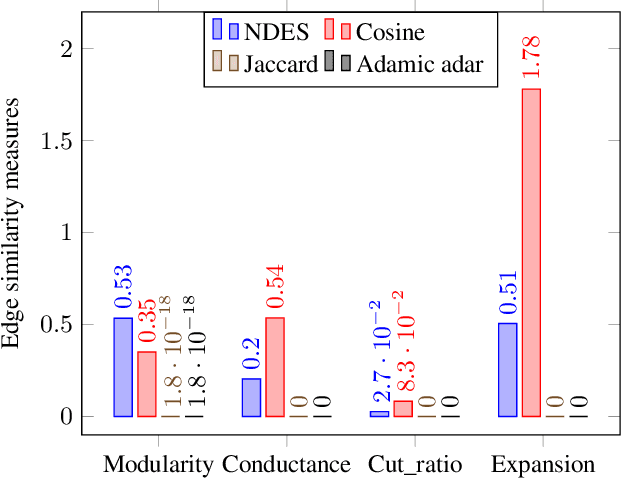

Online Social Networks have highlighted the importance of connection strength measures, which have a broad array of applications such as analyzing diffusion behaviors, community detection, link prediction, and recommender systems. Though some connection strength measures exist, the density that a connection shares with its neighbors and the directionality aspect have not received much attention. In this paper, we propose an asymmetric edge similarity measure, namely Neighborhood Density-based Edge Similarity (NDES), which provides fundamental support for deriving the strength of a connection. The time complexity of NDES is $O(nk^2)$. An application of NDES to community detection in social networks is shown. We consider a similarity-based community detection technique and substitute its similarity measure with NDES. The performance of NDES is evaluated on several small real-world datasets in terms of its effectiveness in detecting communities and compared with three widely used similarity measures. Empirical results show that NDES enables detecting comparatively better communities in terms of both accuracy and quality.

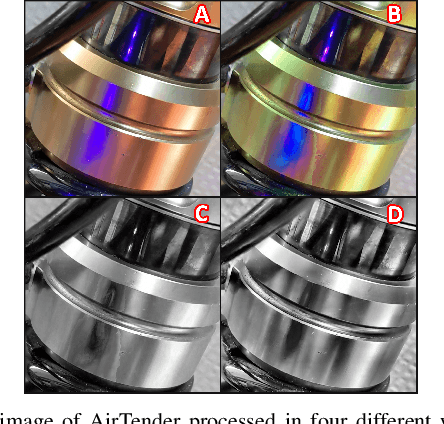

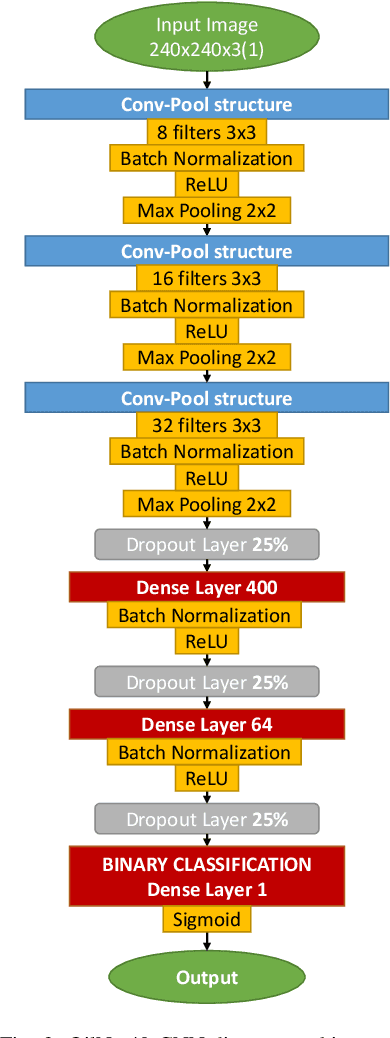

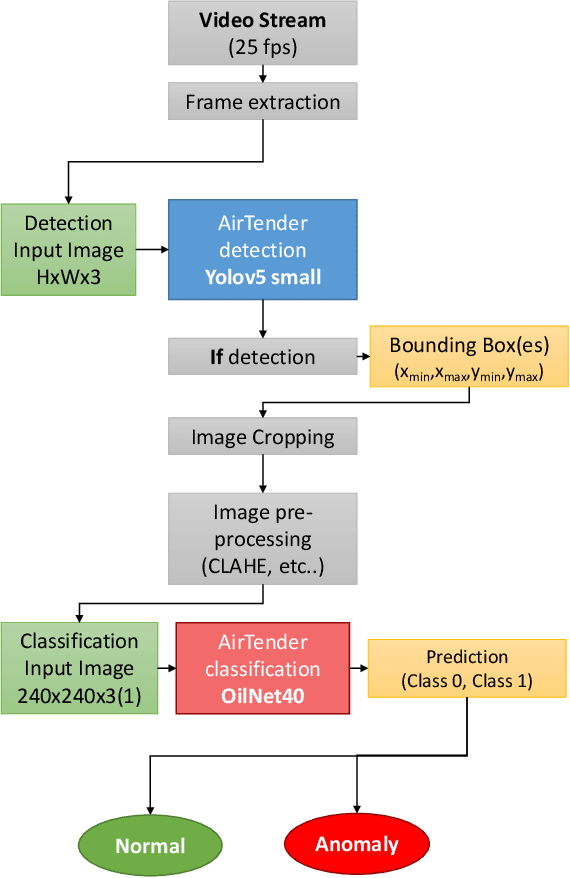

Real-Time Oil Leakage Detection on Aftermarket Motorcycle Damping System with Convolutional Neural Networks

Aug 10, 2022

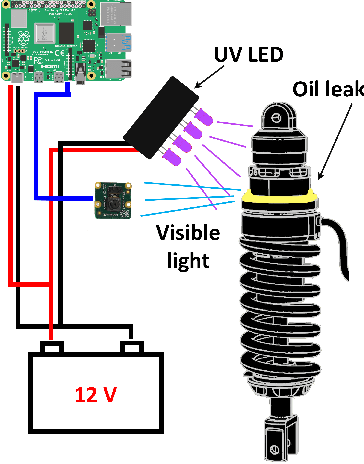

In this work, we describe in detail how Deep Learning and Computer Vision can help to detect fault events of the AirTender system, an aftermarket motorcycle damping system component. One of the most effective ways to monitor the functioning of AirTender is to look for oil stains on its surface. Starting from real-time images, AirTender is first detected in the motorbike suspension system and then a binary classifier determines whether AirTender is spilling oil or not. The detection is made with the help of the Yolo5 architecture, whereas the classification is carried out with the help of a suitably designed Convolutional Neural Network, OilNet40. In order to detect oil leaks more clearly, we dilute the oil in AirTender with a fluorescent dye with an excitation wavelength peak of approximately 390 nm. AirTender is then illuminated with suitable UV LEDs. The whole system is an attempt to design a low-cost detection setup. An on-board device, such as a mini-computer, is placed near the suspension system and connected to a Full HD camera framing AirTender. The on-board device, through our Neural Network algorithm, is then able to localize AirTender and classify it as normally functioning (non-leak image) or anomalous (leak image).

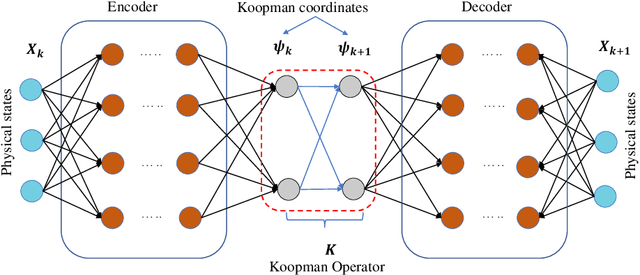

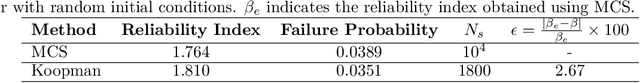

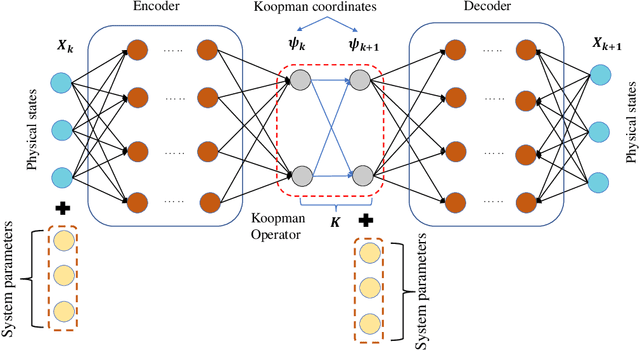

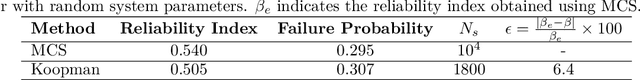

Koopman operator for time-dependent reliability analysis

Mar 05, 2022

Time-dependent structural reliability analysis of nonlinear dynamical systems is non-trivial; consequently, the scope of most structural reliability analysis methods is limited to time-independent reliability analysis. In this work, we propose a Koopman operator based approach for time-dependent reliability analysis of nonlinear dynamical systems. Since the Koopman representations can transform any nonlinear dynamical system into a linear dynamical system, the time evolution of dynamical systems can be obtained by Koopman operators seamlessly regardless of the nonlinear or chaotic behavior. Although Koopman theory has been around for a long time, identifying intrinsic coordinates is a challenging task; to address this, we propose an end-to-end deep learning architecture that learns the Koopman observables and then uses them for time marching the dynamical response. Unlike purely data-driven approaches, the proposed approach is robust even in the presence of uncertainties; this renders the proposed approach suitable for time-dependent reliability analysis. We propose two architectures: one suitable for time-dependent reliability analysis when the system is subjected to random initial conditions and the other suitable when the underlying system has uncertainties in system parameters. The proposed approach is robust and generalizes to unseen environments (out-of-distribution prediction). The efficacy of the proposed approach is illustrated using three numerical examples. The results indicate the superiority of the proposed approach over a purely data-driven auto-regressive neural network and a long short-term memory network.
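The statement that Koopman observables turn nonlinear dynamics into linear ones can be seen on a small textbook-style system. The sketch below uses a hand-picked toy map (an assumption for illustration, not one of the paper's examples) whose state becomes exactly linear once the observable x1² is added. The paper learns its observables with a neural network; here they are chosen by hand.

```python
def nonlinear_step(x1, x2, lam, mu):
    """One step of the toy nonlinear map (assumed example system)."""
    return lam * x1, mu * x2 + (lam ** 2 - mu) * x1 ** 2


def lift(x1, x2):
    """Hand-picked observables: the state plus x1 squared."""
    return [x1, x2, x1 ** 2]


def koopman_matrix(lam, mu):
    """Linear operator acting on the lifted coordinates [x1, x2, x1^2]."""
    return [
        [lam, 0.0, 0.0],
        [0.0, mu, lam ** 2 - mu],
        [0.0, 0.0, lam ** 2],
    ]


def linear_step(K, y):
    """Matrix-vector product K @ y for the 3-dimensional lifted state."""
    return [sum(K[i][j] * y[j] for j in range(3)) for i in range(3)]


def max_gap(x1, x2, lam=0.9, mu=0.5, steps=20):
    """Largest difference between nonlinear and lifted-linear trajectories."""
    K = koopman_matrix(lam, mu)
    y = lift(x1, x2)
    gap = 0.0
    for _ in range(steps):
        x1, x2 = nonlinear_step(x1, x2, lam, mu)
        y = linear_step(K, y)
        gap = max(gap, abs(y[0] - x1), abs(y[1] - x2))
    return gap
```

Because the lifted system is linear, time marching reduces to repeated matrix multiplication, and the two trajectories agree up to floating-point rounding.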

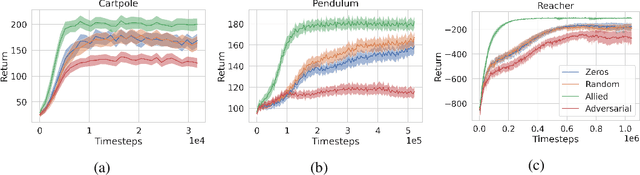

Adversarial Cheap Talk

Nov 20, 2022

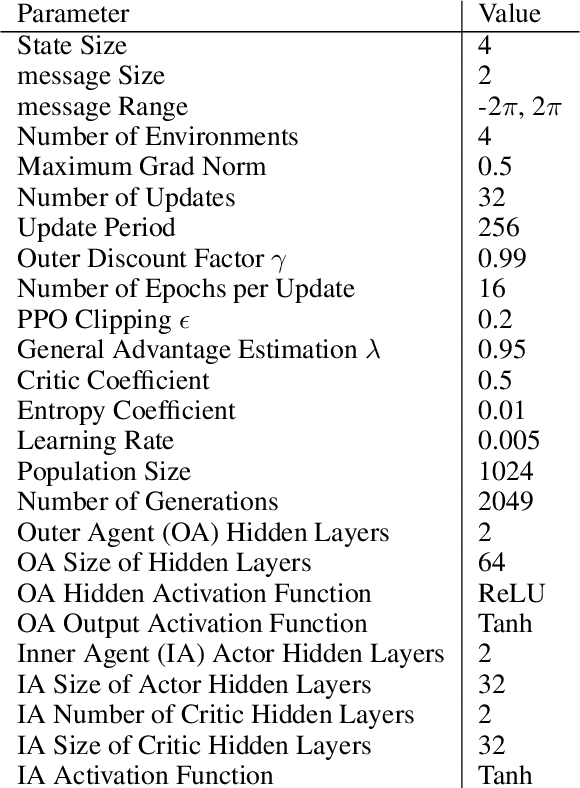

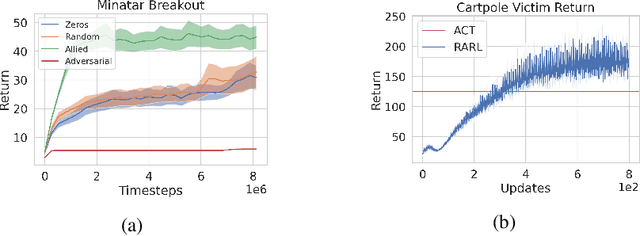

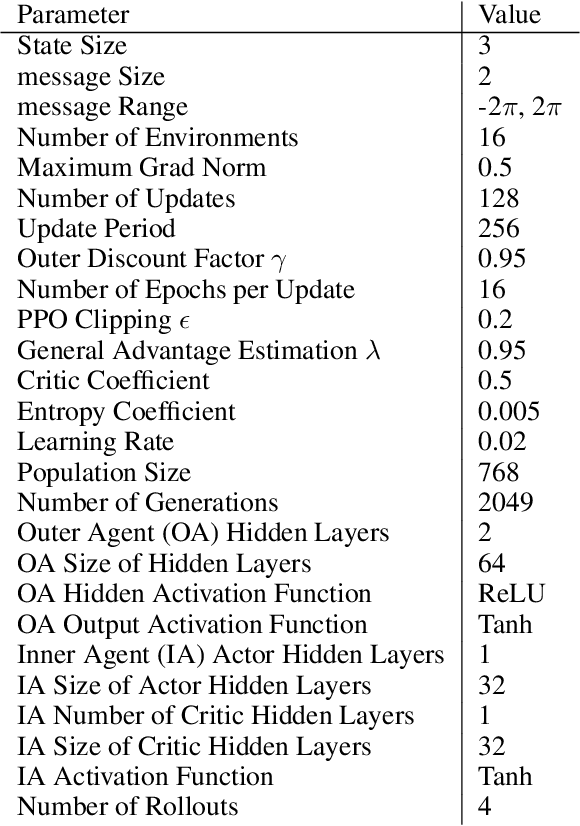

Adversarial attacks in reinforcement learning (RL) often assume highly-privileged access to the victim's parameters, environment, or data. Instead, this paper proposes a novel adversarial setting called a Cheap Talk MDP in which an Adversary can merely append deterministic messages to the Victim's observation, resulting in a minimal range of influence. The Adversary cannot occlude ground truth, influence underlying environment dynamics or reward signals, introduce non-stationarity, add stochasticity, see the Victim's actions, or access their parameters. Additionally, we present a simple meta-learning algorithm called Adversarial Cheap Talk (ACT) to train Adversaries in this setting. We demonstrate that an Adversary trained with ACT can still significantly influence the Victim's training and testing performance, despite the highly constrained setting. Affecting train-time performance reveals a new attack vector and provides insight into the success and failure modes of existing RL algorithms. More specifically, we show that an ACT Adversary is capable of harming performance by interfering with the learner's function approximation, or instead helping the Victim's performance by outputting useful features. Finally, we show that an ACT Adversary can manipulate messages during train-time to directly and arbitrarily control the Victim at test-time.
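The constraint described above, that the Adversary may only append a deterministic message to the Victim's observation, can be sketched in a few lines. The function names and the toy adversary are hypothetical and are not taken from the paper's code; the sketch only shows the observation layout, not the ACT meta-learning algorithm.

```python
def cheap_talk_observation(observation, adversary):
    """Return the Victim's observation with the Adversary's message appended.

    The original observation is left untouched (no occlusion); the message
    is a deterministic function of that observation only.
    """
    message = adversary(observation)
    return list(observation) + list(message)


def example_adversary(observation):
    """Hypothetical deterministic adversary: emits a two-value message."""
    total = sum(observation)
    return [1.0 if total > 0 else -1.0, 0.0]
```

The Victim then trains on the augmented vector; the ground-truth part of the observation, the environment dynamics, and the rewards are all unchanged.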
