Zhihuan Song

Towards Intrinsically Calibrated Uncertainty Quantification in Industrial Data-Driven Models via Diffusion Sampler

Apr 02, 2026

Abstract:In modern process industries, data-driven models are important tools for real-time monitoring when key performance indicators are difficult to measure directly. While accurate predictions are essential, reliable uncertainty quantification (UQ) is equally critical for safety, reliability, and decision-making, but remains a major challenge in current data-driven approaches. In this work, we introduce a diffusion-based posterior sampling framework that inherently produces well-calibrated predictive uncertainty via faithful posterior sampling, eliminating the need for post-hoc calibration. In extensive evaluations on synthetic distributions, the Raman-based phenylacetic acid soft sensor benchmark, and a real ammonia synthesis case study, our method achieves practical improvements over existing UQ techniques in both uncertainty calibration and predictive accuracy. These results highlight diffusion samplers as a principled and scalable paradigm for advancing uncertainty-aware modeling in industrial applications.
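One common way to make "well-calibrated" concrete is to check whether the central interval built from posterior samples covers the true target at the nominal rate. The sketch below is only an illustration under stated assumptions: the function name `empirical_coverage`, the Gaussian toy data, and the 90% level are hypothetical choices, and the Gaussian draws merely stand in for samples that a sampler would return. It does not reproduce the diffusion sampler or the evaluation metrics of the paper.

```python
import random

def empirical_coverage(samples_per_point, targets, level=0.9):
    """Fraction of targets that fall inside the central `level` interval of their samples."""
    lo_q = (1.0 - level) / 2.0
    hits = 0
    for samples, y in zip(samples_per_point, targets):
        s = sorted(samples)
        lo = s[int(lo_q * (len(s) - 1))]          # lower empirical quantile
        hi = s[int((1.0 - lo_q) * (len(s) - 1))]  # upper empirical quantile
        hits += lo <= y <= hi
    return hits / len(targets)

rng = random.Random(0)
# Toy stand-in for a sampler: the true predictive distribution is N(mu, 1).
mus = [rng.uniform(-3, 3) for _ in range(2000)]
targets = [rng.gauss(mu, 1.0) for mu in mus]
# Samples with the correct spread versus samples that are too narrow.
calibrated = [[rng.gauss(mu, 1.0) for _ in range(200)] for mu in mus]
overconfident = [[rng.gauss(mu, 0.5) for _ in range(200)] for mu in mus]

cov_cal = empirical_coverage(calibrated, targets)
cov_over = empirical_coverage(overconfident, targets)
print(f"coverage, correct spread: {cov_cal:.3f}")
print(f"coverage, too narrow:     {cov_over:.3f}")
```

Samples drawn with the correct spread should give coverage close to the nominal 0.9, while the overconfident samples fall well short of it, which is the kind of gap that post-hoc calibration is usually applied to close.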

HAD: Heterogeneity-Aware Distillation for Lifelong Heterogeneous Learning

Mar 27, 2026

Abstract:Lifelong learning aims to preserve knowledge acquired from previous tasks while incorporating knowledge from a sequence of new tasks. However, most prior work explores only streams of homogeneous tasks (e.g., only classification tasks) and neglects the scenario of learning across heterogeneous tasks whose outputs have different structures. In this work, we formalize this broader setting as lifelong heterogeneous learning (LHL). Departing from conventional lifelong learning, the task sequence of LHL spans different task types, and the learner needs to retain heterogeneous knowledge for different output space structures. To instantiate LHL, we focus on LHL in the context of dense prediction (LHL4DP), a realistic and challenging scenario. To this end, we propose the Heterogeneity-Aware Distillation (HAD) method, an exemplar-free approach that preserves previously gained heterogeneous knowledge by self-distillation in each training phase. The proposed HAD comprises two complementary components: a distribution-balanced heterogeneity-aware distillation loss that alleviates the global imbalance of the prediction distribution, and a salience-guided heterogeneity-aware distillation loss that concentrates learning on informative edge pixels extracted with the Sobel operator. Extensive experiments demonstrate that the proposed HAD method significantly outperforms existing methods in this new scenario.
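The idea of concentrating a distillation loss on Sobel edge pixels can be sketched as follows. This is a minimal illustration, not the HAD loss itself: it assumes the edges are taken from the previous model's prediction map, uses a plain squared error, and the names `sobel_magnitude`, `edge_weighted_distill_loss`, and `threshold` are hypothetical.

```python
def sobel_magnitude(img):
    """Gradient magnitude of a 2D list via the 3x3 Sobel kernels (border pixels stay 0)."""
    h, w = len(img), len(img[0])
    kx = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
    ky = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]
    mag = [[0.0] * w for _ in range(h)]
    for i in range(1, h - 1):
        for j in range(1, w - 1):
            gx = sum(kx[a][b] * img[i + a - 1][j + b - 1] for a in range(3) for b in range(3))
            gy = sum(ky[a][b] * img[i + a - 1][j + b - 1] for a in range(3) for b in range(3))
            mag[i][j] = (gx * gx + gy * gy) ** 0.5
    return mag

def edge_weighted_distill_loss(old_pred, new_pred, threshold=1.0):
    """Mean squared error between old and new predictions over edge pixels only.

    Assumption: edge pixels are those where the Sobel magnitude of the
    old model's prediction exceeds `threshold`.
    """
    mag = sobel_magnitude(old_pred)
    total, count = 0.0, 0
    for i, row in enumerate(mag):
        for j, m in enumerate(row):
            if m > threshold:
                total += (old_pred[i][j] - new_pred[i][j]) ** 2
                count += 1
    return total / count if count else 0.0

# Toy prediction map with a vertical step edge between columns 2 and 3.
old = [[0.0] * 3 + [1.0] * 3 for _ in range(6)]
new_edge = [row[:] for row in old]
new_edge[2][3] -= 0.5   # drift on an edge pixel: penalized
new_flat = [row[:] for row in old]
new_flat[2][5] -= 0.5   # drift on a flat pixel: ignored by this loss
loss_edge = edge_weighted_distill_loss(old, new_edge)
loss_flat = edge_weighted_distill_loss(old, new_flat)
print(loss_edge, loss_flat)
```

In this toy case only the drift on the step edge contributes to the loss, which shows how an edge mask shifts the distillation signal toward boundary pixels.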

Causality-driven Sequence Segmentation for Enhancing Multiphase Industrial Process Data Analysis and Soft Sensing

Jun 30, 2024

Abstract:The dynamic characteristics of multiphase industrial processes present significant challenges for industrial big data modeling. Traditional soft sensing models frequently neglect process dynamics and have difficulty capturing transient phenomena such as phase transitions. To address this issue, this article introduces a causality-driven sequence segmentation (CDSS) model. The model first identifies the local dynamic properties of the causal relationships between variables, which are also referred to as causal mechanisms. It then segments the sequence into phases based on the sudden shifts in causal mechanisms that occur during phase transitions. Additionally, a novel metric, similarity distance, is designed to evaluate the temporal consistency of causal mechanisms; it comprises a causal similarity distance and a stable similarity distance. The causal relationships discovered in each phase are represented as a temporal causal graph (TCG). Furthermore, a soft sensing model called the temporal-causal graph convolutional network (TC-GCN) is trained for each phase using the time-extended data and the adjacency matrix of the TCG. Numerical examples are used to validate the proposed CDSS model, and the segmentation results demonstrate that CDSS performs well in segmenting both stable and unstable multiphase series. In particular, it separates non-stationary time series more accurately than other methods. The effectiveness of the proposed CDSS and TC-GCN models is also verified on a penicillin fermentation process. Experimental results indicate that the breakpoints discovered by CDSS align well with the reaction mechanisms and that TC-GCN achieves excellent predictive accuracy.

Rethinking the Diffusion Models for Numerical Tabular Data Imputation from the Perspective of Wasserstein Gradient Flow

Jun 22, 2024

Abstract:Diffusion models (DMs) have gained attention in Missing Data Imputation (MDI), but two long-neglected issues remain to be addressed: (1) inaccurate imputation, which arises from the inherently sample-diversification-pursuing generative process of DMs; and (2) difficult training, which stems from the intricate design required for the mask matrix in the model training stage. To address these concerns for numerical tabular datasets, we introduce a novel principled approach termed Kernelized Negative Entropy-regularized Wasserstein gradient flow Imputation (KnewImp). Specifically, based on the Wasserstein gradient flow (WGF) framework, we first prove that issue (1) arises because the cost functionals implicitly maximized in DM-based MDI are equivalent to the MDI objective plus diversification-promoting non-negative terms. Based on this, we then design a novel cost functional with diversification-discouraging negative entropy and derive our KnewImp approach within the WGF framework and a reproducing kernel Hilbert space. After that, we prove that the imputation procedure of KnewImp can be derived from another cost functional related to the joint distribution, eliminating the need for the mask matrix and hence naturally addressing issue (2). Extensive experiments demonstrate that our proposed KnewImp approach significantly outperforms existing state-of-the-art methods.

Root-KGD: A Novel Framework for Root Cause Diagnosis Based on Knowledge Graph and Industrial Data

Jun 19, 2024

Abstract:With the development of intelligent manufacturing and the increasing complexity of industrial production, root cause diagnosis has gradually become an important research direction in the field of industrial fault diagnosis. However, existing methods struggle to combine domain knowledge and industrial data effectively, and so fail to provide accurate, online, and reliable root cause diagnosis results for industrial processes. To address these issues, a novel fault root cause diagnosis framework based on a knowledge graph and industrial data, called Root-KGD, is proposed. Root-KGD uses the knowledge graph to represent domain knowledge and employs data-driven modeling to extract fault features from industrial data. It then combines the knowledge graph and data features to perform knowledge graph reasoning for root cause identification. The performance of the proposed method is validated using two industrial process cases, the Tennessee Eastman Process (TEP) and the Multiphase Flow Facility (MFF). Compared to existing methods, Root-KGD not only gives more accurate root cause variable diagnosis results but also provides interpretable fault-related information by locating faults to the corresponding physical entities in the knowledge graph (such as devices and streams). In addition, owing to its lightweight nature, Root-KGD is more effective in online industrial applications.

ABIGX: A Unified Framework for eXplainable Fault Detection and Classification

Nov 09, 2023

Abstract:For explainable fault detection and classification (FDC), this paper proposes a unified framework, ABIGX (Adversarial fault reconstruction-Based Integrated Gradient eXplanation). ABIGX is derived from the essentials of two previously successful fault diagnosis methods, contribution plots (CP) and reconstruction-based contribution (RBC). It is the first explanation framework that provides variable contributions for general FDC models. The core part of ABIGX is the adversarial fault reconstruction (AFR) method, which rethinks fault reconstruction (FR) from the perspective of adversarial attack and generalizes it to fault classification models with a new fault index. For fault classification, we put forward a new problem of fault class smearing, which intrinsically hinders correct explanation. We prove that ABIGX effectively mitigates this problem and outperforms existing gradient-based explanation methods. For fault detection, we theoretically bridge ABIGX with conventional fault diagnosis methods by proving that CP and RBC are the linear specifications of ABIGX. The experiments evaluate the explanations of FDC with quantitative metrics and intuitive illustrations, and the results show the general superiority of ABIGX over other advanced explanation methods.
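The integrated-gradient part of the name can be illustrated with a generic sketch that attributes a scalar fault index to individual variables. Everything here is an assumption for illustration: the quadratic `fault_index`, the all-zero baseline (ABIGX instead obtains its reference through adversarial fault reconstruction), and the finite-difference gradient are hypothetical stand-ins, not the method of the paper.

```python
def integrated_gradients(f, x, baseline, steps=50, eps=1e-5):
    """Approximate integrated gradients of scalar f along the straight path baseline -> x.

    Uses a midpoint Riemann sum over the path and central finite
    differences for the gradient, so no autodiff library is needed.
    """
    n = len(x)
    attributions = [0.0] * n
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i in range(n):
            up = point[:]
            up[i] += eps
            dn = point[:]
            dn[i] -= eps
            grad_i = (f(up) - f(dn)) / (2 * eps)
            attributions[i] += grad_i * (x[i] - baseline[i]) / steps
    return attributions

def fault_index(x):
    # Hypothetical quadratic detection index (squared norm); not the ABIGX index.
    return sum(v * v for v in x)

x = [0.1, 2.0, -0.3]           # toy sample in which variable 1 carries the fault
baseline = [0.0, 0.0, 0.0]     # assumed fault-free reference
attr = integrated_gradients(fault_index, x, baseline)
print(attr)
```

The attributions sum to the change in the index between the baseline and the sample (the completeness property of integrated gradients), and the largest one points at the variable that was perturbed most.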

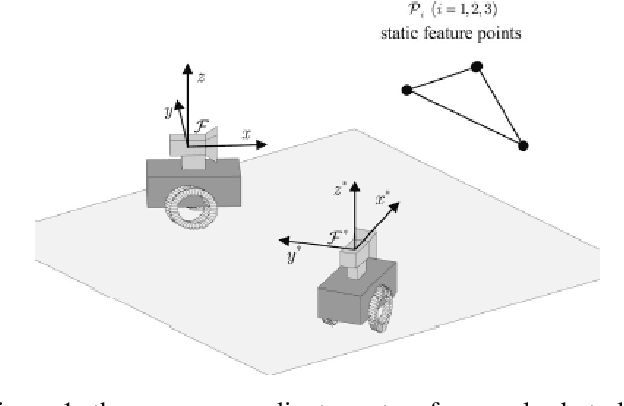

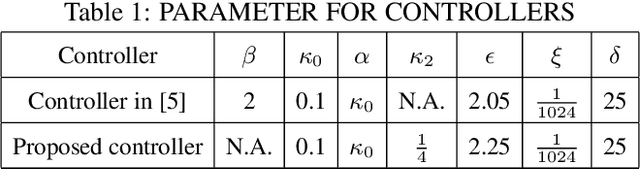

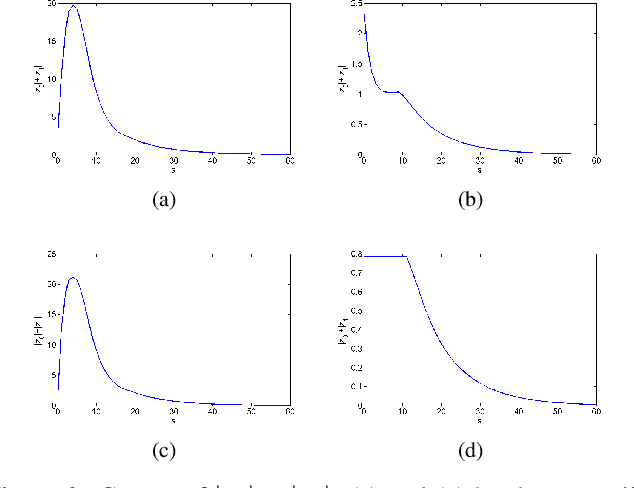

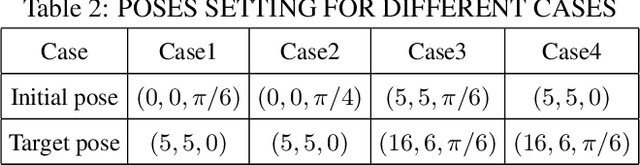

Object Servoing of Differential-Drive Robots

Nov 10, 2021

Abstract:Because the pose of a movable object may change and a differential-drive robot is subject to a nonholonomic constraint, it is challenging to design an object servoing scheme that lets the robot asymptotically park at a predefined pose relative to the movable object. In this paper, a novel object servoing scheme is designed for differential-drive robots. Each on-line relative pose is first estimated using feature points of the movable object, and it serves as the input of an object-servoing-friendly parking controller. The linear and angular velocities are then determined by the parking controller. Experimental results validate the performance of the proposed object servoing scheme. Owing to its low on-line computational cost, the proposed scheme can be applied to last-mile delivery by differential-drive robots to movable objects.
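To show how a relative pose can be turned into linear and angular velocity commands under the nonholonomic constraint, the sketch below simulates a textbook polar-coordinate pose regulator for a unicycle-type robot. It is a swapped-in standard technique, not the parking controller proposed in the paper; the gains, the time step, the start pose, and the function names `wrap` and `park` are all assumed for illustration, and the relative pose is taken as known rather than estimated from feature points.

```python
import math

def wrap(a):
    """Wrap an angle to (-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))

def park(x, y, theta, k_rho=3.0, k_alpha=8.0, k_beta=-1.5, dt=0.01, t_end=15.0):
    """Drive a unicycle-model robot to the goal pose (0, 0, 0) in the object frame.

    (x, y, theta) is the robot pose relative to the desired parking pose.
    The gains satisfy k_rho > 0, k_beta < 0 and k_alpha > k_rho.
    """
    for _ in range(int(t_end / dt)):
        rho = math.hypot(x, y)                     # distance to the goal
        alpha = wrap(math.atan2(-y, -x) - theta)   # bearing of the goal in the robot frame
        beta = wrap(-theta - alpha)                # heading error at arrival
        v = k_rho * rho                            # linear velocity command
        w = k_alpha * alpha + k_beta * beta        # angular velocity command
        # Unicycle kinematics: the robot moves only along its heading.
        x += v * math.cos(theta) * dt
        y += v * math.sin(theta) * dt
        theta = wrap(theta + w * dt)
    return x, y, theta

final_x, final_y, final_theta = park(-2.0, -1.0, 0.0)
print(final_x, final_y, final_theta)
```

Because the robot cannot translate sideways, the controller steers along a curved path so that position and heading errors shrink together, which is the difficulty the abstract attributes to the nonholonomic constraint.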
