Zhao Kang

Eliminating Gradient Conflict in Reference-based Line-Art Colorization

Jul 20, 2022

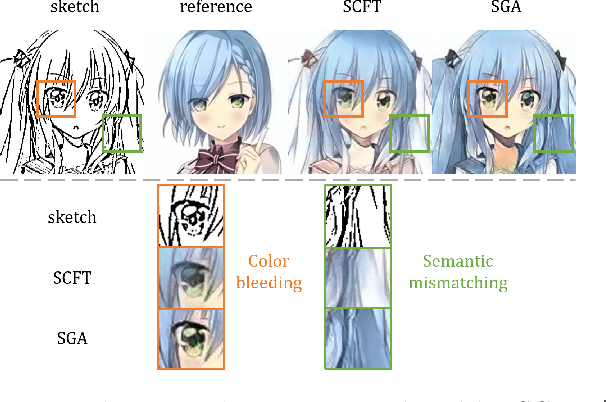

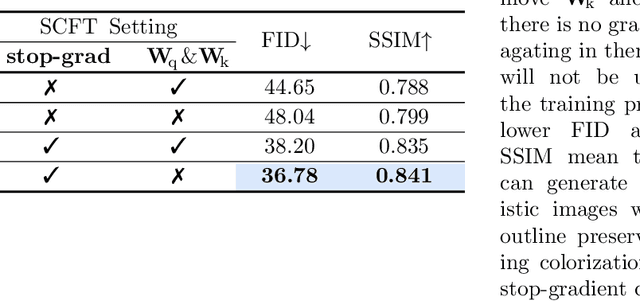

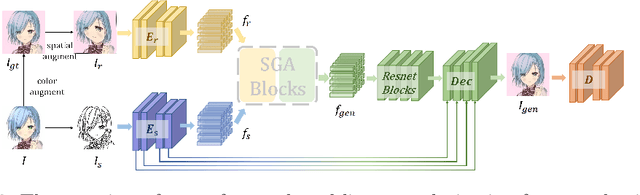

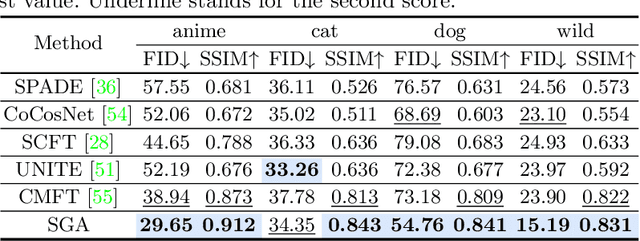

Abstract: Reference-based line-art colorization is a challenging task in computer vision. The color, texture, and shading are rendered based on an abstract sketch, which relies heavily on precise long-range dependency modeling between the sketch and the reference. Popular techniques for bridging cross-modal information and modeling long-range dependencies employ the attention mechanism. However, in the context of reference-based line-art colorization, several techniques intensify the existing training difficulty of attention, for instance, the self-supervised training protocol and GAN-based losses. To understand the instability in training, we examine the gradient flow of attention and observe gradient conflict among attention branches. This phenomenon motivates us to alleviate the gradient issue by preserving the dominant gradient branch while removing the conflicting ones. We propose a novel attention mechanism using this training strategy, Stop-Gradient Attention (SGA), which outperforms the attention baseline by a large margin with better training stability. Compared with state-of-the-art modules in line-art colorization, our approach demonstrates significant improvements in Fréchet Inception Distance (FID, up to 27.21%) and structural similarity index measure (SSIM, up to 25.67%) on several benchmarks. The code of SGA is available at https://github.com/kunkun0w0/SGA.

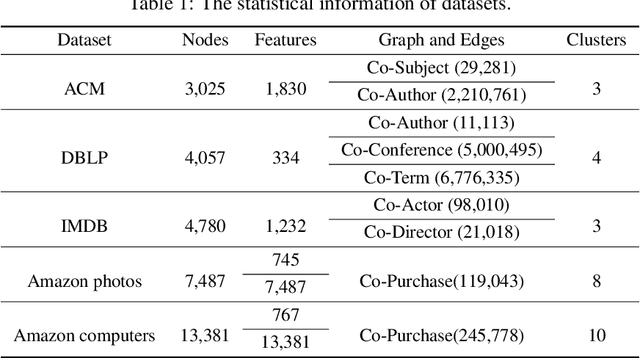

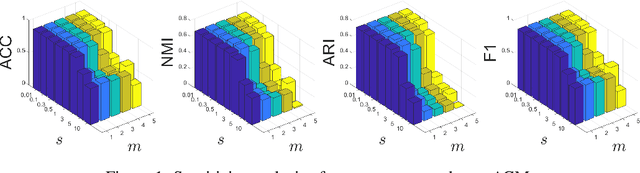

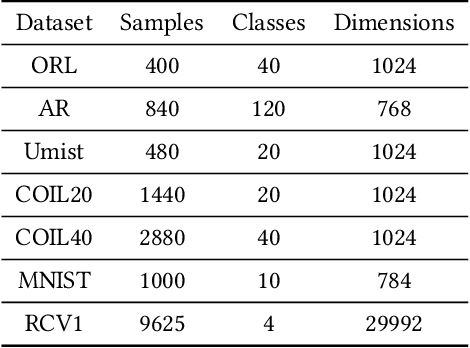

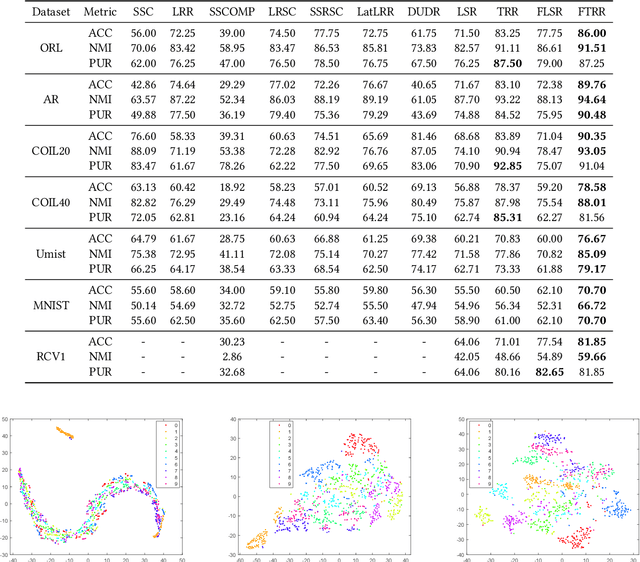

Scalable Multi-view Clustering with Graph Filtering

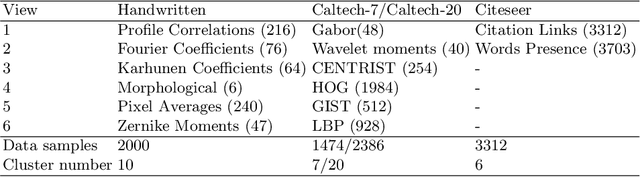

May 18, 2022

Abstract: With the explosive growth of multi-source data, multi-view clustering has attracted great attention in recent years. Most existing multi-view methods operate in the raw feature space and depend heavily on the quality of the original feature representation. Moreover, they are often designed for feature data and ignore the rich topological structure information. Accordingly, in this paper, we propose a generic framework to cluster both attribute and graph data with heterogeneous features. It is capable of exploring the interplay between feature and structure. Specifically, we first adopt a graph filtering technique to eliminate high-frequency noise and achieve a clustering-friendly smooth representation. To handle the scalability challenge, we develop a novel sampling strategy to improve the quality of anchors. Extensive experiments on attribute and graph benchmarks demonstrate the superiority of our approach with respect to state-of-the-art approaches.

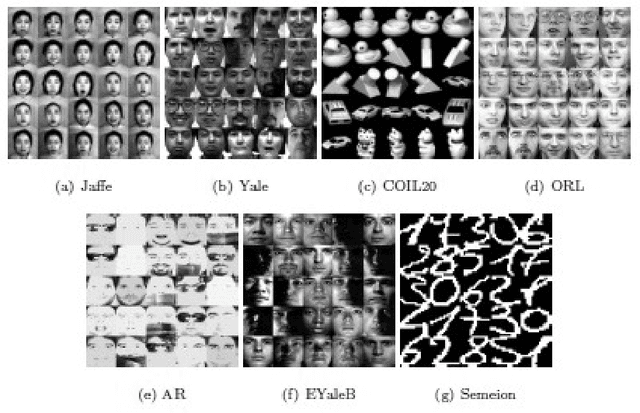

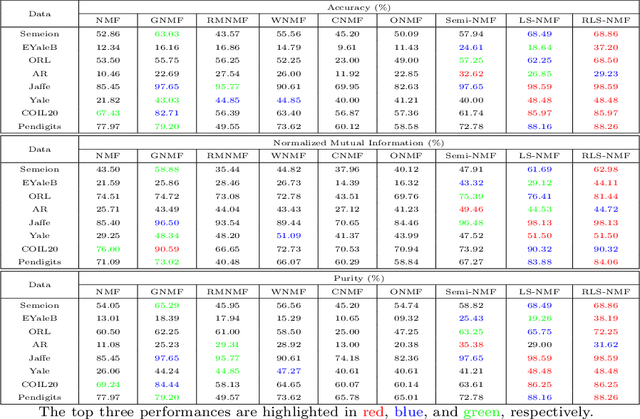

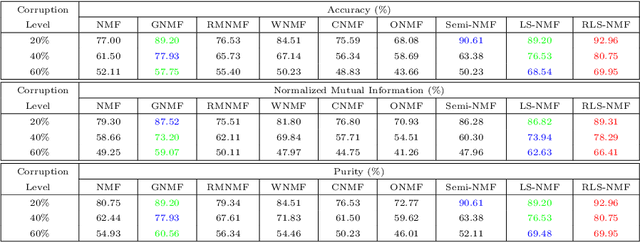

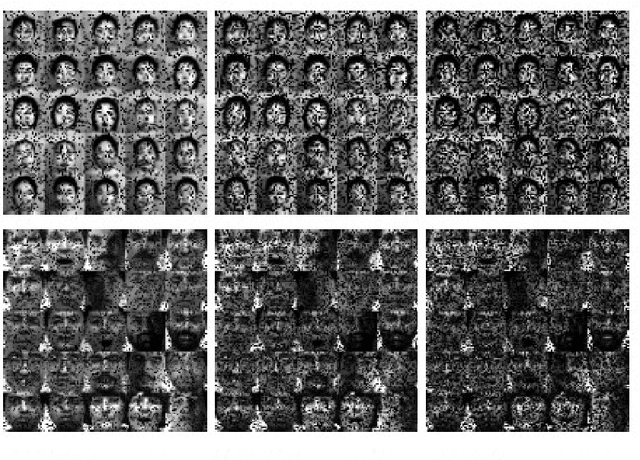

Log-based Sparse Nonnegative Matrix Factorization for Data Representation

Apr 22, 2022

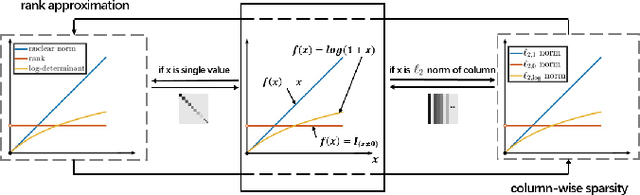

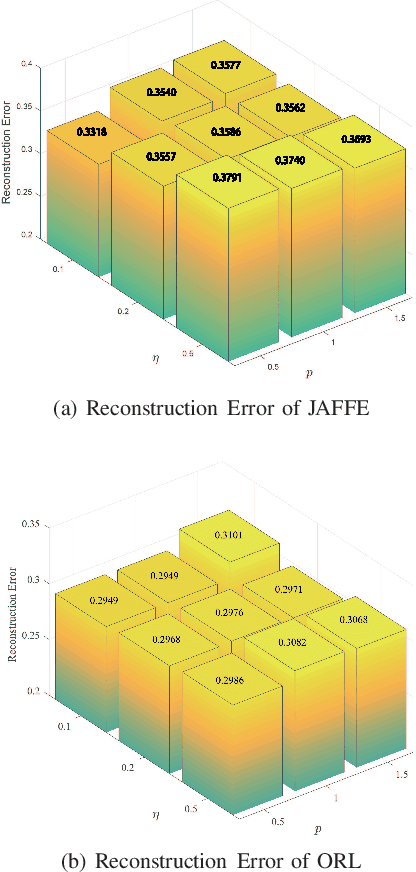

Abstract: Nonnegative matrix factorization (NMF) has been widely studied in recent years due to its effectiveness in representing nonnegative data with parts-based representations. For NMF, a sparser solution implies a better parts-based representation. However, current NMF methods do not always generate sparse solutions. In this paper, we propose a new NMF method with a log-norm imposed on the factor matrices to enhance the sparseness. Moreover, we propose a novel column-wise sparse norm, named the $\ell_{2,\log}$-(pseudo) norm, to enhance the robustness of the proposed method. The $\ell_{2,\log}$-(pseudo) norm is invariant, continuous, and differentiable. For the $\ell_{2,\log}$ regularized shrinkage problem, we derive a closed-form solution, which can be used for other general problems. Efficient multiplicative updating rules are developed for the optimization, which theoretically guarantee the convergence of the objective value sequence. Extensive experimental results confirm the effectiveness of the proposed method, as well as the enhanced sparseness and robustness.

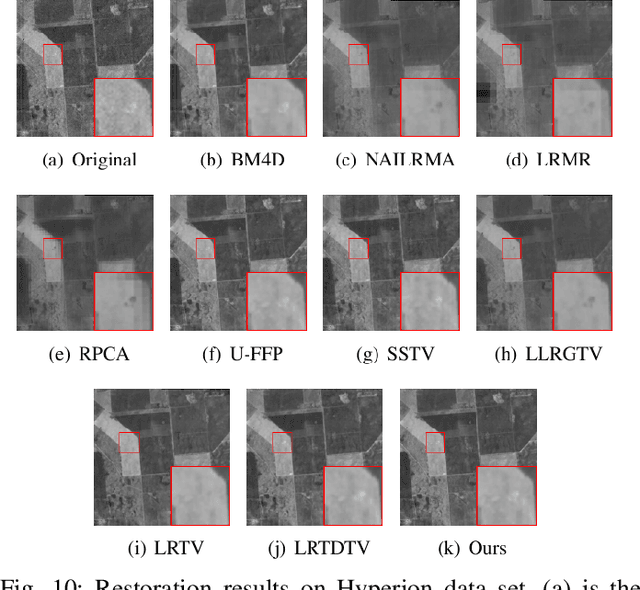

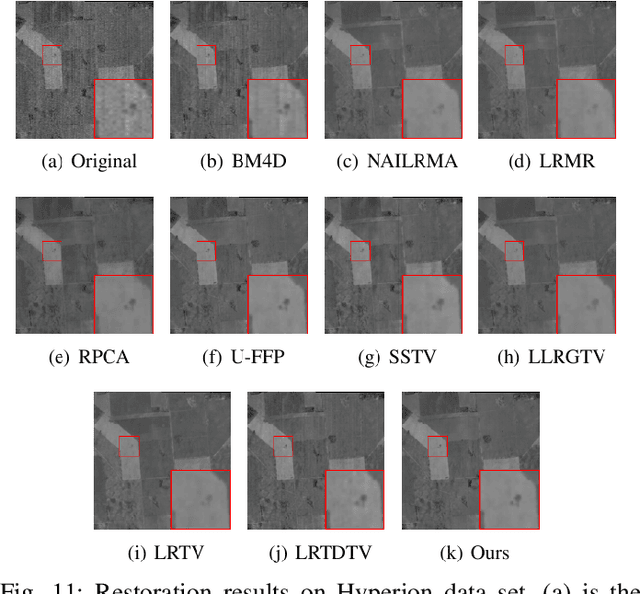

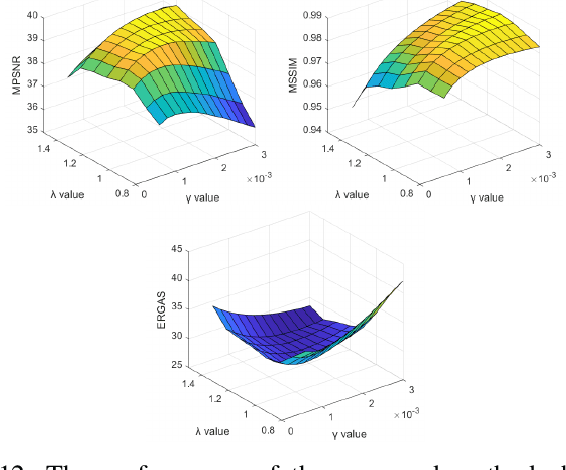

Hyperspectral Image Denoising Using Non-convex Local Low-rank and Sparse Separation with Spatial-Spectral Total Variation Regularization

Jan 08, 2022

Abstract: In this paper, we propose a novel nonconvex approach to robust principal component analysis (RPCA) for hyperspectral image (HSI) denoising, which focuses on simultaneously developing more accurate approximations to both rank and column-wise sparsity for the low-rank and sparse components, respectively. In particular, the new method adopts the log-determinant rank approximation and a novel $\ell_{2,\log}$ norm to restrict the local low-rank and column-wise sparse properties of the component matrices, respectively. For the $\ell_{2,\log}$-regularized shrinkage problem, we develop an efficient, closed-form solution, which is named the $\ell_{2,\log}$-shrinkage operator. The new regularization and the corresponding operator can be generally used in other problems that require column-wise sparsity. Moreover, we impose the spatial-spectral total variation regularization in the log-based nonconvex RPCA model, which enhances the global piece-wise smoothness and spectral consistency from the spatial and spectral views in the recovered HSI. Extensive experiments on both simulated and real HSIs demonstrate the effectiveness of the proposed method in denoising HSIs.

Multilayer Graph Contrastive Clustering Network

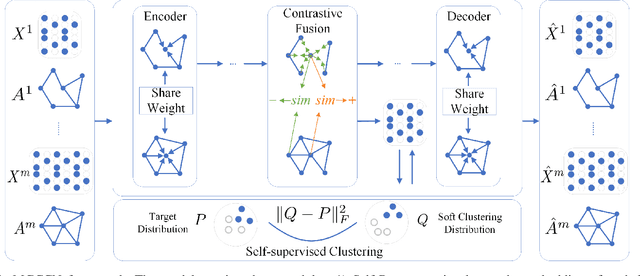

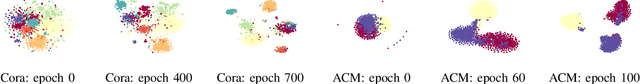

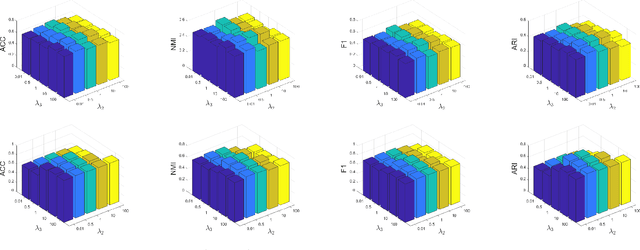

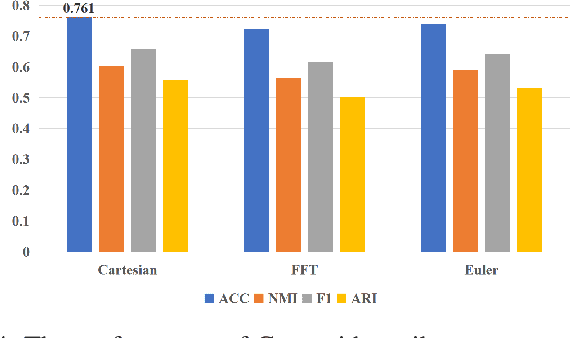

Dec 28, 2021

Abstract: Multilayer graphs have garnered plenty of research attention in many areas due to their high utility in modeling interdependent systems. However, clustering of multilayer graphs, which aims at dividing the graph nodes into categories or communities, is still at a nascent stage. Existing methods are often limited to exploiting the multi-view attributes or multiple networks and ignore more complex and richer network frameworks. To this end, we propose a generic and effective autoencoder framework for multilayer graph clustering named Multilayer Graph Contrastive Clustering Network (MGCCN). MGCCN consists of three modules: (1) An attention mechanism is applied to better capture the relevance between nodes and neighbors for better node embeddings. (2) To better explore the consistent information in different networks, a contrastive fusion strategy is introduced. (3) MGCCN employs a self-supervised component that iteratively strengthens the node embedding and clustering. Extensive experiments on different types of real-world graph data indicate that our proposed method outperforms state-of-the-art techniques.

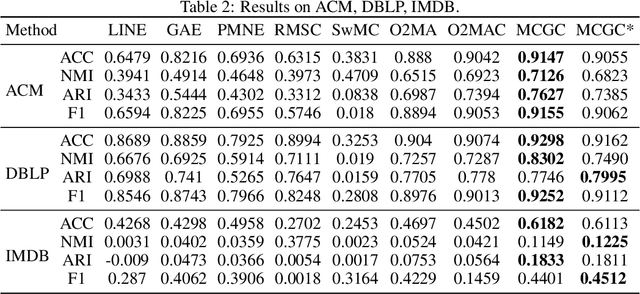

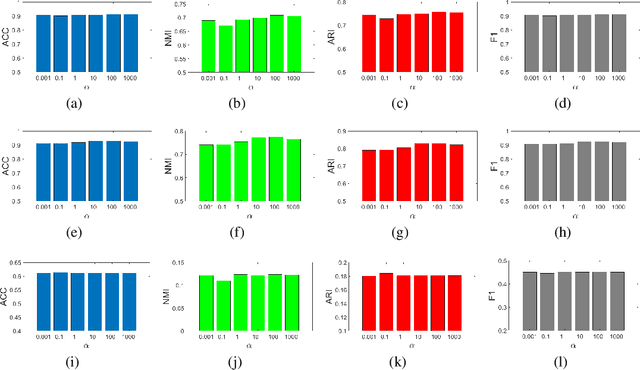

Multi-view Contrastive Graph Clustering

Oct 22, 2021

Abstract: With the explosive growth of information technology, multi-view graph data have become increasingly prevalent and valuable. Most existing multi-view clustering techniques focus on either multiple graphs or multi-view attributes. In this paper, we propose a generic framework to cluster multi-view attributed graph data. Specifically, inspired by the success of contrastive learning, we propose the multi-view contrastive graph clustering (MCGC) method to learn a consensus graph, since the original graph could be noisy or incomplete and is not directly applicable. Our method consists of two key steps: we first filter out the undesirable high-frequency noise while preserving the graph geometric features via graph filtering and obtain a smooth representation of nodes; we then learn a consensus graph regularized by a graph contrastive loss. Results on several benchmark datasets show the superiority of our method with respect to state-of-the-art approaches. In particular, our simple approach outperforms existing deep learning-based methods.

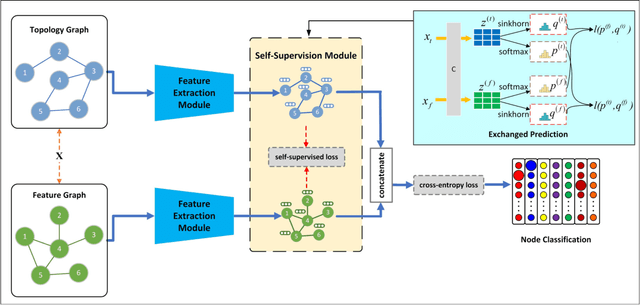

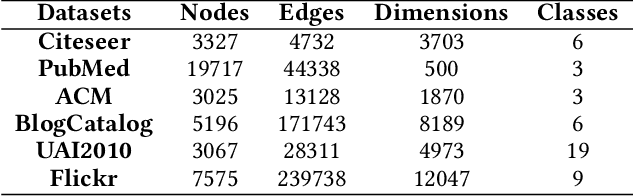

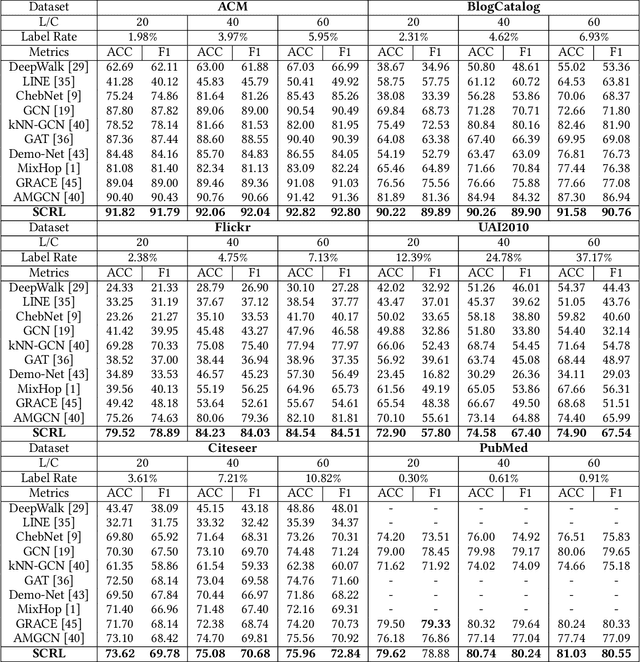

Self-supervised Consensus Representation Learning for Attributed Graph

Aug 10, 2021

Abstract: Attempting to fully exploit the rich information of topological structure and node features in attributed graphs, we introduce a self-supervised learning mechanism to graph representation learning and propose a novel Self-supervised Consensus Representation Learning (SCRL) framework. In contrast to most existing works that only explore one graph, our proposed SCRL method treats a graph from two perspectives: the topology graph and the feature graph. We argue that their embeddings should share some common information, which could serve as a supervisory signal. Specifically, we construct the feature graph of node features via the k-nearest neighbor algorithm. Then graph convolutional network (GCN) encoders extract features from the two graphs respectively. A self-supervised loss is designed to maximize the agreement of the embeddings of the same node in the topology graph and the feature graph. Extensive experiments on real citation networks and social networks demonstrate the superiority of our proposed SCRL over the state-of-the-art methods on the semi-supervised node classification task. Meanwhile, compared with its main competitors, SCRL is rather efficient.
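The feature-graph construction step can be illustrated with a minimal sketch. The abstract only states that a k-nearest neighbor algorithm is used; the Euclidean distance, the symmetrization of edges, and the function name `knn_feature_graph` below are assumptions made for illustration, not details taken from the paper.

```python
import math


def knn_feature_graph(features, k):
    """Build a symmetric 0/1 adjacency matrix linking each node to its k nearest neighbors.

    Assumptions (not from the source): Euclidean distance between feature
    vectors, and an undirected edge whenever either node selects the other.
    """
    n = len(features)
    adj = [[0] * n for _ in range(n)]
    for i in range(n):
        # Distances from node i to every other node, sorted ascending.
        dists = sorted(
            (math.dist(features[i], features[j]), j) for j in range(n) if j != i
        )
        for _, j in dists[:k]:
            adj[i][j] = 1
            adj[j][i] = 1  # symmetrize so the graph is undirected
    return adj
```

The resulting adjacency matrix would play the role of the feature graph that is encoded alongside the topology graph.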

Self-paced Principal Component Analysis

Jun 25, 2021

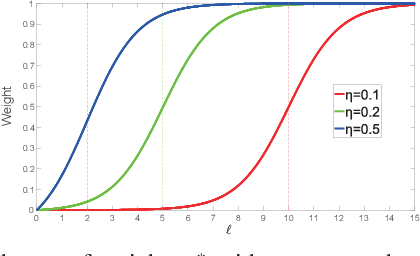

Abstract: Principal Component Analysis (PCA) has been widely used for dimensionality reduction and feature extraction. Robust PCA (RPCA), under different robust distance metrics, such as the $\ell_1$-norm and the $\ell_{2,p}$-norm, can deal with noise or outliers to some extent. However, real-world data may display structures that cannot be fully captured by these simple functions. In addition, existing methods treat complex and simple samples equally. By contrast, a learning pattern typically adopted by human beings is to learn from simple to complex and from less to more. Based on this principle, we propose a novel method called Self-paced PCA (SPCA) to further reduce the effect of noise and outliers. Notably, the complexity of each sample is calculated at the beginning of each iteration in order to integrate samples from simple to more complex into training. Based on an alternating optimization, SPCA finds an optimal projection matrix and filters out outliers iteratively. Theoretical analysis is presented to show the rationality of SPCA. Extensive experiments on popular data sets demonstrate that the proposed method can improve the state-of-the-art results considerably.
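The simple-to-complex idea can be sketched with the classic hard-threshold self-paced weighting, in which a sample participates only when its current loss falls below a growing threshold. This is a generic illustration, not the weighting scheme of SPCA itself, which the abstract does not specify; the function names and the `start` and `growth` parameters are hypothetical, and the losses are held fixed here, whereas a real method would recompute them in every iteration.

```python
def self_paced_weights(losses, threshold):
    """Hard self-paced weights: 1.0 for 'easy' samples (loss below threshold), else 0.0."""
    return [1.0 if loss < threshold else 0.0 for loss in losses]


def self_paced_schedule(losses, start, growth, rounds):
    """Return the weight vector of each round as the threshold grows geometrically.

    Assumption: losses stay fixed across rounds; in an actual alternating
    scheme they would be recomputed after each model update.
    """
    threshold = start
    history = []
    for _ in range(rounds):
        history.append(self_paced_weights(losses, threshold))
        threshold *= growth  # admit progressively harder samples
    return history
```

With a small initial threshold only low-loss samples are used; samples that never fall below the threshold, such as outliers with large reconstruction error, stay excluded.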

Smoothed Multi-View Subspace Clustering

Jun 18, 2021

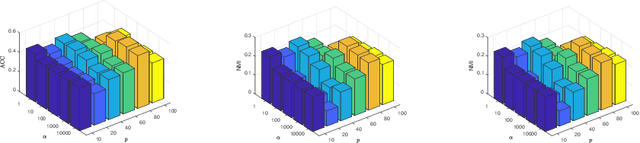

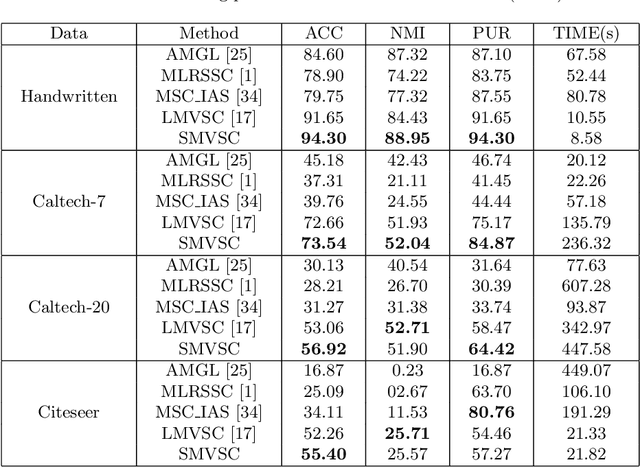

Abstract: In recent years, multi-view subspace clustering has achieved impressive performance due to the exploitation of complementary information across multiple views. However, multi-view data can be very complicated and are not easy to cluster in real-world applications. Most existing methods operate on raw data and may not obtain the optimal solution. In this work, we propose a novel multi-view clustering method named smoothed multi-view subspace clustering (SMVSC) by employing a novel technique, i.e., graph filtering, to obtain a smooth representation for each view, in which similar data points have similar feature values. Specifically, it retains the graph geometric features by applying a low-pass filter. Consequently, it produces a "clustering-friendly" representation and greatly facilitates the downstream clustering task. Extensive experiments on benchmark datasets validate the superiority of our approach. Analysis shows that graph filtering increases the separability of classes.

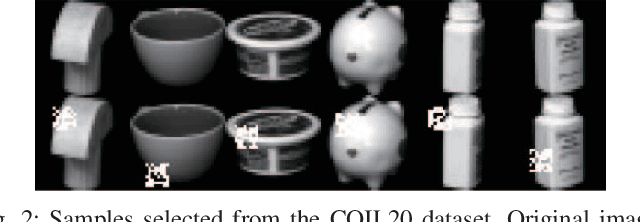

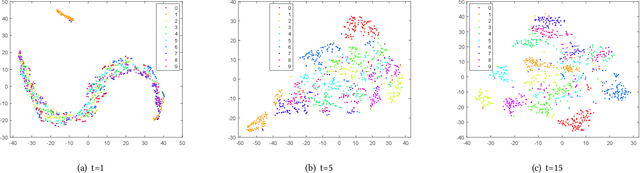

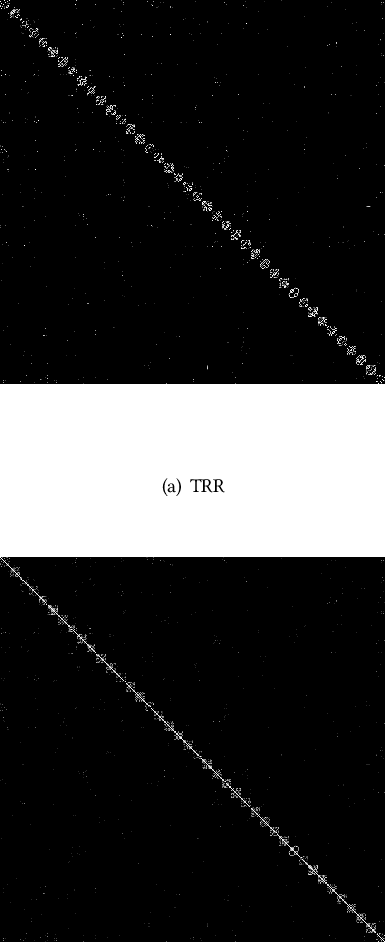

Towards Clustering-friendly Representations: Subspace Clustering via Graph Filtering

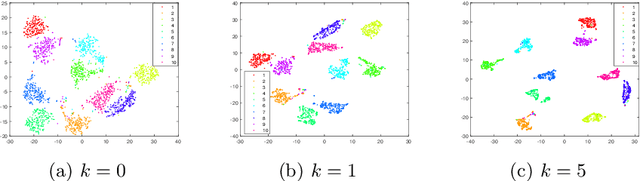

Jun 18, 2021

Abstract: Finding a suitable data representation for a specific task has been shown to be crucial in many applications. The success of subspace clustering depends on the assumption that the data can be separated into different subspaces. However, this simple assumption does not always hold since the raw data might not be separable into subspaces. To recover the "clustering-friendly" representation and facilitate the subsequent clustering, we propose a graph filtering approach by which a smooth representation is achieved. Specifically, it injects graph similarity into data features by applying a low-pass filter to extract useful data representations for clustering. Extensive experiments on image and document clustering datasets demonstrate that our method improves upon state-of-the-art subspace clustering techniques. In particular, its comparable performance with deep learning methods emphasizes the effectiveness of the simple graph filtering scheme for many real-world applications. An ablation study shows that graph filtering can remove noise, preserve structure in the image, and increase the separability of classes.
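Low-pass graph filtering of features can be sketched as follows. The abstract does not give the filter's form; the sketch assumes one common choice, multiplying the features `order` times by H = I - L/2, where L is the symmetric normalized graph Laplacian. The function names, the `order` parameter, and the handling of isolated nodes are illustrative assumptions.

```python
import math


def normalized_laplacian(adj):
    """Symmetric normalized Laplacian L = I - D^{-1/2} A D^{-1/2} for nonnegative weights."""
    n = len(adj)
    deg = [sum(row) for row in adj]
    lap = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                # Assumption: isolated nodes get a zero row so filtering leaves them unchanged.
                lap[i][j] = 1.0 if deg[i] > 0 else 0.0
            elif adj[i][j]:
                lap[i][j] = -adj[i][j] / math.sqrt(deg[i] * deg[j])
    return lap


def low_pass_filter(adj, features, order=2):
    """Smooth node features by applying H = I - L/2 to them `order` times."""
    n = len(adj)
    lap = normalized_laplacian(adj)
    h = [
        [(1.0 if i == j else 0.0) - 0.5 * lap[i][j] for j in range(n)]
        for i in range(n)
    ]
    out = [row[:] for row in features]
    dims = len(features[0])
    for _ in range(order):
        out = [
            [sum(h[i][k] * out[k][d] for k in range(n)) for d in range(dims)]
            for i in range(n)
        ]
    return out
```

For two connected nodes with features `[[0.0], [2.0]]`, a single pass (`order=1`) averages them to `[[1.0], [1.0]]`, which shows how neighboring points are pulled toward similar feature values.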
