Yong Xue

Towards Generative Location Awareness for Disaster Response: A Probabilistic Cross-view Geolocalization Approach

Dec 23, 2025

Abstract:As Earth's climate changes, it is impacting disasters and extreme weather events across the planet. Record-breaking heat waves, drenching rainfalls, extreme wildfires, and widespread flooding during hurricanes are all becoming more frequent and more intense. Rapid and efficient response to disaster events is essential for climate resilience and sustainability. A key challenge in disaster response is to accurately and quickly identify disaster locations to support decision-making and resources allocation. In this paper, we propose a Probabilistic Cross-view Geolocalization approach, called ProbGLC, exploring new pathways towards generative location awareness for rapid disaster response. Herein, we combine probabilistic and deterministic geolocalization models into a unified framework to simultaneously enhance model explainability (via uncertainty quantification) and achieve state-of-the-art geolocalization performance. Designed for rapid diaster response, the ProbGLC is able to address cross-view geolocalization across multiple disaster events as well as to offer unique features of probabilistic distribution and localizability score. To evaluate the ProbGLC, we conduct extensive experiments on two cross-view disaster datasets (i.e., MultiIAN and SAGAINDisaster), consisting diverse cross-view imagery pairs of multiple disaster types (e.g., hurricanes, wildfires, floods, to tornadoes). Preliminary results confirms the superior geolocalization accuracy (i.e., 0.86 in Acc@1km and 0.97 in Acc@25km) and model explainability (i.e., via probabilistic distributions and localizability scores) of the proposed ProbGLC approach, highlighting the great potential of leveraging generative cross-view approach to facilitate location awareness for better and faster disaster response. The data and code is publicly available at https://github.com/bobleegogogo/ProbGLC

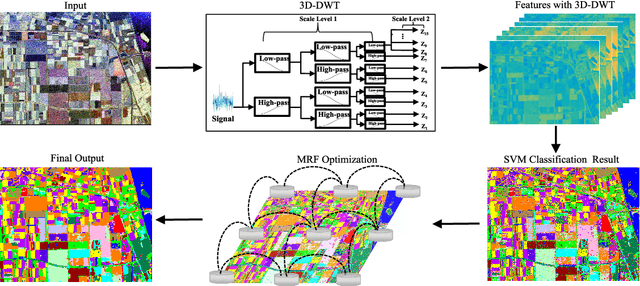

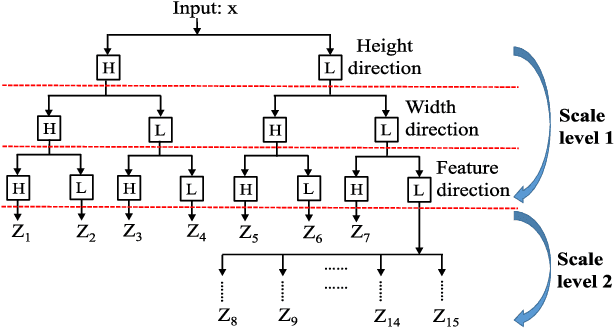

Polarimetric SAR Image Semantic Segmentation with 3D Discrete Wavelet Transform and Markov Random Field

Aug 05, 2020

Abstract:Polarimetric synthetic aperture radar (PolSAR) image segmentation is currently of great importance in image processing for remote sensing applications. However, it is a challenging task due to two main reasons. Firstly, the label information is difficult to acquire due to high annotation costs. Secondly, the speckle effect embedded in the PolSAR imaging process remarkably degrades the segmentation performance. To address these two issues, we present a contextual PolSAR image semantic segmentation method in this paper.With a newly defined channelwise consistent feature set as input, the three-dimensional discrete wavelet transform (3D-DWT) technique is employed to extract discriminative multi-scale features that are robust to speckle noise. Then Markov random field (MRF) is further applied to enforce label smoothness spatially during segmentation. By simultaneously utilizing 3D-DWT features and MRF priors for the first time, contextual information is fully integrated during the segmentation to ensure accurate and smooth segmentation. To demonstrate the effectiveness of the proposed method, we conduct extensive experiments on three real benchmark PolSAR image data sets. Experimental results indicate that the proposed method achieves promising segmentation accuracy and preferable spatial consistency using a minimal number of labeled pixels.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge