Yash Jangir

Dexterous Manipulation Policies from RGB Human Videos via 4D Hand-Object Trajectory Reconstruction

Feb 09, 2026

Abstract:Multi-finger robotic hand manipulation and grasping are challenging due to the high-dimensional action space and the difficulty of acquiring large-scale training data. Existing approaches largely rely on human teleoperation with wearable devices or specialized sensing equipment to capture hand-object interactions, which limits scalability. In this work, we propose VIDEOMANIP, a device-free framework that learns dexterous manipulation directly from RGB human videos. Leveraging recent advances in computer vision, VIDEOMANIP reconstructs explicit 4D robot-object trajectories from monocular videos by estimating human hand poses and object meshes, and retargets the reconstructed human motions to robotic hands for manipulation learning. To make the reconstructed robot data suitable for dexterous manipulation training, we introduce hand-object contact optimization with interaction-centric grasp modeling, as well as a demonstration synthesis strategy that generates diverse training trajectories from a single video, enabling generalizable policy learning without additional robot demonstrations. In simulation, the learned grasping model achieves a 70.25% success rate across 20 diverse objects using the Inspire Hand. In the real world, manipulation policies trained from RGB videos achieve an average 62.86% success rate across seven tasks using the LEAP Hand, outperforming retargeting-based methods by 15.87%. Project videos are available at videomanip.github.io.

Image-based Visual Servo Control for Aerial Manipulation Using a Fully-Actuated UAV

Jun 28, 2023

Abstract:Using Unmanned Aerial Vehicles (UAVs) to perform high-altitude manipulation tasks, beyond just passive visual applications, can reduce the time, cost, and risk for human workers. Prior research on aerial manipulation has relied on either ground-truth state estimates or GPS/total station with some Simultaneous Localization and Mapping (SLAM) algorithms, which may not be practical for many applications close to infrastructure with degraded GPS signal or in featureless environments. Visual servoing can avoid the need to estimate robot pose. Existing works on visual servoing for aerial manipulation either address solely end-effector position control or rely on precise velocity measurements and pre-defined visual markers with known patterns. Furthermore, most previous work used under-actuated UAVs, resulting in complicated mechanical and hence control design for the end-effector. This paper develops an image-based visual servo control strategy for bridge maintenance using a fully-actuated UAV. The main components are (1) a visual line detection and tracking system and (2) a hybrid impedance force and motion control system. Our approach does not rely on either robot pose/velocity estimation from an external localization system or pre-defined visual markers. The complexity of the mechanical system and controller architecture is also minimized due to the fully-actuated nature of the platform. Experiments show that the system can effectively execute motion tracking and force holding using only visual guidance for bridge painting. To the best of our knowledge, this is one of the first studies on aerial manipulation using visual servoing that is capable of achieving both motion and force control without the need for external pose/velocity information or pre-defined visual guidance.

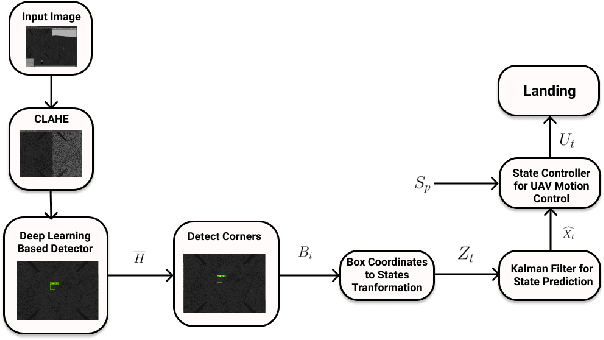

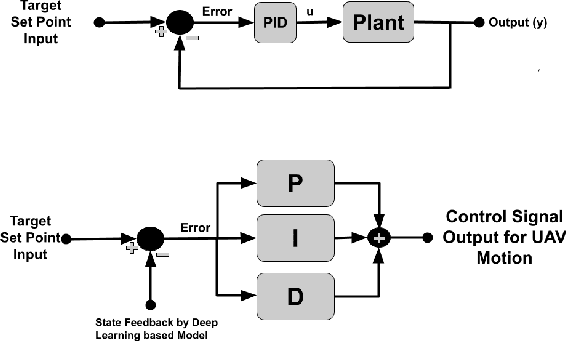

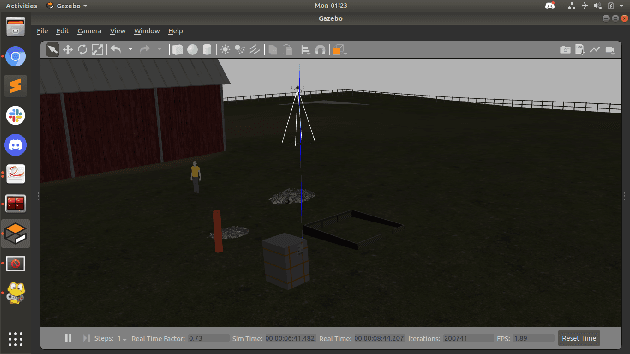

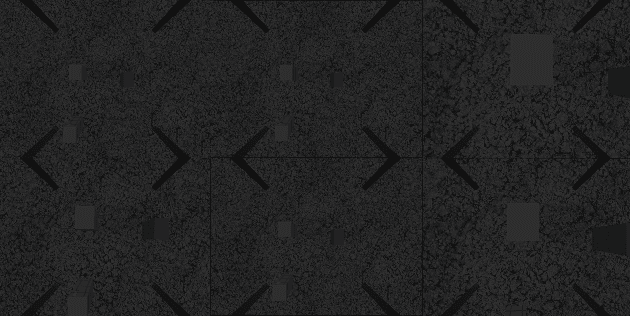

A Generalized Kalman Filter Augmented Deep-Learning based Approach for Autonomous Landing in MAVs

Sep 30, 2021

Abstract:Autonomous landing systems for Micro Aerial Vehicles (MAVs) have been proposed using various combinations of GPS-based, vision, and fiducial tag-based schemes. Landing is a critical activity that a MAV performs, and poor GPS resolution, degraded camera images, fiducial tags not meeting required specifications, and environmental factors all pose challenges. An ideal solution to MAV landing should account for these challenges and for operational challenges that could cause unplanned movements and landings. Most approaches do not attempt to solve this general problem but look at restricted sub-problems with at least one well-defined parameter. In this work, we propose a generalized end-to-end landing site detection system using a two-stage training mechanism, which makes no prior assumption about the landing site. Experimental results show that we achieve comparable accuracy and outperform existing methods in the time required for landing.
