Wenxi Liu

Learning Resilient Behaviors for Navigation Under Uncertainty Environments

Oct 22, 2019

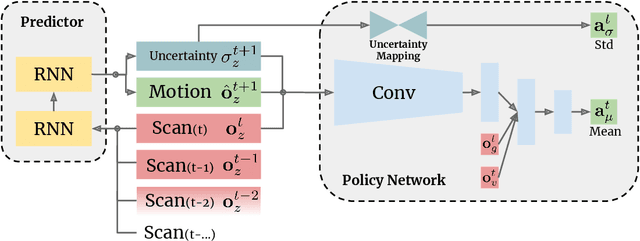

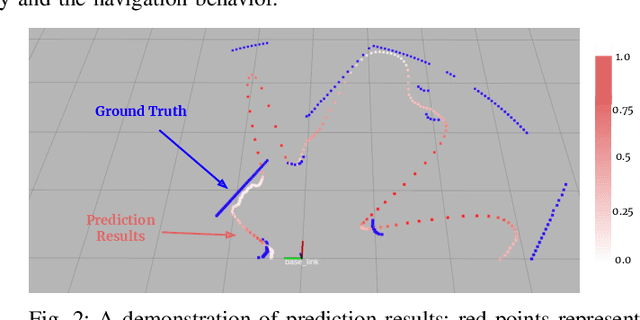

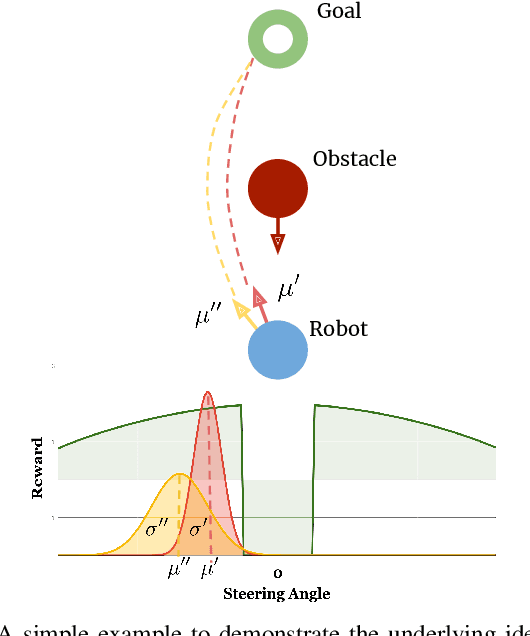

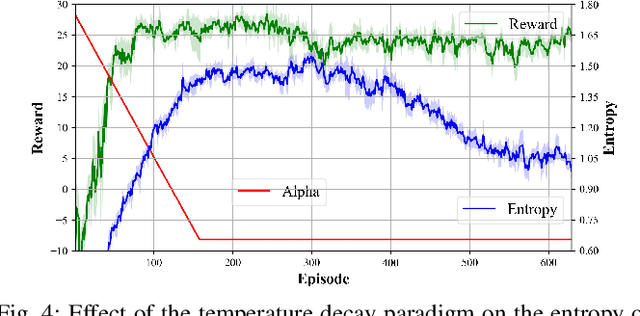

Abstract: Deep reinforcement learning has great potential to acquire complex, adaptive behaviors for autonomous agents automatically. However, the underlying neural network policies have not been widely deployed in real-world applications, especially in safety-critical tasks (e.g., autonomous driving). One of the reasons is that the learned policy cannot perform flexible and resilient behaviors as traditional methods do to adapt to diverse environments. In this paper, we consider the problem of a mobile robot learning adaptive and resilient behaviors for navigating in unseen, uncertain environments while avoiding collisions. We present a novel approach for uncertainty-aware navigation by introducing an uncertainty-aware predictor to model the environmental uncertainty, and we propose a novel uncertainty-aware navigation network to learn resilient behaviors in previously unknown environments. To train the proposed uncertainty-aware network more stably and efficiently, we present the temperature decay training paradigm, which balances exploration and exploitation during the training process. Our experimental evaluation demonstrates that our approach can learn resilient behaviors in diverse environments and generate adaptive trajectories according to environmental uncertainties.
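The abstract does not spell out the temperature decay schedule, so the following is only a generic sketch of the idea of trading exploration for exploitation with a decaying temperature. It assumes a softmax over action scores and an exponential schedule; the function names and the values `t_start`, `t_end`, and `decay` are hypothetical and are not taken from the paper.

```python
import math


def softmax_with_temperature(scores, temperature):
    """Turn action scores into probabilities; a higher temperature flattens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def decayed_temperature(step, t_start=5.0, t_end=0.1, decay=0.99):
    """Hypothetical exponential schedule, clipped at a floor of t_end."""
    return max(t_end, t_start * decay ** step)


scores = [1.0, 2.0, 3.0]
# Early in training: high temperature, near-uniform sampling (exploration).
early = softmax_with_temperature(scores, decayed_temperature(0))
# Late in training: low temperature, mass concentrates on the best score (exploitation).
late = softmax_with_temperature(scores, decayed_temperature(1000))
print(early)
print(late)
```

With a schedule like this, the same scores yield a near-uniform distribution at the start of training and a sharply peaked one at the end.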

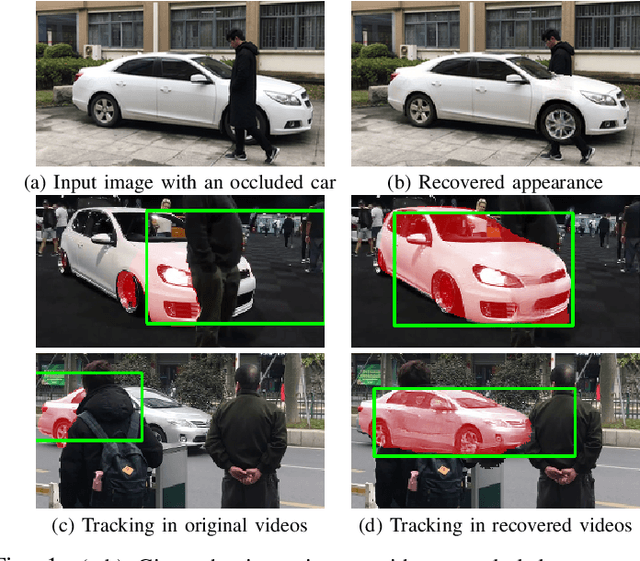

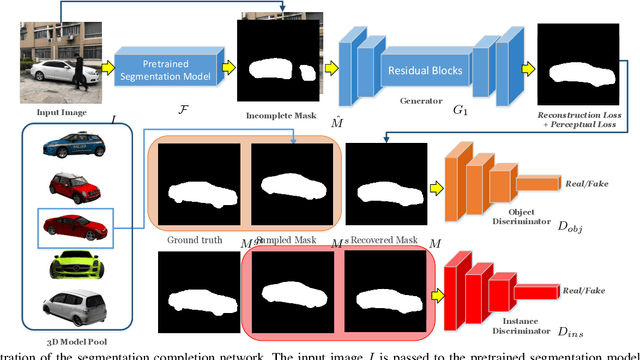

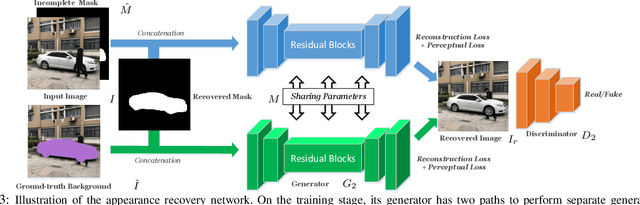

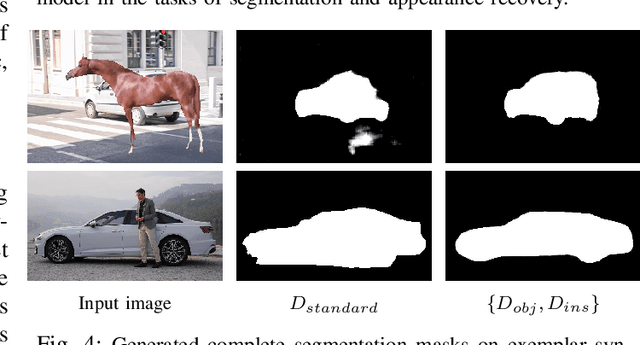

Visualizing the Invisible: Occluded Vehicle Segmentation and Recovery

Jul 22, 2019

Abstract: In this paper, we propose a novel iterative multi-task framework to complete the segmentation mask of an occluded vehicle and recover the appearance of its invisible parts. In particular, to improve the quality of the segmentation completion, we present two coupled discriminators and introduce an auxiliary 3D model pool for sampling authentic silhouettes as adversarial samples. In addition, we propose a two-path structure with a shared network to enhance the appearance recovery capability. By iteratively performing the segmentation completion and the appearance recovery, the results are progressively refined. To evaluate our method, we present a dataset, the Occluded Vehicle dataset, containing synthetic and real-world occluded vehicle images. We conduct comparison experiments on this dataset and demonstrate that our model outperforms the state of the art in recovering the segmentation mask and appearance of occluded vehicles. Moreover, we demonstrate that our appearance recovery approach can benefit occluded vehicle tracking in real-world videos.

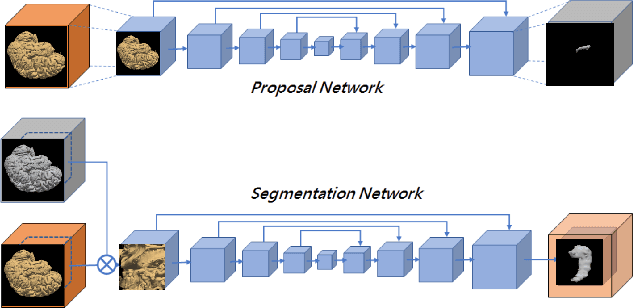

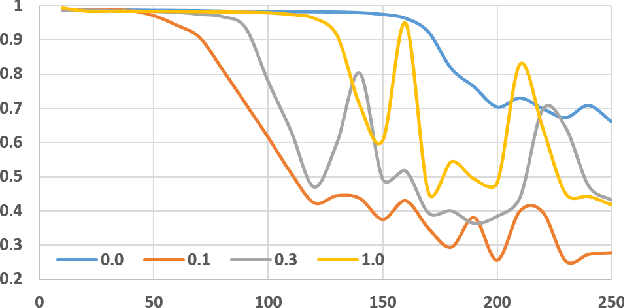

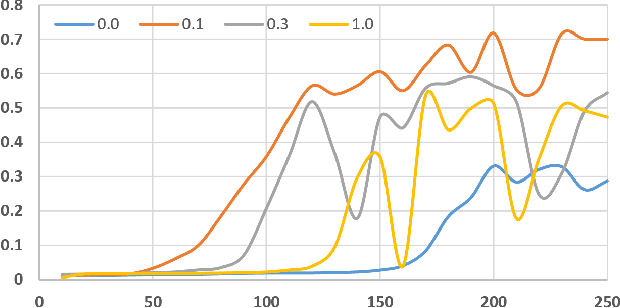

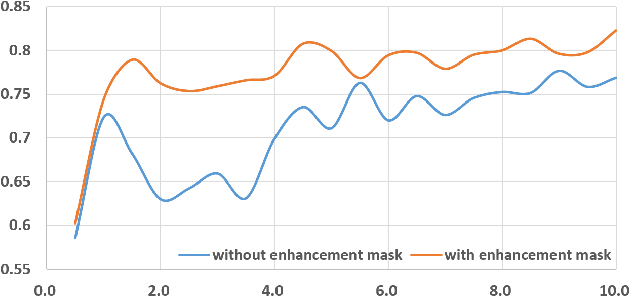

Enhancement Mask for Hippocampus Detection and Segmentation

Feb 12, 2019

Abstract: Detection and segmentation of the hippocampal structures in volumetric brain images is a challenging problem in the area of medical imaging. In this paper, we propose a two-stage 3D fully convolutional neural network that efficiently detects and segments the hippocampal structures. In particular, our approach first localizes the hippocampus from the whole volumetric image while obtaining a proposal for a rough segmentation. After localization, we apply the proposal as an enhancement mask to extract the fine structure of the hippocampus. The proposed method has been evaluated on a public dataset and compared with state-of-the-art approaches. Results indicate the effectiveness of the proposed method, which yields mean Dice Similarity Coefficients (DSC) of $0.897$ and $0.900$ for the left and right hippocampus, respectively. Furthermore, extensive experiments show that the proposed enhancement mask layer has remarkable benefits for accelerating the training process and obtaining more accurate segmentation results.

* This paper has been published in the proceedings of IEEE International Conference on Information and Automation 2018
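For readers unfamiliar with the metric reported in the abstract above, the Dice Similarity Coefficient of a predicted mask $A$ and a ground-truth mask $B$ is $2|A \cap B| / (|A| + |B|)$. The snippet below is a minimal sketch of that standard definition on flattened binary masks. The toy masks are made up for illustration, and returning 1.0 when both masks are empty is one common convention, not something stated in the paper.

```python
def dice_coefficient(pred, target):
    """Dice Similarity Coefficient of two binary masks given as flat 0/1 sequences."""
    if len(pred) != len(target):
        raise ValueError("masks must have the same number of voxels")
    # Voxels labeled foreground in both masks.
    intersection = sum(1 for p, t in zip(pred, target) if p and t)
    # Sum of the foreground sizes of the two masks.
    total = sum(1 for p in pred if p) + sum(1 for t in target if t)
    if total == 0:
        return 1.0  # both masks empty: treated as perfect agreement by convention
    return 2.0 * intersection / total


# Toy example: 3 predicted voxels, 3 true voxels, 2 of them shared.
pred = [1, 1, 1, 0, 0, 0]
target = [0, 1, 1, 1, 0, 0]
print(dice_coefficient(pred, target))  # 2 * 2 / (3 + 3)
```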

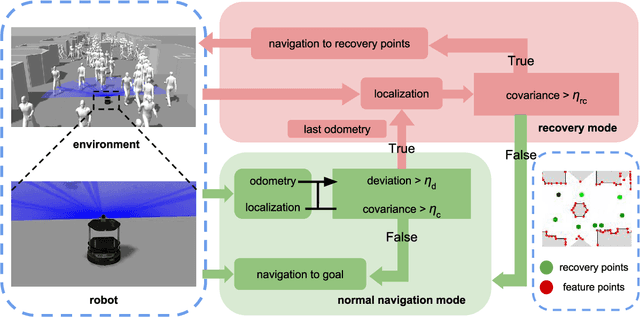

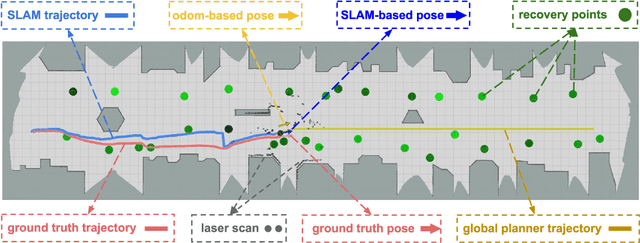

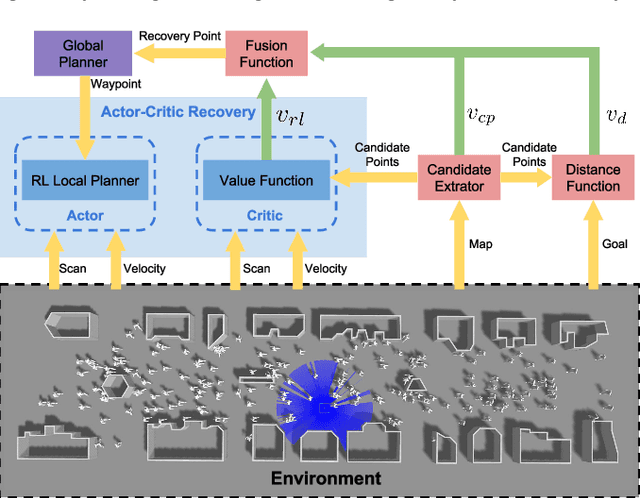

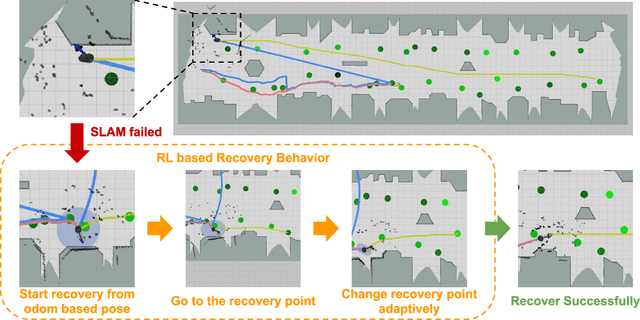

Getting Robots Unfrozen and Unlost in Dense Pedestrian Crowds

Sep 30, 2018

Abstract: We aim to enable a mobile robot to navigate through environments with dense crowds, e.g., shopping malls, canteens, train stations, or airport terminals. In these challenging environments, existing approaches suffer from two common problems: the robot may get frozen and cannot make any progress toward its goal, or it may get lost due to severe occlusions inside a crowd. Here we propose a navigation framework that handles the robot freezing and the navigation lost problems simultaneously. First, we enhance the robot's mobility and unfreeze the robot in the crowd using a reinforcement learning based local navigation policy developed in our previous work~\cite{long2017towards}, which naturally takes into account the coordination between the robot and the human. Second, the robot takes advantage of its excellent local mobility to recover from its localization failure. In particular, it dynamically chooses to approach a set of recovery positions with rich features. To the best of our knowledge, our method is the first approach that simultaneously solves the freezing problem and the navigation lost problem in dense crowds. We evaluate our method in both simulated and real-world environments and demonstrate that it outperforms the state-of-the-art approaches. Videos are available at https://sites.google.com/view/rlslam.

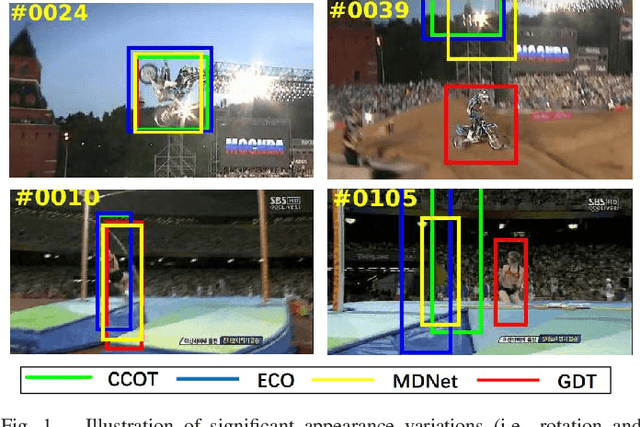

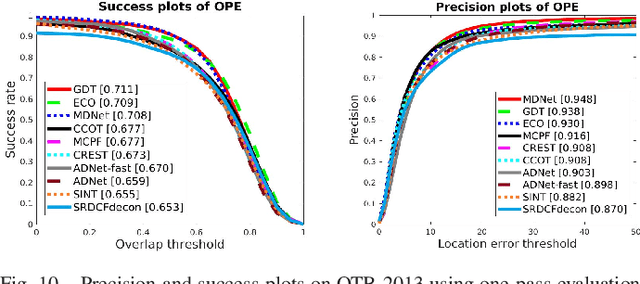

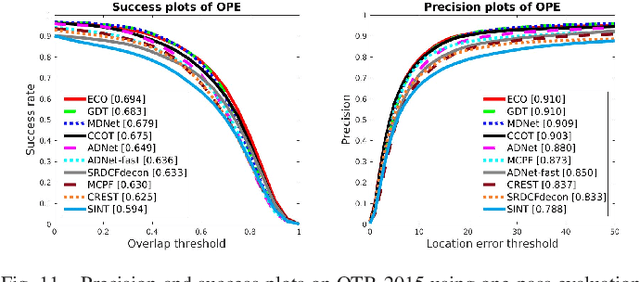

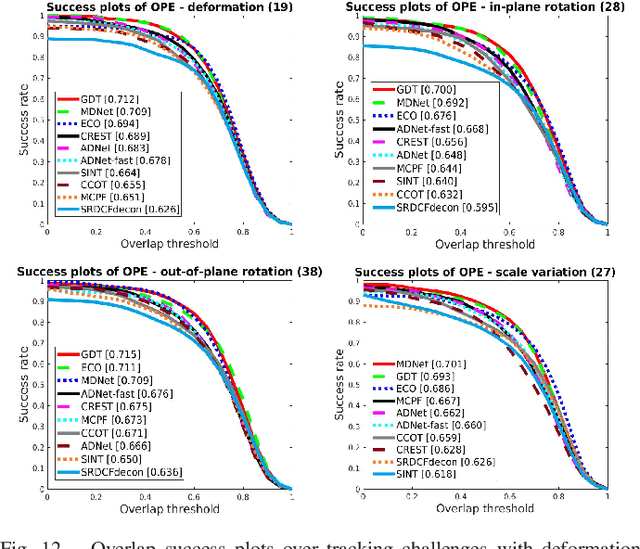

Deformable Object Tracking with Gated Fusion

Sep 27, 2018

Abstract: The tracking-by-detection framework has received growing attention through its integration with the Convolutional Neural Network (CNN). Existing methods, however, fail to track objects with severe appearance variations. This is because the traditional convolutional operation is performed on fixed grids, and thus may not be able to find the correct response while the object is changing pose or under varying environmental conditions. In this paper, we propose a deformable convolution layer to enrich the target appearance representations in the tracking-by-detection framework. We aim to capture the target appearance variations via deformable convolution and supplement its original appearance through residual learning. Meanwhile, we propose a gated fusion scheme to control how the variations captured by the deformable convolution affect the original appearance. The enriched feature representation through deformable convolution helps the CNN classifier discriminate between the target object and the background. Extensive experiments on the standard benchmarks show that the proposed tracker performs favorably against state-of-the-art methods.
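The abstract describes the gated fusion only at a high level, so the following is a rough sketch of one way a gate could control a residual contribution, assuming an element-wise form `original + sigmoid(gate) * variation`. The function names, the element-wise formulation, and the toy feature values are all assumptions for illustration and may differ from the paper's actual layer.

```python
import math


def sigmoid(x):
    """Logistic function mapping a gate logit to a weight in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def gated_fusion(original, variation, gate_logits):
    """Add the variation features to the original ones, scaled per element by a gate.

    A gate weight near 0 keeps the original appearance feature unchanged;
    a gate weight near 1 adds the full residual variation.
    """
    return [o + sigmoid(g) * v for o, v, g in zip(original, variation, gate_logits)]


original = [1.0, 1.0, 1.0]        # hypothetical features from a standard convolution
variation = [2.0, 2.0, 2.0]       # hypothetical features from a deformable convolution
gate_logits = [-50.0, 0.0, 50.0]  # gate nearly closed, half open, nearly open
print(gated_fusion(original, variation, gate_logits))
```

In a trained network the gate logits would themselves be predicted from the features rather than fixed by hand as they are here.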

An Intelligent Extraversion Analysis Scheme from Crowd Trajectories for Surveillance

Sep 27, 2018

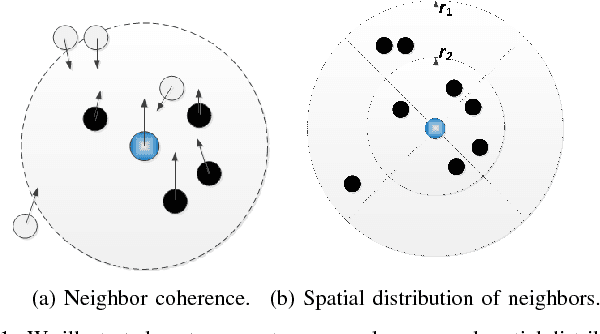

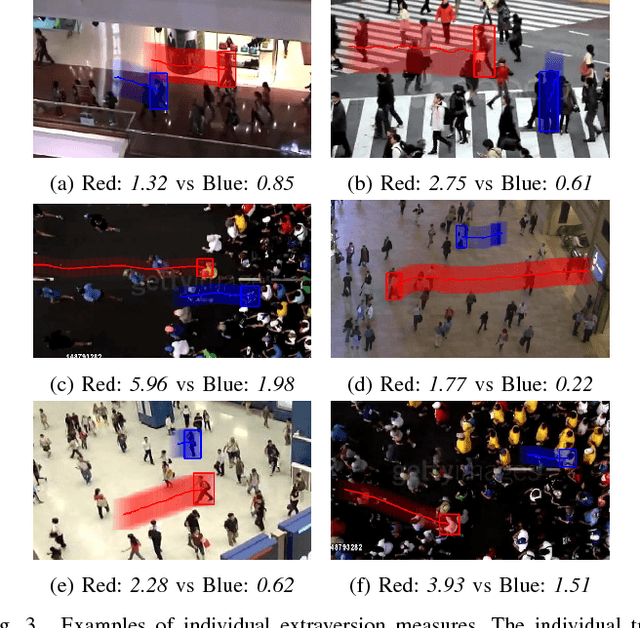

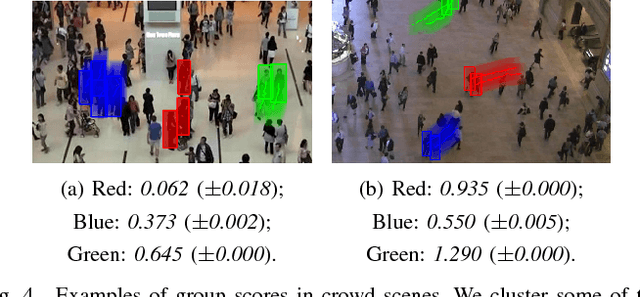

Abstract: In recent years, crowd analysis has become important for applications such as smart cities, intelligent transportation systems, customer behavior prediction, and visual surveillance. Understanding the characteristics of individual motion in a crowd can be beneficial for social event detection and abnormality detection, but it has rarely been studied. In this paper, we focus on the extraversion measure of individual motions in crowds based on trajectory data. Extraversion is one of the typical personality traits often observed in human crowd behaviors, and it can reflect not only the characteristics of the individual motion but also those of the holistic crowd motion. To the best of our knowledge, this is the first attempt to analyze individual extraversion of crowd motions based on trajectories. To accomplish this, we first present an effective composite motion descriptor, which integrates the basic individual motion information and social metrics, to describe the extraversion of each individual in a crowd. The social metrics consider both the neighboring distribution and their interaction pattern. Since our major goal is to learn a universal scoring function that can measure the degree of extraversion across varied crowd scenes, we incorporate and adapt the active learning technique to the relative attribute approach. Specifically, we assume that the social groups in any crowd contain individuals with a similar degree of extraversion. Based on this assumption, we significantly reduce the computation cost by clustering and ranking the trajectories actively. Finally, we demonstrate the performance of our proposed method by measuring the degree of extraversion for real individual trajectories in crowds and analyzing crowd scenes from a real-world dataset.

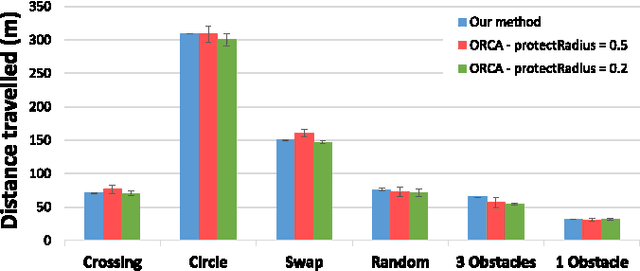

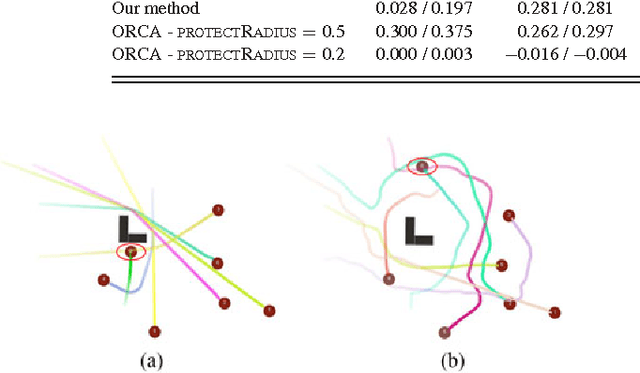

Fully Distributed Multi-Robot Collision Avoidance via Deep Reinforcement Learning for Safe and Efficient Navigation in Complex Scenarios

Aug 11, 2018

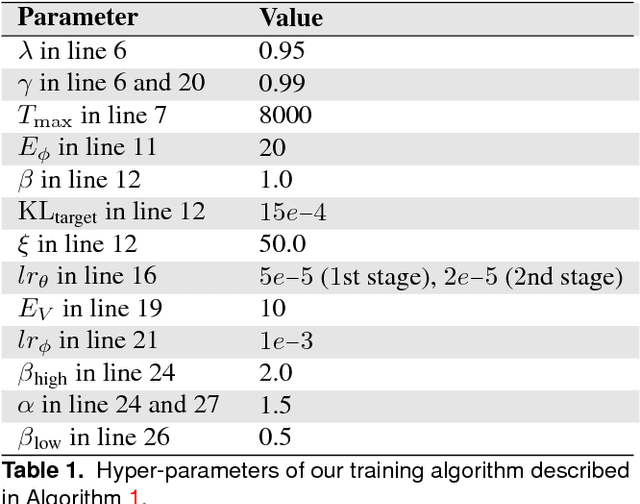

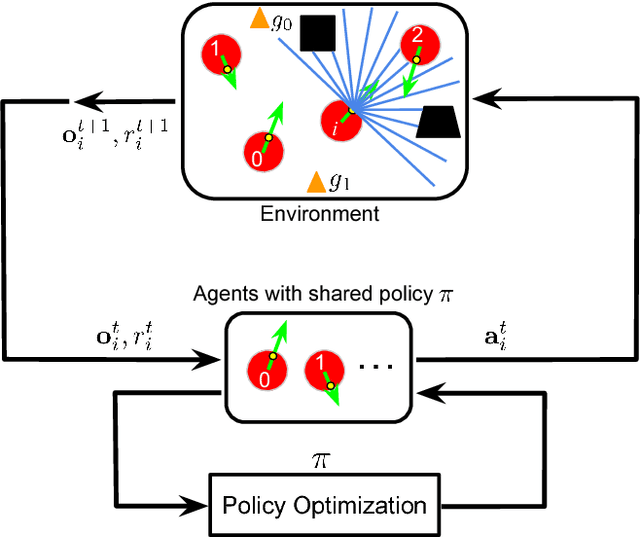

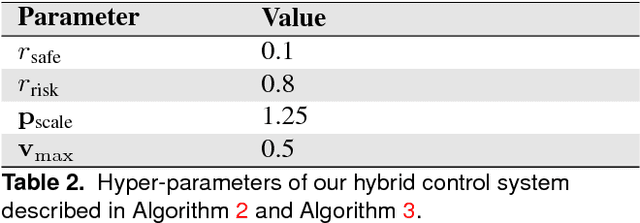

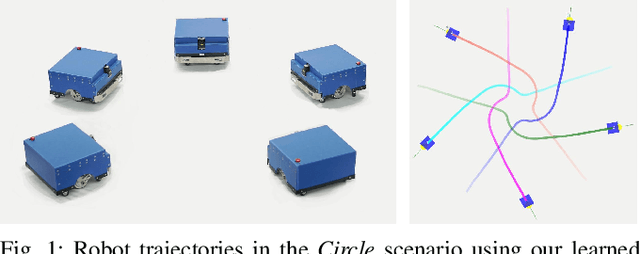

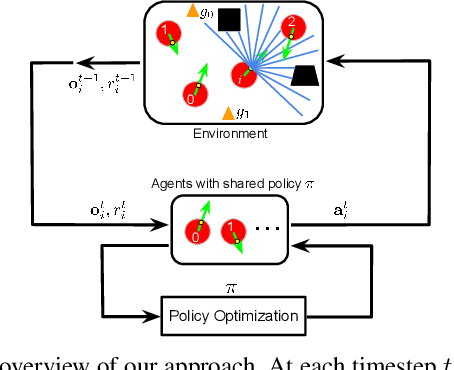

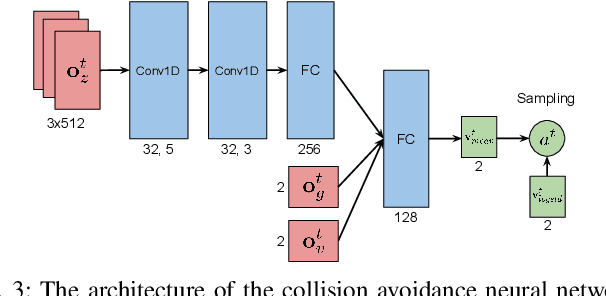

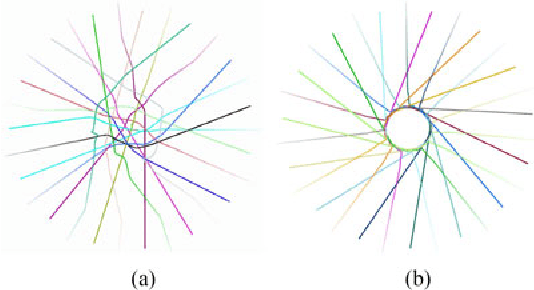

Abstract: In this paper, we present a decentralized sensor-level collision avoidance policy for multi-robot systems, which shows promising results in practical applications. In particular, our policy directly maps raw sensor measurements to an agent's steering commands in terms of the movement velocity. As a first step toward reducing the performance gap between decentralized and centralized methods, we present a multi-scenario multi-stage training framework to learn an optimal policy. The policy is trained over a large number of robots in rich, complex environments simultaneously using a policy gradient based reinforcement learning algorithm. The learning algorithm is also integrated into a hybrid control framework to further improve the policy's robustness and effectiveness. We validate the learned sensor-level collision avoidance policy in a variety of simulated and real-world scenarios with thorough performance evaluations for large-scale multi-robot systems. The generalization of the learned policy is verified in a set of unseen scenarios including the navigation of a group of heterogeneous robots and a large-scale scenario with 100 robots. Although the policy is trained using simulation data only, we have successfully deployed it on physical robots with shapes and dynamics characteristics that are different from the simulated agents, in order to demonstrate the controller's robustness against the sim-to-real modeling error. Finally, we show that the collision-avoidance policy learned from multi-robot navigation tasks provides an excellent solution to the safe and effective autonomous navigation for a single robot working in a dense real human crowd. Our learned policy enables a robot to make effective progress in a crowd without getting stuck. Videos are available at https://sites.google.com/view/hybridmrca.

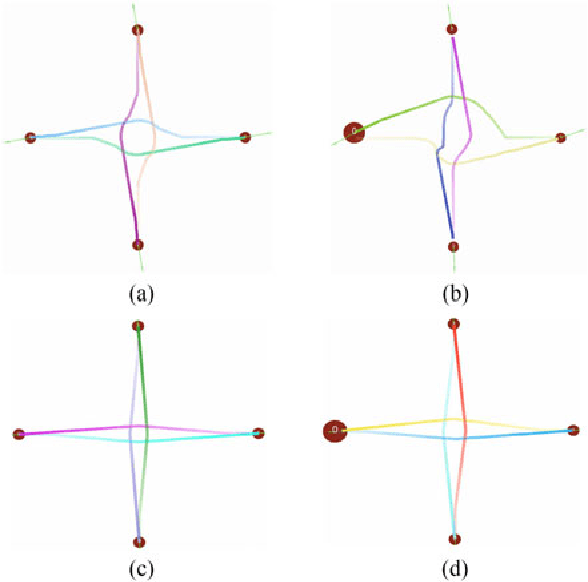

Towards Optimally Decentralized Multi-Robot Collision Avoidance via Deep Reinforcement Learning

May 20, 2018

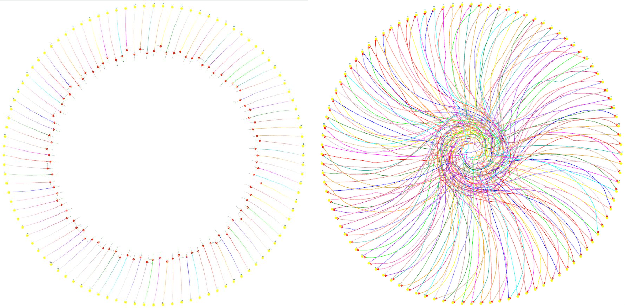

Abstract: Developing a safe and efficient collision avoidance policy for multiple robots is challenging in decentralized scenarios where each robot generates its paths without observing other robots' states and intents. While other distributed multi-robot collision avoidance systems exist, they often require extracting agent-level features to plan a local collision-free action, which can be computationally prohibitive and not robust. More importantly, in practice the performance of these methods is much lower than that of their centralized counterparts. We present a decentralized sensor-level collision avoidance policy for multi-robot systems, which directly maps raw sensor measurements to an agent's steering commands in terms of movement velocity. As a first step toward reducing the performance gap between decentralized and centralized methods, we present a multi-scenario multi-stage training framework to find an optimal policy, which is trained over a large number of robots in rich, complex environments simultaneously using a policy gradient based reinforcement learning algorithm. We validate the learned sensor-level collision avoidance policy in a variety of simulated scenarios with thorough performance evaluations and show that the final learned policy is able to find time-efficient, collision-free paths for a large-scale robot system. We also demonstrate that the learned policy generalizes well to new scenarios that do not appear during the entire training period, including navigating a heterogeneous group of robots and a large-scale scenario with 100 robots. Videos are available at https://sites.google.com/view/drlmaca.

Deep-Learned Collision Avoidance Policy for Distributed Multi-Agent Navigation

Jul 06, 2017

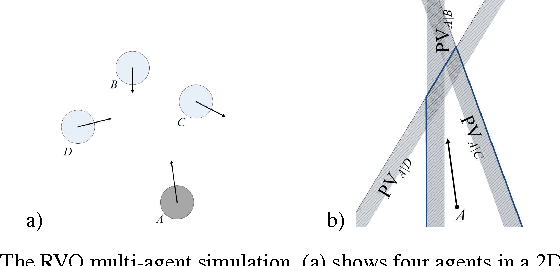

Abstract: High-speed, low-latency obstacle avoidance that is insensitive to sensor noise is essential for enabling multiple decentralized robots to function reliably in cluttered and dynamic environments. While other distributed multi-agent collision avoidance systems exist, these systems require online geometric optimization where tedious parameter tuning and perfect sensing are necessary. We present a novel end-to-end framework to generate a reactive collision avoidance policy for efficient distributed multi-agent navigation. Our method formulates an agent's navigation strategy as a deep neural network mapping from the observed noisy sensor measurements to the agent's steering commands in terms of movement velocity. We train the network on a large number of frames of collision avoidance data collected by repeatedly running a multi-agent simulator with different parameter settings. We validate the learned deep neural network policy in a set of simulated and real scenarios with noisy measurements and demonstrate that our method is able to generate a robust navigation strategy that is insensitive to imperfect sensing and works reliably in all situations. We also show that our method generalizes well to scenarios that do not appear in our training data, including scenes with static obstacles and agents with different sizes. Videos are available at https://sites.google.com/view/deepmaca.

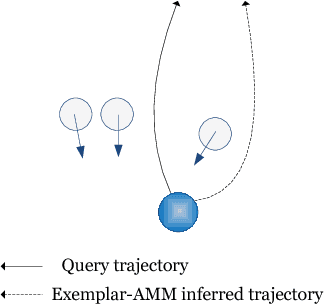

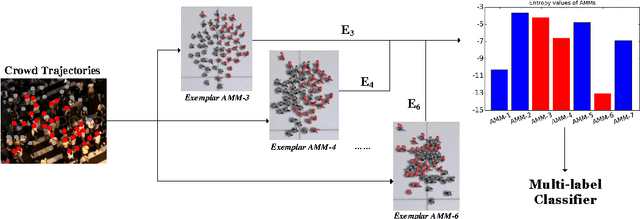

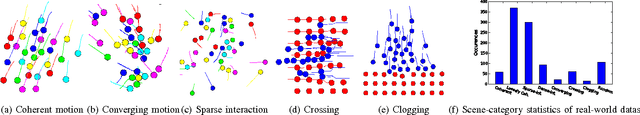

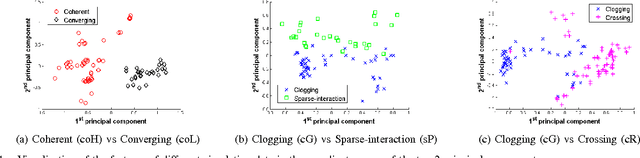

Exemplar-AMMs: Recognizing Crowd Movements from Pedestrian Trajectories

Mar 31, 2016

Abstract: In this paper, we present a novel method to recognize the types of crowd movement from crowd trajectories using agent-based motion models (AMMs). Our idea is to apply a number of AMMs, referred to as exemplar-AMMs, to describe the crowd movement. Specifically, we propose an optimization framework that filters out the unknown noise in the crowd trajectories and measures their similarity to the exemplar-AMMs to produce a crowd motion feature. We then address our real-world crowd movement recognition problem as a multi-label classification problem. Our experiments show that the proposed feature outperforms the state-of-the-art methods in recognizing both simulated and real-world crowd movements from their trajectories. Finally, we have created a synthetic dataset, SynCrowd, which contains 2D crowd trajectories in various scenarios, generated by various crowd simulators. This dataset can serve as a training set or benchmark for crowd analysis work.
