Umberto Spagnolini

Mobile Radio Networks and Weather Radars Dualism: Rainfall Measurement Revolution in Densely Populated Areas

Mar 19, 2026

Abstract: This study demonstrates, for the first time, how a network of cellular base stations (BSs), the infrastructure of mobile radio networks, can be used as a distributed opportunistic radar for rainfall remote sensing. By adapting signal-processing techniques traditionally employed in Doppler weather radar systems, we demonstrate that BS signals can be used to retrieve typical weather radar products, including reflectivity factor, mean Doppler velocity, and spectral width. Due to the high spatial density of BS infrastructure in urban environments, combined with intrinsic technical features such as electronically steerable antenna arrays and wide receiver bandwidths, the proposed approach achieves unprecedented spatial and temporal resolutions, on the order of a few meters and several tens of seconds, respectively. Beyond limitations related to low transmitted power, limited antenna gain, and other system constraints, a major challenge arises from ground clutter contamination, which is exacerbated by the nearly horizontal orientation of BS antenna beams. This work provides a thorough assessment of clutter impact and demonstrates that, through appropriate processing, the resulting clutter-filtered radar moments reach a satisfactory level of quality when compared with raw observations and with measurements from independent BSs with overlapping fields of view. The findings highlight a transformative opportunity for urban hydrometeorology: leveraging existing telecommunications infrastructure to obtain rainfall information with unprecedented spatial granularity and temporal immediacy.
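The three radar products named in the abstract are commonly estimated in Doppler weather radar with the classical pulse-pair method. The sketch below illustrates that textbook estimator only; the abstract does not say which estimator the authors use. The function name, the wavelength and pulse repetition interval values, and the sign convention (positive Doppler shift maps to positive velocity) are assumptions for illustration. The returned power is uncalibrated; converting it to a reflectivity factor requires the radar equation and system constants that are not given here.

```python
import cmath
import math


def pulse_pair_moments(iq, wavelength_m, pri_s):
    """Textbook pulse-pair estimates from complex I/Q samples of one range gate.

    Returns (mean power, mean Doppler velocity in m/s, spectral width in m/s).
    Assumes noise-free samples and a Gaussian Doppler spectrum.
    """
    n = len(iq)
    if n < 2:
        raise ValueError("need at least two samples")
    # Lag-0 autocorrelation: mean echo power (proportional to reflectivity).
    r0 = sum(abs(s) ** 2 for s in iq) / n
    # Lag-1 autocorrelation between consecutive pulses.
    r1 = sum(iq[k + 1] * iq[k].conjugate() for k in range(n - 1)) / (n - 1)
    # Mean velocity from the phase of the lag-1 autocorrelation.
    velocity = wavelength_m / (4.0 * math.pi * pri_s) * cmath.phase(r1)
    # Spectral width from the decorrelation between lag 0 and lag 1.
    ratio = r0 / abs(r1) if abs(r1) > 0 else math.inf
    width = (wavelength_m / (2.0 * math.sqrt(2.0) * math.pi * pri_s)
             * math.sqrt(max(0.0, math.log(ratio))))
    return r0, velocity, width


# Hypothetical parameters: 1 cm wavelength, 0.1 ms pulse repetition interval.
wavelength, pri, true_v = 0.01, 1e-4, 3.0
samples = [cmath.exp(1j * 4.0 * math.pi * true_v * pri * k / wavelength)
           for k in range(64)]
power, v_hat, w_hat = pulse_pair_moments(samples, wavelength, pri)
```

With these hypothetical parameters the unambiguous velocity is λ/(4T) = 25 m/s, so the 3 m/s test tone is recovered without aliasing, and its spectral width is essentially zero because a single tone does not decorrelate between pulses.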

Device-Centric ISAC for Exposure Control via Opportunistic Virtual Aperture Sensing

Feb 19, 2026

Abstract: Regulatory limits on Maximum Permissible Exposure (MPE) require handheld devices to reduce transmit power when operated near the user's body. Current proximity sensors provide only binary detection, triggering conservative power back-off that degrades link quality. If the device could measure its distance from the body, transmit power could be adjusted proportionally, improving throughput while maintaining compliance. This paper develops a device-centric integrated sensing and communication (ISAC) method for the device to measure this distance. The uplink communication waveform is exploited for sensing, and the natural motion of the user's hand creates a virtual aperture that provides the angular resolution necessary for localization. Virtual aperture processing requires precise knowledge of the device trajectory, which in this scenario is opportunistic and unknown. Onboard inertial sensors can be used to estimate the device trajectory; however, their accuracy is not sufficient. To address this, we develop an autofocus algorithm based on extended Kalman filtering that jointly tracks the trajectory and compensates residual errors using phase observations from strong scatterers. The Bayesian Cramér-Rao bound for localization is derived under correlated inertial errors. Numerical results at 28 GHz demonstrate centimeter-level accuracy with realistic sensor parameters.

Echo-Side Integrated Sensing and Communication via Space-Time Reconfigurable Intelligent Surfaces

Jan 14, 2026

Abstract: This paper presents an echo-side modulation framework for integrated sensing and communication (ISAC) systems. A space-time reconfigurable intelligent surface (ST-RIS) impresses a continuous-phase modulation onto the radar echo, enabling uplink data transmission with a phase modulation of the transmitted radar-like waveform. The received signal is a multiplicative composition of the sensing waveform and the phase for communication. Both functionalities share the same physical signal and perceive each other as impairments. The achievable communication rate is expressed as a function of a coupling parameter that links sensing accuracy to phase error accumulation. Under a fixed bandwidth constraint, the sensing and communication figures of merit define a convex Pareto frontier. The optimal bandwidth allocation satisfying a minimum sensing requirement is derived in closed form. The modified Cramér-Rao bound (MCRB) for range estimation is derived in closed form; this parameter must be estimated to compensate for the frequency offset before data demodulation. Frame synchronization is formulated as a generalized likelihood ratio test (GLRT), and the detection probability is obtained through characteristic function inversion, accounting for residual frequency errors from imperfect range estimation. Numerical results validate the theoretical bounds and characterize the trade-off across the operating range.

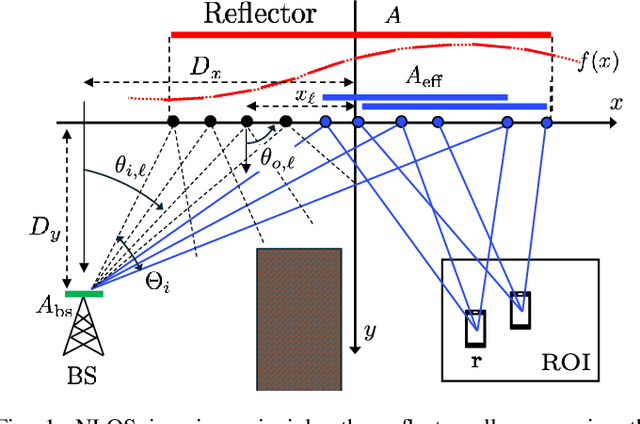

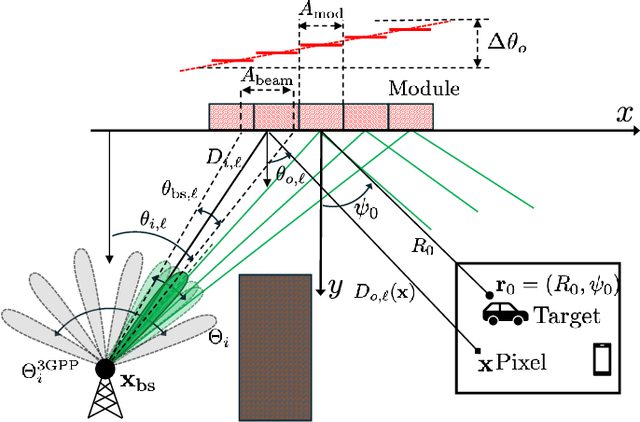

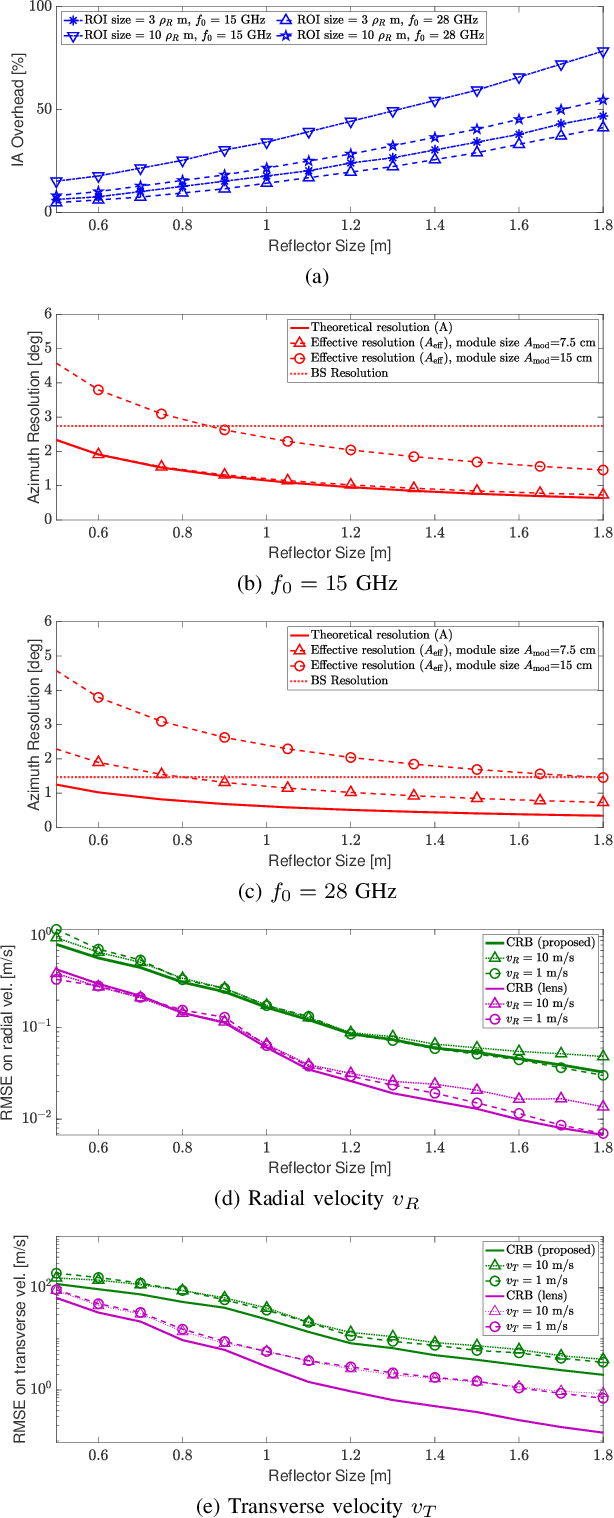

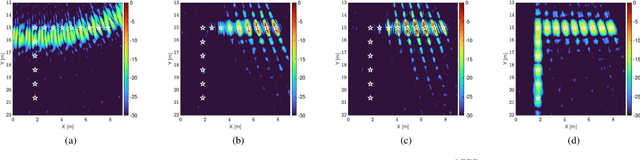

Enabling NLOS Imaging Capabilities at the Initial Access of 6G Base Stations

Nov 19, 2025

Abstract: Sensing in non-line-of-sight (NLOS) is one of the major challenges for integrated sensing and communication systems. Existing countermeasures for NLOS either use prior knowledge of the environment to characterize all the multiple bounces or deploy anomalous reflectors in the environment to enable communication infrastructure to "see behind the corner". This work addresses the integration of monostatic NLOS imaging functionalities into the initial access (IA) procedure of a next generation base station (BS), by means of a non-reconfigurable modular reflector. During standard-compliant IA, the BS sweeps a narrow beam using a pre-defined dedicated codebook to achieve beam alignment with users. We introduce the imaging functionality by enhancing this codebook with imaging-specific entries that are jointly designed with the angular configuration of the modular reflector to enable high-resolution imaging of a region in NLOS by coherently processing all the echoes at the BS. We derive closed-form expressions for the near-field (NF) spatial resolution, as well as for the effective aperture (i.e., the portion of the reflector that actively contributes to improving image resolution). The problem of imaging moving targets in NLOS is also addressed, and we propose a maximum-likelihood estimator of the target's velocity in the NF together with the related theoretical bound. Further, we discuss and quantify the inherent communication-imaging performance trade-offs and related system design challenges through numerical simulations. Finally, the proposed imaging method employing modular reflectors is validated both numerically and experimentally, showing the effectiveness of our concept.

Chartwin: a Case Study on Channel Charting-aided Localization in Dynamic Digital Network Twins

Aug 12, 2025

Abstract: Wireless communication systems can significantly benefit from the availability of spatially consistent representations of the wireless channel to efficiently perform a wide range of communication tasks. To this end, channel charting has been introduced as an effective unsupervised learning technique to achieve both locally and globally consistent radio maps. In this letter, we propose Chartwin, a case study on the integration of localization-oriented channel charting with dynamic Digital Network Twins (DNTs). Numerical results showcase the significant performance of semi-supervised channel charting in constructing a spatially consistent chart of the considered extended urban environment. The considered method results in a localization error of approximately 4.5 m for the static DNT and approximately 6 m for the dynamic DNT, fostering DNT-aided channel charting and localization.

Channel Charting in Smart Radio Environments

Aug 10, 2025

Abstract: This paper introduces the use of static electromagnetic skins (EMSs) to enable robust device localization via channel charting (CC) in realistic urban environments. We develop a rigorous optimization framework that leverages EMSs to enhance channel dissimilarity and spatial fingerprinting, formulating EMS phase profile design as a codebook-based problem targeting the upper quantiles of key embedding metrics: localization error, trustworthiness, and continuity. Through 3D ray-traced simulations of a representative city scenario, we demonstrate that optimized EMS configurations, in addition to significantly improving the average positioning error, reduce the 90th-percentile localization error from over 60 m (no EMS) to less than 25 m, while drastically improving trustworthiness and continuity. To the best of our knowledge, this is the first work to exploit a Smart Radio Environment (SRE) with static EMSs for enhancing CC, achieving substantial gains in localization performance under challenging Non-Line-of-Sight (NLoS) conditions.
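Trustworthiness and continuity are standard neighbourhood-preservation metrics for embeddings. The sketch below uses their usual rank-based definitions with a neighbourhood size `k`; it is an illustration under that assumption and is not taken from the paper. The function names and the toy one-dimensional positions are hypothetical, and the normalisation is valid for `k < n / 2`.

```python
import math


def _ranks(points):
    """ranks[i][j] = rank of point j among the neighbours of i (1 = nearest)."""
    n = len(points)
    ranks = []
    for i in range(n):
        order = sorted((j for j in range(n) if j != i),
                       key=lambda j: math.dist(points[i], points[j]))
        row = [0] * n
        for rank, j in enumerate(order, start=1):
            row[j] = rank
        ranks.append(row)
    return ranks


def trustworthiness(true_pos, chart_pos, k):
    """Penalise points that are k-neighbours in the chart but not in reality."""
    n = len(true_pos)
    r_true, r_chart = _ranks(true_pos), _ranks(chart_pos)
    penalty = 0
    for i in range(n):
        for j in range(n):
            if j != i and r_chart[i][j] <= k and r_true[i][j] > k:
                penalty += r_true[i][j] - k
    return 1.0 - 2.0 * penalty / (n * k * (2 * n - 3 * k - 1))


def continuity(true_pos, chart_pos, k):
    """Penalise true k-neighbours that the chart pushes apart (roles swapped)."""
    return trustworthiness(chart_pos, true_pos, k)


# Toy example: six devices on a line, one faithful chart and one scrambled chart.
true_positions = [(0.0,), (1.0,), (2.0,), (3.0,), (4.0,), (5.0,)]
scrambled_chart = [(0.0,), (5.0,), (1.0,), (4.0,), (2.0,), (3.0,)]
tw_perfect = trustworthiness(true_positions, true_positions, k=1)
tw_scrambled = trustworthiness(true_positions, scrambled_chart, k=1)
ct_scrambled = continuity(true_positions, scrambled_chart, k=1)
```

Both metrics equal 1 when the chart preserves every k-neighbourhood and decrease as false neighbours (trustworthiness) or lost neighbours (continuity) appear.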

Exploiting Age of Information in Network Digital Twins for AI-driven Real-Time Link Blockage Detection

May 21, 2025

Abstract: Line-of-Sight (LoS) identification is crucial to ensure reliable high-frequency communication links, especially those vulnerable to blockages. Network Digital Twins and Artificial Intelligence are key technologies enabling blockage detection (LoS identification) for high-frequency wireless systems, e.g., above 6 GHz. In this work, we enhance Network Digital Twins by incorporating Age of Information (AoI) metrics, a quantification of status update freshness, enabling reliable real-time blockage detection (LoS identification) in dynamic wireless environments. By integrating ray-tracing techniques, we automate large-scale collection and labeling of channel data, specifically tailored to the evolving conditions of the environment. The introduced AoI is integrated with the loss function to prioritize more recent information when fine-tuning deep learning models in case of performance degradation (model drift). The effectiveness of the proposed solution is demonstrated in realistic urban simulations, highlighting the trade-off between input resolution, computational cost, and model performance. A resolution reduction of 4x8 from an original channel sample size of (32, 1024) along the angle and subcarrier dimensions results in a computational speedup of 32 times. The proposed fine-tuning successfully mitigates performance degradation while requiring only 1% of the available data samples, enabling automated and fast mitigation of model drifts.
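The two quantitative ideas in this abstract can be sketched in a few lines. The abstract does not specify how the resolution is reduced or how AoI enters the loss, so both choices below are assumptions: block averaging for the 4x8 reduction, and an exponential freshness weight `exp(-age / tau_s)` for the loss. All function and parameter names are hypothetical.

```python
import math


def block_average(sample, angle_factor, subcarrier_factor):
    """Downsample a 2D channel sample by averaging non-overlapping blocks."""
    rows, cols = len(sample), len(sample[0])
    if rows % angle_factor or cols % subcarrier_factor:
        raise ValueError("factors must divide the sample dimensions")
    out = []
    for r in range(0, rows, angle_factor):
        out_row = []
        for c in range(0, cols, subcarrier_factor):
            block = [sample[i][j]
                     for i in range(r, r + angle_factor)
                     for j in range(c, c + subcarrier_factor)]
            out_row.append(sum(block) / len(block))
        out.append(out_row)
    return out


def aoi_weighted_loss(per_sample_losses, ages_s, tau_s):
    """Weighted mean loss in which fresher samples (small age) count more."""
    weights = [math.exp(-age / tau_s) for age in ages_s]
    return sum(w * l for w, l in zip(weights, per_sample_losses)) / sum(weights)


# A (32, 1024) sample reduced by 4 along angle and 8 along subcarriers.
sample = [[1.0] * 1024 for _ in range(32)]
reduced = block_average(sample, 4, 8)
size_ratio = (32 * 1024) // (len(reduced) * len(reduced[0]))

# Two samples with losses 1.0 (fresh) and 3.0 (10 s old), tau = 1 s.
loss = aoi_weighted_loss([1.0, 3.0], [0.0, 10.0], tau_s=1.0)
```

The reduced sample has shape (8, 128), i.e. 32 times fewer input elements, which is consistent with the reported 32-fold speedup if the cost scales with input size. In the weighted loss, the stale sample contributes almost nothing, so the result stays close to the fresh sample's loss.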

AI-empowered Real-Time Line-of-Sight Identification via Network Digital Twins

May 21, 2025

Abstract: The identification of Line-of-Sight (LoS) conditions is critical for ensuring reliable high-frequency communication links, which are particularly vulnerable to blockages and rapid channel variations. Network Digital Twins (NDTs) and Ray-Tracing (RT) techniques can significantly automate the large-scale collection and labeling of channel data, tailored to specific wireless environments. This paper examines the quality of Artificial Intelligence (AI) models trained on data generated by NDTs. We propose and evaluate training strategies for a general-purpose Deep Learning model, demonstrating superior performance compared to the current state-of-the-art. In terms of classification accuracy, our approach outperforms the state-of-the-art Deep Learning model by 5% in very low SNR conditions and by approximately 10% in medium-to-high SNR scenarios. Additionally, the proposed strategies effectively reduce the input size to the Deep Learning model while preserving its performance. The computational cost, measured in floating-point operations (FLOPs) during inference, is reduced by 98.55% relative to state-of-the-art solutions, making it well suited to real-time applications.

Joint Optimization of Uplink and Downlink Power in Full-Duplex Integrated Access and Backhaul

Apr 05, 2025

Abstract: We examine the performance of an Integrated Access and Backhaul (IAB) node as a range extender for beyond-5G networks, focusing on the significant challenges of effective power allocation and beamforming strategies, which are vital for maximizing users' spectral efficiency (SE). We present both max-sum SE and max-min fairness power allocation strategies, to assess their effects on system performance. The results underscore the necessity of power optimization, particularly as the number of users served by the IAB node increases, demonstrating how efficient power allocation enhances service quality in high-load scenarios. The results also show that the typical line-of-sight link between the IAB donor and the IAB node has rank one, posing a limitation on the effective SEs that the IAB node can support.

Hybrid MIMO in the Upper Mid-Band: Architectures, Processing, and Energy Efficiency

Mar 03, 2025

Abstract: As 6G networks evolve, the upper mid-band spectrum (7 GHz to 24 GHz), or frequency range 3 (FR3), is emerging as a promising balance between the coverage offered by sub-6 GHz bands and the high capacity of millimeter wave (mmWave) frequencies. This paper explores the structure of FR3 hybrid MIMO systems and proposes two architectural classes: Frequency Integrated (FI) and Frequency Partitioned (FP). FI architectures enhance spectral efficiency by exploiting parallelism across multiple sub-bands, while FP architectures dynamically allocate sub-band access according to specific application requirements. Additionally, two approaches, fully digital (FD) and hybrid analog-digital (HAD), are considered, comparing shared (SRF) versus dedicated RF (DRF) chain configurations. Signal processing solutions are investigated, particularly for an uplink multi-user scenario with power control optimization. Results demonstrate that SRF and DRF architectures achieve comparable spectral efficiency; however, SRF structures consume nearly half the power of DRF in the considered setup. While FD architectures provide higher spectral efficiency, they do so at the cost of increased power consumption compared to HAD. Additionally, FI architectures show slightly greater power consumption compared to FP; however, they provide a significant benefit in spectral efficiency (over 4x), emphasizing an important trade-off in FR3 engineering.
