Shengsheng Qian

SoMe: A Realistic Benchmark for LLM-based Social Media Agents

Dec 09, 2025

Abstract:Intelligent agents powered by large language models (LLMs) have recently demonstrated impressive capabilities and gained increasing popularity on social media platforms. While LLM agents are reshaping the ecology of social media, there exists a current gap in conducting a comprehensive evaluation of their ability to comprehend media content, understand user behaviors, and make intricate decisions. To address this challenge, we introduce SoMe, a pioneering benchmark designed to evaluate social media agents equipped with various agent tools for accessing and analyzing social media data. SoMe comprises a diverse collection of 8 social media agent tasks, 9,164,284 posts, 6,591 user profiles, and 25,686 reports from various social media platforms and external websites, with 17,869 meticulously annotated task queries. Compared with the existing datasets and benchmarks for social media tasks, SoMe is the first to provide a versatile and realistic platform for LLM-based social media agents to handle diverse social media tasks. By extensive quantitative and qualitative analysis, we provide the first overview insight into the performance of mainstream agentic LLMs in realistic social media environments and identify several limitations. Our evaluation reveals that both the current closed-source and open-source LLMs cannot handle social media agent tasks satisfactorily. SoMe provides a challenging yet meaningful testbed for future social media agents. Our code and data are available at https://github.com/LivXue/SoMe

LiveStar: Live Streaming Assistant for Real-World Online Video Understanding

Nov 07, 2025

Abstract:Despite significant progress in Video Large Language Models (Video-LLMs) for offline video understanding, existing online Video-LLMs typically struggle to simultaneously process continuous frame-by-frame inputs and determine optimal response timing, often compromising real-time responsiveness and narrative coherence. To address these limitations, we introduce LiveStar, a pioneering live streaming assistant that achieves always-on proactive responses through adaptive streaming decoding. Specifically, LiveStar incorporates: (1) a training strategy enabling incremental video-language alignment for variable-length video streams, preserving temporal consistency across dynamically evolving frame sequences; (2) a response-silence decoding framework that determines optimal proactive response timing via a single forward pass verification; (3) memory-aware acceleration via peak-end memory compression for online inference on 10+ minute videos, combined with streaming key-value cache to achieve 1.53x faster inference. We also construct an OmniStar dataset, a comprehensive dataset for training and benchmarking that encompasses 15 diverse real-world scenarios and 5 evaluation tasks for online video understanding. Extensive experiments across three benchmarks demonstrate LiveStar's state-of-the-art performance, achieving an average 19.5% improvement in semantic correctness with 18.1% reduced timing difference compared to existing online Video-LLMs, while improving FPS by 12.0% across all five OmniStar tasks. Our model and dataset can be accessed at https://github.com/yzy-bupt/LiveStar.

Short-video Propagation Influence Rating: A New Real-world Dataset and A New Large Graph Model

Mar 31, 2025

Abstract:Short-video platforms have gained immense popularity, captivating the interest of millions, if not billions, of users globally. Recently, researchers have highlighted the significance of analyzing the propagation of short videos, which typically involves discovering commercial values, public opinions, user behaviors, etc. This paper proposes a new Short-video Propagation Influence Rating (SPIR) task and aims to promote SPIR from both the dataset and method perspectives. First, we propose a new Cross-platform Short-Video (XS-Video) dataset, which aims to provide a large-scale and real-world short-video propagation network across various platforms to facilitate the research on short-video propagation. Our XS-Video dataset includes 117,720 videos, 381,926 samples, and 535 topics across the 5 biggest Chinese platforms, annotated with the propagation influence from level 0 to 9. To the best of our knowledge, this is the first large-scale short-video dataset that contains cross-platform data or provides all of the views, likes, shares, collects, fans, comments, and comment content. Second, we propose a Large Graph Model (LGM) named NetGPT, based on a novel three-stage training mechanism, to bridge heterogeneous graph-structured data with the powerful reasoning ability and knowledge of Large Language Models (LLMs). Our NetGPT can comprehend and analyze the short-video propagation graph, enabling it to predict the long-term propagation influence of short videos. Comprehensive experimental results evaluated by both classification and regression metrics on our XS-Video dataset indicate the superiority of our method for SPIR.

Kwai-STaR: Transform LLMs into State-Transition Reasoners

Nov 07, 2024

Abstract:Mathematical reasoning presents a significant challenge to the cognitive capabilities of LLMs. Various methods have been proposed to enhance the mathematical ability of LLMs. However, few recognize the value of state transition for LLM reasoning. In this work, we define mathematical problem-solving as a process of transiting from an initial unsolved state to the final resolved state, and propose the Kwai-STaR framework, which transforms LLMs into State-Transition Reasoners to improve their intuitive reasoning capabilities. Our approach comprises three main steps: (1) Define the state space tailored to mathematical reasoning. (2) Generate state-transition data based on the state space. (3) Convert original LLMs into State-Transition Reasoners via a curricular training strategy. Our experiments validate the effectiveness of Kwai-STaR in enhancing mathematical reasoning: After training on the small-scale Kwai-STaR dataset, general LLMs, including Mistral-7B and LLaMA-3, achieve considerable performance gains on the GSM8K and GSM-Hard datasets. Additionally, the state transition-based design endows Kwai-STaR with remarkable training and inference efficiency. Further experiments are underway to establish the generality of Kwai-STaR.

BadAgent: Inserting and Activating Backdoor Attacks in LLM Agents

Jun 05, 2024

Abstract:With the prosperity of large language models (LLMs), powerful LLM-based intelligent agents have been developed to provide customized services with a set of user-defined tools. State-of-the-art methods for constructing LLM agents adopt trained LLMs and further fine-tune them on data for the agent task. However, we show that such methods are vulnerable to our proposed backdoor attacks named BadAgent on various agent tasks, where a backdoor can be embedded by fine-tuning on the backdoor data. At test time, the attacker can manipulate the deployed LLM agents to execute harmful operations by showing the trigger in the agent input or environment. To our surprise, our proposed attack methods are extremely robust even after fine-tuning on trustworthy data. Though backdoor attacks have been studied extensively in natural language processing, to the best of our knowledge, we could be the first to study them on LLM agents that are more dangerous due to the permission to use external tools. Our work demonstrates the clear risk of constructing LLM agents based on untrusted LLMs or data. Our code is public at https://github.com/DPamK/BadAgent

Erasing Self-Supervised Learning Backdoor by Cluster Activation Masking

Dec 13, 2023

Abstract:Researchers have recently found that Self-Supervised Learning (SSL) is vulnerable to backdoor attacks. The attacker can embed hidden SSL backdoors via a few poisoned examples in the training dataset and maliciously manipulate the behavior of downstream models. To defend against SSL backdoor attacks, a feasible route is to detect and remove the poisonous samples in the training set. However, the existing SSL backdoor defense method fails to detect the poisonous samples precisely. In this paper, we propose to erase the SSL backdoor by cluster activation masking and propose a novel PoisonCAM method. After obtaining the threat model trained on the poisoned dataset, our method can precisely detect poisonous samples based on the assumption that masking the backdoor trigger can effectively change the activation of a downstream clustering model. In experiments, our PoisonCAM achieves 96% accuracy for backdoor trigger detection compared to 3% of the state-of-the-art method on poisoned ImageNet-100. Moreover, our proposed PoisonCAM significantly improves the performance of the trained SSL model under backdoor attacks compared to the state-of-the-art method. Our code will be available at https://github.com/LivXue/PoisonCAM.

A Survey on Interpretable Cross-modal Reasoning

Sep 14, 2023

Abstract:In recent years, cross-modal reasoning (CMR), the process of understanding and reasoning across different modalities, has emerged as a pivotal area with applications spanning from multimedia analysis to healthcare diagnostics. As the deployment of AI systems becomes more ubiquitous, the demand for transparency and comprehensibility in these systems' decision-making processes has intensified. This survey delves into the realm of interpretable cross-modal reasoning (I-CMR), where the objective is not only to achieve high predictive performance but also to provide human-understandable explanations for the results. This survey presents a comprehensive overview of the typical methods with a three-level taxonomy for I-CMR. Furthermore, this survey reviews the existing CMR datasets with annotations for explanations. Finally, this survey summarizes the challenges for I-CMR and discusses potential future directions. In conclusion, this survey aims to catalyze the progress of this emerging research area by providing researchers with a panoramic and comprehensive perspective, illuminating the state of the art and discerning the opportunities. The summarized methods, datasets, and other resources are available at https://github.com/ZuyiZhou/Awesome-Interpretable-Cross-modal-Reasoning.

MGDCF: Distance Learning via Markov Graph Diffusion for Neural Collaborative Filtering

Apr 05, 2022

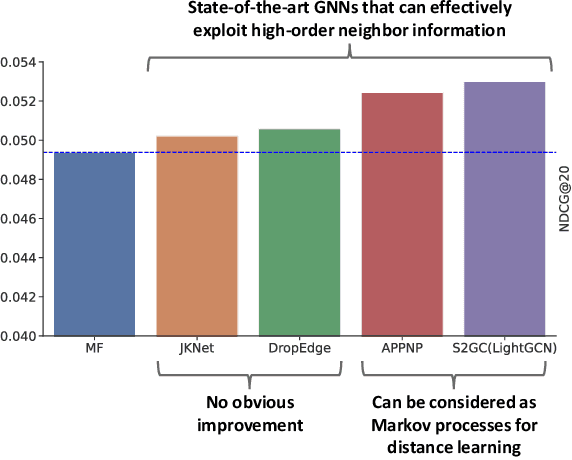

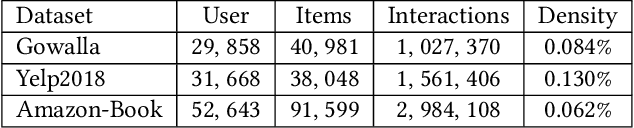

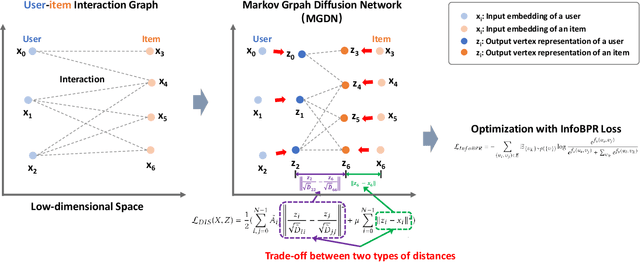

Abstract:Collaborative filtering (CF) is widely used by personalized recommendation systems and aims to predict the preferences of users from historical user-item interactions. In recent years, Graph Neural Networks (GNNs) have been utilized to build CF models and have shown promising performance. Recent state-of-the-art GNN-based CF approaches simply attribute their performance improvement to the high-order neighbor aggregation ability of GNNs. However, we observe that some powerful deep GNNs, such as JKNet and DropEdge, can effectively exploit high-order neighbor information on other graph tasks but perform poorly on CF tasks, which conflicts with the explanation given by this GNN-based CF research. Unlike this research, we investigate GNN-based CF from the perspective of Markov processes for distance learning with a unified framework named Markov Graph Diffusion Collaborative Filtering (MGDCF). We design a Markov Graph Diffusion Network (MGDN) as MGDCF's GNN encoder, which learns vertex representations by trading off two types of distances via a Markov process. We show the theoretical equivalence between MGDN's output and the optimal solution of a distance loss function, which can boost the optimization of CF models. MGDN can generalize state-of-the-art models such as LightGCN and APPNP, which are heterogeneous GNNs. In addition, MGDN can be extended to homogeneous GNNs with our sparsification technique. For optimizing MGDCF, we propose the InfoBPR loss function, which extends the widely used BPR loss to exploit multiple negative samples for better performance. We conduct experiments to perform detailed analysis on MGDCF. The source code is publicly available at https://github.com/hujunxianligong/MGDCF.
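As a rough illustration of what extending the BPR loss to multiple negative samples can look like, the sketch below compares the standard pairwise BPR loss with a softmax-style loss over one positive and several negatives. The softmax form is an assumption made for illustration and is not taken from the abstract; the function names `bpr_loss` and `multi_negative_loss` are hypothetical and do not come from the MGDCF repository.

```python
import math


def bpr_loss(pos_score, neg_score):
    """Standard BPR loss for one (positive, negative) pair: -log sigmoid(pos - neg)."""
    return math.log1p(math.exp(-(pos_score - neg_score)))


def multi_negative_loss(pos_score, neg_scores):
    """Softmax-style loss over one positive and several negatives (assumed form).

    Computes -log(exp(pos) / (exp(pos) + sum_j exp(neg_j))) using a
    max-shifted log-sum-exp for numerical stability.
    """
    scores = [pos_score] + list(neg_scores)
    m = max(scores)
    log_sum = m + math.log(sum(math.exp(s - m) for s in scores))
    return log_sum - pos_score


# With a single negative, the assumed multi-negative form equals BPR.
single = multi_negative_loss(2.0, [0.5])
pairwise = bpr_loss(2.0, 0.5)

# Adding more negatives can only increase the loss for the same positive score.
several = multi_negative_loss(2.0, [0.5, 1.0, -0.3])
```

Under this assumed form, the single-negative case reduces exactly to BPR, which is one natural sense in which a multi-negative loss "extends" it.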

GRecX: An Efficient and Unified Benchmark for GNN-based Recommendation

Dec 03, 2021

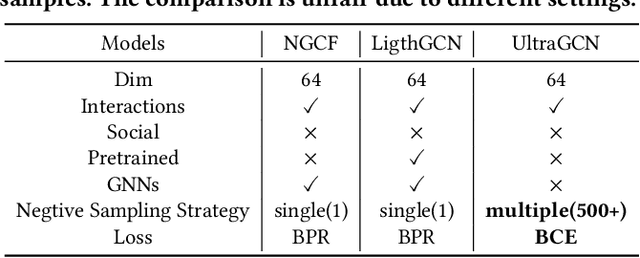

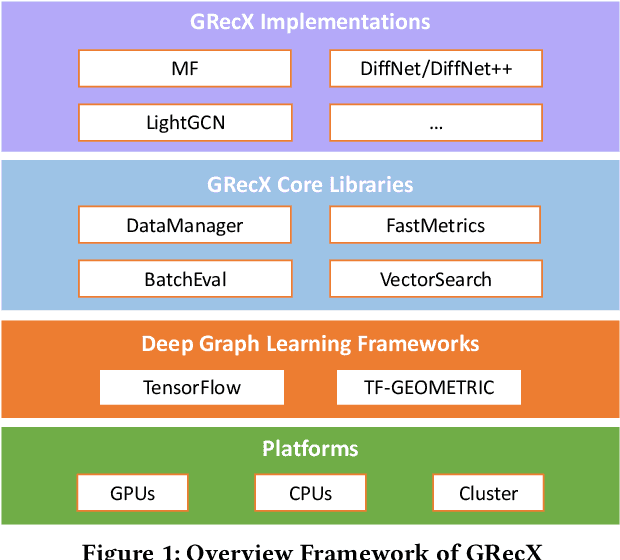

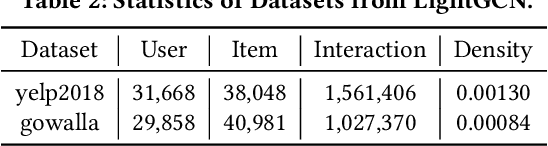

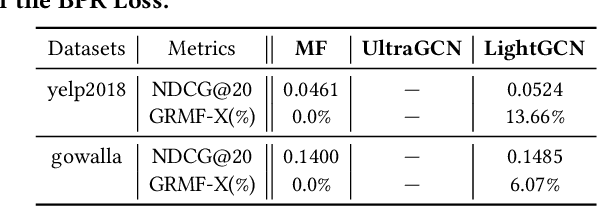

Abstract:In this paper, we present GRecX, an open-source TensorFlow framework for benchmarking GNN-based recommendation models in an efficient and unified way. GRecX consists of core libraries for building GNN-based recommendation benchmarks, as well as the implementations of popular GNN-based recommendation models. The core libraries provide essential components for building efficient and unified benchmarks, including FastMetrics (efficient metrics computation libraries), VectorSearch (efficient similarity search libraries for dense vectors), BatchEval (efficient mini-batch evaluation libraries), and DataManager (unified dataset management libraries). Especially, to provide a unified benchmark for the fair comparison of different complex GNN-based recommendation models, we design a new metric GRMF-X and integrate it into the FastMetrics component. Based on a TensorFlow GNN library tf_geometric, GRecX carefully implements a variety of popular GNN-based recommendation models. We carefully implement these baseline models to reproduce the performance reported in the literature, and our implementations are usually more efficient and friendly. In conclusion, GRecX enables users to train and benchmark GNN-based recommendation baselines in an efficient and unified way. We conduct experiments with GRecX, and the experimental results show that GRecX allows us to train and benchmark GNN-based recommendation baselines in an efficient and unified way. The source code of GRecX is available at https://github.com/maenzhier/GRecX.

Contrastive Adaptive Propagation Graph Neural Networks for Efficient Graph Learning

Dec 02, 2021

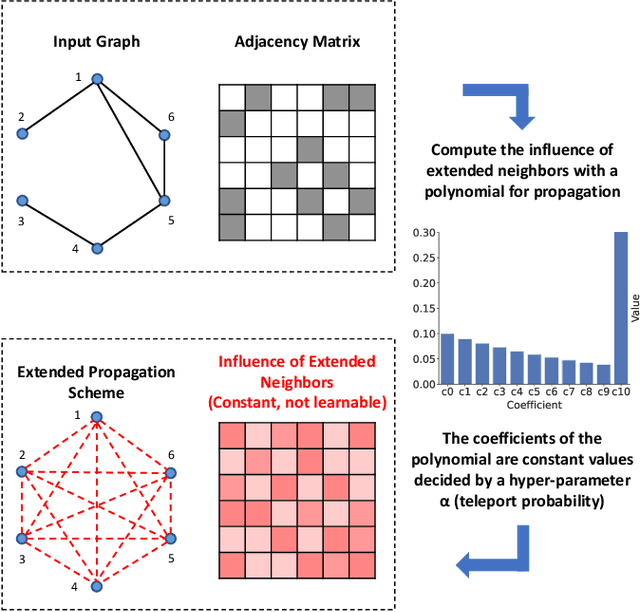

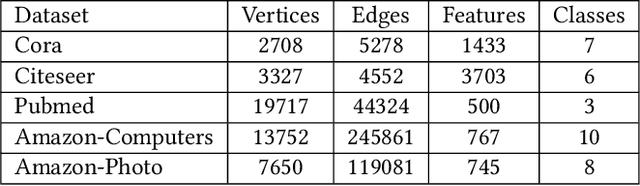

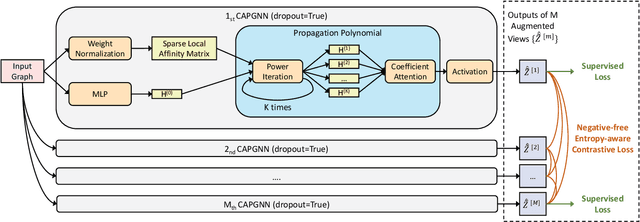

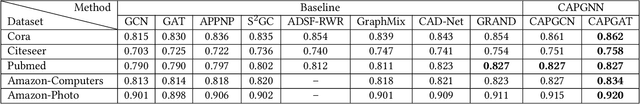

Abstract:Graph Neural Networks (GNNs) have achieved great success in processing graph data by extracting and propagating structure-aware features. Existing GNN research designs various propagation schemes to guide the aggregation of neighbor information. Recently the field has advanced from local propagation schemes that focus on local neighbors towards extended propagation schemes that can directly deal with extended neighbors consisting of both local and high-order neighbors. Despite the impressive performance, existing approaches are still insufficient to build an efficient and learnable extended propagation scheme that can adaptively adjust the influence of local and high-order neighbors. This paper proposes an efficient yet effective end-to-end framework, namely Contrastive Adaptive Propagation Graph Neural Networks (CAPGNN), to address these issues by combining Personalized PageRank and attention techniques. CAPGNN models the learnable extended propagation scheme with a polynomial of a sparse local affinity matrix, where the polynomial relies on Personalized PageRank to provide superior initial coefficients. In order to adaptively adjust the influence of both local and high-order neighbors, a coefficient-attention model is introduced to learn to adjust the coefficients of the polynomial. In addition, we leverage self-supervised learning techniques and design a negative-free entropy-aware contrastive loss to explicitly take advantage of unlabeled data for training. We implement CAPGNN as two different versions named CAPGCN and CAPGAT, which use static and dynamic sparse local affinity matrices, respectively. Experiments on graph benchmark datasets suggest that CAPGNN can consistently outperform or match state-of-the-art baselines. The source code is publicly available at https://github.com/hujunxianligong/CAPGNN.
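The idea of a propagation scheme expressed as a polynomial of a local affinity matrix, with Personalized PageRank supplying the initial coefficients, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the CAPGNN implementation: the coefficients here are fixed at the truncated Personalized PageRank weights `alpha * (1 - alpha)**k`, the affinity matrix is a small dense toy example, and the coefficient-attention model and contrastive loss are omitted. All function names are hypothetical.

```python
def ppr_coefficients(alpha, K):
    """Truncated Personalized PageRank weights: theta_k = alpha * (1 - alpha)^k."""
    return [alpha * (1 - alpha) ** k for k in range(K + 1)]


def matvec(A, x):
    """Multiply a dense matrix (list of rows) by a vector."""
    return [sum(a * v for a, v in zip(row, x)) for row in A]


def polynomial_propagate(A, x, coeffs):
    """Compute sum_k coeffs[k] * A^k x without forming A^k explicitly."""
    out = [0.0] * len(x)
    power = list(x)  # holds A^k x, starting with k = 0
    for c in coeffs:
        out = [o + c * p for o, p in zip(out, power)]
        power = matvec(A, power)
    return out


# Toy row-normalized affinity matrix: a 3-node path graph with self-loops.
A = [
    [0.5, 0.5, 0.0],
    [1 / 3, 1 / 3, 1 / 3],
    [0.0, 0.5, 0.5],
]
x = [1.0, 0.0, 0.0]  # a one-dimensional feature per node

theta = ppr_coefficients(alpha=0.1, K=10)
h = polynomial_propagate(A, x, theta)
```

In a learnable variant, `theta` would serve only as the starting point and be adjusted during training, which is the role the abstract assigns to the coefficient-attention model.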
