Ruyi Ding

Spatiotemporal-Aware Bit-Flip Injection on DNN-based Advanced Driver Assistance Systems

Apr 04, 2026

Abstract:Modern advanced driver assistance systems (ADAS) rely on deep neural networks (DNNs) for perception and planning. Since DNNs' parameters reside in DRAM during inference, bit flips caused by cosmic radiation or low-voltage operation may corrupt DNN computations, distort driving decisions, and lead to real-world incidents. This paper presents a SpatioTemporal-Aware Fault Injection (STAFI) framework to locate critical fault sites in DNNs for ADAS efficiently. Spatially, we propose a Progressive Metric-guided Bit Search (PMBS) that efficiently identifies critical network weight bits whose corruption causes the largest deviations in driving behavior (e.g., unintended acceleration or steering). Furthermore, we develop a Critical Fault Time Identification (CFTI) mechanism that determines when to trigger these faults, taking into account the context of real-time systems and environmental states, to maximize the safety impact. Experiments on DNNs for a production ADAS demonstrate that STAFI uncovers 29.56x more hazard-inducing critical faults than the strongest baseline.
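To make the notion of a weight bit flip concrete, the following is a minimal sketch of flipping one bit of a single weight. It assumes the weight is stored as an IEEE-754 float32, which the abstract does not state; the helper name `flip_bit` and the example weight `0.5` are hypothetical. It does not implement PMBS or CFTI, only the elementary fault those methods search over.

```python
import struct


def flip_bit(value: float, bit: int) -> float:
    """Return `value`, stored as an IEEE-754 float32, with one bit flipped.

    Bit 0 is the least significant mantissa bit; bit 31 is the sign bit.
    """
    if not 0 <= bit <= 31:
        raise ValueError("bit must be in [0, 31]")
    # Reinterpret the float32 as a 32-bit unsigned integer.
    (raw,) = struct.unpack("<I", struct.pack("<f", value))
    # XOR with a one-hot mask flips exactly the requested bit.
    (flipped,) = struct.unpack("<f", struct.pack("<I", raw ^ (1 << bit)))
    return flipped


weight = 0.5                          # hypothetical weight value
sign_flip = flip_bit(weight, 31)      # flips the sign bit
exponent_flip = flip_bit(weight, 30)  # flips the most significant exponent bit
mantissa_flip = flip_bit(weight, 0)   # flips the least significant mantissa bit

print(sign_flip, exponent_flip, mantissa_flip)
```

The three flips differ enormously in effect: the mantissa flip changes the weight by 2^-24, while the exponent flip turns 0.5 into 2^127. This illustrates why only a small subset of bits is likely to be safety-critical.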

MoEcho: Exploiting Side-Channel Attacks to Compromise User Privacy in Mixture-of-Experts LLMs

Aug 20, 2025

Abstract:The transformer architecture has become a cornerstone of modern AI, fueling remarkable progress across applications in natural language processing, computer vision, and multimodal learning. As these models continue to scale explosively for performance, implementation efficiency remains a critical challenge. Mixture of Experts (MoE) architectures, which selectively activate specialized subnetworks (experts), offer a unique balance between model accuracy and computational cost. However, the adaptive routing in MoE architectures, where input tokens are dynamically directed to specialized experts based on their semantic meaning, inadvertently opens up a new attack surface for privacy breaches. These input-dependent activation patterns leave distinctive temporal and spatial traces in hardware execution, which adversaries could exploit to deduce sensitive user data. In this work, we propose MoEcho, which uncovers a side-channel-analysis-based attack surface that compromises user privacy on MoE-based systems. Specifically, in MoEcho, we introduce four novel architectural side channels on different computing platforms: Cache Occupancy Channels and Pageout+Reload on CPUs, and Performance Counter and TLB Evict+Reload on GPUs. Exploiting these vulnerabilities, we propose four attacks that effectively breach user privacy in large language models (LLMs) and vision language models (VLMs) based on MoE architectures: Prompt Inference Attack, Response Reconstruction Attack, Visual Inference Attack, and Visual Reconstruction Attack. MoEcho is the first runtime architecture-level security analysis of the popular MoE structure common in modern transformers, highlighting a serious security and privacy threat and calling for effective and timely safeguards when harnessing MoE-based models for developing efficient large-scale AI services.
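The input-dependent routing described above can be illustrated with a minimal sketch. It assumes top-k softmax gating, a common MoE routing scheme that the abstract does not confirm for the models studied. The function `top_k_route` and the router logits are hypothetical, and no side channel is modeled here.

```python
import math


def top_k_route(logits, k=2):
    """Select the k experts with the largest router logits.

    Returns (expert_index, gate_weight) pairs, with gate weights
    softmax-normalised over the selected experts only.
    """
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in chosen)  # subtract the max for numerical stability
    exps = [math.exp(logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


# Hypothetical router logits for two different tokens over four experts.
token_a = [2.0, 0.1, -1.0, 1.5]
token_b = [-0.5, 3.0, 2.5, 0.0]

route_a = top_k_route(token_a)
route_b = top_k_route(token_b)
experts_a = [i for i, _ in route_a]
experts_b = [i for i, _ in route_b]

print(experts_a, experts_b)
```

In this sketch the two tokens activate disjoint sets of experts. Which experts run therefore depends on the input, and that dependence is the kind of execution trace the abstract identifies as exploitable.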

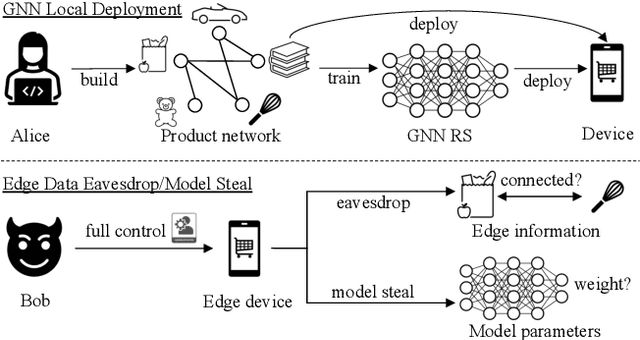

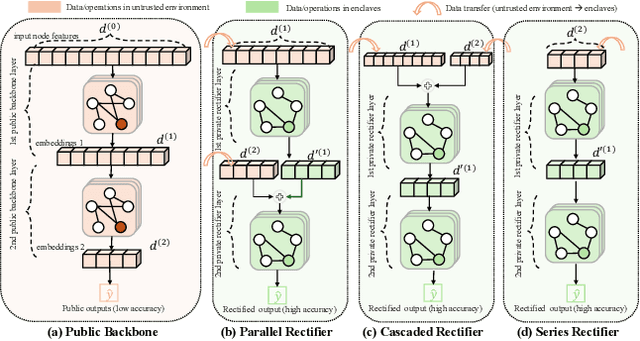

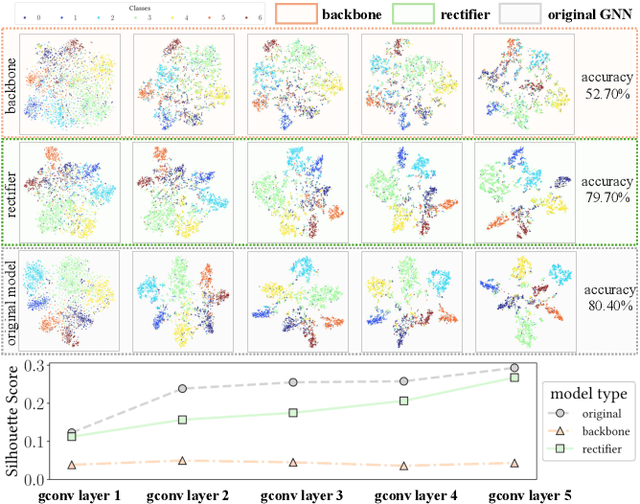

Graph in the Vault: Protecting Edge GNN Inference with Trusted Execution Environment

Feb 20, 2025

Abstract:Wide deployment of machine learning models on edge devices has rendered the model intellectual property (IP) and data privacy vulnerable. We propose GNNVault, the first secure Graph Neural Network (GNN) deployment strategy based on Trusted Execution Environment (TEE). GNNVault follows the design of 'partition-before-training' and includes a private GNN rectifier to complement a public backbone model. This way, both critical GNN model parameters and the private graph used during inference are protected within secure TEE compartments. Real-world implementations with Intel SGX demonstrate that GNNVault safeguards GNN inference against state-of-the-art link stealing attacks with negligible accuracy degradation (<2%).

Non-transferable Pruning

Oct 10, 2024

Abstract:Pretrained Deep Neural Networks (DNNs), developed from extensive datasets to integrate multifaceted knowledge, are increasingly recognized as valuable intellectual property (IP). To safeguard these models against IP infringement, strategies for ownership verification and usage authorization have emerged. Unlike most existing IP protection strategies that concentrate on restricting direct access to the model, our study addresses an extended DNN IP issue: applicability authorization, aiming to prevent the misuse of learned knowledge, particularly in unauthorized transfer learning scenarios. We propose Non-Transferable Pruning (NTP), a novel IP protection method that leverages model pruning to control a pretrained DNN's transferability to unauthorized data domains. Selective pruning can deliberately diminish a model's suitability on unauthorized domains, even with full fine-tuning. Specifically, our framework employs the alternating direction method of multipliers (ADMM) for optimizing both the model sparsity and an innovative non-transferable learning loss, augmented with Fisher space discriminative regularization, to constrain the model's generalizability to the target dataset. We also propose a novel, effective metric to measure the model non-transferability: Area Under the Sample-wise Learning Curve (SLC-AUC). This metric facilitates consideration of full fine-tuning across various sample sizes. Experimental results demonstrate that NTP significantly surpasses the state-of-the-art non-transferable learning methods, with an average SLC-AUC of $-0.54$ across diverse pairs of source and target domains, indicating that models trained with NTP are not suited for transfer learning to unauthorized target domains. The efficacy of NTP is validated in both supervised and self-supervised learning contexts, confirming its applicability in real-world scenarios.

GraphCroc: Cross-Correlation Autoencoder for Graph Structural Reconstruction

Oct 04, 2024

Abstract:Graph-structured data is integral to many applications, prompting the development of various graph representation methods. Graph autoencoders (GAEs), in particular, reconstruct graph structures from node embeddings. Current GAE models primarily utilize self-correlation to represent graph structures and focus on node-level tasks, often overlooking multi-graph scenarios. Our theoretical analysis indicates that self-correlation generally falls short in accurately representing specific graph features such as islands, symmetrical structures, and directional edges, particularly in smaller or multiple graph contexts. To address these limitations, we introduce a cross-correlation mechanism that significantly enhances the GAE representational capabilities. Additionally, we propose GraphCroc, a new GAE that supports flexible encoder architectures tailored for various downstream tasks and ensures robust structural reconstruction, through a mirrored encoding-decoding process. This model also tackles the challenge of representation bias during optimization by implementing a loss-balancing strategy. Both theoretical analysis and numerical evaluations demonstrate that our methodology significantly outperforms existing self-correlation-based GAEs in graph structure reconstruction.
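The limitation of self-correlation with directional edges can be seen in a small sketch. It assumes the standard inner-product GAE decoder, sigmoid(Z Zᵀ), for self-correlation. For cross-correlation it assumes a decoder with two separate embedding sets, sigmoid(P Qᵀ); the abstract does not give GraphCroc's exact formulation. All embeddings and helper names are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def correlate(left, right):
    """Edge probabilities sigmoid(left @ right^T) for lists of row vectors."""
    return [[sigmoid(sum(a * b for a, b in zip(u, v))) for v in right] for u in left]


def is_symmetric(matrix, tol=1e-12):
    n = len(matrix)
    return all(abs(matrix[i][j] - matrix[j][i]) <= tol
               for i in range(n) for j in range(n))


# Hypothetical 2-D embeddings for a two-node graph.
Z = [[1.0, 0.0], [0.0, 1.0]]   # single embedding set (self-correlation)
P = [[1.0, 0.0], [0.0, 1.0]]   # "source" embeddings (cross-correlation)
Q = [[0.0, -2.0], [2.0, 0.0]]  # "target" embeddings (cross-correlation)

self_corr = correlate(Z, Z)    # Z Z^T is symmetric for any Z
cross_corr = correlate(P, Q)   # P Q^T need not be symmetric

print(is_symmetric(self_corr), is_symmetric(cross_corr))
```

Because Z Zᵀ is symmetric for any Z, the self-correlation decoder assigns the same probability to edge i→j and edge j→i. In the cross-correlation sketch, edge 0→1 scores above 0.5 while edge 1→0 scores below it, so a directed edge becomes representable.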

VertexSerum: Poisoning Graph Neural Networks for Link Inference

Aug 02, 2023

Abstract:Graph neural networks (GNNs) have brought superb performance to various applications utilizing graph structural data, such as social analysis and fraud detection. The graph links, e.g., social relationships and transaction history, are sensitive and valuable information, which raises privacy concerns when using GNNs. To exploit these vulnerabilities, we propose VertexSerum, a novel graph poisoning attack that increases the effectiveness of graph link stealing by amplifying the link connectivity leakage. To infer node adjacency more accurately, we propose an attention mechanism that can be embedded into the link detection network. Our experiments demonstrate that VertexSerum significantly outperforms the SOTA link inference attack, improving the AUC scores by an average of $9.8\%$ across four real-world datasets and three different GNN structures. Furthermore, our experiments reveal the effectiveness of VertexSerum in both black-box and online learning settings, further validating its applicability in real-world scenarios.

EMShepherd: Detecting Adversarial Samples via Side-channel Leakage

Mar 27, 2023

Abstract:Deep Neural Networks (DNNs) are vulnerable to adversarial perturbations: small changes crafted deliberately on the input to mislead the model into wrong predictions. Adversarial attacks have disastrous consequences for deep learning-empowered critical applications. Existing defense and detection techniques both require extensive knowledge of the model, testing inputs, and even execution details. They are not viable for general deep learning implementations where the model internals are unknown, a common 'black-box' scenario for model users. Inspired by the fact that electromagnetic (EM) emanations of a model inference are dependent on both operations and data and may contain footprints of different input classes, we propose a framework, EMShepherd, to capture EM traces of model execution, process the traces, and exploit them for adversarial detection. Only benign samples and their EM traces are used to train the adversarial detector: a set of EM classifiers and class-specific unsupervised anomaly detectors. When the victim model system is under attack by an adversarial example, the model execution will be different from executions for the known classes, and the EM trace will be different. We demonstrate that our air-gapped EMShepherd can effectively detect different adversarial attacks on a commonly used FPGA deep learning accelerator for both Fashion MNIST and CIFAR-10 datasets. It achieves a 100% detection rate on most types of adversarial samples, which is comparable to the state-of-the-art 'white-box' software-based detectors.

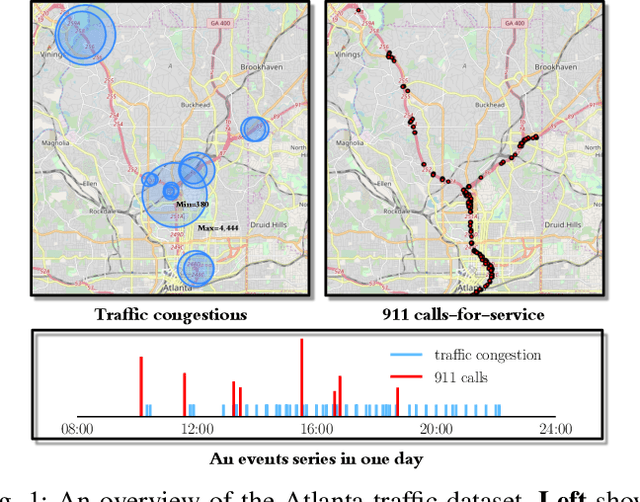

Spatio-Temporal Point Processes with Attention for Traffic Congestion Event Modeling

May 15, 2020

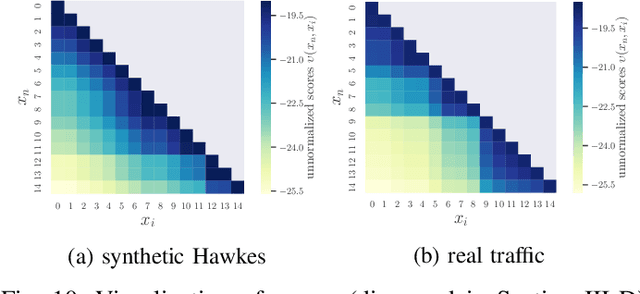

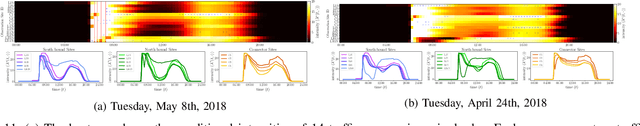

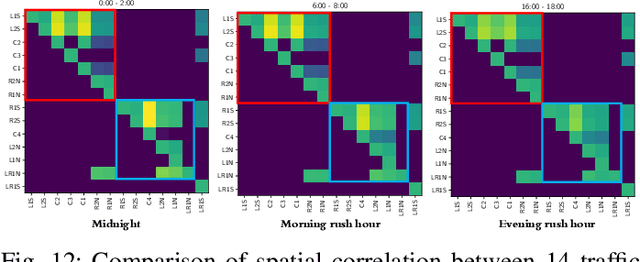

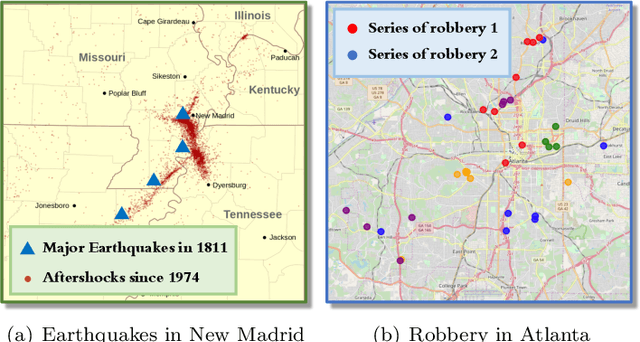

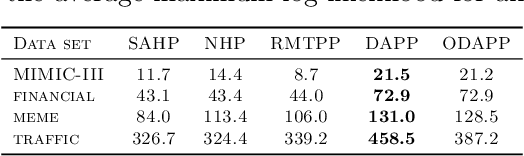

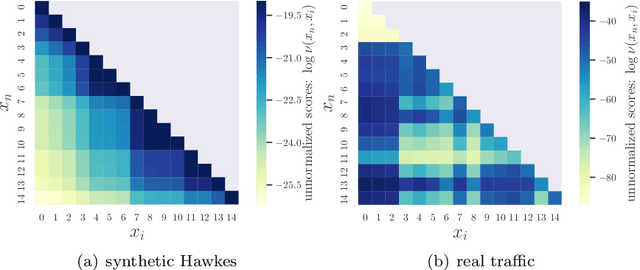

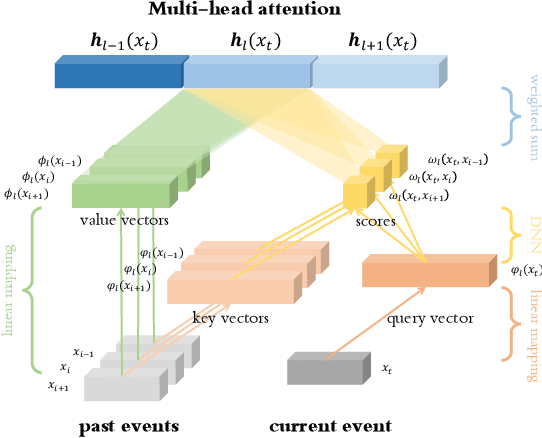

Abstract:We present a novel framework for modeling traffic congestion events over road networks based on new mutually exciting spatio-temporal point process models with attention mechanisms and neural network embeddings. Using multi-modal data that combines count data from traffic sensors with police reports of traffic incidents, we aim to capture two types of triggering effect for congestion events. Current traffic congestion at one location may cause future congestion over the road network, and traffic incidents may cause congestion to spread. To capture the non-homogeneous temporal dependence of the event on the past, we introduce a novel attention-based mechanism based on neural network embeddings for the point process model. To incorporate the directional spatial dependence induced by the road network, we adapt the "tail-up" model from the context of spatial statistics to the traffic network setting. We demonstrate the superior performance of our approach compared to the state-of-the-art methods for both synthetic and real data.

Deep Attention Spatio-Temporal Point Processes

Feb 20, 2020

Abstract:We present a novel attention-based sequential model for mutually dependent spatio-temporal discrete event data, which is a versatile framework for capturing the non-homogeneous influence of events. We go beyond the assumption that the influence of the historical event (causing an upward or downward jump in the intensity function) will fade monotonically over time, which is a key assumption made by many widely-used point process models, including those based on Recurrent Neural Networks (RNNs). We borrow the idea from the attention model based on a probabilistic score function, which leads to a flexible representation of the intensity function and is highly interpretable. We demonstrate the superior performance of our approach compared to the state-of-the-art for both synthetic and real data.
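The monotone-fade assumption mentioned above can be illustrated with the classic exponential-kernel Hawkes process, in which each past event adds a jump to the intensity that decays steadily with elapsed time. This is a minimal sketch of that baseline assumption only, not the attention-based model proposed in the paper. The parameter values `mu`, `alpha`, `beta` and the event times are hypothetical.

```python
import math


def hawkes_intensity(t, history, mu=0.2, alpha=0.8, beta=1.0):
    """Exponential-kernel Hawkes intensity at time t.

    lambda(t) = mu + sum over past events t_i < t of alpha * exp(-beta * (t - t_i))
    """
    return mu + sum(alpha * math.exp(-beta * (t - ti)) for ti in history if ti < t)


events = [1.0, 2.5]                         # hypothetical past event times
just_after = hawkes_intensity(2.6, events)  # shortly after the last event
later = hawkes_intensity(5.0, events)       # influence has partly decayed
much_later = hawkes_intensity(50.0, events) # influence has essentially vanished

print(just_after, later, much_later)
```

Under this kernel, an event's contribution can only shrink as time passes, and the intensity returns to the base rate `mu`. The abstract's point is that an attention-based score need not follow such a fixed decay shape.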
