Yao Xie

CoreFlow: Low-Rank Matrix Generative Models

Apr 27, 2026Abstract:Learning matrix-valued distributions from high-dimensional and possibly incomplete training data is challenging: ambient-space generative modeling is computationally expensive and statistically fragile when the matrix dimension is large but the sample size is limited. We propose CoreFlow, a geometry-preserving low-rank flow model that learns shared row/column subspaces across the matrix distribution, and then trains a continuous normalizing flow only on the induced low-dimensional core. CoreFlow is designed for settings where shared low-rank matrix geometry is present, especially in high-dimensional limited-sample regimes. This separates shared matrix geometry from sample-specific variation, preserves matrix structure, and substantially improves training efficiency. The same framework also handles incomplete training matrices through masked Riemannian updates and iterative completion. Across real and synthetic benchmarks, CoreFlow substantially improves spectral and moment-level generation quality in few-sample regimes while remaining competitive in data-rich settings, even under compression to 9% of the ambient dimension and with up to 40% missing training entries.

Generative models for decision-making under distributional shift

Apr 06, 2026Abstract:Many data-driven decision problems are formulated using a nominal distribution estimated from historical data, while performance is ultimately determined by a deployment distribution that may be shifted, context-dependent, partially observed, or stress-induced. This tutorial presents modern generative models, particularly flow- and score-based methods, as mathematical tools for constructing decision-relevant distributions. From an operations research perspective, their primary value lies not in unconstrained sample synthesis but in representing and transforming distributions through transport maps, velocity fields, score fields, and guided stochastic dynamics. We present a unified framework based on pushforward maps, continuity, Fokker-Planck equations, Wasserstein geometry, and optimization in probability space. Within this framework, generative models can be used to learn nominal uncertainty, construct stressed or least-favorable distributions for robustness, and produce conditional or posterior distributions under side information and partial observation. We also highlight representative theoretical guarantees, including forward-reverse convergence for iterative flow models, first-order minimax analysis in transport-map space, and error-transfer bounds for posterior sampling with generative priors. The tutorial provides a principled introduction to using generative models for scenario generation, robust decision-making, uncertainty quantification, and related problems under distributional shift.

Mixed-Integer Programming for Change-point Detection

Feb 12, 2026Abstract:We present a new mixed-integer programming (MIP) approach for offline multiple change-point detection by casting the problem as a globally optimal piecewise linear (PWL) fitting problem. Our main contribution is a family of strengthened MIP formulations whose linear programming (LP) relaxations admit integral projections onto the segment assignment variables, which encode the segment membership of each data point. This property yields provably tighter relaxations than existing formulations for offline multiple change-point detection. We further extend the framework to two settings of active research interest: (i) multidimensional PWL models with shared change-points, and (ii) sparse change-point detection, where only a subset of dimensions undergo structural change. Extensive computational experiments on benchmark real-world datasets demonstrate that the proposed formulations achieve reductions in solution times under both $\ell_1$ and $\ell_2$ loss functions in comparison to the state-of-the-art.

Assured Autonomy: How Operations Research Powers and Orchestrates Generative AI Systems

Dec 30, 2025Abstract:Generative artificial intelligence (GenAI) is shifting from conversational assistants toward agentic systems -- autonomous decision-making systems that sense, decide, and act within operational workflows. This shift creates an autonomy paradox: as GenAI systems are granted greater operational autonomy, they should, by design, embody more formal structure, more explicit constraints, and stronger tail-risk discipline. We argue stochastic generative models can be fragile in operational domains unless paired with mechanisms that provide verifiable feasibility, robustness to distribution shift, and stress testing under high-consequence scenarios. To address this challenge, we develop a conceptual framework for assured autonomy grounded in operations research (OR), built on two complementary approaches. First, flow-based generative models frame generation as deterministic transport characterized by an ordinary differential equation, enabling auditability, constraint-aware generation, and connections to optimal transport, robust optimization, and sequential decision control. Second, operational safety is formulated through an adversarial robustness lens: decision rules are evaluated against worst-case perturbations within uncertainty or ambiguity sets, making unmodeled risks part of the design. This framework clarifies how increasing autonomy shifts OR's role from solver to guardrail to system architect, with responsibility for control logic, incentive protocols, monitoring regimes, and safety boundaries. These elements define a research agenda for assured autonomy in safety-critical, reliability-sensitive operational domains.

Beyond Procedural Compliance: Human Oversight as a Dimension of Well-being Efficacy in AI Governance

Dec 15, 2025Abstract:Major AI ethics guidelines and laws, including the EU AI Act, call for effective human oversight, but do not define it as a distinct and developable capacity. This paper introduces human oversight as a well-being capacity, situated within the emerging Well-being Efficacy framework. The concept integrates AI literacy, ethical discernment, and awareness of human needs, acknowledging that some needs may be conflicting or harmful. Because people inevitably project desires, fears, and interests into AI systems, oversight requires the competence to examine and, when necessary, restrain problematic demands. The authors argue that the sustainable and cost-effective development of this capacity depends on its integration into education at every level, from professional training to lifelong learning. The frame of human oversight as a well-being capacity provides a practical path from high-level regulatory goals to the continuous cultivation of human agency and responsibility essential for safe and ethical AI. The paper establishes a theoretical foundation for future research on the pedagogical implementation and empirical validation of well-being effectiveness in multiple contexts.

Worst-case generation via minimax optimization in Wasserstein space

Dec 09, 2025Abstract:Worst-case generation plays a critical role in evaluating robustness and stress-testing systems under distribution shifts, in applications ranging from machine learning models to power grids and medical prediction systems. We develop a generative modeling framework for worst-case generation for a pre-specified risk, based on min-max optimization over continuous probability distributions, namely the Wasserstein space. Unlike traditional discrete distributionally robust optimization approaches, which often suffer from scalability issues, limited generalization, and costly worst-case inference, our framework exploits the Brenier theorem to characterize the least favorable (worst-case) distribution as the pushforward of a transport map from a continuous reference measure, enabling a continuous and expressive notion of risk-induced generation beyond classical discrete DRO formulations. Based on the min-max formulation, we propose a Gradient Descent Ascent (GDA)-type scheme that updates the decision model and the transport map in a single loop, establishing global convergence guarantees under mild regularity assumptions and possibly without convexity-concavity. We also propose to parameterize the transport map using a neural network that can be trained simultaneously with the GDA iterations by matching the transported training samples, thereby achieving a simulation-free approach. The efficiency of the proposed method as a risk-induced worst-case generator is validated by numerical experiments on synthetic and image data.

Scalable Gaussian Processes with Low-Rank Deep Kernel Decomposition

May 24, 2025

Abstract:Kernels are key to encoding prior beliefs and data structures in Gaussian process (GP) models. The design of expressive and scalable kernels has garnered significant research attention. Deep kernel learning enhances kernel flexibility by feeding inputs through a neural network before applying a standard parametric form. However, this approach remains limited by the choice of base kernels, inherits high inference costs, and often demands sparse approximations. Drawing on Mercer's theorem, we introduce a fully data-driven, scalable deep kernel representation where a neural network directly represents a low-rank kernel through a small set of basis functions. This construction enables highly efficient exact GP inference in linear time and memory without invoking inducing points. It also supports scalable mini-batch training based on a principled variational inference framework. We further propose a simple variance correction procedure to guard against overconfidence in uncertainty estimates. Experiments on synthetic and real-world data demonstrate the advantages of our deep kernel GP in terms of predictive accuracy, uncertainty quantification, and computational efficiency.

PO-Flow: Flow-based Generative Models for Sampling Potential Outcomes and Counterfactuals

May 21, 2025Abstract:We propose PO-Flow, a novel continuous normalizing flow (CNF) framework for causal inference that jointly models potential outcomes and counterfactuals. Trained via flow matching, PO-Flow provides a unified framework for individualized potential outcome prediction, counterfactual predictions, and uncertainty-aware density learning. Among generative models, it is the first to enable density learning of potential outcomes without requiring explicit distributional assumptions (e.g., Gaussian mixtures), while also supporting counterfactual prediction conditioned on factual outcomes in general observational datasets. On benchmarks such as ACIC, IHDP, and IBM, it consistently outperforms prior methods across a range of causal inference tasks. Beyond that, PO-Flow succeeds in high-dimensional settings, including counterfactual image generation, demonstrating its broad applicability.

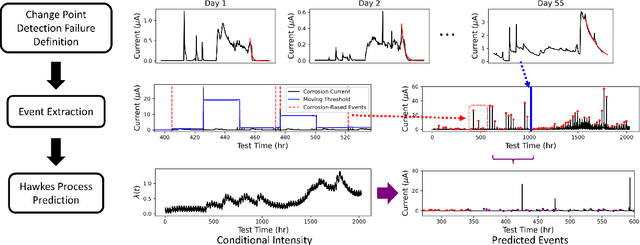

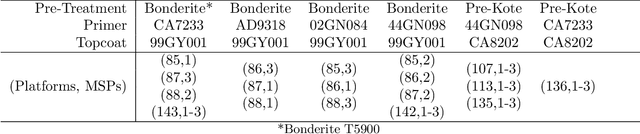

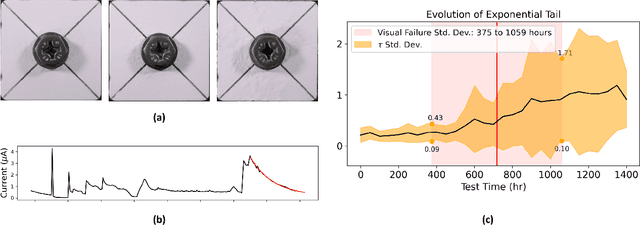

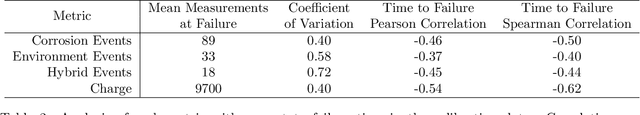

Modeling Discrete Coating Degradation Events via Hawkes Processes

Apr 13, 2025

Abstract:Forecasting the degradation of coated materials has long been a topic of critical interest in engineering, as it has enormous implications for both system maintenance and sustainable material use. Material degradation is affected by many factors, including the history of corrosion and characteristics of the environment, which can be measured by high-frequency sensors. However, the high volume of data produced by such sensors can inhibit efficient modeling and prediction. To alleviate this issue, we propose novel metrics for representing material degradation, taking the form of discrete degradation events. These events maintain the statistical properties of continuous sensor readings, such as correlation with time to coating failure and coefficient of variation at failure, but are composed of orders of magnitude fewer measurements. To forecast future degradation of the coating system, a marked Hawkes process models the events. We use the forecast of degradation to predict a future time of failure, exhibiting superior performance to the approach based on direct modeling of galvanic corrosion using continuous sensor measurements. While such maintenance is typically done on a regular basis, degradation models can enable informed condition-based maintenance, reducing unnecessary excess maintenance and preventing unexpected failures.

Graph-Based Prediction Models for Data Debiasing

Apr 12, 2025

Abstract:Bias in data collection, arising from both under-reporting and over-reporting, poses significant challenges in critical applications such as healthcare and public safety. In this work, we introduce Graph-based Over- and Under-reporting Debiasing (GROUD), a novel graph-based optimization framework that debiases reported data by jointly estimating the true incident counts and the associated reporting bias probabilities. By modeling the bias as a smooth signal over a graph constructed from geophysical or feature-based similarities, our convex formulation not only ensures a unique solution but also comes with theoretical recovery guarantees under certain assumptions. We validate GROUD on both challenging simulated experiments and real-world datasets -- including Atlanta emergency calls and COVID-19 vaccine adverse event reports -- demonstrating its robustness and superior performance in accurately recovering debiased counts. This approach paves the way for more reliable downstream decision-making in systems affected by reporting irregularities.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge