Robin Murphy

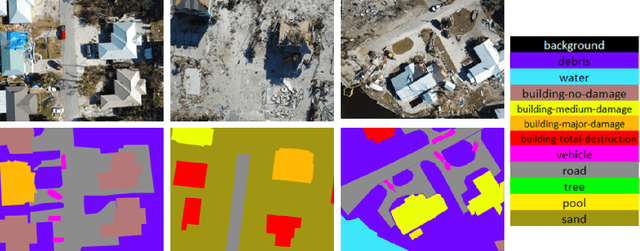

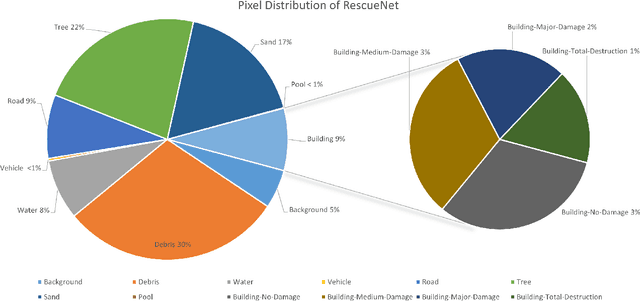

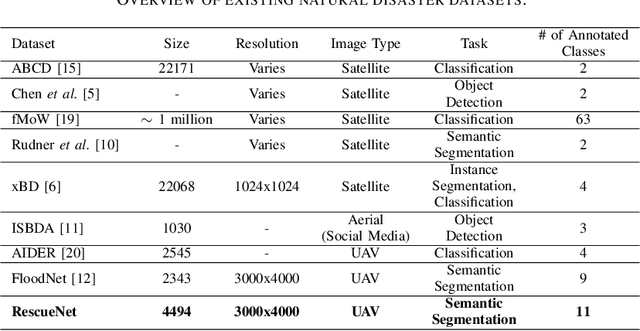

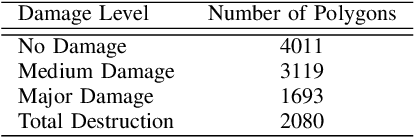

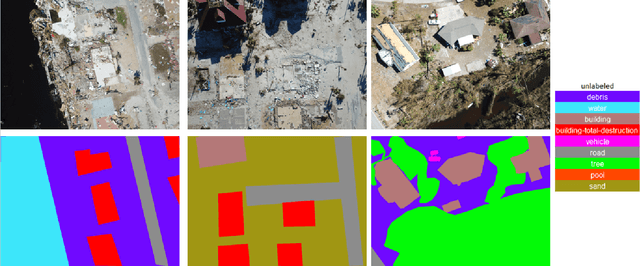

RescueNet: A High Resolution UAV Semantic Segmentation Benchmark Dataset for Natural Disaster Damage Assessment

Feb 24, 2022

Abstract: Due to climate change, we can observe a recent surge of natural disasters all around the world. These disasters have a devastating impact on both nature and human lives. Economic losses are growing due to hurricanes. A quick response from rescue teams is crucial for saving human lives and reducing economic cost. Deep learning based computer vision techniques can help in scene understanding and help rescue teams with precise damage assessment. Semantic segmentation, an active research area in computer vision, assigns a label to each pixel of an image, and can therefore be a valuable tool in the effort to reduce the impact of hurricanes. Unfortunately, available datasets for natural disaster damage assessment lack detailed annotation of the affected areas, and therefore do not support deep learning models in total damage assessment. To this end, we introduce RescueNet, a high resolution post-disaster dataset for semantic segmentation to assess damage after natural disasters. RescueNet consists of post-disaster images collected after Hurricane Michael. The data was collected using Unmanned Aerial Vehicles (UAVs) from several areas impacted by the hurricane. The uniqueness of RescueNet comes from the fact that this dataset provides high resolution post-disaster images and comprehensive annotation of each image. While most existing datasets offer annotation of only part of the scene, like building, road, or river, RescueNet provides pixel-level annotation of all the classes including building, road, pool, tree, debris, and so on. We further analyze the usefulness of the dataset by implementing state-of-the-art segmentation models on RescueNet. The experiments demonstrate that our dataset can be valuable in further improvement of the existing methodologies for natural disaster damage assessment.
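As a rough illustration of what pixel-level annotation makes possible, the sketch below scores a predicted label map against a ground-truth map using per-class intersection over union (IoU), a metric commonly used for semantic segmentation. The abstract does not say which metric the authors report, so the metric choice, the function names, and the tiny 2x3 label maps are all assumptions made for this example.

```python
def per_class_iou(pred, truth, num_classes):
    """Per-class IoU for two equally sized 2D label maps (lists of rows).

    Returns one value per class, or None for a class that appears in
    neither map. This is an illustrative helper, not the paper's code.
    """
    inter = [0] * num_classes
    union = [0] * num_classes
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p == t:
                # Pixel agrees: counts toward intersection and union of p.
                inter[p] += 1
                union[p] += 1
            else:
                # Pixel disagrees: counts toward the union of both classes.
                union[p] += 1
                union[t] += 1
    return [i / u if u else None for i, u in zip(inter, union)]


def mean_iou(ious):
    """Average IoU over the classes that actually occur."""
    valid = [v for v in ious if v is not None]
    return sum(valid) / len(valid)


# Hypothetical 2x3 label maps with three made-up class ids (0, 1, 2).
truth = [[0, 0, 1],
         [1, 2, 2]]
pred = [[0, 1, 1],
        [1, 2, 0]]

ious = per_class_iou(pred, truth, num_classes=3)
print(ious)
print(mean_iou(ious))
```

Averaging over classes rather than over pixels keeps small classes from being drowned out by large ones, which is one reason this style of metric is often preferred for scenes with imbalanced classes.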

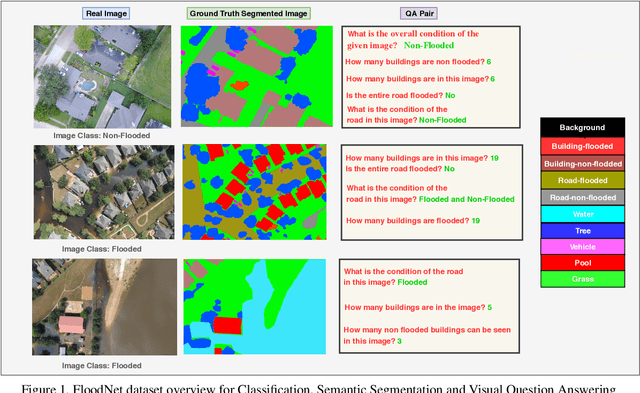

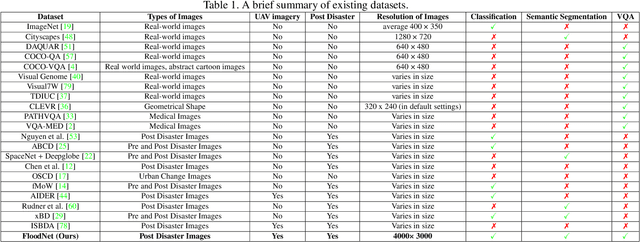

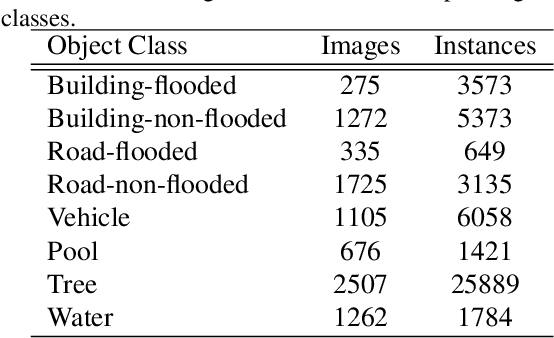

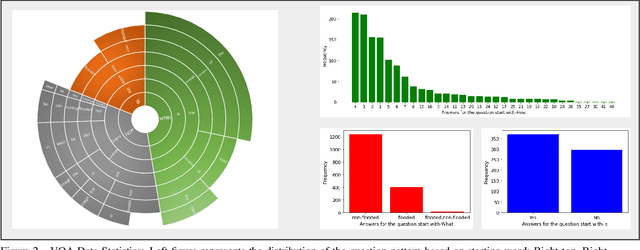

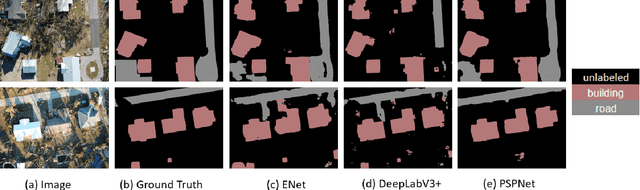

FloodNet: A High Resolution Aerial Imagery Dataset for Post Flood Scene Understanding

Dec 05, 2020

Abstract: Visual scene understanding is the core task in making any crucial decision in any computer vision system. Although popular computer vision datasets like Cityscapes, MS-COCO, and PASCAL provide good benchmarks for several tasks (e.g. image classification, segmentation, object detection), these datasets are hardly suitable for post-disaster damage assessment. On the other hand, existing natural disaster datasets include mainly satellite imagery, which has low spatial resolution and a long revisit period. Therefore, they offer little scope for quick and efficient damage assessment. Unmanned Aerial Vehicles (UAVs) can effortlessly access difficult places during any disaster and collect the high resolution imagery that is required for the aforementioned computer vision tasks. To address these issues we present a high resolution UAV imagery dataset, FloodNet, captured after Hurricane Harvey. This dataset shows the post-flood damage in the affected areas. The images are labeled pixel-wise for the semantic segmentation task, and questions are produced for the task of visual question answering. FloodNet poses several challenges, including detection of flooded roads and buildings and distinguishing between natural water and flood water. With the advancement of deep learning algorithms, we can analyze the impact of any disaster and gain a precise understanding of the affected areas. In this paper, we compare and contrast the performance of baseline methods for image classification, semantic segmentation, and visual question answering on our dataset.

The Role of Robotics in Infectious Disease Crises

Oct 19, 2020

Abstract: The recent coronavirus pandemic has highlighted the many challenges faced by the healthcare, public safety, and economic systems when confronted with a surge in patients that require intensive treatment and a population that must be quarantined or shelter in place. The most obvious and pressing challenge is taking care of acutely ill patients while managing the spread of infection within the care facility, but this is just the tip of the iceberg if we consider what could be done to prepare in advance for future pandemics. Beyond the obvious need for strengthening medical knowledge and preparedness, there is a complementary need to anticipate and address the engineering challenges associated with infectious disease emergencies. Robotic technologies are inherently programmable, and robotic systems have been adapted and deployed, to some extent, in the current crisis for such purposes as transport, logistics, and disinfection. As technical capabilities advance and as the installed base of robotic systems increases, they could play a much more significant role in future crises. This report is the outcome of a virtual workshop co-hosted by the National Academy of Engineering (NAE) and the Computing Community Consortium (CCC) held on July 9-10, 2020. The workshop consisted of over forty participants including representatives from the engineering/robotics community, clinicians, critical care workers, public health and safety experts, and emergency responders. The report identifies key challenges faced by healthcare responders and the general population and then identifies robotic/technological responses to these challenges. It then identifies the key research/knowledge barriers that need to be addressed in developing effective, scalable solutions. Finally, the report ends with recommendations on how to implement this strategy.

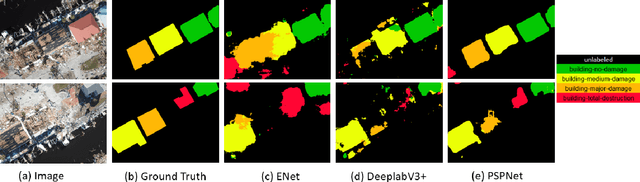

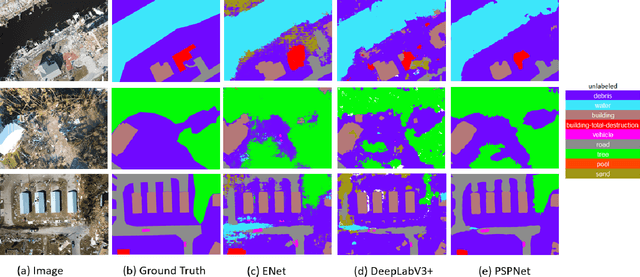

Comprehensive Semantic Segmentation on High Resolution UAV Imagery for Natural Disaster Damage Assessment

Sep 06, 2020

Abstract: In this paper, we present a large-scale Hurricane Michael dataset for visual perception in disaster scenarios, and analyze state-of-the-art deep neural network models for semantic segmentation. The dataset consists of around 2000 high-resolution aerial images, with annotated ground-truth data for semantic segmentation. We discuss the challenges of the dataset and train state-of-the-art methods on it to evaluate how well these methods can recognize disaster situations. Finally, we discuss challenges for future research.

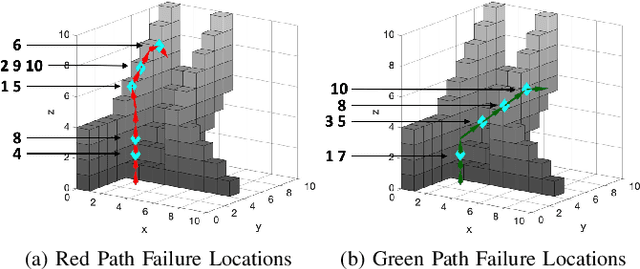

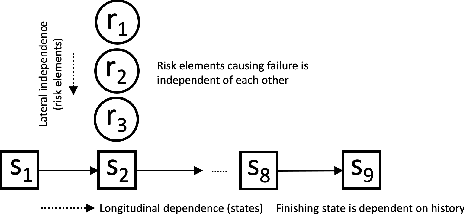

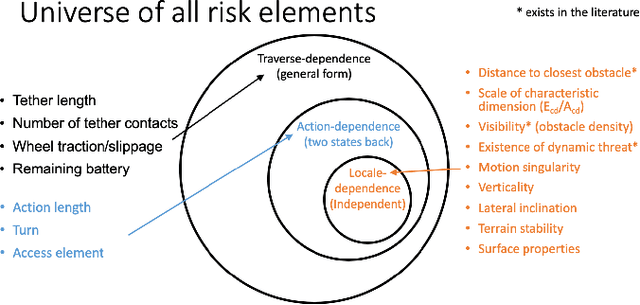

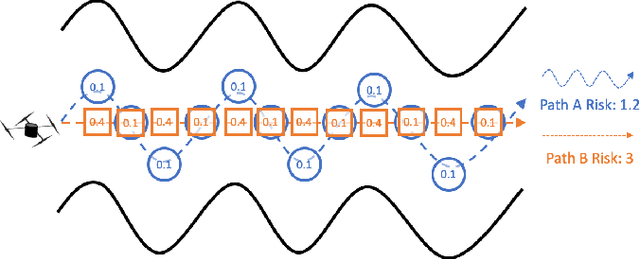

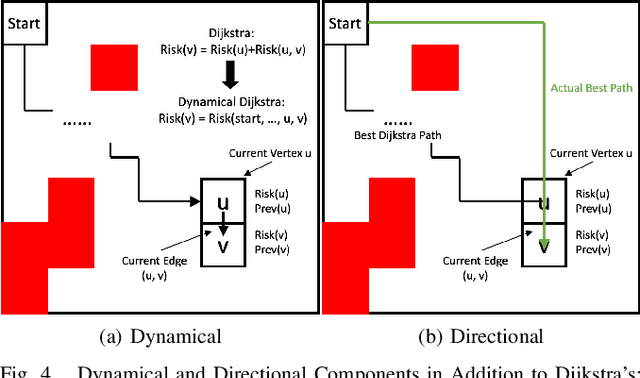

Robot Risk-Awareness by Formal Risk Reasoning and Planning

Sep 09, 2019

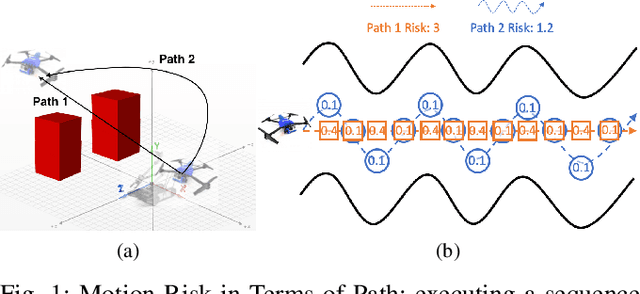

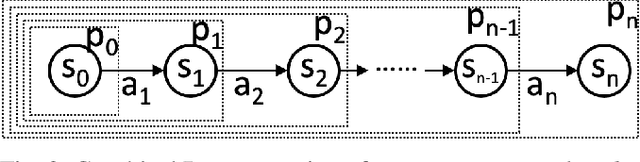

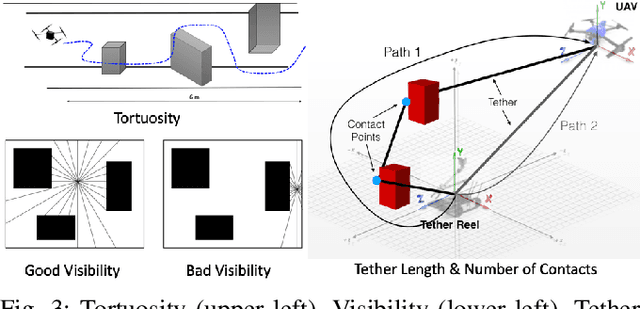

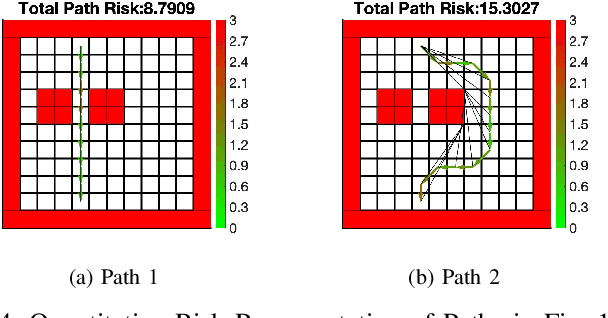

Abstract: This paper proposes a formal robot motion risk reasoning framework and develops a risk-aware path planner that minimizes the proposed risk. While robots locomoting in unstructured or confined environments face a variety of risks, existing work on risk focuses only on collision with obstacles. Such risk is currently addressed only in an ad hoc manner. Without a formal definition, ill-supported properties, e.g. additive or Markovian, are simply assumed. Relying on an incomplete and inaccurate representation of risk, risk-aware planners use ad hoc risk functions or chance constraints to minimize risk. The former inevitably has low fidelity when modeling risk, while the latter conservatively generates a feasible path within a probability bound. Using propositional logic and probability theory, the proposed motion risk reasoning framework is formal. Building upon a universe of risk elements of interest, three major risk categories, i.e. locale-, action-, and traverse-dependent, are introduced. A risk-aware planner is also developed to plan a minimum-risk path based on the newly proposed risk framework. Results of the risk reasoning and planning are validated in physical experiments in real-world unstructured or confined environments. With the proposed fundamental risk reasoning framework, the safety of robot locomotion can be explicitly reasoned about, quantified, and compared. The risk-aware planner finds a safe path in terms of the newly proposed risk framework and enables more risk-aware robot behavior in unstructured or confined environments.
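To see why the additive property mentioned above can be ill-supported once risk is read as a probability, the sketch below compares two ways of combining per-step risks along a path. It is not the paper's formulation. It only applies the basic probability identity that the chance of at least one failure is one minus the chance of surviving every step, assuming each per-step value is a failure probability conditioned on having survived all earlier steps. The function names and the three step values are hypothetical.

```python
from math import prod


def path_failure_probability(step_risks):
    """Probability of at least one failure along the path.

    Each entry is assumed to be the failure probability of that step,
    conditioned on the robot having survived all earlier steps.
    """
    return 1.0 - prod(1.0 - r for r in step_risks)


def additive_risk(step_risks):
    """Naive additive combination, shown only for comparison."""
    return sum(step_risks)


# Hypothetical per-step risks for a three-step path.
steps = [0.5, 0.4, 0.3]

print(path_failure_probability(steps))  # stays within [0, 1]
print(additive_risk(steps))             # exceeds 1, so not a probability
```

For small per-step risks the two numbers are close, which may be why the additive shortcut often goes unnoticed; the gap grows as individual steps become riskier or the path gets longer.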

Explicit Motion Risk Representation

Apr 16, 2019

Abstract: This paper presents a formal definition and explicit representation of robot motion risk. Robot motion risk has not yet been formally defined, although it is already used in motion and path planning. Risk is either implicitly represented as model uncertainty using probabilistic approaches, where the definition of risk is somewhat avoided, or explicitly modeled as a simple function of states, without a formal definition. In this work, we provide formal reasoning behind what risk is for robot motion and propose a formal definition of risk in terms of a sequence of motions, namely a path. Mathematical approaches to represent motion risk are also presented, which are in accordance with our risk definition and properties. The definition and representation of risk provide a meaningful way to evaluate or construct robot motion or path plans. The understanding of risk is of even greater interest for the search and rescue community: the deconstructed environments cast extra risk onto the robots, since they are working under extreme conditions. A proper risk representation has the potential to reduce robot failure in the field.

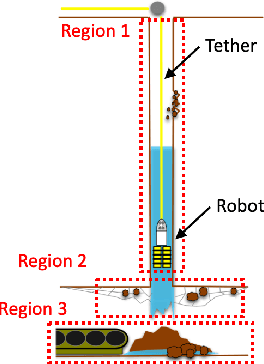

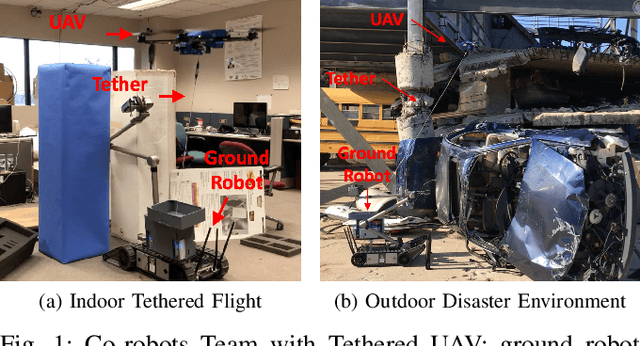

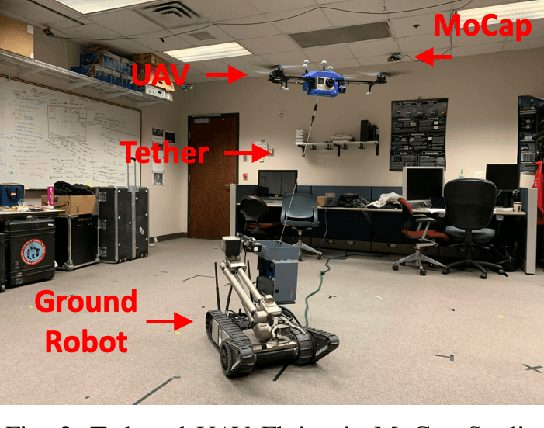

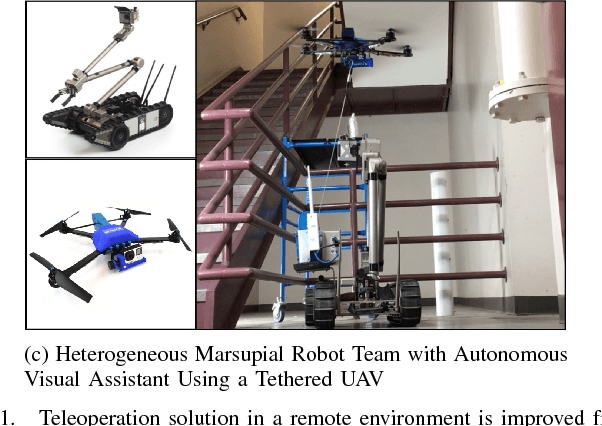

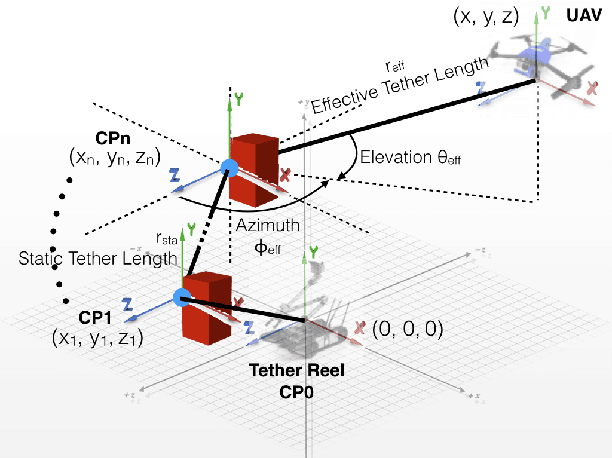

Benchmarking Tether-based UAV Motion Primitives

Apr 16, 2019

Abstract: This paper proposes and benchmarks two tether-based motion primitives for tethered UAVs to execute autonomous flight with proprioception only. Tethered UAVs have been studied mainly due to power and safety considerations. The tether is either not considered in the UAV motion (the vehicle is treated the same as a free-flying UAV) or considered only in terms of station-keeping and high-speed steady flight. However, feedback from and control over the tether configuration could be utilized as a set of navigational tools for autonomous flight, especially in GPS-denied environments and without vision-based exteroception. In this work, two tether-based motion primitives are proposed, which can enable autonomous flight of a tethered UAV. The proposed motion primitives are implemented on a physical tethered UAV for autonomous path execution with motion capture ground truth. The navigational performance is quantified and compared. The proposed motion primitives make the tethered UAV a mobile and safe autonomous robot platform. The benchmarking results suggest appropriate usage of the two motion primitives for tethered UAVs with different path plans.

Explicit-risk-aware Path Planning with Reward Maximization

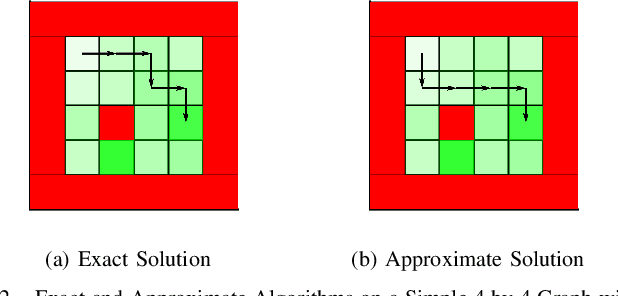

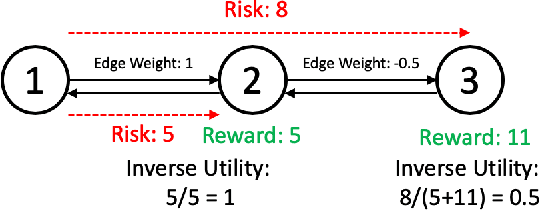

Mar 07, 2019

Abstract: This paper develops a path planner that minimizes risk (e.g., motion execution) while maximizing accumulated reward (e.g., quality of sensor viewpoint), motivated by visual assistance or tracking scenarios in unstructured or confined environments. In these scenarios, the robot should maintain the best viewpoint as it moves to the goal. However, in unstructured or confined environments, some paths may increase the risk of collision; therefore there is a tradeoff between risk and reward. Conventional state-dependent risk or probabilistic uncertainty modeling either does not consider path-level risk or is difficult to acquire. This risk-reward planner explicitly represents risk as a function of motion plans, i.e., paths. Without manual assignment of the negative impact to the planner caused by risk, this planner takes in a pre-established viewpoint quality map and plans the target location and the path leading to it simultaneously, in order to maximize overall reward along the entire path while minimizing risk. Exact and approximate algorithms are presented, whose solutions are further demonstrated on a physical tethered aerial vehicle. Beyond the visual assistance problem, the proposed framework also provides a new planning paradigm to address minimum-risk planning under dynamical risk and absence of substructure optimality and to balance the trade-off between reward and risk.

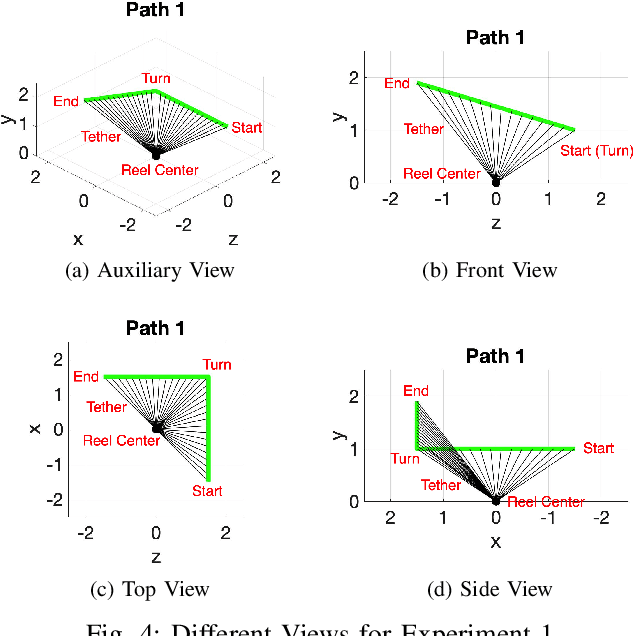

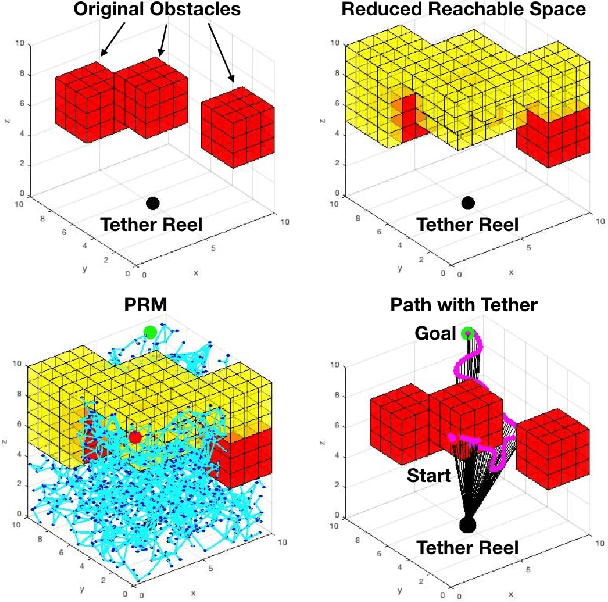

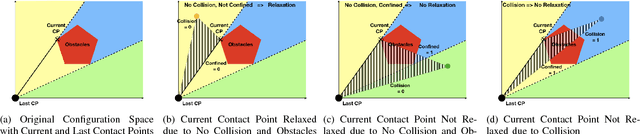

Motion Planning for a UAV with a Straight or Kinked Tether

Nov 06, 2018

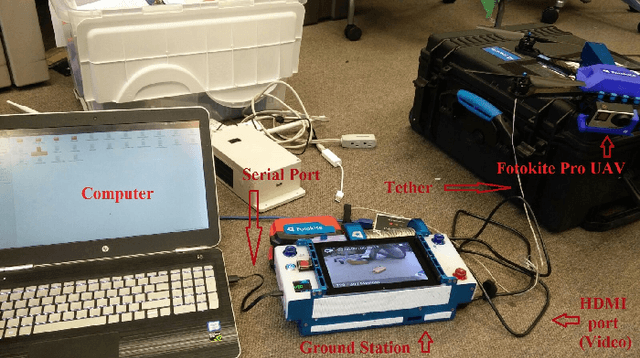

Abstract: This paper develops and compares two motion planning algorithms for a tethered UAV with and without the possibility of the tether contacting the confined and cluttered environment. Tethered aerial vehicles have been studied due to their advantages such as power duration, stability, and safety. However, the disadvantages brought in by the extra tether have not been well investigated by the robotic locomotion community, especially when the tethered agent is locomoting in a non-free space occupied by obstacles. In this work, we propose two motion planning frameworks that (1) reduce the reachable configuration space by taking into account the tether and (2) deliberately plan (and relax) the contact point(s) of the tether with the environment and enable a reachable configuration space equivalent to that of the non-tethered counterpart. Both methods are tested on a physical robot, Fotokite Pro. With our approaches, tethered aerial vehicles could find applications in confined and cluttered environments with obstacles as opposed to ideal free space, while still maintaining the advantages of using a tether. The motion planning strategies are particularly suitable for marsupial heterogeneous robotic teams, such as visual servoing/assisting for another mobile, tele-operated primary robot.

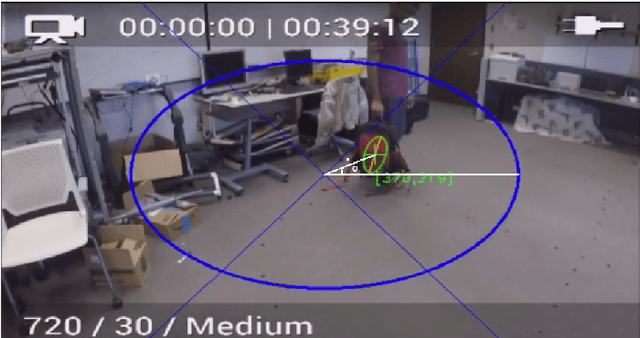

Visual Servoing of Unmanned Surface Vehicle from Small Tethered Unmanned Aerial Vehicle

Oct 09, 2017

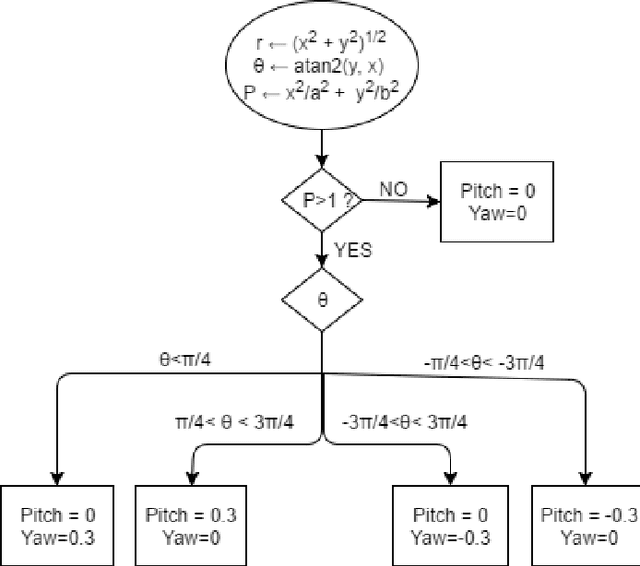

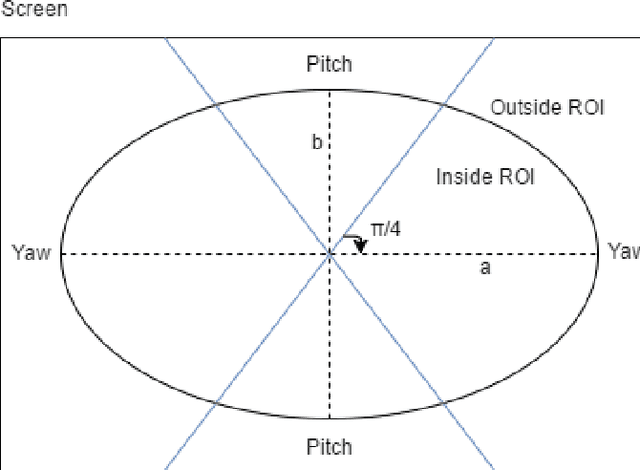

Abstract: This paper presents an algorithm and the implementation of a motor schema to aid the visual localization subsystem of the ongoing EMILY project at Texas A&M University. The EMILY project aims to team an Unmanned Surface Vehicle (USV) with an Unmanned Aerial Vehicle (UAV) to augment the search and rescue of marine casualties during an emergency response phase. The USV is designed to serve as a flotation device once it reaches the victims. A live video feed from the UAV is provided to the casualty responders, giving them a visual estimate of the USV's orientation and position to help with its navigation. One of the challenges involved with casualty response using a USV-UAV team is to simultaneously control the USV and track it. In this paper, we present an implemented solution to automate the UAV camera movements to keep the USV in view at all times. The proposed motor schema uses the USV's coordinates from the visual localization subsystem to control the UAV's camera movements and track the USV with minimal camera movements such that the USV is always in the camera's field of view.
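One simple way to keep a target in view while moving the camera as little as possible is a proportional correction with a deadband around the image center. The sketch below illustrates that idea only; it is not the motor schema from the paper, and the function name, the normalized command units, and the `deadband` and `gain` values are all assumptions chosen for the example.

```python
def camera_command(target_px, frame_size, deadband=0.2, gain=0.5):
    """Return (pan, tilt) commands in normalized units.

    target_px:  (x, y) pixel position of the tracked vehicle in the image.
    frame_size: (width, height) of the image in pixels.
    deadband:   fraction of the half-frame within which no command is sent.
    gain:       proportional gain applied to the normalized offset.
    """
    (x, y), (width, height) = target_px, frame_size
    # Offset of the target from the image center, normalized to [-1, 1].
    err_x = (x - width / 2) / (width / 2)
    err_y = (y - height / 2) / (height / 2)
    # Move only when the target drifts outside the central deadband,
    # which avoids small, constant camera adjustments.
    pan = gain * err_x if abs(err_x) > deadband else 0.0
    tilt = gain * err_y if abs(err_y) > deadband else 0.0
    return pan, tilt


# Target at the image center: the camera stays still.
print(camera_command((320, 240), (640, 480)))  # (0.0, 0.0)

# Target at the right edge: pan toward it, no tilt.
print(camera_command((640, 240), (640, 480)))  # (0.5, 0.0)
```

A wider deadband means fewer camera movements but lets the target drift closer to the edge of the frame before a correction is issued, so the two parameters trade stillness against safety margin.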
