Renjie He

RD-ViT: Recurrent-Depth Vision Transformer for Semantic Segmentation with Reduced Data Dependence Extending the Recurrent-Depth Transformer Architecture to Dense Prediction

May 05, 2026

Abstract:Vision Transformers (ViTs) achieve state-of-the-art segmentation accuracy but require large training datasets because each layer has unique parameters that must be learned independently. We present RD-ViT, a Recurrent-Depth Vision Transformer that adapts the Recurrent-Depth Transformer (RDT) architecture to dense prediction tasks, supporting both 2D and 3D inputs. RD-ViT replaces the deep stack of unique transformer blocks with a single shared block looped T times, augmented with LTI-stable state injection for guaranteed convergence, Adaptive Computation Time (ACT) for spatial compute allocation, depth-wise LoRA adaptation, and optional Mixture-of-Experts (MoE) feed-forward networks for category-specific specialization. We evaluate on the ACDC cardiac MRI segmentation benchmark in both 2D slice-level and 3D volumetric settings with exclusively real experiments executed in Google Colab. In 2D, RD-ViT outperforms standard ViT at 10% training data (Dice 0.774 vs 0.762) and at full data (0.882 vs 0.872). In 3D, RD-ViT with MoE achieves Dice 0.812 with 3.0M parameters, reaching 99.4% of standard ViT performance (0.817) at 53% of the parameter count. MoE expert utilization analysis reveals that different experts spontaneously specialize for different cardiac structures (RV, MYO, LV) without explicit routing supervision. ACT halting maps show higher compute allocation at cardiac boundaries, and the mean ponder time decreases from 2.6 to 1.4 iterations during training, demonstrating learned computational efficiency. Depth extrapolation enables inference with more loops than training without degradation. All code, notebooks, and results are publicly released.
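The results above are reported as Dice scores. For readers unfamiliar with the metric, the following is a minimal sketch of the standard per-class Dice coefficient, 2|P ∩ T| / (|P| + |T|), on a toy label sequence. It is not the paper's evaluation code: the function name `dice_score`, the label ids assigned to RV, MYO, and LV, the toy data, and the convention of returning 1.0 when a class is absent from both prediction and target are all assumptions made for illustration.

```python
def dice_score(pred, target, label):
    """Dice = 2|P ∩ T| / (|P| + |T|) for one class label over flat label lists."""
    pred_mask = [p == label for p in pred]
    target_mask = [t == label for t in target]
    # Count positions where both prediction and target carry this label.
    intersection = sum(1 for p, t in zip(pred_mask, target_mask) if p and t)
    total = sum(pred_mask) + sum(target_mask)
    if total == 0:
        # Assumed convention: a class absent from both counts as a perfect match.
        return 1.0
    return 2.0 * intersection / total


# Hypothetical label ids for the three cardiac structures (0 = background).
CLASSES = {1: "RV", 2: "MYO", 3: "LV"}

# Toy flattened segmentation maps, invented for this example.
pred = [0, 1, 1, 2, 2, 3]
target = [0, 1, 2, 2, 2, 3]

per_class = {name: dice_score(pred, target, label) for label, name in CLASSES.items()}
mean_dice = sum(per_class.values()) / len(per_class)
print(per_class, mean_dice)
```

Averaging over the foreground classes only, as done here, is one common choice; whether the paper averages per slice, per volume, or per class is not stated in the abstract.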

Passive Detection in Multi-Static ISAC Systems: Performance Analysis and Joint Beamforming Optimization

Jun 08, 2025

Abstract:This paper investigates the passive detection problem in multi-static integrated sensing and communication (ISAC) systems, where multiple sensing receivers (SRs) jointly detect a target using random unknown communication signals transmitted by a collaborative base station. Unlike traditional active detection, the considered passive detection does not require complete prior knowledge of the transmitted communication signals at each SR. First, we derive a generalized likelihood ratio test detector and conduct an asymptotic analysis of the detection statistic under the large-sample regime. We examine how the signal-to-noise ratios (SNRs) of the target paths and direct paths influence the detection performance. Then, we propose two joint transmit beamforming designs based on the analyses. In the first design, the asymptotic detection probability is maximized while satisfying the signal-to-interference-plus-noise ratio requirement for each communication user under the total transmit power constraint. Given the non-convex nature of the problem, we develop an alternating optimization algorithm based on the quadratic transform and semi-definite relaxation. The second design adopts a heuristic approach that aims to maximize the target energy, subject to a minimum SNR threshold on the direct path, and offers lower computational complexity. Numerical results validate the asymptotic analysis and demonstrate the superiority of the proposed beamforming designs in balancing passive detection performance and communication quality. This work highlights the promise of target detection using unknown communication data signals in multi-static ISAC systems.

AuxDet: Auxiliary Metadata Matters for Omni-Domain Infrared Small Target Detection

May 21, 2025

Abstract:Omni-domain infrared small target detection (IRSTD) poses formidable challenges, as a single model must seamlessly adapt to diverse imaging systems, varying resolutions, and multiple spectral bands simultaneously. Current approaches predominantly rely on visual-only modeling paradigms that not only struggle with complex background interference and inherently scarce target features, but also exhibit limited generalization capabilities across complex omni-scene environments where significant domain shifts and appearance variations occur. In this work, we reveal a critical oversight in existing paradigms: the neglect of readily available auxiliary metadata describing imaging parameters and acquisition conditions, such as spectral bands, sensor platforms, resolution, and observation perspectives. To address this limitation, we propose the Auxiliary Metadata Driven Infrared Small Target Detector (AuxDet), a novel multi-modal framework that fundamentally reimagines the IRSTD paradigm by incorporating textual metadata for scene-aware optimization. Through a high-dimensional fusion module based on multi-layer perceptrons (MLPs), AuxDet dynamically integrates metadata semantics with visual features, guiding adaptive representation learning for each individual sample. Additionally, we design a lightweight prior-initialized enhancement module using 1D convolutional blocks to further refine fused features and recover fine-grained target cues. Extensive experiments on the challenging WideIRSTD-Full benchmark demonstrate that AuxDet consistently outperforms state-of-the-art methods, validating the critical role of auxiliary information in improving robustness and accuracy in omni-domain IRSTD tasks. Code is available at https://github.com/GrokCV/AuxDet.

LoFLAT: Local Feature Matching using Focused Linear Attention Transformer

Oct 30, 2024

Abstract:Local feature matching is an essential technique in image matching and plays a critical role in a wide range of vision-based applications. However, existing Transformer-based detector-free local feature matching methods encounter challenges due to the quadratic computational complexity of attention mechanisms, especially at high resolutions. Although some of these methods have reduced computational costs using linear attention mechanisms, they still struggle to capture detailed local interactions, which affects the accuracy and robustness of precise local correspondences. To enhance the representations of attention mechanisms while preserving low computational complexity, we propose LoFLAT, a novel Local Feature matching method using a Focused Linear Attention Transformer. LoFLAT consists of three main modules: the Feature Extraction Module, the Feature Transformer Module, and the Matching Module. Specifically, the Feature Extraction Module first uses ResNet and a Feature Pyramid Network to extract hierarchical features. The Feature Transformer Module then employs Focused Linear Attention to refine the attention distribution with a focused mapping function and to enhance feature diversity with a depth-wise convolution. Finally, the Matching Module predicts accurate and robust matches through a coarse-to-fine strategy. Extensive experimental evaluations demonstrate that the proposed LoFLAT outperforms the LoFTR method in terms of both efficiency and accuracy.

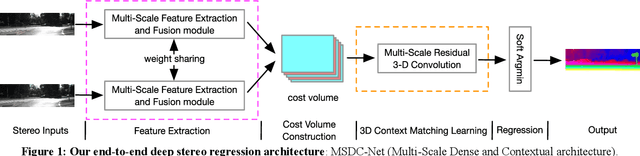

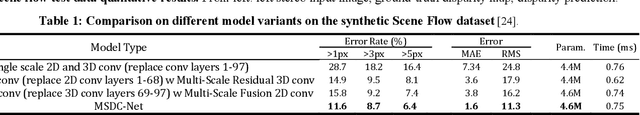

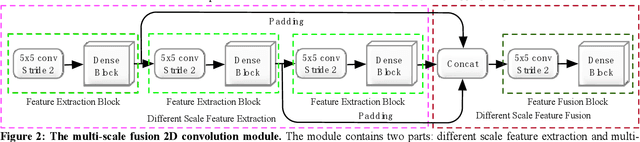

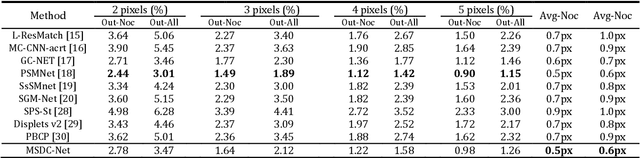

MSDC-Net: Multi-Scale Dense and Contextual Networks for Automated Disparity Map for Stereo Matching

Apr 30, 2019

Abstract:Disparity prediction from stereo images is essential to computer vision applications including autonomous driving, 3D model reconstruction, and object detection. To predict an accurate disparity map, we propose a novel deep learning architecture, called MSDC-Net, for estimating the disparity map from a rectified pair of stereo images. MSDC-Net contains two modules: a multi-scale fusion 2D convolution module and a multi-scale residual 3D convolution module. The multi-scale fusion 2D convolution module exploits potential multi-scale features, extracting and fusing features at different scales with Dense-Net. The multi-scale residual 3D convolution module learns geometry context at different scales from the cost volume, which is aggregated by the multi-scale fusion 2D convolution module. Experimental results on the Scene Flow and KITTI datasets demonstrate that MSDC-Net significantly outperforms other approaches in the non-occluded region.

High Speed Tracking With A Fourier Domain Kernelized Correlation Filter

Nov 08, 2018

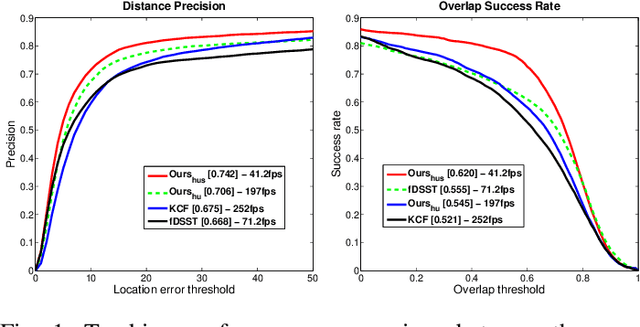

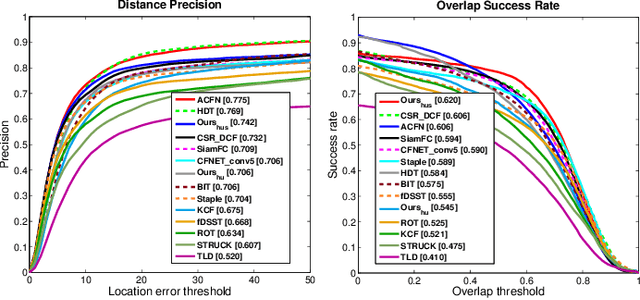

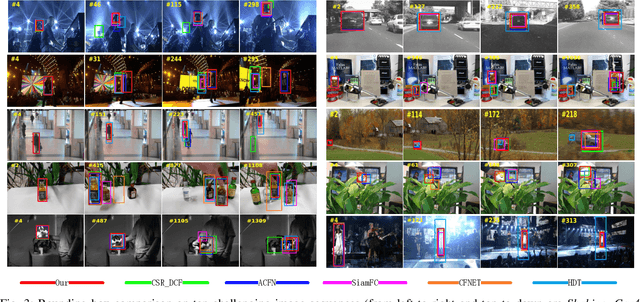

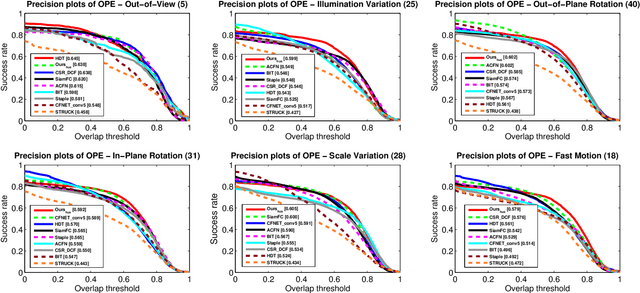

Abstract:It is challenging to design a high speed tracking approach using the l1-norm due to its non-differentiability. In this paper, a new kernelized correlation filter is introduced by leveraging the sparsity attribute of l1-norm based regularization to design a high speed tracker. We combine the l1-norm and l2-norm based regularizations in one Huber-type loss function, and then formulate an optimization problem in the Fourier domain for fast computation, which enables the tracker to adaptively ignore the noisy features produced by occlusion and illumination variation while keeping the advantages of l2-norm based regression. This is possible because, by the convolution theorem, correlation in the spatial domain corresponds to an element-wise product in the Fourier domain, so the l1-norm optimization problem can be decomposed into multiple sub-optimization problems in the Fourier domain. However, the optimized variables in the Fourier domain are complex, which makes using the l1-norm impossible if the real and imaginary parts of the variables cannot be separated. Our proposed optimization problem is formulated in such a way that the real and imaginary parts are indeed well separated. As such, the proposed optimization problem can be solved efficiently, with the optimal values obtained independently in closed form. Extensive experiments on two large benchmark datasets demonstrate that the proposed tracking algorithm significantly improves the tracking accuracy of the original kernelized correlation filter (KCF) with little sacrifice in tracking speed. Moreover, it outperforms the state-of-the-art approaches in terms of accuracy, efficiency, and robustness.
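The abstract above relies on the fact that circular correlation in the spatial domain becomes an element-wise product in the Fourier domain. The following is a minimal sketch that checks this property numerically on a short 1D real signal using a naive DFT from the standard library. It is not the paper's tracker: the function names, the toy signals, and the 1D setting are assumptions chosen for illustration, and a real implementation would use an FFT on 2D image patches.

```python
import cmath


def dft(x):
    """Naive O(n^2) discrete Fourier transform of a sequence."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(X):
    """Naive O(n^2) inverse discrete Fourier transform."""
    n = len(X)
    return [sum(X[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def circular_correlation_direct(x, y):
    """c[k] = sum_t x[t] * y[(t + k) mod n], computed in the spatial domain."""
    n = len(x)
    return [sum(x[t] * y[(t + k) % n] for t in range(n)) for k in range(n)]


def circular_correlation_fourier(x, y):
    """Same correlation via an element-wise product conj(X) * Y (x, y real)."""
    X, Y = dft(x), dft(y)
    product = [a.conjugate() * b for a, b in zip(X, Y)]
    # The result is real for real inputs; drop the numerical imaginary residue.
    return [v.real for v in idft(product)]


# Toy real-valued signals, invented for this example.
x = [1.0, 2.0, 0.0, -1.0]
y = [0.5, -1.0, 3.0, 2.0]

direct = circular_correlation_direct(x, y)
fourier = circular_correlation_fourier(x, y)
max_abs_diff = max(abs(a - b) for a, b in zip(direct, fourier))
print(direct, max_abs_diff)
```

Because each frequency bin is handled by its own scalar product, a problem posed this way can be split into independent per-frequency sub-problems, which is the decomposition the abstract refers to.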
