Minkyu Kim

Image Clustering Conditioned on Text Criteria

Oct 30, 2023

Abstract: Classical clustering methods do not provide users with direct control of the clustering results, and the clustering results may not be consistent with the relevant criterion that a user has in mind. In this work, we present a new methodology for performing image clustering based on user-specified text criteria by leveraging modern vision-language models and large language models. We call our method Image Clustering Conditioned on Text Criteria (IC$|$TC), and it represents a different paradigm of image clustering. IC$|$TC requires a minimal and practical degree of human intervention and grants the user significant control over the clustering results in return. Our experiments show that IC$|$TC can effectively cluster images with various criteria, such as human action, physical location, or the person's mood, while significantly outperforming baselines.

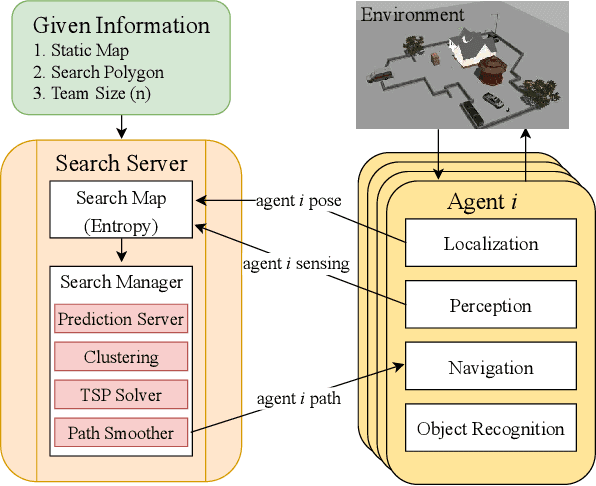

LIVE: Lidar Informed Visual Search for Multiple Objects with Multiple Robots

Oct 06, 2023

Abstract: This paper introduces LIVE: Lidar Informed Visual Search, focused on the problem of multi-robot (MR) planning and execution for robust visual detection of multiple objects. We perform extensive real-world experiments with a two-robot team in an indoor apartment setting. LIVE acts as a perception module that detects unmapped obstacles, or Short Term Features (STFs), in Lidar observations. STFs are filtered, resulting in regions to be visually inspected by modifying plans online. Lidar Coverage Path Planning (CPP) is employed for generating highly efficient global plans for heterogeneous robot teams. Finally, we present a data model and a demonstration dataset, which can be found by visiting our project website https://sites.google.com/view/live-iros2023/home.

Squeezing Large-Scale Diffusion Models for Mobile

Jul 03, 2023

Abstract: The emergence of diffusion models has greatly broadened the scope of high-fidelity image synthesis, resulting in notable advancements in both practical implementation and academic research. With the active adoption of the model in various real-world applications, the need for on-device deployment has grown considerably. However, deploying large diffusion models such as Stable Diffusion, with more than one billion parameters, to mobile devices poses distinctive challenges due to the limited computational and memory resources, which may vary according to the device. In this paper, we present the challenges and solutions for deploying Stable Diffusion on mobile devices with the TensorFlow Lite framework, which supports both iOS and Android devices. The resulting Mobile Stable Diffusion achieves an inference latency of under 7 seconds for 512x512 image generation on Android devices with mobile GPUs.

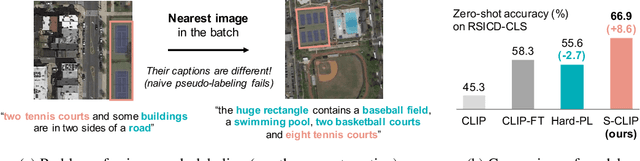

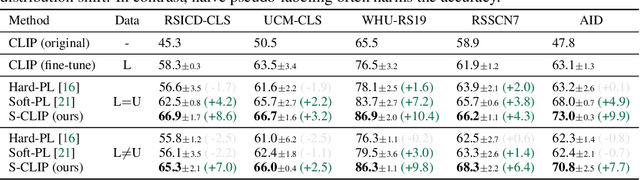

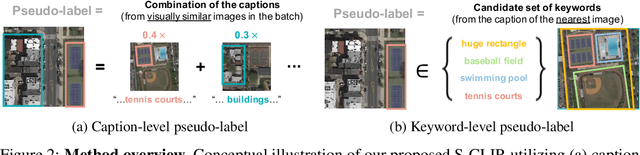

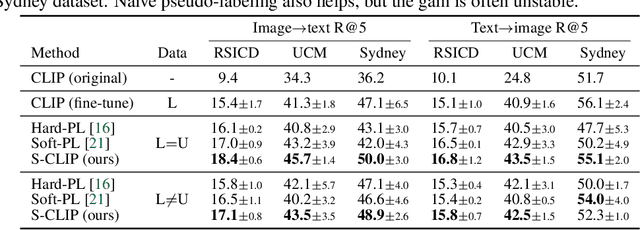

S-CLIP: Semi-supervised Vision-Language Pre-training using Few Specialist Captions

May 23, 2023

Abstract: Vision-language models, such as contrastive language-image pre-training (CLIP), have demonstrated impressive results in natural image domains. However, these models often struggle when applied to specialized domains like remote sensing, and adapting to such domains is challenging due to the limited number of image-text pairs available for training. To address this, we propose S-CLIP, a semi-supervised learning method for training CLIP that utilizes additional unpaired images. S-CLIP employs two pseudo-labeling strategies specifically designed for contrastive learning and the language modality. The caption-level pseudo-label is given by a combination of captions of paired images, obtained by solving an optimal transport problem between unpaired and paired images. The keyword-level pseudo-label is given by a keyword in the caption of the nearest paired image, trained through partial label learning that assumes a candidate set of labels for supervision instead of the exact one. By combining these objectives, S-CLIP significantly enhances the training of CLIP using only a few image-text pairs, as demonstrated in various specialist domains, including remote sensing, fashion, scientific figures, and comics. For instance, S-CLIP improves CLIP by 10% for zero-shot classification and 4% for image-text retrieval on the remote sensing benchmark, matching the performance of supervised CLIP while using three times fewer image-text pairs.

Prefix tuning for automated audio captioning

Apr 04, 2023

Abstract: Audio captioning aims to generate text descriptions from environmental sounds. One challenge of audio captioning is the difficulty of generalization due to the lack of audio-text paired training data. In this work, we propose a simple yet effective method of dealing with small-scale datasets by leveraging a pre-trained language model. We keep the language model frozen to maintain the expressivity for text generation, and we only learn to extract global and temporal features from the input audio. To bridge the modality gap between the audio features and the language model, we employ mapping networks that translate audio features into continuous vectors the language model can understand, called prefixes. We evaluate our proposed method on the Clotho and AudioCaps datasets and show that it outperforms prior art in diverse experimental settings.

Explaining Visual Biases as Words by Generating Captions

Jan 26, 2023

Abstract: We aim to diagnose the potential biases in image classifiers. To this end, prior works manually labeled biased attributes or visualized biased features, which need high annotation costs or are often ambiguous to interpret. Instead, we leverage two types (generative and discriminative) of pre-trained vision-language models to describe the visual bias as a word. Specifically, we propose bias-to-text (B2T), which generates captions of the mispredicted images using a pre-trained captioning model to extract the common keywords that may describe visual biases. Then, we categorize the bias type as spurious correlation or majority bias by checking if it is specific or agnostic to the class, based on the similarity of class-wise mispredicted images and the keyword upon a pre-trained vision-language joint embedding space, e.g., CLIP. We demonstrate that the proposed simple and intuitive scheme can recover well-known gender and background biases, and discover novel ones in real-world datasets. Moreover, we utilize B2T to compare the classifiers using different architectures or training methods. Finally, we show that one can obtain debiased classifiers using the B2T bias keywords and CLIP, in both zero-shot and full-shot manners, without using any human annotation on the bias.

Higher-order Neural Additive Models: An Interpretable Machine Learning Model with Feature Interactions

Sep 30, 2022

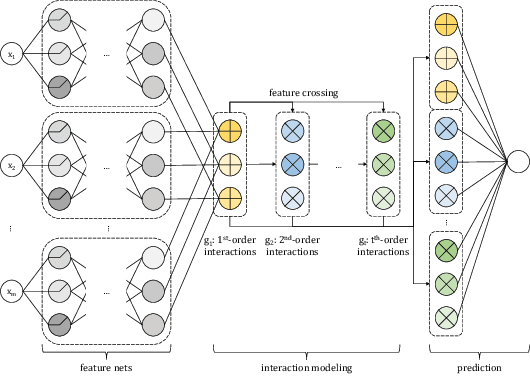

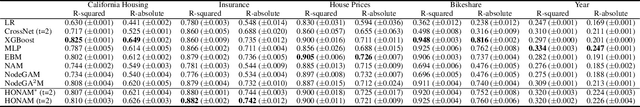

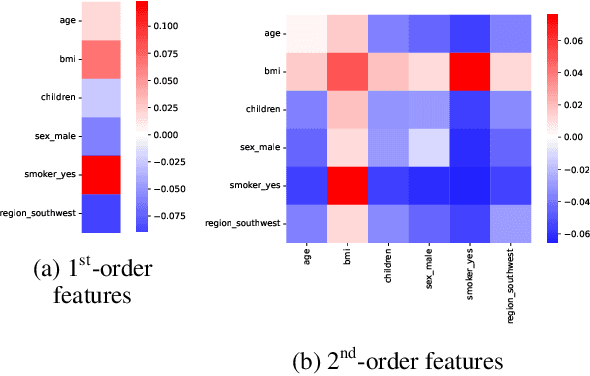

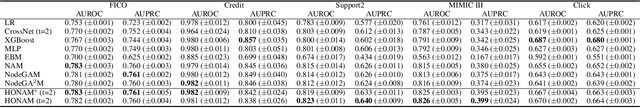

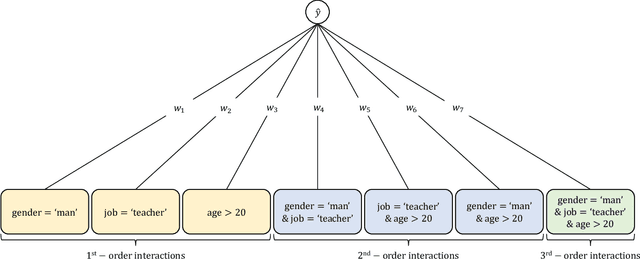

Abstract: Black-box models, such as deep neural networks, exhibit superior predictive performance, but understanding their behavior is notoriously difficult. Many explainable artificial intelligence methods have been proposed to reveal the decision-making processes of black-box models. However, their applications in high-stakes domains remain limited. Recently proposed neural additive models (NAM) have achieved state-of-the-art interpretable machine learning. NAM can provide straightforward interpretations with slight performance sacrifices compared with a multi-layer perceptron. However, NAM can only model 1$^{\text{st}}$-order feature interactions; thus, it cannot capture the co-relationships between input features. To overcome this problem, we propose a novel interpretable machine learning method called higher-order neural additive models (HONAM) and a feature interaction method for high interpretability. HONAM can model arbitrary orders of feature interactions. Therefore, it can provide the high predictive performance and interpretability that high-stakes domains need. In addition, we propose a novel hidden unit to effectively learn sharp-shape functions. We conducted experiments using various real-world datasets to examine the effectiveness of HONAM. Furthermore, we demonstrate that HONAM can achieve fair AI with a slight performance sacrifice. The source code for HONAM is publicly available.
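As a rough illustration of the distinction drawn in this abstract, a neural additive model predicts through a sum of per-feature shape functions, so no term sees more than one input. The second expression shows a generic way to add pairwise terms; the symbols $g$, $\beta$, $f_i$, and $f_{ij}$ are notation assumed here for illustration, and the exact parameterization used by HONAM is not stated in the abstract.

$$
g\big(\mathbb{E}[y]\big) = \beta + \sum_{i=1}^{d} f_i(x_i)
\qquad \text{(first-order, NAM-style)}
$$

$$
g\big(\mathbb{E}[y]\big) = \beta + \sum_{i=1}^{d} f_i(x_i) + \sum_{1 \le i < j \le d} f_{ij}(x_i, x_j)
\qquad \text{(generic second-order extension)}
$$

Each $f_i$ can be plotted against $x_i$ on its own, which is what makes the first-order form easy to interpret; the pairwise terms $f_{ij}$ can be inspected as two-dimensional surfaces.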

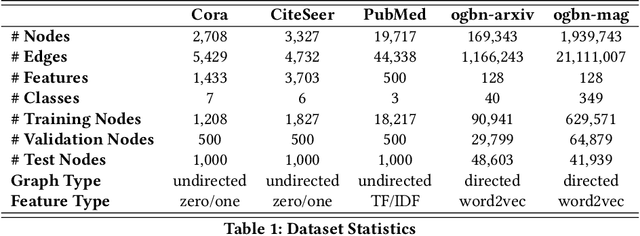

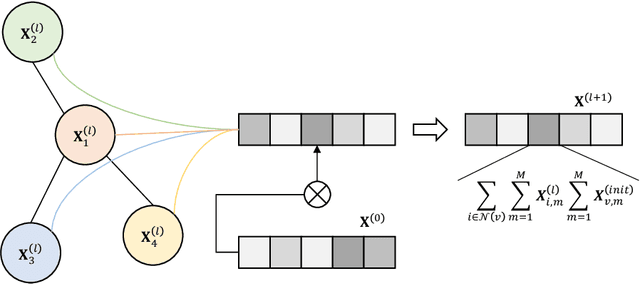

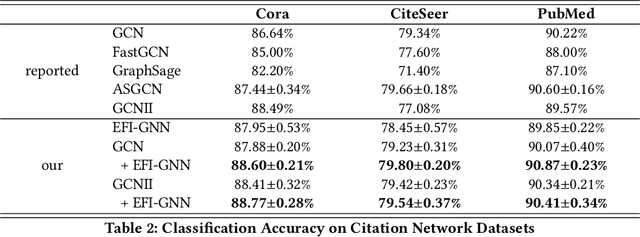

Explicit Feature Interaction-aware Graph Neural Networks

Apr 07, 2022

Abstract: Graph neural networks are powerful methods to handle graph-structured data. However, existing graph neural networks only learn higher-order feature interactions implicitly. Thus, they cannot capture information contained in low-order feature interactions. To overcome this problem, we propose Explicit Feature Interaction-aware Graph Neural Network (EFI-GNN), which explicitly learns arbitrary-order feature interactions. EFI-GNN can be trained jointly with any other graph neural network. We demonstrate that the joint learning method always enhances performance on various node classification tasks. Furthermore, since EFI-GNN is inherently a linear model, we can interpret its prediction results. With the computation rule, we can obtain the effect of a feature interaction of any order on the decision. Using this, we visualize the effects of the first-order and second-order features as heatmaps.

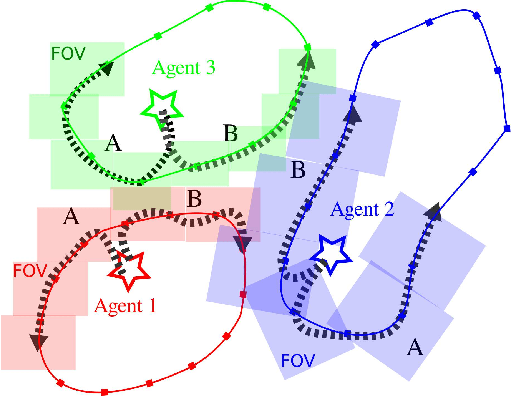

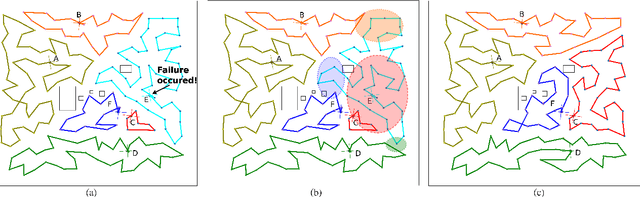

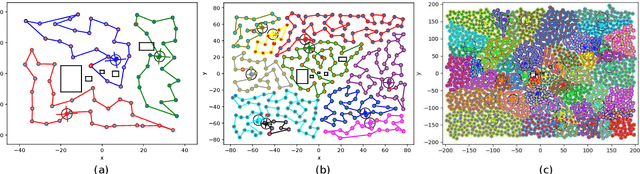

KC-TSS: An Algorithm for Heterogeneous Robot Teams Performing Resilient Target Search

Mar 02, 2022

Abstract: This paper proposes KC-TSS: K-Clustered-Traveling Salesman Based Search, a failure-resilient path planning algorithm for heterogeneous robot teams performing target search in human environments. We separate the sample path generation problem into Heterogeneous Clustering and multiple Traveling Salesman Problems. This allows us to provide high-quality candidate paths (i.e., minimal backtracking and overlap) to an Information-Theoretic utility function for each agent. First, we generate waypoint candidates from map knowledge and a target prediction model. All of these candidates are clustered according to the number of agents and their ability to cover space, or coverage competency. Each agent solves a Traveling Salesman Problem (TSP) instance over its assigned cluster, and then candidates are fed to a utility function for path selection. We perform extensive Gazebo simulations and preliminary deployment of real robots in indoor search and simulated rescue scenarios with static targets. We compare our proposed method against a state-of-the-art algorithm and show that ours is able to outperform it in mission time. Our method provides resilience in the event of single- or multi-teammate failure by recomputing global team plans online.
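The cluster-then-tour decomposition described in this abstract can be sketched in a few lines. This is only a minimal illustration under strong assumptions: plain k-means stands in for the paper's heterogeneous clustering (coverage competency is ignored), a nearest-neighbor heuristic stands in for a real TSP solver, and the utility-function step is omitted. The names `kmeans`, `greedy_tour`, `waypoints`, and `robot_starts` are hypothetical.

```python
import math

def kmeans(points, k, iters=20):
    """Plain k-means with a deterministic init (first k points as centers).
    Stand-in for heterogeneous clustering; ignores coverage competency."""
    centers = [points[i] for i in range(k)]
    clusters = [[] for _ in range(k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: math.dist(p, centers[c]))
            clusters[nearest].append(p)
        # Recompute centers; keep the old center if a cluster ended up empty.
        centers = [
            tuple(sum(coord) / len(cl) for coord in zip(*cl)) if cl else centers[i]
            for i, cl in enumerate(clusters)
        ]
    return clusters

def greedy_tour(start, cluster):
    """Nearest-neighbor heuristic: an approximate tour, not an optimal TSP solution."""
    tour, remaining, current = [], list(cluster), start
    while remaining:
        nxt = min(remaining, key=lambda p: math.dist(current, p))
        remaining.remove(nxt)
        tour.append(nxt)
        current = nxt
    return tour

# Toy waypoints forming two spatial groups, and one start position per robot.
waypoints = [(0, 0), (10, 10), (0, 1), (10, 11), (1, 0), (11, 10)]
robot_starts = [(0, 0), (10, 10)]

# One cluster per robot; cluster i is simply assigned to robot i in this sketch.
clusters = kmeans(waypoints, k=len(robot_starts))
plans = [greedy_tour(s, c) for s, c in zip(robot_starts, clusters)]
```

If a robot fails, the same two steps can be rerun with the remaining robots and the unvisited waypoints, which mirrors the idea of recomputing team plans online.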

SmoothMix: Training Confidence-calibrated Smoothed Classifiers for Certified Robustness

Nov 17, 2021

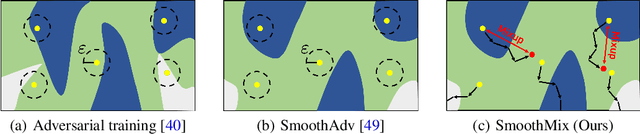

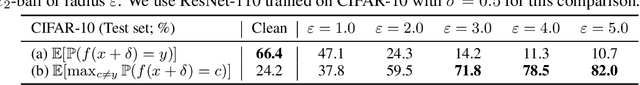

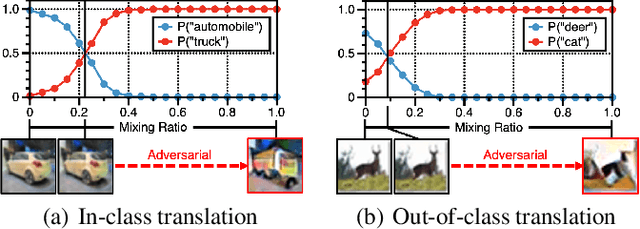

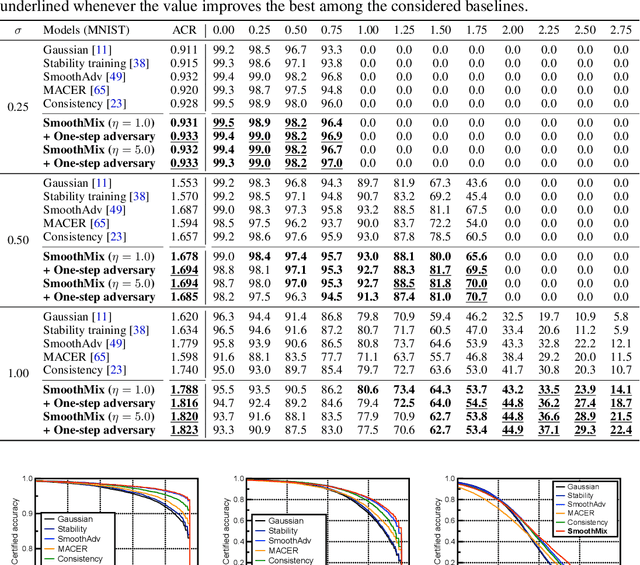

Abstract: Randomized smoothing is currently a state-of-the-art method to construct a certifiably robust classifier from neural networks against $\ell_2$-adversarial perturbations. Under the paradigm, the robustness of a classifier is aligned with the prediction confidence, i.e., higher confidence from a smoothed classifier implies better robustness. This motivates us to rethink the fundamental trade-off between accuracy and robustness in terms of calibrating confidences of a smoothed classifier. In this paper, we propose a simple training scheme, coined SmoothMix, to control the robustness of smoothed classifiers via self-mixup: it trains on convex combinations of samples along the direction of adversarial perturbation for each input. The proposed procedure effectively identifies over-confident, near off-class samples as a cause of limited robustness in the case of smoothed classifiers, and offers an intuitive way to adaptively set a new decision boundary between these samples for better robustness. Our experimental results demonstrate that the proposed method can significantly improve the certified $\ell_2$-robustness of smoothed classifiers compared to existing state-of-the-art robust training methods.
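For readers unfamiliar with the underlying paradigm, a smoothed classifier predicts the class that the base classifier returns most often under Gaussian input noise, and the vote fraction plays the role of the prediction confidence mentioned above. The sketch below shows only this Monte Carlo prediction step, not the SmoothMix training scheme; `smoothed_predict` and `toy_classifier` are hypothetical names, and the one-dimensional threshold classifier is a toy stand-in for a neural network.

```python
import random
from collections import Counter

def smoothed_predict(base_classifier, x, sigma, n_samples=200, seed=0):
    """Monte Carlo estimate of the smoothed prediction: majority vote of the
    base classifier over Gaussian perturbations of x, plus the vote fraction."""
    rng = random.Random(seed)  # local generator so results are reproducible
    votes = Counter()
    for _ in range(n_samples):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        votes[base_classifier(noisy)] += 1
    label, count = votes.most_common(1)[0]
    return label, count / n_samples

def toy_classifier(x):
    """Toy base classifier: class 1 if the first coordinate is positive."""
    return 1 if x[0] > 0 else 0

# An input far from the decision boundary relative to the noise level.
far_label, far_conf = smoothed_predict(toy_classifier, [3.0], sigma=0.5)

# An input close to the boundary: the vote is split between the two classes.
near_label, near_conf = smoothed_predict(toy_classifier, [0.1], sigma=0.5)
```

Inputs far from the boundary keep nearly all votes for one class, while inputs near the boundary receive split votes and hence lower confidence, which is the link between confidence and robustness that the abstract builds on.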
